update model card

- README.md +95 -15
- patchtst_architecture.png +0 -0

README.md CHANGED

@@ -1,33 +1,70 @@

---
tags:
- generated_from_trainer
model-index:
- name: patchtst_etth1_forecast
  results: []
---

should probably proofread and complete it, then remove this comment. -->

It achieves the following results on the evaluation set:
- Loss: 0.3881

## Model description

## Training procedure

### Training hyperparameters

@@ -40,7 +77,7 @@ The following hyperparameters were used during training:

- lr_scheduler_type: linear
- num_epochs: 10

### Training

| Training Loss | Epoch | Step | Validation Loss |
|:-------------:|:-----:|:-----:|:---------------:|

@@ -55,10 +92,53 @@ The following hyperparameters were used during training:

| 0.3053 | 9.0 | 9045 | 0.8199 |
| 0.3019 | 10.0 | 10050 | 0.8173 |

### Framework versions

- Transformers 4.36.0.dev0
- Pytorch 2.0.1
- Datasets 2.14.4
- Tokenizers 0.14.1

---
tags:
- generated_from_trainer
license: cdla-permissive-2.0
model-index:
- name: patchtst_etth1_forecast
  results: []
---

# PatchTST model pre-trained on ETTh1 dataset

<!-- Provide a quick summary of what the model is/does. -->

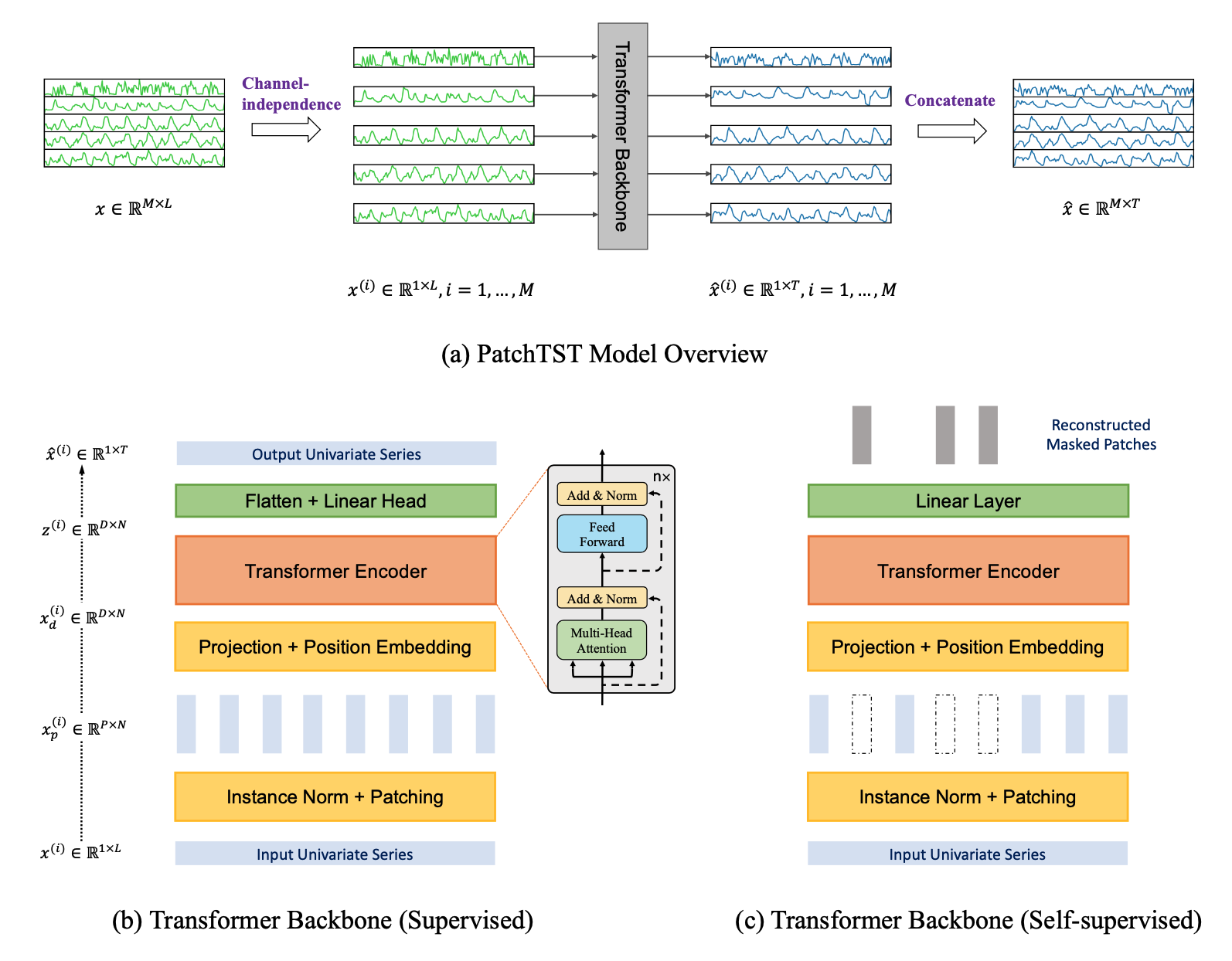

[`PatchTST`](https://huggingface.co/docs/transformers/model_doc/patchtst) is a transformer-based model for time series modeling tasks, including forecasting, regression, and classification.

Here we provide a pre-trained `PatchTST` model covering all seven channels of the `ETTh1` dataset.
This model achieves a Mean Squared Error (MSE) of 0.3881 on the `test` split of `ETTh1` when forecasting 96 hours into the future from a 512-hour history window.

To train and evaluate a `PatchTST` model, refer to [this notebook](https://github.com/IBM/tsfm/blob/main/notebooks/hfdemo/patch_tst_getting_started.ipynb).

## Model Details

The `PatchTST` model was proposed in [A Time Series is Worth 64 Words: Long-term Forecasting with Transformers](https://arxiv.org/abs/2211.14730) by Yuqi Nie, Nam H. Nguyen, Phanwadee Sinthong, and Jayant Kalagnanam.

At a high level, the model splits a time series into patches of a given size, embeds each patch as a vector, and encodes the resulting sequence of vectors with a Transformer; an appropriate head then produces a forecast over the prediction length.
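
To make the patching step concrete, here is a minimal plain-Python sketch. It is an illustration under our own assumptions, not the `transformers` implementation: `make_patches`, `patch_length` and `patch_stride` are hypothetical names, patches start every `patch_stride` steps, and trailing values that do not fill a whole patch are dropped (the paper and the library may treat the end of the sequence differently, for example by padding).

```python
from typing import List, Sequence


def make_patches(series: Sequence[float], patch_length: int, patch_stride: int) -> List[List[float]]:
    """Split a univariate series into (possibly overlapping) patches.

    Hypothetical helper for illustration: patch i covers
    series[i * patch_stride : i * patch_stride + patch_length], and any
    trailing values that do not fill a complete patch are dropped.
    """
    if patch_length <= 0 or patch_stride <= 0:
        raise ValueError("patch_length and patch_stride must be positive")
    if len(series) < patch_length:
        raise ValueError("series is shorter than one patch")
    num_patches = (len(series) - patch_length) // patch_stride + 1
    return [
        list(series[i * patch_stride : i * patch_stride + patch_length])
        for i in range(num_patches)
    ]


if __name__ == "__main__":
    # A toy series of 10 values cut into patches of 4 with stride 2.
    toy = [float(t) for t in range(10)]
    for patch in make_patches(toy, patch_length=4, patch_stride=2):
        print(patch)
    # [0.0, 1.0, 2.0, 3.0]
    # [2.0, 3.0, 4.0, 5.0]
    # [4.0, 5.0, 6.0, 7.0]
    # [6.0, 7.0, 8.0, 9.0]
```

Each patch then plays the role of one input token. With a 512-step context and illustrative values `patch_length=16`, `patch_stride=8`, this no-padding variant yields 63 tokens instead of 512.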

The model is based on two key components: (i) segmentation of the time series into subseries-level patches, which serve as input tokens to the Transformer; and (ii) channel independence, where each channel contains a single univariate time series and all series share the same embedding and Transformer weights. The patching design has a three-fold benefit: local semantic information is retained in the embedding; the computation and memory usage of the attention maps are quadratically reduced for the same look-back window; and the model can attend to a longer history. Our channel-independent patch time series Transformer (PatchTST) can significantly improve long-term forecasting accuracy compared with state-of-the-art (SOTA) Transformer-based models.
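
Both ideas can be illustrated with a small stdlib sketch. Under channel independence, a (time, channels) input is treated as separate univariate series that all pass through one shared function; here `shared_encoder` is a hypothetical placeholder for the shared embedding and Transformer (it only averages patches). `attention_entries` compares the size of one attention map with one token per time step versus one token per patch. The patch settings used in the example (`patch_length=16`, `patch_stride=8`) are illustrative assumptions; this card does not state the configuration of the released checkpoint.

```python
from typing import Callable, List, Sequence, Tuple


def split_channels(rows: Sequence[Sequence[float]]) -> List[List[float]]:
    """Turn a (time, channels) table into one univariate series per channel."""
    num_channels = len(rows[0])
    return [[row[c] for row in rows] for c in range(num_channels)]


def shared_encoder(series: List[float], patch_length: int = 4) -> List[float]:
    """Hypothetical stand-in for the shared embedding and Transformer.

    It merely averages non-overlapping patches; what matters is that the
    same function (i.e. the same weights) is applied to every channel.
    """
    return [
        sum(series[i : i + patch_length]) / patch_length
        for i in range(0, len(series) - patch_length + 1, patch_length)
    ]


def encode_channel_independent(
    rows: Sequence[Sequence[float]],
    encoder: Callable[[List[float]], List[float]],
) -> List[List[float]]:
    """Run one shared encoder over each channel independently."""
    return [encoder(channel) for channel in split_channels(rows)]


def attention_entries(context_length: int, patch_length: int, patch_stride: int) -> Tuple[int, int]:
    """Entries in one attention map: one token per time step vs. one per patch."""
    num_patches = (context_length - patch_length) // patch_stride + 1
    return context_length ** 2, num_patches ** 2


if __name__ == "__main__":
    # 8 time steps, 2 channels; both channels go through the same encoder.
    rows = [[float(t), 10.0 * t] for t in range(8)]
    print(encode_channel_independent(rows, shared_encoder))  # [[1.5, 5.5], [15.0, 55.0]]
    # 512-step context with illustrative patch settings.
    print(attention_entries(512, 16, 8))  # (262144, 3969)
```

Because the token count shrinks roughly by the stride, the attention map shrinks roughly by its square, which is the "quadratic" saving described above.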

In addition, PatchTST has a modular design to seamlessly support masked time series pre-training as well as direct time series forecasting, classification, and regression.
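
Masked pre-training hides part of the input and trains the model to reconstruct it. The sketch below shows only a patch-level masking step and a loss restricted to the hidden patches. `mask_patches`, `masked_mse`, the zero fill value and `mask_ratio=0.4` are hypothetical, illustrative choices, not the configuration of this checkpoint or of the library.

```python
import random
from typing import List, Sequence, Tuple


def mask_patches(
    patches: Sequence[Sequence[float]],
    mask_ratio: float = 0.4,
    seed: int = 0,
    fill_value: float = 0.0,
) -> Tuple[List[List[float]], List[bool]]:
    """Hide a random fraction of patches by overwriting them with fill_value.

    Returns the masked patches and a mask where True marks a hidden patch.
    """
    if not 0.0 <= mask_ratio <= 1.0:
        raise ValueError("mask_ratio must be in [0, 1]")
    rng = random.Random(seed)  # seeded so the example is reproducible
    num_masked = int(round(len(patches) * mask_ratio))
    hidden = set(rng.sample(range(len(patches)), num_masked))
    mask = [i in hidden for i in range(len(patches))]
    masked = [
        [fill_value] * len(patch) if is_hidden else list(patch)
        for patch, is_hidden in zip(patches, mask)
    ]
    return masked, mask


def masked_mse(
    original: Sequence[Sequence[float]],
    reconstructed: Sequence[Sequence[float]],
    mask: Sequence[bool],
) -> float:
    """Reconstruction MSE computed only over the hidden patches."""
    errors = [
        (o - r) ** 2
        for orig_patch, rec_patch, is_hidden in zip(original, reconstructed, mask)
        if is_hidden
        for o, r in zip(orig_patch, rec_patch)
    ]
    return sum(errors) / len(errors) if errors else 0.0


if __name__ == "__main__":
    patches = [[float(i)] * 3 for i in range(10)]
    masked, mask = mask_patches(patches)
    print(sum(mask), "of", len(mask), "patches hidden")  # 4 of 10 patches hidden
```

Scoring only the hidden patches keeps the model from being rewarded for copying the visible input.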

### Model Description

<!-- Provide a longer summary of what this model is. -->

<img src="patchtst_architecture.png" alt="Architecture" width="600" />

### Model Sources

<!-- Provide the basic links for the model. -->

- **Repository:** [PatchTST Hugging Face](https://huggingface.co/docs/transformers/model_doc/patchtst)
- **Paper:** [PatchTST ICLR 2023 paper](https://arxiv.org/abs/2211.14730)
- **Demo:** [Get started with PatchTST](https://github.com/IBM/tsfm/blob/main/notebooks/hfdemo/patch_tst_getting_started.ipynb)

## Uses

<!-- Address questions around how the model is intended to be used, including the foreseeable users of the model and those affected by the model. -->

This pre-trained model can be used for fine-tuning or evaluation on any Electricity Transformer dataset with the same channels as `ETTh1`, namely `HUFL`, `HULL`, `MUFL`, `MULL`, `LUFL`, `LULL`, and `OT`. The model predicts the next 96 hours from the values of the preceding 512 hours. The input data must be normalized; for details on data pre-processing, see the paper or the demo.
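
Since the model expects normalized inputs, here is a minimal per-channel standardization sketch: statistics are fit on training rows only, applied to any later window, and inverted to bring forecasts back to the original units. Z-score scaling is our assumption; check the paper or the demo for the exact pre-processing used for this checkpoint. `ChannelScaler` is a hypothetical name.

```python
import statistics
from typing import List, Sequence


class ChannelScaler:
    """Per-channel z-score scaling for rows shaped (time, channels).

    Hypothetical helper: call fit() on training rows only, so that no
    validation or test information leaks into the statistics.
    """

    def fit(self, rows: Sequence[Sequence[float]]) -> "ChannelScaler":
        columns = list(zip(*rows))  # one tuple of values per channel
        self.means = [statistics.fmean(col) for col in columns]
        # Population standard deviation; use 1.0 for a constant channel.
        self.stds = [statistics.pstdev(col) or 1.0 for col in columns]
        return self

    def transform(self, rows: Sequence[Sequence[float]]) -> List[List[float]]:
        return [
            [(v - m) / s for v, m, s in zip(row, self.means, self.stds)]
            for row in rows
        ]

    def inverse_transform(self, rows: Sequence[Sequence[float]]) -> List[List[float]]:
        # Maps normalized values (e.g. forecasts) back to the original units.
        return [
            [v * s + m for v, m, s in zip(row, self.means, self.stds)]
            for row in rows
        ]


if __name__ == "__main__":
    train_rows = [[1.0, 10.0], [3.0, 10.0]]  # toy data: 2 time steps, 2 channels
    scaler = ChannelScaler().fit(train_rows)
    print(scaler.transform([[3.0, 12.0]]))  # [[1.0, 2.0]]
```

For `ETTh1` the rows would have seven columns, one per channel listed above.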

## How to Get Started with the Model

Use the demo notebook below to get started with the model.

[Demo](https://github.com/IBM/tsfm/blob/main/notebooks/hfdemo/patch_tst_getting_started.ipynb)
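
The model consumes a 512-step history and forecasts the following 96 steps. A minimal sketch of cutting such (history, target) pairs from one normalized series is shown below. `make_windows` is a hypothetical helper and the stride of 1 between windows is an assumption; the demo notebook uses its own data utilities, which may differ.

```python
from typing import List, Sequence, Tuple

CONTEXT_LENGTH = 512  # hours of history fed to the model (from this card)
PREDICTION_LENGTH = 96  # hours to forecast (from this card)


def make_windows(
    series: Sequence[float],
    context_length: int = CONTEXT_LENGTH,
    prediction_length: int = PREDICTION_LENGTH,
    stride: int = 1,
) -> List[Tuple[List[float], List[float]]]:
    """Cut (past_values, future_values) pairs from one series with a sliding window."""
    total = context_length + prediction_length
    windows = []
    for start in range(0, len(series) - total + 1, stride):
        past = list(series[start : start + context_length])
        future = list(series[start + context_length : start + total])
        windows.append((past, future))
    return windows


if __name__ == "__main__":
    series = [float(t) for t in range(700)]  # toy hourly series
    windows = make_windows(series)
    print(len(windows))  # 93, i.e. 700 - 512 - 96 + 1
```

Under the channel-independent design, each of the seven `ETTh1` channels can be windowed the same way.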

## Training Details

### Training Data

<!-- This should link to a Dataset Card, perhaps with a short stub of information on what the training data is all about as well as documentation related to data pre-processing or additional filtering. -->

[`ETTh1`/train split](https://github.com/zhouhaoyi/ETDataset/blob/main/ETT-small/ETTh1.csv).
Train/validation/test splits are shown in the [demo](https://github.com/IBM/tsfm/blob/main/notebooks/hfdemo/patch_tst_getting_started.ipynb).
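
For orientation, a convention widely used for `ETTh1` in the long-term forecasting literature takes 12 months of hourly rows (30-day months) for training, the next 4 months for validation and the following 4 months for testing, with the validation and test ranges starting one context window early so that their first forecasts have a full history. The sketch below computes those ranges; we assume, but have not confirmed here, that the demo follows this convention, so treat the demo as authoritative. `etth_split_indices` is a hypothetical name.

```python
from typing import Dict, Tuple

HOURS_PER_MONTH = 30 * 24  # the convention counts 30-day months


def etth_split_indices(context_length: int = 512) -> Dict[str, Tuple[int, int]]:
    """Half-open [start, end) row ranges for train/validation/test.

    Assumed 12/4/4-month convention; validation and test start
    `context_length` rows early so their first window has full history.
    """
    train_end = 12 * HOURS_PER_MONTH
    valid_end = train_end + 4 * HOURS_PER_MONTH
    test_end = valid_end + 4 * HOURS_PER_MONTH
    return {
        "train": (0, train_end),
        "valid": (train_end - context_length, valid_end),
        "test": (valid_end - context_length, test_end),
    }


if __name__ == "__main__":
    print(etth_split_indices(512))
    # {'train': (0, 8640), 'valid': (8128, 11520), 'test': (11008, 14400)}
```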

### Training hyperparameters

- lr_scheduler_type: linear
- num_epochs: 10
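
`lr_scheduler_type: linear` is the Hugging Face `Trainer` linear schedule: after an optional warmup, the learning rate decays linearly to zero at the last training step. The stdlib sketch below re-implements that multiplier for illustration. The base learning rate and zero warmup are placeholders, since those values are not shown in this excerpt; the total of 10,050 steps comes from the final row of the Training Results table (10 epochs of 1,005 steps).

```python
def linear_lr_multiplier(step: int, total_steps: int, warmup_steps: int = 0) -> float:
    """Linear warmup to 1.0, then linear decay to 0.0 at total_steps.

    Re-implemented here for illustration; it mirrors the shape of the
    `transformers` linear schedule rather than calling it.
    """
    if step < warmup_steps:
        return step / max(1, warmup_steps)
    return max(0.0, (total_steps - step) / max(1, total_steps - warmup_steps))


TOTAL_STEPS = 10 * 1005  # num_epochs * steps per epoch (from the results table)
BASE_LR = 1e-4  # hypothetical: the actual learning rate is not shown in this excerpt

if __name__ == "__main__":
    for step in (0, TOTAL_STEPS // 2, TOTAL_STEPS):
        print(step, BASE_LR * linear_lr_multiplier(step, TOTAL_STEPS))
```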

### Training Results

| Training Loss | Epoch | Step | Validation Loss |
|:-------------:|:-----:|:-----:|:---------------:|
| 0.3053 | 9.0 | 9045 | 0.8199 |
| 0.3019 | 10.0 | 10050 | 0.8173 |

## Evaluation

<!-- This section describes the evaluation protocols and provides the results. -->

### Testing Data

[`ETTh1`/test split](https://github.com/zhouhaoyi/ETDataset/blob/main/ETT-small/ETTh1.csv).
Train/validation/test splits are shown in the [demo](https://github.com/IBM/tsfm/blob/main/notebooks/hfdemo/patch_tst_getting_started.ipynb).

### Metrics

<!-- These are the evaluation metrics being used, ideally with a description of why. -->

Mean Squared Error (MSE).
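
MSE averages the squared difference between forecast and ground truth over every forecast step and channel, i.e. over H x C entries for a horizon of H = 96 steps and C = 7 channels. A minimal sketch follows; it assumes predictions and targets are in the same (normalized) units, which this card does not state explicitly. `mse` is a hypothetical helper name.

```python
from typing import Sequence


def mse(predictions: Sequence[Sequence[float]], targets: Sequence[Sequence[float]]) -> float:
    """Mean squared error over all (time step, channel) entries of a forecast."""
    if len(predictions) != len(targets):
        raise ValueError("predictions and targets differ in number of time steps")
    total, count = 0.0, 0
    for pred_row, true_row in zip(predictions, targets):
        if len(pred_row) != len(true_row):
            raise ValueError("predictions and targets differ in number of channels")
        for p, t in zip(pred_row, true_row):
            total += (p - t) ** 2
            count += 1
    return total / count


if __name__ == "__main__":
    # 2 time steps x 2 channels: squared errors 1, 0, 0, 4.
    print(mse([[1.0, 2.0], [3.0, 4.0]], [[0.0, 2.0], [3.0, 6.0]]))  # 1.25
```

When every test window has the same shape, averaging the per-window MSE gives the same number as pooling all entries.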

### Results

It achieves an MSE of 0.3881 on the evaluation dataset.

#### Hardware

1 NVIDIA A100 GPU

#### Framework versions

- Transformers 4.36.0.dev0
- Pytorch 2.0.1
- Datasets 2.14.4
- Tokenizers 0.14.1

## Citation

<!-- If there is a paper or blog post introducing the model, the APA and Bibtex information for that should go in this section. -->

**BibTeX:**

```
@misc{nie2023time,
  title={A Time Series is Worth 64 Words: Long-term Forecasting with Transformers},
  author={Yuqi Nie and Nam H. Nguyen and Phanwadee Sinthong and Jayant Kalagnanam},
  year={2023},
  eprint={2211.14730},
  archivePrefix={arXiv},
  primaryClass={cs.LG}
}
```

**APA:**

```
Nie, Y., Nguyen, N., Sinthong, P., & Kalagnanam, J. (2023). A Time Series is Worth 64 Words: Long-term Forecasting with Transformers. arXiv preprint arXiv:2211.14730.
```

patchtst_architecture.png ADDED