---
datasets:
- ewof/code-alpaca-instruct-unfiltered
library_name: peft
tags:
- gpt-j
- gpt-j-6b
- code
- instruct
- instruct-code
- code-alpaca
- alpaca-instruct
- alpaca
- llama7b
- gpt2
---

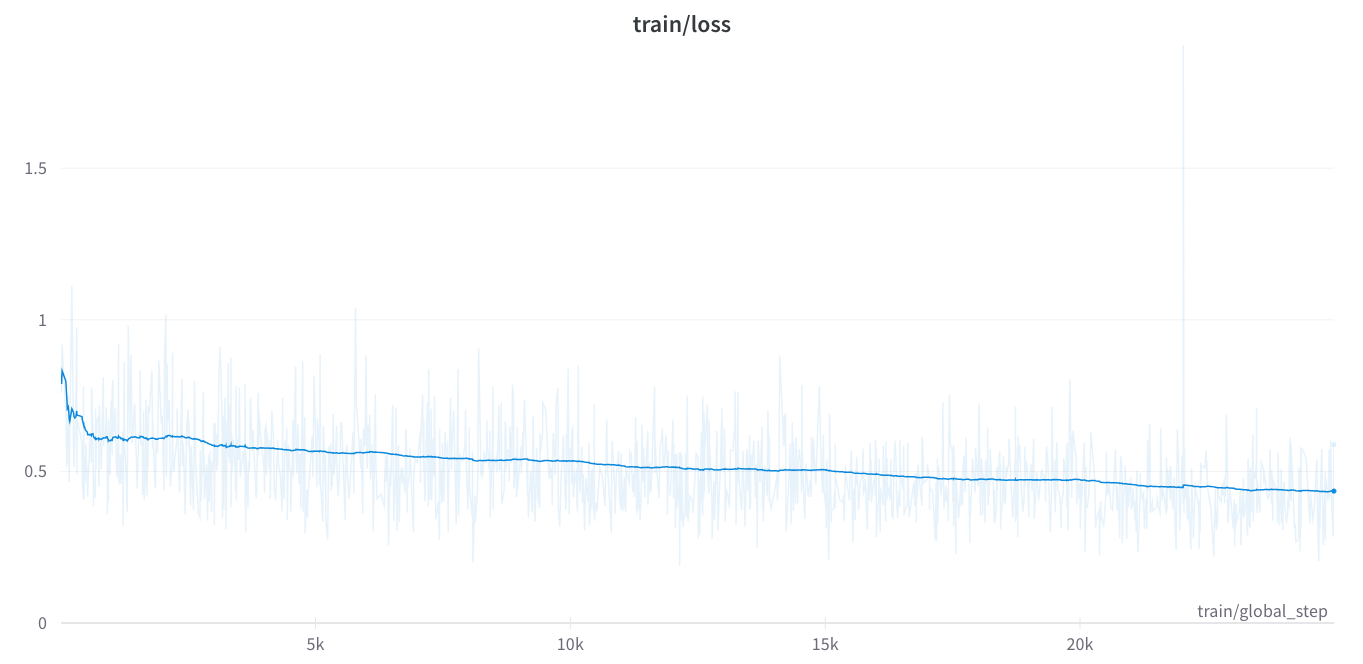

We finetuned GPT-J 6B on the Code-Alpaca-Instruct dataset (ewof/code-alpaca-instruct-unfiltered) for 5 epochs (~25,000 steps) using MonsterAPI's no-code LLM finetuner.

This dataset is an unfiltered version of HuggingFaceH4/CodeAlpaca_20K, with 36 instances of blatant alignment removed.

The finetuning session completed in 206 minutes and cost us only $8 for the entire run!

Hyperparameters & Run details:

- Model Path: EleutherAI/gpt-j-6b

- Dataset: ewof/code-alpaca-instruct-unfiltered

- Learning rate: 0.0003

- Number of epochs: 5

- Data split: Training: 90% / Validation: 10%

- Gradient accumulation steps: 1
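As a rough illustration, the run details above can be captured in a small config object, with a couple of helpers showing how the data split and the step count relate to these settings. This is only a sketch: `RunConfig`, `split_sizes`, and `optimizer_steps` are hypothetical names, not part of MonsterAPI or any library, and the dataset size (20,000) and per-device batch size (4) in the usage lines are assumptions that this card does not state, so the printed step count is not expected to reproduce the ~25,000 steps reported above.

```python
import math
from dataclasses import dataclass


@dataclass(frozen=True)
class RunConfig:
    """Hyperparameters listed in this card (the class itself is hypothetical)."""
    model_path: str = "EleutherAI/gpt-j-6b"
    dataset: str = "ewof/code-alpaca-instruct-unfiltered"
    learning_rate: float = 0.0003
    num_epochs: int = 5
    train_fraction: float = 0.9  # Training: 90% / Validation: 10%
    gradient_accumulation_steps: int = 1


def split_sizes(num_examples: int, train_fraction: float) -> tuple[int, int]:
    """Return (train, validation) example counts for a given split fraction."""
    n_train = round(num_examples * train_fraction)
    return n_train, num_examples - n_train


def optimizer_steps(n_train: int, per_device_batch_size: int, cfg: RunConfig) -> int:
    """Total optimizer steps: batches per epoch (rounded up) times epochs."""
    effective_batch = per_device_batch_size * cfg.gradient_accumulation_steps
    return math.ceil(n_train / effective_batch) * cfg.num_epochs


if __name__ == "__main__":
    cfg = RunConfig()
    # Assumed values, NOT taken from the card: 20,000 examples, batch size 4.
    n_train, n_val = split_sizes(20_000, cfg.train_fraction)
    print(n_train, n_val)
    print(optimizer_steps(n_train, per_device_batch_size=4, cfg=cfg))
```

With gradient accumulation set to 1, the effective batch size equals the per-device batch size, so the step count depends only on the training split size, the batch size, and the number of epochs.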