Duplicate from TheBloke/wizard-vicuna-13B-SuperHOT-8K-fp16

Co-authored-by: Tom Jobbins <TheBloke@users.noreply.huggingface.co>

- .gitattributes +35 -0

- README.md +282 -0

- config.json +28 -0

- generation_config.json +7 -0

- huggingface-metadata.txt +8 -0

- llama_rope_scaled_monkey_patch.py +65 -0

- modelling_llama.py +894 -0

- pytorch_model-00001-of-00003.bin +3 -0

- pytorch_model-00002-of-00003.bin +3 -0

- pytorch_model-00003-of-00003.bin +3 -0

- pytorch_model.bin.index.json +410 -0

- special_tokens_map.json +24 -0

- tokenizer.json +0 -0

- tokenizer.model +3 -0

- tokenizer_config.json +34 -0

.gitattributes

ADDED

@@ -0,0 +1,35 @@

| 1 |

+

*.7z filter=lfs diff=lfs merge=lfs -text

|

| 2 |

+

*.arrow filter=lfs diff=lfs merge=lfs -text

|

| 3 |

+

*.bin filter=lfs diff=lfs merge=lfs -text

|

| 4 |

+

*.bz2 filter=lfs diff=lfs merge=lfs -text

|

| 5 |

+

*.ckpt filter=lfs diff=lfs merge=lfs -text

|

| 6 |

+

*.ftz filter=lfs diff=lfs merge=lfs -text

|

| 7 |

+

*.gz filter=lfs diff=lfs merge=lfs -text

|

| 8 |

+

*.h5 filter=lfs diff=lfs merge=lfs -text

|

| 9 |

+

*.joblib filter=lfs diff=lfs merge=lfs -text

|

| 10 |

+

*.lfs.* filter=lfs diff=lfs merge=lfs -text

|

| 11 |

+

*.mlmodel filter=lfs diff=lfs merge=lfs -text

|

| 12 |

+

*.model filter=lfs diff=lfs merge=lfs -text

|

| 13 |

+

*.msgpack filter=lfs diff=lfs merge=lfs -text

|

| 14 |

+

*.npy filter=lfs diff=lfs merge=lfs -text

|

| 15 |

+

*.npz filter=lfs diff=lfs merge=lfs -text

|

| 16 |

+

*.onnx filter=lfs diff=lfs merge=lfs -text

|

| 17 |

+

*.ot filter=lfs diff=lfs merge=lfs -text

|

| 18 |

+

*.parquet filter=lfs diff=lfs merge=lfs -text

|

| 19 |

+

*.pb filter=lfs diff=lfs merge=lfs -text

|

| 20 |

+

*.pickle filter=lfs diff=lfs merge=lfs -text

|

| 21 |

+

*.pkl filter=lfs diff=lfs merge=lfs -text

|

| 22 |

+

*.pt filter=lfs diff=lfs merge=lfs -text

|

| 23 |

+

*.pth filter=lfs diff=lfs merge=lfs -text

|

| 24 |

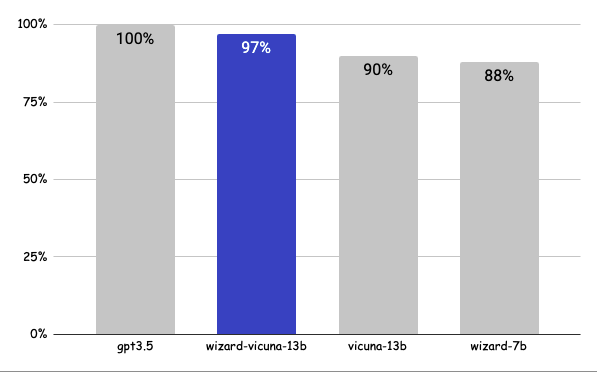

+

*.rar filter=lfs diff=lfs merge=lfs -text

|

| 25 |

+

*.safetensors filter=lfs diff=lfs merge=lfs -text

|

| 26 |

+

saved_model/**/* filter=lfs diff=lfs merge=lfs -text

|

| 27 |

+

*.tar.* filter=lfs diff=lfs merge=lfs -text

|

| 28 |

+

*.tar filter=lfs diff=lfs merge=lfs -text

|

| 29 |

+

*.tflite filter=lfs diff=lfs merge=lfs -text

|

| 30 |

+

*.tgz filter=lfs diff=lfs merge=lfs -text

|

| 31 |

+

*.wasm filter=lfs diff=lfs merge=lfs -text

|

| 32 |

+

*.xz filter=lfs diff=lfs merge=lfs -text

|

| 33 |

+

*.zip filter=lfs diff=lfs merge=lfs -text

|

| 34 |

+

*.zst filter=lfs diff=lfs merge=lfs -text

|

| 35 |

+

*tfevents* filter=lfs diff=lfs merge=lfs -text

|
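
As a rough illustration of what these rules do, the sketch below checks which files in this commit would be routed through Git LFS. It uses only a subset of the patterns above and approximates gitattributes matching with Python's `fnmatchcase`, which is an assumption that holds for simple `*.ext` patterns but not for every gitattributes rule. The names `LFS_PATTERNS`, `COMMITTED_FILES` and `is_lfs_tracked` are hypothetical.

```python
from fnmatch import fnmatchcase

# Subset of the patterns from .gitattributes (assumption: simple globs only).
LFS_PATTERNS = [
    "*.bin", "*.model", "*.pt", "*.pth",
    "*.safetensors", "*.lfs.*", "*.tar.*", "*tfevents*",
]

# File names taken from the commit summary at the top of this page.
COMMITTED_FILES = [
    ".gitattributes", "README.md", "config.json", "generation_config.json",
    "huggingface-metadata.txt", "llama_rope_scaled_monkey_patch.py",
    "modelling_llama.py", "pytorch_model-00001-of-00003.bin",
    "pytorch_model-00002-of-00003.bin", "pytorch_model-00003-of-00003.bin",
    "pytorch_model.bin.index.json", "special_tokens_map.json",
    "tokenizer.json", "tokenizer.model", "tokenizer_config.json",
]


def is_lfs_tracked(filename: str, patterns=LFS_PATTERNS) -> bool:
    """Return True if any LFS pattern matches the file name."""
    return any(fnmatchcase(filename, pattern) for pattern in patterns)


lfs_files = [name for name in COMMITTED_FILES if is_lfs_tracked(name)]
print(lfs_files)
```

The four matches are the three `.bin` shards and `tokenizer.model`, which are the files listed as `+3 -0` in the summary. That would be consistent with Git LFS pointer files, which are three lines long (version, oid, size).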

README.md

ADDED

@@ -0,0 +1,282 @@

---
inference: false
license: other
duplicated_from: TheBloke/wizard-vicuna-13B-SuperHOT-8K-fp16
---

<!-- header start -->
<div style="width: 100%;">
    <img src="https://i.imgur.com/EBdldam.jpg" alt="TheBlokeAI" style="width: 100%; min-width: 400px; display: block; margin: auto;">
</div>
<div style="display: flex; justify-content: space-between; width: 100%;">
    <div style="display: flex; flex-direction: column; align-items: flex-start;">
        <p><a href="https://discord.gg/theblokeai">Chat & support: my new Discord server</a></p>
    </div>
    <div style="display: flex; flex-direction: column; align-items: flex-end;">
        <p><a href="https://www.patreon.com/TheBlokeAI">Want to contribute? TheBloke's Patreon page</a></p>
    </div>
</div>
<!-- header end -->

# June Lee's Wizard Vicuna 13B fp16

These are fp16 PyTorch format model files for [June Lee's Wizard Vicuna 13B](https://huggingface.co/TheBloke/wizard-vicuna-13B-HF) merged with [Kaio Ken's SuperHOT 8K](https://huggingface.co/kaiokendev/superhot-13b-8k-no-rlhf-test).

[Kaio Ken's SuperHOT 13b LoRA](https://huggingface.co/kaiokendev/superhot-13b-8k-no-rlhf-test) is merged onto the base model, and 8K context can then be achieved during inference by using `trust_remote_code=True`.

Note that `config.json` has been set to a sequence length of 8192. This can be modified to 4096 if you want to try with a smaller sequence length.

## Repositories available

* [4-bit GPTQ models for GPU inference](https://huggingface.co/TheBloke/wizard-vicuna-13B-SuperHOT-8K-GPTQ)
* [2, 3, 4, 5, 6 and 8-bit GGML models for CPU inference](https://huggingface.co/TheBloke/wizard-vicuna-13B-SuperHOT-8K-GGML)
* [Unquantised SuperHOT fp16 model in pytorch format, for GPU inference and for further conversions](https://huggingface.co/TheBloke/wizard-vicuna-13B-SuperHOT-8K-fp16)
* [Unquantised base fp16 model in pytorch format, for GPU inference and for further conversions](https://huggingface.co/junelee/wizard-vicuna-13b)


## How to use this model from Python code

First make sure you have Einops installed:

```
pip3 install einops
```

Then run the following code. `config.json` defaults to a sequence length of 8192, but you can also configure this in your Python code.

The provided modelling code, activated with `trust_remote_code=True`, will automatically set the `scale` parameter from the configured `max_position_embeddings`. For example, for 8192, `scale` is set to `4`.

```python
from transformers import AutoConfig, AutoTokenizer, AutoModelForCausalLM, pipeline

model_name_or_path = "TheBloke/wizard-vicuna-13B-SuperHOT-8K-fp16"

tokenizer = AutoTokenizer.from_pretrained(model_name_or_path, use_fast=True)

config = AutoConfig.from_pretrained(model_name_or_path, trust_remote_code=True)
# Change this to the sequence length you want
config.max_position_embeddings = 8192

model = AutoModelForCausalLM.from_pretrained(model_name_or_path,
        config=config,
        trust_remote_code=True,
        device_map='auto')

# Note: check that this prompt template is correct for this model!
prompt = "Tell me about AI"
prompt_template=f'''USER: {prompt}
ASSISTANT:'''

print("\n\n*** Generate:")

input_ids = tokenizer(prompt_template, return_tensors='pt').input_ids.cuda()
# do_sample=True is needed for `temperature` to have any effect
output = model.generate(inputs=input_ids, do_sample=True, temperature=0.7, max_new_tokens=512)
print(tokenizer.decode(output[0]))

# Inference can also be done using transformers' pipeline

print("*** Pipeline:")
pipe = pipeline(
    "text-generation",
    model=model,
    tokenizer=tokenizer,
    max_new_tokens=512,
    do_sample=True,  # needed for temperature and top_p to have any effect
    temperature=0.7,
    top_p=0.95,
    repetition_penalty=1.15
)

print(pipe(prompt_template)[0]['generated_text'])
```

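The relationship between `max_position_embeddings` and `scale` described above can be illustrated with a small sketch. It assumes the base LLaMA context length is 2048 tokens and that `scale` is simply the ratio of the two values. The helper names are hypothetical, and this is not the code in `modelling_llama.py`.

```python
# Assumption: the base model was trained with a 2048-token context.
BASE_CONTEXT = 2048


def rope_scale(max_position_embeddings: int, base_context: int = BASE_CONTEXT) -> float:
    """Ratio by which position indices are compressed."""
    return max_position_embeddings / base_context


def interpolated_position(position: int, scale: float) -> float:
    """Map a token position in the extended window back into the base range."""
    return position / scale


scale = rope_scale(8192)
print(scale)                               # 4.0
print(interpolated_position(8191, scale))  # 2047.75
```

Dividing positions by 4 is the same as multiplying them by 0.25, which is the convention used in `llama_rope_scaled_monkey_patch.py` (`self.scale = 1 / 4`).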

## Using other UIs: monkey patch

Provided in the repo is `llama_rope_scaled_monkey_patch.py`, written by @kaiokendev.

It can theoretically be added to any Python UI or custom code to achieve the same result as `trust_remote_code=True`. I have not tested this, and it should be superseded by using `trust_remote_code=True`, but I include it for completeness and for interest.

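A minimal sketch of how the patch might be applied in custom code is shown below. It is untested and rests on several assumptions: that `llama_rope_scaled_monkey_patch.py` is on the import path, that the installed `transformers` version is close to the one this repo was created with (`4.30.0.dev0`), and that the wrapper name `load_with_scaled_rope` is purely illustrative.

```python
def load_with_scaled_rope(model_path: str):
    """Apply the RoPE patch, then load the model with stock transformers code."""
    # Imports are deferred so that defining this function needs no heavy dependencies.
    from llama_rope_scaled_monkey_patch import replace_llama_rope_with_scaled_rope
    from transformers import AutoModelForCausalLM, AutoTokenizer

    # The patch swaps the LlamaRotaryEmbedding class in transformers,
    # so it has to run before the model is instantiated.
    replace_llama_rope_with_scaled_rope()

    tokenizer = AutoTokenizer.from_pretrained(model_path, use_fast=True)
    model = AutoModelForCausalLM.from_pretrained(
        model_path,
        torch_dtype="auto",  # keep the fp16 weights as stored
        device_map="auto",
    )
    return tokenizer, model
```

Because the patch replaces a class rather than an existing model's modules, calling it after the model has been loaded would have no effect on that model.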

<!-- footer start -->
## Discord

For further support, and discussions on these models and AI in general, join us at:

[TheBloke AI's Discord server](https://discord.gg/theblokeai)

## Thanks, and how to contribute.

Thanks to the [chirper.ai](https://chirper.ai) team!

I've had a lot of people ask if they can contribute. I enjoy providing models and helping people, and would love to be able to spend even more time doing it, as well as expanding into new projects like fine tuning/training.

If you're able and willing to contribute it will be most gratefully received and will help me to keep providing more models, and to start work on new AI projects.

Donors will get priority support on any and all AI/LLM/model questions and requests, access to a private Discord room, plus other benefits.

* Patreon: https://patreon.com/TheBlokeAI
* Ko-Fi: https://ko-fi.com/TheBlokeAI

**Special thanks to**: Luke from CarbonQuill, Aemon Algiz, Dmitriy Samsonov.

**Patreon special mentions**: zynix, ya boyyy, Trenton Dambrowitz, Imad Khwaja, Alps Aficionado, chris gileta, John Detwiler, Willem Michiel, RoA, Mano Prime, Rainer Wilmers, Fred von Graf, Matthew Berman, Ghost, Nathan LeClaire, Iucharbius, Ai Maven, Illia Dulskyi, Joseph William Delisle, Space Cruiser, Lone Striker, Karl Bernard, Eugene Pentland, Greatston Gnanesh, Jonathan Leane, Randy H, Pierre Kircher, Willian Hasse, Stephen Murray, Alex, terasurfer, Edmond Seymore, Oscar Rangel, Luke Pendergrass, Asp the Wyvern, Junyu Yang, David Flickinger, Luke, Spiking Neurons AB, subjectnull, Pyrater, Nikolai Manek, senxiiz, Ajan Kanaga, Johann-Peter Hartmann, Artur Olbinski, Kevin Schuppel, Derek Yates, Kalila, K, Talal Aujan, Khalefa Al-Ahmad, Gabriel Puliatti, John Villwock, WelcomeToTheClub, Daniel P. Andersen, Preetika Verma, Deep Realms, Fen Risland, trip7s trip, webtim, Sean Connelly, Michael Levine, Chris McCloskey, biorpg, vamX, Viktor Bowallius, Cory Kujawski.

Thank you to all my generous patrons and donors!

<!-- footer end -->

# Original model card: Kaio Ken's SuperHOT 8K

### SuperHOT Prototype 2 w/ 8K Context

This is a second prototype of SuperHOT, this time 30B with 8K context and no RLHF, using the same technique described in [the github blog](https://kaiokendev.github.io/til#extending-context-to-8k).
Tests have shown that the model does indeed leverage the extended context at 8K.

You will need to **use either the monkeypatch** or, if you are already using the monkeypatch, **change the scaling factor to 0.25 and the maximum sequence length to 8192**.

#### Looking for Merged & Quantized Models?
- 30B 4-bit CUDA: [tmpupload/superhot-30b-8k-4bit-safetensors](https://huggingface.co/tmpupload/superhot-30b-8k-4bit-safetensors)
- 30B 4-bit CUDA 128g: [tmpupload/superhot-30b-8k-4bit-128g-safetensors](https://huggingface.co/tmpupload/superhot-30b-8k-4bit-128g-safetensors)


#### Training Details
I trained the LoRA with the following configuration:
- 1200 samples (~400 samples over 2048 sequence length)
- learning rate of 3e-4
- 3 epochs
- The exported modules are:
    - q_proj
    - k_proj
    - v_proj
    - o_proj
- no bias
- Rank = 4
- Alpha = 8
- no dropout
- weight decay of 0.1
- AdamW beta1 of 0.9 and beta2 0.99, epsilon of 1e-5
- Trained on 4-bit base model

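For reference, the hyperparameters listed above can be collected in one place. This is only a sketch: the dictionary keys are illustrative names, not the author's training script or any particular library's configuration schema. The one derived quantity shown is the standard LoRA scaling factor, `alpha / rank`, by which the low-rank update is multiplied.

```python
# Values are taken from the list above; the key names are illustrative only.
lora_settings = {
    "target_modules": ["q_proj", "k_proj", "v_proj", "o_proj"],
    "rank": 4,
    "alpha": 8,
    "dropout": 0.0,
    "bias": "none",
    "learning_rate": 3e-4,
    "epochs": 3,
    "weight_decay": 0.1,
    "adam_betas": (0.9, 0.99),
    "adam_epsilon": 1e-5,
}


def lora_scaling(rank: int, alpha: float) -> float:
    """Standard LoRA scaling: the update B @ A is multiplied by alpha / rank."""
    return alpha / rank


print(lora_scaling(lora_settings["rank"], lora_settings["alpha"]))  # 2.0
```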

# Original model card: June Lee's Wizard Vicuna 13B

<!-- header start -->
<div style="width: 100%;">
    <img src="https://i.imgur.com/EBdldam.jpg" alt="TheBlokeAI" style="width: 100%; min-width: 400px; display: block; margin: auto;">
</div>
<div style="display: flex; justify-content: space-between; width: 100%;">
    <div style="display: flex; flex-direction: column; align-items: flex-start;">
        <p><a href="https://discord.gg/Jq4vkcDakD">Chat & support: my new Discord server</a></p>
    </div>
    <div style="display: flex; flex-direction: column; align-items: flex-end;">
        <p><a href="https://www.patreon.com/TheBlokeAI">Want to contribute? TheBloke's Patreon page</a></p>
    </div>
</div>
<!-- header end -->
# Wizard-Vicuna-13B-HF

This is a float16 HF format repo for [junelee's wizard-vicuna 13B](https://huggingface.co/junelee/wizard-vicuna-13b).

June Lee's repo was also HF format. The reason I've made this is that the original repo was in float32, meaning it required 52GB disk space, VRAM and RAM.

This model was converted to float16 to make it easier to load and manage.

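The 52GB figure follows from simple arithmetic, sketched below under the assumption of a round 13 billion parameters and 1 GB = 10^9 bytes. The function name is hypothetical, and the estimate ignores any loading overhead.

```python
def weights_size_gb(num_params: float, bytes_per_param: int) -> float:
    """Size of the raw weights in GB (1 GB = 1e9 bytes), ignoring any overhead."""
    return num_params * bytes_per_param / 1e9


NUM_PARAMS = 13e9  # nominal "13B" parameter count (assumed round figure)

print(weights_size_gb(NUM_PARAMS, 4))  # float32: 52.0
print(weights_size_gb(NUM_PARAMS, 2))  # float16: 26.0
```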

## Repositories available

* [4bit GPTQ models for GPU inference](https://huggingface.co/TheBloke/wizard-vicuna-13B-GPTQ).
* [4bit and 5bit GGML models for CPU inference](https://huggingface.co/TheBloke/wizard-vicuna-13B-GGML).
* [float16 HF format model for GPU inference](https://huggingface.co/TheBloke/wizard-vicuna-13B-HF).

<!-- footer start -->
## Discord

For further support, and discussions on these models and AI in general, join us at:

[TheBloke AI's Discord server](https://discord.gg/Jq4vkcDakD)

## Thanks, and how to contribute.

Thanks to the [chirper.ai](https://chirper.ai) team!

I've had a lot of people ask if they can contribute. I enjoy providing models and helping people, and would love to be able to spend even more time doing it, as well as expanding into new projects like fine tuning/training.

If you're able and willing to contribute it will be most gratefully received and will help me to keep providing more models, and to start work on new AI projects.

Donors will get priority support on any and all AI/LLM/model questions and requests, access to a private Discord room, plus other benefits.

* Patreon: https://patreon.com/TheBlokeAI
* Ko-Fi: https://ko-fi.com/TheBlokeAI

**Patreon special mentions**: Aemon Algiz, Dmitriy Samsonov, Nathan LeClaire, Trenton Dambrowitz, Mano Prime, David Flickinger, vamX, Nikolai Manek, senxiiz, Khalefa Al-Ahmad, Illia Dulskyi, Jonathan Leane, Talal Aujan, V. Lukas, Joseph William Delisle, Pyrater, Oscar Rangel, Lone Striker, Luke Pendergrass, Eugene Pentland, Sebastain Graf, Johann-Peter Hartman.

Thank you to all my generous patrons and donors!
<!-- footer end -->

# Original WizardVicuna-13B model card

GitHub page: https://github.com/melodysdreamj/WizardVicunaLM

# WizardVicunaLM
### Wizard's dataset + ChatGPT's conversation extension + Vicuna's tuning method
I am a big fan of the ideas behind WizardLM and VicunaLM. I particularly like the idea of WizardLM handling the dataset itself more deeply and broadly, as well as VicunaLM overcoming the limitations of single-turn conversations by introducing multi-round conversations. As a result, I combined these two ideas to create WizardVicunaLM. This project is highly experimental and designed for proof of concept, not for actual usage.


## Benchmark
### Approximately 7% performance improvement over VicunaLM

### Detail

The questions presented here are not from rigorous tests, but rather, I asked a few questions and requested GPT-4 to score them. The models compared were ChatGPT 3.5, WizardVicunaLM, VicunaLM, and WizardLM, in that order.

|     | gpt3.5 | wizard-vicuna-13b | vicuna-13b | wizard-7b | link     |
|-----|--------|-------------------|------------|-----------|----------|
| Q1  | 95     | 90                | 85         | 88        | [link](https://sharegpt.com/c/YdhIlby) |
| Q2  | 95     | 97                | 90         | 89        | [link](https://sharegpt.com/c/YOqOV4g) |
| Q3  | 85     | 90                | 80         | 65        | [link](https://sharegpt.com/c/uDmrcL9) |
| Q4  | 90     | 85                | 80         | 75        | [link](https://sharegpt.com/c/XBbK5MZ) |
| Q5  | 90     | 85                | 80         | 75        | [link](https://sharegpt.com/c/AQ5tgQX) |
| Q6  | 92     | 85                | 87         | 88        | [link](https://sharegpt.com/c/eVYwfIr) |
| Q7  | 95     | 90                | 85         | 92        | [link](https://sharegpt.com/c/Kqyeub4) |
| Q8  | 90     | 85                | 75         | 70        | [link](https://sharegpt.com/c/M0gIjMF) |
| Q9  | 92     | 85                | 70         | 60        | [link](https://sharegpt.com/c/fOvMtQt) |
| Q10 | 90     | 80                | 75         | 85        | [link](https://sharegpt.com/c/YYiCaUz) |
| Q11 | 90     | 85                | 75         | 65        | [link](https://sharegpt.com/c/HMkKKGU) |
| Q12 | 85     | 90                | 80         | 88        | [link](https://sharegpt.com/c/XbW6jgB) |
| Q13 | 90     | 95                | 88         | 85        | [link](https://sharegpt.com/c/JXZb7y6) |
| Q14 | 94     | 89                | 90         | 91        | [link](https://sharegpt.com/c/cTXH4IS) |
| Q15 | 90     | 85                | 88         | 87        | [link](https://sharegpt.com/c/GZiM0Yt) |
| Avg | 91     | 88                | 82         | 80        |          |

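The final row and the "approximately 7%" headline can be reproduced from the per-question scores. The sketch below assumes the last row holds rounded column means and that the improvement is the ratio of the two means, which is an interpretation rather than something the card states.

```python
# Scores copied from the table above (rows Q1 to Q15).
scores = {
    "gpt3.5":            [95, 95, 85, 90, 90, 92, 95, 90, 92, 90, 90, 85, 90, 94, 90],
    "wizard-vicuna-13b": [90, 97, 90, 85, 85, 85, 90, 85, 85, 80, 85, 90, 95, 89, 85],
    "vicuna-13b":        [85, 90, 80, 80, 80, 87, 85, 75, 70, 75, 75, 80, 88, 90, 88],
    "wizard-7b":         [88, 89, 65, 75, 75, 88, 92, 70, 60, 85, 65, 88, 85, 91, 87],
}

# Column means, one per model.
averages = {model: sum(vals) / len(vals) for model, vals in scores.items()}
for model, avg in averages.items():
    print(f"{model}: {avg:.1f} (rounded: {round(avg)})")

# Relative improvement of WizardVicunaLM over VicunaLM.
improvement = averages["wizard-vicuna-13b"] / averages["vicuna-13b"] - 1
print(f"improvement over vicuna-13b: {improvement:.1%}")
```

The rounded means come out as 91, 88, 82 and 80, matching the last row of the table, and the ratio of the WizardVicunaLM and VicunaLM means is a little over 7%.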

## Principle

We adopted the approach of WizardLM, which is to extend a single problem in more depth. However, instead of using individual instructions, we expanded it using Vicuna's conversation format and applied Vicuna's fine-tuning techniques.

Turning a single command into a rich conversation is what we've done [here](https://sharegpt.com/c/6cmxqq0).

After creating the training data, I trained the model according to the Vicuna v1.1 [training method](https://github.com/lm-sys/FastChat/blob/main/scripts/train_vicuna_13b.sh).


## Detailed Method

First, we explore and expand various areas in the same topic using the 7K conversations created by WizardLM. However, we made it in a continuous conversation format instead of the instruction format. That is, it starts with WizardLM's instruction, and then expands into various areas in one conversation using ChatGPT 3.5.

After that, we trained the model using Vicuna's fine-tuning format.

## Training Process

Trained with 8 A100 GPUs for 35 hours.

## Weights
You can see the [dataset](https://huggingface.co/datasets/junelee/wizard_vicuna_70k) we used for training and the [13b model](https://huggingface.co/junelee/wizard-vicuna-13b) on Hugging Face.

## Conclusion
If we extend the conversation to GPT-4 32K, we can expect a dramatic improvement, as we can generate 8x more conversations that are also more accurate and richer.

## License
The model is licensed under the LLaMA model license, and the dataset is licensed under the terms of OpenAI because it uses ChatGPT. Everything else is free.

## Author

[JUNE LEE](https://github.com/melodysdreamj) - He is active in Songdo Artificial Intelligence Study and GDG Songdo.

config.json

ADDED

@@ -0,0 +1,28 @@

{
  "_name_or_path": "/workspace/superhot_process/wizard-vicuna-13b/source",
  "architectures": [
    "LlamaForCausalLM"
  ],
  "bos_token_id": 1,
  "eos_token_id": 2,
  "hidden_act": "silu",
  "hidden_size": 5120,
  "initializer_range": 0.02,
  "intermediate_size": 13824,
  "max_position_embeddings": 8192,
  "model_type": "llama",
  "num_attention_heads": 40,
  "num_hidden_layers": 40,
  "pad_token_id": 0,
  "rms_norm_eps": 1e-06,
  "tie_word_embeddings": false,
  "torch_dtype": "float16",
  "transformers_version": "4.30.0.dev0",
  "use_cache": true,
  "vocab_size": 32000,
  "auto_map": {
    "AutoModel": "modelling_llama.LlamaModel",
    "AutoModelForCausalLM": "modelling_llama.LlamaForCausalLM",
    "AutoModelForSequenceClassification": "modelling_llama.LlamaForSequenceClassification"
  }
}
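
As a sanity check on these values, the sketch below estimates the parameter count implied by the configuration. It assumes the usual LLaMA layer layout (four bias-free attention projections, a three-matrix gated MLP, two RMSNorm weights per layer, and untied input and output embeddings) and does not inspect the actual checkpoint. The function name is hypothetical.

```python
import json

# Only the fields needed for the estimate, copied from config.json above.
config = json.loads("""
{
  "hidden_size": 5120,
  "intermediate_size": 13824,
  "num_hidden_layers": 40,
  "num_attention_heads": 40,
  "vocab_size": 32000,
  "tie_word_embeddings": false
}
""")


def llama_param_count(cfg: dict) -> int:
    """Estimate trainable parameters for a standard LLaMA-style decoder."""
    h = cfg["hidden_size"]
    i = cfg["intermediate_size"]
    attention = 4 * h * h   # q, k, v and o projections, no bias
    mlp = 3 * h * i         # gate, up and down projections
    norms = 2 * h           # two RMSNorm weight vectors per layer
    per_layer = attention + mlp + norms
    embeddings = cfg["vocab_size"] * h
    lm_head = 0 if cfg["tie_word_embeddings"] else cfg["vocab_size"] * h
    final_norm = h
    return cfg["num_hidden_layers"] * per_layer + embeddings + lm_head + final_norm


head_dim = config["hidden_size"] // config["num_attention_heads"]
print(head_dim)                   # 128
print(llama_param_count(config))  # 13015864320
```

The result, roughly 13.0 billion parameters, is in line with the "13B" name of the model.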

generation_config.json

ADDED

@@ -0,0 +1,7 @@

{
  "_from_model_config": true,
  "bos_token_id": 1,
  "eos_token_id": 2,
  "pad_token_id": 0,
  "transformers_version": "4.30.0.dev0"
}

huggingface-metadata.txt

ADDED

@@ -0,0 +1,8 @@

url: https://huggingface.co/TheBloke/wizard-vicuna-13B-SuperHOT-8K-fp16
branch: main
download date: 2023-06-30 04:50:50
sha256sum:
44fb43b331a35a7b6cdebcf4b3952ac435742cd6fe04c71155912aa36db9186c pytorch_model-00001-of-00003.bin
6494699287f79959d68995c0ece4638371ea6e7d28d3cc0d86bf1f01e9ea4169 pytorch_model-00002-of-00003.bin
db94f4bee1864d8bc1e38ca01f7c1ccd91ba93616025ddc027e84c4fdc341343 pytorch_model-00003-of-00003.bin
9e556afd44213b6bd1be2b850ebbbd98f5481437a8021afaf58ee7fb1818d347 tokenizer.model
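
The checksums above could be used to verify a local download. The following is a minimal sketch using only the Python standard library. The helper names and the example directory are hypothetical, and the check is left commented out because it needs the downloaded files.

```python
import hashlib
from pathlib import Path

# Checksums copied from huggingface-metadata.txt above.
EXPECTED = {
    "pytorch_model-00001-of-00003.bin": "44fb43b331a35a7b6cdebcf4b3952ac435742cd6fe04c71155912aa36db9186c",
    "pytorch_model-00002-of-00003.bin": "6494699287f79959d68995c0ece4638371ea6e7d28d3cc0d86bf1f01e9ea4169",
    "pytorch_model-00003-of-00003.bin": "db94f4bee1864d8bc1e38ca01f7c1ccd91ba93616025ddc027e84c4fdc341343",
    "tokenizer.model": "9e556afd44213b6bd1be2b850ebbbd98f5481437a8021afaf58ee7fb1818d347",
}


def sha256_of_file(path, chunk_size: int = 1 << 20) -> str:
    """Hash a file in chunks so multi-gigabyte shards do not need to fit in RAM."""
    digest = hashlib.sha256()
    with open(path, "rb") as handle:
        for chunk in iter(lambda: handle.read(chunk_size), b""):
            digest.update(chunk)
    return digest.hexdigest()


def verify(expected: dict, directory=".") -> dict:
    """Return {filename: True/False} for each expected checksum."""
    return {
        name: sha256_of_file(Path(directory) / name) == checksum
        for name, checksum in expected.items()
    }


# Example (hypothetical local path):
# print(verify(EXPECTED, "/path/to/wizard-vicuna-13B-SuperHOT-8K-fp16"))
```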

llama_rope_scaled_monkey_patch.py

ADDED

@@ -0,0 +1,65 @@

import torch
import transformers
import transformers.models.llama.modeling_llama
from einops import rearrange
import random

# This monkey patch file is not needed if using ExLlama, or if using `trust_remote_code=True`

class ScaledRotaryEmbedding(torch.nn.Module):
    def __init__(self, dim, max_position_embeddings=2048, base=10000, device=None):
        super().__init__()
        inv_freq = 1.0 / (base ** (torch.arange(0, dim, 2).float().to(device) / dim))
        self.register_buffer("inv_freq", inv_freq)

        # Hard-coded: this overrides the constructor argument
        max_position_embeddings = 8192

        # Build here to make `torch.jit.trace` work.
        self.max_seq_len_cached = max_position_embeddings
        t = torch.arange(
            self.max_seq_len_cached,
            device=self.inv_freq.device,
            dtype=self.inv_freq.dtype,
        )

        # Compress position indices by a factor of 4
        self.scale = 1 / 4
        t *= self.scale

        freqs = torch.einsum("i,j->ij", t, self.inv_freq)
        # Different from paper, but it uses a different permutation in order to obtain the same calculation
        emb = torch.cat((freqs, freqs), dim=-1)
        self.register_buffer(
            "cos_cached", emb.cos()[None, None, :, :], persistent=False
        )
        self.register_buffer(
            "sin_cached", emb.sin()[None, None, :, :], persistent=False
        )

    def forward(self, x, seq_len=None):
        # x: [bs, num_attention_heads, seq_len, head_size]
        # This `if` block is unlikely to be run after we build sin/cos in `__init__`. Keep the logic here just in case.
        if seq_len > self.max_seq_len_cached:
            self.max_seq_len_cached = seq_len
            t = torch.arange(
                self.max_seq_len_cached, device=x.device, dtype=self.inv_freq.dtype
            )
            t *= self.scale
            freqs = torch.einsum("i,j->ij", t, self.inv_freq)
            # Different from paper, but it uses a different permutation in order to obtain the same calculation
            emb = torch.cat((freqs, freqs), dim=-1).to(x.device)
            self.register_buffer(
                "cos_cached", emb.cos()[None, None, :, :], persistent=False
            )
            self.register_buffer(
                "sin_cached", emb.sin()[None, None, :, :], persistent=False
            )
        return (
            self.cos_cached[:, :, :seq_len, ...].to(dtype=x.dtype),
            self.sin_cached[:, :, :seq_len, ...].to(dtype=x.dtype),
        )


def replace_llama_rope_with_scaled_rope():
    transformers.models.llama.modeling_llama.LlamaRotaryEmbedding = (
        ScaledRotaryEmbedding
    )

modelling_llama.py

ADDED

|

@@ -0,0 +1,894 @@

+# coding=utf-8
+# Copyright 2022 EleutherAI and the HuggingFace Inc. team. All rights reserved.
+#
+# This code is based on EleutherAI's GPT-NeoX library and the GPT-NeoX
+# and OPT implementations in this library. It has been modified from its
+# original forms to accommodate minor architectural differences compared
+# to GPT-NeoX and OPT used by the Meta AI team that trained the model.
+#
+# Licensed under the Apache License, Version 2.0 (the "License");
+# you may not use this file except in compliance with the License.
+# You may obtain a copy of the License at
+#
+#     http://www.apache.org/licenses/LICENSE-2.0
+#
+# Unless required by applicable law or agreed to in writing, software
+# distributed under the License is distributed on an "AS IS" BASIS,
+# WITHOUT WARRANTIES OR CONDITIONS OF ANY KIND, either express or implied.
+# See the License for the specific language governing permissions and
+# limitations under the License.
+""" PyTorch LLaMA model."""
+import math
+from typing import List, Optional, Tuple, Union
+
+import torch
+import torch.utils.checkpoint
+from torch import nn
+from torch.nn import BCEWithLogitsLoss, CrossEntropyLoss, MSELoss
+
+from transformers.activations import ACT2FN
+from transformers.modeling_outputs import BaseModelOutputWithPast, CausalLMOutputWithPast, SequenceClassifierOutputWithPast
+from transformers.modeling_utils import PreTrainedModel
+from transformers.utils import add_start_docstrings, add_start_docstrings_to_model_forward, logging, replace_return_docstrings
+from transformers.models.llama.modeling_llama import LlamaConfig
+
+logger = logging.get_logger(__name__)
+
+_CONFIG_FOR_DOC = "LlamaConfig"
+
+
+# Copied from transformers.models.bart.modeling_bart._make_causal_mask
+def _make_causal_mask(
+    input_ids_shape: torch.Size, dtype: torch.dtype, device: torch.device, past_key_values_length: int = 0
+):
+    """
+    Make causal mask used for bi-directional self-attention.
+    """
+    bsz, tgt_len = input_ids_shape
+    mask = torch.full((tgt_len, tgt_len), torch.tensor(torch.finfo(dtype).min, device=device), device=device)
+    mask_cond = torch.arange(mask.size(-1), device=device)
+    mask.masked_fill_(mask_cond < (mask_cond + 1).view(mask.size(-1), 1), 0)
+    mask = mask.to(dtype)
+
+    if past_key_values_length > 0:
+        mask = torch.cat([torch.zeros(tgt_len, past_key_values_length, dtype=dtype, device=device), mask], dim=-1)
+    return mask[None, None, :, :].expand(bsz, 1, tgt_len, tgt_len + past_key_values_length)
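
Reviewer note: the layout produced by `_make_causal_mask` may be easier to see without tensors. The following is a minimal pure-Python sketch of the same pattern, not the committed code. The helper name `causal_mask_rows` and the constant `NEG` are hypothetical, and `float("-inf")` only stands in for `torch.finfo(dtype).min`, whose real value depends on the dtype.

```python
# Illustrative stand-in for torch.finfo(dtype).min (an assumption, not the real value).
NEG = float("-inf")


def causal_mask_rows(tgt_len, past_len=0, neg=NEG):
    """Return a tgt_len x (past_len + tgt_len) additive mask as nested lists.

    Entry [i][j] is 0.0 where query i may attend to key j and `neg` otherwise.
    Cached (past) key positions come first and are always visible.
    """
    rows = []
    for i in range(tgt_len):
        past = [0.0] * past_len
        # Key j is visible to query i when j <= i, mirroring
        # `mask_cond < (mask_cond + 1).view(n, 1)` in the tensor version.
        current = [0.0 if j <= i else neg for j in range(tgt_len)]
        rows.append(past + current)
    return rows


if __name__ == "__main__":
    for row in causal_mask_rows(3, past_len=2):
        print(row)
```

The tensor version additionally broadcasts this matrix to `[bsz, 1, tgt_len, tgt_len + past_key_values_length]`; the sketch leaves the batch and head dimensions out.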

+
+
+# Copied from transformers.models.bart.modeling_bart._expand_mask
+def _expand_mask(mask: torch.Tensor, dtype: torch.dtype, tgt_len: Optional[int] = None):
+    """
+    Expands attention_mask from `[bsz, seq_len]` to `[bsz, 1, tgt_seq_len, src_seq_len]`.
+    """
+    bsz, src_len = mask.size()
+    tgt_len = tgt_len if tgt_len is not None else src_len
+
+    expanded_mask = mask[:, None, None, :].expand(bsz, 1, tgt_len, src_len).to(dtype)
+
+    inverted_mask = 1.0 - expanded_mask
+
+    return inverted_mask.masked_fill(inverted_mask.to(torch.bool), torch.finfo(dtype).min)
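
Reviewer note: `_expand_mask` turns a 1/0 padding mask into an additive mask, so padded key positions receive a very negative value and kept positions receive 0. A rough pure-Python sketch of that conversion follows, assuming the input contains only 0 and 1. The helper name `expand_padding_mask` and the constant `NEG` are hypothetical, with `float("-inf")` standing in for `torch.finfo(dtype).min`.

```python
# Illustrative stand-in for torch.finfo(dtype).min (an assumption, not the real value).
NEG = float("-inf")


def expand_padding_mask(mask, tgt_len=None, neg=NEG):
    """Convert a [bsz][src_len] 1/0 mask into a [bsz][1][tgt_len][src_len] additive mask.

    A 1 (keep) becomes 0.0 and a 0 (padding) becomes `neg`, matching
    `1.0 - mask` followed by `masked_fill(..., min)` in the tensor version.
    """
    out = []
    for row in mask:
        n = tgt_len if tgt_len is not None else len(row)
        additive = [0.0 if keep == 1 else neg for keep in row]
        # Every query position shares the same per-key row.
        out.append([[list(additive) for _ in range(n)]])
    return out


if __name__ == "__main__":
    print(expand_padding_mask([[1, 1, 0]]))
```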

+
+
+class LlamaRMSNorm(nn.Module):
+    def __init__(self, hidden_size, eps=1e-6):
+        """
+        LlamaRMSNorm is equivalent to T5LayerNorm
+        """
+        super().__init__()
+        self.weight = nn.Parameter(torch.ones(hidden_size))
+        self.variance_epsilon = eps
+
+    def forward(self, hidden_states):
+        input_dtype = hidden_states.dtype
+        variance = hidden_states.to(torch.float32).pow(2).mean(-1, keepdim=True)
+        hidden_states = hidden_states * torch.rsqrt(variance + self.variance_epsilon)
+
+        return (self.weight * hidden_states).to(input_dtype)
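
Reviewer note: the quantity named `variance` in `LlamaRMSNorm.forward` is the mean of squares along the last dimension, with no mean subtraction. A minimal pure-Python sketch of the same arithmetic on a single vector is shown below; the function name `rms_norm` is hypothetical, and the dtype handling of the tensor version is omitted.

```python
import math


def rms_norm(x, weight, eps=1e-6):
    """Scale `x` by the reciprocal root-mean-square of its entries, then by `weight`."""
    mean_sq = sum(v * v for v in x) / len(x)   # plays the role of `variance`
    inv_rms = 1.0 / math.sqrt(mean_sq + eps)   # plays the role of torch.rsqrt(...)
    return [w * v * inv_rms for w, v in zip(weight, x)]


if __name__ == "__main__":
    y = rms_norm([3.0, 4.0], weight=[1.0, 1.0])
    print(y)
```

With unit weights the output has a mean square very close to 1, which is the property the layer enforces before the learned per-channel scale is applied.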

+
+
+class LlamaRotaryEmbedding(torch.nn.Module):
+    def __init__(self, dim, max_position_embeddings=2048, base=10000, scale=1, device=None):
+        super().__init__()
+        inv_freq = 1.0 / (base ** (torch.arange(0, dim, 2).float().to(device) / dim))
+        self.register_buffer("inv_freq", inv_freq)
+
+        # Build here to make `torch.jit.trace` work.
+        self.max_seq_len_cached = max_position_embeddings
+        t = torch.arange(self.max_seq_len_cached, device=self.inv_freq.device, dtype=self.inv_freq.dtype)
+
+        self.scale = scale
+        t *= self.scale
+
+        freqs = torch.einsum("i,j->ij", t, self.inv_freq)
+        # Different from paper, but it uses a different permutation in order to obtain the same calculation
+        emb = torch.cat((freqs, freqs), dim=-1)
+        dtype = torch.get_default_dtype()
+        self.register_buffer("cos_cached", emb.cos()[None, None, :, :].to(dtype), persistent=False)
+        self.register_buffer("sin_cached", emb.sin()[None, None, :, :].to(dtype), persistent=False)
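
Reviewer note: the `scale` argument multiplies the position indices before the rotary angles are computed, so a scale below 1 compresses positions into a smaller angular range. The sketch below reproduces only the angle table in pure Python. The function name `rotary_angles` is hypothetical, and `scale=0.25` is chosen purely for illustration; this part of the diff does not state which value the model uses.

```python
def rotary_angles(dim, num_positions, base=10000.0, scale=1.0):
    """Return angles[p][k] = (p * scale) * base ** (-2k / dim) for k in range(dim // 2)."""
    # Same inverse frequencies as `inv_freq` in the module: one per pair of channels.
    inv_freq = [1.0 / (base ** (i / dim)) for i in range(0, dim, 2)]
    # Outer product of scaled positions and inverse frequencies,
    # mirroring `torch.einsum("i,j->ij", t, inv_freq)` after `t *= scale`.
    return [[(p * scale) * f for f in inv_freq] for p in range(num_positions)]


if __name__ == "__main__":
    plain = rotary_angles(dim=8, num_positions=5)
    scaled = rotary_angles(dim=8, num_positions=17, scale=0.25)
    # With scale=0.25, position 16 lands on the angles of unscaled position 4.
    print(plain[4])
    print(scaled[16])
```

The module then concatenates the table with itself along the last axis and caches its cosine and sine; the sketch stops at the angles.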

+
+    def forward(self, x, seq_len=None):
+        # x: [bs, num_attention_heads, seq_len, head_size]
+        # This `if` block is unlikely to be run after we build sin/cos in `__init__`. Keep the logic here just in case.
+        if seq_len > self.max_seq_len_cached:
+            self.max_seq_len_cached = seq_len
+            t = torch.arange(self.max_seq_len_cached, device=x.device, dtype=self.inv_freq.dtype)
+            freqs = torch.einsum("i,j->ij", t, self.inv_freq)

|

| 117 |

+