Gilad Avidan

committed on

Commit

•

e724e19

1

Parent(s):

9d88e8e

initial commit

- README.md +121 -0

- config.json +249 -0

- handler.py +36 -0

- preprocessor_config.json +28 -0

- pytorch_model.bin +3 -0

- test_handler.py +9 -0

- tf_model.h5 +3 -0

README.md

ADDED

|

@@ -0,0 +1,121 @@


| 1 |

+

---

|

| 2 |

+

license: apache-2.0

|

| 3 |

+

---

|

| 4 |

+

|

| 5 |

+

# Model Card for Segment Anything Model (SAM) - ViT Huge (ViT-H) version

|

| 6 |

+

|

| 7 |

+

<p>

|

| 8 |

+

<img src="https://huggingface.co/datasets/huggingface/documentation-images/resolve/main/transformers/model_doc/sam-architecture.png" alt="Model architecture">

|

| 9 |

+

<em> Detailed architecture of Segment Anything Model (SAM).</em>

|

| 10 |

+

</p>

|

| 11 |

+

|

| 12 |

+

|

| 13 |

+

# Table of Contents

|

| 14 |

+

|

| 15 |

+

0. [TL;DR](#TL;DR)

|

| 16 |

+

1. [Model Details](#model-details)

|

| 17 |

+

2. [Usage](#usage)

|

| 18 |

+

3. [Citation](#citation)

|

| 19 |

+

|

| 20 |

+

# TL;DR

|

| 21 |

+

|

| 22 |

+

|

| 23 |

+

[Link to original repository](https://github.com/facebookresearch/segment-anything)

|

| 24 |

+

|

| 25 |

+

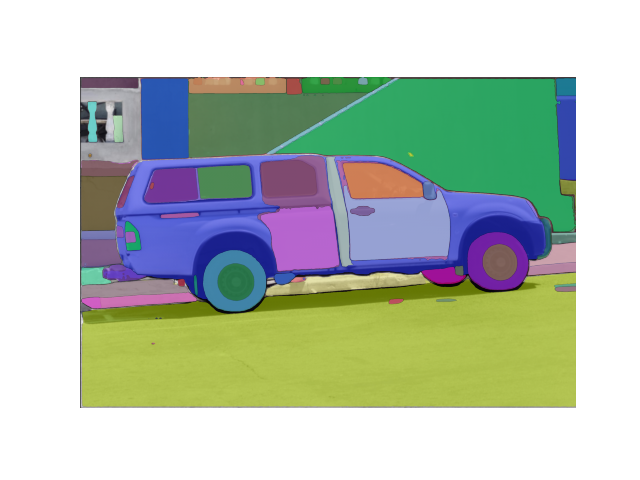

| <img src="https://huggingface.co/datasets/huggingface/documentation-images/resolve/main/transformers/model_doc/sam-beancans.png" alt="Snow" width="600" height="600"> | <img src="https://huggingface.co/datasets/huggingface/documentation-images/resolve/main/transformers/model_doc/sam-dog-masks.png" alt="Forest" width="600" height="600"> | <img src="https://huggingface.co/datasets/huggingface/documentation-images/resolve/main/transformers/model_doc/sam-car-seg.png" alt="Mountains" width="600" height="600"> |

|

| 26 |

+

|---------------------------------------------------------------------------------------------------------------------------------------|--------------------------------------------------------------------------------------------------------------------------------|--------------------------------------------------------------------------------------------------------------------------------------------------|

|

| 27 |

+

|

| 28 |

+

|

| 29 |

+

The **Segment Anything Model (SAM)** produces high quality object masks from input prompts such as points or boxes, and it can be used to generate masks for all objects in an image. It has been trained on a [dataset](https://segment-anything.com/dataset/index.html) of 11 million images and 1.1 billion masks, and has strong zero-shot performance on a variety of segmentation tasks.

|

| 30 |

+

The abstract of the paper states:

|

| 31 |

+

|

| 32 |

+

> We introduce the Segment Anything (SA) project: a new task, model, and dataset for image segmentation. Using our efficient model in a data collection loop, we built the largest segmentation dataset to date (by far), with over 1 billion masks on 11M licensed and privacy respecting images. The model is designed and trained to be promptable, so it can transfer zero-shot to new image distributions and tasks. We evaluate its capabilities on numerous tasks and find that its zero-shot performance is impressive -- often competitive with or even superior to prior fully supervised results. We are releasing the Segment Anything Model (SAM) and corresponding dataset (SA-1B) of 1B masks and 11M images at [https://segment-anything.com](https://segment-anything.com) to foster research into foundation models for computer vision.

|

| 33 |

+

|

| 34 |

+

**Disclaimer**: Content from **this** model card has been written by the Hugging Face team, and parts of it were copy pasted from the original [SAM model card](https://github.com/facebookresearch/segment-anything).

|

| 35 |

+

|

# Model Details

The SAM model is made up of 4 modules:
- The `VisionEncoder`: a ViT-based image encoder. It computes the image embeddings using attention on patches of the image, with relative positional embeddings.
- The `PromptEncoder`: generates embeddings for points and bounding boxes.
- The `MaskDecoder`: a two-way transformer that performs cross-attention between the image embeddings and the point embeddings, and between the point embeddings and the image embeddings. The outputs are fed to the `Neck`.
- The `Neck`: predicts the output masks based on the contextualized masks produced by the `MaskDecoder`.

# Usage


## Prompted-Mask-Generation

```python
from PIL import Image
import requests
import torch
from transformers import SamModel, SamProcessor

# Use the GPU when available; the model and its inputs must be on the same device.
device = "cuda" if torch.cuda.is_available() else "cpu"
model = SamModel.from_pretrained("facebook/sam-vit-huge").to(device)
processor = SamProcessor.from_pretrained("facebook/sam-vit-huge")

img_url = "https://huggingface.co/ybelkada/segment-anything/resolve/main/assets/car.png"
raw_image = Image.open(requests.get(img_url, stream=True).raw).convert("RGB")
input_points = [[[450, 600]]] # 2D localization of a window
```


```python
inputs = processor(raw_image, input_points=input_points, return_tensors="pt").to(device)
with torch.no_grad():  # inference only, no gradients needed
    outputs = model(**inputs)
# Resize the predicted masks back to the original image size.
masks = processor.image_processor.post_process_masks(outputs.pred_masks.cpu(), inputs["original_sizes"].cpu(), inputs["reshaped_input_sizes"].cpu())
scores = outputs.iou_scores
```
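The snippet above returns several candidate masks together with `scores`, the predicted IoU for each candidate (the configuration in this commit sets `num_multimask_outputs` to 3). A common follow-up, sketched here with plain Python lists, is to keep the candidate with the highest score. The score values below are hypothetical and only stand in for one prompt's row of `outputs.iou_scores`; `best_mask_index` is an illustrative helper, not part of `transformers`.

```python
def best_mask_index(candidate_scores):
    """Return the index of the highest predicted-IoU score."""
    return max(range(len(candidate_scores)), key=lambda i: candidate_scores[i])


# Hypothetical scores for the three candidate masks of a single point prompt.
example_scores = [0.71, 0.93, 0.88]
best = best_mask_index(example_scores)
print(f"keep candidate {best} (predicted IoU {example_scores[best]:.2f})")
```

With real outputs, the same index could be used to pick the matching mask from the post-processed `masks`.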

Among other arguments to generate masks, you can pass 2D points at the approximate position of your object of interest, a bounding box wrapping the object of interest (given as the x, y coordinates of the top-left and bottom-right corners of the box), or a segmentation mask. At the time of writing, passing text as input is not supported by the official model, according to [the official repository](https://github.com/facebookresearch/segment-anything/issues/4#issuecomment-1497626844).
For more details, refer to this notebook, which walks through how to use the model with a visual example!

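Because the corner order of a box prompt is easy to get wrong, a small helper can normalize any two opposite corners into `[x_min, y_min, x_max, y_max]` order. This is a minimal sketch: `to_xyxy` is a hypothetical helper, the coordinates are made up, and the nesting of `input_boxes` (one list per image, one box per object) is an assumption to check against the processor documentation.

```python
def to_xyxy(corner_a, corner_b):
    """Order two opposite corners as [x_min, y_min, x_max, y_max]."""
    (xa, ya), (xb, yb) = corner_a, corner_b
    return [min(xa, xb), min(ya, yb), max(xa, xb), max(ya, yb)]


# Corners given in the "wrong" order (top-right, bottom-left) are normalized.
box = to_xyxy((900, 300), (120, 740))
input_boxes = [[box]]  # assumed nesting: batch -> boxes -> 4 coordinates
print(input_boxes)
```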

## Automatic-Mask-Generation

The model can be used for generating segmentation masks in a "zero-shot" fashion, given an input image. The model is automatically prompted with a grid of `1024` points,
which are all fed to the model.

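To make the point grid concrete, the sketch below builds 1024 prompt points as a regular 32 x 32 grid of normalized coordinates, each centered in its cell. The 32 x 32 layout and the cell-centered offsets are assumptions (the text above only states the total of 1024), and `build_point_grid` is an illustrative helper rather than the pipeline's own code.

```python
def build_point_grid(points_per_side):
    """Return cell-centered (x, y) points in [0, 1] x [0, 1], row by row."""
    offset = 1.0 / (2 * points_per_side)  # half a cell, so points sit at cell centers
    step = 1.0 / points_per_side
    coords = [offset + i * step for i in range(points_per_side)]
    return [(x, y) for y in coords for x in coords]


grid = build_point_grid(32)
print(len(grid))  # 32 * 32 points

# Scale normalized points to pixel coordinates for a given image size.
width, height = 1800, 1200
pixel_points = [(x * width, y * height) for x, y in grid]
```

Batching these points is what the `points_per_batch` argument controls: fewer points per batch lowers peak memory at the cost of more forward passes.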

The pipeline is made for automatic mask generation. The following snippet demonstrates how easily you can run it (on any device; simply pass an appropriate `points_per_batch` argument):
```python
from transformers import pipeline

# device=0 selects the first GPU; lower points_per_batch if you run out of memory.
generator = pipeline("mask-generation", model="facebook/sam-vit-huge", device=0, points_per_batch=256)
image_url = "https://huggingface.co/ybelkada/segment-anything/resolve/main/assets/car.png"
outputs = generator(image_url, points_per_batch=256)
```

Now to display the image:
```python
import matplotlib.pyplot as plt
from PIL import Image
import numpy as np

def show_mask(mask, ax, random_color=False):
    # Pick an RGBA overlay color (alpha 0.6), either random or a fixed blue.
    if random_color:
        color = np.concatenate([np.random.random(3), np.array([0.6])], axis=0)
    else:
        color = np.array([30 / 255, 144 / 255, 255 / 255, 0.6])
    h, w = mask.shape[-2:]
    mask_image = mask.reshape(h, w, 1) * color.reshape(1, 1, -1)
    ax.imshow(mask_image)


# raw_image is the PIL image loaded in the prompted example above.
plt.imshow(np.array(raw_image))
ax = plt.gca()
for mask in outputs["masks"]:
    show_mask(mask, ax=ax, random_color=True)
plt.axis("off")
plt.show()
```

This should give you the following



# Citation

If you use this model, please use the following BibTeX entry.

```
@article{kirillov2023segany,
  title={Segment Anything},
  author={Kirillov, Alexander and Mintun, Eric and Ravi, Nikhila and Mao, Hanzi and Rolland, Chloe and Gustafson, Laura and Xiao, Tete and Whitehead, Spencer and Berg, Alexander C. and Lo, Wan-Yen and Doll{\'a}r, Piotr and Girshick, Ross},
  journal={arXiv:2304.02643},
  year={2023}
}
```

config.json

ADDED

|

@@ -0,0 +1,249 @@


{
  "_commit_hash": null,
  "_name_or_path": "/tmp/facebook/sam-vit-huge",
  "architectures": [
    "SamModel"
  ],
  "initializer_range": 0.02,
  "mask_decoder_config": {
    "_name_or_path": "",
    "add_cross_attention": false,
    "architectures": null,
    "attention_downsample_rate": 2,
    "bad_words_ids": null,
    "begin_suppress_tokens": null,
    "bos_token_id": null,
    "chunk_size_feed_forward": 0,
    "cross_attention_hidden_size": null,
    "decoder_start_token_id": null,
    "diversity_penalty": 0.0,
    "do_sample": false,
    "early_stopping": false,
    "encoder_no_repeat_ngram_size": 0,
    "eos_token_id": null,
    "exponential_decay_length_penalty": null,
    "finetuning_task": null,
    "forced_bos_token_id": null,
    "forced_eos_token_id": null,
    "hidden_act": "relu",
    "hidden_size": 256,
    "id2label": {
      "0": "LABEL_0",
      "1": "LABEL_1"
    },
    "iou_head_depth": 3,
    "iou_head_hidden_dim": 256,
    "is_decoder": false,
    "is_encoder_decoder": false,
    "label2id": {
      "LABEL_0": 0,
      "LABEL_1": 1
    },
    "layer_norm_eps": 1e-06,
    "length_penalty": 1.0,
    "max_length": 20,
    "min_length": 0,
    "mlp_dim": 2048,
    "model_type": "",
    "no_repeat_ngram_size": 0,
    "num_attention_heads": 8,
    "num_beam_groups": 1,
    "num_beams": 1,
    "num_hidden_layers": 2,
    "num_multimask_outputs": 3,
    "num_return_sequences": 1,
    "output_attentions": false,
    "output_hidden_states": false,
    "output_scores": false,
    "pad_token_id": null,
    "prefix": null,
    "problem_type": null,
    "pruned_heads": {},
    "remove_invalid_values": false,
    "repetition_penalty": 1.0,
    "return_dict": true,
    "return_dict_in_generate": false,
    "sep_token_id": null,
    "suppress_tokens": null,
    "task_specific_params": null,
    "temperature": 1.0,
    "tf_legacy_loss": false,
    "tie_encoder_decoder": false,
    "tie_word_embeddings": true,
    "tokenizer_class": null,
    "top_k": 50,
    "top_p": 1.0,
    "torch_dtype": null,
    "torchscript": false,
    "transformers_version": "4.29.0.dev0",
    "typical_p": 1.0,
    "use_bfloat16": false
  },

  "model_type": "sam",
  "prompt_encoder_config": {
    "_name_or_path": "",
    "add_cross_attention": false,
    "architectures": null,
    "bad_words_ids": null,
    "begin_suppress_tokens": null,
    "bos_token_id": null,
    "chunk_size_feed_forward": 0,
    "cross_attention_hidden_size": null,
    "decoder_start_token_id": null,
    "diversity_penalty": 0.0,
    "do_sample": false,
    "early_stopping": false,
    "encoder_no_repeat_ngram_size": 0,
    "eos_token_id": null,
    "exponential_decay_length_penalty": null,
    "finetuning_task": null,
    "forced_bos_token_id": null,
    "forced_eos_token_id": null,
    "hidden_act": "gelu",
    "hidden_size": 256,
    "id2label": {
      "0": "LABEL_0",
      "1": "LABEL_1"
    },
    "image_embedding_size": 64,
    "image_size": 1024,
    "is_decoder": false,
    "is_encoder_decoder": false,
    "label2id": {
      "LABEL_0": 0,
      "LABEL_1": 1
    },
    "layer_norm_eps": 1e-06,
    "length_penalty": 1.0,
    "mask_input_channels": 16,
    "max_length": 20,
    "min_length": 0,
    "model_type": "",
    "no_repeat_ngram_size": 0,
    "num_beam_groups": 1,
    "num_beams": 1,
    "num_point_embeddings": 4,
    "num_return_sequences": 1,
    "output_attentions": false,
    "output_hidden_states": false,
    "output_scores": false,
    "pad_token_id": null,
    "patch_size": 16,
    "prefix": null,
    "problem_type": null,
    "pruned_heads": {},
    "remove_invalid_values": false,
    "repetition_penalty": 1.0,
    "return_dict": true,
    "return_dict_in_generate": false,
    "sep_token_id": null,
    "suppress_tokens": null,
    "task_specific_params": null,
    "temperature": 1.0,
    "tf_legacy_loss": false,
    "tie_encoder_decoder": false,
    "tie_word_embeddings": true,
    "tokenizer_class": null,
    "top_k": 50,
    "top_p": 1.0,
    "torch_dtype": null,
    "torchscript": false,
    "transformers_version": "4.29.0.dev0",
    "typical_p": 1.0,
    "use_bfloat16": false
  },

  "torch_dtype": "float32",
  "transformers_version": null,
  "vision_config": {
    "_name_or_path": "",
    "add_cross_attention": false,
    "architectures": null,
    "attention_dropout": 0.0,
    "bad_words_ids": null,
    "begin_suppress_tokens": null,
    "bos_token_id": null,
    "chunk_size_feed_forward": 0,
    "cross_attention_hidden_size": null,
    "decoder_start_token_id": null,
    "diversity_penalty": 0.0,
    "do_sample": false,
    "dropout": 0.0,
    "early_stopping": false,
    "encoder_no_repeat_ngram_size": 0,
    "eos_token_id": null,
    "exponential_decay_length_penalty": null,
    "finetuning_task": null,
    "forced_bos_token_id": null,
    "forced_eos_token_id": null,
    "global_attn_indexes": [
      7,
      15,
      23,
      31
    ],
    "hidden_act": "gelu",
    "hidden_size": 1280,
    "id2label": {
      "0": "LABEL_0",
      "1": "LABEL_1"
    },
    "image_size": 1024,
    "initializer_factor": 1.0,
    "initializer_range": 1e-10,
    "intermediate_size": 6144,
    "is_decoder": false,
    "is_encoder_decoder": false,
    "label2id": {
      "LABEL_0": 0,
      "LABEL_1": 1
    },
    "layer_norm_eps": 1e-06,
    "length_penalty": 1.0,
    "max_length": 20,
    "min_length": 0,
    "mlp_dim": 5120,
    "mlp_ratio": 4.0,
    "model_type": "",
    "no_repeat_ngram_size": 0,
    "num_attention_heads": 16,
    "num_beam_groups": 1,
    "num_beams": 1,
    "num_channels": 3,
    "num_hidden_layers": 32,
    "num_pos_feats": 128,
    "num_return_sequences": 1,
    "output_attentions": false,
    "output_channels": 256,
    "output_hidden_states": false,
    "output_scores": false,
    "pad_token_id": null,
    "patch_size": 16,
    "prefix": null,
    "problem_type": null,
    "projection_dim": 512,
    "pruned_heads": {},
    "qkv_bias": true,
    "remove_invalid_values": false,
    "repetition_penalty": 1.0,
    "return_dict": true,
    "return_dict_in_generate": false,
    "sep_token_id": null,
    "suppress_tokens": null,
    "task_specific_params": null,
    "temperature": 1.0,
    "tf_legacy_loss": false,
    "tie_encoder_decoder": false,
    "tie_word_embeddings": true,
    "tokenizer_class": null,
    "top_k": 50,
    "top_p": 1.0,
    "torch_dtype": null,
    "torchscript": false,
    "transformers_version": "4.29.0.dev0",
    "typical_p": 1.0,
    "use_abs_pos": true,
    "use_bfloat16": false,
    "use_rel_pos": true,
    "window_size": 14
  }
}
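Several values in this configuration appear to be tied together by simple arithmetic. The sketch below copies a few fields from `vision_config` and `prompt_encoder_config` and checks those relationships; reading `image_embedding_size` as the number of patches per image side and `mlp_dim` as `hidden_size * mlp_ratio` is an interpretation of the numbers, not something the file states.

```python
# Values copied from the vision_config and prompt_encoder_config sections.
vision_config = {
    "image_size": 1024,
    "patch_size": 16,
    "hidden_size": 1280,
    "num_attention_heads": 16,
    "mlp_ratio": 4.0,
    "mlp_dim": 5120,
}
prompt_encoder_config = {"image_embedding_size": 64}

# A 1024-pixel side cut into 16-pixel patches gives 64 patches per side.
patches_per_side = vision_config["image_size"] // vision_config["patch_size"]
num_patches = patches_per_side ** 2

# Per-head width of the attention layers in the vision encoder.
head_dim = vision_config["hidden_size"] // vision_config["num_attention_heads"]

# MLP width implied by the hidden size and the MLP ratio.
expected_mlp_dim = int(vision_config["hidden_size"] * vision_config["mlp_ratio"])

print(patches_per_side, num_patches, head_dim, expected_mlp_dim)
```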

handler.py

ADDED

|

@@ -0,0 +1,36 @@


import base64
from io import BytesIO
from typing import Dict, Any
from PIL import Image
import torch
from transformers import SamModel, SamProcessor

class EndpointHandler():
    def __init__(self, path=""):
        # Preload all the elements you are going to need at inference.
        self.device = torch.device("cuda" if torch.cuda.is_available() else "cpu")
        self.model = SamModel.from_pretrained("facebook/sam-vit-huge").to(self.device)
        self.processor = SamProcessor.from_pretrained("facebook/sam-vit-huge")

    def __call__(self, data: Dict[str, Any]) -> Dict[str, Any]:
        """
        data args:
            inputs (:obj:`dict`): holds a base64-encoded `image` and a list of `points`
            kwargs
        Return:
            A :obj:`dict` with `masks` and `scores`: will be serialized and returned
        """

        # Fall back to the whole payload when there is no "inputs" wrapper.
        inputs = data.pop("inputs", data)
        parameters = data.pop("parameters", {"mode": "image"})

        # Decode base64 image to PIL
        image = Image.open(BytesIO(base64.b64decode(inputs['image']))).convert("RGB")
        input_points = [inputs['points']] # 2D localization of a window

        model_inputs = self.processor(image, input_points=input_points, return_tensors="pt").to(self.device)
        with torch.no_grad():  # inference only, no gradients needed
            outputs = self.model(**model_inputs)
        # Resize the predicted masks back to the original image size.
        masks = self.processor.image_processor.post_process_masks(outputs.pred_masks.cpu(), model_inputs["original_sizes"].cpu(), model_inputs["reshaped_input_sizes"].cpu())
        scores = outputs.iou_scores

        return {"masks": masks, "scores": scores}
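On the client side, the handler above expects a base64-encoded image and a list of points. The following is a minimal sketch of building such a request body with the standard library only. Wrapping the fields under an `"inputs"` key is an assumption that matches the handler's `data.pop("inputs", data)`; `build_payload` is a hypothetical helper, and the bytes used here are a placeholder rather than a real image.

```python
import base64
import json


def build_payload(image_bytes, points):
    """Build a JSON-serializable request body for the handler."""
    return {
        "inputs": {
            # base64 text is JSON-safe, unlike raw bytes
            "image": base64.b64encode(image_bytes).decode("ascii"),
            "points": points,
        }
    }


# Placeholder bytes; in practice read them from an image file such as car.png.
fake_image_bytes = b"not-a-real-image"
payload = build_payload(fake_image_bytes, [[450, 600]])
body = json.dumps(payload)
print(body[:60])
```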

preprocessor_config.json

ADDED

|

@@ -0,0 +1,28 @@


{
  "do_convert_rgb": true,
  "do_normalize": true,
  "do_pad": true,
  "do_rescale": true,
  "do_resize": true,
  "image_mean": [
    0.485,
    0.456,
    0.406
  ],
  "image_processor_type": "SamImageProcessor",
  "image_std": [
    0.229,
    0.224,
    0.225
  ],
  "pad_size": {
    "height": 1024,
    "width": 1024
  },
  "processor_class": "SamProcessor",
  "resample": 2,
  "rescale_factor": 0.00392156862745098,
  "size": {
    "longest_edge": 1024
  }
}
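The preprocessing settings above can be read as a short pipeline: resize so the longest edge is 1024, rescale pixel values by 1/255, normalize with the per-channel mean and standard deviation, then pad to 1024 x 1024. The sketch below reproduces only the arithmetic, in plain Python. The rounding rule in `resized_shape` and the bottom/right padding are assumptions, and the helper names are illustrative rather than the image processor's own methods.

```python
IMAGE_MEAN = [0.485, 0.456, 0.406]
IMAGE_STD = [0.229, 0.224, 0.225]
RESCALE_FACTOR = 0.00392156862745098  # equal to 1 / 255
LONGEST_EDGE = 1024
PAD_HEIGHT, PAD_WIDTH = 1024, 1024


def resized_shape(height, width, longest_edge=LONGEST_EDGE):
    """Scale (height, width) so the longer side equals longest_edge."""
    scale = longest_edge / max(height, width)
    # Rounding to the nearest integer is an assumption of this sketch.
    return int(height * scale + 0.5), int(width * scale + 0.5)


def normalize_pixel(rgb):
    """Rescale 0-255 channel values to 0-1, then standardize per channel."""
    return [(c * RESCALE_FACTOR - m) / s for c, m, s in zip(rgb, IMAGE_MEAN, IMAGE_STD)]


def padding_needed(height, width):
    """Rows and columns of padding required to reach the padded size."""
    return PAD_HEIGHT - height, PAD_WIDTH - width


new_h, new_w = resized_shape(1200, 1800)
print(new_h, new_w, padding_needed(new_h, new_w))
print(normalize_pixel([255, 255, 255]))
```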

pytorch_model.bin

ADDED

|

@@ -0,0 +1,3 @@


version https://git-lfs.github.com/spec/v1
oid sha256:9a14fd58481d203300024d94128edfce246b4b0db7e0b548ed52bf63578cbc38
size 2564565013

test_handler.py

ADDED

|

@@ -0,0 +1,9 @@


import base64
from handler import EndpointHandler

my_handler = EndpointHandler(path=".")

# Read the test image and base64-encode it, as the handler expects.
with open("./car.png", "rb") as f:
    image = base64.b64encode(f.read())

payload = {"image": image, "points": [[450, 600]]}
result = my_handler(payload)
print(result)

tf_model.h5

ADDED

|

@@ -0,0 +1,3 @@


version https://git-lfs.github.com/spec/v1
oid sha256:a53881d671f1900f5d4890c00f3f27819fdc94323c012b648ca1bb0b3b1f0a60
size 2565031808
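The two weight files are stored as Git LFS pointers: three `key value` lines giving the spec version, the content hash, and the size in bytes. As a rough sketch, such a pointer can be parsed with a few lines of Python, for example to report the download size before fetching the weights; `parse_lfs_pointer` is an illustrative helper, not part of any Git LFS tooling.

```python
def parse_lfs_pointer(text):
    """Parse a Git LFS pointer file into its fields."""
    fields = dict(line.split(" ", 1) for line in text.strip().splitlines())
    hash_algo, digest = fields["oid"].split(":", 1)
    return {
        "version": fields["version"],
        "hash_algo": hash_algo,
        "digest": digest,
        "size": int(fields["size"]),  # size of the real file in bytes
    }


# Pointer text copied from tf_model.h5 above.
pointer_text = """version https://git-lfs.github.com/spec/v1
oid sha256:a53881d671f1900f5d4890c00f3f27819fdc94323c012b648ca1bb0b3b1f0a60
size 2565031808
"""
pointer = parse_lfs_pointer(pointer_text)
size_gib = pointer["size"] / 2**30
print(f"{pointer['hash_algo']} digest, {size_gib:.2f} GiB")
```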