Dilated Neighborhood Attention Transformer

Overview

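DiNAT was proposed in Dilated Neighborhood Attention Transformer by Ali Hassani and Humphrey Shi.

It extends NAT by adding a Dilated Neighborhood Attention pattern to capture global context, and shows significant performance improvements over NAT.

The abstract from the paper is the following: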

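Transformers are quickly becoming one of the most heavily applied deep learning architectures across modalities, domains, and tasks. In vision, on top of ongoing efforts into plain transformers, hierarchical transformers have also gained significant attention, thanks to their performance and easy integration into existing frameworks. These models typically employ localized attention mechanisms, such as the sliding-window Neighborhood Attention (NA) or Swin Transformer's Shifted Window Self Attention. While effective at reducing self attention's quadratic complexity, local attention weakens two of the most desirable properties of self attention: long range inter-dependency modeling, and global receptive field. In this paper, we introduce Dilated Neighborhood Attention (DiNA), a natural, flexible and efficient extension to NA that can capture more global context and expand receptive fields exponentially at no additional cost. NA's local attention and DiNA's sparse global attention complement each other, and therefore we introduce Dilated Neighborhood Attention Transformer (DiNAT), a new hierarchical vision transformer built upon both. DiNAT variants enjoy significant improvements over strong baselines such as NAT, Swin, and ConvNeXt. Our large model is faster and ahead of its Swin counterpart by 1.5% box AP in COCO object detection, 1.3% mask AP in COCO instance segmentation, and 1.1% mIoU in ADE20K semantic segmentation. Paired with new frameworks, our large variant is the new state of the art panoptic segmentation model on COCO (58.2 PQ) and ADE20K (48.5 PQ), and instance segmentation model on Cityscapes (44.5 AP) and ADE20K (35.4 AP) (no extra data). It also matches the state of the art specialized semantic segmentation models on ADE20K (58.2 mIoU), and ranks second on Cityscapes (84.5 mIoU) (no extra data).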

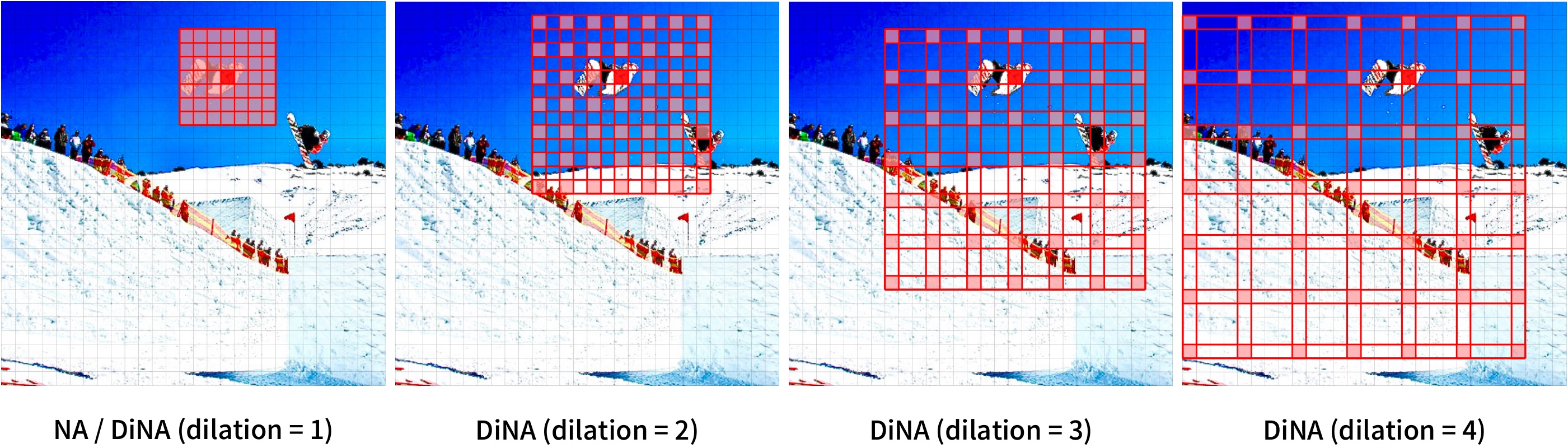

Neighborhood Attention with different dilation values.

Taken from the original paper.
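As a rough illustration of the pattern in the figure, the sketch below lists which positions a single query attends to in a 1D sequence for a given kernel size and dilation. It is a simplified model written for this page, not NATTEN's implementation: the function name `dilated_neighborhood` is hypothetical, and it assumes an odd kernel size and that windows near the borders are shifted inward so every query keeps `kernel_size` neighbors.

```python
def dilated_neighborhood(index, length, kernel_size, dilation=1):
    """Return the positions attended to by the query at `index` (1D sketch)."""
    if kernel_size * dilation > length:
        raise ValueError("kernel_size * dilation must not exceed the sequence length")
    # Positions that share the query's offset modulo the dilation form its group.
    group = list(range(index % dilation, length, dilation))
    pos = group.index(index)
    # Center the window on the query, then clamp it so it stays inside the group.
    start = min(max(pos - kernel_size // 2, 0), len(group) - kernel_size)
    return group[start:start + kernel_size]


# Dilation 1 is plain neighborhood attention; larger values widen the span.
print(dilated_neighborhood(8, length=16, kernel_size=3, dilation=1))  # [7, 8, 9]
print(dilated_neighborhood(8, length=16, kernel_size=3, dilation=4))  # [4, 8, 12]
```

Both calls attend to three positions, but with dilation 4 those positions span nine tokens instead of three, which is the sense in which dilation enlarges the receptive field without adding attended positions per query.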

This model was contributed by Ali Hassani. The original code can be found here.

Usage tips

DiNAT can be used as a backbone. When output_hidden_states = True, it will output both hidden_states and reshaped_hidden_states. The reshaped_hidden_states have a shape of (batch, num_channels, height, width) rather than (batch_size, height, width, num_channels).
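The two layouts differ only in where the channel axis sits. A minimal sketch on shape tuples follows; the helper name `to_channels_first` is hypothetical, and on real tensors the equivalent step would be a permutation of axes.

```python
def to_channels_first(shape):
    """Map a (batch, height, width, channels) shape to (batch, channels, height, width)."""
    batch, height, width, channels = shape
    return (batch, channels, height, width)


# A channels-last hidden state with a 7x7 feature map and 512 channels.
print(to_channels_first((1, 7, 7, 512)))  # (1, 512, 7, 7)
```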

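Notes:

- DiNAT depends on NATTEN's implementation of Neighborhood Attention and Dilated Neighborhood Attention. You can either install it with pre-built wheels for Linux by referring to shi-labs.com/natten, or build it on your system by running pip install natten. Note that the latter will likely take time to compile. NATTEN does not support Windows devices yet.
- Only a patch size of 4 is supported at the moment.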
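Before loading a DiNAT model it can help to check the two conditions in the notes above. The sketch below uses only the Python standard library; the function name `natten_status` and the returned messages are illustrative, and it only tests whether a package named `natten` can be found, not whether it was built correctly.

```python
import importlib.util
import platform


def natten_status():
    """Report whether NATTEN looks usable in the current environment."""
    if platform.system() == "Windows":
        return "unsupported platform: NATTEN does not support Windows yet"
    if importlib.util.find_spec("natten") is None:
        return "missing: install NATTEN before loading a DiNAT model"
    return "available"


print(natten_status())
```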

Resources

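A list of official Hugging Face and community (indicated by 🌎) resources to help you get started with DiNAT.

- DinatForImageClassification is supported by this example script and notebook.
- See also: Image classification task guide

If you're interested in submitting a resource to be included here, please feel free to open a Pull Request and we'll review it. The resource should ideally demonstrate something new instead of duplicating an existing resource.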

DinatConfig

class transformers.DinatConfig

( patch_size = 4, num_channels = 3, embed_dim = 64, depths = [3, 4, 6, 5], num_heads = [2, 4, 8, 16], kernel_size = 7, dilations = [[1, 8, 1], [1, 4, 1, 4], [1, 2, 1, 2, 1, 2], [1, 1, 1, 1, 1]], mlp_ratio = 3.0, qkv_bias = True, hidden_dropout_prob = 0.0, attention_probs_dropout_prob = 0.0, drop_path_rate = 0.1, hidden_act = 'gelu', initializer_range = 0.02, layer_norm_eps = 1e-05, layer_scale_init_value = 0.0, out_features = None, out_indices = None, **kwargs )

Parameters

- patch_size (int, optional, defaults to 4) — The size (resolution) of each patch. NOTE: Only patch size of 4 is supported at the moment.
- num_channels (int, optional, defaults to 3) — The number of input channels.
- embed_dim (int, optional, defaults to 64) — Dimensionality of patch embedding.
- depths (List[int], optional, defaults to [3, 4, 6, 5]) — Number of layers in each level of the encoder.
- num_heads (List[int], optional, defaults to [2, 4, 8, 16]) — Number of attention heads in each layer of the Transformer encoder.
- kernel_size (int, optional, defaults to 7) — Neighborhood Attention kernel size.
- dilations (List[List[int]], optional, defaults to [[1, 8, 1], [1, 4, 1, 4], [1, 2, 1, 2, 1, 2], [1, 1, 1, 1, 1]]) — Dilation value of each NA layer in the Transformer encoder.
- mlp_ratio (float, optional, defaults to 3.0) — Ratio of MLP hidden dimensionality to embedding dimensionality.
- qkv_bias (bool, optional, defaults to True) — Whether or not a learnable bias should be added to the queries, keys and values.
- hidden_dropout_prob (float, optional, defaults to 0.0) — The dropout probability for all fully connected layers in the embeddings and encoder.
- attention_probs_dropout_prob (float, optional, defaults to 0.0) — The dropout ratio for the attention probabilities.
- drop_path_rate (float, optional, defaults to 0.1) — Stochastic depth rate.
- hidden_act (str or function, optional, defaults to "gelu") — The non-linear activation function (function or string) in the encoder. If string, "gelu", "relu", "selu" and "gelu_new" are supported.
- initializer_range (float, optional, defaults to 0.02) — The standard deviation of the truncated_normal_initializer for initializing all weight matrices.
- layer_norm_eps (float, optional, defaults to 1e-05) — The epsilon used by the layer normalization layers.
- layer_scale_init_value (float, optional, defaults to 0.0) — The initial value for the layer scale. Disabled if <=0.
- out_features (List[str], optional) — If used as backbone, list of features to output. Can be any of "stem", "stage1", "stage2", etc. (depending on how many stages the model has). If unset and out_indices is set, will default to the corresponding stages. If unset and out_indices is unset, will default to the last stage. Must be in the same order as defined in the stage_names attribute.
- out_indices (List[int], optional) — If used as backbone, list of indices of features to output. Can be any of 0, 1, 2, etc. (depending on how many stages the model has). If unset and out_features is set, will default to the corresponding stages. If unset and out_features is unset, will default to the last stage. Must be in the same order as defined in the stage_names attribute.

This is the configuration class to store the configuration of a DinatModel. It is used to instantiate a Dinat model according to the specified arguments, defining the model architecture. Instantiating a configuration with the defaults will yield a similar configuration to that of the Dinat shi-labs/dinat-mini-in1k-224 architecture.

Configuration objects inherit from PretrainedConfig and can be used to control the model outputs. Read the documentation from PretrainedConfig for more information.
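To see how the default values fit together, the following sketch derives the feature-map size and channel count of each stage from the configuration alone. It does not use the library; the function name `summarize_stages` is hypothetical. It assumes that each stage transition halves the resolution and doubles the channels, and that a dilated window of `kernel_size * dilation` positions has to fit inside the feature map. Both assumptions are consistent with the `[1, 7, 7, 512]` output shown in the model example on this page, but treat them as assumptions rather than a statement of the implementation.

```python
def summarize_stages(image_size=224, patch_size=4, embed_dim=64, kernel_size=7,
                     depths=(3, 4, 6, 5),
                     dilations=((1, 8, 1), (1, 4, 1, 4), (1, 2, 1, 2, 1, 2), (1, 1, 1, 1, 1))):
    """Sketch per-stage channels and resolution under the assumptions stated above."""
    if len(depths) != len(dilations):
        raise ValueError("need one list of dilations per stage")
    stages = []
    resolution = image_size // patch_size  # feature-map side after patch embedding
    channels = embed_dim
    for depth, stage_dilations in zip(depths, dilations):
        if len(stage_dilations) != depth:
            raise ValueError("each stage needs one dilation value per layer")
        stages.append({
            "channels": channels,
            "resolution": resolution,
            # Assumed constraint: the dilated window must fit in the feature map.
            "window_fits": kernel_size * max(stage_dilations) <= resolution,
        })
        # Assumed: every stage transition halves the resolution and doubles the channels.
        resolution //= 2
        channels *= 2
    return stages


for stage in summarize_stages():
    print(stage)
```

With the defaults and a 224x224 input, the largest dilation in every stage (8, 4, 2, 1) times the kernel size of 7 equals that stage's resolution (56, 28, 14, 7), so a smaller input would fail the assumed fit check in this sketch.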

Example:

>>> from transformers import DinatConfig, DinatModel

>>> # Initializing a Dinat shi-labs/dinat-mini-in1k-224 style configuration
>>> configuration = DinatConfig()

>>> # Initializing a model (with random weights) from the shi-labs/dinat-mini-in1k-224 style configuration
>>> model = DinatModel(configuration)

>>> # Accessing the model configuration
>>> configuration = model.config

DinatModel

class transformers.DinatModel

( config, add_pooling_layer = True )

Parameters

- config (DinatConfig) — Model configuration class with all the parameters of the model. Initializing with a config file does not load the weights associated with the model, only the configuration. Check out the from_pretrained() method to load the model weights.

The bare Dinat Model transformer outputting raw hidden-states without any specific head on top. This model is a PyTorch torch.nn.Module sub-class. Use it as a regular PyTorch Module and refer to the PyTorch documentation for all matters related to general usage and behavior.

forward

( pixel_values: typing.Optional[torch.FloatTensor] = None, output_attentions: typing.Optional[bool] = None, output_hidden_states: typing.Optional[bool] = None, return_dict: typing.Optional[bool] = None ) → transformers.models.dinat.modeling_dinat.DinatModelOutput or tuple(torch.FloatTensor)

Parameters

- pixel_values (torch.FloatTensor of shape (batch_size, num_channels, height, width)) — Pixel values. Pixel values can be obtained using AutoImageProcessor. See ViTImageProcessor.__call__() for details.
- output_attentions (bool, optional) — Whether or not to return the attentions tensors of all attention layers. See attentions under returned tensors for more detail.
- output_hidden_states (bool, optional) — Whether or not to return the hidden states of all layers. See hidden_states under returned tensors for more detail.
- return_dict (bool, optional) — Whether or not to return a ModelOutput instead of a plain tuple.

Returns

transformers.models.dinat.modeling_dinat.DinatModelOutput or tuple(torch.FloatTensor)

A transformers.models.dinat.modeling_dinat.DinatModelOutput or a tuple of torch.FloatTensor (if return_dict=False is passed or when config.return_dict=False) comprising various elements depending on the configuration (DinatConfig) and inputs.

- last_hidden_state (torch.FloatTensor of shape (batch_size, sequence_length, hidden_size)) — Sequence of hidden-states at the output of the last layer of the model.
- pooler_output (torch.FloatTensor of shape (batch_size, hidden_size), optional, returned when add_pooling_layer=True is passed) — Average pooling of the last layer hidden-state.
- hidden_states (tuple(torch.FloatTensor), optional, returned when output_hidden_states=True is passed or when config.output_hidden_states=True) — Tuple of torch.FloatTensor (one for the output of the embeddings + one for the output of each stage) of shape (batch_size, sequence_length, hidden_size). Hidden-states of the model at the output of each layer plus the initial embedding outputs.
- attentions (tuple(torch.FloatTensor), optional, returned when output_attentions=True is passed or when config.output_attentions=True) — Tuple of torch.FloatTensor (one for each stage) of shape (batch_size, num_heads, sequence_length, sequence_length). Attentions weights after the attention softmax, used to compute the weighted average in the self-attention heads.
- reshaped_hidden_states (tuple(torch.FloatTensor), optional, returned when output_hidden_states=True is passed or when config.output_hidden_states=True) — Tuple of torch.FloatTensor (one for the output of the embeddings + one for the output of each stage) of shape (batch_size, hidden_size, height, width). Hidden-states of the model at the output of each layer plus the initial embedding outputs reshaped to include the spatial dimensions.

The DinatModel forward method, overrides the __call__ special method.

Although the recipe for forward pass needs to be defined within this function, one should call the Module instance afterwards instead of this since the former takes care of running the pre and post processing steps while the latter silently ignores them.

Example:

>>> from transformers import AutoImageProcessor, DinatModel
>>> import torch
>>> from datasets import load_dataset

>>> dataset = load_dataset("huggingface/cats-image", trust_remote_code=True)
>>> image = dataset["test"]["image"][0]

>>> image_processor = AutoImageProcessor.from_pretrained("shi-labs/dinat-mini-in1k-224")
>>> model = DinatModel.from_pretrained("shi-labs/dinat-mini-in1k-224")

>>> inputs = image_processor(image, return_tensors="pt")

>>> with torch.no_grad():
...     outputs = model(**inputs)

>>> last_hidden_states = outputs.last_hidden_state
>>> list(last_hidden_states.shape)
[1, 7, 7, 512]

DinatForImageClassification

class transformers.DinatForImageClassification

( config )

Parameters

- config (DinatConfig) — Model configuration class with all the parameters of the model. Initializing with a config file does not load the weights associated with the model, only the configuration. Check out the from_pretrained() method to load the model weights.

Dinat Model transformer with an image classification head on top (a linear layer on top of the pooled output, i.e. the average pooling of the last layer hidden-state) e.g. for ImageNet.

This model is a PyTorch torch.nn.Module sub-class. Use it as a regular PyTorch Module and refer to the PyTorch documentation for all matters related to general usage and behavior.

forward

( pixel_values: typing.Optional[torch.FloatTensor] = None, labels: typing.Optional[torch.LongTensor] = None, output_attentions: typing.Optional[bool] = None, output_hidden_states: typing.Optional[bool] = None, return_dict: typing.Optional[bool] = None ) → transformers.models.dinat.modeling_dinat.DinatImageClassifierOutput or tuple(torch.FloatTensor)

Parameters

- pixel_values (torch.FloatTensor of shape (batch_size, num_channels, height, width)) — Pixel values. Pixel values can be obtained using AutoImageProcessor. See ViTImageProcessor.__call__() for details.
- output_attentions (bool, optional) — Whether or not to return the attentions tensors of all attention layers. See attentions under returned tensors for more detail.
- output_hidden_states (bool, optional) — Whether or not to return the hidden states of all layers. See hidden_states under returned tensors for more detail.
- return_dict (bool, optional) — Whether or not to return a ModelOutput instead of a plain tuple.
- labels (torch.LongTensor of shape (batch_size,), optional) — Labels for computing the image classification/regression loss. Indices should be in [0, ..., config.num_labels - 1]. If config.num_labels == 1 a regression loss is computed (Mean-Square loss). If config.num_labels > 1 a classification loss is computed (Cross-Entropy).
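The rule for labels can be sketched in plain Python: one label means a mean-squared-error regression loss, more than one means cross-entropy over the classes. This is an illustration of the two cases described above only, not the library's code; the function name `sketch_loss` and the list-based inputs are assumptions made for the example.

```python
import math


def sketch_loss(logits, labels, num_labels):
    """Pick the loss from num_labels: MSE for 1 label, cross-entropy otherwise."""
    if num_labels == 1:
        # Regression: mean squared error between the single score and the target.
        return sum((row[0] - y) ** 2 for row, y in zip(logits, labels)) / len(labels)
    total = 0.0
    for row, y in zip(logits, labels):
        # Cross-entropy: negative log-softmax of the target class (stabilized by the max).
        peak = max(row)
        log_norm = peak + math.log(sum(math.exp(v - peak) for v in row))
        total += log_norm - row[y]
    return total / len(labels)


print(sketch_loss([[1.0], [3.0]], [0.0, 1.0], num_labels=1))  # 2.5
print(sketch_loss([[0.0, 0.0, 0.0, 0.0]], [2], num_labels=4))
```

The second call uses uniform logits over four classes, so the cross-entropy equals the natural logarithm of 4 whichever class is the target.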

Returns

transformers.models.dinat.modeling_dinat.DinatImageClassifierOutput or tuple(torch.FloatTensor)

A transformers.models.dinat.modeling_dinat.DinatImageClassifierOutput or a tuple of torch.FloatTensor (if return_dict=False is passed or when config.return_dict=False) comprising various elements depending on the configuration (DinatConfig) and inputs.

- loss (torch.FloatTensor of shape (1,), optional, returned when labels is provided) — Classification (or regression if config.num_labels==1) loss.
- logits (torch.FloatTensor of shape (batch_size, config.num_labels)) — Classification (or regression if config.num_labels==1) scores (before SoftMax).
- hidden_states (tuple(torch.FloatTensor), optional, returned when output_hidden_states=True is passed or when config.output_hidden_states=True) — Tuple of torch.FloatTensor (one for the output of the embeddings + one for the output of each stage) of shape (batch_size, sequence_length, hidden_size). Hidden-states of the model at the output of each layer plus the initial embedding outputs.
- attentions (tuple(torch.FloatTensor), optional, returned when output_attentions=True is passed or when config.output_attentions=True) — Tuple of torch.FloatTensor (one for each stage) of shape (batch_size, num_heads, sequence_length, sequence_length). Attentions weights after the attention softmax, used to compute the weighted average in the self-attention heads.
- reshaped_hidden_states (tuple(torch.FloatTensor), optional, returned when output_hidden_states=True is passed or when config.output_hidden_states=True) — Tuple of torch.FloatTensor (one for the output of the embeddings + one for the output of each stage) of shape (batch_size, hidden_size, height, width). Hidden-states of the model at the output of each layer plus the initial embedding outputs reshaped to include the spatial dimensions.

The DinatForImageClassification forward method, overrides the __call__ special method.

Although the recipe for forward pass needs to be defined within this function, one should call the Module instance afterwards instead of this since the former takes care of running the pre and post processing steps while the latter silently ignores them.

Example:

>>> from transformers import AutoImageProcessor, DinatForImageClassification
>>> import torch
>>> from datasets import load_dataset

>>> dataset = load_dataset("huggingface/cats-image", trust_remote_code=True)
>>> image = dataset["test"]["image"][0]

>>> image_processor = AutoImageProcessor.from_pretrained("shi-labs/dinat-mini-in1k-224")
>>> model = DinatForImageClassification.from_pretrained("shi-labs/dinat-mini-in1k-224")

>>> inputs = image_processor(image, return_tensors="pt")

>>> with torch.no_grad():
...     logits = model(**inputs).logits

>>> # model predicts one of the 1000 ImageNet classes
>>> predicted_label = logits.argmax(-1).item()
>>> print(model.config.id2label[predicted_label])
tabby, tabby cat
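Because the logits are scores before SoftMax, the argmax step above can be extended to report several candidate labels with probabilities. The sketch below works on a plain list of scores for one image; the function name `top_k_probabilities` and the toy labels and logits are invented for illustration and are not model output.

```python
import math


def top_k_probabilities(logits, id2label, k=3):
    """Return the k most likely (label, probability) pairs for one image."""
    peak = max(logits)
    exps = [math.exp(v - peak) for v in logits]  # numerically stable softmax
    total = sum(exps)
    ranked = sorted(range(len(logits)), key=lambda i: exps[i], reverse=True)
    return [(id2label[i], exps[i] / total) for i in ranked[:k]]


# Toy logits and labels, invented for illustration only.
toy_labels = {0: "tabby", 1: "tiger cat", 2: "remote control"}
print(top_k_probabilities([2.0, 1.0, 0.1], toy_labels, k=2))
```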