ViTMAE

Overview

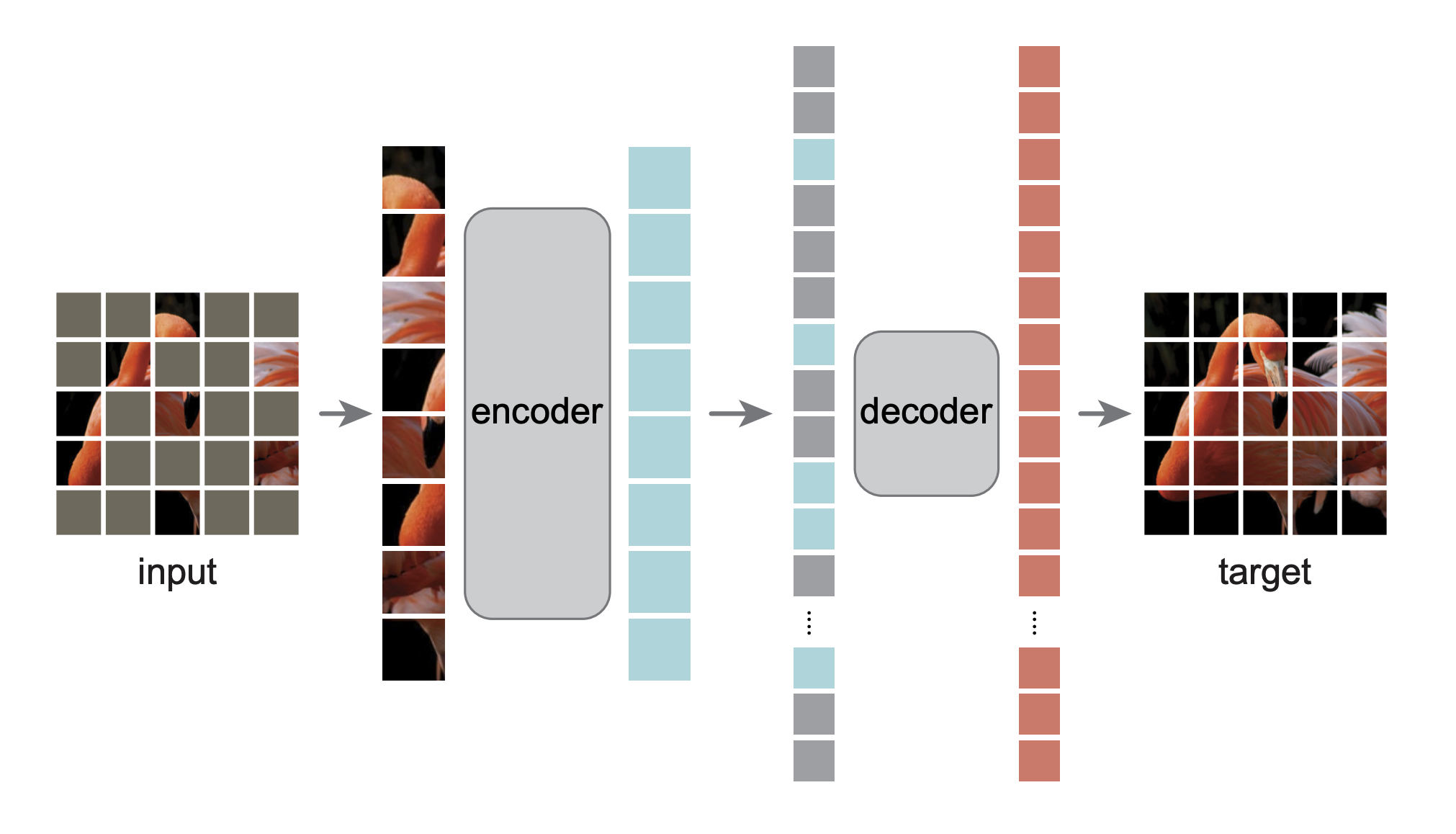

The ViTMAE model was proposed in Masked Autoencoders Are Scalable Vision Learners by Kaiming He, Xinlei Chen, Saining Xie, Yanghao Li, Piotr Dollár, Ross Girshick. The paper shows that, by pre-training a Vision Transformer (ViT) to reconstruct pixel values for masked patches, one can get results after fine-tuning that outperform supervised pre-training.

The abstract from the paper is the following:

This paper shows that masked autoencoders (MAE) are scalable self-supervised learners for computer vision. Our MAE approach is simple: we mask random patches of the input image and reconstruct the missing pixels. It is based on two core designs. First, we develop an asymmetric encoder-decoder architecture, with an encoder that operates only on the visible subset of patches (without mask tokens), along with a lightweight decoder that reconstructs the original image from the latent representation and mask tokens. Second, we find that masking a high proportion of the input image, e.g., 75%, yields a nontrivial and meaningful self-supervisory task. Coupling these two designs enables us to train large models efficiently and effectively: we accelerate training (by 3x or more) and improve accuracy. Our scalable approach allows for learning high-capacity models that generalize well: e.g., a vanilla ViT-Huge model achieves the best accuracy (87.8%) among methods that use only ImageNet-1K data. Transfer performance in downstream tasks outperforms supervised pre-training and shows promising scaling behavior.

MAE architecture. Taken from the original paper.

This model was contributed by nielsr. The TensorFlow version of the model was contributed by sayakpaul and ariG23498 (equal contribution). The original code can be found here.

Usage tips

- MAE (masked auto encoding) is a method for self-supervised pre-training of Vision Transformers (ViTs). The pre-training objective is relatively simple: by masking a large portion (75%) of the image patches, the model must reconstruct raw pixel values. One can use ViTMAEForPreTraining for this purpose.

- After pre-training, one “throws away” the decoder used to reconstruct pixels and uses only the encoder for fine-tuning or linear probing. This means that the pre-trained encoder weights can be loaded directly into a ViTForImageClassification.

- One can use ViTImageProcessor to prepare images for the model. See the code examples for more info.

- Note that the encoder of MAE is only used to encode the visual patches. The encoded patches are then concatenated with mask tokens, which the decoder (which also consists of Transformer blocks) takes as input. Each mask token is a shared, learned vector that indicates the presence of a missing patch to be predicted. Fixed sin/cos position embeddings are added both to the input of the encoder and the decoder.

- For a visual understanding of how MAEs work you can check out this post.
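The masking step described in the tips above can be sketched in NumPy. This is a minimal illustration of per-sample random masking by shuffling patch indices, not the library's implementation; the function name `random_masking` and its arguments are hypothetical, and the class token is left out for simplicity.

```python
import numpy as np


def random_masking(patches, mask_ratio=0.75, rng=None):
    """Keep a random subset of patches per sample (hypothetical helper).

    patches: array of shape (batch_size, num_patches, dim).
    Returns the visible patches, a binary mask (1 = masked, 0 = visible)
    and the indices needed to restore the original patch order.
    """
    rng = np.random.default_rng() if rng is None else rng
    batch_size, num_patches, _ = patches.shape
    len_keep = int(num_patches * (1 - mask_ratio))

    # One random score per patch; sorting the scores yields a random permutation.
    noise = rng.random((batch_size, num_patches))
    ids_shuffle = np.argsort(noise, axis=1)
    ids_restore = np.argsort(ids_shuffle, axis=1)

    # The first len_keep entries of the permutation are the visible patches.
    ids_keep = ids_shuffle[:, :len_keep]
    visible = np.take_along_axis(patches, ids_keep[:, :, None], axis=1)

    # Build the mask in shuffled order, then undo the shuffle.
    mask = np.ones((batch_size, num_patches))
    mask[:, :len_keep] = 0
    mask = np.take_along_axis(mask, ids_restore, axis=1)
    return visible, mask, ids_restore


patches = np.random.default_rng(0).normal(size=(2, 196, 768))
visible, mask, ids_restore = random_masking(patches, rng=np.random.default_rng(1))
print(visible.shape, mask.shape, int(mask[0].sum()))
```

With 196 patches and a mask ratio of 0.75, only 49 patches per image reach the encoder, which is where the training speed-up reported in the abstract comes from.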

Resources

A list of official Hugging Face and community (indicated by 🌎) resources to help you get started with ViTMAE.

- ViTMAEForPreTraining is supported by this example script, allowing you to pre-train the model from scratch/further pre-train the model on custom data.

- A notebook that illustrates how to visualize reconstructed pixel values with ViTMAEForPreTraining can be found here.

If you’re interested in submitting a resource to be included here, please feel free to open a Pull Request and we’ll review it! The resource should ideally demonstrate something new instead of duplicating an existing resource.

ViTMAEConfig

class transformers.ViTMAEConfig

( hidden_size = 768 num_hidden_layers = 12 num_attention_heads = 12 intermediate_size = 3072 hidden_act = 'gelu' hidden_dropout_prob = 0.0 attention_probs_dropout_prob = 0.0 initializer_range = 0.02 layer_norm_eps = 1e-12 image_size = 224 patch_size = 16 num_channels = 3 qkv_bias = True decoder_num_attention_heads = 16 decoder_hidden_size = 512 decoder_num_hidden_layers = 8 decoder_intermediate_size = 2048 mask_ratio = 0.75 norm_pix_loss = False **kwargs )

Parameters

- hidden_size (int, optional, defaults to 768) — Dimensionality of the encoder layers and the pooler layer.

- num_hidden_layers (int, optional, defaults to 12) — Number of hidden layers in the Transformer encoder.

- num_attention_heads (int, optional, defaults to 12) — Number of attention heads for each attention layer in the Transformer encoder.

- intermediate_size (int, optional, defaults to 3072) — Dimensionality of the “intermediate” (i.e., feed-forward) layer in the Transformer encoder.

- hidden_act (str or function, optional, defaults to "gelu") — The non-linear activation function (function or string) in the encoder and pooler. If string, "gelu", "relu", "selu" and "gelu_new" are supported.

- hidden_dropout_prob (float, optional, defaults to 0.0) — The dropout probability for all fully connected layers in the embeddings, encoder, and pooler.

- attention_probs_dropout_prob (float, optional, defaults to 0.0) — The dropout ratio for the attention probabilities.

- initializer_range (float, optional, defaults to 0.02) — The standard deviation of the truncated_normal_initializer for initializing all weight matrices.

- layer_norm_eps (float, optional, defaults to 1e-12) — The epsilon used by the layer normalization layers.

- image_size (int, optional, defaults to 224) — The size (resolution) of each image.

- patch_size (int, optional, defaults to 16) — The size (resolution) of each patch.

- num_channels (int, optional, defaults to 3) — The number of input channels.

- qkv_bias (bool, optional, defaults to True) — Whether to add a bias to the queries, keys and values.

- decoder_num_attention_heads (int, optional, defaults to 16) — Number of attention heads for each attention layer in the decoder.

- decoder_hidden_size (int, optional, defaults to 512) — Dimensionality of the decoder.

- decoder_num_hidden_layers (int, optional, defaults to 8) — Number of hidden layers in the decoder.

- decoder_intermediate_size (int, optional, defaults to 2048) — Dimensionality of the “intermediate” (i.e., feed-forward) layer in the decoder.

- mask_ratio (float, optional, defaults to 0.75) — The ratio of the number of masked tokens in the input sequence.

- norm_pix_loss (bool, optional, defaults to False) — Whether or not to train with normalized pixels (see Table 3 in the paper). Using normalized pixels improved representation quality in the experiments of the authors.

This is the configuration class to store the configuration of a ViTMAEModel. It is used to instantiate a ViT MAE model according to the specified arguments, defining the model architecture. Instantiating a configuration with the defaults will yield a configuration similar to that of the ViT facebook/vit-mae-base architecture.

Configuration objects inherit from PretrainedConfig and can be used to control the model outputs. Read the documentation from PretrainedConfig for more information.
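To make the interplay of image_size, patch_size, num_channels and mask_ratio concrete, the following sketch works out the sequence lengths they imply. The helper `vit_mae_shapes` is hypothetical and only does arithmetic; the extra position added for a class token in the encoder sequence is an assumption about the embedding layout rather than something stated in the parameter list.

```python
def vit_mae_shapes(image_size=224, patch_size=16, num_channels=3, mask_ratio=0.75):
    """Derive patch counts from configuration values (hypothetical helper)."""
    num_patches = (image_size // patch_size) ** 2
    num_visible = int(num_patches * (1 - mask_ratio))
    return {
        "num_patches": num_patches,
        "num_visible": num_visible,
        # Assumption: one class token is prepended to the visible patches.
        "encoder_sequence_length": num_visible + 1,
        # Size of the last dimension of the pixel reconstruction output.
        "pixels_per_patch": patch_size**2 * num_channels,
    }


shapes = vit_mae_shapes()
print(shapes)
# {'num_patches': 196, 'num_visible': 49, 'encoder_sequence_length': 50, 'pixels_per_patch': 768}
```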

Example:

>>> from transformers import ViTMAEConfig, ViTMAEModel

>>> # Initializing a ViT MAE vit-mae-base style configuration

>>> configuration = ViTMAEConfig()

>>> # Initializing a model (with random weights) from the vit-mae-base style configuration

>>> model = ViTMAEModel(configuration)

>>> # Accessing the model configuration

>>> configuration = model.config

ViTMAEModel

class transformers.ViTMAEModel

( config )

Parameters

- config (ViTMAEConfig) — Model configuration class with all the parameters of the model. Initializing with a config file does not load the weights associated with the model, only the configuration. Check out the from_pretrained() method to load the model weights.

The bare ViTMAE Model transformer outputting raw hidden-states without any specific head on top. This model is a PyTorch torch.nn.Module subclass. Use it as a regular PyTorch Module and refer to the PyTorch documentation for all matters related to general usage and behavior.

forward

( pixel_values: Optional = None noise: Optional = None head_mask: Optional = None output_attentions: Optional = None output_hidden_states: Optional = None return_dict: Optional = None ) → transformers.models.vit_mae.modeling_vit_mae.ViTMAEModelOutput or tuple(torch.FloatTensor)

Parameters

- pixel_values (torch.FloatTensor of shape (batch_size, num_channels, height, width)) — Pixel values. Pixel values can be obtained using AutoImageProcessor. See ViTImageProcessor.call() for details.

- head_mask (torch.FloatTensor of shape (num_heads,) or (num_layers, num_heads), optional) — Mask to nullify selected heads of the self-attention modules. Mask values selected in [0, 1]:

  - 1 indicates the head is not masked,

  - 0 indicates the head is masked.

- output_attentions (bool, optional) — Whether or not to return the attentions tensors of all attention layers. See attentions under returned tensors for more detail.

- output_hidden_states (bool, optional) — Whether or not to return the hidden states of all layers. See hidden_states under returned tensors for more detail.

- return_dict (bool, optional) — Whether or not to return a ModelOutput instead of a plain tuple.

Returns

transformers.models.vit_mae.modeling_vit_mae.ViTMAEModelOutput or tuple(torch.FloatTensor)

A transformers.models.vit_mae.modeling_vit_mae.ViTMAEModelOutput or a tuple of torch.FloatTensor (if return_dict=False is passed or when config.return_dict=False) comprising various elements depending on the configuration (ViTMAEConfig) and inputs.

- last_hidden_state (torch.FloatTensor of shape (batch_size, sequence_length, hidden_size)) — Sequence of hidden-states at the output of the last layer of the model.

- mask (torch.FloatTensor of shape (batch_size, sequence_length)) — Tensor indicating which patches are masked (1) and which are not (0).

- ids_restore (torch.LongTensor of shape (batch_size, sequence_length)) — Tensor containing the original index of the (shuffled) masked patches.

- hidden_states (tuple(torch.FloatTensor), optional, returned when output_hidden_states=True is passed or when config.output_hidden_states=True) — Tuple of torch.FloatTensor (one for the output of the embeddings + one for the output of each layer) of shape (batch_size, sequence_length, hidden_size). Hidden-states of the model at the output of each layer plus the initial embedding outputs.

- attentions (tuple(torch.FloatTensor), optional, returned when output_attentions=True is passed or when config.output_attentions=True) — Tuple of torch.FloatTensor (one for each layer) of shape (batch_size, num_heads, sequence_length, sequence_length). Attention weights after the attention softmax, used to compute the weighted average in the self-attention heads.

The ViTMAEModel forward method overrides the __call__ special method.

Although the recipe for the forward pass needs to be defined within this function, one should call the Module instance afterwards instead of this, since the former takes care of running the pre- and post-processing steps while the latter silently ignores them.
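The role of ids_restore is easiest to see in the step that rebuilds a full-length sequence for the decoder. The NumPy sketch below is an illustration under simplifying assumptions (no class token, a zero vector standing in for the learned mask token) and is not the library's code; `restore_sequence` is a hypothetical helper.

```python
import numpy as np


def restore_sequence(visible_tokens, ids_restore, mask_token):
    """Append mask tokens and undo the shuffle (hypothetical helper).

    visible_tokens: (batch_size, len_keep, dim) encoder outputs for visible patches.
    ids_restore:    (batch_size, num_patches) indices that undo the shuffle.
    mask_token:     (dim,) vector shared by all masked positions.
    """
    batch_size, len_keep, dim = visible_tokens.shape
    num_patches = ids_restore.shape[1]
    # One copy of the shared mask token per masked position.
    mask_tokens = np.broadcast_to(mask_token, (batch_size, num_patches - len_keep, dim))
    shuffled = np.concatenate([visible_tokens, mask_tokens], axis=1)
    # Gather along the sequence axis to put every token back in its original slot.
    return np.take_along_axis(shuffled, ids_restore[:, :, None], axis=1)


rng = np.random.default_rng(0)
patches = rng.normal(size=(1, 8, 4))
ids_shuffle = np.argsort(rng.random((1, 8)), axis=1)
ids_restore = np.argsort(ids_shuffle, axis=1)
kept = ids_shuffle[:, :2]  # two visible patches out of eight
visible = np.take_along_axis(patches, kept[:, :, None], axis=1)

full = restore_sequence(visible, ids_restore, mask_token=np.zeros(4))
print(full.shape)
```

After restoration, the visible tokens sit at their original patch positions and every other position holds the mask token.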

Examples:

>>> from transformers import AutoImageProcessor, ViTMAEModel

>>> from PIL import Image

>>> import requests

>>> url = "http://images.cocodataset.org/val2017/000000039769.jpg"

>>> image = Image.open(requests.get(url, stream=True).raw)

>>> image_processor = AutoImageProcessor.from_pretrained("facebook/vit-mae-base")

>>> model = ViTMAEModel.from_pretrained("facebook/vit-mae-base")

>>> inputs = image_processor(images=image, return_tensors="pt")

>>> outputs = model(**inputs)

>>> last_hidden_states = outputs.last_hidden_state

ViTMAEForPreTraining

class transformers.ViTMAEForPreTraining

( config )

Parameters

- config (ViTMAEConfig) — Model configuration class with all the parameters of the model. Initializing with a config file does not load the weights associated with the model, only the configuration. Check out the from_pretrained() method to load the model weights.

The ViTMAE Model transformer with the decoder on top for self-supervised pre-training.

Note that we provide a script to pre-train this model on custom data in our examples directory.

This model is a PyTorch torch.nn.Module subclass. Use it as a regular PyTorch Module and refer to the PyTorch documentation for all matters related to general usage and behavior.

forward

( pixel_values: Optional = None noise: Optional = None head_mask: Optional = None output_attentions: Optional = None output_hidden_states: Optional = None return_dict: Optional = None ) → transformers.models.vit_mae.modeling_vit_mae.ViTMAEForPreTrainingOutput or tuple(torch.FloatTensor)

Parameters

- pixel_values (torch.FloatTensor of shape (batch_size, num_channels, height, width)) — Pixel values. Pixel values can be obtained using AutoImageProcessor. See ViTImageProcessor.call() for details.

- head_mask (torch.FloatTensor of shape (num_heads,) or (num_layers, num_heads), optional) — Mask to nullify selected heads of the self-attention modules. Mask values selected in [0, 1]:

  - 1 indicates the head is not masked,

  - 0 indicates the head is masked.

- output_attentions (bool, optional) — Whether or not to return the attentions tensors of all attention layers. See attentions under returned tensors for more detail.

- output_hidden_states (bool, optional) — Whether or not to return the hidden states of all layers. See hidden_states under returned tensors for more detail.

- return_dict (bool, optional) — Whether or not to return a ModelOutput instead of a plain tuple.

Returns

transformers.models.vit_mae.modeling_vit_mae.ViTMAEForPreTrainingOutput or tuple(torch.FloatTensor)

A transformers.models.vit_mae.modeling_vit_mae.ViTMAEForPreTrainingOutput or a tuple of torch.FloatTensor (if return_dict=False is passed or when config.return_dict=False) comprising various elements depending on the configuration (ViTMAEConfig) and inputs.

- loss (torch.FloatTensor of shape (1,)) — Pixel reconstruction loss.

- logits (torch.FloatTensor of shape (batch_size, sequence_length, patch_size ** 2 * num_channels)) — Pixel reconstruction logits.

- mask (torch.FloatTensor of shape (batch_size, sequence_length)) — Tensor indicating which patches are masked (1) and which are not (0).

- ids_restore (torch.LongTensor of shape (batch_size, sequence_length)) — Tensor containing the original index of the (shuffled) masked patches.

- hidden_states (tuple(torch.FloatTensor), optional, returned when output_hidden_states=True is passed or when config.output_hidden_states=True) — Tuple of torch.FloatTensor (one for the output of the embeddings + one for the output of each layer) of shape (batch_size, sequence_length, hidden_size). Hidden-states of the model at the output of each layer plus the initial embedding outputs.

- attentions (tuple(torch.FloatTensor), optional, returned when output_attentions=True is passed or when config.output_attentions=True) — Tuple of torch.FloatTensor (one for each layer) of shape (batch_size, num_heads, sequence_length, sequence_length). Attention weights after the attention softmax, used to compute the weighted average in the self-attention heads.

The ViTMAEForPreTraining forward method overrides the __call__ special method.

Although the recipe for the forward pass needs to be defined within this function, one should call the Module instance afterwards instead of this, since the former takes care of running the pre- and post-processing steps while the latter silently ignores them.
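As a rough picture of what a pixel reconstruction loss restricted to masked patches looks like, and of what norm_pix_loss changes, consider the NumPy sketch below. It is an assumption-laden illustration, not the library's implementation: the helper name `masked_pixel_loss`, the epsilon value and the choice of the sample variance are all hypothetical.

```python
import numpy as np


def masked_pixel_loss(pred, target, mask, norm_pix_loss=False, eps=1e-6):
    """Mean squared error averaged over masked patches only (hypothetical helper).

    pred, target: (batch_size, num_patches, patch_size**2 * num_channels)
    mask:         (batch_size, num_patches), 1 = masked, 0 = visible
    """
    if norm_pix_loss:
        # Normalize each target patch by its own mean and variance.
        mean = target.mean(axis=-1, keepdims=True)
        var = target.var(axis=-1, keepdims=True, ddof=1)  # assumption: sample variance
        target = (target - mean) / np.sqrt(var + eps)
    per_patch = ((pred - target) ** 2).mean(axis=-1)
    # Visible patches (mask == 0) do not contribute to the loss.
    return float((per_patch * mask).sum() / mask.sum())


rng = np.random.default_rng(0)
target = rng.normal(size=(1, 4, 12))
mask = np.array([[0.0, 1.0, 1.0, 1.0]])

pred = target.copy()
pred[0, 0] += 1.0  # error on a visible patch is ignored
loss_visible_error = masked_pixel_loss(pred, target, mask)

pred[0, 1] += 2.0  # error on a masked patch is counted
loss_masked_error = masked_pixel_loss(pred, target, mask)
print(loss_visible_error, loss_masked_error)
```

In this toy setup the first loss is zero because the only error lies on a visible patch, while the second averages a squared error of 4 over three masked patches.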

Examples:

>>> from transformers import AutoImageProcessor, ViTMAEForPreTraining

>>> from PIL import Image

>>> import requests

>>> url = "http://images.cocodataset.org/val2017/000000039769.jpg"

>>> image = Image.open(requests.get(url, stream=True).raw)

>>> image_processor = AutoImageProcessor.from_pretrained("facebook/vit-mae-base")

>>> model = ViTMAEForPreTraining.from_pretrained("facebook/vit-mae-base")

>>> inputs = image_processor(images=image, return_tensors="pt")

>>> outputs = model(**inputs)

>>> loss = outputs.loss

>>> mask = outputs.mask

>>> ids_restore = outputs.ids_restore

TFViTMAEModel

class transformers.TFViTMAEModel

( config: ViTMAEConfig *inputs **kwargs )

Parameters

- config (ViTMAEConfig) — Model configuration class with all the parameters of the model. Initializing with a config file does not load the weights associated with the model, only the configuration. Check out the from_pretrained() method to load the model weights.

The bare ViTMAE Model transformer outputting raw hidden-states without any specific head on top. This model inherits from TFPreTrainedModel. Check the superclass documentation for the generic methods the library implements for all its models (such as downloading or saving, resizing the input embeddings, pruning heads etc.)

This model is also a keras.Model subclass. Use it as a regular TF 2.0 Keras Model and refer to the TF 2.0 documentation for all matters related to general usage and behavior.

TensorFlow models and layers in transformers accept two formats as input:

- having all inputs as keyword arguments (like PyTorch models), or

- having all inputs as a list, tuple or dict in the first positional argument.

The reason the second format is supported is that Keras methods prefer this format when passing inputs to models and layers. Because of this support, when using methods like model.fit() things should “just work” for you - just pass your inputs and labels in any format that model.fit() supports! If, however, you want to use the second format outside of Keras methods like fit() and predict(), such as when creating your own layers or models with the Keras Functional API, there are three possibilities you can use to gather all the input Tensors in the first positional argument:

- a single Tensor with pixel_values only and nothing else: model(pixel_values)

- a list of varying length with one or several input Tensors IN THE ORDER given in the docstring: model([pixel_values, attention_mask]) or model([pixel_values, attention_mask, token_type_ids])

- a dictionary with one or several input Tensors associated to the input names given in the docstring: model({"pixel_values": pixel_values, "token_type_ids": token_type_ids})

Note that when creating models and layers with subclassing then you don’t need to worry about any of this, as you can just pass inputs like you would to any other Python function!

call

( pixel_values: TFModelInputType | None = None noise: tf.Tensor = None head_mask: np.ndarray | tf.Tensor | None = None output_attentions: Optional[bool] = None output_hidden_states: Optional[bool] = None return_dict: Optional[bool] = None training: bool = False ) → transformers.models.vit_mae.modeling_tf_vit_mae.TFViTMAEModelOutput or tuple(tf.Tensor)

Parameters

- pixel_values (np.ndarray, tf.Tensor, List[tf.Tensor], Dict[str, tf.Tensor] or Dict[str, np.ndarray], where each example must have the shape (batch_size, num_channels, height, width)) — Pixel values. Pixel values can be obtained using AutoImageProcessor. See ViTImageProcessor.call() for details.

- head_mask (np.ndarray or tf.Tensor of shape (num_heads,) or (num_layers, num_heads), optional) — Mask to nullify selected heads of the self-attention modules. Mask values selected in [0, 1]:

  - 1 indicates the head is not masked,

  - 0 indicates the head is masked.

- output_attentions (bool, optional) — Whether or not to return the attentions tensors of all attention layers. See attentions under returned tensors for more detail. This argument can be used only in eager mode, in graph mode the value in the config will be used instead.

- output_hidden_states (bool, optional) — Whether or not to return the hidden states of all layers. See hidden_states under returned tensors for more detail. This argument can be used only in eager mode, in graph mode the value in the config will be used instead.

- return_dict (bool, optional) — Whether or not to return a ModelOutput instead of a plain tuple. This argument can be used in eager mode, in graph mode the value will always be set to True.

- training (bool, optional, defaults to False) — Whether or not to use the model in training mode (some modules like dropout modules have different behaviors between training and evaluation).

Returns

transformers.models.vit_mae.modeling_tf_vit_mae.TFViTMAEModelOutput or tuple(tf.Tensor)

A transformers.models.vit_mae.modeling_tf_vit_mae.TFViTMAEModelOutput or a tuple of tf.Tensor (if return_dict=False is passed or when config.return_dict=False) comprising various elements depending on the configuration (ViTMAEConfig) and inputs.

- last_hidden_state (tf.Tensor of shape (batch_size, sequence_length, hidden_size)) — Sequence of hidden-states at the output of the last layer of the model.

- mask (tf.Tensor of shape (batch_size, sequence_length)) — Tensor indicating which patches are masked (1) and which are not (0).

- ids_restore (tf.Tensor of shape (batch_size, sequence_length)) — Tensor containing the original index of the (shuffled) masked patches.

- hidden_states (tuple(tf.Tensor), optional, returned when output_hidden_states=True is passed or when config.output_hidden_states=True) — Tuple of tf.Tensor (one for the output of the embeddings + one for the output of each layer) of shape (batch_size, sequence_length, hidden_size). Hidden-states of the model at the output of each layer plus the initial embedding outputs.

- attentions (tuple(tf.Tensor), optional, returned when output_attentions=True is passed or when config.output_attentions=True) — Tuple of tf.Tensor (one for each layer) of shape (batch_size, num_heads, sequence_length, sequence_length). Attention weights after the attention softmax, used to compute the weighted average in the self-attention heads.

The TFViTMAEModel forward method overrides the __call__ special method.

Although the recipe for the forward pass needs to be defined within this function, one should call the Module instance afterwards instead of this, since the former takes care of running the pre- and post-processing steps while the latter silently ignores them.

Examples:

>>> from transformers import AutoImageProcessor, TFViTMAEModel

>>> from PIL import Image

>>> import requests

>>> url = "http://images.cocodataset.org/val2017/000000039769.jpg"

>>> image = Image.open(requests.get(url, stream=True).raw)

>>> image_processor = AutoImageProcessor.from_pretrained("facebook/vit-mae-base")

>>> model = TFViTMAEModel.from_pretrained("facebook/vit-mae-base")

>>> inputs = image_processor(images=image, return_tensors="tf")

>>> outputs = model(**inputs)

>>> last_hidden_states = outputs.last_hidden_state

TFViTMAEForPreTraining

class transformers.TFViTMAEForPreTraining

( config )

Parameters

- config (ViTMAEConfig) — Model configuration class with all the parameters of the model. Initializing with a config file does not load the weights associated with the model, only the configuration. Check out the from_pretrained() method to load the model weights.

The ViTMAE Model transformer with the decoder on top for self-supervised pre-training. This model inherits from TFPreTrainedModel. Check the superclass documentation for the generic methods the library implements for all its models (such as downloading or saving, resizing the input embeddings, pruning heads etc.)

This model is also a keras.Model subclass. Use it as a regular TF 2.0 Keras Model and refer to the TF 2.0 documentation for all matters related to general usage and behavior.

TensorFlow models and layers in transformers accept two formats as input:

- having all inputs as keyword arguments (like PyTorch models), or

- having all inputs as a list, tuple or dict in the first positional argument.

The reason the second format is supported is that Keras methods prefer this format when passing inputs to models and layers. Because of this support, when using methods like model.fit() things should “just work” for you - just pass your inputs and labels in any format that model.fit() supports! If, however, you want to use the second format outside of Keras methods like fit() and predict(), such as when creating your own layers or models with the Keras Functional API, there are three possibilities you can use to gather all the input Tensors in the first positional argument:

- a single Tensor with pixel_values only and nothing else: model(pixel_values)

- a list of varying length with one or several input Tensors IN THE ORDER given in the docstring: model([pixel_values, attention_mask]) or model([pixel_values, attention_mask, token_type_ids])

- a dictionary with one or several input Tensors associated to the input names given in the docstring: model({"pixel_values": pixel_values, "token_type_ids": token_type_ids})

Note that when creating models and layers with subclassing then you don’t need to worry about any of this, as you can just pass inputs like you would to any other Python function!

call

( pixel_values: TFModelInputType | None = None noise: tf.Tensor = None head_mask: np.ndarray | tf.Tensor | None = None output_attentions: Optional[bool] = None output_hidden_states: Optional[bool] = None return_dict: Optional[bool] = None training: bool = False ) → transformers.models.vit_mae.modeling_tf_vit_mae.TFViTMAEForPreTrainingOutput or tuple(tf.Tensor)

Parameters

- pixel_values (np.ndarray, tf.Tensor, List[tf.Tensor], Dict[str, tf.Tensor] or Dict[str, np.ndarray], where each example must have the shape (batch_size, num_channels, height, width)) — Pixel values. Pixel values can be obtained using AutoImageProcessor. See ViTImageProcessor.call() for details.

- head_mask (np.ndarray or tf.Tensor of shape (num_heads,) or (num_layers, num_heads), optional) — Mask to nullify selected heads of the self-attention modules. Mask values selected in [0, 1]:

  - 1 indicates the head is not masked,

  - 0 indicates the head is masked.

- output_attentions (bool, optional) — Whether or not to return the attentions tensors of all attention layers. See attentions under returned tensors for more detail. This argument can be used only in eager mode, in graph mode the value in the config will be used instead.

- output_hidden_states (bool, optional) — Whether or not to return the hidden states of all layers. See hidden_states under returned tensors for more detail. This argument can be used only in eager mode, in graph mode the value in the config will be used instead.

- return_dict (bool, optional) — Whether or not to return a ModelOutput instead of a plain tuple. This argument can be used in eager mode, in graph mode the value will always be set to True.

- training (bool, optional, defaults to False) — Whether or not to use the model in training mode (some modules like dropout modules have different behaviors between training and evaluation).

Returns

transformers.models.vit_mae.modeling_tf_vit_mae.TFViTMAEForPreTrainingOutput or tuple(tf.Tensor)

A transformers.models.vit_mae.modeling_tf_vit_mae.TFViTMAEForPreTrainingOutput or a tuple of tf.Tensor (if return_dict=False is passed or when config.return_dict=False) comprising various elements depending on the configuration (ViTMAEConfig) and inputs.

- loss (tf.Tensor of shape (1,)) — Pixel reconstruction loss.

- logits (tf.Tensor of shape (batch_size, sequence_length, patch_size ** 2 * num_channels)) — Pixel reconstruction logits.

- mask (tf.Tensor of shape (batch_size, sequence_length)) — Tensor indicating which patches are masked (1) and which are not (0).

- ids_restore (tf.Tensor of shape (batch_size, sequence_length)) — Tensor containing the original index of the (shuffled) masked patches.

- hidden_states (tuple(tf.Tensor), optional, returned when output_hidden_states=True is passed or when config.output_hidden_states=True) — Tuple of tf.Tensor (one for the output of the embeddings + one for the output of each layer) of shape (batch_size, sequence_length, hidden_size). Hidden-states of the model at the output of each layer plus the initial embedding outputs.

- attentions (tuple(tf.Tensor), optional, returned when output_attentions=True is passed or when config.output_attentions=True) — Tuple of tf.Tensor (one for each layer) of shape (batch_size, num_heads, sequence_length, sequence_length). Attention weights after the attention softmax, used to compute the weighted average in the self-attention heads.

The TFViTMAEForPreTraining forward method overrides the __call__ special method.

Although the recipe for the forward pass needs to be defined within this function, one should call the Module instance afterwards instead of this, since the former takes care of running the pre- and post-processing steps while the latter silently ignores them.
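Because logits has one row of patch_size ** 2 * num_channels values per patch, visualizing a reconstruction means mapping between images and patch sequences. The NumPy sketch below shows one possible mapping and how the mask can be used to paste predicted patches into the original image. The helpers `patchify` and `unpatchify` are hypothetical, and the ordering of pixels inside a patch is an assumption that may differ from the library's layout.

```python
import numpy as np


def patchify(images, patch_size):
    """(batch, channels, height, width) -> (batch, num_patches, patch_size**2 * channels)."""
    b, c, h, w = images.shape
    gh, gw = h // patch_size, w // patch_size
    x = images.reshape(b, c, gh, patch_size, gw, patch_size)
    x = x.transpose(0, 2, 4, 3, 5, 1)  # (b, gh, gw, row, col, channel)
    return x.reshape(b, gh * gw, patch_size * patch_size * c)


def unpatchify(patches, patch_size, num_channels):
    """Inverse of patchify for square images."""
    b, n, _ = patches.shape
    g = int(round(n**0.5))
    x = patches.reshape(b, g, g, patch_size, patch_size, num_channels)
    x = x.transpose(0, 5, 1, 3, 2, 4)  # (b, channel, gh, row, gw, col)
    return x.reshape(b, num_channels, g * patch_size, g * patch_size)


rng = np.random.default_rng(0)
images = rng.normal(size=(1, 3, 32, 32))
target = patchify(images, patch_size=8)  # shape (1, 16, 192)

# Stand-ins for model outputs: zero predictions and the first 12 patches masked.
pred = np.zeros_like(target)
mask = np.zeros((1, 16))
mask[0, :12] = 1

# Keep the original pixels where mask == 0, use predictions where mask == 1.
composite = target * (1 - mask[..., None]) + pred * mask[..., None]
composite_image = unpatchify(composite, patch_size=8, num_channels=3)
print(target.shape, composite_image.shape)
```

The same compositing applies to real outputs once the predicted patches and mask are converted to arrays of these shapes.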

Examples:

>>> from transformers import AutoImageProcessor, TFViTMAEForPreTraining

>>> from PIL import Image

>>> import requests

>>> url = "http://images.cocodataset.org/val2017/000000039769.jpg"

>>> image = Image.open(requests.get(url, stream=True).raw)

>>> image_processor = AutoImageProcessor.from_pretrained("facebook/vit-mae-base")

>>> model = TFViTMAEForPreTraining.from_pretrained("facebook/vit-mae-base")

>>> inputs = image_processor(images=image, return_tensors="tf")

>>> outputs = model(**inputs)

>>> loss = outputs.loss

>>> mask = outputs.mask

>>> ids_restore = outputs.ids_restore