Conditional DETR

Overview

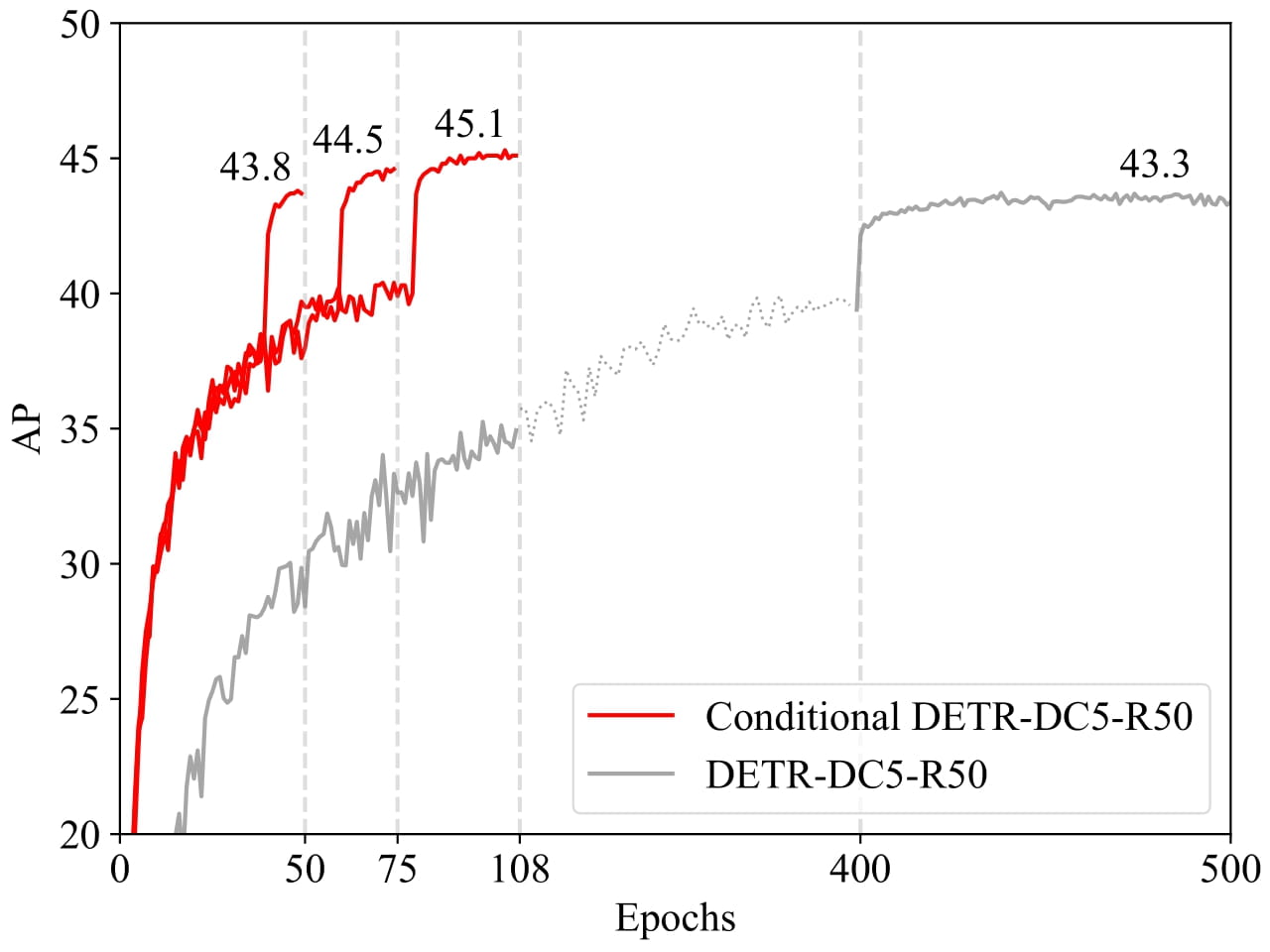

The Conditional DETR model was proposed in Conditional DETR for Fast Training Convergence by Depu Meng, Xiaokang Chen, Zejia Fan, Gang Zeng, Houqiang Li, Yuhui Yuan, Lei Sun, and Jingdong Wang. Conditional DETR presents a conditional cross-attention mechanism for fast DETR training. It converges 6.7× to 10× faster than DETR.

The abstract from the paper is the following:

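The recently-developed DETR approach applies the transformer encoder and decoder architecture to object detection and achieves promising performance. In this paper, we handle the critical issue, slow training convergence, and present a conditional cross-attention mechanism for fast DETR training. Our approach is motivated by that the cross-attention in DETR relies highly on the content embeddings for localizing the four extremities and predicting the box, which increases the need for high-quality content embeddings and thus the training difficulty. Our approach, named conditional DETR, learns a conditional spatial query from the decoder embedding for decoder multi-head cross-attention. The benefit is that through the conditional spatial query, each cross-attention head is able to attend to a band containing a distinct region, e.g., one object extremity or a region inside the object box. This narrows down the spatial range for localizing the distinct regions for object classification and box regression, thus relaxing the dependence on the content embeddings and easing the training. Empirical results show that conditional DETR converges 6.7× faster for the backbones R50 and R101 and 10× faster for stronger backbones DC5-R50 and DC5-R101. Code is available at https://github.com/Atten4Vis/ConditionalDETR.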

Conditional DETR shows much faster convergence compared to the original DETR. Taken from the original paper.

This model was contributed by DepuMeng. The original code can be found at https://github.com/Atten4Vis/ConditionalDETR.

Resources

ConditionalDetrConfig

class transformers.ConditionalDetrConfig

< source >( use_timm_backbone = True backbone_config = None num_channels = 3 num_queries = 300 encoder_layers = 6 encoder_ffn_dim = 2048 encoder_attention_heads = 8 decoder_layers = 6 decoder_ffn_dim = 2048 decoder_attention_heads = 8 encoder_layerdrop = 0.0 decoder_layerdrop = 0.0 is_encoder_decoder = True activation_function = 'relu' d_model = 256 dropout = 0.1 attention_dropout = 0.0 activation_dropout = 0.0 init_std = 0.02 init_xavier_std = 1.0 auxiliary_loss = False position_embedding_type = 'sine' backbone = 'resnet50' use_pretrained_backbone = True dilation = False class_cost = 2 bbox_cost = 5 giou_cost = 2 mask_loss_coefficient = 1 dice_loss_coefficient = 1 cls_loss_coefficient = 2 bbox_loss_coefficient = 5 giou_loss_coefficient = 2 focal_alpha = 0.25 **kwargs )

Parameters

- use_timm_backbone (`bool`, optional, defaults to `True`) — Whether or not to use the `timm` library for the backbone. If set to `False`, will use the `AutoBackbone` API.
- backbone_config (`PretrainedConfig` or `dict`, optional) — The configuration of the backbone model. Only used in case `use_timm_backbone` is set to `False`, in which case it will default to `ResNetConfig()`.
- num_channels (`int`, optional, defaults to 3) — The number of input channels.
- num_queries (`int`, optional, defaults to 300) — Number of object queries, i.e. detection slots. This is the maximal number of objects ConditionalDetrModel can detect in a single image. For COCO, we recommend 100 queries.
- d_model (`int`, optional, defaults to 256) — Dimension of the layers.
- encoder_layers (`int`, optional, defaults to 6) — Number of encoder layers.
- decoder_layers (`int`, optional, defaults to 6) — Number of decoder layers.
- encoder_attention_heads (`int`, optional, defaults to 8) — Number of attention heads for each attention layer in the Transformer encoder.
- decoder_attention_heads (`int`, optional, defaults to 8) — Number of attention heads for each attention layer in the Transformer decoder.
- decoder_ffn_dim (`int`, optional, defaults to 2048) — Dimension of the “intermediate” (often named feed-forward) layer in the decoder.
- encoder_ffn_dim (`int`, optional, defaults to 2048) — Dimension of the “intermediate” (often named feed-forward) layer in the encoder.
- activation_function (`str` or `function`, optional, defaults to `"relu"`) — The non-linear activation function (function or string) in the encoder and pooler. If string, `"gelu"`, `"relu"`, `"silu"` and `"gelu_new"` are supported.
- dropout (`float`, optional, defaults to 0.1) — The dropout probability for all fully connected layers in the embeddings, encoder, and pooler.
- attention_dropout (`float`, optional, defaults to 0.0) — The dropout ratio for the attention probabilities.
- activation_dropout (`float`, optional, defaults to 0.0) — The dropout ratio for activations inside the fully connected layer.
- init_std (`float`, optional, defaults to 0.02) — The standard deviation of the truncated_normal_initializer for initializing all weight matrices.
- init_xavier_std (`float`, optional, defaults to 1) — The scaling factor used for the Xavier initialization gain in the HM Attention map module.
- encoder_layerdrop (`float`, optional, defaults to 0.0) — The LayerDrop probability for the encoder. See the [LayerDrop paper](https://arxiv.org/abs/1909.11556) for more details.
- decoder_layerdrop (`float`, optional, defaults to 0.0) — The LayerDrop probability for the decoder. See the [LayerDrop paper](https://arxiv.org/abs/1909.11556) for more details.
- auxiliary_loss (`bool`, optional, defaults to `False`) — Whether auxiliary decoding losses (loss at each decoder layer) are to be used.
- position_embedding_type (`str`, optional, defaults to `"sine"`) — Type of position embeddings to be used on top of the image features. One of `"sine"` or `"learned"`.
- backbone (`str`, optional, defaults to `"resnet50"`) — Name of convolutional backbone to use in case `use_timm_backbone=True`. Supports any convolutional backbone from the timm package. For a list of all available models, see this page.
- use_pretrained_backbone (`bool`, optional, defaults to `True`) — Whether to use pretrained weights for the backbone. Only supported when `use_timm_backbone=True`.
- dilation (`bool`, optional, defaults to `False`) — Whether to replace stride with dilation in the last convolutional block (DC5). Only supported when `use_timm_backbone=True`.
- class_cost (`float`, optional, defaults to 2) — Relative weight of the classification error in the Hungarian matching cost.
- bbox_cost (`float`, optional, defaults to 5) — Relative weight of the L1 error of the bounding box coordinates in the Hungarian matching cost.
- giou_cost (`float`, optional, defaults to 2) — Relative weight of the generalized IoU loss of the bounding box in the Hungarian matching cost.
- mask_loss_coefficient (`float`, optional, defaults to 1) — Relative weight of the Focal loss in the panoptic segmentation loss.
- dice_loss_coefficient (`float`, optional, defaults to 1) — Relative weight of the DICE/F-1 loss in the panoptic segmentation loss.
- bbox_loss_coefficient (`float`, optional, defaults to 5) — Relative weight of the L1 bounding box loss in the object detection loss.
- giou_loss_coefficient (`float`, optional, defaults to 2) — Relative weight of the generalized IoU loss in the object detection loss.
- eos_coefficient (`float`, optional, defaults to 0.1) — Relative classification weight of the ‘no-object’ class in the object detection loss.
- focal_alpha (`float`, optional, defaults to 0.25) — Alpha parameter in the focal loss.
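To make the roles of `class_cost`, `bbox_cost` and `giou_cost` concrete, the following is a minimal pure-Python sketch of how three such weights could combine into a single matching cost for one (prediction, target) pair. It is an illustration under stated assumptions, not the library's matcher: the function names are hypothetical, boxes are assumed to be normalized `(center_x, center_y, width, height)`, and the classification term is simplified to the negative class probability.

```python
def box_cxcywh_to_xyxy(box):
    """Convert (center_x, center_y, width, height) to (x0, y0, x1, y1)."""
    cx, cy, w, h = box
    return (cx - w / 2, cy - h / 2, cx + w / 2, cy + h / 2)


def generalized_iou(a, b):
    """Generalized IoU of two (x0, y0, x1, y1) boxes with positive area."""
    area_a = (a[2] - a[0]) * (a[3] - a[1])
    area_b = (b[2] - b[0]) * (b[3] - b[1])
    inter_w = max(0.0, min(a[2], b[2]) - max(a[0], b[0]))
    inter_h = max(0.0, min(a[3], b[3]) - max(a[1], b[1]))
    inter = inter_w * inter_h
    union = area_a + area_b - inter
    # Area of the smallest box enclosing both inputs.
    enclose = (max(a[2], b[2]) - min(a[0], b[0])) * (max(a[3], b[3]) - min(a[1], b[1]))
    return inter / union - (enclose - union) / enclose


def matching_cost(class_prob, pred_box, target_box,
                  class_cost=2.0, bbox_cost=5.0, giou_cost=2.0):
    """Weighted sum of the three cost terms (lower means a better match).

    Assumption: the classification term is -class_prob; the real matcher
    may use a different (for example focal-style) classification cost.
    """
    l1 = sum(abs(p - t) for p, t in zip(pred_box, target_box))
    giou = generalized_iou(box_cxcywh_to_xyxy(pred_box), box_cxcywh_to_xyxy(target_box))
    return class_cost * (-class_prob) + bbox_cost * l1 + giou_cost * (-giou)


target = (0.5, 0.5, 0.2, 0.2)
good = matching_cost(0.9, (0.5, 0.5, 0.2, 0.2), target)  # confident, perfectly placed
bad = matching_cost(0.1, (0.2, 0.2, 0.1, 0.1), target)   # unsure, far away
print(good < bad)
```

A Hungarian solver would then pick the one-to-one assignment that minimizes the sum of these costs over all prediction/target pairs.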

This is the configuration class to store the configuration of a ConditionalDetrModel. It is used to instantiate a Conditional DETR model according to the specified arguments, defining the model architecture. Instantiating a configuration with the defaults will yield a similar configuration to that of the Conditional DETR microsoft/conditional-detr-resnet-50 architecture.

Configuration objects inherit from PretrainedConfig and can be used to control the model outputs. Read the documentation from PretrainedConfig for more information.

Examples:

>>> from transformers import ConditionalDetrConfig, ConditionalDetrModel

>>> # Initializing a Conditional DETR microsoft/conditional-detr-resnet-50 style configuration

>>> configuration = ConditionalDetrConfig()

>>> # Initializing a model (with random weights) from the microsoft/conditional-detr-resnet-50 style configuration

>>> model = ConditionalDetrModel(configuration)

>>> # Accessing the model configuration

>>> configuration = model.config

ConditionalDetrImageProcessor

class transformers.ConditionalDetrImageProcessor

< source >( format: typing.Union[str, transformers.models.conditional_detr.image_processing_conditional_detr.AnnotionFormat] = <AnnotionFormat.COCO_DETECTION: 'coco_detection'> do_resize: bool = True size: typing.Dict[str, int] = None resample: Resampling = <Resampling.BILINEAR: 2> do_rescale: bool = True rescale_factor: typing.Union[int, float] = 0.00392156862745098 do_normalize: bool = True image_mean: typing.Union[float, typing.List[float]] = None image_std: typing.Union[float, typing.List[float]] = None do_pad: bool = True **kwargs )

Parameters

- format (`str`, optional, defaults to `"coco_detection"`) — Data format of the annotations. One of “coco_detection” or “coco_panoptic”.
- do_resize (`bool`, optional, defaults to `True`) — Controls whether to resize the image’s (height, width) dimensions to the specified `size`. Can be overridden by the `do_resize` parameter in the `preprocess` method.
- size (`Dict[str, int]`, optional, defaults to `{"shortest_edge": 800, "longest_edge": 1333}`) — Size of the image’s (height, width) dimensions after resizing. Can be overridden by the `size` parameter in the `preprocess` method.
- resample (`PILImageResampling`, optional, defaults to `PILImageResampling.BILINEAR`) — Resampling filter to use if resizing the image.
- do_rescale (`bool`, optional, defaults to `True`) — Controls whether to rescale the image by the specified scale `rescale_factor`. Can be overridden by the `do_rescale` parameter in the `preprocess` method.
- rescale_factor (`int` or `float`, optional, defaults to `1/255`) — Scale factor to use if rescaling the image. Can be overridden by the `rescale_factor` parameter in the `preprocess` method.
- do_normalize (`bool`, optional, defaults to `True`) — Controls whether to normalize the image. Can be overridden by the `do_normalize` parameter in the `preprocess` method.
- image_mean (`float` or `List[float]`, optional, defaults to `IMAGENET_DEFAULT_MEAN`) — Mean values to use when normalizing the image. Can be a single value or a list of values, one for each channel. Can be overridden by the `image_mean` parameter in the `preprocess` method.
- image_std (`float` or `List[float]`, optional, defaults to `IMAGENET_DEFAULT_STD`) — Standard deviation values to use when normalizing the image. Can be a single value or a list of values, one for each channel. Can be overridden by the `image_std` parameter in the `preprocess` method.
- do_pad (`bool`, optional, defaults to `True`) — Controls whether to pad the image to the largest image in a batch and create a pixel mask. Can be overridden by the `do_pad` parameter in the `preprocess` method.
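As a rough illustration of what the resize, rescale and normalize options describe, here is a small pure-Python sketch. It is not the processor's implementation: `output_size` and `rescale_and_normalize` are hypothetical helpers, the rounding of the resized dimensions is an assumption and may differ by a pixel from the library, and the mean and standard deviation values are the usual ImageNet statistics.

```python
# Commonly used ImageNet channel statistics (RGB order).
IMAGENET_DEFAULT_MEAN = (0.485, 0.456, 0.406)
IMAGENET_DEFAULT_STD = (0.229, 0.224, 0.225)


def output_size(height, width, shortest_edge=800, longest_edge=1333):
    """Scale so the short side reaches shortest_edge, unless that would push
    the long side past longest_edge; the aspect ratio is preserved."""
    short_side, long_side = min(height, width), max(height, width)
    scale = shortest_edge / short_side
    if long_side * scale > longest_edge:
        scale = longest_edge / long_side
    return round(height * scale), round(width * scale)


def rescale_and_normalize(pixel, rescale_factor=1 / 255,
                          mean=IMAGENET_DEFAULT_MEAN, std=IMAGENET_DEFAULT_STD):
    """Map one RGB pixel from [0, 255] to [0, 1], then standardize per channel."""
    return tuple((value * rescale_factor - m) / s
                 for value, m, s in zip(pixel, mean, std))


print(output_size(480, 640))    # short side is scaled up to 800
print(output_size(500, 2000))   # long side is capped at 1333
print(rescale_and_normalize((255, 0, 128)))
```

Images whose pixel values already lie in [0, 1] would skip the rescale step, which is what `do_rescale=False` expresses.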

Constructs a Conditional Detr image processor.

preprocess

< source >( images: typing.Union[ForwardRef('PIL.Image.Image'), numpy.ndarray, ForwardRef('torch.Tensor'), typing.List[ForwardRef('PIL.Image.Image')], typing.List[numpy.ndarray], typing.List[ForwardRef('torch.Tensor')]] annotations: typing.Union[typing.Dict[str, typing.Union[int, str, typing.List[typing.Dict]]], typing.List[typing.Dict[str, typing.Union[int, str, typing.List[typing.Dict]]]], NoneType] = None return_segmentation_masks: bool = None masks_path: typing.Union[str, pathlib.Path, NoneType] = None do_resize: typing.Optional[bool] = None size: typing.Union[typing.Dict[str, int], NoneType] = None resample = None do_rescale: typing.Optional[bool] = None rescale_factor: typing.Union[int, float, NoneType] = None do_normalize: typing.Optional[bool] = None image_mean: typing.Union[float, typing.List[float], NoneType] = None image_std: typing.Union[float, typing.List[float], NoneType] = None do_pad: typing.Optional[bool] = None format: typing.Union[str, transformers.models.conditional_detr.image_processing_conditional_detr.AnnotionFormat, NoneType] = None return_tensors: typing.Union[str, transformers.utils.generic.TensorType, NoneType] = None data_format: typing.Union[str, transformers.image_utils.ChannelDimension] = <ChannelDimension.FIRST: 'channels_first'> input_data_format: typing.Union[str, transformers.image_utils.ChannelDimension, NoneType] = None **kwargs )

Parameters

- images (`ImageInput`) — Image or batch of images to preprocess. Expects a single or batch of images with pixel values ranging from 0 to 255. If passing in images with pixel values between 0 and 1, set `do_rescale=False`.
- annotations (`AnnotationType` or `List[AnnotationType]`, optional) — List of annotations associated with the image or batch of images. If annotation is for object detection, the annotations should be a dictionary with the following keys:
  - “image_id” (`int`): The image id.
  - “annotations” (`List[Dict]`): List of annotations for an image. Each annotation should be a dictionary. An image can have no annotations, in which case the list should be empty.

  If annotation is for segmentation, the annotations should be a dictionary with the following keys:
  - “image_id” (`int`): The image id.
  - “segments_info” (`List[Dict]`): List of segments for an image. Each segment should be a dictionary. An image can have no segments, in which case the list should be empty.
  - “file_name” (`str`): The file name of the image.
- return_segmentation_masks (`bool`, optional, defaults to self.return_segmentation_masks) — Whether to return segmentation masks.
- masks_path (`str` or `pathlib.Path`, optional) — Path to the directory containing the segmentation masks.
- do_resize (`bool`, optional, defaults to self.do_resize) — Whether to resize the image.
- size (`Dict[str, int]`, optional, defaults to self.size) — Size of the image after resizing.
- resample (`PILImageResampling`, optional, defaults to self.resample) — Resampling filter to use when resizing the image.
- do_rescale (`bool`, optional, defaults to self.do_rescale) — Whether to rescale the image.
- rescale_factor (`float`, optional, defaults to self.rescale_factor) — Rescale factor to use when rescaling the image.
- do_normalize (`bool`, optional, defaults to self.do_normalize) — Whether to normalize the image.
- image_mean (`float` or `List[float]`, optional, defaults to self.image_mean) — Mean to use when normalizing the image.
- image_std (`float` or `List[float]`, optional, defaults to self.image_std) — Standard deviation to use when normalizing the image.
- do_pad (`bool`, optional, defaults to self.do_pad) — Whether to pad the image.
- format (`str` or `AnnotionFormat`, optional, defaults to self.format) — Format of the annotations.
- return_tensors (`str` or `TensorType`, optional, defaults to self.return_tensors) — Type of tensors to return. If `None`, will return the list of images.
- data_format (`ChannelDimension` or `str`, optional, defaults to `ChannelDimension.FIRST`) — The channel dimension format for the output image. Can be one of:
  - `"channels_first"` or `ChannelDimension.FIRST`: image in (num_channels, height, width) format.
  - `"channels_last"` or `ChannelDimension.LAST`: image in (height, width, num_channels) format.
  - Unset: Use the channel dimension format of the input image.
- input_data_format (`ChannelDimension` or `str`, optional) — The channel dimension format for the input image. If unset, the channel dimension format is inferred from the input image. Can be one of:
  - `"channels_first"` or `ChannelDimension.FIRST`: image in (num_channels, height, width) format.
  - `"channels_last"` or `ChannelDimension.LAST`: image in (height, width, num_channels) format.
  - `"none"` or `ChannelDimension.NONE`: image in (height, width) format.
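For orientation, the two annotation layouts described for `annotations` might look as follows. This is only a sketch: the ids and file name are made up, and the fields inside each annotation or segment (`bbox`, `category_id`, `area`, `iscrowd`, `id`) follow the usual COCO conventions, which is an assumption since the parameter description only names the top-level keys. `has_required_keys` is a hypothetical helper, not part of the library.

```python
# Object detection ("coco_detection"): one dictionary per image.
detection_target = {
    "image_id": 42,  # made-up id
    "annotations": [
        {
            "bbox": [10.0, 20.0, 100.0, 50.0],  # COCO convention: [x, y, width, height]
            "category_id": 1,
            "area": 5000.0,
            "iscrowd": 0,
        },
    ],
}

# An image without objects keeps the key but uses an empty list.
empty_target = {"image_id": 43, "annotations": []}

# Segmentation ("coco_panoptic"): segments plus the name of the mask file.
panoptic_target = {
    "image_id": 42,
    "file_name": "000000000042.png",  # made-up file name
    "segments_info": [
        {"id": 1, "category_id": 1, "area": 5000, "iscrowd": 0},
    ],
}


def has_required_keys(target, annotation_format):
    """Check the top-level keys named in the parameter description."""
    required = {
        "coco_detection": {"image_id", "annotations"},
        "coco_panoptic": {"image_id", "segments_info", "file_name"},
    }[annotation_format]
    return required <= target.keys()


print(has_required_keys(detection_target, "coco_detection"))
print(has_required_keys(panoptic_target, "coco_panoptic"))
```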

Preprocess an image or a batch of images so that it can be used by the model.
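The padding step (`do_pad`) can be pictured with a toy example. The sketch below pads single-channel images, stored as nested lists, to the largest height and width in the batch and builds a mask with 1 for real pixels and 0 for padding. `pad_batch` is a hypothetical helper, and padding at the bottom and right with zeros is an assumption about the layout.

```python
def pad_batch(images):
    """Pad 2D images (lists of rows) to a common size and build pixel masks.

    Returns (padded_images, pixel_masks); a mask value of 1 marks a real
    pixel and 0 marks padding.
    """
    max_h = max(len(img) for img in images)
    max_w = max(len(img[0]) for img in images)
    padded, masks = [], []
    for img in images:
        h, w = len(img), len(img[0])
        extra_rows = max_h - h
        padded.append([row + [0] * (max_w - w) for row in img]
                      + [[0] * max_w for _ in range(extra_rows)])
        masks.append([[1] * w + [0] * (max_w - w) for _ in range(h)]
                     + [[0] * max_w for _ in range(extra_rows)])
    return padded, masks


batch = [
    [[5, 5], [5, 5]],  # 2 x 2 image
    [[7, 7, 7]],       # 1 x 3 image
]
padded, masks = pad_batch(batch)
print(padded)
print(masks)
```

After padding, every image in the batch shares one shape, so the batch can be stacked into a single tensor while the mask records which positions carry real image content.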

post_process_object_detection

< source >( outputs threshold: float = 0.5 target_sizes: typing.Union[transformers.utils.generic.TensorType, typing.List[typing.Tuple]] = None top_k: int = 100 ) → List[Dict]

Parameters

- outputs (`DetrObjectDetectionOutput`) — Raw outputs of the model.
- threshold (`float`, optional, defaults to 0.5) — Score threshold to keep object detection predictions.
- target_sizes (`torch.Tensor` or `List[Tuple[int, int]]`, optional) — Tensor of shape `(batch_size, 2)` or list of tuples (`Tuple[int, int]`) containing the target size (height, width) of each image in the batch. If left to None, predictions will not be resized.
- top_k (`int`, optional, defaults to 100) — Keep only top k bounding boxes before filtering by thresholding.

Returns

List[Dict]

A list of dictionaries, each dictionary containing the scores, labels and boxes for an image in the batch as predicted by the model.

Converts the raw output of ConditionalDetrForObjectDetection into final bounding boxes in (top_left_x, top_left_y, bottom_right_x, bottom_right_y) format. Only supports PyTorch.
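The steps this method describes (score, keep the top k, threshold, convert and rescale boxes) can be sketched for a single image in plain Python. This is an illustration rather than the library code: `post_process` is a hypothetical function, and it assumes per-class sigmoid scores and boxes given as normalized `(center_x, center_y, width, height)`.

```python
import math


def post_process(logits, boxes, target_size=None, threshold=0.5, top_k=100):
    """logits: [num_queries][num_classes]; boxes: one normalized
    (center_x, center_y, width, height) tuple per query."""
    # Score every (query, class) pair with a sigmoid (assumption).
    candidates = []
    for query, row in enumerate(logits):
        for label, logit in enumerate(row):
            score = 1.0 / (1.0 + math.exp(-logit))
            candidates.append((score, label, query))
    # Keep the top_k highest scores first, then filter by threshold.
    candidates.sort(key=lambda c: c[0], reverse=True)
    results = []
    for score, label, query in candidates[:top_k]:
        if score <= threshold:
            continue
        cx, cy, w, h = boxes[query]
        # Center format to (top_left_x, top_left_y, bottom_right_x, bottom_right_y).
        box = [cx - w / 2, cy - h / 2, cx + w / 2, cy + h / 2]
        if target_size is not None:
            height, width = target_size
            box = [box[0] * width, box[1] * height, box[2] * width, box[3] * height]
        results.append({"score": score, "label": label, "box": box})
    return results


logits = [[2.0, -3.0], [-4.0, -5.0]]
boxes = [(0.5, 0.5, 0.2, 0.4), (0.1, 0.1, 0.1, 0.1)]
detections = post_process(logits, boxes, target_size=(100, 200))
print(detections)
```

Passing `target_size=None` leaves the boxes in normalized coordinates, mirroring the note that predictions are not resized when `target_sizes` is left unset.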

post_process_instance_segmentation

< source >( outputs threshold: float = 0.5 mask_threshold: float = 0.5 overlap_mask_area_threshold: float = 0.8 target_sizes: typing.Union[typing.List[typing.Tuple[int, int]], NoneType] = None return_coco_annotation: typing.Optional[bool] = False ) → List[Dict]

Parameters

- outputs (ConditionalDetrForSegmentation) — Raw outputs of the model.
- threshold (`float`, optional, defaults to 0.5) — The probability score threshold to keep predicted instance masks.
- mask_threshold (`float`, optional, defaults to 0.5) — Threshold to use when turning the predicted masks into binary values.
- overlap_mask_area_threshold (`float`, optional, defaults to 0.8) — The overlap mask area threshold to merge or discard small disconnected parts within each binary instance mask.
- target_sizes (`List[Tuple]`, optional) — List of length (batch_size), where each list item (`Tuple[int, int]`) corresponds to the requested final size (height, width) of each prediction. If unset, predictions will not be resized.
- return_coco_annotation (`bool`, optional, defaults to `False`) — If set to `True`, segmentation maps are returned in COCO run-length encoding (RLE) format.

Returns

List[Dict]

A list of dictionaries, one per image, each dictionary containing two keys:

- segmentation — A tensor of shape `(height, width)` where each pixel represents a `segment_id`, or a `List[List]` run-length encoding (RLE) of the segmentation map if `return_coco_annotation` is set to `True`. Set to `None` if no mask is found above `threshold`.
- segments_info — A dictionary that contains additional information on each segment.
  - id — An integer representing the `segment_id`.
  - label_id — An integer representing the label / semantic class id corresponding to `segment_id`.
  - score — Prediction score of segment with `segment_id`.

Converts the output of ConditionalDetrForSegmentation into instance segmentation predictions. Only supports PyTorch.
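To give a feel for what a run-length encoding of a binary mask is, here is a minimal sketch. The convention used (row-major flattening, 0-indexed `[start, length]` pairs for runs of ones) is an assumption chosen for readability; the exact layout returned when `return_coco_annotation=True` may differ. Both function names are hypothetical.

```python
def run_length_encode(mask):
    """Encode a binary mask (list of rows) as [start, length] runs of ones."""
    flat = [value for row in mask for value in row]
    runs, start = [], None
    for index, value in enumerate(flat):
        if value and start is None:
            start = index                        # a run of ones begins
        elif not value and start is not None:
            runs.append([start, index - start])  # the run ends
            start = None
    if start is not None:                        # run reaching the last pixel
        runs.append([start, len(flat) - start])
    return runs


def run_length_decode(runs, height, width):
    """Rebuild the binary mask from [start, length] runs."""
    flat = [0] * (height * width)
    for start, length in runs:
        flat[start:start + length] = [1] * length
    return [flat[r * width:(r + 1) * width] for r in range(height)]


mask = [[0, 1, 1],
        [1, 0, 0]]
runs = run_length_encode(mask)
print(runs)
print(run_length_decode(runs, 2, 3) == mask)
```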

post_process_semantic_segmentation

< source >( outputs target_sizes: typing.List[typing.Tuple[int, int]] = None ) → List[torch.Tensor]

Parameters

- outputs (ConditionalDetrForSegmentation) — Raw outputs of the model.

- target_sizes (`List[Tuple[int, int]]`, optional) — A list of tuples (`Tuple[int, int]`) containing the target size (height, width) of each image in the batch. If unset, predictions will not be resized.

Returns

List[torch.Tensor]

A list of length batch_size, where each item is a semantic segmentation map of shape (height, width) corresponding to the target_sizes entry (if target_sizes is specified). Each entry of each torch.Tensor corresponds to a semantic class id.

Converts the output of ConditionalDetrForSegmentation into semantic segmentation maps. Only supports PyTorch.
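One way to picture how per-query predictions become a map of class ids is the sketch below. It assumes that each query carries class probabilities and a per-pixel mask probability, that these are combined by a weighted sum over queries, and that each pixel takes the class with the highest combined score. `semantic_map` is a hypothetical function, and the combination rule is an assumption rather than a statement about the library internals.

```python
def semantic_map(class_probs, mask_probs):
    """class_probs: [num_queries][num_classes]
    mask_probs:  [num_queries][height][width]
    Returns a [height][width] map of class ids."""
    num_queries = len(class_probs)
    num_classes = len(class_probs[0])
    height, width = len(mask_probs[0]), len(mask_probs[0][0])
    result = []
    for y in range(height):
        row = []
        for x in range(width):
            # Per-class score: sum over queries of class prob * mask prob.
            scores = [
                sum(class_probs[q][c] * mask_probs[q][y][x] for q in range(num_queries))
                for c in range(num_classes)
            ]
            row.append(max(range(num_classes), key=scores.__getitem__))
        result.append(row)
    return result


class_probs = [[0.9, 0.1],   # query 0 mostly predicts class 0
               [0.2, 0.8]]   # query 1 mostly predicts class 1
mask_probs = [[[0.9, 0.1]],  # query 0 covers the left pixel
              [[0.1, 0.9]]]  # query 1 covers the right pixel
print(semantic_map(class_probs, mask_probs))
```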

post_process_panoptic_segmentation

< source >( outputs threshold: float = 0.5 mask_threshold: float = 0.5 overlap_mask_area_threshold: float = 0.8 label_ids_to_fuse: typing.Optional[typing.Set[int]] = None target_sizes: typing.Union[typing.List[typing.Tuple[int, int]], NoneType] = None ) → List[Dict]

Parameters

- outputs (ConditionalDetrForSegmentation) — The outputs from ConditionalDetrForSegmentation.
- threshold (`float`, optional, defaults to 0.5) — The probability score threshold to keep predicted instance masks.
- mask_threshold (`float`, optional, defaults to 0.5) — Threshold to use when turning the predicted masks into binary values.
- overlap_mask_area_threshold (`float`, optional, defaults to 0.8) — The overlap mask area threshold to merge or discard small disconnected parts within each binary instance mask.
- label_ids_to_fuse (`Set[int]`, optional) — The labels in this set will have all their instances fused together. For instance, we could say there can only be one sky in an image, but several persons, so the label ID for sky would be in that set, but not the one for person.
- target_sizes (`List[Tuple]`, optional) — List of length (batch_size), where each list item (`Tuple[int, int]`) corresponds to the requested final size (height, width) of each prediction in batch. If unset, predictions will not be resized.

Returns

List[Dict]

A list of dictionaries, one per image, each dictionary containing two keys:

- segmentation — A tensor of shape `(height, width)` where each pixel represents a `segment_id`, or `None` if no mask is found above `threshold`. If `target_sizes` is specified, segmentation is resized to the corresponding `target_sizes` entry.
- segments_info — A dictionary that contains additional information on each segment.
  - id — An integer representing the `segment_id`.
  - label_id — An integer representing the label / semantic class id corresponding to `segment_id`.
  - was_fused — A boolean, `True` if `label_id` was in `label_ids_to_fuse`, `False` otherwise. Multiple instances of the same class / label were fused and assigned a single `segment_id`.
  - score — Prediction score of segment with `segment_id`.

Converts the output of ConditionalDetrForSegmentation into image panoptic segmentation predictions. Only supports PyTorch.
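The effect of `label_ids_to_fuse` can be sketched without any tensors. In the hypothetical helper below, each predicted mask is reduced to a `(label_id, score)` pair. Masks whose label is in the fuse set share one segment id, while every other kept mask gets its own. The bookkeeping details are assumptions for illustration, not the library's exact behavior.

```python
def assign_segment_ids(predictions, label_ids_to_fuse=frozenset(), threshold=0.5):
    """predictions: list of (label_id, score), one entry per predicted mask.

    Returns (mask_segment_ids, segments_info); mask_segment_ids[i] is the
    segment id given to prediction i, or None if it was dropped.
    """
    mask_segment_ids, segments_info = [], []
    fused_ids = {}  # label_id -> segment id shared by all its instances
    next_id = 0
    for label_id, score in predictions:
        if score <= threshold:
            mask_segment_ids.append(None)  # below the score threshold
            continue
        was_fused = label_id in label_ids_to_fuse
        if was_fused and label_id in fused_ids:
            # Later instance of a fused label: reuse the existing segment.
            mask_segment_ids.append(fused_ids[label_id])
            continue
        next_id += 1
        if was_fused:
            fused_ids[label_id] = next_id
        mask_segment_ids.append(next_id)
        segments_info.append(
            {"id": next_id, "label_id": label_id, "was_fused": was_fused, "score": score}
        )
    return mask_segment_ids, segments_info


# Label 0 plays the role of "sky" (fused), label 1 of "person" (kept separate).
predictions = [(0, 0.9), (1, 0.8), (0, 0.7), (1, 0.95), (2, 0.3)]
ids, info = assign_segment_ids(predictions, label_ids_to_fuse={0})
print(ids)
print(info)
```

Here the two sky masks end up under one segment id, the two persons keep distinct ids, and the low-scoring prediction is discarded.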

ConditionalDetrFeatureExtractor

Preprocess an image or a batch of images.

post_process_object_detection

< source >( outputs threshold: float = 0.5 target_sizes: typing.Union[transformers.utils.generic.TensorType, typing.List[typing.Tuple]] = None top_k: int = 100 ) → List[Dict]

Parameters

- outputs (`DetrObjectDetectionOutput`) — Raw outputs of the model.
- threshold (`float`, optional, defaults to 0.5) — Score threshold to keep object detection predictions.
- target_sizes (`torch.Tensor` or `List[Tuple[int, int]]`, optional) — Tensor of shape `(batch_size, 2)` or list of tuples (`Tuple[int, int]`) containing the target size (height, width) of each image in the batch. If left to None, predictions will not be resized.
- top_k (`int`, optional, defaults to 100) — Keep only top k bounding boxes before filtering by thresholding.

Returns

List[Dict]

A list of dictionaries, each dictionary containing the scores, labels and boxes for an image in the batch as predicted by the model.

Converts the raw output of ConditionalDetrForObjectDetection into final bounding boxes in (top_left_x, top_left_y, bottom_right_x, bottom_right_y) format. Only supports PyTorch.

post_process_instance_segmentation

< source >( outputs threshold: float = 0.5 mask_threshold: float = 0.5 overlap_mask_area_threshold: float = 0.8 target_sizes: typing.Union[typing.List[typing.Tuple[int, int]], NoneType] = None return_coco_annotation: typing.Optional[bool] = False ) → List[Dict]

Parameters

- outputs (ConditionalDetrForSegmentation) — Raw outputs of the model.
- threshold (`float`, optional, defaults to 0.5) — The probability score threshold to keep predicted instance masks.
- mask_threshold (`float`, optional, defaults to 0.5) — Threshold to use when turning the predicted masks into binary values.
- overlap_mask_area_threshold (`float`, optional, defaults to 0.8) — The overlap mask area threshold to merge or discard small disconnected parts within each binary instance mask.
- target_sizes (`List[Tuple]`, optional) — List of length (batch_size), where each list item (`Tuple[int, int]`) corresponds to the requested final size (height, width) of each prediction. If unset, predictions will not be resized.
- return_coco_annotation (`bool`, optional, defaults to `False`) — If set to `True`, segmentation maps are returned in COCO run-length encoding (RLE) format.

Returns

List[Dict]

A list of dictionaries, one per image, each dictionary containing two keys:

- segmentation — A tensor of shape `(height, width)` where each pixel represents a `segment_id`, or a `List[List]` run-length encoding (RLE) of the segmentation map if `return_coco_annotation` is set to `True`. Set to `None` if no mask is found above `threshold`.
- segments_info — A dictionary that contains additional information on each segment.
  - id — An integer representing the `segment_id`.
  - label_id — An integer representing the label / semantic class id corresponding to `segment_id`.
  - score — Prediction score of segment with `segment_id`.

Converts the output of ConditionalDetrForSegmentation into instance segmentation predictions. Only supports PyTorch.

post_process_semantic_segmentation

< source >( outputs target_sizes: typing.List[typing.Tuple[int, int]] = None ) → List[torch.Tensor]

Parameters

- outputs (ConditionalDetrForSegmentation) — Raw outputs of the model.

- target_sizes (`List[Tuple[int, int]]`, optional) — A list of tuples (`Tuple[int, int]`) containing the target size (height, width) of each image in the batch. If unset, predictions will not be resized.

Returns

List[torch.Tensor]

A list of length batch_size, where each item is a semantic segmentation map of shape (height, width) corresponding to the target_sizes entry (if target_sizes is specified). Each entry of each torch.Tensor corresponds to a semantic class id.

Converts the output of ConditionalDetrForSegmentation into semantic segmentation maps. Only supports PyTorch.

post_process_panoptic_segmentation

< source >( outputs threshold: float = 0.5 mask_threshold: float = 0.5 overlap_mask_area_threshold: float = 0.8 label_ids_to_fuse: typing.Optional[typing.Set[int]] = None target_sizes: typing.Union[typing.List[typing.Tuple[int, int]], NoneType] = None ) → List[Dict]

Parameters

- outputs (ConditionalDetrForSegmentation) — The outputs from ConditionalDetrForSegmentation.
- threshold (`float`, optional, defaults to 0.5) — The probability score threshold to keep predicted instance masks.
- mask_threshold (`float`, optional, defaults to 0.5) — Threshold to use when turning the predicted masks into binary values.
- overlap_mask_area_threshold (`float`, optional, defaults to 0.8) — The overlap mask area threshold to merge or discard small disconnected parts within each binary instance mask.
- label_ids_to_fuse (`Set[int]`, optional) — The labels in this set will have all their instances fused together. For instance, we could say there can only be one sky in an image, but several persons, so the label ID for sky would be in that set, but not the one for person.
- target_sizes (`List[Tuple]`, optional) — List of length (batch_size), where each list item (`Tuple[int, int]`) corresponds to the requested final size (height, width) of each prediction in batch. If unset, predictions will not be resized.

Returns

List[Dict]

A list of dictionaries, one per image, each dictionary containing two keys:

- segmentation — A tensor of shape `(height, width)` where each pixel represents a `segment_id`, or `None` if no mask is found above `threshold`. If `target_sizes` is specified, segmentation is resized to the corresponding `target_sizes` entry.
- segments_info — A dictionary that contains additional information on each segment.
  - id — An integer representing the `segment_id`.
  - label_id — An integer representing the label / semantic class id corresponding to `segment_id`.
  - was_fused — A boolean, `True` if `label_id` was in `label_ids_to_fuse`, `False` otherwise. Multiple instances of the same class / label were fused and assigned a single `segment_id`.
  - score — Prediction score of segment with `segment_id`.

Converts the output of ConditionalDetrForSegmentation into image panoptic segmentation predictions. Only supports PyTorch.

ConditionalDetrModel

class transformers.ConditionalDetrModel

< source >( config: ConditionalDetrConfig )

Parameters

- config (ConditionalDetrConfig) — Model configuration class with all the parameters of the model. Initializing with a config file does not load the weights associated with the model, only the configuration. Check out the from_pretrained() method to load the model weights.

The bare Conditional DETR Model (consisting of a backbone and encoder-decoder Transformer) outputting raw hidden-states without any specific head on top.

This model inherits from PreTrainedModel. Check the superclass documentation for the generic methods the library implements for all its models (such as downloading or saving, resizing the input embeddings, pruning heads etc.)

This model is also a PyTorch torch.nn.Module subclass. Use it as a regular PyTorch Module and refer to the PyTorch documentation for all matters related to general usage and behavior.

forward

< source >( pixel_values: FloatTensor pixel_mask: typing.Optional[torch.LongTensor] = None decoder_attention_mask: typing.Optional[torch.LongTensor] = None encoder_outputs: typing.Optional[torch.FloatTensor] = None inputs_embeds: typing.Optional[torch.FloatTensor] = None decoder_inputs_embeds: typing.Optional[torch.FloatTensor] = None output_attentions: typing.Optional[bool] = None output_hidden_states: typing.Optional[bool] = None return_dict: typing.Optional[bool] = None ) → transformers.models.conditional_detr.modeling_conditional_detr.ConditionalDetrModelOutput or tuple(torch.FloatTensor)

Parameters

- pixel_values (`torch.FloatTensor` of shape `(batch_size, num_channels, height, width)`) — Pixel values. Padding will be ignored by default should you provide it. Pixel values can be obtained using AutoImageProcessor. See `ConditionalDetrImageProcessor.__call__()` for details.
- pixel_mask (`torch.LongTensor` of shape `(batch_size, height, width)`, optional) — Mask to avoid performing attention on padding pixel values. Mask values selected in `[0, 1]`:
  - 1 for pixels that are real (i.e. not masked),
  - 0 for pixels that are padding (i.e. masked).
- decoder_attention_mask (`torch.FloatTensor` of shape `(batch_size, num_queries)`, optional) — Not used by default. Can be used to mask object queries.
- encoder_outputs (`tuple(tuple(torch.FloatTensor))`, optional) — Tuple consists of (`last_hidden_state`, optional: `hidden_states`, optional: `attentions`). `last_hidden_state` of shape `(batch_size, sequence_length, hidden_size)` is a sequence of hidden-states at the output of the last layer of the encoder. Used in the cross-attention of the decoder.
- inputs_embeds (`torch.FloatTensor` of shape `(batch_size, sequence_length, hidden_size)`, optional) — Optionally, instead of passing the flattened feature map (output of the backbone + projection layer), you can choose to directly pass a flattened representation of an image.
- decoder_inputs_embeds (`torch.FloatTensor` of shape `(batch_size, num_queries, hidden_size)`, optional) — Optionally, instead of initializing the queries with a tensor of zeros, you can choose to directly pass an embedded representation.
- output_attentions (`bool`, optional) — Whether or not to return the attentions tensors of all attention layers. See `attentions` under returned tensors for more detail.
- output_hidden_states (`bool`, optional) — Whether or not to return the hidden states of all layers. See `hidden_states` under returned tensors for more detail.
- return_dict (`bool`, optional) — Whether or not to return a ModelOutput instead of a plain tuple.

Returns

transformers.models.conditional_detr.modeling_conditional_detr.ConditionalDetrModelOutput or tuple(torch.FloatTensor)

A transformers.models.conditional_detr.modeling_conditional_detr.ConditionalDetrModelOutput or a tuple of torch.FloatTensor (if return_dict=False is passed or when config.return_dict=False) comprising various elements depending on the configuration (ConditionalDetrConfig) and inputs.

- last_hidden_state (`torch.FloatTensor` of shape `(batch_size, sequence_length, hidden_size)`) — Sequence of hidden-states at the output of the last layer of the decoder of the model.
- decoder_hidden_states (`tuple(torch.FloatTensor)`, optional, returned when `output_hidden_states=True` is passed or when `config.output_hidden_states=True`) — Tuple of `torch.FloatTensor` (one for the output of the embeddings + one for the output of each layer) of shape `(batch_size, sequence_length, hidden_size)`. Hidden-states of the decoder at the output of each layer plus the initial embedding outputs.
- decoder_attentions (`tuple(torch.FloatTensor)`, optional, returned when `output_attentions=True` is passed or when `config.output_attentions=True`) — Tuple of `torch.FloatTensor` (one for each layer) of shape `(batch_size, num_heads, sequence_length, sequence_length)`. Attention weights of the decoder, after the attention softmax, used to compute the weighted average in the self-attention heads.
- cross_attentions (`tuple(torch.FloatTensor)`, optional, returned when `output_attentions=True` is passed or when `config.output_attentions=True`) — Tuple of `torch.FloatTensor` (one for each layer) of shape `(batch_size, num_heads, sequence_length, sequence_length)`. Attention weights of the decoder’s cross-attention layer, after the attention softmax, used to compute the weighted average in the cross-attention heads.
- encoder_last_hidden_state (`torch.FloatTensor` of shape `(batch_size, sequence_length, hidden_size)`, optional) — Sequence of hidden-states at the output of the last layer of the encoder of the model.
- encoder_hidden_states (`tuple(torch.FloatTensor)`, optional, returned when `output_hidden_states=True` is passed or when `config.output_hidden_states=True`) — Tuple of `torch.FloatTensor` (one for the output of the embeddings + one for the output of each layer) of shape `(batch_size, sequence_length, hidden_size)`. Hidden-states of the encoder at the output of each layer plus the initial embedding outputs.
- encoder_attentions (`tuple(torch.FloatTensor)`, optional, returned when `output_attentions=True` is passed or when `config.output_attentions=True`) — Tuple of `torch.FloatTensor` (one for each layer) of shape `(batch_size, num_heads, sequence_length, sequence_length)`. Attention weights of the encoder, after the attention softmax, used to compute the weighted average in the self-attention heads.
- intermediate_hidden_states (`torch.FloatTensor` of shape `(config.decoder_layers, batch_size, sequence_length, hidden_size)`, optional, returned when `config.auxiliary_loss=True`) — Intermediate decoder activations, i.e. the output of each decoder layer, each of them gone through a layernorm.

The ConditionalDetrModel forward method overrides the __call__ special method.

Although the recipe for the forward pass needs to be defined within this function, one should call the Module instance afterwards instead of this function, since the former takes care of running the pre- and post-processing steps while the latter silently ignores them.
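The difference between calling the instance and calling forward directly can be illustrated with a toy class. This is a simplified stand-in written for illustration, not PyTorch's actual torch.nn.Module; ToyModule and its hook list are hypothetical names.

```python
class ToyModule:
    """Simplified, hypothetical stand-in for torch.nn.Module."""

    def __init__(self):
        self._forward_hooks = []

    def register_forward_hook(self, hook):
        # Hooks stand in for the post-processing steps run around forward().
        self._forward_hooks.append(hook)

    def forward(self, x):
        # The "recipe" for the forward pass lives here.
        return x * 2

    def __call__(self, x):
        # Calling the instance runs forward() and then every registered hook.
        output = self.forward(x)
        for hook in self._forward_hooks:
            hook(self, x, output)
        return output


calls = []
module = ToyModule()
module.register_forward_hook(lambda mod, inp, out: calls.append(out))

via_call = module(3)             # hook runs, so `calls` records the output
via_forward = module.forward(3)  # hook is skipped
```

Both calls return the same value, but only the first one triggers the hook, which is why the documentation recommends calling the instance.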

Examples:

>>> from transformers import AutoImageProcessor, AutoModel

>>> from PIL import Image

>>> import requests

>>> url = "http://images.cocodataset.org/val2017/000000039769.jpg"

>>> image = Image.open(requests.get(url, stream=True).raw)

>>> image_processor = AutoImageProcessor.from_pretrained("microsoft/conditional-detr-resnet-50")

>>> model = AutoModel.from_pretrained("microsoft/conditional-detr-resnet-50")

>>> # prepare image for the model

>>> inputs = image_processor(images=image, return_tensors="pt")

>>> # forward pass

>>> outputs = model(**inputs)

>>> # the last hidden states are the final query embeddings of the Transformer decoder

>>> # these are of shape (batch_size, num_queries, hidden_size)

>>> last_hidden_states = outputs.last_hidden_state

>>> list(last_hidden_states.shape)

[1, 300, 256]

ConditionalDetrForObjectDetection

class transformers.ConditionalDetrForObjectDetection

< source >( config: ConditionalDetrConfig )

Parameters

- config (ConditionalDetrConfig) — Model configuration class with all the parameters of the model. Initializing with a config file does not load the weights associated with the model, only the configuration. Check out the from_pretrained() method to load the model weights.

Conditional DETR model (consisting of a backbone and an encoder-decoder Transformer) with object detection heads on top, for tasks such as COCO detection.

This model inherits from PreTrainedModel. Check the superclass documentation for the generic methods the library implements for all its models (such as downloading or saving, resizing the input embeddings, pruning heads etc.)

This model is also a PyTorch torch.nn.Module subclass. Use it as a regular PyTorch Module and refer to the PyTorch documentation for all matters related to general usage and behavior.

forward

< source >( pixel_values: FloatTensor pixel_mask: typing.Optional[torch.LongTensor] = None decoder_attention_mask: typing.Optional[torch.LongTensor] = None encoder_outputs: typing.Optional[torch.FloatTensor] = None inputs_embeds: typing.Optional[torch.FloatTensor] = None decoder_inputs_embeds: typing.Optional[torch.FloatTensor] = None labels: typing.Optional[typing.List[dict]] = None output_attentions: typing.Optional[bool] = None output_hidden_states: typing.Optional[bool] = None return_dict: typing.Optional[bool] = None ) → transformers.models.conditional_detr.modeling_conditional_detr.ConditionalDetrObjectDetectionOutput or tuple(torch.FloatTensor)

Parameters

- pixel_values (torch.FloatTensor of shape (batch_size, num_channels, height, width)) — Pixel values. Padding will be ignored by default should you provide it. Pixel values can be obtained using AutoImageProcessor. See ConditionalDetrImageProcessor.__call__() for details.

- pixel_mask (torch.LongTensor of shape (batch_size, height, width), optional) — Mask to avoid performing attention on padding pixel values. Mask values selected in [0, 1]:

  - 1 for pixels that are real (i.e. not masked),

  - 0 for pixels that are padding (i.e. masked).

- decoder_attention_mask (torch.FloatTensor of shape (batch_size, num_queries), optional) — Not used by default. Can be used to mask object queries.

- encoder_outputs (tuple(tuple(torch.FloatTensor)), optional) — Tuple consisting of (last_hidden_state, optional: hidden_states, optional: attentions). last_hidden_state, of shape (batch_size, sequence_length, hidden_size), is a sequence of hidden-states at the output of the last layer of the encoder. Used in the cross-attention of the decoder.

- inputs_embeds (torch.FloatTensor of shape (batch_size, sequence_length, hidden_size), optional) — Optionally, instead of passing the flattened feature map (output of the backbone + projection layer), you can choose to directly pass a flattened representation of an image.

- decoder_inputs_embeds (torch.FloatTensor of shape (batch_size, num_queries, hidden_size), optional) — Optionally, instead of initializing the queries with a tensor of zeros, you can choose to directly pass an embedded representation.

- output_attentions (bool, optional) — Whether or not to return the attention tensors of all attention layers. See attentions under returned tensors for more detail.

- output_hidden_states (bool, optional) — Whether or not to return the hidden states of all layers. See hidden_states under returned tensors for more detail.

- return_dict (bool, optional) — Whether or not to return a ModelOutput instead of a plain tuple.

- labels (List[Dict] of len (batch_size,), optional) — Labels for computing the bipartite matching loss. List of dicts, each dictionary containing at least the following 2 keys: 'class_labels' and 'boxes' (the class labels and bounding boxes of an image in the batch respectively). The class labels themselves should be a torch.LongTensor of len (number of bounding boxes in the image,) and the boxes a torch.FloatTensor of shape (number of bounding boxes in the image, 4).
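As a rough illustration of the labels structure described above, the sketch below builds one dictionary per image from pixel-space corner boxes. It assumes the target boxes use the same normalized (center_x, center_y, width, height) layout that is documented for pred_boxes; that assumption, the helper names, and the class ids are illustrative and not taken from the library. Plain lists are used to keep the sketch dependency-free; a real call would need torch.LongTensor and torch.FloatTensor values.

```python
def to_normalized_cxcywh(box_xyxy, image_width, image_height):
    """Convert a pixel (x_min, y_min, x_max, y_max) box to normalized (cx, cy, w, h)."""
    x_min, y_min, x_max, y_max = box_xyxy
    return [
        (x_min + x_max) / 2 / image_width,
        (y_min + y_max) / 2 / image_height,
        (x_max - x_min) / image_width,
        (y_max - y_min) / image_height,
    ]


def build_label(class_ids, boxes_xyxy, image_width, image_height):
    """Build one per-image label dict with the two required keys."""
    return {
        "class_labels": list(class_ids),
        "boxes": [
            to_normalized_cxcywh(box, image_width, image_height) for box in boxes_xyxy
        ],
    }


# One entry per image in the batch (here a single 640x480 image with two objects).
labels = [
    build_label(
        class_ids=[17, 75],  # illustrative class ids
        boxes_xyxy=[[10.0, 50.0, 320.0, 470.0], [40.0, 70.0, 180.0, 120.0]],
        image_width=640,
        image_height=480,
    )
]
```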

Returns

transformers.models.conditional_detr.modeling_conditional_detr.ConditionalDetrObjectDetectionOutput or tuple(torch.FloatTensor)

A transformers.models.conditional_detr.modeling_conditional_detr.ConditionalDetrObjectDetectionOutput or a tuple of torch.FloatTensor (if return_dict=False is passed or when config.return_dict=False) comprising various elements depending on the configuration (ConditionalDetrConfig) and inputs.

- loss (torch.FloatTensor of shape (1,), optional, returned when labels are provided) — Total loss as a linear combination of a negative log-likelihood (cross-entropy) for class prediction and a bounding box loss. The latter is defined as a linear combination of the L1 loss and the generalized scale-invariant IoU loss.

- loss_dict (Dict, optional) — A dictionary containing the individual losses. Useful for logging.

- logits (torch.FloatTensor of shape (batch_size, num_queries, num_classes + 1)) — Classification logits (including no-object) for all queries.

- pred_boxes (torch.FloatTensor of shape (batch_size, num_queries, 4)) — Normalized box coordinates for all queries, represented as (center_x, center_y, width, height). These values are normalized in [0, 1], relative to the size of each individual image in the batch (disregarding possible padding). You can use post_process_object_detection() to retrieve the unnormalized bounding boxes.

- auxiliary_outputs (list[Dict], optional) — Optional, only returned when auxiliary losses are activated (i.e. config.auxiliary_loss is set to True) and labels are provided. It is a list of dictionaries containing the two above keys (logits and pred_boxes) for each decoder layer.

- last_hidden_state (torch.FloatTensor of shape (batch_size, sequence_length, hidden_size), optional) — Sequence of hidden-states at the output of the last layer of the decoder of the model.

- decoder_hidden_states (tuple(torch.FloatTensor), optional, returned when output_hidden_states=True is passed or when config.output_hidden_states=True) — Tuple of torch.FloatTensor (one for the output of the embeddings + one for the output of each layer) of shape (batch_size, sequence_length, hidden_size). Hidden-states of the decoder at the output of each layer plus the initial embedding outputs.

- decoder_attentions (tuple(torch.FloatTensor), optional, returned when output_attentions=True is passed or when config.output_attentions=True) — Tuple of torch.FloatTensor (one for each layer) of shape (batch_size, num_heads, sequence_length, sequence_length). Attention weights of the decoder, after the attention softmax, used to compute the weighted average in the self-attention heads.

- cross_attentions (tuple(torch.FloatTensor), optional, returned when output_attentions=True is passed or when config.output_attentions=True) — Tuple of torch.FloatTensor (one for each layer) of shape (batch_size, num_heads, sequence_length, sequence_length). Attention weights of the decoder's cross-attention layer, after the attention softmax, used to compute the weighted average in the cross-attention heads.

- encoder_last_hidden_state (torch.FloatTensor of shape (batch_size, sequence_length, hidden_size), optional) — Sequence of hidden-states at the output of the last layer of the encoder of the model.

- encoder_hidden_states (tuple(torch.FloatTensor), optional, returned when output_hidden_states=True is passed or when config.output_hidden_states=True) — Tuple of torch.FloatTensor (one for the output of the embeddings + one for the output of each layer) of shape (batch_size, sequence_length, hidden_size). Hidden-states of the encoder at the output of each layer plus the initial embedding outputs.

- encoder_attentions (tuple(torch.FloatTensor), optional, returned when output_attentions=True is passed or when config.output_attentions=True) — Tuple of torch.FloatTensor (one for each layer) of shape (batch_size, num_heads, sequence_length, sequence_length). Attention weights of the encoder, after the attention softmax, used to compute the weighted average in the self-attention heads.
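The pred_boxes layout described above can be mapped back to pixel coordinates with a little arithmetic. The sketch below covers only that geometric step for a single box and assumes a corner-format result (x_min, y_min, x_max, y_max); it is not the library's post_process_object_detection(), which also handles scoring and filtering. The function name is hypothetical.

```python
def cxcywh_to_xyxy(box, image_width, image_height):
    """Scale a normalized (cx, cy, w, h) box to pixel (x_min, y_min, x_max, y_max)."""
    cx, cy, w, h = box
    return [
        (cx - w / 2) * image_width,
        (cy - h / 2) * image_height,
        (cx + w / 2) * image_width,
        (cy + h / 2) * image_height,
    ]


# A box centered in a 640x480 image, a quarter as wide and half as tall as the image.
corners = cxcywh_to_xyxy([0.5, 0.5, 0.25, 0.5], image_width=640, image_height=480)
```

Because the values are normalized relative to each image's own size, the width and height passed in should be those of the unpadded image.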

The ConditionalDetrForObjectDetection forward method overrides the __call__ special method.

Although the recipe for the forward pass needs to be defined within this function, one should call the Module instance afterwards instead of this function, since the former takes care of running the pre- and post-processing steps while the latter silently ignores them.

Examples:

>>> import torch

>>> from transformers import AutoImageProcessor, AutoModelForObjectDetection

>>> from PIL import Image

>>> import requests

>>> url = "http://images.cocodataset.org/val2017/000000039769.jpg"

>>> image = Image.open(requests.get(url, stream=True).raw)

>>> image_processor = AutoImageProcessor.from_pretrained("microsoft/conditional-detr-resnet-50")

>>> model = AutoModelForObjectDetection.from_pretrained("microsoft/conditional-detr-resnet-50")

>>> inputs = image_processor(images=image, return_tensors="pt")

>>> outputs = model(**inputs)

>>> # convert outputs (bounding boxes and class logits) to COCO API

>>> target_sizes = torch.tensor([image.size[::-1]])

>>> results = image_processor.post_process_object_detection(outputs, threshold=0.5, target_sizes=target_sizes)[

...     0

... ]

>>> for score, label, box in zip(results["scores"], results["labels"], results["boxes"]):

...     box = [round(i, 2) for i in box.tolist()]

...     print(

...         f"Detected {model.config.id2label[label.item()]} with confidence "

...         f"{round(score.item(), 3)} at location {box}"

...     )

Detected remote with confidence 0.833 at location [38.31, 72.1, 177.63, 118.45]

Detected cat with confidence 0.831 at location [9.2, 51.38, 321.13, 469.0]

Detected cat with confidence 0.804 at location [340.3, 16.85, 642.93, 370.95]

Detected remote with confidence 0.683 at location [334.48, 73.49, 366.37, 190.01]

Detected couch with confidence 0.535 at location [0.52, 1.19, 640.35, 475.1]

ConditionalDetrForSegmentation

class transformers.ConditionalDetrForSegmentation

< source >( config: ConditionalDetrConfig )

Parameters

- config (ConditionalDetrConfig) — Model configuration class with all the parameters of the model. Initializing with a config file does not load the weights associated with the model, only the configuration. Check out the from_pretrained() method to load the model weights.

Conditional DETR model (consisting of a backbone and an encoder-decoder Transformer) with a segmentation head on top, for tasks such as COCO panoptic.

This model inherits from PreTrainedModel. Check the superclass documentation for the generic methods the library implements for all its models (such as downloading or saving, resizing the input embeddings, pruning heads etc.)

This model is also a PyTorch torch.nn.Module subclass. Use it as a regular PyTorch Module and refer to the PyTorch documentation for all matters related to general usage and behavior.

forward

< source >( pixel_values: FloatTensor pixel_mask: typing.Optional[torch.LongTensor] = None decoder_attention_mask: typing.Optional[torch.FloatTensor] = None encoder_outputs: typing.Optional[torch.FloatTensor] = None inputs_embeds: typing.Optional[torch.FloatTensor] = None decoder_inputs_embeds: typing.Optional[torch.FloatTensor] = None labels: typing.Optional[typing.List[dict]] = None output_attentions: typing.Optional[bool] = None output_hidden_states: typing.Optional[bool] = None return_dict: typing.Optional[bool] = None ) → transformers.models.conditional_detr.modeling_conditional_detr.ConditionalDetrSegmentationOutput or tuple(torch.FloatTensor)

Parameters

- pixel_values (torch.FloatTensor of shape (batch_size, num_channels, height, width)) — Pixel values. Padding will be ignored by default should you provide it. Pixel values can be obtained using AutoImageProcessor. See ConditionalDetrImageProcessor.__call__() for details.

- pixel_mask (torch.LongTensor of shape (batch_size, height, width), optional) — Mask to avoid performing attention on padding pixel values. Mask values selected in [0, 1]:

  - 1 for pixels that are real (i.e. not masked),

  - 0 for pixels that are padding (i.e. masked).

- decoder_attention_mask (torch.FloatTensor of shape (batch_size, num_queries), optional) — Not used by default. Can be used to mask object queries.

- encoder_outputs (tuple(tuple(torch.FloatTensor)), optional) — Tuple consisting of (last_hidden_state, optional: hidden_states, optional: attentions). last_hidden_state, of shape (batch_size, sequence_length, hidden_size), is a sequence of hidden-states at the output of the last layer of the encoder. Used in the cross-attention of the decoder.

- inputs_embeds (torch.FloatTensor of shape (batch_size, sequence_length, hidden_size), optional) — Optionally, instead of passing the flattened feature map (output of the backbone + projection layer), you can choose to directly pass a flattened representation of an image.

- decoder_inputs_embeds (torch.FloatTensor of shape (batch_size, num_queries, hidden_size), optional) — Optionally, instead of initializing the queries with a tensor of zeros, you can choose to directly pass an embedded representation.

- output_attentions (bool, optional) — Whether or not to return the attention tensors of all attention layers. See attentions under returned tensors for more detail.

- output_hidden_states (bool, optional) — Whether or not to return the hidden states of all layers. See hidden_states under returned tensors for more detail.

- return_dict (bool, optional) — Whether or not to return a ModelOutput instead of a plain tuple.

- labels (List[Dict] of len (batch_size,), optional) — Labels for computing the bipartite matching loss, DICE/F-1 loss and Focal loss. List of dicts, each dictionary containing at least the following 3 keys: 'class_labels', 'boxes' and 'masks' (the class labels, bounding boxes and segmentation masks of an image in the batch respectively). The class labels themselves should be a torch.LongTensor of len (number of bounding boxes in the image,), the boxes a torch.FloatTensor of shape (number of bounding boxes in the image, 4) and the masks a torch.FloatTensor of shape (number of bounding boxes in the image, height, width).
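To make the pixel_mask convention above concrete, the sketch below builds masks for a batch of differently sized images padded to a common size. It assumes padding is added at the bottom and right of each image; that placement and the function name are assumptions for illustration. Nested lists stand in for the torch.LongTensor of shape (batch_size, height, width) that the model expects.

```python
def build_pixel_masks(image_sizes):
    """Return one mask per image: 1 for real pixels, 0 for padding.

    image_sizes is a list of (height, width) pairs. Every mask has the
    batch's maximum height and width.
    """
    max_height = max(height for height, _ in image_sizes)
    max_width = max(width for _, width in image_sizes)
    masks = []
    for height, width in image_sizes:
        mask = [
            [1 if (row < height and col < width) else 0 for col in range(max_width)]
            for row in range(max_height)
        ]
        masks.append(mask)
    return masks


# Two tiny images: 2x3 and 3x2 (height x width), both padded to 3x3.
masks = build_pixel_masks([(2, 3), (3, 2)])
```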

Returns

transformers.models.conditional_detr.modeling_conditional_detr.ConditionalDetrSegmentationOutput or tuple(torch.FloatTensor)

A transformers.models.conditional_detr.modeling_conditional_detr.ConditionalDetrSegmentationOutput or a tuple of torch.FloatTensor (if return_dict=False is passed or when config.return_dict=False) comprising various elements depending on the configuration (ConditionalDetrConfig) and inputs.

- loss (torch.FloatTensor of shape (1,), optional, returned when labels are provided) — Total loss as a linear combination of a negative log-likelihood (cross-entropy) for class prediction and a bounding box loss. The latter is defined as a linear combination of the L1 loss and the generalized scale-invariant IoU loss.

- loss_dict (Dict, optional) — A dictionary containing the individual losses. Useful for logging.

- logits (torch.FloatTensor of shape (batch_size, num_queries, num_classes + 1)) — Classification logits (including no-object) for all queries.

- pred_boxes (torch.FloatTensor of shape (batch_size, num_queries, 4)) — Normalized box coordinates for all queries, represented as (center_x, center_y, width, height). These values are normalized in [0, 1], relative to the size of each individual image in the batch (disregarding possible padding). You can use post_process_object_detection() to retrieve the unnormalized bounding boxes.

- pred_masks (torch.FloatTensor of shape (batch_size, num_queries, height/4, width/4)) — Segmentation mask logits for all queries. See also post_process_semantic_segmentation(), post_process_instance_segmentation() or post_process_panoptic_segmentation() to evaluate semantic, instance and panoptic segmentation masks respectively.

- auxiliary_outputs (list[Dict], optional) — Optional, only returned when auxiliary losses are activated (i.e. config.auxiliary_loss is set to True) and labels are provided. It is a list of dictionaries containing the two above keys (logits and pred_boxes) for each decoder layer.

- last_hidden_state (torch.FloatTensor of shape (batch_size, sequence_length, hidden_size), optional) — Sequence of hidden-states at the output of the last layer of the decoder of the model.

- decoder_hidden_states (tuple(torch.FloatTensor), optional, returned when output_hidden_states=True is passed or when config.output_hidden_states=True) — Tuple of torch.FloatTensor (one for the output of the embeddings + one for the output of each layer) of shape (batch_size, sequence_length, hidden_size). Hidden-states of the decoder at the output of each layer plus the initial embedding outputs.

- decoder_attentions (tuple(torch.FloatTensor), optional, returned when output_attentions=True is passed or when config.output_attentions=True) — Tuple of torch.FloatTensor (one for each layer) of shape (batch_size, num_heads, sequence_length, sequence_length). Attention weights of the decoder, after the attention softmax, used to compute the weighted average in the self-attention heads.

- cross_attentions (tuple(torch.FloatTensor), optional, returned when output_attentions=True is passed or when config.output_attentions=True) — Tuple of torch.FloatTensor (one for each layer) of shape (batch_size, num_heads, sequence_length, sequence_length). Attention weights of the decoder's cross-attention layer, after the attention softmax, used to compute the weighted average in the cross-attention heads.

- encoder_last_hidden_state (torch.FloatTensor of shape (batch_size, sequence_length, hidden_size), optional) — Sequence of hidden-states at the output of the last layer of the encoder of the model.

- encoder_hidden_states (tuple(torch.FloatTensor), optional, returned when output_hidden_states=True is passed or when config.output_hidden_states=True) — Tuple of torch.FloatTensor (one for the output of the embeddings + one for the output of each layer) of shape (batch_size, sequence_length, hidden_size). Hidden-states of the encoder at the output of each layer plus the initial embedding outputs.

- encoder_attentions (tuple(torch.FloatTensor), optional, returned when output_attentions=True is passed or when config.output_attentions=True) — Tuple of torch.FloatTensor (one for each layer) of shape (batch_size, num_heads, sequence_length, sequence_length). Attention weights of the encoder, after the attention softmax, used to compute the weighted average in the self-attention heads.
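The bounding box part of the loss described above (a linear combination of the L1 loss and the generalized IoU loss) can be sketched for a single matched pair of boxes. The weights 5 and 2 mirror the bbox_loss_coefficient and giou_loss_coefficient defaults shown in the ConditionalDetrConfig signature. Everything else is a simplification for illustration: the function names are hypothetical, and the classification term, the matching between predictions and targets, and averaging over boxes are all left out.

```python
def cxcywh_to_xyxy(box):
    """Convert (cx, cy, w, h) to (x_min, y_min, x_max, y_max)."""
    cx, cy, w, h = box
    return [cx - w / 2, cy - h / 2, cx + w / 2, cy + h / 2]


def generalized_iou(a, b):
    """Generalized IoU of two corner-format boxes with positive area."""
    area_a = (a[2] - a[0]) * (a[3] - a[1])
    area_b = (b[2] - b[0]) * (b[3] - b[1])
    inter_w = max(0.0, min(a[2], b[2]) - max(a[0], b[0]))
    inter_h = max(0.0, min(a[3], b[3]) - max(a[1], b[1]))
    intersection = inter_w * inter_h
    union = area_a + area_b - intersection
    # Smallest axis-aligned box that encloses both inputs.
    enclosing = (max(a[2], b[2]) - min(a[0], b[0])) * (max(a[3], b[3]) - min(a[1], b[1]))
    return intersection / union - (enclosing - union) / enclosing


def box_loss(pred, target, bbox_coefficient=5.0, giou_coefficient=2.0):
    """Weighted sum of L1 and GIoU losses for one pair of (cx, cy, w, h) boxes."""
    l1 = sum(abs(p - t) for p, t in zip(pred, target))
    giou_loss = 1.0 - generalized_iou(cxcywh_to_xyxy(pred), cxcywh_to_xyxy(target))
    return bbox_coefficient * l1 + giou_coefficient * giou_loss


identical = box_loss([0.5, 0.5, 0.4, 0.4], [0.5, 0.5, 0.4, 0.4])
disjoint = box_loss([0.25, 0.25, 0.5, 0.5], [0.75, 0.75, 0.5, 0.5])
```

For identical boxes both terms vanish. For the two disjoint boxes the IoU is zero, so the GIoU term is driven entirely by the empty space inside the enclosing box, which still gives a useful gradient signal when boxes do not overlap.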

The ConditionalDetrForSegmentation forward method overrides the __call__ special method.

Although the recipe for the forward pass needs to be defined within this function, one should call the Module instance afterwards instead of this function, since the former takes care of running the pre- and post-processing steps while the latter silently ignores them.

Examples:

>>> import io

>>> import requests

>>> from PIL import Image

>>> import torch

>>> import numpy

>>> from transformers import (

...     AutoImageProcessor,

...     ConditionalDetrConfig,

...     ConditionalDetrForSegmentation,

... )

>>> from transformers.image_transforms import rgb_to_id

>>> url = "http://images.cocodataset.org/val2017/000000039769.jpg"

>>> image = Image.open(requests.get(url, stream=True).raw)

>>> image_processor = AutoImageProcessor.from_pretrained("microsoft/conditional-detr-resnet-50")

>>> # randomly initialize all weights of the model

>>> config = ConditionalDetrConfig()

>>> model = ConditionalDetrForSegmentation(config)

>>> # prepare image for the model

>>> inputs = image_processor(images=image, return_tensors="pt")

>>> # forward pass

>>> outputs = model(**inputs)

>>> # Use the `post_process_panoptic_segmentation` method of the `image_processor` to retrieve post-processed panoptic segmentation maps

>>> # Segmentation results are returned as a list of dictionaries

>>> result = image_processor.post_process_panoptic_segmentation(outputs, target_sizes=[(300, 500)])

>>> # A tensor of shape (height, width) where each value denotes a segment id, filled with -1 if no segment is found

>>> panoptic_seg = result[0]["segmentation"]

>>> # Get prediction score and segment_id to class_id mapping of each segment

>>> panoptic_segments_info = result[0]["segments_info"]
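One way to inspect the two results above is to count how many pixels each segment covers. The sketch below works on plain nested lists (for a tensor, `panoptic_seg.tolist()` would produce them) and assumes each segments_info entry carries "id" and "label_id" keys; those key names, the sample values, and the helper name are assumptions for illustration rather than confirmed output of the library.

```python
from collections import Counter


def summarize_segments(segmentation, segments_info):
    """Count pixels per segment id and report pixels with no segment (-1)."""
    pixel_counts = Counter(value for row in segmentation for value in row)
    summary = [
        {
            "id": info["id"],
            "label_id": info["label_id"],
            "pixels": pixel_counts.get(info["id"], 0),
        }
        for info in segments_info
    ]
    unassigned = pixel_counts.get(-1, 0)
    return summary, unassigned


# Hypothetical 2x3 segmentation map with two segments and one unassigned pixel.
segmentation = [[1, 1, 2], [1, -1, 2]]
segments_info = [
    {"id": 1, "label_id": 17, "score": 0.9},
    {"id": 2, "label_id": 63, "score": 0.8},
]
summary, unassigned = summarize_segments(segmentation, segments_info)
```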