Optimum Inference with ONNX Runtime

Optimum is a utility package for building and running inference with accelerated runtimes such as ONNX Runtime. You can use Optimum to load optimized models from the Hugging Face Hub and create pipelines that run accelerated inference without rewriting your APIs.

Switching from Transformers to Optimum

The optimum.onnxruntime.ORTModelForXXX model classes are API-compatible with Hugging Face Transformers models. This means you can just replace your AutoModelForXXX class with the corresponding ORTModelForXXX class in optimum.onnxruntime.

You do not need to adapt your code to get it to work with ORTModelForXXX classes:

from transformers import AutoTokenizer, pipeline

-from transformers import AutoModelForQuestionAnswering

+from optimum.onnxruntime import ORTModelForQuestionAnswering

-model = AutoModelForQuestionAnswering.from_pretrained("deepset/roberta-base-squad2") # PyTorch checkpoint

+model = ORTModelForQuestionAnswering.from_pretrained("optimum/roberta-base-squad2") # ONNX checkpoint

tokenizer = AutoTokenizer.from_pretrained("deepset/roberta-base-squad2")

onnx_qa = pipeline("question-answering", model=model, tokenizer=tokenizer)

question = "What's my name?"

context = "My name is Philipp and I live in Nuremberg."

pred = onnx_qa(question, context)

Loading a vanilla Transformers model

Because the model you want to work with might not already be converted to ONNX, ORTModel includes a method to convert vanilla Transformers models to ONNX. Simply pass export=True to the from_pretrained() method, and your model will be loaded and converted to ONNX on the fly:

>>> from optimum.onnxruntime import ORTModelForSequenceClassification

>>> # Load the model from the hub and export it to the ONNX format

>>> model = ORTModelForSequenceClassification.from_pretrained(

... "distilbert-base-uncased-finetuned-sst-2-english", export=True

... )

Pushing ONNX models to the Hugging Face Hub

It is also possible, just as with regular PreTrainedModels, to push your ORTModelForXXX to the Hugging Face Model Hub:

>>> from optimum.onnxruntime import ORTModelForSequenceClassification

>>> # Load the model from the hub and export it to the ONNX format

>>> model = ORTModelForSequenceClassification.from_pretrained(

... "distilbert-base-uncased-finetuned-sst-2-english", export=True

... )

>>> # Save the converted model

>>> model.save_pretrained("a_local_path_for_convert_onnx_model")

>>> # Push the ONNX model to the Hugging Face Hub

>>> model.push_to_hub(

... "a_local_path_for_convert_onnx_model", repository_id="my-onnx-repo", use_auth_token=True

... )

Export and inference of sequence-to-sequence models

Sequence-to-sequence (Seq2Seq) models can also be used when running inference with ONNX Runtime. When Seq2Seq models are exported to the ONNX format, they are decomposed into three parts that are later combined during inference:

- The encoder part of the model

- The decoder part of the model + the language modeling head

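- The same decoder part of the model + language modeling head, but taking pre-computed key / values as inputs and returning updated ones as outputs. Reusing them avoids recomputing keys and values for tokens that were already decoded, which makes inference faster.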
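To see why reusing pre-computed key / values helps, here is a toy sketch in plain Python. It only counts how many per-token key/value projections a decoder would perform with and without a cache. The function names (`project_kv`, `decode_without_cache`, `decode_with_cache`) are hypothetical and this is not how Optimum or ONNX Runtime implement decoding; it is an illustration of the idea under simplified assumptions.

```python
# Toy illustration only: counts key/value projections during decoding.
# All names here are hypothetical; this is not Optimum's implementation.


def project_kv(token):
    """Stand-in for the per-token key/value projection."""
    return (f"k({token})", f"v({token})")


def decode_without_cache(tokens):
    """Recompute keys/values for the whole prefix at every decoding step."""
    projections = 0
    for step in range(1, len(tokens) + 1):
        # Every step re-projects all tokens generated so far.
        _ = [project_kv(t) for t in tokens[:step]]
        projections += step
    return projections


def decode_with_cache(tokens):
    """Project only the newest token and reuse the cached keys/values."""
    cache = []
    projections = 0
    for token in tokens:
        cache.append(project_kv(token))  # one new projection per step
        projections += 1
    return projections, cache


tokens = ["Il", "n'", "est", "jamais", "sorti"]
without_cache = decode_without_cache(tokens)
with_cache, cache = decode_with_cache(tokens)
print(without_cache, with_cache)  # 15 5
```

For a sequence of n tokens the uncached loop performs n(n+1)/2 projections, while the cached loop performs n, which is the saving the third exported component is designed to capture.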

Here is an example of how you can export a T5 model to the ONNX format and run inference for a translation task:

>>> from transformers import AutoTokenizer, pipeline

>>> from optimum.onnxruntime import ORTModelForSeq2SeqLM

>>> # Load the model from the hub and export it to the ONNX format

>>> model_name = "t5-small"

>>> model = ORTModelForSeq2SeqLM.from_pretrained(model_name, export=True)

>>> tokenizer = AutoTokenizer.from_pretrained(model_name)

>>> # Create a pipeline

>>> onnx_translation = pipeline("translation_en_to_fr", model=model, tokenizer=tokenizer)

>>> text = "He never went out without a book under his arm, and he often came back with two."

>>> result = onnx_translation(text)

>>> # [{'translation_text': "Il n'est jamais sorti sans un livre sous son bras, et il est souvent revenu avec deux."}]

Export and inference of Stable Diffusion models

Stable Diffusion models can also be used when running inference with ONNX Runtime. When Stable Diffusion models are exported to the ONNX format, they are split into three components that are later combined during inference:

- The text encoder

- The U-Net

- The VAE decoder

Make sure you have 🤗 Diffusers installed.
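If you are unsure whether Diffusers is available in your environment, a small check like the following can help. It is a minimal sketch using only the Python standard library; the helper name `is_installed` is hypothetical and not part of Optimum or Diffusers.

```python
import importlib.util


def is_installed(package_name):
    """Return True if a top-level package can be found on the import path."""
    return importlib.util.find_spec(package_name) is not None


# Print a hint instead of failing later with an ImportError.
if not is_installed("diffusers"):
    print("Diffusers is not installed; run: pip install diffusers")
```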

To install diffusers:

pip install diffusers

Here is an example of how you can load an ONNX Stable Diffusion model and run inference using ONNX Runtime:

from optimum.onnxruntime import ORTStableDiffusionPipeline

model_id = "runwayml/stable-diffusion-v1-5"

stable_diffusion = ORTStableDiffusionPipeline.from_pretrained(model_id, revision="onnx")

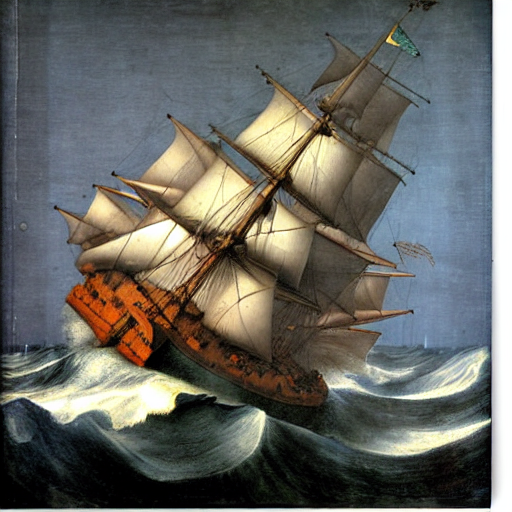

prompt = "sailing ship in storm by Leonardo da Vinci"

image = stable_diffusion(prompt).images[0]

To load your PyTorch model and convert it to ONNX on-the-fly, you can set export=True.

stable_diffusion = ORTStableDiffusionPipeline.from_pretrained(model_id, export=True)

# Don't forget to save the ONNX model

save_directory = "a_local_path"

stable_diffusion.save_pretrained(save_directory)
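After saving, it can be useful to confirm that the directory actually contains ONNX files. The sketch below uses only the standard library to list every `.onnx` file under a directory. The helper name `list_onnx_files` is hypothetical, and the directory layout created in the demo is made up for illustration; it does not claim to match the exact files that save_pretrained() writes.

```python
import tempfile
from pathlib import Path


def list_onnx_files(save_directory):
    """Return the sorted relative paths of all .onnx files under a directory."""
    root = Path(save_directory)
    return sorted(p.relative_to(root).as_posix() for p in root.rglob("*.onnx"))


# Demo on a throwaway directory; this layout is hypothetical.
with tempfile.TemporaryDirectory() as tmp:
    (Path(tmp) / "unet").mkdir()
    (Path(tmp) / "unet" / "model.onnx").touch()
    (Path(tmp) / "model_index.json").touch()
    found = list_onnx_files(tmp)
    print(found)  # ['unet/model.onnx']
```

In practice you would call `list_onnx_files(save_directory)` on the path passed to save_pretrained() and check that the result is not empty.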