dwu

committed on

Commit

·

a1722e4

1

Parent(s):

e2be89a

initial commit

- .gitattributes +1 -0

- README.md +127 -0

- config.json +23 -0

- generation_config.json +7 -0

- huggingface-metadata.txt +6 -0

- quantize_config.json +5 -0

- special_tokens_map.json +24 -0

- tokenizer.model +3 -0

- tokenizer_config.json +34 -0

- trainer_state.json +0 -0

- wizard-vicuna-13B-GPTQ-8bit-128g.no-act-order.safetensors +3 -0

.gitattributes

CHANGED

|

@@ -32,3 +32,4 @@ saved_model/**/* filter=lfs diff=lfs merge=lfs -text

|

|

| 32 |

*.zip filter=lfs diff=lfs merge=lfs -text

|

| 33 |

*.zst filter=lfs diff=lfs merge=lfs -text

|

| 34 |

*tfevents* filter=lfs diff=lfs merge=lfs -text

|

| 35 |

+

wizard-vicuna-13B-GPTQ-8bit-128g.no-act-order.safetensors filter=lfs diff=lfs merge=lfs -text

|

README.md

ADDED

|

@@ -0,0 +1,127 @@

|

| 1 |

+

---

|

| 2 |

+

language:

|

| 3 |

+

- en

|

| 4 |

+

tags:

|

| 5 |

+

- causal-lm

|

| 6 |

+

- llama

|

| 7 |

+

inference: false

|

| 8 |

+

---

|

| 9 |

+

|

| 10 |

+

# Wizard-Vicuna-13B-GPTQ-8bit-128g

|

| 11 |

+

|

| 12 |

+

This repository contains 8-bit quantized models in GPTQ format of [TheBloke's wizard-vicuna 13B in FP16 HF format](https://huggingface.co/TheBloke/wizard-vicuna-13B-HF).

|

| 13 |

+

|

| 14 |

+

These models are the result of quantization to 8-bit using [GPTQ-for-LLaMa](https://github.com/qwopqwop200/GPTQ-for-LLaMa).

|

| 15 |

+

|

| 16 |

+

While most metrics suggest that 8-bit is only marginally better than 4-bit, I have found that the 8-bit model follows instructions significantly better. The responses from the 8-bit model feel very close to the quality of GPT-3, whereas the 4-bit model lacks some "intelligence".

|

| 17 |

+

|

| 18 |

+

With this quantized model, I can replace GPT-3 for most of my work. However, a drawback is that it requires approximately 15GB of VRAM, so you need a GPU with at least 16GB of VRAM.

|

| 19 |

+

|

| 20 |

+

|

| 21 |

+

The content below is a straight copy and paste from TheBloke's README, with the 4-bit content changed to 8-bit and references updated to point to this model.

|

| 22 |

+

|

| 23 |

+

|

| 24 |

+

## How to easily download and use this model in text-generation-webui

|

| 25 |

+

|

| 26 |

+

Open the text-generation-webui UI as normal.

|

| 27 |

+

|

| 28 |

+

1. Click the **Model tab**.

|

| 29 |

+

2. Under **Download custom model or LoRA**, enter `deetungsten/wizard-vicuna-13B-GPTQ-8bit-128g`.

|

| 30 |

+

3. Click **Download**.

|

| 31 |

+

4. Wait until it says it's finished downloading.

|

| 32 |

+

5. Click the **Refresh** icon next to **Model** in the top left.

|

| 33 |

+

6. In the **Model drop-down**: choose the model you just downloaded, `wizard-vicuna-13B-GPTQ-8bit-128g`.

|

| 34 |

+

7. If you see an error in the bottom right, ignore it - it's temporary.

|

| 35 |

+

8. Fill out the `GPTQ parameters` on the right: `Bits = 8`, `Groupsize = 128`, `model_type = Llama`

|

| 36 |

+

9. Click **Save settings for this model** in the top right.

|

| 37 |

+

10. Click **Reload the Model** in the top right.

|

| 38 |

+

11. Once it says it's loaded, click the **Text Generation tab** and enter a prompt!

|

| 39 |

+

|

| 40 |

+

## Provided files

|

| 41 |

+

|

| 42 |

+

**Compatible file - wizard-vicuna-13B-GPTQ-8bit-128g.no-act-order.safetensors**

|

| 43 |

+

|

| 44 |

+

In the `main` branch - the default one - you will find `wizard-vicuna-13B-GPTQ-8bit-128g.no-act-order.safetensors`

|

| 45 |

+

|

| 46 |

+

This will work with all versions of GPTQ-for-LLaMa. It has maximum compatibility.

|

| 47 |

+

|

| 48 |

+

It was created without the `--act-order` parameter. It may have slightly lower inference quality than a file created with `--act-order`, but is guaranteed to work on all versions of GPTQ-for-LLaMa and text-generation-webui.

|

| 49 |

+

|

| 50 |

+

* `wizard-vicuna-13B-GPTQ-8bit-128g.no-act-order.safetensors`

|

| 51 |

+

* Works with all versions of GPTQ-for-LLaMa code, both Triton and CUDA branches

|

| 52 |

+

* Works with text-generation-webui one-click-installers

|

| 53 |

+

* Parameters: Groupsize = 128. No act-order.

|

| 54 |

+

* Command used to create the GPTQ:

|

| 55 |

+

```

|

| 56 |

+

CUDA_VISIBLE_DEVICES=0 python3 llama.py wizard-vicuna-13B-HF c4 --wbits 8 --true-sequential --groupsize 128 --save_safetensors wizard-vicuna-13B-GPTQ-8bit.compat.no-act-order.safetensors

|

| 57 |

+

```

|

| 58 |

+

|

| 59 |

+

# Original WizardVicuna-13B model card

|

| 60 |

+

|

| 61 |

+

Github page: https://github.com/melodysdreamj/WizardVicunaLM

|

| 62 |

+

|

| 63 |

+

# WizardVicunaLM

|

| 64 |

+

### Wizard's dataset + ChatGPT's conversation extension + Vicuna's tuning method

|

| 65 |

+

I am a big fan of the ideas behind WizardLM and VicunaLM. I particularly like the idea of WizardLM handling the dataset itself more deeply and broadly, as well as VicunaLM overcoming the limitations of single-turn conversations by introducing multi-round conversations. As a result, I combined these two ideas to create WizardVicunaLM. This project is highly experimental and designed for proof of concept, not for actual usage.

|

| 66 |

+

|

| 67 |

+

|

| 68 |

+

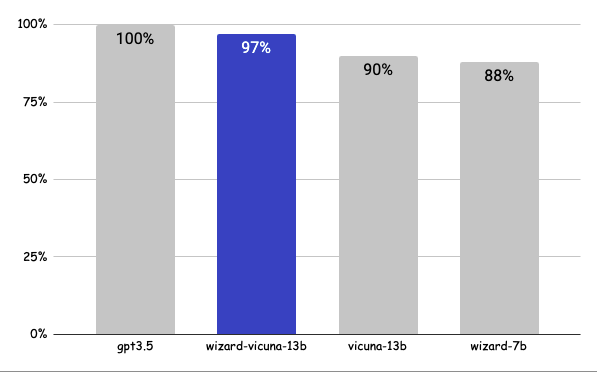

## Benchmark

|

| 69 |

+

### Approximately 7% performance improvement over VicunaLM

|

| 70 |

+

|

| 71 |

+

|

| 72 |

+

|

| 73 |

+

### Detail

|

| 74 |

+

|

| 75 |

+

The scores presented here do not come from rigorous tests; I asked a few questions and requested GPT-4 to score the answers. The models compared were ChatGPT 3.5, WizardVicunaLM, VicunaLM, and WizardLM, in that order.

|

| 76 |

+

|

| 77 |

+

| Question | gpt3.5 | wizard-vicuna-13b | vicuna-13b | wizard-7b | link |

|

| 78 |

+

|-----|--------|-------------------|------------|-----------|----------|

|

| 79 |

+

| Q1 | 95 | 90 | 85 | 88 | [link](https://sharegpt.com/c/YdhIlby) |

|

| 80 |

+

| Q2 | 95 | 97 | 90 | 89 | [link](https://sharegpt.com/c/YOqOV4g) |

|

| 81 |

+

| Q3 | 85 | 90 | 80 | 65 | [link](https://sharegpt.com/c/uDmrcL9) |

|

| 82 |

+

| Q4 | 90 | 85 | 80 | 75 | [link](https://sharegpt.com/c/XBbK5MZ) |

|

| 83 |

+

| Q5 | 90 | 85 | 80 | 75 | [link](https://sharegpt.com/c/AQ5tgQX) |

|

| 84 |

+

| Q6 | 92 | 85 | 87 | 88 | [link](https://sharegpt.com/c/eVYwfIr) |

|

| 85 |

+

| Q7 | 95 | 90 | 85 | 92 | [link](https://sharegpt.com/c/Kqyeub4) |

|

| 86 |

+

| Q8 | 90 | 85 | 75 | 70 | [link](https://sharegpt.com/c/M0gIjMF) |

|

| 87 |

+

| Q9 | 92 | 85 | 70 | 60 | [link](https://sharegpt.com/c/fOvMtQt) |

|

| 88 |

+

| Q10 | 90 | 80 | 75 | 85 | [link](https://sharegpt.com/c/YYiCaUz) |

|

| 89 |

+

| Q11 | 90 | 85 | 75 | 65 | [link](https://sharegpt.com/c/HMkKKGU) |

|

| 90 |

+

| Q12 | 85 | 90 | 80 | 88 | [link](https://sharegpt.com/c/XbW6jgB) |

|

| 91 |

+

| Q13 | 90 | 95 | 88 | 85 | [link](https://sharegpt.com/c/JXZb7y6) |

|

| 92 |

+

| Q14 | 94 | 89 | 90 | 91 | [link](https://sharegpt.com/c/cTXH4IS) |

|

| 93 |

+

| Q15 | 90 | 85 | 88 | 87 | [link](https://sharegpt.com/c/GZiM0Yt) |

|

| 94 |

+

| Average | 91 | 88 | 82 | 80 | |
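
As a quick arithmetic check on the table above, the following sketch recomputes the final row and the "approximately 7%" figure from the per-question scores. The scores are copied from the table; the variable names are illustrative, and the assumption is that the last row is a plain per-model mean rounded to the nearest integer.

```python
from statistics import mean

# Per-question scores (Q1..Q15) copied from the benchmark table.
scores = {
    "gpt3.5": [95, 95, 85, 90, 90, 92, 95, 90, 92, 90, 90, 85, 90, 94, 90],
    "wizard-vicuna-13b": [90, 97, 90, 85, 85, 85, 90, 85, 85, 80, 85, 90, 95, 89, 85],
    "vicuna-13b": [85, 90, 80, 80, 80, 87, 85, 75, 70, 75, 75, 80, 88, 90, 88],
    "wizard-7b": [88, 89, 65, 75, 75, 88, 92, 70, 60, 85, 65, 88, 85, 91, 87],
}

# Mean score per model (assumed to be what the unlabeled last row reports).
averages = {name: mean(values) for name, values in scores.items()}

# Relative improvement of wizard-vicuna-13b over vicuna-13b, in percent.
improvement_pct = (averages["wizard-vicuna-13b"] / averages["vicuna-13b"] - 1) * 100

for name, avg in averages.items():
    print(f"{name}: {avg:.2f}")
print(f"wizard-vicuna-13b vs vicuna-13b: +{improvement_pct:.1f}%")
```

With only 15 questions and a single GPT-4 grading pass, the means are best read as a rough indication rather than a measured benchmark.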

|

| 95 |

+

|

| 96 |

+

|

| 97 |

+

## Principle

|

| 98 |

+

|

| 99 |

+

We adopted the approach of WizardLM, which is to extend a single problem in greater depth. However, instead of using individual instructions, we expanded it using Vicuna's conversation format and applied Vicuna's fine-tuning techniques.

|

| 100 |

+

|

| 101 |

+

Turning a single command into a rich conversation is what we've done [here](https://sharegpt.com/c/6cmxqq0).

|

| 102 |

+

|

| 103 |

+

After creating the training data, I trained the model according to the Vicuna v1.1 [training method](https://github.com/lm-sys/FastChat/blob/main/scripts/train_vicuna_13b.sh).

|

| 104 |

+

|

| 105 |

+

|

| 106 |

+

## Detailed Method

|

| 107 |

+

|

| 108 |

+

First, we explored and expanded various areas within the same topic, starting from the 7K conversations created by WizardLM. However, we produced them in a continuous conversation format instead of the instruction format. That is, each conversation starts with WizardLM's instruction and then expands into various areas within one conversation using ChatGPT 3.5.

|

| 109 |

+

|

| 110 |

+

After that, we applied the following model using Vicuna's fine-tuning format.

|

| 111 |

+

|

| 112 |

+

## Training Process

|

| 113 |

+

|

| 114 |

+

Trained with 8 A100 GPUs for 35 hours.

|

| 115 |

+

|

| 116 |

+

## Weights

|

| 117 |

+

You can see the [dataset](https://huggingface.co/datasets/junelee/wizard_vicuna_70k) we used for training and the [13b model](https://huggingface.co/junelee/wizard-vicuna-13b) on Hugging Face.

|

| 118 |

+

|

| 119 |

+

## Conclusion

|

| 120 |

+

If we extend the conversations using GPT-4 32K, we can expect a dramatic improvement, since we could generate 8x more conversations that are also more accurate and richer.

|

| 121 |

+

|

| 122 |

+

## License

|

| 123 |

+

The model is licensed under the LLaMA model license, and the dataset is licensed under the terms of OpenAI because it uses ChatGPT. Everything else is free.

|

| 124 |

+

|

| 125 |

+

## Author

|

| 126 |

+

|

| 127 |

+

[JUNE LEE](https://github.com/melodysdreamj) - He is active in Songdo Artificial Intelligence Study and GDG Songdo.

|

config.json

ADDED

|

@@ -0,0 +1,23 @@

|

| 1 |

+

{

|

| 2 |

+

"_name_or_path": "wizard_vicuna_13b_600_step",

|

| 3 |

+

"architectures": [

|

| 4 |

+

"LlamaForCausalLM"

|

| 5 |

+

],

|

| 6 |

+

"bos_token_id": 1,

|

| 7 |

+

"eos_token_id": 2,

|

| 8 |

+

"hidden_act": "silu",

|

| 9 |

+

"hidden_size": 5120,

|

| 10 |

+

"initializer_range": 0.02,

|

| 11 |

+

"intermediate_size": 13824,

|

| 12 |

+

"max_position_embeddings": 2048,

|

| 13 |

+

"model_type": "llama",

|

| 14 |

+

"num_attention_heads": 40,

|

| 15 |

+

"num_hidden_layers": 40,

|

| 16 |

+

"pad_token_id": 0,

|

| 17 |

+

"rms_norm_eps": 1e-06,

|

| 18 |

+

"tie_word_embeddings": false,

|

| 19 |

+

"torch_dtype": "float32",

|

| 20 |

+

"transformers_version": "4.28.1",

|

| 21 |

+

"use_cache": true,

|

| 22 |

+

"vocab_size": 32000

|

| 23 |

+

}

|
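
The dimensions in this config are enough for a rough parameter count, which in turn gives a lower bound on the memory needed for the weights. The sketch below assumes the standard LLaMA layer layout used by `LlamaForCausalLM` (four attention projections, three MLP projections, two RMSNorm vectors per layer, no biases). The helper names are hypothetical, and the memory figure treats every weight as stored at the given bit width, ignoring GPTQ group scales and zero points, any layers left unquantized, activations, and the KV cache.

```python
# Values copied from the config.json diff above.
config = {
    "hidden_size": 5120,
    "intermediate_size": 13824,
    "num_hidden_layers": 40,
    "vocab_size": 32000,
    "tie_word_embeddings": False,
}


def llama_param_count(cfg):
    """Count weights for a LLaMA-style decoder (assumed layout, no biases)."""
    h = cfg["hidden_size"]
    attention = 4 * h * h                    # q, k, v, o projections
    mlp = 3 * h * cfg["intermediate_size"]   # gate, up, down projections
    norms = 2 * h                            # two RMSNorm weight vectors per layer
    per_layer = attention + mlp + norms
    embeddings = cfg["vocab_size"] * h
    # The output head is a separate matrix when embeddings are not tied.
    lm_head = 0 if cfg["tie_word_embeddings"] else cfg["vocab_size"] * h
    final_norm = h
    return cfg["num_hidden_layers"] * per_layer + embeddings + lm_head + final_norm


def weight_gib(params, bits_per_weight):
    """Size of the weights alone, in GiB, at a uniform bit width."""
    return params * bits_per_weight / 8 / 2**30


params = llama_param_count(config)
print(f"parameters: {params:,}")
print(f"weights at 8 bits: {weight_gib(params, 8):.2f} GiB")
print(f"weights at 16 bits: {weight_gib(params, 16):.2f} GiB")
```

The gap between this weights-only estimate and the roughly 15GB of VRAM reported in the README would be taken up by the overheads the sketch leaves out.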

generation_config.json

ADDED

|

@@ -0,0 +1,7 @@

|

| 1 |

+

{

|

| 2 |

+

"_from_model_config": true,

|

| 3 |

+

"bos_token_id": 1,

|

| 4 |

+

"eos_token_id": 2,

|

| 5 |

+

"pad_token_id": 0,

|

| 6 |

+

"transformers_version": "4.28.1"

|

| 7 |

+

}

|

huggingface-metadata.txt

ADDED

|

@@ -0,0 +1,6 @@

|

| 1 |

+

url: https://huggingface.co/TheBloke/wizard-vicuna-13B-GPTQ

|

| 2 |

+

branch: main

|

| 3 |

+

download date: 2023-05-11 17:51:27

|

| 4 |

+

sha256sum:

|

| 5 |

+

9e556afd44213b6bd1be2b850ebbbd98f5481437a8021afaf58ee7fb1818d347 tokenizer.model

|

| 6 |

+

f73b241fd29129c0f6f6024719fd22eeb1d0cac0dceba2c7151bd70b5e654640 wizard-vicuna-13B-GPTQ-4bit.compat.no-act-order.safetensors

|

quantize_config.json

ADDED

|

@@ -0,0 +1,5 @@

|

| 1 |

+

{

|

| 2 |

+

"bits": 4,

|

| 3 |

+

"desc_act": false,

|

| 4 |

+

"group_size": 128

|

| 5 |

+

}

|
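
The committed `quantize_config.json` declares `"bits": 4`, while the weights file name and the README describe an 8-bit quantization with group size 128. Whether any loader actually reads this file is not stated in the commit, so the following is only a sketch of a consistency check. The function names and the `<N>bit-<G>g` file-name convention it parses are assumptions for illustration.

```python
import re


def parse_quant_from_name(filename):
    """Extract bits and group size from a name like '...-8bit-128g...' (assumed convention)."""
    match = re.search(r"(\d+)bit-(\d+)g", filename)
    if match is None:
        return None
    return {"bits": int(match.group(1)), "group_size": int(match.group(2))}


def find_mismatches(filename, quantize_config):
    """Return {key: (value_from_name, value_from_config)} for every disagreement."""
    expected = parse_quant_from_name(filename)
    if expected is None:
        return {}
    return {
        key: (value, quantize_config.get(key))
        for key, value in expected.items()
        if quantize_config.get(key) != value
    }


# Values copied from the quantize_config.json diff above.
committed_config = {"bits": 4, "desc_act": False, "group_size": 128}
weights_file = "wizard-vicuna-13B-GPTQ-8bit-128g.no-act-order.safetensors"

print(find_mismatches(weights_file, committed_config))
```

If a loader does take its settings from this file, the `bits` value is the field to compare against the `Bits = 8` setting given in the README.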

special_tokens_map.json

ADDED

|

@@ -0,0 +1,24 @@

|

| 1 |

+

{

|

| 2 |

+

"bos_token": {

|

| 3 |

+

"content": "<s>",

|

| 4 |

+

"lstrip": false,

|

| 5 |

+

"normalized": true,

|

| 6 |

+

"rstrip": false,

|

| 7 |

+

"single_word": false

|

| 8 |

+

},

|

| 9 |

+

"eos_token": {

|

| 10 |

+

"content": "</s>",

|

| 11 |

+

"lstrip": false,

|

| 12 |

+

"normalized": true,

|

| 13 |

+

"rstrip": false,

|

| 14 |

+

"single_word": false

|

| 15 |

+

},

|

| 16 |

+

"pad_token": "<unk>",

|

| 17 |

+

"unk_token": {

|

| 18 |

+

"content": "<unk>",

|

| 19 |

+

"lstrip": false,

|

| 20 |

+

"normalized": true,

|

| 21 |

+

"rstrip": false,

|

| 22 |

+

"single_word": false

|

| 23 |

+

}

|

| 24 |

+

}

|

tokenizer.model

ADDED

|

@@ -0,0 +1,3 @@

|

| 1 |

+

version https://git-lfs.github.com/spec/v1

|

| 2 |

+

oid sha256:9e556afd44213b6bd1be2b850ebbbd98f5481437a8021afaf58ee7fb1818d347

|

| 3 |

+

size 499723

|

tokenizer_config.json

ADDED

|

@@ -0,0 +1,34 @@

|

| 1 |

+

{

|

| 2 |

+

"add_bos_token": true,

|

| 3 |

+

"add_eos_token": false,

|

| 4 |

+

"bos_token": {

|

| 5 |

+

"__type": "AddedToken",

|

| 6 |

+

"content": "<s>",

|

| 7 |

+

"lstrip": false,

|

| 8 |

+

"normalized": true,

|

| 9 |

+

"rstrip": false,

|

| 10 |

+

"single_word": false

|

| 11 |

+

},

|

| 12 |

+

"clean_up_tokenization_spaces": false,

|

| 13 |

+

"eos_token": {

|

| 14 |

+

"__type": "AddedToken",

|

| 15 |

+

"content": "</s>",

|

| 16 |

+

"lstrip": false,

|

| 17 |

+

"normalized": true,

|

| 18 |

+

"rstrip": false,

|

| 19 |

+

"single_word": false

|

| 20 |

+

},

|

| 21 |

+

"model_max_length": 2048,

|

| 22 |

+

"pad_token": null,

|

| 23 |

+

"padding_side": "right",

|

| 24 |

+

"sp_model_kwargs": {},

|

| 25 |

+

"tokenizer_class": "LlamaTokenizer",

|

| 26 |

+

"unk_token": {

|

| 27 |

+

"__type": "AddedToken",

|

| 28 |

+

"content": "<unk>",

|

| 29 |

+

"lstrip": false,

|

| 30 |

+

"normalized": true,

|

| 31 |

+

"rstrip": false,

|

| 32 |

+

"single_word": false

|

| 33 |

+

}

|

| 34 |

+

}

|

trainer_state.json

ADDED

|

The diff for this file is too large to render.

See raw diff

|

|

|

wizard-vicuna-13B-GPTQ-8bit-128g.no-act-order.safetensors

ADDED

|

@@ -0,0 +1,3 @@

|

| 1 |

+

version https://git-lfs.github.com/spec/v1

|

| 2 |

+

oid sha256:4a9931a35d05c1846f6caac74c0a8c65620a7fd7dcd3697e6563de085c2ca8cb

|

| 3 |

+

size 13648607292

|
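
The three lines committed for the weights file are a Git LFS pointer: they record the hash algorithm, digest, and byte size of the real file stored elsewhere. A minimal sketch of how a downloaded file could be checked against such a pointer follows. The function names are hypothetical, and the pointer text is copied from the diff above.

```python
import hashlib
import os

# Pointer contents copied from the diff above.
POINTER_TEXT = """version https://git-lfs.github.com/spec/v1
oid sha256:4a9931a35d05c1846f6caac74c0a8c65620a7fd7dcd3697e6563de085c2ca8cb
size 13648607292
"""


def parse_lfs_pointer(text):
    """Split each 'key value' line of a pointer file into a small dict."""
    fields = dict(line.split(" ", 1) for line in text.strip().splitlines())
    algo, digest = fields["oid"].split(":", 1)
    return {
        "version": fields["version"],
        "algo": algo,
        "digest": digest,
        "size": int(fields["size"]),
    }


def matches_pointer(path, pointer, chunk_size=1 << 20):
    """Check a local file's size first, then its digest, against the pointer."""
    if os.path.getsize(path) != pointer["size"]:
        return False
    hasher = hashlib.new(pointer["algo"])
    with open(path, "rb") as handle:
        # Read in chunks so a multi-gigabyte file is never held in memory.
        for chunk in iter(lambda: handle.read(chunk_size), b""):
            hasher.update(chunk)
    return hasher.hexdigest() == pointer["digest"]


pointer = parse_lfs_pointer(POINTER_TEXT)
print(f"{pointer['size'] / 2**30:.2f} GiB expected, {pointer['algo']} {pointer['digest'][:12]}...")
```

Calling `matches_pointer(path_to_downloaded_file, pointer)` on the downloaded `.safetensors` file would then confirm that the download is complete and unmodified.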