---
language:
- en
tags:
- summarization
datasets:
- ccdv/WCEP-10
metrics:
- rouge
model-index:
- name: ccdv/lsg-bart-base-4096-wcep
  results: []
---

This model relies on a custom modeling file, so you need to pass trust_remote_code=True when loading it.

See #13467

from transformers import AutoTokenizer, AutoModelForSeq2SeqLM, pipeline

tokenizer = AutoTokenizer.from_pretrained("ccdv/lsg-bart-base-4096-wcep", trust_remote_code=True)

model = AutoModelForSeq2SeqLM.from_pretrained("ccdv/lsg-bart-base-4096-wcep", trust_remote_code=True)

text = "Replace by what you want."

pipe = pipeline("text2text-generation", model=model, tokenizer=tokenizer, device=0)

generated_text = pipe(text, truncation=True, max_length=64, no_repeat_ngram_size=7)

ccdv/lsg-bart-base-4096-wcep

This model is a fine-tuned version of ccdv/lsg-bart-base-4096 on the roberta configuration of the ccdv/WCEP-10 dataset.

It achieves the following results on the test set:

| Length | Sparse Type | Block Size | Sparsity | Connections | R1 | R2 | RL | RLsum |
|---|---|---|---|---|---|---|---|---|
| 4096 | Local | 256 | 0 | 768 | 46.02 | 24.23 | 37.38 | 38.72 |
| 4096 | Local | 128 | 0 | 384 | 45.43 | 23.86 | 36.94 | 38.30 |
| 4096 | Pooling | 128 | 4 | 644 | 45.36 | 23.61 | 36.75 | 38.06 |
| 4096 | Stride | 128 | 4 | 644 | 45.87 | 24.31 | 37.41 | 38.70 |
| 4096 | Block Stride | 128 | 4 | 644 | 45.78 | 24.16 | 37.20 | 38.48 |
| 4096 | Norm | 128 | 4 | 644 | 45.34 | 23.39 | 36.47 | 37.78 |
| 4096 | LSH | 128 | 4 | 644 | 45.15 | 23.53 | 36.74 | 38.02 |

With a smaller block size (lower resource usage):

| Length | Sparse Type | Block Size | Sparsity | Connections | R1 | R2 | RL | RLsum |
|---|---|---|---|---|---|---|---|---|
| 4096 | Local | 64 | 0 | 192 | 44.48 | 22.98 | 36.20 | 37.52 |
| 4096 | Local | 32 | 0 | 96 | 43.60 | 22.17 | 35.61 | 36.66 |
| 4096 | Pooling | 32 | 4 | 160 | 43.91 | 22.41 | 35.80 | 36.92 |
| 4096 | Stride | 32 | 4 | 160 | 44.62 | 23.11 | 36.32 | 37.53 |
| 4096 | Block Stride | 32 | 4 | 160 | 44.47 | 23.02 | 36.28 | 37.46 |
| 4096 | Norm | 32 | 4 | 160 | 44.45 | 23.03 | 36.10 | 37.33 |
| 4096 | LSH | 32 | 4 | 160 | 43.87 | 22.50 | 35.75 | 36.93 |
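A quick sanity check on the connections column: in every "Local" row of the two tables, the reported value equals three times the block size. The sketch below only verifies that pattern against the reported numbers. The helper name `local_connections` is hypothetical, and the rule is an observation inferred from the tables, not the library's own formula. It does not cover the sparse rows.

```python
# (block_size, reported_connections) for the "Local" rows of both tables.
LOCAL_ROWS = [(256, 768), (128, 384), (64, 192), (32, 96)]


def local_connections(block_size: int) -> int:
    """Assumed rule, inferred from the tables: 3 x block size."""
    return 3 * block_size


# Every reported Local row matches the assumed rule.
for block_size, reported in LOCAL_ROWS:
    assert local_connections(block_size) == reported
```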

Model description

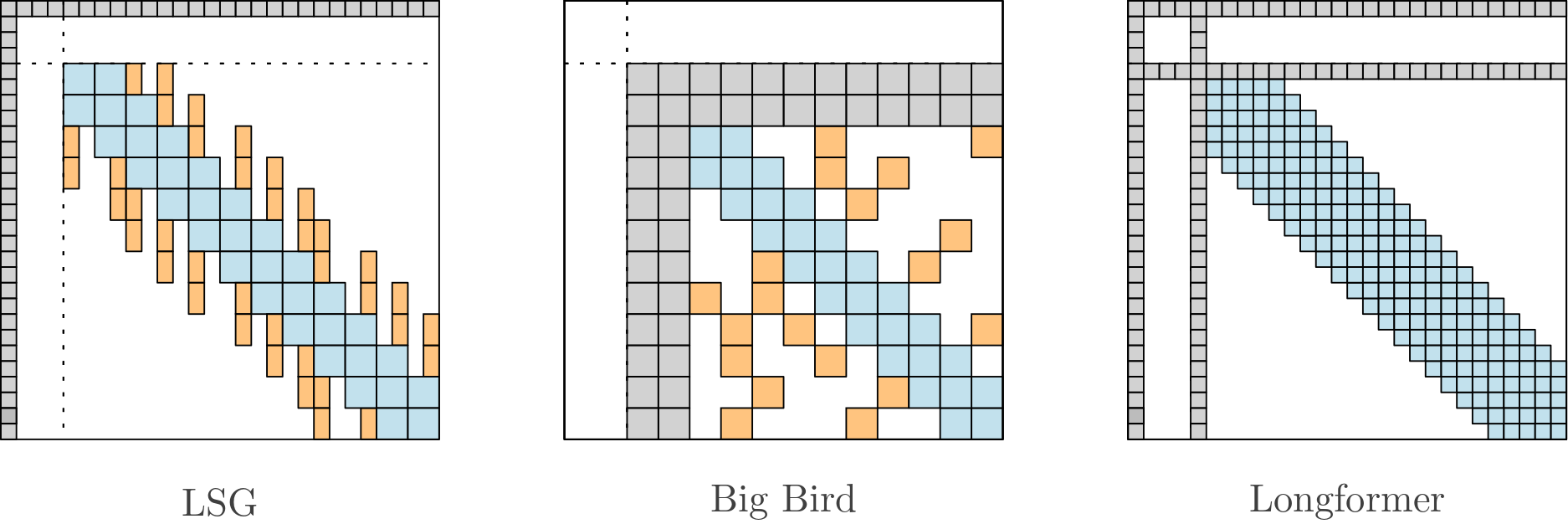

The model relies on Local-Sparse-Global attention to handle long sequences:

The model has about 145 million parameters (6 encoder layers and 6 decoder layers).

The model is warm-started from BART-base, converted to handle long sequences (encoder only), and fine-tuned.

Intended uses & limitations

More information needed

Training and evaluation data

More information needed

Training procedure

Training hyperparameters

The following hyperparameters were used during training:

- learning_rate: 8e-05

- train_batch_size: 8

- seed: 42

- gradient_accumulation_steps: 4

- total_train_batch_size: 32

- optimizer: Adam with betas=(0.9,0.999) and epsilon=1e-08

- lr_scheduler_type: linear

- lr_scheduler_warmup_ratio: 0.1

- num_epochs: 10.0
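To make the list above concrete, here is a minimal plain-Python sketch of how the effective batch size and the linear schedule with warmup fit together. It assumes a single device (so 8 x 4 = 32 matches total_train_batch_size) and a hypothetical `total_steps` value, since the number of optimizer steps is not stated here. The function names are illustrative and not part of any library.

```python
import math

PEAK_LR = 8e-05          # learning_rate
WARMUP_RATIO = 0.1       # lr_scheduler_warmup_ratio
TRAIN_BATCH_SIZE = 8     # train_batch_size
GRAD_ACCUM_STEPS = 4     # gradient_accumulation_steps


def effective_batch_size(per_device=TRAIN_BATCH_SIZE,
                         accum=GRAD_ACCUM_STEPS,
                         num_devices=1):
    """Samples per optimizer step (num_devices=1 is an assumption)."""
    return per_device * accum * num_devices


def linear_lr(step, total_steps, peak_lr=PEAK_LR, warmup_ratio=WARMUP_RATIO):
    """Linear warmup to peak_lr, then linear decay to zero."""
    warmup_steps = math.ceil(total_steps * warmup_ratio)
    if step < warmup_steps:
        return peak_lr * step / max(1, warmup_steps)
    remaining = max(0, total_steps - step)
    return peak_lr * remaining / max(1, total_steps - warmup_steps)


if __name__ == "__main__":
    total_steps = 1000  # hypothetical; depends on dataset size and epochs
    print(effective_batch_size())
    for step in (0, 50, 100, 550, 1000):
        print(step, linear_lr(step, total_steps))
```

With these settings the learning rate rises during the first 10% of steps, peaks at 8e-05, and then decays linearly to zero by the last step.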

Generation hyperparameters

The following hyperparameters were used during generation:

- dataset_name: ccdv/WCEP-10

- dataset_config_name: roberta

- eval_batch_size: 8

- eval_samples: 1022

- early_stopping: True

- ignore_pad_token_for_loss: True

- length_penalty: 2.0

- max_length: 64

- min_length: 0

- num_beams: 5

- no_repeat_ngram_size: None

- seed: 123
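The decoding-related settings above can be collected into keyword arguments and passed to the same kind of pipeline call shown in the usage snippet. This is a sketch under assumptions: `EVAL_GENERATION_KWARGS` and `summarize` are hypothetical names, and `pipe` is expected to be a text2text-generation pipeline built as in the usage snippet. Entries set to None are dropped so the model's default applies. Note that the usage snippet sets no_repeat_ngram_size=7, whereas the evaluation listed here left it unset.

```python
# Decoding settings copied from the list above.
EVAL_GENERATION_KWARGS = {
    "num_beams": 5,
    "max_length": 64,
    "min_length": 0,
    "length_penalty": 2.0,
    "early_stopping": True,
    "no_repeat_ngram_size": None,  # unset during evaluation
}


def summarize(pipe, text):
    """Call `pipe` on `text` with the evaluation decoding settings.

    `pipe` is any callable with the pipeline's call signature; None-valued
    settings are omitted so the model's defaults are used for them.
    """
    kwargs = {k: v for k, v in EVAL_GENERATION_KWARGS.items() if v is not None}
    return pipe(text, truncation=True, **kwargs)
```

For example, `summarize(pipe, text)` with the `pipe` and `text` objects from the usage snippet would run beam search with 5 beams and a maximum output length of 64 tokens.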

Framework versions

- Transformers 4.18.0

- PyTorch 1.10.1+cu102

- Datasets 2.1.0

- Tokenizers 0.11.6