---
license: openrail
---

# PMC_LLaMA

To build a foundation model for the medical field, we propose [MedLLaMA_13B](https://huggingface.co/chaoyi-wu/MedLLaMA_13B) and PMC_LLaMA_13B.

MedLLaMA_13B is initialized from LLaMA-13B and further pretrained on a medical corpus. Despite the expert knowledge it gains, it lacks instruction-following ability. We therefore construct an instruction-tuning dataset, tune MedLLaMA_13B on it to obtain PMC_LLaMA_13B, and evaluate the tuned model.

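This card does not describe the format of the instruction-tuning data. Purely to illustrate what an instruction-tuning example contains, the sketch below assumes a hypothetical record with `instruction`, `input`, and `output` fields, loosely modeled on the Alpaca layout, and renders it into a (prompt, target) pair for supervised fine-tuning. The field names, the template wording, and the `build_example` helper are all assumptions, not the dataset actually used for PMC_LLaMA_13B.

```python
from dataclasses import dataclass


# Hypothetical record layout (Alpaca-style); the real PMC_LLaMA
# instruction data may use different fields and wording.
@dataclass
class InstructionRecord:
    instruction: str
    input: str
    output: str


# Hypothetical prompt template, not taken from this model card.
TEMPLATE = (
    "Below is an instruction that describes a task.\n\n"
    "### Instruction:\n{instruction}\n\n"
    "{context}"
    "### Response:\n"
)


def build_example(record: InstructionRecord) -> tuple[str, str]:
    """Render a record into (prompt, target) strings for supervised fine-tuning.

    In instruction tuning, the loss is often computed only on the target tokens.
    """
    # Only include an Input section when the record actually has one.
    context = f"### Input:\n{record.input}\n\n" if record.input else ""
    prompt = TEMPLATE.format(instruction=record.instruction, context=context)
    return prompt, record.output


rec = InstructionRecord(
    instruction="Answer the medical question.",
    input="Which vitamin deficiency causes scurvy?",
    output="Vitamin C deficiency.",
)
prompt, target = build_example(rec)
print(prompt + target)
```

The model sees `prompt` as context and learns to produce `target`; records without extra input simply omit the Input section.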

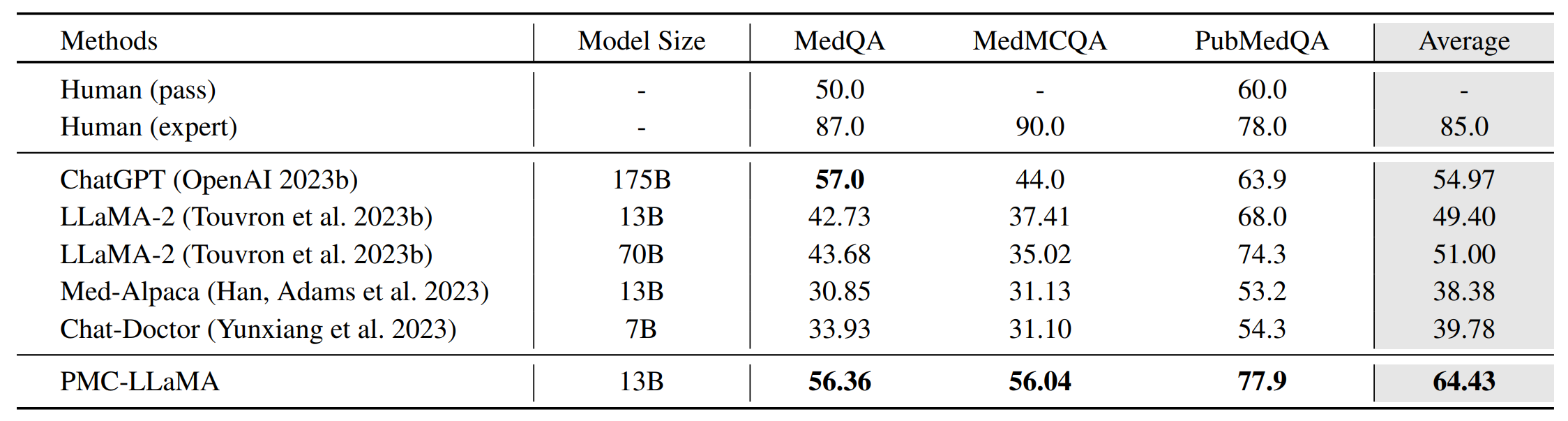

As shown in the table, PMC_LLaMA_13B achieves comparable results to ChatGPT on medical QA benchmarks.

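The card does not state how benchmark answers were scored. For multiple-choice medical QA, one common approach is to extract the option letter from each generated answer and compare it with the gold label, reporting accuracy. The sketch below assumes options labeled A to E and a simple regex heuristic; `extract_choice` and `accuracy` are hypothetical helpers, not the evaluation code behind the table.

```python
import re
from typing import Optional

# Heuristic (an assumption): take the first standalone capital letter A-E.
# It can misfire, e.g. on a sentence that starts with the article "A".
_CHOICE = re.compile(r"\b([A-E])\b")


def extract_choice(generated: str) -> Optional[str]:
    """Return the first option letter found in the model output, or None."""
    match = _CHOICE.search(generated)
    return match.group(1) if match else None


def accuracy(predictions: list[str], gold: list[str]) -> float:
    """Fraction of items whose extracted letter matches the gold letter."""
    if len(predictions) != len(gold):
        raise ValueError("predictions and gold must have the same length")
    if not gold:
        return 0.0
    correct = sum(extract_choice(p) == g for p, g in zip(predictions, gold))
    return correct / len(gold)


preds = ["The answer is B.", "C", "I think (A) is right", "No idea"]
print(accuracy(preds, ["B", "C", "D", "A"]))  # 0.5
```

Unparseable outputs (here "No idea") count as wrong, which keeps the metric conservative.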

## Usage

```python
import transformers
import torch

# Load the tokenizer and model weights from the Hugging Face Hub
tokenizer = transformers.LlamaTokenizer.from_pretrained('axiong/PMC_LLaMA_13B')
model = transformers.LlamaForCausalLM.from_pretrained('axiong/PMC_LLaMA_13B')

sentence = 'Hello, doctor'
# Tokenize the prompt without adding special tokens such as BOS
batch = tokenizer(
    sentence,
    return_tensors="pt",
    add_special_tokens=False
)
# Sample a continuation; gradients are not needed for inference
with torch.no_grad():
    generated = model.generate(
        inputs=batch["input_ids"],
        max_length=200,  # total length in tokens, including the prompt
        do_sample=True,
        top_k=50
    )
print('model predict: ', tokenizer.decode(generated[0]))
```