language:

- en

tags:

- causal-lm

- llama

inference: false

Wizard-Vicuna-13B-GGML

These are 4bit and 5bit GGML-format quantised models of junelee's wizard-vicuna 13B.

It is the result of quantising to 4bit and 5bit GGML for CPU inference using llama.cpp.

Repositories available

- 4bit GPTQ models for GPU inference.

- 4bit and 5bit GGML models for CPU inference.

- float16 HF format model for GPU inference.

REQUIRES LATEST LLAMA.CPP (May 12th 2023 - commit b9fd7ee)!

llama.cpp recently made a breaking change to its quantisation methods.

I have re-quantised the GGML files in this repo. Therefore you will require llama.cpp compiled on May 12th or later (commit b9fd7ee or later) to use them.

The previous files, which will still work in older versions of llama.cpp, can be found in branch previous_llama.

Provided files

| Name | Quant method | Bits | Size | RAM required | Use case |
|---|---|---|---|---|---|
| wizard-vicuna-13B.ggml.q4_0.bin | q4_0 | 4bit | 8.14GB | 10.5GB | 4-bit. |
| wizard-vicuna-13B.ggml.q5_0.bin | q5_0 | 5bit | 8.95GB | 11.0GB | 5-bit. Higher accuracy, higher resource usage and slower inference. |
| wizard-vicuna-13B.ggml.q5_1.bin | q5_1 | 5bit | 9.76GB | 12.25GB | 5-bit. Even higher accuracy, and higher resource usage and slower inference. |
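If you are unsure which file to download, the "RAM required" column can drive the choice. The following is a minimal sketch, not part of this repo: the figures are copied from the table above, while the `pick_file` helper and its rule (take the most demanding file that still fits) are assumptions made for illustration.

```python
# RAM figures copied from the "Provided files" table.
# The selection rule below is an assumption, not an official recommendation.
PROVIDED_FILES = [
    # (file name, quant method, RAM required in GB)
    ("wizard-vicuna-13B.ggml.q4_0.bin", "q4_0", 10.5),
    ("wizard-vicuna-13B.ggml.q5_0.bin", "q5_0", 11.0),
    ("wizard-vicuna-13B.ggml.q5_1.bin", "q5_1", 12.25),
]


def pick_file(available_ram_gb):
    """Return the most demanding file whose RAM requirement fits, or None."""
    fitting = [f for f in PROVIDED_FILES if f[2] <= available_ram_gb]
    if not fitting:
        return None
    return max(fitting, key=lambda f: f[2])


for ram in (8, 11, 16):
    choice = pick_file(ram)
    print(ram, "GB ->", choice[0] if choice else "no file fits")
```

Leave some headroom for the operating system and other programs when you pass in your available RAM.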

How to run in llama.cpp

I use the following command line; adjust for your tastes and needs:

./main -t 18 -m wizard-vicuna-13B.ggml.q4_0.bin --color -c 2048 --temp 0.7 --repeat_penalty 1.1 -n -1 -p "### Instruction: write a story about llamas ### Response:"

Change -t 18 to the number of physical CPU cores you have. For example, if your system has 8 cores/16 threads, use -t 8.
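If you launch llama.cpp from a script, the same command can be assembled programmatically. This is a rough sketch only: `build_llama_command` is a hypothetical helper, the flags are exactly those in the command line above, and the physical core count is passed in by hand because Python's standard library reports logical CPUs rather than physical cores.

```python
import shlex


def build_llama_command(model_file, physical_cores, instruction):
    """Build the ./main argument list shown above for a given machine and prompt."""
    if physical_cores < 1:
        raise ValueError("physical_cores must be at least 1")
    # Prompt template taken from the example command line.
    prompt = f"### Instruction: {instruction} ### Response:"
    return [
        "./main",
        "-t", str(physical_cores),  # physical cores, not hardware threads
        "-m", model_file,
        "--color",
        "-c", "2048",
        "--temp", "0.7",
        "--repeat_penalty", "1.1",
        "-n", "-1",
        "-p", prompt,
    ]


args = build_llama_command(
    "wizard-vicuna-13B.ggml.q4_0.bin", 8, "write a story about llamas"
)
# Print a shell-quoted version that can be pasted into a terminal.
print(shlex.join(args))
```

The list can also be handed to `subprocess.run(args)` from the llama.cpp directory, which avoids shell quoting issues in the prompt.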

How to run in text-generation-webui

GGML models can be loaded into text-generation-webui by installing the llama.cpp module, then placing the GGML model file in a model folder as usual.

Further instructions here: text-generation-webui/docs/llama.cpp-models.md.

Note: at this time text-generation-webui may not support the new May 12th llama.cpp quantisation methods.

Thireus has written a great guide on how to update it to the latest llama.cpp code, which may help you get text-generation-webui working with the new llama.cpp quantisation methods sooner.

Original WizardVicuna-13B model card

Github page: https://github.com/melodysdreamj/WizardVicunaLM

WizardVicunaLM

Wizard's dataset + ChatGPT's conversation extension + Vicuna's tuning method

I am a big fan of the ideas behind WizardLM and VicunaLM. I particularly like the idea of WizardLM handling the dataset itself more deeply and broadly, as well as VicunaLM overcoming the limitations of single-turn conversations by introducing multi-round conversations. As a result, I combined these two ideas to create WizardVicunaLM. This project is highly experimental and designed for proof of concept, not for actual usage.

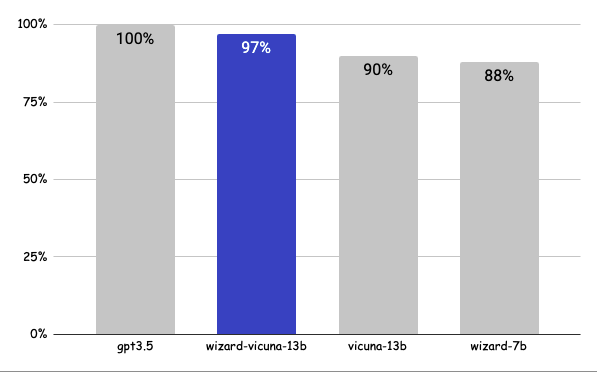

Benchmark

Approximately 7% performance improvement over VicunaLM

Detail

The results presented here are not from rigorous tests; rather, I asked a few questions and had GPT-4 score the answers. The models compared were ChatGPT 3.5, WizardVicunaLM, VicunaLM, and WizardLM, in that order.

| | gpt3.5 | wizard-vicuna-13b | vicuna-13b | wizard-7b | link |
|---|---|---|---|---|---|
| Q1 | 95 | 90 | 85 | 88 | link |
| Q2 | 95 | 97 | 90 | 89 | link |
| Q3 | 85 | 90 | 80 | 65 | link |
| Q4 | 90 | 85 | 80 | 75 | link |
| Q5 | 90 | 85 | 80 | 75 | link |
| Q6 | 92 | 85 | 87 | 88 | link |
| Q7 | 95 | 90 | 85 | 92 | link |
| Q8 | 90 | 85 | 75 | 70 | link |
| Q9 | 92 | 85 | 70 | 60 | link |
| Q10 | 90 | 80 | 75 | 85 | link |
| Q11 | 90 | 85 | 75 | 65 | link |
| Q12 | 85 | 90 | 80 | 88 | link |
| Q13 | 90 | 95 | 88 | 85 | link |
| Q14 | 94 | 89 | 90 | 91 | link |
| Q15 | 90 | 85 | 88 | 87 | link |
| Average | 91 | 88 | 82 | 80 | |
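The final row and the "approximately 7%" figure can be reproduced from the per-question scores. The snippet below is only a sanity check: the numbers are copied from the table, and reading the final row as a per-model mean is an assumption that the arithmetic happens to support.

```python
from statistics import mean

# Per-question scores (Q1..Q15) copied from the benchmark table.
SCORES = {
    "gpt3.5":            [95, 95, 85, 90, 90, 92, 95, 90, 92, 90, 90, 85, 90, 94, 90],
    "wizard-vicuna-13b": [90, 97, 90, 85, 85, 85, 90, 85, 85, 80, 85, 90, 95, 89, 85],
    "vicuna-13b":        [85, 90, 80, 80, 80, 87, 85, 75, 70, 75, 75, 80, 88, 90, 88],
    "wizard-7b":         [88, 89, 65, 75, 75, 88, 92, 70, 60, 85, 65, 88, 85, 91, 87],
}

# Mean score per model; these round to the values in the table's last row.
averages = {name: mean(vals) for name, vals in SCORES.items()}

# Relative improvement of wizard-vicuna-13b over vicuna-13b.
improvement = averages["wizard-vicuna-13b"] / averages["vicuna-13b"] - 1

for name, avg in averages.items():
    print(f"{name}: {avg:.1f}")
print(f"improvement over vicuna-13b: {improvement:.1%}")
```

The unrounded means give an improvement of about 7.2%, in line with the headline figure.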

Principle

We adopted the approach of WizardLM, which is to explore a single problem in more depth. However, instead of using individual instructions, we expanded each one using Vicuna's conversation format and applied Vicuna's fine-tuning techniques.

Turning a single command into a rich conversation is what we've done here.

After creating the training data, I trained the model according to the Vicuna v1.1 training method.

Detailed Method

First, we explored and expanded various areas within the same topic using the 7K conversations created by WizardLM. However, we built them in a continuous conversation format instead of the instruction format. That is, each one starts with WizardLM's instruction and then expands into various areas within a single conversation using ChatGPT 3.5.

After that, we trained the model using Vicuna's fine-tuning format.
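To make the expansion step concrete, here is a loose illustration of turning one instruction into a multi-turn record. It is not the author's pipeline: `expand_to_conversation`, the `ask_model` callable (a stand-in for a chat model such as ChatGPT 3.5), the hand-supplied follow-up questions, and the `from`/`value` record layout are all assumptions made for the sake of the example.

```python
def expand_to_conversation(instruction, ask_model, follow_ups):
    """Turn one instruction into a multi-turn conversation.

    ask_model is a hypothetical callable (history -> reply text).
    follow_ups are hypothetical questions exploring the same topic further.
    """
    conversation = []
    for user_turn in [instruction, *follow_ups]:
        conversation.append({"from": "human", "value": user_turn})
        # The model sees the whole history so far, so later replies
        # can build on earlier turns.
        reply = ask_model(conversation)
        conversation.append({"from": "gpt", "value": reply})
    return conversation


def fake_model(history):
    """Stub used here so the sketch runs without any API access."""
    return f"(reply to: {history[-1]['value']})"


record = expand_to_conversation(
    "Explain what a llama is.",
    fake_model,
    ["How do llamas differ from alpacas?", "Where do llamas live?"],
)
for turn in record:
    print(turn["from"], ":", turn["value"])
```

The point of the sketch is the shape of the data: a single starting instruction followed by alternating turns that stay within one conversation.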

Training Process

Trained with 8 A100 GPUs for 35 hours.

Weights

You can find the dataset we used for training and the 13B model on Hugging Face.

Conclusion

If we extend the conversations using GPT-4 32K, we can expect a dramatic improvement, as we can generate 8x more conversations, and more accurate and richer ones.

License

The model is licensed under the LLaMA model license, and the dataset is licensed under the terms of OpenAI because it uses ChatGPT. Everything else is free.

Author

JUNE LEE - He is active in Songdo Artificial Intelligence Study and GDG Songdo.