license: creativeml-openrail-m

language:

- en

library_name: diffusers

pipeline_tag: text-to-video

tags:

- AIGC

- text2video

- image2video

- infinite-length

- human

MuseV: Infinite-length and High Fidelity Virtual Human Video Generation with Parallel Denoising

Zhiqiang Xia*,

Zhaokang Chen*,

Bin Wu†,

Chao Li,

Kwok-Wai Hung,

Chao Zhan,

Wenjiang Zhou

(*Equal Contribution, †Corresponding Author)

Lyra Lab, Tencent Music Entertainment

Project page (coming soon), technical report (coming soon)

We have pursued the vision of a world simulator since March 2023, believing that diffusion models can simulate the world. MuseV was a milestone achieved around July 2023. Amazed by the progress of Sora, we decided to open-source MuseV in the hope that it will benefit the community. Next, we will move on to the promising diffusion+transformer scheme.

We will soon release MuseTalk, a real-time, high-quality lip-sync model, which can be combined with MuseV to form a complete virtual human generation solution. Please stay tuned!

Intro

MuseV is a diffusion-based virtual human video generation framework, which

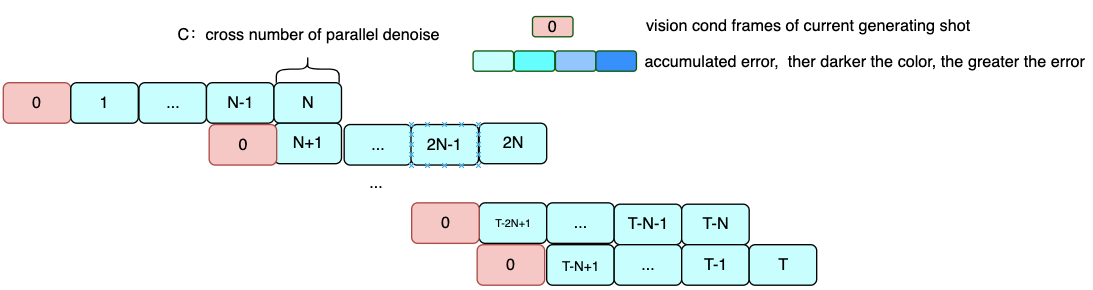

- supports infinite length generation using a novel Parallel Denoising scheme.

- checkpoint available for virtual human video generation trained on human dataset.

- supports Image2Video, Text2Image2Video, Video2Video.

- compatible with the Stable Diffusion ecosystem, including

base_model,lora,controlnet, etc. - supports multi reference image technology, including

IPAdapter,ReferenceOnly,ReferenceNet,IPAdapterFaceID. - training codes (comming very soon).

Model

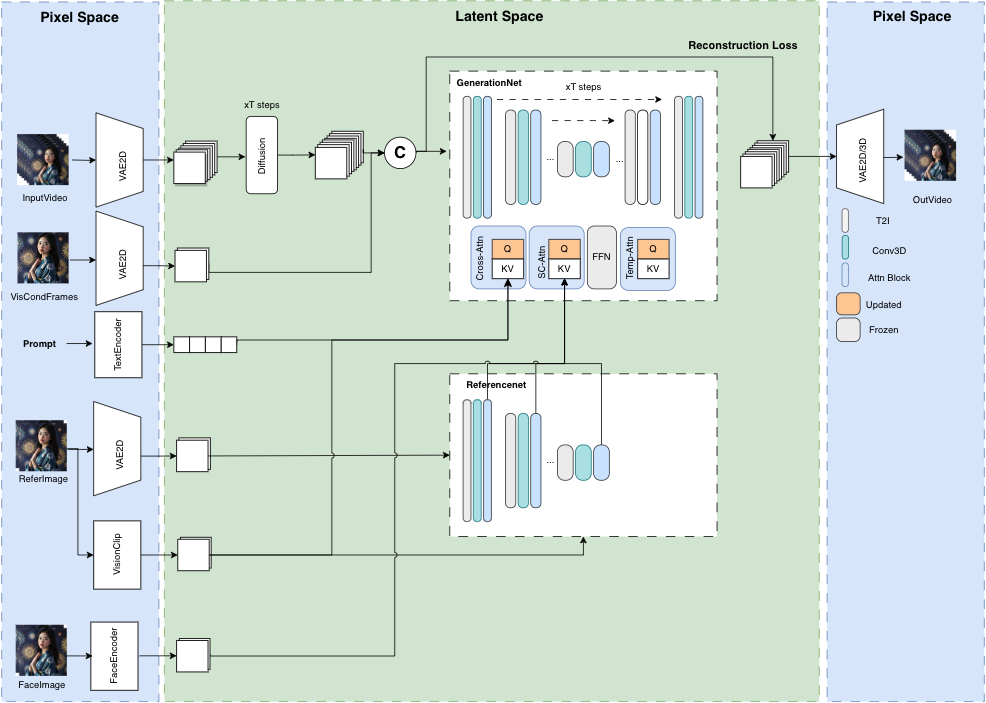

overview of model structure

parallel denoising

Cases

All frames are generated directly by the text2video model, without any post-processing.

The cases below can be found in configs/tasks/example.yaml.

Text/Image2Video

Human

| image | video | prompt |
| --- | --- | --- |
| | | (masterpiece, best quality, highres:1),(1girl, solo:1),(beautiful face, soft skin, costume:1),(eye blinks:{eye_blinks_factor}),(head wave:1.3) |
| | | (masterpiece, best quality, highres:1),(1girl, solo:1),(beautiful face, soft skin, costume:1),(eye blinks:{eye_blinks_factor}),(head wave:1.3) |
| | | (masterpiece, best quality, highres:1), peaceful beautiful sea scene |
| | | (masterpiece, best quality, highres:1), peaceful beautiful sea scene |
| | | (masterpiece, best quality, highres:1), peaceful beautiful sea scene |
| | | (masterpiece, best quality, highres:1), playing guitar |
| | | (masterpiece, best quality, highres:1), playing guitar |
| | | (masterpiece, best quality, highres:1), playing guitar |
| | | (masterpiece, best quality, highres:1), playing guitar |
| | | (masterpiece, best quality, highres:1),(1man, solo:1),(beautiful face, soft skin, costume:1),(eye blinks:{eye_blinks_factor}),(head wave:1.3) |
| | | (masterpiece, best quality, highres:1),(1girl, solo:1),(beautiful face, soft skin, costume:1),(eye blinks:{eye_blinks_factor}),(head wave:1.3) |
| | | (masterpiece, best quality, highres:1),(1man, solo:1),(beautiful face, soft skin, costume:1),(eye blinks:{eye_blinks_factor}),(head wave:1.3) |
| | | (masterpiece, best quality, highres:1),(1girl, solo:1),(beautiful face, soft skin, costume:1),(eye blinks:{eye_blinks_factor}),(head wave:1.3) |
| | | (masterpiece, best quality, highres:1),(1girl, solo:1),(beautiful face, soft skin, costume:1),(eye blinks:{eye_blinks_factor}),(head wave:1.3) |
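The `{eye_blinks_factor}` token in the prompts above is a template placeholder rather than literal prompt text. A minimal sketch of filling it with Python's `str.format` follows, assuming the factor is supplied as a number. The helper name `render_prompt` and the value 1.8 are hypothetical and are not taken from MuseV's code or configuration.

```python
# Prompt copied from the Human table; {eye_blinks_factor} is the placeholder.
PROMPT_TEMPLATE = (
    "(masterpiece, best quality, highres:1),(1girl, solo:1),"
    "(beautiful face, soft skin, costume:1),"
    "(eye blinks:{eye_blinks_factor}),(head wave:1.3)"
)


def render_prompt(template: str, eye_blinks_factor: float) -> str:
    """Substitute the placeholder with a concrete weight (hypothetical helper)."""
    return template.format(eye_blinks_factor=eye_blinks_factor)


# 1.8 is an illustrative value only, not a recommended setting.
prompt = render_prompt(PROMPT_TEMPLATE, 1.8)
print(prompt)
```

Keeping the factor outside the prompt string makes it possible to vary blink emphasis per case without editing the prompt text itself.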

Scene

| image | video | prompt |
| --- | --- | --- |
| | | (masterpiece, best quality, highres:1), peaceful beautiful waterfall, an endless waterfall |
| | | (masterpiece, best quality, highres:1), peaceful beautiful river |
| | | (masterpiece, best quality, highres:1), peaceful beautiful sea scene |
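The prompts in these tables use the parenthesized `(text:weight)` emphasis notation that is common among Stable Diffusion front ends. The sketch below splits a prompt into (text, weight) pairs to make the notation explicit. It is illustrative only: the function name `parse_weighted_prompt` is hypothetical, unweighted text is assumed to carry a weight of 1.0, and whether MuseV interprets weights in exactly this way is an assumption.

```python
import re

# Matches one "(text:weight)" group; nested parentheses are not handled.
WEIGHTED_GROUP = re.compile(r"\(([^():]+):([0-9]*\.?[0-9]+)\)")


def parse_weighted_prompt(prompt: str) -> list[tuple[str, float]]:
    """Split a prompt into (text, weight) pairs (hypothetical helper)."""
    parts = []
    pos = 0
    for match in WEIGHTED_GROUP.finditer(prompt):
        # Text between weighted groups gets the assumed default weight 1.0.
        plain = prompt[pos:match.start()].strip(" ,")
        if plain:
            parts.append((plain, 1.0))
        parts.append((match.group(1).strip(), float(match.group(2))))
        pos = match.end()
    tail = prompt[pos:].strip(" ,")
    if tail:
        parts.append((tail, 1.0))
    return parts


pairs = parse_weighted_prompt(
    "(masterpiece, best quality, highres:1), peaceful beautiful sea scene"
)
for text, weight in pairs:
    print(f"{weight:.1f}  {text}")
```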

VideoMiddle2Video

News

- [03/22/2024] Released the MuseV project and the trained models musev and muse_referencenet.

Quickstart

Please refer to MuseV.

Acknowledgements

MuseV builds on TuneAVideo and diffusers. Thanks to their authors for open-sourcing them!

Citation

@article{musev,

title={MuseV: Infinite-length and High Fidelity Virtual Human Video Generation with Parallel Denoising},

author={Xia, Zhiqiang and Chen, Zhaokang and Wu, Bin and Li, Chao and Hung, Kwok-Wai and Zhan, Chao and Zhou, Wenjiang},

journal={arxiv},

year={2024}

}

Disclaimer/License

- code: The code of MuseV is released under the MIT License. There is no limitation on either academic or commercial usage.

- model: The trained models are available for non-commercial research purposes only.

- other open-source models: Other open-source models used, such as insightface, IP-Adapter, and ft-mse-vae, must be used in compliance with their own licenses.

- AIGC: This project strives to impact the domain of AI-driven video generation positively. Users are free to create videos using this tool, but they are expected to comply with local laws and to use it responsibly. The developers do not assume any responsibility for potential misuse by users.