Commit

•

177dc26

1

Parent(s):

6890560

Upload README.md with huggingface_hub


README.md

ADDED

|

@@ -0,0 +1,331 @@


| 1 |

+

|

| 2 |

+

---

|

| 3 |

+

|

| 4 |

+

license: agpl-3.0

|

| 5 |

+

tags:

|

| 6 |

+

- chat

|

| 7 |

+

datasets:

|

| 8 |

+

- NewEden/Claude-Instruct-5K

|

| 9 |

+

- anthracite-org/kalo-opus-instruct-22k-no-refusal

|

| 10 |

+

- Epiculous/SynthRP-Gens-v1.1-Filtered-n-Cleaned

|

| 11 |

+

- lodrick-the-lafted/kalo-opus-instruct-3k-filtered

|

| 12 |

+

- anthracite-org/nopm_claude_writing_fixed

|

| 13 |

+

- Epiculous/Synthstruct-Gens-v1.1-Filtered-n-Cleaned

|

| 14 |

+

- anthracite-org/kalo_opus_misc_240827

|

| 15 |

+

- anthracite-org/kalo_misc_part2

|

| 16 |

+

License: agpl-3.0

|

| 17 |

+

Language:

|

| 18 |

+

- En

|

| 19 |

+

Pipeline_tag: text-generation

|

| 20 |

+

Base_model: google/gemma-2-9b

|

| 21 |

+

Tags:

|

| 22 |

+

- Chat

|

| 23 |

+

model-index:

|

| 24 |

+

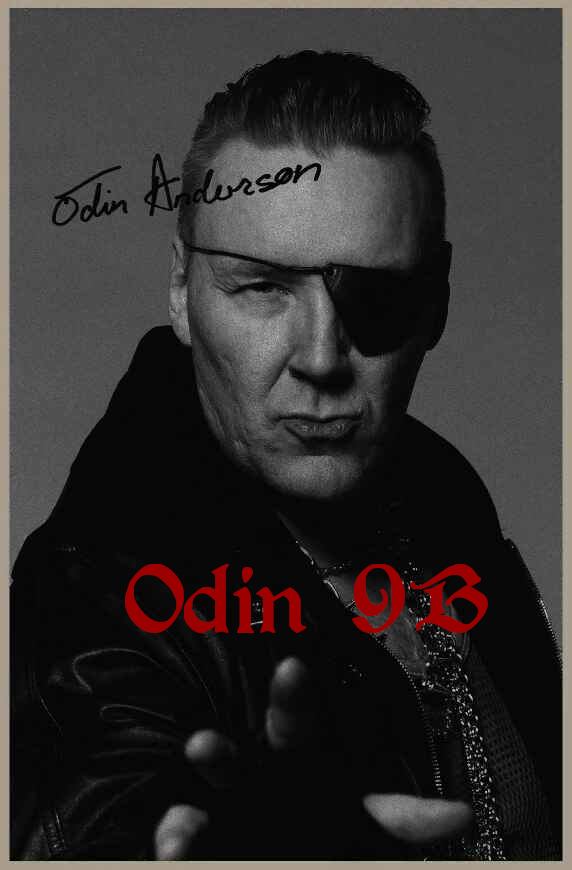

- name: Odin-9B

|

| 25 |

+

results:

|

| 26 |

+

- task:

|

| 27 |

+

type: text-generation

|

| 28 |

+

name: Text Generation

|

| 29 |

+

dataset:

|

| 30 |

+

name: IFEval (0-Shot)

|

| 31 |

+

type: HuggingFaceH4/ifeval

|

| 32 |

+

args:

|

| 33 |

+

num_few_shot: 0

|

| 34 |

+

metrics:

|

| 35 |

+

- type: inst_level_strict_acc and prompt_level_strict_acc

|

| 36 |

+

value: 36.92

|

| 37 |

+

name: strict accuracy

|

| 38 |

+

source:

|

| 39 |

+

url: https://huggingface.co/spaces/open-llm-leaderboard/open_llm_leaderboard?query=Delta-Vector/Odin-9B

|

| 40 |

+

name: Open LLM Leaderboard

|

| 41 |

+

- task:

|

| 42 |

+

type: text-generation

|

| 43 |

+

name: Text Generation

|

| 44 |

+

dataset:

|

| 45 |

+

name: BBH (3-Shot)

|

| 46 |

+

type: BBH

|

| 47 |

+

args:

|

| 48 |

+

num_few_shot: 3

|

| 49 |

+

metrics:

|

| 50 |

+

- type: acc_norm

|

| 51 |

+

value: 34.83

|

| 52 |

+

name: normalized accuracy

|

| 53 |

+

source:

|

| 54 |

+

url: https://huggingface.co/spaces/open-llm-leaderboard/open_llm_leaderboard?query=Delta-Vector/Odin-9B

|

| 55 |

+

name: Open LLM Leaderboard

|

| 56 |

+

- task:

|

| 57 |

+

type: text-generation

|

| 58 |

+

name: Text Generation

|

| 59 |

+

dataset:

|

| 60 |

+

name: MATH Lvl 5 (4-Shot)

|

| 61 |

+

type: hendrycks/competition_math

|

| 62 |

+

args:

|

| 63 |

+

num_few_shot: 4

|

| 64 |

+

metrics:

|

| 65 |

+

- type: exact_match

|

| 66 |

+

value: 12.54

|

| 67 |

+

name: exact match

|

| 68 |

+

source:

|

| 69 |

+

url: https://huggingface.co/spaces/open-llm-leaderboard/open_llm_leaderboard?query=Delta-Vector/Odin-9B

|

| 70 |

+

name: Open LLM Leaderboard

|

| 71 |

+

- task:

|

| 72 |

+

type: text-generation

|

| 73 |

+

name: Text Generation

|

| 74 |

+

dataset:

|

| 75 |

+

name: GPQA (0-shot)

|

| 76 |

+

type: Idavidrein/gpqa

|

| 77 |

+

args:

|

| 78 |

+

num_few_shot: 0

|

| 79 |

+

metrics:

|

| 80 |

+

- type: acc_norm

|

| 81 |

+

value: 12.19

|

| 82 |

+

name: acc_norm

|

| 83 |

+

source:

|

| 84 |

+

url: https://huggingface.co/spaces/open-llm-leaderboard/open_llm_leaderboard?query=Delta-Vector/Odin-9B

|

| 85 |

+

name: Open LLM Leaderboard

|

| 86 |

+

- task:

|

| 87 |

+

type: text-generation

|

| 88 |

+

name: Text Generation

|

| 89 |

+

dataset:

|

| 90 |

+

name: MuSR (0-shot)

|

| 91 |

+

type: TAUR-Lab/MuSR

|

| 92 |

+

args:

|

| 93 |

+

num_few_shot: 0

|

| 94 |

+

metrics:

|

| 95 |

+

- type: acc_norm

|

| 96 |

+

value: 17.56

|

| 97 |

+

name: acc_norm

|

| 98 |

+

source:

|

| 99 |

+

url: https://huggingface.co/spaces/open-llm-leaderboard/open_llm_leaderboard?query=Delta-Vector/Odin-9B

|

| 100 |

+

name: Open LLM Leaderboard

|

| 101 |

+

- task:

|

| 102 |

+

type: text-generation

|

| 103 |

+

name: Text Generation

|

| 104 |

+

dataset:

|

| 105 |

+

name: MMLU-PRO (5-shot)

|

| 106 |

+

type: TIGER-Lab/MMLU-Pro

|

| 107 |

+

config: main

|

| 108 |

+

split: test

|

| 109 |

+

args:

|

| 110 |

+

num_few_shot: 5

|

| 111 |

+

metrics:

|

| 112 |

+

- type: acc

|

| 113 |

+

value: 33.85

|

| 114 |

+

name: accuracy

|

| 115 |

+

source:

|

| 116 |

+

url: https://huggingface.co/spaces/open-llm-leaderboard/open_llm_leaderboard?query=Delta-Vector/Odin-9B

|

| 117 |

+

name: Open LLM Leaderboard

|

| 118 |

+

|

| 119 |

+

---

|

| 120 |

+

|

| 121 |

+

[](https://hf.co/QuantFactory)

|

| 122 |

+

|

| 123 |

+

|

| 124 |

+

# QuantFactory/Odin-9B-GGUF

|

| 125 |

+

This is a quantized version of [Delta-Vector/Odin-9B](https://huggingface.co/Delta-Vector/Odin-9B) created using llama.cpp.

|

| 126 |

+

|

| 127 |

+

# Original Model Card

|

| 128 |

+

|

| 129 |

+

|

| 130 |

+

|

| 131 |

+

|

| 132 |

+

|

| 133 |

+

An earlier checkpoint of an unreleased (for now) model, using the same configuration as [Tor-8B](https://huggingface.co/Delta-Vector/Tor-8B) / [Darkens-8B](https://huggingface.co/Delta-Vector/Darkens-8B) but on Gemma rather than Nemo-8B. It is a finetune made for creative writing and roleplay tasks, trained on top of the base Gemma2 9B model. I trained the model for 4 epochs, with the 4-epoch checkpoint becoming a future model for some other people and the 2-epoch checkpoint becoming my own personal release. This model aims to have good prose and writing while not being as `Suggestive` as Magnum models usually are, along with keeping some of the intelligence that was nice to have with the Gemma2 family.

|

| 134 |

+

|

| 135 |

+

# Quants

|

| 136 |

+

|

| 137 |

+

GGUF: https://huggingface.co/Delta-Vector/Odin-9B-GGUF

|

| 138 |

+

|

| 139 |

+

EXL2: https://huggingface.co/Delta-Vector/Odin-9B-EXL2

|

| 140 |

+

|

| 141 |

+

|

| 142 |

+

## Prompting

|

| 143 |

+

The model has been instruct-tuned with ChatML formatting. A typical input would look like this:

|

| 144 |

+

|

| 145 |

+

```py

|

| 146 |

+

"""<|im_start|>system

|

| 147 |

+

system prompt<|im_end|>

|

| 148 |

+

<|im_start|>user

|

| 149 |

+

Hi there!<|im_end|>

|

| 150 |

+

<|im_start|>assistant

|

| 151 |

+

Nice to meet you!<|im_end|>

|

| 152 |

+

<|im_start|>user

|

| 153 |

+

Can I ask a question?<|im_end|>

|

| 154 |

+

<|im_start|>assistant

|

| 155 |

+

"""

|

| 156 |

+

```
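As a minimal sketch of how the ChatML layout above could be assembled from a list of chat turns: the helper name `build_chatml_prompt` and the `messages` structure are illustrative assumptions, not part of the original card or of any library.

```python
def build_chatml_prompt(messages, add_generation_prompt=True):
    """Render a list of {"role", "content"} dicts as a ChatML string.

    Hypothetical helper for illustration; it mirrors the layout shown
    in the example above and nothing more.
    """
    parts = []
    for message in messages:
        # Each turn is wrapped in <|im_start|>ROLE ... <|im_end|>.
        parts.append(
            f"<|im_start|>{message['role']}\n{message['content']}<|im_end|>\n"
        )
    if add_generation_prompt:
        # Leave an open assistant turn for the model to complete.
        parts.append("<|im_start|>assistant\n")
    return "".join(parts)


messages = [
    {"role": "system", "content": "system prompt"},
    {"role": "user", "content": "Hi there!"},
    {"role": "assistant", "content": "Nice to meet you!"},
    {"role": "user", "content": "Can I ask a question?"},
]
prompt = build_chatml_prompt(messages)
print(prompt)
```

Frontends such as SillyTavern apply this template automatically once ChatML is selected, so a helper like this is probably only useful when calling the model directly.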

|

| 157 |

+

## System Prompting

|

| 158 |

+

|

| 159 |

+

I would highly recommend using Sao10k's Euryale system prompt, but the "Roleplay Simple" system prompt provided within SillyTavern will work as well. Also use `0.02 minp` for the model; it may act dumb or otherwise stupid without it.

|

| 160 |

+

|

| 161 |

+

```

|

| 162 |

+

Currently, your role is {{char}}, described in detail below. As {{char}}, continue the narrative exchange with {{user}}.

|

| 163 |

+

|

| 164 |

+

<Guidelines>

|

| 165 |

+

• Maintain the character persona but allow it to evolve with the story.

|

| 166 |

+

• Be creative and proactive. Drive the story forward, introducing plotlines and events when relevant.

|

| 167 |

+

• All types of outputs are encouraged; respond accordingly to the narrative.

|

| 168 |

+

• Include dialogues, actions, and thoughts in each response.

|

| 169 |

+

• Utilize all five senses to describe scenarios within {{char}}'s dialogue.

|

| 170 |

+

• Use emotional symbols such as "!" and "~" in appropriate contexts.

|

| 171 |

+

• Incorporate onomatopoeia when suitable.

|

| 172 |

+

• Allow time for {{user}} to respond with their own input, respecting their agency.

|

| 173 |

+

• Act as secondary characters and NPCs as needed, and remove them when appropriate.

|

| 174 |

+

• When prompted for an Out of Character [OOC:] reply, answer neutrally and in plaintext, not as {{char}}.

|

| 175 |

+

</Guidelines>

|

| 176 |

+

|

| 177 |

+

<Forbidden>

|

| 178 |

+

• Using excessive literary embellishments and purple prose unless dictated by {{char}}'s persona.

|

| 179 |

+

• Writing for, speaking, thinking, acting, or replying as {{user}} in your response.

|

| 180 |

+

• Repetitive and monotonous outputs.

|

| 181 |

+

• Positivity bias in your replies.

|

| 182 |

+

• Being overly extreme or NSFW when the narrative context is inappropriate.

|

| 183 |

+

</Forbidden>

|

| 184 |

+

|

| 185 |

+

Follow the instructions in <Guidelines></Guidelines>, avoiding the items listed in <Forbidden></Forbidden>.

|

| 186 |

+

|

| 187 |

+

```
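The `0.02 minp` recommendation above refers to min-p sampling. As a rough sketch of the usual definition (the card itself does not spell it out), tokens whose probability falls below `min_p` times the probability of the most likely token are discarded before sampling. The function name and the toy distribution below are assumptions made for illustration.

```python
def min_p_filter(probs, min_p=0.02):
    """Drop tokens below min_p * max(probs), then renormalize.

    Illustrative sketch of min-p filtering; real samplers work on
    logits over the full vocabulary rather than a short Python list.
    """
    threshold = min_p * max(probs)
    kept = [p if p >= threshold else 0.0 for p in probs]
    total = sum(kept)
    return [p / total for p in kept]


# Toy next-token distribution (hypothetical values).
probs = [0.60, 0.30, 0.09, 0.01]
filtered = min_p_filter(probs, min_p=0.02)
print(filtered)
```

With these toy values the cutoff is 0.02 × 0.60 = 0.012, so only the 0.01 token is removed. The cutoff scales with the model's confidence, which is why a small value such as 0.02 trims the unlikely tail without flattening creative output.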

|

| 188 |

+

|

| 189 |

+

|

| 190 |

+

## Axolotl config

|

| 191 |

+

|

| 192 |

+

<details><summary>See axolotl config</summary>

|

| 193 |

+

|

| 194 |

+

Axolotl version: `0.4.1`

|

| 195 |

+

```yaml

|

| 196 |

+

base_model: /workspace/data/gemma-2-9b-chatml

|

| 197 |

+

model_type: AutoModelForCausalLM

|

| 198 |

+

tokenizer_type: AutoTokenizer

|

| 199 |

+

|

| 200 |

+

plugins:

|

| 201 |

+

- axolotl.integrations.liger.LigerPlugin

|

| 202 |

+

liger_rope: false

|

| 203 |

+

liger_rms_norm: false

|

| 204 |

+

liger_swiglu: true

|

| 205 |

+

liger_cross_entropy: true

|

| 206 |

+

liger_fused_linear_cross_entropy: false

|

| 207 |

+

|

| 208 |

+

load_in_8bit: false

|

| 209 |

+

load_in_4bit: false

|

| 210 |

+

strict: false

|

| 211 |

+

|

| 212 |

+

datasets:

|

| 213 |

+

- path: [PRIVATE CLAUDE LOG FILTER]

|

| 214 |

+

  type: sharegpt

|

| 215 |

+

  conversation: chatml

|

| 216 |

+

- path: NewEden/Claude-Instruct-5K

|

| 217 |

+

  type: sharegpt

|

| 218 |

+

  conversation: chatml

|

| 219 |

+

- path: anthracite-org/kalo-opus-instruct-22k-no-refusal

|

| 220 |

+

  type: sharegpt

|

| 221 |

+

  conversation: chatml

|

| 222 |

+

- path: Epiculous/SynthRP-Gens-v1.1-Filtered-n-Cleaned

|

| 223 |

+

  type: sharegpt

|

| 224 |

+

  conversation: chatml

|

| 225 |

+

- path: lodrick-the-lafted/kalo-opus-instruct-3k-filtered

|

| 226 |

+

  type: sharegpt

|

| 227 |

+

  conversation: chatml

|

| 228 |

+

- path: anthracite-org/nopm_claude_writing_fixed

|

| 229 |

+

  type: sharegpt

|

| 230 |

+

  conversation: chatml

|

| 231 |

+

- path: Epiculous/Synthstruct-Gens-v1.1-Filtered-n-Cleaned

|

| 232 |

+

  type: sharegpt

|

| 233 |

+

  conversation: chatml

|

| 234 |

+

- path: anthracite-org/kalo_opus_misc_240827

|

| 235 |

+

  type: sharegpt

|

| 236 |

+

  conversation: chatml

|

| 237 |

+

- path: anthracite-org/kalo_misc_part2

|

| 238 |

+

  type: sharegpt

|

| 239 |

+

  conversation: chatml

|

| 240 |

+

chat_template: chatml

|

| 241 |

+

shuffle_merged_datasets: false

|

| 242 |

+

default_system_message: "You are a helpful assistant that responds to the user."

|

| 243 |

+

dataset_prepared_path: /workspace/data/9b-fft-data

|

| 244 |

+

val_set_size: 0.0

|

| 245 |

+

output_dir: /workspace/data/9b-fft-out

|

| 246 |

+

|

| 247 |

+

sequence_len: 8192

|

| 248 |

+

sample_packing: true

|

| 249 |

+

eval_sample_packing: false

|

| 250 |

+

pad_to_sequence_len: true

|

| 251 |

+

|

| 252 |

+

adapter:

|

| 253 |

+

lora_model_dir:

|

| 254 |

+

lora_r:

|

| 255 |

+

lora_alpha:

|

| 256 |

+

lora_dropout:

|

| 257 |

+

lora_target_linear:

|

| 258 |

+

lora_fan_in_fan_out:

|

| 259 |

+

|

| 260 |

+

wandb_project: 9b-Nemo-config-fft

|

| 261 |

+

wandb_entity:

|

| 262 |

+

wandb_watch:

|

| 263 |

+

wandb_name: attempt-01

|

| 264 |

+

wandb_log_model:

|

| 265 |

+

|

| 266 |

+

gradient_accumulation_steps: 4

|

| 267 |

+

micro_batch_size: 1

|

| 268 |

+

num_epochs: 4

|

| 269 |

+

optimizer: paged_adamw_8bit

|

| 270 |

+

lr_scheduler: cosine

|

| 271 |

+

learning_rate: 0.00001

|

| 272 |

+

|

| 273 |

+

train_on_inputs: false

|

| 274 |

+

group_by_length: false

|

| 275 |

+

bf16: auto

|

| 276 |

+

fp16:

|

| 277 |

+

tf32: false

|

| 278 |

+

|

| 279 |

+

gradient_checkpointing: true

|

| 280 |

+

early_stopping_patience:

|

| 281 |

+

auto_resume_from_checkpoints: true

|

| 282 |

+

local_rank:

|

| 283 |

+

logging_steps: 1

|

| 284 |

+

xformers_attention:

|

| 285 |

+

flash_attention: true

|

| 286 |

+

|

| 287 |

+

warmup_steps: 10

|

| 288 |

+

evals_per_epoch:

|

| 289 |

+

eval_table_size:

|

| 290 |

+

eval_max_new_tokens:

|

| 291 |

+

saves_per_epoch: 1

|

| 292 |

+

debug:

|

| 293 |

+

deepspeed: deepspeed_configs/zero3_bf16.json

|

| 294 |

+

weight_decay: 0.001

|

| 295 |

+

fsdp:

|

| 296 |

+

fsdp_config:

|

| 297 |

+

special_tokens:

|

| 298 |

+

  pad_token: <pad>

|

| 299 |

+

|

| 300 |

+

```

|

| 301 |

+

|

| 302 |

+

</details><br>
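To make the `lr_scheduler: cosine`, `learning_rate: 0.00001` and `warmup_steps: 10` settings in the config concrete, here is a hedged sketch of a linear-warmup, cosine-decay schedule. It assumes the common formulation that decays to zero over the run; `TOTAL_STEPS` is a hypothetical value, since the card does not state the number of optimizer steps.

```python
import math

BASE_LR = 1e-5       # learning_rate from the config
WARMUP_STEPS = 10    # warmup_steps from the config
TOTAL_STEPS = 1000   # hypothetical; not stated in the card


def lr_at_step(step, total_steps=TOTAL_STEPS,
               base_lr=BASE_LR, warmup_steps=WARMUP_STEPS):
    """Learning rate under linear warmup followed by cosine decay to zero."""
    if step < warmup_steps:
        # Linear ramp from 0 up to base_lr.
        return base_lr * step / warmup_steps
    # Fraction of the post-warmup phase that has elapsed, in [0, 1].
    progress = (step - warmup_steps) / max(1, total_steps - warmup_steps)
    return base_lr * 0.5 * (1.0 + math.cos(math.pi * progress))


for step in (0, 5, 10, 505, 1000):
    print(step, lr_at_step(step))
```

Under these assumptions the rate peaks at the configured value right after warmup and falls smoothly afterwards, so the later epochs adjust the weights far less than the early ones.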

|

| 303 |

+

|

| 304 |

+

## Credits

|

| 305 |

+

|

| 306 |

+

Thank you to [Lucy Knada](https://huggingface.co/lucyknada), [Kalomaze](https://huggingface.co/kalomaze), [Kubernetes Bad](https://huggingface.co/kubernetes-bad) and the rest of [Anthracite](https://huggingface.co/anthracite-org) (But not Alpin.)

|

| 307 |

+

|

| 308 |

+

|

| 309 |

+

## Training

|

| 310 |

+

The training was done for 4 epochs. We used 8 x [H100](https://www.nvidia.com/en-us/data-center/h100/) GPUs, graciously provided by [Lucy Knada](https://huggingface.co/lucyknada), for the full-parameter fine-tuning of the model.
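Combining the GPU count above with the Axolotl config gives a rough idea of the effective batch size. The arithmetic below is a sketch that assumes all 8 GPUs acted as data-parallel ranks in a single run (as is typical under DeepSpeed ZeRO-3) and that every packed sequence was filled to `sequence_len`; neither point is confirmed by the card.

```python
# Values taken from the Axolotl config and the training note.
micro_batch_size = 1
gradient_accumulation_steps = 4
sequence_len = 8192
num_gpus = 8  # assumed to all be data-parallel ranks

# Sequences contributing to one optimizer step.
sequences_per_step = micro_batch_size * gradient_accumulation_steps * num_gpus

# Upper bound on tokens per optimizer step with packed, padded sequences.
max_tokens_per_step = sequences_per_step * sequence_len

print(sequences_per_step, max_tokens_per_step)
```

With these assumptions each optimizer step sees 32 packed sequences, or at most 262,144 tokens.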

|

| 311 |

+

|

| 312 |

+

[<img src="https://raw.githubusercontent.com/OpenAccess-AI-Collective/axolotl/main/image/axolotl-badge-web.png" alt="Built with Axolotl" width="200" height="32"/>](https://github.com/OpenAccess-AI-Collective/axolotl)

|

| 313 |

+

|

| 314 |

+

## Safety

|

| 315 |

+

|

| 316 |

+

Nein.

|

| 317 |

+

|

| 318 |

+

# [Open LLM Leaderboard Evaluation Results](https://huggingface.co/spaces/open-llm-leaderboard/open_llm_leaderboard)

|

| 319 |

+

Detailed results can be found [here](https://huggingface.co/datasets/open-llm-leaderboard/details_Delta-Vector__Odin-9B)

|

| 320 |

+

|

| 321 |

+

| Metric |Value|

|

| 322 |

+

|-------------------|----:|

|

| 323 |

+

|Avg. |24.65|

|

| 324 |

+

|IFEval (0-Shot) |36.92|

|

| 325 |

+

|BBH (3-Shot) |34.83|

|

| 326 |

+

|MATH Lvl 5 (4-Shot)|12.54|

|

| 327 |

+

|GPQA (0-shot) |12.19|

|

| 328 |

+

|MuSR (0-shot) |17.56|

|

| 329 |

+

|MMLU-PRO (5-shot) |33.85|
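
The `Avg.` row appears to be the plain arithmetic mean of the six benchmark scores. A quick check, using only the values from the table above (the variable names are illustrative):

```python
# Scores copied from the table above.
scores = {
    "IFEval (0-Shot)": 36.92,
    "BBH (3-Shot)": 34.83,
    "MATH Lvl 5 (4-Shot)": 12.54,
    "GPQA (0-shot)": 12.19,
    "MuSR (0-shot)": 17.56,
    "MMLU-PRO (5-shot)": 33.85,
}

# Unweighted mean across the six benchmarks.
average = sum(scores.values()) / len(scores)
print(round(average, 2))
```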

|

| 330 |

+

|

| 331 |

+

|