metadata

license: apache-2.0
library_name: pruna-engine
thumbnail: >-
  https://assets-global.website-files.com/646b351987a8d8ce158d1940/64ec9e96b4334c0e1ac41504_Logo%20with%20white%20text.svg
metrics:
- memory_disk
- memory_inference
- inference_latency
- inference_throughput
- inference_CO2_emissions
- inference_energy_consumption

Simply make AI models cheaper, smaller, faster, and greener!

- Give a thumbs up if you like this model!

- Contact us and tell us which model to compress next here.

- Request access to easily compress your own AI models here.

- Read the documentation to learn more here.

- Share feedback and suggestions on the Slack of Pruna AI (Coming soon!).

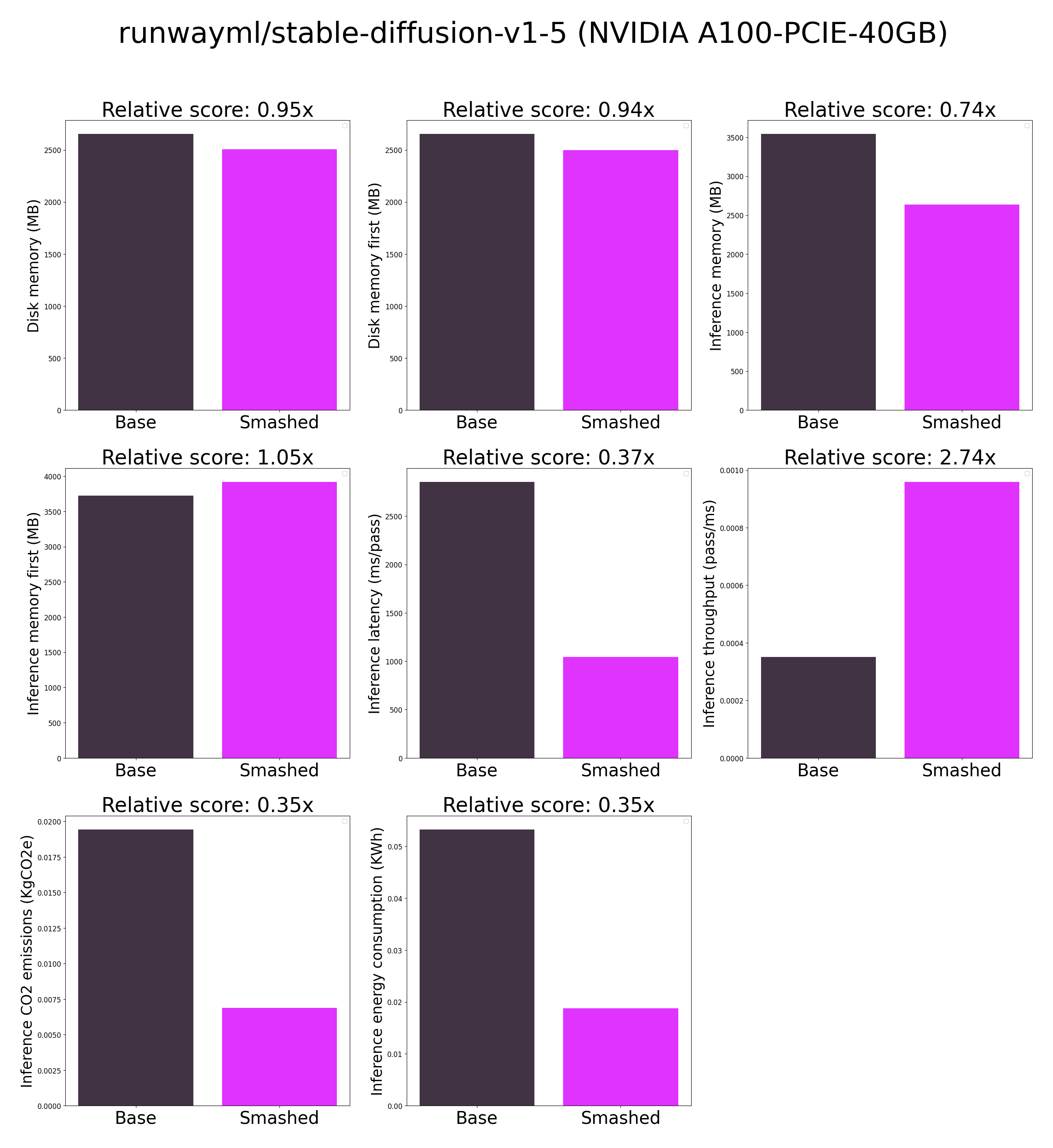

Results

These results were obtained on an NVIDIA A100-PCIE-40GB with the configuration described in config.json. Results may vary in other settings (e.g. different hardware, image size, or batch size).
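To see how latency and throughput look on your own hardware, a rough timing loop along these lines may help. It is a minimal sketch, not the procedure used to produce the results above: `measure_latency` is a hypothetical helper that is not part of pruna-engine, and `run_once` stands in for any zero-argument callable that performs one inference. On a GPU you would normally also synchronize the device before reading the clock, which this sketch leaves out.

```python
import statistics
import time


def measure_latency(run_once, warmup=2, repeats=10, batch_size=1):
    """Time a zero-argument callable and summarize latency and throughput.

    `run_once` is a placeholder for one inference call; this helper is
    illustrative and not part of pruna-engine.
    """
    # Warm-up runs absorb one-off costs such as lazy initialization.
    for _ in range(warmup):
        run_once()

    timings = []
    for _ in range(repeats):
        start = time.perf_counter()
        run_once()
        timings.append(time.perf_counter() - start)

    median_s = statistics.median(timings)
    return {
        "median_latency_s": median_s,
        # Samples processed per second at the median latency.
        "throughput_per_s": batch_size / median_s if median_s > 0 else float("inf"),
        "runs": repeats,
    }


if __name__ == "__main__":
    # Stand-in workload; replace with e.g. `lambda: smashed_model(x)`.
    stats = measure_latency(lambda: sum(i * i for i in range(100_000)))
    print(stats)
```

The median is used rather than the mean so that a single slow run (for example one that triggers a cache fill) does not dominate the summary.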

Setup

You can run the smashed model with these steps:

0. Check that you have CUDA installed. You can do this by running nvcc --version. If CUDA is missing, one way to install it is conda install nvidia/label/cuda-12.1.0::cuda.

1. Install the pruna-engine package, available here on PyPI. It might take 15 minutes to install.

    pip install pruna-engine[gpu] --extra-index-url https://pypi.nvidia.com --extra-index-url https://pypi.ngc.nvidia.com

2. Download the model files using one of these three options.

- Option 1 - Use command line interface (CLI):

    mkdir runwayml-stable-diffusion-v1-5-turbo-tiny-green-smashed
    huggingface-cli download PrunaAI/runwayml-stable-diffusion-v1-5-turbo-tiny-green-smashed --local-dir runwayml-stable-diffusion-v1-5-turbo-tiny-green-smashed --local-dir-use-symlinks False

- Option 2 - Use Python:

    import os
    import subprocess

    repo_name = "runwayml-stable-diffusion-v1-5-turbo-tiny-green-smashed"
    # Create the target directory (no error if it already exists).
    os.makedirs(repo_name, exist_ok=True)
    # Download the model files into that directory.
    subprocess.run(["huggingface-cli", "download", "PrunaAI/" + repo_name, "--local-dir", repo_name, "--local-dir-use-symlinks", "False"])

- Option 3 - Download them manually on the HuggingFace model page.

3. Load & run the model.

    from pruna_engine.PrunaModel import PrunaModel

    model_path = "PrunaAI/runwayml-stable-diffusion-v1-5-turbo-tiny-green-smashed/model"  # Specify the downloaded model path; adjust it to where you saved the files.
    smashed_model = PrunaModel.load_model(model_path)  # Load the model.
    y = smashed_model(x)  # Run the model, where x is the input the model expects.
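Before loading the model, it can be useful to confirm that the earlier setup steps actually succeeded. The sketch below is one possible preflight check, using only the Python standard library. The helper names (`cuda_compiler_version`, `model_files_present`, `preflight`) are hypothetical and not part of pruna-engine, and the check assumes only that the download directory should exist and be non-empty; it does not know which files the model needs.

```python
import shutil
import subprocess
from pathlib import Path


def cuda_compiler_version():
    """Return the output of `nvcc --version`, or None if nvcc is not available."""
    nvcc = shutil.which("nvcc")
    if nvcc is None:
        return None
    try:
        result = subprocess.run(
            [nvcc, "--version"], capture_output=True, text=True, check=False
        )
    except OSError:
        return None
    return result.stdout.strip() or None


def model_files_present(model_dir):
    """Return True if `model_dir` exists and contains at least one entry."""
    path = Path(model_dir)
    return path.is_dir() and any(path.iterdir())


def preflight(model_dir):
    """Collect human-readable problems; an empty list means the checks passed."""
    problems = []
    if cuda_compiler_version() is None:
        problems.append("nvcc not found on PATH; CUDA may not be installed")
    if not model_files_present(model_dir):
        problems.append(f"no model files found in {model_dir!r}")
    return problems


if __name__ == "__main__":
    # Directory name taken from the download step; adjust if you saved the files elsewhere.
    for problem in preflight("runwayml-stable-diffusion-v1-5-turbo-tiny-green-smashed"):
        print("WARNING:", problem)
```

An empty result only means that nvcc is on the PATH and the directory is populated; it does not guarantee that the installed CUDA version matches what pruna-engine needs.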

Configurations

The configuration details are in config.json.
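To inspect those settings without opening the file by hand, something like the following could work. It is a sketch under two assumptions that the text above does not confirm: that config.json sits at the top level of the downloaded model directory, and that it holds a single JSON object. `load_config` and `describe_config` are hypothetical helper names, and no particular keys are assumed.

```python
import json
from pathlib import Path


def load_config(model_dir):
    """Read config.json from `model_dir` and return it as a dictionary."""
    config_path = Path(model_dir) / "config.json"
    with config_path.open(encoding="utf-8") as handle:
        return json.load(handle)


def describe_config(config):
    """Format each top-level setting as a 'key: value' line, sorted by key."""
    return [f"{key}: {config[key]!r}" for key in sorted(config)]


if __name__ == "__main__":
    # Assumed download location; adjust if you saved the files elsewhere.
    model_dir = "runwayml-stable-diffusion-v1-5-turbo-tiny-green-smashed"
    try:
        for line in describe_config(load_config(model_dir)):
            print(line)
    except FileNotFoundError:
        print(f"config.json not found in {model_dir!r}")
```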

License

We follow the same license as the original model. Please check the license of the original model ORIGINAL_runwayml-stable-diffusion-v1-5-turbo-tiny-green-smashed before using this model.