Upload folder using huggingface_hub (#5)

- 5772fce89ebdf607c820cc9d25739cb0d2630cd2f138f45553449b5456d2807d (02982c251d5d896a67bdb96b4c3caa97894fdcce)

- README.md +4 -2

- plots.png +0 -0

- smash_config.json +1 -1

README.md

CHANGED

@@ -60,8 +60,10 @@ You can run the smashed model with these steps:

| Old | New | Change | Content |
| --- | --- | --- | --- |
| 60 | 60 | | `` ```python `` |
| 61 | 61 | | `from transformers import AutoModelForCausalLM, AutoTokenizer` |
| 62 | 62 | | |
| 63 | | - | |
| 64 | | - | |
| | 63 | + | `try:` |
| | 64 | + | `model = HQQModelForCausalLM.from_quantized("PrunaAI/facebook-opt-125m-HQQ-1bit-smashed", device_map='auto')` |
| | 65 | + | `except:` |
| | 66 | + | `model = AutoHQQHFModel.from_quantized("PrunaAI/facebook-opt-125m-HQQ-1bit-smashed")` |
| 65 | 67 | | `tokenizer = AutoTokenizer.from_pretrained("facebook/opt-125m")` |
| 66 | 68 | | |
| 67 | 69 | | `input_ids = tokenizer("What is the color of prunes?,", return_tensors='pt').to(model.device)["input_ids"]` |
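The README change wraps model loading in a `try`/`except`, so that a second loader is tried when the first one fails. Below is a minimal sketch of that fallback pattern. It assumes hypothetical stand-in loaders (`load_primary`, `load_fallback`) rather than the real `hqq` classes, and the helper `load_with_fallback` is illustrative, not part of any library. Unlike the bare `except:` in the diff, it catches `Exception`, so `KeyboardInterrupt` and `SystemExit` still propagate.

```python
from typing import Callable, List, Sequence, TypeVar

T = TypeVar("T")


def load_with_fallback(loaders: Sequence[Callable[[], T]]) -> T:
    """Call each loader in order and return the first result that succeeds."""
    errors: List[Exception] = []
    for loader in loaders:
        try:
            return loader()
        except Exception as exc:  # narrower than a bare `except:`
            errors.append(exc)
    # Every loader failed: surface all collected errors instead of hiding them.
    raise RuntimeError(f"all {len(loaders)} loaders failed: {errors!r}")


# Hypothetical stand-ins for the two from_quantized calls in the diff.
def load_primary() -> str:
    raise ValueError("primary loader rejected the checkpoint")


def load_fallback() -> str:
    return "model loaded by fallback"


model = load_with_fallback([load_primary, load_fallback])
print(model)
```

Keeping the collected errors in the final exception makes it easier to see why each loader failed, which a silent fallback would otherwise hide.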

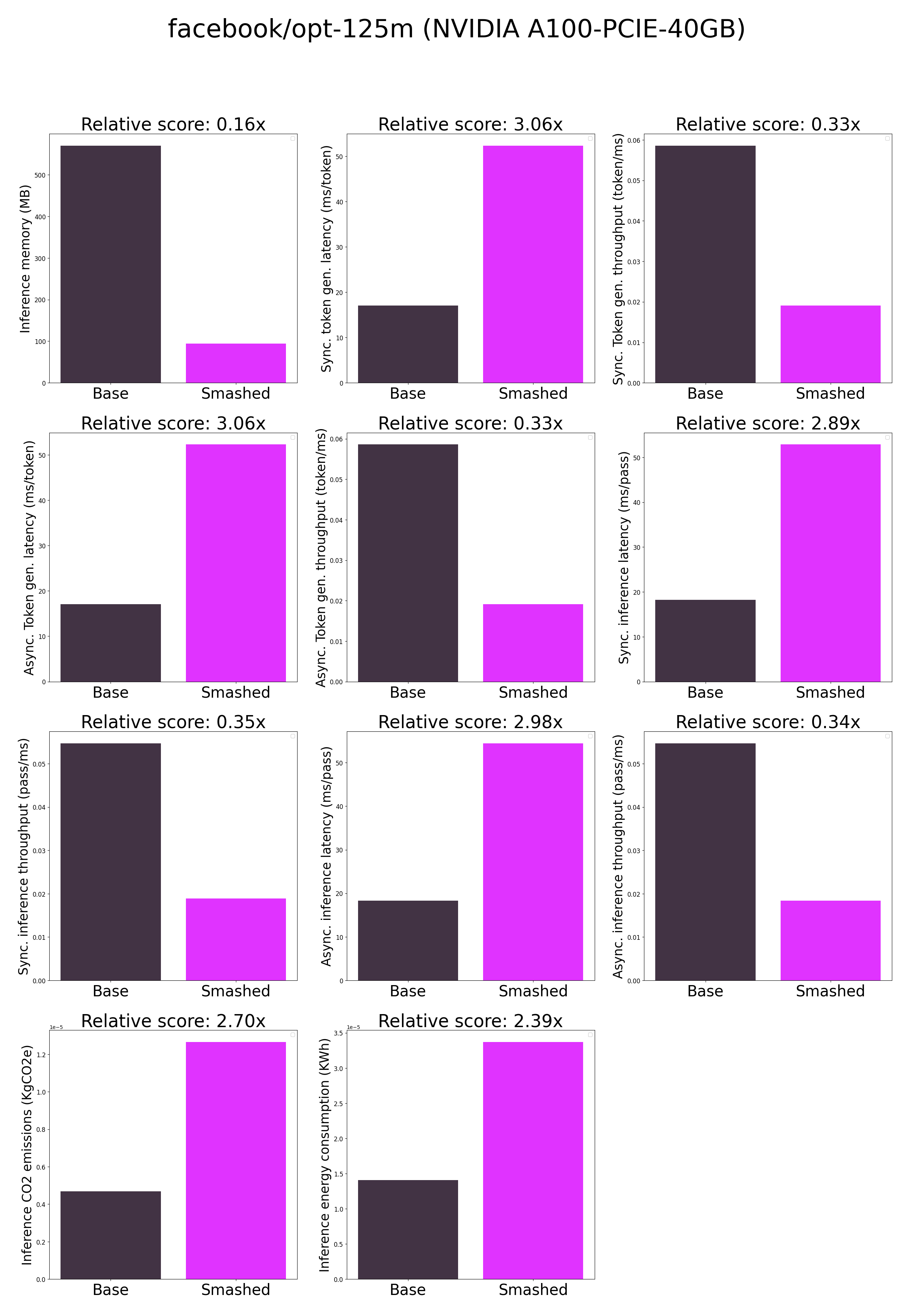

plots.png

CHANGED

smash_config.json

CHANGED

@@ -14,7 +14,7 @@

| Old | New | Change | Content |
| --- | --- | --- | --- |
| 14 | 14 | | `"controlnet": "None",` |
| 15 | 15 | | `"unet_dim": 4,` |
| 16 | 16 | | `"device": "cuda",` |
| 17 | | - | `"cache_dir": "/ceph/hdd/staff/charpent/.cache/` |
| | 17 | + | `"cache_dir": "/ceph/hdd/staff/charpent/.cache/modelsh3ybd3wx",` |
| 18 | 18 | | `"batch_size": 1,` |
| 19 | 19 | | `"tokenizer": "GPT2TokenizerFast(name_or_path='facebook/opt-125m', vocab_size=50265, model_max_length=1000000000000000019884624838656, is_fast=True, padding_side='right', truncation_side='right', special_tokens={'bos_token': '</s>', 'eos_token': '</s>', 'unk_token': '</s>', 'pad_token': '<pad>'}, clean_up_tokenization_spaces=True), added_tokens_decoder={\n\t1: AddedToken(\"<pad>\", rstrip=False, lstrip=False, single_word=False, normalized=True, special=True),\n\t2: AddedToken(\"</s>\", rstrip=False, lstrip=False, single_word=False, normalized=True, special=True),\n}",` |
| 20 | 20 | | `"task": "text_text_generation",` |
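The only key this hunk changes is `cache_dir`. The sketch below shows one way to confirm that programmatically by comparing two parsed configs. The helper `changed_keys` and the sample dictionaries are illustrative only, and the old `cache_dir` value is a placeholder because it is truncated in the diff.

```python
import json
from typing import Any, Dict, Tuple


def changed_keys(old: Dict[str, Any], new: Dict[str, Any]) -> Dict[str, Tuple[Any, Any]]:
    """Map each key whose value differs between the configs to (old, new).

    A key that is absent on one side is reported with None for that side.
    """
    diff: Dict[str, Tuple[Any, Any]] = {}
    for key in sorted(set(old) | set(new)):
        if key not in old or key not in new or old[key] != new[key]:
            diff[key] = (old.get(key), new.get(key))
    return diff


# Illustrative subset of smash_config.json; "<old cache dir>" is a placeholder.
old_cfg = json.loads(
    '{"device": "cuda", "cache_dir": "<old cache dir>", "batch_size": 1}'
)
new_cfg = json.loads(
    '{"device": "cuda", '
    '"cache_dir": "/ceph/hdd/staff/charpent/.cache/modelsh3ybd3wx", '
    '"batch_size": 1}'
)

print(changed_keys(old_cfg, new_cfg))
```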