Upload folder using huggingface_hub (#2)

- 86ce1a2a5663a9a94ea04f6e92e6eabd40efc0d4f1fb6c1a57c376231a028fe4 (ba2303a752455fdcdb11ec0336a6c8899918ed38)

- 6eb3b41788093b0d32fb4be44bd7f8a17d318c6a22b6d7c791bc48944d169e77 (1c7bacc6caf1c89be19a856127146990a4371b39)

- config.json +1 -1

- plots.png +0 -0

- smash_config.json +1 -1

config.json

CHANGED

    @@ -1,5 +1,5 @@
     {
    -  "_name_or_path": "/tmp/
    +  "_name_or_path": "/tmp/tmpxbmzkwit",
       "architectures": [
         "LlamaForCausalLM"
       ],

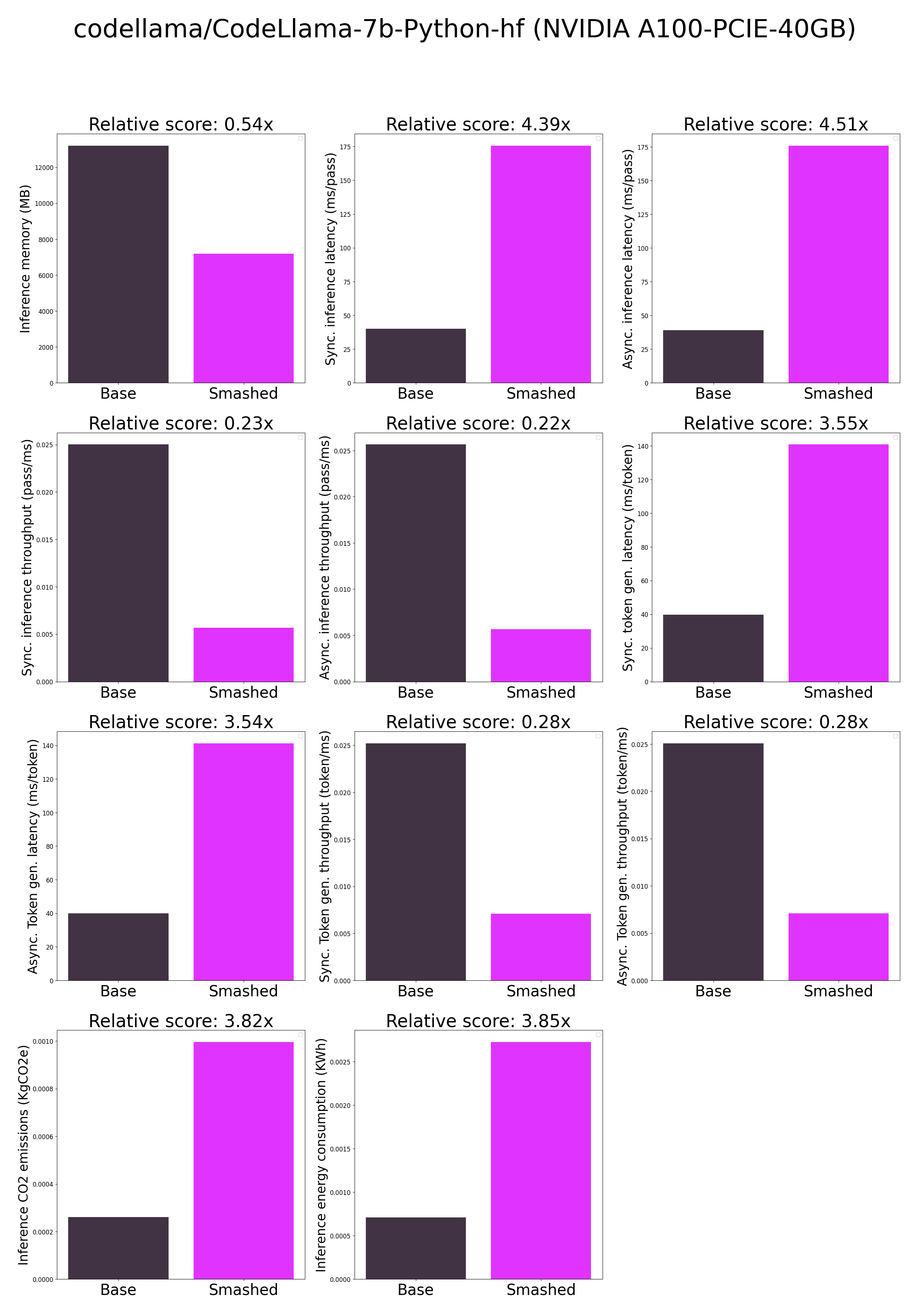

plots.png

CHANGED


smash_config.json

CHANGED

    @@ -8,7 +8,7 @@
       "compilers": "None",
       "task": "text_text_generation",
       "device": "cuda",
    -  "cache_dir": "/ceph/hdd/staff/charpent/.cache/
    +  "cache_dir": "/ceph/hdd/staff/charpent/.cache/modelsh2vtbccq",
       "batch_size": 1,
       "model_name": "codellama/CodeLlama-7b-Python-hf",
       "pruning_ratio": 0.0,
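Both hunks in this commit change a single machine-specific path (`_name_or_path` in config.json, `cache_dir` in smash_config.json). As a rough illustration of how such a one-key change could be spotted before uploading, here is a minimal Python sketch. The helper `changed_keys` is hypothetical and not part of huggingface_hub. The "before" value is a placeholder, since the old path is truncated in the diff above.

```python
import json


def changed_keys(old: dict, new: dict) -> dict:
    """Return {key: (old_value, new_value)} for top-level keys whose values differ."""
    keys = sorted(set(old) | set(new))
    return {k: (old.get(k), new.get(k)) for k in keys if old.get(k) != new.get(k)}


# Hypothetical "before" config: the real old cache_dir is truncated in the diff,
# so a placeholder string stands in for it.
old_cfg = json.loads(
    '{"device": "cuda", "cache_dir": "<old cache dir>", "batch_size": 1}'
)
# "After" values copied from the smash_config.json hunk.
new_cfg = json.loads(
    '{"device": "cuda", '
    '"cache_dir": "/ceph/hdd/staff/charpent/.cache/modelsh2vtbccq", '
    '"batch_size": 1}'
)

diff = changed_keys(old_cfg, new_cfg)
for key, (before, after) in diff.items():
    print(f"{key}: {before!r} -> {after!r}")
```

The sketch compares top-level keys only. Nested objects would need a recursive comparison.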