Add LoRa_German_ORPO checkpoint-12750

- QLoRa/adapter_config.json +43 -0

- QLoRa/adapter_model.safetensors +3 -0

- QLoRa/all_results.json +7 -0

- QLoRa/special_tokens_map.json +24 -0

- QLoRa/tokenizer.model +3 -0

- QLoRa/tokenizer_config.json +45 -0

- QLoRa/train_results.json +7 -0

- QLoRa/trainer_log.jsonl +0 -0

- QLoRa/trainer_state.json +0 -0

- QLoRa/training_args.bin +3 -0

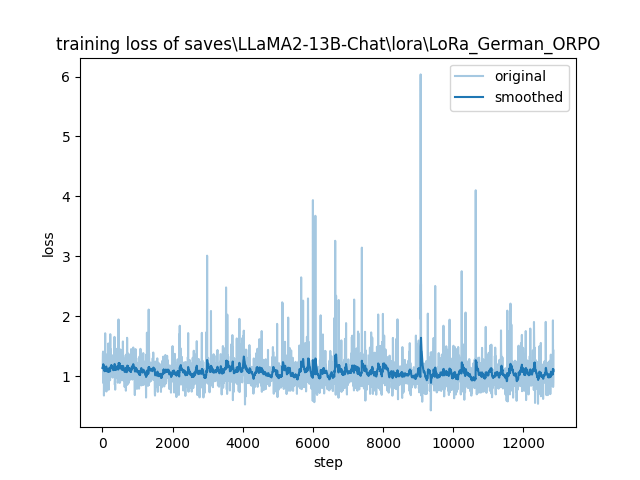

- QLoRa/training_loss.png +0 -0

QLoRa/adapter_config.json

ADDED

@@ -0,0 +1,43 @@
+{
+  "alpha_pattern": {},
+  "auto_mapping": null,
+  "base_model_name_or_path": "C:\\Llama-2-13b-chat-hf",
+  "bias": "none",
+  "fan_in_fan_out": false,
+  "inference_mode": true,
+  "init_lora_weights": true,
+  "layer_replication": null,
+  "layers_pattern": null,
+  "layers_to_transform": null,
+  "loftq_config": {},
+  "lora_alpha": 1024,
+  "lora_dropout": 0.15,
+  "megatron_config": null,
+  "megatron_core": "megatron.core",
+  "modules_to_save": [
+    "all"
+  ],
+  "peft_type": "LORA",
+  "r": 512,
+  "rank_pattern": {},
+  "revision": null,
+  "target_modules": [
+    "model.layers.39.self_attn.k_proj",
+    "model.layers.19.self_attn.q_proj",
+    "model.layers.39.self_attn.o_proj",
+    "model.layers.19.mlp.down_proj",
+    "model.layers.19.mlp.up_proj",
+    "model.layers.19.mlp.gate_proj",
+    "model.layers.19.self_attn.v_proj",
+    "model.layers.39.mlp.down_proj",
+    "model.layers.39.self_attn.v_proj",
+    "model.layers.39.self_attn.q_proj",
+    "model.layers.39.mlp.up_proj",
+    "model.layers.19.self_attn.o_proj",
+    "model.layers.39.mlp.gate_proj",
+    "model.layers.19.self_attn.k_proj"
+  ],
+  "task_type": "CAUSAL_LM",
+  "use_dora": false,
+  "use_rslora": true
+}
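The adapter config sets `use_rslora` to true together with `r = 512` and `lora_alpha = 1024`. As a rough illustration of what that combination implies, the sketch below computes the scaling factor applied to the low-rank update, assuming PEFT's documented behaviour of using `lora_alpha / sqrt(r)` for rank-stabilized LoRA and `lora_alpha / r` otherwise. The helper name `lora_scaling` is made up for this example and is not part of any library.

```python
import math

# Values copied from QLoRa/adapter_config.json in this commit.
r = 512
lora_alpha = 1024
use_rslora = True


def lora_scaling(lora_alpha: float, r: int, use_rslora: bool) -> float:
    """Return the factor that multiplies the low-rank update B @ A.

    Hypothetical helper: rank-stabilized LoRA divides by sqrt(r),
    classic LoRA divides by r.
    """
    if use_rslora:
        return lora_alpha / math.sqrt(r)
    return lora_alpha / r


scaling = lora_scaling(lora_alpha, r, use_rslora)
classic = lora_scaling(lora_alpha, r, False)

print(f"rsLoRA scaling:  {scaling:.2f}")  # 45.25
print(f"classic scaling: {classic:.2f}")  # 2.00
```

Under these assumptions the rank-stabilized factor is roughly 22 times larger than the classic one for the same `lora_alpha`, which is worth keeping in mind when comparing this adapter with runs that did not enable `use_rslora`.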

QLoRa/adapter_model.safetensors

ADDED

@@ -0,0 +1,3 @@
+version https://git-lfs.github.com/spec/v1
+oid sha256:587da53f702cedce10d137903fad822cc0ff44dfc5b1845cb8c0893964f539c7
+size 400559880
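The three lines above are a Git LFS pointer, not the weights themselves. The sketch below parses such a pointer and, as a sanity check, compares the recorded size with a back-of-the-envelope estimate of the LoRA tensor sizes. The estimate relies on assumptions that are not stated in this commit: Llama-2-13B shapes (`hidden_size = 5120`, `intermediate_size = 13824`), 32-bit storage, and only the 14 listed projection modules (layers 19 and 39) contributing tensors. `parse_lfs_pointer` is a hypothetical helper written for this example.

```python
POINTER = """\
version https://git-lfs.github.com/spec/v1
oid sha256:587da53f702cedce10d137903fad822cc0ff44dfc5b1845cb8c0893964f539c7
size 400559880
"""


def parse_lfs_pointer(text: str) -> dict:
    """Split a Git LFS pointer into its version, hash algorithm, digest and size."""
    fields = dict(line.split(" ", 1) for line in text.splitlines() if line)
    algo, digest = fields["oid"].split(":", 1)
    return {
        "version": fields["version"],
        "algo": algo,
        "digest": digest,
        "size": int(fields["size"]),
    }


pointer = parse_lfs_pointer(POINTER)

# Assumed Llama-2-13B dimensions (not given in the commit itself).
HIDDEN, INTERMEDIATE = 5120, 13824
R = 512      # "r" from adapter_config.json
LAYERS = 2   # layers 19 and 39 in target_modules

# Each adapted linear layer adds A (r x in) and B (out x r): r * (in + out) values.
attn = 4 * R * (HIDDEN + HIDDEN)        # q_proj, k_proj, v_proj, o_proj
mlp = 3 * R * (HIDDEN + INTERMEDIATE)   # gate_proj, up_proj, down_proj
lora_params = LAYERS * (attn + mlp)
fp32_bytes = lora_params * 4            # assuming 4 bytes per value

print(pointer["algo"], pointer["size"])
print("estimated LoRA parameters:", lora_params)
print("bytes not explained by the estimate:", pointer["size"] - fp32_bytes)
```

If the assumptions hold, the estimate lands within a few kilobytes of the recorded size, which would be consistent with a small file header plus 32-bit LoRA matrices. This is an inference from the numbers, not something the commit states.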

QLoRa/all_results.json

ADDED

@@ -0,0 +1,7 @@
+{
+  "epoch": 1.0,
+  "train_loss": 1.0734075052920875,
+  "train_runtime": 27866.917,
+  "train_samples_per_second": 0.461,
+  "train_steps_per_second": 0.461
+}
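A few derived figures make these metrics easier to read. The sketch below assumes `train_runtime` is in seconds (as the Hugging Face `Trainer` reports it) and that the two throughput values are rounded to three decimals, so the step count is only approximate.

```python
import json

# Contents of QLoRa/all_results.json as added in this commit.
RESULTS = json.loads("""
{
  "epoch": 1.0,
  "train_loss": 1.0734075052920875,
  "train_runtime": 27866.917,
  "train_samples_per_second": 0.461,
  "train_steps_per_second": 0.461
}
""")

# Wall-clock duration in hours, assuming the runtime is given in seconds.
hours = RESULTS["train_runtime"] / 3600

# Rough number of optimizer steps; the rate is rounded, so treat this as an estimate.
approx_steps = RESULTS["train_runtime"] * RESULTS["train_steps_per_second"]

# Samples consumed per optimizer step (effective batch size).
samples_per_step = (
    RESULTS["train_samples_per_second"] / RESULTS["train_steps_per_second"]
)

print(f"runtime: {hours:.2f} h")
print(f"approximate steps: {approx_steps:.0f}")
print(f"samples per step: {samples_per_step:.1f}")
```

The estimated step count is in the same range as the checkpoint number in the commit title, and equal sample and step rates suggest an effective batch size of 1, though both readings depend on the rounding of the reported rates.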

QLoRa/special_tokens_map.json

ADDED

@@ -0,0 +1,24 @@
+{
+  "bos_token": {
+    "content": "<s>",
+    "lstrip": false,
+    "normalized": false,
+    "rstrip": false,
+    "single_word": false
+  },
+  "eos_token": {
+    "content": "</s>",
+    "lstrip": false,
+    "normalized": false,
+    "rstrip": false,
+    "single_word": false
+  },
+  "pad_token": "</s>",
+  "unk_token": {
+    "content": "<unk>",
+    "lstrip": false,
+    "normalized": false,
+    "rstrip": false,
+    "single_word": false
+  }
+}

QLoRa/tokenizer.model

ADDED

@@ -0,0 +1,3 @@
+version https://git-lfs.github.com/spec/v1
+oid sha256:9e556afd44213b6bd1be2b850ebbbd98f5481437a8021afaf58ee7fb1818d347
+size 499723

QLoRa/tokenizer_config.json

ADDED

@@ -0,0 +1,45 @@
+{
+  "add_bos_token": true,
+  "add_eos_token": false,
+  "add_prefix_space": true,
+  "added_tokens_decoder": {
+    "0": {
+      "content": "<unk>",
+      "lstrip": false,
+      "normalized": false,
+      "rstrip": false,
+      "single_word": false,
+      "special": true
+    },
+    "1": {
+      "content": "<s>",
+      "lstrip": false,
+      "normalized": false,
+      "rstrip": false,
+      "single_word": false,
+      "special": true
+    },
+    "2": {
+      "content": "</s>",
+      "lstrip": false,
+      "normalized": false,
+      "rstrip": false,
+      "single_word": false,
+      "special": true
+    }
+  },
+  "bos_token": "<s>",
+  "chat_template": "{% set system_message = 'Below is an instruction that describes a task. Write a response that appropriately completes the request.' %}{% if messages[0]['role'] == 'system' %}{% set system_message = messages[0]['content'] %}{% endif %}{% if system_message is defined %}{{ system_message }}{% endif %}{% for message in messages %}{% set content = message['content'] %}{% if message['role'] == 'user' %}{{ '### Instruction:\\n' + content + '\\n\\n### Response:\\n' }}{% elif message['role'] == 'assistant' %}{{ content + '</s>' + '\\n\\n' }}{% endif %}{% endfor %}",
+  "clean_up_tokenization_spaces": false,
+  "eos_token": "</s>",
+  "legacy": false,
+  "model_max_length": 1000000000000000019884624838656,
+  "pad_token": "</s>",
+  "padding_side": "right",
+  "sp_model_kwargs": {},
+  "spaces_between_special_tokens": false,
+  "split_special_tokens": false,
+  "tokenizer_class": "LlamaTokenizer",
+  "unk_token": "<unk>",
+  "use_default_system_prompt": false
+}
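The `chat_template` above is an Alpaca-style Jinja template. To make the resulting prompt layout easier to see, here is a hand-written plain-Python approximation of its logic. It is a sketch for illustration only, not the renderer that `transformers` uses, and `render_prompt` is a hypothetical name. One deliberate difference: the sketch tolerates an empty message list, whereas the template indexes `messages[0]` unconditionally.

```python
DEFAULT_SYSTEM = (
    "Below is an instruction that describes a task. "
    "Write a response that appropriately completes the request."
)


def render_prompt(messages: list[dict]) -> str:
    """Approximate the chat_template from tokenizer_config.json in plain Python."""
    system = DEFAULT_SYSTEM
    # A leading system message replaces the default system text.
    if messages and messages[0]["role"] == "system":
        system = messages[0]["content"]

    parts = [system]
    for message in messages:
        content = message["content"]
        if message["role"] == "user":
            parts.append("### Instruction:\n" + content + "\n\n### Response:\n")
        elif message["role"] == "assistant":
            parts.append(content + "</s>" + "\n\n")
        # Other roles (including "system") emit nothing inside the loop.
    return "".join(parts)


prompt = render_prompt([{"role": "user", "content": "Wie spät ist es?"}])
print(prompt)
```

Note that, as written, the template places no newline between the system text and the first `### Instruction:` header, so the two run together in the rendered prompt. The sketch reproduces that behaviour rather than correcting it.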

QLoRa/train_results.json

ADDED

@@ -0,0 +1,7 @@
+{
+  "epoch": 1.0,
+  "train_loss": 1.0734075052920875,
+  "train_runtime": 27866.917,
+  "train_samples_per_second": 0.461,
+  "train_steps_per_second": 0.461
+}

QLoRa/trainer_log.jsonl

ADDED

The diff for this file is too large to render.

QLoRa/trainer_state.json

ADDED

The diff for this file is too large to render.

QLoRa/training_args.bin

ADDED

@@ -0,0 +1,3 @@
+version https://git-lfs.github.com/spec/v1
+oid sha256:038cccee303d062803cc8d451d82e39e74232f258992d015af0b572785b91591
+size 5112

QLoRa/training_loss.png

ADDED
