Commit 236ae1f

Parent(s): d5bcf41

Upload 43 files

- README.md +52 -0

- assets/mplug_owl2_radar.png +0 -0

- config.json +176 -0

- configuration.json +1 -0

- generation_config.json +9 -0

- preprocessor_config.json +20 -0

- pytorch_model-1-of-33.bin +3 -0

- pytorch_model-10-of-33.bin +3 -0

- pytorch_model-11-of-33.bin +3 -0

- pytorch_model-12-of-33.bin +3 -0

- pytorch_model-13-of-33.bin +3 -0

- pytorch_model-14-of-33.bin +3 -0

- pytorch_model-15-of-33.bin +3 -0

- pytorch_model-16-of-33.bin +3 -0

- pytorch_model-17-of-33.bin +3 -0

- pytorch_model-18-of-33.bin +3 -0

- pytorch_model-19-of-33.bin +3 -0

- pytorch_model-2-of-33.bin +3 -0

- pytorch_model-20-of-33.bin +3 -0

- pytorch_model-21-of-33.bin +3 -0

- pytorch_model-22-of-33.bin +3 -0

- pytorch_model-23-of-33.bin +3 -0

- pytorch_model-24-of-33.bin +3 -0

- pytorch_model-25-of-33.bin +3 -0

- pytorch_model-26-of-33.bin +3 -0

- pytorch_model-27-of-33.bin +3 -0

- pytorch_model-28-of-33.bin +3 -0

- pytorch_model-29-of-33.bin +3 -0

- pytorch_model-3-of-33.bin +3 -0

- pytorch_model-30-of-33.bin +3 -0

- pytorch_model-31-of-33.bin +3 -0

- pytorch_model-32-of-33.bin +3 -0

- pytorch_model-33-of-33.bin +3 -0

- pytorch_model-4-of-33.bin +3 -0

- pytorch_model-5-of-33.bin +3 -0

- pytorch_model-6-of-33.bin +3 -0

- pytorch_model-7-of-33.bin +3 -0

- pytorch_model-8-of-33.bin +3 -0

- pytorch_model-9-of-33.bin +3 -0

- pytorch_model.bin.index.json +1 -0

- special_tokens_map.json +24 -0

- tokenizer.model +3 -0

- tokenizer_config.json +35 -0

README.md

ADDED

|

@@ -0,0 +1,52 @@

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

| 1 |

+

---

|

| 2 |

+

tasks:

|

| 3 |

+

|

| 4 |

+

- multimodal-dialogue

|

| 5 |

+

|

| 6 |

+

studios:

|

| 7 |

+

|

| 8 |

+

- damo/mPLUG-Owl

|

| 9 |

+

|

| 10 |

+

model-type:

|

| 11 |

+

|

| 12 |

+

- mplug-owl2

|

| 13 |

+

|

| 14 |

+

domain:

|

| 15 |

+

|

| 16 |

+

- multi-modal

|

| 17 |

+

|

| 18 |

+

frameworks:

|

| 19 |

+

|

| 20 |

+

- pytorch

|

| 21 |

+

|

| 22 |

+

backbone:

|

| 23 |

+

|

| 24 |

+

- transformer

|

| 25 |

+

|

| 26 |

+

containers:

|

| 27 |

+

|

| 28 |

+

license: apache-2.0

|

| 29 |

+

|

| 30 |

+

language:

|

| 31 |

+

|

| 32 |

+

- en

|

| 33 |

+

|

| 34 |

+

tags:

|

| 35 |

+

|

| 36 |

+

- transformer

|

| 37 |

+

- mPLUG

|

| 38 |

+

- Multimodal

|

| 39 |

+

- ChatGPT

|

| 40 |

+

- GPT

|

| 41 |

+

- Alibaba

|

| 42 |

+

|

| 43 |

+

---

|

| 44 |

+

|

| 45 |

+

# mPLUG-Owl2介绍

|

| 46 |

+

|

| 47 |

+

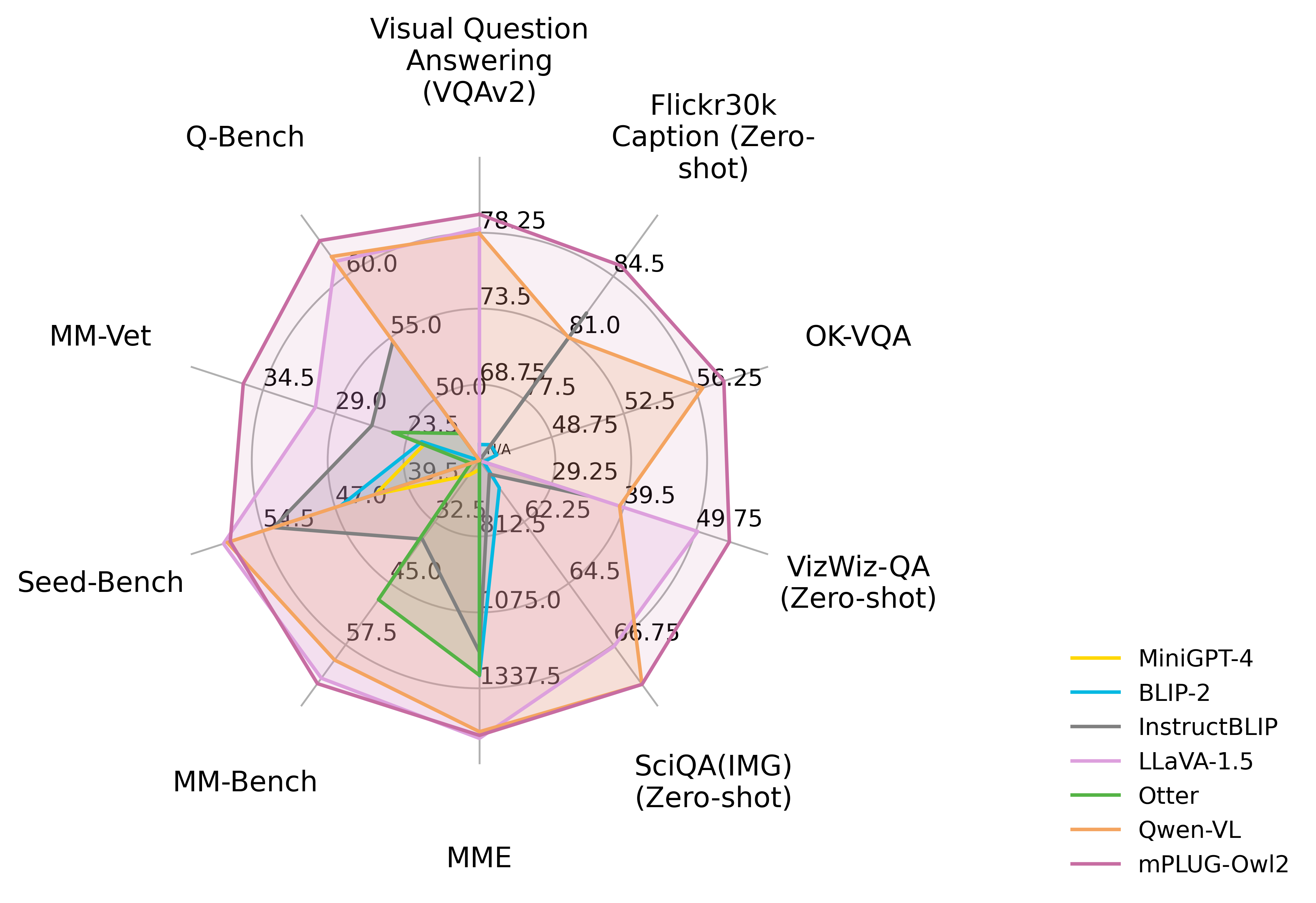

mPLUG-Owl2是一种面向多模态语言模型的模块化的训练范式。其能学习与语言空间相适应的视觉知识,并支持在多模态场景(支持图片、文本输入)下进行多轮对话。它涌现多图关系理解,场景文本理解和基于视觉的文档理解等能力。

|

| 48 |

+

|

| 49 |

+

## 模型描述

|

| 50 |

+

|

| 51 |

+

mPLUG-Owl2基于mPLUG-2模块化的思想,通过多阶段分别训练模型的视觉底座与语言模型,使其视觉知识能与预训练语言模型紧密协作,达到了显著优于主流多模态语言模型的效果。

|

| 52 |

+

|

assets/mplug_owl2_radar.png

ADDED


config.json

ADDED

@@ -0,0 +1,176 @@

{
  "attention_bias": false,
  "bos_token_id": 1,
  "eos_token_id": 2,
  "hidden_act": "silu",
  "hidden_size": 4096,
  "initializer_range": 0.02,
  "intermediate_size": 11008,
  "max_position_embeddings": 2048,
  "model_type": "mplug_owl2",
  "num_attention_heads": 32,
  "num_hidden_layers": 32,
  "num_key_value_heads": 32,
  "pretraining_tp": 1,
  "rms_norm_eps": 1e-06,
  "rope_scaling": null,
  "rope_theta": 10000.0,
  "tie_word_embeddings": false,
  "transformers_version": "4.28.1",
  "use_cache": true,
  "visual_config": {
    "visual_abstractor": {
      "_name_or_path": "",
      "add_cross_attention": false,
      "architectures": null,
      "attention_probs_dropout_prob": 0.0,
      "bad_words_ids": null,
      "begin_suppress_tokens": null,
      "bos_token_id": null,
      "chunk_size_feed_forward": 0,
      "cross_attention_hidden_size": null,
      "decoder_start_token_id": null,
      "diversity_penalty": 0.0,
      "do_sample": false,
      "early_stopping": false,
      "encoder_hidden_size": 1024,
      "encoder_no_repeat_ngram_size": 0,
      "eos_token_id": null,
      "exponential_decay_length_penalty": null,
      "finetuning_task": null,
      "forced_bos_token_id": null,
      "forced_eos_token_id": null,
      "grid_size": 32,
      "hidden_size": 1024,
      "id2label": {
        "0": "LABEL_0",
        "1": "LABEL_1"
      },
      "initializer_range": 0.02,
      "intermediate_size": 2816,
      "is_decoder": false,
      "is_encoder_decoder": false,
      "label2id": {
        "LABEL_0": 0,
        "LABEL_1": 1
      },
      "layer_norm_eps": 1e-06,
      "length_penalty": 1.0,
      "max_length": 20,
      "min_length": 0,
      "model_type": "mplug_owl_visual_abstract",
      "no_repeat_ngram_size": 0,
      "num_attention_heads": 16,
      "num_beam_groups": 1,
      "num_beams": 1,
      "num_hidden_layers": 6,
      "num_learnable_queries": 64,
      "num_return_sequences": 1,
      "output_attentions": false,
      "output_hidden_states": false,
      "output_scores": false,
      "pad_token_id": null,
      "prefix": null,
      "problem_type": null,
      "pruned_heads": {},
      "remove_invalid_values": false,
      "repetition_penalty": 1.0,
      "return_dict": true,
      "return_dict_in_generate": false,
      "sep_token_id": null,
      "suppress_tokens": null,
      "task_specific_params": null,
      "temperature": 1.0,
      "tf_legacy_loss": false,
      "tie_encoder_decoder": false,
      "tie_word_embeddings": true,
      "tokenizer_class": null,
      "top_k": 50,
      "top_p": 1.0,
      "torch_dtype": null,
      "torchscript": false,
      "transformers_version": "4.28.1",
      "typical_p": 1.0,
      "use_bfloat16": false
    },
    "visual_model": {
      "_name_or_path": "",
      "add_cross_attention": false,
      "architectures": null,
      "attention_dropout": 0.0,
      "bad_words_ids": null,
      "begin_suppress_tokens": null,
      "bos_token_id": null,
      "chunk_size_feed_forward": 0,
      "cross_attention_hidden_size": null,
      "decoder_start_token_id": null,
      "diversity_penalty": 0.0,
      "do_sample": false,
      "early_stopping": false,
      "encoder_no_repeat_ngram_size": 0,
      "eos_token_id": null,
      "exponential_decay_length_penalty": null,
      "finetuning_task": null,
      "forced_bos_token_id": null,
      "forced_eos_token_id": null,
      "hidden_act": "quick_gelu",
      "hidden_size": 1024,
      "id2label": {
        "0": "LABEL_0",
        "1": "LABEL_1"
      },
      "image_size": 448,
      "initializer_factor": 1.0,
      "initializer_range": 0.02,
      "intermediate_size": 4096,
      "is_decoder": false,
      "is_encoder_decoder": false,
      "label2id": {
        "LABEL_0": 0,
        "LABEL_1": 1
      },
      "layer_norm_eps": 1e-06,
      "length_penalty": 1.0,
      "max_length": 20,
      "min_length": 0,
      "model_type": "mplug_owl_vision_model",
      "no_repeat_ngram_size": 0,
      "num_attention_heads": 16,
      "num_beam_groups": 1,
      "num_beams": 1,
      "num_channels": 3,
      "num_hidden_layers": 24,
      "num_return_sequences": 1,
      "output_attentions": false,
      "output_hidden_states": false,
      "output_scores": false,
      "pad_token_id": null,
      "patch_size": 14,
      "prefix": null,
      "problem_type": null,
      "projection_dim": 768,
      "pruned_heads": {},
      "remove_invalid_values": false,
      "repetition_penalty": 1.0,
      "return_dict": true,
      "return_dict_in_generate": false,
      "sep_token_id": null,
      "suppress_tokens": null,
      "task_specific_params": null,
      "temperature": 1.0,
      "tf_legacy_loss": false,
      "tie_encoder_decoder": false,
      "tie_word_embeddings": true,
      "tokenizer_class": null,
      "top_k": 50,
      "top_p": 1.0,
      "torch_dtype": null,
      "torchscript": false,
      "transformers_version": "4.28.1",
      "typical_p": 1.0,
      "use_bfloat16": false,
      "use_flash_attn": false
    }
  },
  "vocab_size": 32000
}
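A few derived sizes are implicit in the `config.json` values above. The sketch below recomputes them and rests on several assumptions. It assumes a standard ViT patch layout: one token per 14×14 patch of the 448×448 input, ignoring any class token. It assumes the visual abstractor maps those patch features onto its 64 learnable queries. The decoder estimate counts only a plain LLaMA-style block (q/k/v/o projections without bias, a three-matrix SiLU MLP, two RMSNorms per layer) plus the untied embedding and output matrices. It ignores the vision encoder, the abstractor, and any extra modality-specific weights the mPLUG-Owl2 architecture may add. The helper names are hypothetical.

```python
import json

# Hypothetical trimmed copy of the config.json values shown above.
CONFIG = json.loads("""
{
  "hidden_size": 4096,
  "intermediate_size": 11008,
  "num_attention_heads": 32,
  "num_hidden_layers": 32,
  "num_key_value_heads": 32,
  "vocab_size": 32000,
  "tie_word_embeddings": false,
  "visual_config": {
    "visual_abstractor": {"num_learnable_queries": 64},
    "visual_model": {"image_size": 448, "patch_size": 14}
  }
}
""")


def visual_token_counts(cfg: dict) -> tuple[int, int]:
    """Return (patch tokens from the ViT, query tokens after the abstractor)."""
    vm = cfg["visual_config"]["visual_model"]
    patches_per_side = vm["image_size"] // vm["patch_size"]  # 448 // 14 = 32
    patch_tokens = patches_per_side ** 2                      # 32 * 32 = 1024
    queries = cfg["visual_config"]["visual_abstractor"]["num_learnable_queries"]
    return patch_tokens, queries


def decoder_param_estimate(cfg: dict) -> int:
    """Rough parameter count of a plain LLaMA-style decoder (no biases)."""
    h = cfg["hidden_size"]
    head_dim = h // cfg["num_attention_heads"]
    kv_dim = cfg["num_key_value_heads"] * head_dim
    attn = h * h + 2 * h * kv_dim + h * h      # q, k, v, o projections
    mlp = 3 * h * cfg["intermediate_size"]     # gate, up, down projections
    norms = 2 * h                              # two RMSNorm weight vectors
    per_layer = attn + mlp + norms
    embed = cfg["vocab_size"] * h
    lm_head = 0 if cfg["tie_word_embeddings"] else embed
    final_norm = h
    return cfg["num_hidden_layers"] * per_layer + embed + lm_head + final_norm


if __name__ == "__main__":
    print("head_dim:", CONFIG["hidden_size"] // CONFIG["num_attention_heads"])
    print("visual tokens (patches, queries):", visual_token_counts(CONFIG))
    print("decoder params (approx.):", decoder_param_estimate(CONFIG))
```

Under these assumptions each image yields 1,024 patch features, which the abstractor would condense to 64 query tokens for the language model. The plain decoder comes to about 6.74 billion parameters.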

configuration.json

ADDED

@@ -0,0 +1 @@

{"framework":"Pytorch","task":"multimodal-dialogue"}

generation_config.json

ADDED

@@ -0,0 +1,9 @@

{
  "bos_token_id": 1,
  "eos_token_id": 2,
  "max_length": 4096,
  "pad_token_id": 0,
  "temperature": 0.9,
  "top_p": 0.6,
  "transformers_version": "4.31.0"
}
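`generation_config.json` stores only default decoding parameters. To my understanding, in Hugging Face `transformers` the `temperature` and `top_p` values take effect only when sampling is enabled (`do_sample=True`), and this file does not set that flag. The stdlib sketch below shows what temperature scaling followed by top-p (nucleus) filtering does to a next-token distribution, using the defaults above. The function name and the toy logits are hypothetical. Library implementations may differ in how they handle ties and the exact `top_p` boundary.

```python
import math
import random


def nucleus_sample(logits, temperature=0.9, top_p=0.6, rng=random):
    """Temperature-scaled top-p sampling over a list of raw logits.

    Returns (sampled_index, kept_indices).
    """
    # 1. Temperature scaling, then a numerically stable softmax.
    scaled = [x / temperature for x in logits]
    m = max(scaled)
    exps = [math.exp(x - m) for x in scaled]
    total = sum(exps)
    probs = [e / total for e in exps]

    # 2. Keep the smallest set of most likely tokens whose mass reaches top_p.
    order = sorted(range(len(probs)), key=lambda i: probs[i], reverse=True)
    kept, mass = [], 0.0
    for i in order:
        kept.append(i)
        mass += probs[i]
        if mass >= top_p:
            break

    # 3. Draw one token from the kept set (choices() renormalises the weights).
    weights = [probs[i] for i in kept]
    choice = rng.choices(kept, weights=weights, k=1)[0]
    return choice, kept
```

Take the toy logits `[2.0, 1.5, 0.5, -1.0]`. The most likely token alone has about 56% of the probability mass, which is below 0.6, so the second token is also kept. The other two are never sampled.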

preprocessor_config.json

ADDED

@@ -0,0 +1,20 @@

{
  "crop_size": 448,
  "do_center_crop": true,
  "do_normalize": true,
  "do_resize": true,
  "feature_extractor_type": "CLIPFeatureExtractor",
  "image_mean": [
    0.48145466,
    0.4578275,
    0.40821073
  ],
  "image_std": [
    0.26862954,
    0.26130258,
    0.27577711
  ],
  "resample": 3,
  "size": 448
}
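The preprocessor settings describe a CLIP-style pipeline: resize, center-crop to 448×448, then normalize each channel with the listed mean and standard deviation. `resample: 3` corresponds to Pillow's bicubic filter. The pure-Python sketch below covers only the geometry and the per-pixel arithmetic. It assumes two things: an integer `size` means the shorter image side is scaled to 448 while keeping the aspect ratio (the usual CLIP convention), and pixel values are first rescaled from 0..255 to 0..1. The function names are hypothetical, and the real image processor's rounding may differ by a pixel.

```python
IMAGE_MEAN = (0.48145466, 0.4578275, 0.40821073)
IMAGE_STD = (0.26862954, 0.26130258, 0.27577711)
SIZE = 448       # "size": assumed to be the shorter side after resizing
CROP_SIZE = 448  # "crop_size"


def resized_dims(width: int, height: int, size: int = SIZE) -> tuple[int, int]:
    """Scale so the shorter side equals `size`, keeping the aspect ratio."""
    if width <= height:
        return size, round(height * size / width)
    return round(width * size / height), size


def center_crop_box(width: int, height: int,
                    crop: int = CROP_SIZE) -> tuple[int, int, int, int]:
    """(left, top, right, bottom) of a centered crop x crop window."""
    left = (width - crop) // 2
    top = (height - crop) // 2
    return left, top, left + crop, top + crop


def normalize_pixel(rgb: tuple[int, int, int]) -> tuple[float, float, float]:
    """Rescale 0..255 to 0..1, then apply (x - mean) / std per channel."""
    return tuple((v / 255.0 - m) / s
                 for v, m, s in zip(rgb, IMAGE_MEAN, IMAGE_STD))
```

For a 1280×720 photo, the shorter side becomes 448 and the image is resized to 796×448. The crop box `(174, 0, 622, 448)` then cuts the central 448×448 square.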

|

pytorch_model-1-of-33.bin

ADDED

@@ -0,0 +1,3 @@

version https://git-lfs.github.com/spec/v1
oid sha256:3eda171a965e0f4f98941f0a45b2322d9f046f687205d0242fa6f45eefad04bc
size 471896315

pytorch_model-10-of-33.bin

ADDED

@@ -0,0 +1,3 @@

version https://git-lfs.github.com/spec/v1
oid sha256:963430967add27b3cd84b55cc5d52a11fa1676450c790be813044fc59c43c3c5
size 471896315

pytorch_model-11-of-33.bin

ADDED

@@ -0,0 +1,3 @@

version https://git-lfs.github.com/spec/v1
oid sha256:182c23f36504e850c910a373a01372c52f0151f461e531d24a2b6e33a5b0b05a
size 471896315

pytorch_model-12-of-33.bin

ADDED

@@ -0,0 +1,3 @@

version https://git-lfs.github.com/spec/v1
oid sha256:7abefa632c598f0eff7dc0b0bcba31debdd02850f65afc775e1b35b2b756004f
size 471896315

pytorch_model-13-of-33.bin

ADDED

@@ -0,0 +1,3 @@

version https://git-lfs.github.com/spec/v1
oid sha256:9bbb00fc9a0375bd56e3817c25f950c1fbb9fd37da340e6a1e9ae19d856cdb34
size 471896315

pytorch_model-14-of-33.bin

ADDED

@@ -0,0 +1,3 @@

version https://git-lfs.github.com/spec/v1
oid sha256:1abf014e4e312136130bedfb0a77a623df76809dce37602ac072e655db353672
size 471896315

pytorch_model-15-of-33.bin

ADDED

@@ -0,0 +1,3 @@

version https://git-lfs.github.com/spec/v1
oid sha256:c843bda69c0324a1ea56b41b99098a358c4925e9211847a446a29d52d95196cf
size 471896315

pytorch_model-16-of-33.bin

ADDED

@@ -0,0 +1,3 @@

version https://git-lfs.github.com/spec/v1
oid sha256:32afd10b95a8f5871a6f9adc420772fb4288eec8cab80811b90e553aa08a1096
size 471896315

pytorch_model-17-of-33.bin

ADDED

@@ -0,0 +1,3 @@

version https://git-lfs.github.com/spec/v1
oid sha256:b2bcfbf9937a670b675f2a3cade55d4c23b1ee6342c2e330a4f107c882db0fe8
size 471896315

pytorch_model-18-of-33.bin

ADDED

@@ -0,0 +1,3 @@

version https://git-lfs.github.com/spec/v1
oid sha256:220b47b918bca9b929fda0598d941101efba77c2be23d6f694b5369c12d7ad5e
size 471896315

pytorch_model-19-of-33.bin

ADDED

@@ -0,0 +1,3 @@

version https://git-lfs.github.com/spec/v1
oid sha256:c7f4d830c36a82131725760e5a8e1535cbdba217aeb5ed1ff5dab801ece4bd6c
size 471896315

pytorch_model-2-of-33.bin

ADDED

@@ -0,0 +1,3 @@

version https://git-lfs.github.com/spec/v1
oid sha256:07ebd22419c925cd4528d3182fa976694da76116d2896399f392134a4ffc4f60
size 471896315

pytorch_model-20-of-33.bin

ADDED

@@ -0,0 +1,3 @@

version https://git-lfs.github.com/spec/v1
oid sha256:bf47d1d758293babcb2cfd6242c1ff3fa179bc1e27f93efb83a4f1ed285886f3
size 471896315

pytorch_model-21-of-33.bin

ADDED

@@ -0,0 +1,3 @@

version https://git-lfs.github.com/spec/v1
oid sha256:6ecdc86f5b55942bd703518a82edc1b99b146e797cb911e6e1b9f392731a32a7
size 471896315

pytorch_model-22-of-33.bin

ADDED

@@ -0,0 +1,3 @@

version https://git-lfs.github.com/spec/v1
oid sha256:01b61398968246dad32f9a79729bb8b17ff7fbbc54df6df06d5a9c4a767f12e0
size 471896315

pytorch_model-23-of-33.bin

ADDED

@@ -0,0 +1,3 @@

version https://git-lfs.github.com/spec/v1
oid sha256:94014e3c353d9e3a52afd6a9009a63fcfc9445545ca36ee73c3dfd69c024a3f4
size 471896315

pytorch_model-24-of-33.bin

ADDED

@@ -0,0 +1,3 @@

version https://git-lfs.github.com/spec/v1
oid sha256:b73c4c64ab429f85e92f2bf03f28c7450e56823bcdf5f3596ba4fcf63c86bd96
size 471896315

pytorch_model-25-of-33.bin

ADDED

@@ -0,0 +1,3 @@

version https://git-lfs.github.com/spec/v1
oid sha256:b05ec589fe7d22e270b7a11fd18974610a65db598aee16633a9f16a156b26701
size 471896315

pytorch_model-26-of-33.bin

ADDED

@@ -0,0 +1,3 @@

version https://git-lfs.github.com/spec/v1
oid sha256:c0053e94bfb2a49d60b9b6af5e3337df017cc284a8d3f3c3c724ac972dc42a92
size 471896315

pytorch_model-27-of-33.bin

ADDED

@@ -0,0 +1,3 @@

version https://git-lfs.github.com/spec/v1
oid sha256:33d49791d532ca66d65002aaa0aa0f7fc52708ee28503804d845ffeb77c7842b
size 471896315

pytorch_model-28-of-33.bin

ADDED

@@ -0,0 +1,3 @@

version https://git-lfs.github.com/spec/v1
oid sha256:306cb650c62110e8b2e170659280c8d37569141611fa8ea69fe0c504bcac69c0
size 471896315

pytorch_model-29-of-33.bin

ADDED

@@ -0,0 +1,3 @@

version https://git-lfs.github.com/spec/v1
oid sha256:51abf23024b4b6d219cbeb907a314faf4de60700936d7a879943d537335c9131
size 471896315

pytorch_model-3-of-33.bin

ADDED

@@ -0,0 +1,3 @@

version https://git-lfs.github.com/spec/v1
oid sha256:d1d4c4fbb39a54e3a8bf79643ab7e74b80231c3f52504421ff8bca44dc9abb36
size 471896315

pytorch_model-30-of-33.bin

ADDED

@@ -0,0 +1,3 @@

version https://git-lfs.github.com/spec/v1
oid sha256:5a2bccfdf1a1771248aef72d1f99babedfe6ef37a04a12787d08ef4318940c11
size 471896315

pytorch_model-31-of-33.bin

ADDED

@@ -0,0 +1,3 @@

version https://git-lfs.github.com/spec/v1
oid sha256:f4c897a84a550da60e6ab2315b9c62e7faaa0e762b8d29ecfb567d9d81c2b439
size 471896315

pytorch_model-32-of-33.bin

ADDED

@@ -0,0 +1,3 @@

version https://git-lfs.github.com/spec/v1
oid sha256:18ad53e6f4961533b809a24e834496218c9b94d06c106b2799fcf4fe0d749f58
size 471896315

pytorch_model-33-of-33.bin

ADDED

@@ -0,0 +1,3 @@

version https://git-lfs.github.com/spec/v1
oid sha256:6c5d2ac6af938bedd67cd5c53ef78a9d32401971e96d88ebf81168af56828258
size 1295321821

pytorch_model-4-of-33.bin

ADDED

@@ -0,0 +1,3 @@

version https://git-lfs.github.com/spec/v1
oid sha256:5c4bfa35eb4a736c02e3d82d6e265b6eceebdaf012b81d02c64bcd2077e54bd4
size 471896315

pytorch_model-5-of-33.bin

ADDED

@@ -0,0 +1,3 @@

version https://git-lfs.github.com/spec/v1
oid sha256:f26905a37688e3a1676f9a0f45ed325042691a336cc46cc0fd3c5e4aadfe62cc
size 471896315

pytorch_model-6-of-33.bin

ADDED

@@ -0,0 +1,3 @@

version https://git-lfs.github.com/spec/v1
oid sha256:67857b8c240e5a4e6b6486f1ef86b8b86d48c014d39020a9364761bc286ddbbd
size 471896315

pytorch_model-7-of-33.bin

ADDED

@@ -0,0 +1,3 @@

version https://git-lfs.github.com/spec/v1
oid sha256:b74098fe2053bbce4507160f4549cfde263c42246b9b15f87a2535e4f3979fd4
size 471896315

pytorch_model-8-of-33.bin

ADDED

@@ -0,0 +1,3 @@

version https://git-lfs.github.com/spec/v1
oid sha256:256484eef34f0326ca6af84922a66e1106a184da183d9a2b8ccf122f16009b8f
size 471896315

pytorch_model-9-of-33.bin

ADDED

@@ -0,0 +1,3 @@

version https://git-lfs.github.com/spec/v1
oid sha256:69c1424bc245b2882cb8d4f8cc33d8b13ee4619b08d0a5b004911f1a42432778
size 471896315
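Each `pytorch_model-*-of-33.bin` entry above is a Git LFS pointer, not the weights themselves. A pointer holds three lines: the spec version, the SHA-256 `oid` of the real file, and its size in bytes. Adding up the listed sizes (32 shards of 471,896,315 bytes plus 1,295,321,821 bytes for shard 33) gives 16,396,003,901 bytes, about 16.4 GB. The stdlib-only sketch below parses such a pointer and checks a downloaded shard against it. The function and constant names are hypothetical.

```python
import hashlib
import os


def parse_lfs_pointer(text: str) -> dict:
    """Parse the 'key value' lines of a Git LFS pointer file."""
    fields = {}
    for line in text.strip().splitlines():
        key, _, value = line.partition(" ")
        fields[key] = value
    algo, _, digest = fields["oid"].partition(":")
    return {"version": fields["version"], "algo": algo,
            "digest": digest, "size": int(fields["size"])}


def verify_file(path: str, pointer: dict, chunk_size: int = 1 << 20) -> bool:
    """Check a downloaded file's size and SHA-256 digest against a pointer."""
    if pointer["algo"] != "sha256" or os.path.getsize(path) != pointer["size"]:
        return False
    h = hashlib.sha256()
    with open(path, "rb") as f:
        # Stream in chunks so multi-GB shards never sit fully in memory.
        for chunk in iter(lambda: f.read(chunk_size), b""):
            h.update(chunk)
    return h.hexdigest() == pointer["digest"]


SHARD_1_POINTER = """\
version https://git-lfs.github.com/spec/v1
oid sha256:3eda171a965e0f4f98941f0a45b2322d9f046f687205d0242fa6f45eefad04bc
size 471896315
"""

# Shards 1-32 share one size; shard 33 is larger.
TOTAL_BYTES = 32 * 471_896_315 + 1_295_321_821
```

The size is checked before the hash, so a truncated download is rejected without reading the whole file.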

pytorch_model.bin.index.json

ADDED

@@ -0,0 +1 @@
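The contents of `pytorch_model.bin.index.json` are cut off here. Suppose it follows the usual Hugging Face sharded-checkpoint layout: a `metadata.total_size` entry plus a `weight_map` from each parameter name to the shard file that stores it. The sketch below then groups parameters by shard, so each of the 33 files needs to be opened only once. The example index, its parameter names and its `total_size` value are hypothetical.

```python
import json
from collections import defaultdict


def params_by_shard(index_json: str) -> dict[str, list[str]]:
    """Group parameter names by the shard file that stores them."""
    index = json.loads(index_json)
    groups = defaultdict(list)
    for param_name, shard_file in index["weight_map"].items():
        groups[shard_file].append(param_name)
    # Sort shards and names so the result is deterministic.
    return {shard: sorted(names) for shard, names in sorted(groups.items())}


# Hypothetical, heavily truncated example of an index file.
EXAMPLE_INDEX = json.dumps({
    "metadata": {"total_size": 123},
    "weight_map": {
        "model.embed_tokens.weight": "pytorch_model-1-of-33.bin",
        "model.layers.0.self_attn.q_proj.weight": "pytorch_model-2-of-33.bin",
        "model.layers.0.mlp.up_proj.weight": "pytorch_model-2-of-33.bin",
    },
})
```

Shard names sort as strings, so `-10-` comes before `-2-`, matching the order of the file list above.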