Upload folder using huggingface_hub

- README.md +65 -0

- all_results.json +12 -0

- config.json +30 -0

- eval_results.json +7 -0

- generation_config.json +6 -0

- model-00001-of-00002.safetensors +3 -0

- model-00002-of-00002.safetensors +3 -0

- model.safetensors.index.json +262 -0

- special_tokens_map.json +51 -0

- tokenizer.json +0 -0

- tokenizer_config.json +86 -0

- train_results.json +8 -0

- trainer_log.jsonl +0 -0

- trainer_state.json +0 -0

- training_args.bin +3 -0

- training_eval_loss.png +0 -0

- training_loss.png +0 -0

README.md

ADDED

@@ -0,0 +1,65 @@

+---
+library_name: transformers
+license: other
+base_model: llm-jp/llm-jp-3-3.7b-instruct
+tags:
+- llama-factory
+- full
+- generated_from_trainer
+model-index:
+- name: sft
+  results: []
+---
+
+<!-- This model card has been generated automatically according to the information the Trainer had access to. You
+should probably proofread and complete it, then remove this comment. -->
+
+# sft
+
+This model is a fine-tuned version of [llm-jp/llm-jp-3-3.7b-instruct](https://huggingface.co/llm-jp/llm-jp-3-3.7b-instruct) on the longwriter dataset.
+It achieves the following results on the evaluation set:
+- Loss: 0.7541
+
+## Model description
+
+More information needed
+
+## Intended uses & limitations
+
+More information needed
+
+## Training and evaluation data
+
+More information needed
+
+## Training procedure
+
+### Training hyperparameters
+
+The following hyperparameters were used during training:
+- learning_rate: 1e-05
+- train_batch_size: 2
+- eval_batch_size: 1
+- seed: 42
+- distributed_type: multi-GPU
+- num_devices: 4
+- total_train_batch_size: 8
+- total_eval_batch_size: 4
+- optimizer: Use OptimizerNames.ADAMW_BNB with betas=(0.9,0.999) and epsilon=1e-08 and optimizer_args=No additional optimizer arguments
+- lr_scheduler_type: cosine
+- lr_scheduler_warmup_ratio: 0.1
+- num_epochs: 2.0
+
+### Training results
+
+| Training Loss | Epoch | Step | Validation Loss |
+|:-------------:|:------:|:----:|:---------------:|
+| 0.7184 | 1.2626 | 500 | 0.7673 |
+
+
+### Framework versions
+
+- Transformers 4.46.1
+- Pytorch 2.5.1+cu124
+- Datasets 3.1.0
+- Tokenizers 0.20.3
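The hyperparameters in the model card can be cross-checked against each other. The sketch below assumes the usual relation (total batch = per-device batch × number of devices × gradient accumulation steps), which the card does not state explicitly, and uses the single logged row (step 500 at epoch 1.2626) to estimate steps per epoch. The variable names are illustrative only.

```python
# Hyperparameters as reported in the model card.
per_device_train_batch_size = 2
num_devices = 4
total_train_batch_size = 8

# Assumption: total = per-device batch * devices * gradient accumulation steps.
grad_accum_steps = total_train_batch_size // (per_device_train_batch_size * num_devices)

# The training results table logs step 500 at epoch 1.2626.
steps_per_epoch = 500 / 1.2626
approx_samples_per_epoch = steps_per_epoch * total_train_batch_size

print("gradient accumulation steps:", grad_accum_steps)
print("approx. optimizer steps per epoch:", round(steps_per_epoch))
print("approx. training samples per epoch:", round(approx_samples_per_epoch))
```

Under that assumption the run used no gradient accumulation, and one epoch is roughly 396 optimizer steps.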

all_results.json

ADDED

@@ -0,0 +1,12 @@

+{
+  "epoch": 2.0,
+  "eval_loss": 0.7540631294250488,
+  "eval_runtime": 1.57,
+  "eval_samples_per_second": 20.382,
+  "eval_steps_per_second": 5.096,
+  "total_flos": 29590009675776.0,
+  "train_loss": 0.7852752542104384,
+  "train_runtime": 1619.8348,
+  "train_samples_per_second": 3.907,
+  "train_steps_per_second": 0.489
+}
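The throughput fields in `all_results.json` are enough to recover approximate totals for the run. This is a rough sketch that simply multiplies the reported rates by the reported runtime; the rates are rounded in the file, so the results are estimates, not exact counts.

```python
import json

# Training fields copied from all_results.json above.
all_results = json.loads("""
{
  "epoch": 2.0,
  "train_runtime": 1619.8348,
  "train_samples_per_second": 3.907,
  "train_steps_per_second": 0.489
}
""")

runtime = all_results["train_runtime"]
total_steps = all_results["train_steps_per_second"] * runtime
total_samples = all_results["train_samples_per_second"] * runtime
samples_per_step = total_samples / total_steps

print("approx. optimizer steps:", round(total_steps))
print("approx. samples processed:", round(total_samples))
print("approx. samples per step:", round(samples_per_step))
print("runtime in minutes:", round(runtime / 60, 1))
```

The samples-per-step estimate comes out close to 8, which agrees with the `total_train_batch_size` reported in the model card.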

config.json

ADDED

@@ -0,0 +1,30 @@

+{
+  "_name_or_path": "llm-jp/llm-jp-3-3.7b-instruct",
+  "architectures": [
+    "LlamaForCausalLM"
+  ],
+  "attention_bias": false,
+  "attention_dropout": 0.0,
+  "bos_token_id": 1,
+  "eos_token_id": 2,
+  "head_dim": 128,
+  "hidden_act": "silu",
+  "hidden_size": 3072,
+  "initializer_range": 0.02,
+  "intermediate_size": 8192,
+  "max_position_embeddings": 4096,
+  "mlp_bias": false,
+  "model_type": "llama",
+  "num_attention_heads": 24,
+  "num_hidden_layers": 28,
+  "num_key_value_heads": 24,
+  "pretraining_tp": 1,
+  "rms_norm_eps": 1e-05,
+  "rope_scaling": null,
+  "rope_theta": 10000,
+  "tie_word_embeddings": false,
+  "torch_dtype": "bfloat16",
+  "transformers_version": "4.46.1",
+  "use_cache": false,
+  "vocab_size": 99584
+}
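The parameter count implied by `config.json` can be worked out by hand. The sketch below assumes the standard Llama layer layout (q/k/v/o projections, a gated MLP with gate/up/down projections, two RMSNorm weights per layer, one final norm, no biases), which matches the tensor names listed in `model.safetensors.index.json`. The helper `count_llama_params` is a hypothetical name, not a Transformers API.

```python
# Subset of config.json that determines the parameter count.
config = {
    "hidden_size": 3072,
    "intermediate_size": 8192,
    "num_hidden_layers": 28,
    "num_attention_heads": 24,
    "num_key_value_heads": 24,
    "head_dim": 128,
    "vocab_size": 99584,
    "tie_word_embeddings": False,
}


def count_llama_params(cfg):
    """Count weights for a bias-free Llama-style decoder (hypothetical helper)."""
    h = cfg["hidden_size"]
    q_dim = cfg["num_attention_heads"] * cfg["head_dim"]
    kv_dim = cfg["num_key_value_heads"] * cfg["head_dim"]
    attn = h * q_dim + 2 * h * kv_dim + q_dim * h   # q, k, v, o projections
    mlp = 3 * h * cfg["intermediate_size"]          # gate, up, down projections
    norms = 2 * h                                   # input + post-attention RMSNorm
    embed = cfg["vocab_size"] * h
    lm_head = 0 if cfg["tie_word_embeddings"] else embed
    final_norm = h
    return cfg["num_hidden_layers"] * (attn + mlp + norms) + embed + lm_head + final_norm


n_params = count_llama_params(config)
bf16_bytes = n_params * 2  # torch_dtype is bfloat16: 2 bytes per weight

print(n_params)
print(bf16_bytes)
```

The byte count equals the `total_size` of 7565826048 recorded in `model.safetensors.index.json`, so the two files are consistent with about 3.78B parameters stored in bfloat16.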

eval_results.json

ADDED

@@ -0,0 +1,7 @@

+{
+  "epoch": 2.0,
+  "eval_loss": 0.7540631294250488,
+  "eval_runtime": 1.57,
+  "eval_samples_per_second": 20.382,
+  "eval_steps_per_second": 5.096
+}
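Two quantities can be read off `eval_results.json` with a little arithmetic: the approximate size of the evaluation set and a perplexity figure. The sketch assumes `eval_loss` is a mean per-token cross-entropy in nats, which is the usual convention for causal language model training but is not stated in the file.

```python
import math

# Fields copied from eval_results.json above.
eval_results = {
    "eval_loss": 0.7540631294250488,
    "eval_runtime": 1.57,
    "eval_samples_per_second": 20.382,
    "eval_steps_per_second": 5.096,
}

# Rates times runtime give approximate counts (the rates are rounded in the file).
eval_samples = eval_results["eval_samples_per_second"] * eval_results["eval_runtime"]
eval_steps = eval_results["eval_steps_per_second"] * eval_results["eval_runtime"]

# Assumption: eval_loss is mean token-level cross-entropy in nats.
perplexity = math.exp(eval_results["eval_loss"])

print("approx. eval samples:", round(eval_samples))
print("approx. eval steps:", round(eval_steps))
print("perplexity:", round(perplexity, 3))
```

About 32 samples in 8 steps is consistent with the `total_eval_batch_size` of 4 in the model card, and it also means the evaluation loss rests on a small sample.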

generation_config.json

ADDED

@@ -0,0 +1,6 @@

+{
+  "_from_model_config": true,
+  "bos_token_id": 1,
+  "eos_token_id": 2,
+  "transformers_version": "4.46.1"
+}

model-00001-of-00002.safetensors

ADDED

@@ -0,0 +1,3 @@

+version https://git-lfs.github.com/spec/v1
+oid sha256:3b40704533f336b8e7055cdcd93d76f6bc4a240310f8749c7df6b508e7684234
+size 4990951344

model-00002-of-00002.safetensors

ADDED

@@ -0,0 +1,3 @@

+version https://git-lfs.github.com/spec/v1
+oid sha256:ba39f9a9527a594dd191efbaf7267a37f0ea6e8fd114250d237ef0a6aedb7786
+size 2574904248
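The two `.safetensors` entries are Git LFS pointer files, not the weights themselves: each holds a spec version, a SHA-256 object id, and the byte size of the real file. A minimal sketch of reading them follows; `parse_lfs_pointer` is a hypothetical helper written for this illustration, and it handles only the three keys that appear in the pointers above.

```python
def parse_lfs_pointer(text):
    """Parse a Git LFS pointer of 'key value' lines (hypothetical helper)."""
    fields = dict(line.split(" ", 1) for line in text.strip().splitlines())
    hash_algo, digest = fields["oid"].split(":", 1)
    return {
        "version": fields["version"],
        "hash_algo": hash_algo,
        "digest": digest,
        "size": int(fields["size"]),
    }


# Pointer contents copied from the two hunks above.
shard_1 = parse_lfs_pointer("""
version https://git-lfs.github.com/spec/v1
oid sha256:3b40704533f336b8e7055cdcd93d76f6bc4a240310f8749c7df6b508e7684234
size 4990951344
""")
shard_2 = parse_lfs_pointer("""
version https://git-lfs.github.com/spec/v1
oid sha256:ba39f9a9527a594dd191efbaf7267a37f0ea6e8fd114250d237ef0a6aedb7786
size 2574904248
""")

total_file_bytes = shard_1["size"] + shard_2["size"]
index_total_size = 7565826048  # "total_size" from model.safetensors.index.json
overhead_bytes = total_file_bytes - index_total_size

print(total_file_bytes)
print(overhead_bytes)
```

The shard files together are slightly larger than the tensor payload recorded in the index. The difference is plausibly the per-file safetensors headers, though the pointers alone do not confirm that.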

model.safetensors.index.json

ADDED

@@ -0,0 +1,262 @@

| 1 |

+

{

|

| 2 |

+

"metadata": {

|

| 3 |

+

"total_size": 7565826048

|

| 4 |

+

},

|

| 5 |

+

"weight_map": {

|

| 6 |

+

"lm_head.weight": "model-00002-of-00002.safetensors",

|

| 7 |

+

"model.embed_tokens.weight": "model-00001-of-00002.safetensors",

|

| 8 |

+

"model.layers.0.input_layernorm.weight": "model-00001-of-00002.safetensors",

|

| 9 |

+

"model.layers.0.mlp.down_proj.weight": "model-00001-of-00002.safetensors",

|

| 10 |

+

"model.layers.0.mlp.gate_proj.weight": "model-00001-of-00002.safetensors",

|

| 11 |

+

"model.layers.0.mlp.up_proj.weight": "model-00001-of-00002.safetensors",

|

| 12 |

+

"model.layers.0.post_attention_layernorm.weight": "model-00001-of-00002.safetensors",

|

| 13 |

+

"model.layers.0.self_attn.k_proj.weight": "model-00001-of-00002.safetensors",

|

| 14 |

+

"model.layers.0.self_attn.o_proj.weight": "model-00001-of-00002.safetensors",

|

| 15 |

+

"model.layers.0.self_attn.q_proj.weight": "model-00001-of-00002.safetensors",

|

| 16 |

+

"model.layers.0.self_attn.v_proj.weight": "model-00001-of-00002.safetensors",

|

| 17 |

+

"model.layers.1.input_layernorm.weight": "model-00001-of-00002.safetensors",

|

| 18 |

+

"model.layers.1.mlp.down_proj.weight": "model-00001-of-00002.safetensors",

|

| 19 |

+

"model.layers.1.mlp.gate_proj.weight": "model-00001-of-00002.safetensors",

|

| 20 |

+

"model.layers.1.mlp.up_proj.weight": "model-00001-of-00002.safetensors",

|

| 21 |

+

"model.layers.1.post_attention_layernorm.weight": "model-00001-of-00002.safetensors",

|

| 22 |

+

"model.layers.1.self_attn.k_proj.weight": "model-00001-of-00002.safetensors",

|

| 23 |

+

"model.layers.1.self_attn.o_proj.weight": "model-00001-of-00002.safetensors",

|

| 24 |

+

"model.layers.1.self_attn.q_proj.weight": "model-00001-of-00002.safetensors",

|

| 25 |

+

"model.layers.1.self_attn.v_proj.weight": "model-00001-of-00002.safetensors",

|

| 26 |

+

"model.layers.10.input_layernorm.weight": "model-00001-of-00002.safetensors",

|

| 27 |

+

"model.layers.10.mlp.down_proj.weight": "model-00001-of-00002.safetensors",

|

| 28 |

+

"model.layers.10.mlp.gate_proj.weight": "model-00001-of-00002.safetensors",

|

| 29 |

+

"model.layers.10.mlp.up_proj.weight": "model-00001-of-00002.safetensors",

|

| 30 |

+

"model.layers.10.post_attention_layernorm.weight": "model-00001-of-00002.safetensors",

|

| 31 |

+

"model.layers.10.self_attn.k_proj.weight": "model-00001-of-00002.safetensors",

|

| 32 |

+

"model.layers.10.self_attn.o_proj.weight": "model-00001-of-00002.safetensors",

|

| 33 |

+

"model.layers.10.self_attn.q_proj.weight": "model-00001-of-00002.safetensors",

|

| 34 |

+

"model.layers.10.self_attn.v_proj.weight": "model-00001-of-00002.safetensors",

|

| 35 |

+

"model.layers.11.input_layernorm.weight": "model-00001-of-00002.safetensors",

|

| 36 |

+

"model.layers.11.mlp.down_proj.weight": "model-00001-of-00002.safetensors",

|

| 37 |

+

"model.layers.11.mlp.gate_proj.weight": "model-00001-of-00002.safetensors",

|

| 38 |

+

"model.layers.11.mlp.up_proj.weight": "model-00001-of-00002.safetensors",

|

| 39 |

+

"model.layers.11.post_attention_layernorm.weight": "model-00001-of-00002.safetensors",

|

| 40 |

+

"model.layers.11.self_attn.k_proj.weight": "model-00001-of-00002.safetensors",

|

| 41 |

+

"model.layers.11.self_attn.o_proj.weight": "model-00001-of-00002.safetensors",

|

| 42 |

+

"model.layers.11.self_attn.q_proj.weight": "model-00001-of-00002.safetensors",

|

| 43 |

+

"model.layers.11.self_attn.v_proj.weight": "model-00001-of-00002.safetensors",

|

| 44 |

+

"model.layers.12.input_layernorm.weight": "model-00001-of-00002.safetensors",

|

| 45 |

+

"model.layers.12.mlp.down_proj.weight": "model-00001-of-00002.safetensors",

|

| 46 |

+

"model.layers.12.mlp.gate_proj.weight": "model-00001-of-00002.safetensors",

|

| 47 |

+

"model.layers.12.mlp.up_proj.weight": "model-00001-of-00002.safetensors",

|

| 48 |

+

"model.layers.12.post_attention_layernorm.weight": "model-00001-of-00002.safetensors",

|

| 49 |

+

"model.layers.12.self_attn.k_proj.weight": "model-00001-of-00002.safetensors",

|

| 50 |

+

"model.layers.12.self_attn.o_proj.weight": "model-00001-of-00002.safetensors",

|

| 51 |

+

"model.layers.12.self_attn.q_proj.weight": "model-00001-of-00002.safetensors",

|

| 52 |

+

"model.layers.12.self_attn.v_proj.weight": "model-00001-of-00002.safetensors",

|

| 53 |

+

"model.layers.13.input_layernorm.weight": "model-00001-of-00002.safetensors",

|

| 54 |

+

"model.layers.13.mlp.down_proj.weight": "model-00001-of-00002.safetensors",

|

| 55 |

+

"model.layers.13.mlp.gate_proj.weight": "model-00001-of-00002.safetensors",

|

| 56 |

+

"model.layers.13.mlp.up_proj.weight": "model-00001-of-00002.safetensors",

|

| 57 |

+

"model.layers.13.post_attention_layernorm.weight": "model-00001-of-00002.safetensors",

|

| 58 |

+

"model.layers.13.self_attn.k_proj.weight": "model-00001-of-00002.safetensors",

|

| 59 |

+

"model.layers.13.self_attn.o_proj.weight": "model-00001-of-00002.safetensors",

|

| 60 |

+

"model.layers.13.self_attn.q_proj.weight": "model-00001-of-00002.safetensors",

|

| 61 |

+

"model.layers.13.self_attn.v_proj.weight": "model-00001-of-00002.safetensors",

|

| 62 |

+

"model.layers.14.input_layernorm.weight": "model-00001-of-00002.safetensors",

|

| 63 |

+

"model.layers.14.mlp.down_proj.weight": "model-00001-of-00002.safetensors",

|

| 64 |

+

"model.layers.14.mlp.gate_proj.weight": "model-00001-of-00002.safetensors",

|

| 65 |

+

"model.layers.14.mlp.up_proj.weight": "model-00001-of-00002.safetensors",

|

| 66 |

+

"model.layers.14.post_attention_layernorm.weight": "model-00001-of-00002.safetensors",

|

| 67 |

+

"model.layers.14.self_attn.k_proj.weight": "model-00001-of-00002.safetensors",

|

| 68 |

+

"model.layers.14.self_attn.o_proj.weight": "model-00001-of-00002.safetensors",

|

| 69 |

+

"model.layers.14.self_attn.q_proj.weight": "model-00001-of-00002.safetensors",

|

| 70 |

+

"model.layers.14.self_attn.v_proj.weight": "model-00001-of-00002.safetensors",

|

| 71 |

+

"model.layers.15.input_layernorm.weight": "model-00001-of-00002.safetensors",

|

| 72 |

+

"model.layers.15.mlp.down_proj.weight": "model-00001-of-00002.safetensors",

|

| 73 |

+

"model.layers.15.mlp.gate_proj.weight": "model-00001-of-00002.safetensors",

|

| 74 |

+

"model.layers.15.mlp.up_proj.weight": "model-00001-of-00002.safetensors",

|

| 75 |

+

"model.layers.15.post_attention_layernorm.weight": "model-00001-of-00002.safetensors",

|

| 76 |

+

"model.layers.15.self_attn.k_proj.weight": "model-00001-of-00002.safetensors",

|

| 77 |

+

"model.layers.15.self_attn.o_proj.weight": "model-00001-of-00002.safetensors",

|

| 78 |

+

"model.layers.15.self_attn.q_proj.weight": "model-00001-of-00002.safetensors",

|

| 79 |

+

"model.layers.15.self_attn.v_proj.weight": "model-00001-of-00002.safetensors",

|

| 80 |

+

"model.layers.16.input_layernorm.weight": "model-00001-of-00002.safetensors",

|

| 81 |

+

"model.layers.16.mlp.down_proj.weight": "model-00001-of-00002.safetensors",

|

| 82 |

+

"model.layers.16.mlp.gate_proj.weight": "model-00001-of-00002.safetensors",

|

| 83 |

+

"model.layers.16.mlp.up_proj.weight": "model-00001-of-00002.safetensors",

|

| 84 |

+

"model.layers.16.post_attention_layernorm.weight": "model-00001-of-00002.safetensors",

|

| 85 |

+

"model.layers.16.self_attn.k_proj.weight": "model-00001-of-00002.safetensors",

|

| 86 |

+

"model.layers.16.self_attn.o_proj.weight": "model-00001-of-00002.safetensors",

|

| 87 |

+

"model.layers.16.self_attn.q_proj.weight": "model-00001-of-00002.safetensors",

|

| 88 |

+

"model.layers.16.self_attn.v_proj.weight": "model-00001-of-00002.safetensors",

|

| 89 |

+

"model.layers.17.input_layernorm.weight": "model-00001-of-00002.safetensors",

|

| 90 |

+

"model.layers.17.mlp.down_proj.weight": "model-00001-of-00002.safetensors",

|

| 91 |

+

"model.layers.17.mlp.gate_proj.weight": "model-00001-of-00002.safetensors",

|

| 92 |

+

"model.layers.17.mlp.up_proj.weight": "model-00001-of-00002.safetensors",

|

| 93 |

+

"model.layers.17.post_attention_layernorm.weight": "model-00001-of-00002.safetensors",

|

| 94 |

+

"model.layers.17.self_attn.k_proj.weight": "model-00001-of-00002.safetensors",

|

| 95 |

+

"model.layers.17.self_attn.o_proj.weight": "model-00001-of-00002.safetensors",

|

| 96 |

+

"model.layers.17.self_attn.q_proj.weight": "model-00001-of-00002.safetensors",

|

| 97 |

+

"model.layers.17.self_attn.v_proj.weight": "model-00001-of-00002.safetensors",

|

| 98 |

+

"model.layers.18.input_layernorm.weight": "model-00001-of-00002.safetensors",

|

| 99 |

+

"model.layers.18.mlp.down_proj.weight": "model-00001-of-00002.safetensors",

|

| 100 |

+

"model.layers.18.mlp.gate_proj.weight": "model-00001-of-00002.safetensors",

|

| 101 |

+

"model.layers.18.mlp.up_proj.weight": "model-00001-of-00002.safetensors",

|

| 102 |

+

"model.layers.18.post_attention_layernorm.weight": "model-00001-of-00002.safetensors",

|

| 103 |

+

"model.layers.18.self_attn.k_proj.weight": "model-00001-of-00002.safetensors",

|

| 104 |

+

"model.layers.18.self_attn.o_proj.weight": "model-00001-of-00002.safetensors",

|

| 105 |

+

"model.layers.18.self_attn.q_proj.weight": "model-00001-of-00002.safetensors",

|

| 106 |

+

"model.layers.18.self_attn.v_proj.weight": "model-00001-of-00002.safetensors",

|

| 107 |

+

"model.layers.19.input_layernorm.weight": "model-00002-of-00002.safetensors",

|

| 108 |

+

"model.layers.19.mlp.down_proj.weight": "model-00002-of-00002.safetensors",

|

| 109 |

+

"model.layers.19.mlp.gate_proj.weight": "model-00002-of-00002.safetensors",

|

| 110 |

+

"model.layers.19.mlp.up_proj.weight": "model-00002-of-00002.safetensors",

|

| 111 |

+

"model.layers.19.post_attention_layernorm.weight": "model-00002-of-00002.safetensors",

|

| 112 |

+

"model.layers.19.self_attn.k_proj.weight": "model-00001-of-00002.safetensors",

|

| 113 |

+

"model.layers.19.self_attn.o_proj.weight": "model-00001-of-00002.safetensors",

|

| 114 |

+

"model.layers.19.self_attn.q_proj.weight": "model-00001-of-00002.safetensors",

|

| 115 |

+

"model.layers.19.self_attn.v_proj.weight": "model-00001-of-00002.safetensors",

|

| 116 |

+

"model.layers.2.input_layernorm.weight": "model-00001-of-00002.safetensors",

|

| 117 |

+

"model.layers.2.mlp.down_proj.weight": "model-00001-of-00002.safetensors",

|

| 118 |

+

"model.layers.2.mlp.gate_proj.weight": "model-00001-of-00002.safetensors",

|

| 119 |

+

"model.layers.2.mlp.up_proj.weight": "model-00001-of-00002.safetensors",

|

| 120 |

+

"model.layers.2.post_attention_layernorm.weight": "model-00001-of-00002.safetensors",

|

| 121 |

+

"model.layers.2.self_attn.k_proj.weight": "model-00001-of-00002.safetensors",

|

| 122 |

+

"model.layers.2.self_attn.o_proj.weight": "model-00001-of-00002.safetensors",

|

| 123 |

+

"model.layers.2.self_attn.q_proj.weight": "model-00001-of-00002.safetensors",

|

| 124 |

+

"model.layers.2.self_attn.v_proj.weight": "model-00001-of-00002.safetensors",

|

| 125 |

+

"model.layers.20.input_layernorm.weight": "model-00002-of-00002.safetensors",

|

| 126 |

+

"model.layers.20.mlp.down_proj.weight": "model-00002-of-00002.safetensors",

|

| 127 |

+

"model.layers.20.mlp.gate_proj.weight": "model-00002-of-00002.safetensors",

|

| 128 |

+

"model.layers.20.mlp.up_proj.weight": "model-00002-of-00002.safetensors",

|

| 129 |

+

"model.layers.20.post_attention_layernorm.weight": "model-00002-of-00002.safetensors",

|

| 130 |

+

"model.layers.20.self_attn.k_proj.weight": "model-00002-of-00002.safetensors",

|

| 131 |

+

"model.layers.20.self_attn.o_proj.weight": "model-00002-of-00002.safetensors",

|

| 132 |

+

"model.layers.20.self_attn.q_proj.weight": "model-00002-of-00002.safetensors",

|

| 133 |

+

"model.layers.20.self_attn.v_proj.weight": "model-00002-of-00002.safetensors",

|

| 134 |

+

"model.layers.21.input_layernorm.weight": "model-00002-of-00002.safetensors",

|

| 135 |

+

"model.layers.21.mlp.down_proj.weight": "model-00002-of-00002.safetensors",

|

| 136 |

+

"model.layers.21.mlp.gate_proj.weight": "model-00002-of-00002.safetensors",

|

| 137 |

+

"model.layers.21.mlp.up_proj.weight": "model-00002-of-00002.safetensors",

|

| 138 |

+

"model.layers.21.post_attention_layernorm.weight": "model-00002-of-00002.safetensors",

|

| 139 |

+

"model.layers.21.self_attn.k_proj.weight": "model-00002-of-00002.safetensors",

|

| 140 |

+

"model.layers.21.self_attn.o_proj.weight": "model-00002-of-00002.safetensors",

|

| 141 |

+

"model.layers.21.self_attn.q_proj.weight": "model-00002-of-00002.safetensors",

|

| 142 |

+

"model.layers.21.self_attn.v_proj.weight": "model-00002-of-00002.safetensors",

|

| 143 |

+

"model.layers.22.input_layernorm.weight": "model-00002-of-00002.safetensors",

|

| 144 |

+

"model.layers.22.mlp.down_proj.weight": "model-00002-of-00002.safetensors",

|

| 145 |

+

"model.layers.22.mlp.gate_proj.weight": "model-00002-of-00002.safetensors",

|

| 146 |

+

"model.layers.22.mlp.up_proj.weight": "model-00002-of-00002.safetensors",

|

| 147 |

+

"model.layers.22.post_attention_layernorm.weight": "model-00002-of-00002.safetensors",

|

| 148 |

+

"model.layers.22.self_attn.k_proj.weight": "model-00002-of-00002.safetensors",

|

| 149 |

+

"model.layers.22.self_attn.o_proj.weight": "model-00002-of-00002.safetensors",

|

| 150 |

+

"model.layers.22.self_attn.q_proj.weight": "model-00002-of-00002.safetensors",

|

| 151 |

+

"model.layers.22.self_attn.v_proj.weight": "model-00002-of-00002.safetensors",

|

| 152 |

+

"model.layers.23.input_layernorm.weight": "model-00002-of-00002.safetensors",

|

| 153 |

+

"model.layers.23.mlp.down_proj.weight": "model-00002-of-00002.safetensors",

|

| 154 |

+

"model.layers.23.mlp.gate_proj.weight": "model-00002-of-00002.safetensors",

|

| 155 |

+

"model.layers.23.mlp.up_proj.weight": "model-00002-of-00002.safetensors",

|

| 156 |

+

"model.layers.23.post_attention_layernorm.weight": "model-00002-of-00002.safetensors",

|

| 157 |

+

"model.layers.23.self_attn.k_proj.weight": "model-00002-of-00002.safetensors",

|

| 158 |

+

"model.layers.23.self_attn.o_proj.weight": "model-00002-of-00002.safetensors",

|

| 159 |

+

"model.layers.23.self_attn.q_proj.weight": "model-00002-of-00002.safetensors",

|

| 160 |

+

"model.layers.23.self_attn.v_proj.weight": "model-00002-of-00002.safetensors",

|

| 161 |

+

"model.layers.24.input_layernorm.weight": "model-00002-of-00002.safetensors",

|

| 162 |

+

"model.layers.24.mlp.down_proj.weight": "model-00002-of-00002.safetensors",

|

| 163 |

+

"model.layers.24.mlp.gate_proj.weight": "model-00002-of-00002.safetensors",

|

| 164 |

+

"model.layers.24.mlp.up_proj.weight": "model-00002-of-00002.safetensors",

|

| 165 |

+

"model.layers.24.post_attention_layernorm.weight": "model-00002-of-00002.safetensors",

|

| 166 |

+

"model.layers.24.self_attn.k_proj.weight": "model-00002-of-00002.safetensors",

|

| 167 |

+

"model.layers.24.self_attn.o_proj.weight": "model-00002-of-00002.safetensors",

|

| 168 |

+

"model.layers.24.self_attn.q_proj.weight": "model-00002-of-00002.safetensors",

|

| 169 |

+

"model.layers.24.self_attn.v_proj.weight": "model-00002-of-00002.safetensors",

|

| 170 |

+

"model.layers.25.input_layernorm.weight": "model-00002-of-00002.safetensors",

|

| 171 |

+

"model.layers.25.mlp.down_proj.weight": "model-00002-of-00002.safetensors",

|

| 172 |

+

"model.layers.25.mlp.gate_proj.weight": "model-00002-of-00002.safetensors",

|

| 173 |

+

"model.layers.25.mlp.up_proj.weight": "model-00002-of-00002.safetensors",

|

| 174 |

+

"model.layers.25.post_attention_layernorm.weight": "model-00002-of-00002.safetensors",

|

| 175 |

+

"model.layers.25.self_attn.k_proj.weight": "model-00002-of-00002.safetensors",

|

| 176 |

+

"model.layers.25.self_attn.o_proj.weight": "model-00002-of-00002.safetensors",

|

| 177 |

+

"model.layers.25.self_attn.q_proj.weight": "model-00002-of-00002.safetensors",

|

| 178 |

+

"model.layers.25.self_attn.v_proj.weight": "model-00002-of-00002.safetensors",

|

| 179 |

+

"model.layers.26.input_layernorm.weight": "model-00002-of-00002.safetensors",

|

| 180 |

+

"model.layers.26.mlp.down_proj.weight": "model-00002-of-00002.safetensors",

|

| 181 |

+

"model.layers.26.mlp.gate_proj.weight": "model-00002-of-00002.safetensors",

|

| 182 |

+

"model.layers.26.mlp.up_proj.weight": "model-00002-of-00002.safetensors",

|

| 183 |

+

"model.layers.26.post_attention_layernorm.weight": "model-00002-of-00002.safetensors",

|

| 184 |

+

"model.layers.26.self_attn.k_proj.weight": "model-00002-of-00002.safetensors",

|

| 185 |

+

"model.layers.26.self_attn.o_proj.weight": "model-00002-of-00002.safetensors",

|

| 186 |

+

"model.layers.26.self_attn.q_proj.weight": "model-00002-of-00002.safetensors",

|

| 187 |

+

"model.layers.26.self_attn.v_proj.weight": "model-00002-of-00002.safetensors",

|

| 188 |

+

"model.layers.27.input_layernorm.weight": "model-00002-of-00002.safetensors",

|

| 189 |

+

"model.layers.27.mlp.down_proj.weight": "model-00002-of-00002.safetensors",

|

| 190 |

+

"model.layers.27.mlp.gate_proj.weight": "model-00002-of-00002.safetensors",

|

| 191 |

+

"model.layers.27.mlp.up_proj.weight": "model-00002-of-00002.safetensors",

|

| 192 |

+

"model.layers.27.post_attention_layernorm.weight": "model-00002-of-00002.safetensors",

|

| 193 |

+

"model.layers.27.self_attn.k_proj.weight": "model-00002-of-00002.safetensors",

|

| 194 |

+

"model.layers.27.self_attn.o_proj.weight": "model-00002-of-00002.safetensors",

|

| 195 |

+

"model.layers.27.self_attn.q_proj.weight": "model-00002-of-00002.safetensors",

|

| 196 |

+

"model.layers.27.self_attn.v_proj.weight": "model-00002-of-00002.safetensors",

|

| 197 |

+

"model.layers.3.input_layernorm.weight": "model-00001-of-00002.safetensors",

|

| 198 |

+

"model.layers.3.mlp.down_proj.weight": "model-00001-of-00002.safetensors",

|

| 199 |

+

"model.layers.3.mlp.gate_proj.weight": "model-00001-of-00002.safetensors",

|

| 200 |

+

"model.layers.3.mlp.up_proj.weight": "model-00001-of-00002.safetensors",

|

| 201 |

+

"model.layers.3.post_attention_layernorm.weight": "model-00001-of-00002.safetensors",

|

| 202 |

+

"model.layers.3.self_attn.k_proj.weight": "model-00001-of-00002.safetensors",

|

| 203 |

+

"model.layers.3.self_attn.o_proj.weight": "model-00001-of-00002.safetensors",

|

| 204 |

+

"model.layers.3.self_attn.q_proj.weight": "model-00001-of-00002.safetensors",

|

| 205 |

+

"model.layers.3.self_attn.v_proj.weight": "model-00001-of-00002.safetensors",

|

| 206 |

+

"model.layers.4.input_layernorm.weight": "model-00001-of-00002.safetensors",

|

| 207 |

+

"model.layers.4.mlp.down_proj.weight": "model-00001-of-00002.safetensors",

|

| 208 |

+

"model.layers.4.mlp.gate_proj.weight": "model-00001-of-00002.safetensors",

|

| 209 |

+

"model.layers.4.mlp.up_proj.weight": "model-00001-of-00002.safetensors",

|

| 210 |

+

"model.layers.4.post_attention_layernorm.weight": "model-00001-of-00002.safetensors",

|

| 211 |

+

"model.layers.4.self_attn.k_proj.weight": "model-00001-of-00002.safetensors",

|

| 212 |

+

"model.layers.4.self_attn.o_proj.weight": "model-00001-of-00002.safetensors",

|

| 213 |

+

"model.layers.4.self_attn.q_proj.weight": "model-00001-of-00002.safetensors",

|

| 214 |

+

"model.layers.4.self_attn.v_proj.weight": "model-00001-of-00002.safetensors",

|

| 215 |

+

"model.layers.5.input_layernorm.weight": "model-00001-of-00002.safetensors",

|

| 216 |

+

"model.layers.5.mlp.down_proj.weight": "model-00001-of-00002.safetensors",

|

| 217 |

+

"model.layers.5.mlp.gate_proj.weight": "model-00001-of-00002.safetensors",

|

| 218 |

+

"model.layers.5.mlp.up_proj.weight": "model-00001-of-00002.safetensors",

|

| 219 |

+

"model.layers.5.post_attention_layernorm.weight": "model-00001-of-00002.safetensors",

|

| 220 |

+

"model.layers.5.self_attn.k_proj.weight": "model-00001-of-00002.safetensors",

|

| 221 |

+

"model.layers.5.self_attn.o_proj.weight": "model-00001-of-00002.safetensors",

|

| 222 |

+

"model.layers.5.self_attn.q_proj.weight": "model-00001-of-00002.safetensors",

|

| 223 |

+

"model.layers.5.self_attn.v_proj.weight": "model-00001-of-00002.safetensors",

|

| 224 |

+

"model.layers.6.input_layernorm.weight": "model-00001-of-00002.safetensors",

|

| 225 |

+

"model.layers.6.mlp.down_proj.weight": "model-00001-of-00002.safetensors",

+    "model.layers.6.mlp.gate_proj.weight": "model-00001-of-00002.safetensors",
+    "model.layers.6.mlp.up_proj.weight": "model-00001-of-00002.safetensors",
+    "model.layers.6.post_attention_layernorm.weight": "model-00001-of-00002.safetensors",
+    "model.layers.6.self_attn.k_proj.weight": "model-00001-of-00002.safetensors",
+    "model.layers.6.self_attn.o_proj.weight": "model-00001-of-00002.safetensors",
+    "model.layers.6.self_attn.q_proj.weight": "model-00001-of-00002.safetensors",
+    "model.layers.6.self_attn.v_proj.weight": "model-00001-of-00002.safetensors",
+    "model.layers.7.input_layernorm.weight": "model-00001-of-00002.safetensors",
+    "model.layers.7.mlp.down_proj.weight": "model-00001-of-00002.safetensors",
+    "model.layers.7.mlp.gate_proj.weight": "model-00001-of-00002.safetensors",
+    "model.layers.7.mlp.up_proj.weight": "model-00001-of-00002.safetensors",
+    "model.layers.7.post_attention_layernorm.weight": "model-00001-of-00002.safetensors",
+    "model.layers.7.self_attn.k_proj.weight": "model-00001-of-00002.safetensors",
+    "model.layers.7.self_attn.o_proj.weight": "model-00001-of-00002.safetensors",
+    "model.layers.7.self_attn.q_proj.weight": "model-00001-of-00002.safetensors",
+    "model.layers.7.self_attn.v_proj.weight": "model-00001-of-00002.safetensors",
+    "model.layers.8.input_layernorm.weight": "model-00001-of-00002.safetensors",
+    "model.layers.8.mlp.down_proj.weight": "model-00001-of-00002.safetensors",
+    "model.layers.8.mlp.gate_proj.weight": "model-00001-of-00002.safetensors",
+    "model.layers.8.mlp.up_proj.weight": "model-00001-of-00002.safetensors",
+    "model.layers.8.post_attention_layernorm.weight": "model-00001-of-00002.safetensors",
+    "model.layers.8.self_attn.k_proj.weight": "model-00001-of-00002.safetensors",
+    "model.layers.8.self_attn.o_proj.weight": "model-00001-of-00002.safetensors",
+    "model.layers.8.self_attn.q_proj.weight": "model-00001-of-00002.safetensors",
+    "model.layers.8.self_attn.v_proj.weight": "model-00001-of-00002.safetensors",
+    "model.layers.9.input_layernorm.weight": "model-00001-of-00002.safetensors",
+    "model.layers.9.mlp.down_proj.weight": "model-00001-of-00002.safetensors",
+    "model.layers.9.mlp.gate_proj.weight": "model-00001-of-00002.safetensors",
+    "model.layers.9.mlp.up_proj.weight": "model-00001-of-00002.safetensors",
+    "model.layers.9.post_attention_layernorm.weight": "model-00001-of-00002.safetensors",
+    "model.layers.9.self_attn.k_proj.weight": "model-00001-of-00002.safetensors",
+    "model.layers.9.self_attn.o_proj.weight": "model-00001-of-00002.safetensors",
+    "model.layers.9.self_attn.q_proj.weight": "model-00001-of-00002.safetensors",
+    "model.layers.9.self_attn.v_proj.weight": "model-00001-of-00002.safetensors",
+    "model.norm.weight": "model-00002-of-00002.safetensors"
+  }
+}
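The index entries above map each tensor name to the shard file that stores it. As a minimal sketch of how such a map can be inspected, the Python below groups tensor names by shard. It assumes the entries sit under the standard `weight_map` key of a sharded safetensors index (that key is not visible in this part of the diff), and `tensors_by_shard` is a hypothetical helper name. Only three entries are copied in to keep the example short.

```python
import json
from collections import defaultdict

# Three entries copied from the diff above; the enclosing "weight_map" key
# is an assumption based on the usual sharded-checkpoint index layout.
index_excerpt = json.loads("""
{
  "weight_map": {
    "model.layers.9.self_attn.q_proj.weight": "model-00001-of-00002.safetensors",
    "model.layers.9.self_attn.v_proj.weight": "model-00001-of-00002.safetensors",
    "model.norm.weight": "model-00002-of-00002.safetensors"
  }
}
""")


def tensors_by_shard(index):
    """Group tensor names by the shard file that holds them (hypothetical helper)."""
    shards = defaultdict(list)
    for tensor_name, shard_file in index["weight_map"].items():
        shards[shard_file].append(tensor_name)
    return dict(shards)


grouped = tensors_by_shard(index_excerpt)
for shard_file, names in sorted(grouped.items()):
    print(shard_file, len(names))
```

Running the same helper on the full `model.safetensors.index.json` would show how many tensors each of the two shards holds.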

special_tokens_map.json

ADDED

|

@@ -0,0 +1,51 @@

+{
+  "bos_token": {
+    "content": "<s>",
+    "lstrip": false,
+    "normalized": false,
+    "rstrip": false,
+    "single_word": false
+  },
+  "cls_token": {
+    "content": "<CLS|LLM-jp>",
+    "lstrip": false,
+    "normalized": false,
+    "rstrip": false,
+    "single_word": false
+  },
+  "eos_token": {
+    "content": "</s>",
+    "lstrip": false,
+    "normalized": false,
+    "rstrip": false,
+    "single_word": false
+  },
+  "mask_token": {
+    "content": "<MASK|LLM-jp>",
+    "lstrip": false,
+    "normalized": false,
+    "rstrip": false,
+    "single_word": false
+  },
+  "pad_token": {
+    "content": "<PAD|LLM-jp>",
+    "lstrip": false,
+    "normalized": false,
+    "rstrip": false,
+    "single_word": false
+  },
+  "sep_token": {
+    "content": "<SEP|LLM-jp>",
+    "lstrip": false,
+    "normalized": false,
+    "rstrip": false,
+    "single_word": false
+  },
+  "unk_token": {
+    "content": "<unk>",
+    "lstrip": false,
+    "normalized": false,
+    "rstrip": false,
+    "single_word": false
+  }
+}

tokenizer.json

ADDED

|

The diff for this file is too large to render.


tokenizer_config.json

ADDED

|

@@ -0,0 +1,86 @@

+{
+  "add_bos_token": true,
+  "add_eos_token": false,
+  "added_tokens_decoder": {
+    "0": {
+      "content": "<unk>",
+      "lstrip": false,
+      "normalized": false,
+      "rstrip": false,
+      "single_word": false,
+      "special": true
+    },
+    "1": {
+      "content": "<s>",
+      "lstrip": false,
+      "normalized": false,
+      "rstrip": false,
+      "single_word": false,
+      "special": true
+    },
+    "2": {
+      "content": "</s>",
+      "lstrip": false,
+      "normalized": false,
+      "rstrip": false,
+      "single_word": false,
+      "special": true
+    },
+    "3": {
+      "content": "<MASK|LLM-jp>",
+      "lstrip": false,
+      "normalized": false,
+      "rstrip": false,
+      "single_word": false,
+      "special": true
+    },
+    "4": {
+      "content": "<PAD|LLM-jp>",
+      "lstrip": false,
+      "normalized": false,
+      "rstrip": false,
+      "single_word": false,
+      "special": true
+    },
+    "5": {
+      "content": "<CLS|LLM-jp>",
+      "lstrip": false,
+      "normalized": false,
+      "rstrip": false,
+      "single_word": false,
+      "special": true
+    },
+    "6": {
+      "content": "<SEP|LLM-jp>",
+      "lstrip": false,
+      "normalized": false,
+      "rstrip": false,
+      "single_word": false,
+      "special": true
+    },
+    "7": {
+      "content": "<EOD|LLM-jp>",
+      "lstrip": false,
+      "normalized": false,
+      "rstrip": false,
+      "single_word": false,
+      "special": true
+    }
+  },
+  "bos_token": "<s>",
+  "chat_template": "{% set system_message = '以下は、タスクを説明する指示です。要求を適切に満たす応答を書きなさい。\n\n' %}{% if messages[0]['role'] == 'system' %}{% set loop_messages = messages[1:] %}{% set system_message = messages[0]['content'] %}{% else %}{% set loop_messages = messages %}{% endif %}{% if system_message is defined %}{{ system_message }}{% endif %}{% for message in loop_messages %}{% set content = message['content'] %}{% if message['role'] == 'user' %}{{ '### 指示:\n' + content + '\n\n### 応答:\n' }}{% elif message['role'] == 'assistant' %}{{ content + '</s>' + '\n\n' }}{% endif %}{% endfor %}",
+  "clean_up_tokenization_spaces": false,
+  "cls_token": "<CLS|LLM-jp>",
+  "eod_token": "</s>",
+  "eos_token": "</s>",
+  "extra_ids": 0,
+  "mask_token": "<MASK|LLM-jp>",
+  "model_max_length": 1000000000000000019884624838656,
+  "pad_token": "<PAD|LLM-jp>",
+  "padding_side": "right",
+  "sep_token": "<SEP|LLM-jp>",
+  "sp_model_kwargs": {},
+  "split_special_tokens": false,
+  "tokenizer_class": "PreTrainedTokenizerFast",
+  "unk_token": "<unk>"
+}
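The `chat_template` value above is a Jinja template, which is hard to read on one line. The plain-Python sketch below mirrors its branches to show what prompt text it produces. It is an illustration, not the code that `transformers` runs. `render_chat` and `DEFAULT_SYSTEM` are hypothetical names, and the guard for an empty message list is an addition that the template itself does not have.

```python
# Default system prompt from the template. In English, roughly: "Below is an
# instruction that describes a task. Write a response that appropriately
# satisfies the request."
DEFAULT_SYSTEM = "以下は、タスクを説明する指示です。要求を適切に満たす応答を書きなさい。\n\n"


def render_chat(messages):
    """Mirror the chat_template logic in plain Python (hypothetical helper)."""
    system_message = DEFAULT_SYSTEM
    loop_messages = messages
    # A leading system message replaces the default prompt verbatim; the
    # template appends no newline after it.
    if messages and messages[0]["role"] == "system":
        system_message = messages[0]["content"]
        loop_messages = messages[1:]

    out = system_message
    for message in loop_messages:
        content = message["content"]
        if message["role"] == "user":
            # "### 指示:" means "Instruction", "### 応答:" means "Response".
            out += "### 指示:\n" + content + "\n\n### 応答:\n"
        elif message["role"] == "assistant":
            # Assistant turns are closed with the EOS token.
            out += content + "</s>" + "\n\n"
    return out


prompt = render_chat([{"role": "user", "content": "こんにちは"}])
print(prompt)
```

A single user turn therefore ends with `### 応答:` followed by a newline, which is where generation starts. A caller who supplies a custom system message should end it with its own blank line, since the template adds none.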

train_results.json

ADDED

|

@@ -0,0 +1,8 @@

+{
+  "epoch": 2.0,
+  "total_flos": 29590009675776.0,
+  "train_loss": 0.7852752542104384,
+  "train_runtime": 1619.8348,
+  "train_samples_per_second": 3.907,
+  "train_steps_per_second": 0.489
+}
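The throughput figures in `train_results.json` can be cross-checked against the runtime. The sketch below assumes that both rates are averages over the whole `train_runtime` (in seconds), so multiplying back gives approximate totals. The rates are rounded to three decimals in the file, so the results are estimates only.

```python
# Values copied from train_results.json above.
train_results = {
    "epoch": 2.0,
    "train_runtime": 1619.8348,          # seconds
    "train_samples_per_second": 3.907,
    "train_steps_per_second": 0.489,
}

runtime = train_results["train_runtime"]

# Approximate totals over the whole run (both epochs).
total_samples = runtime * train_results["train_samples_per_second"]
total_steps = runtime * train_results["train_steps_per_second"]

# Samples consumed per optimizer step, i.e. the effective batch size.
samples_per_step = (
    train_results["train_samples_per_second"]
    / train_results["train_steps_per_second"]
)

# Samples seen per epoch, assuming every epoch covers the same data.
samples_per_epoch = total_samples / train_results["epoch"]

print(round(total_samples), round(total_steps), round(samples_per_step))
```

Under these assumptions the run comes to roughly 792 optimizer steps with an effective batch size of about 8, over about 27 minutes of training.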

trainer_log.jsonl

ADDED

|

The diff for this file is too large to render.


trainer_state.json

ADDED

|

The diff for this file is too large to render.


training_args.bin

ADDED

|

@@ -0,0 +1,3 @@

+version https://git-lfs.github.com/spec/v1
+oid sha256:43d84eaf87afd5ad3779c2beff5c6c30267257de03faa93df77d53555315e42b
+size 7160
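The three lines committed for `training_args.bin` are a Git LFS pointer, not the binary itself: they record the spec version, the SHA-256 of the real file, and its size in bytes. A minimal sketch of reading such a pointer follows; `parse_lfs_pointer` is a hypothetical helper, and it assumes each line is a key and a value separated by one space.

```python
# Pointer text copied from the diff above.
pointer_text = """version https://git-lfs.github.com/spec/v1
oid sha256:43d84eaf87afd5ad3779c2beff5c6c30267257de03faa93df77d53555315e42b
size 7160
"""


def parse_lfs_pointer(text):
    """Split a Git LFS pointer into its fields (hypothetical helper)."""
    # Each non-empty line is "<key> <value>".
    fields = dict(line.split(" ", 1) for line in text.splitlines() if line)
    hash_algo, digest = fields["oid"].split(":", 1)
    return {
        "version": fields["version"],
        "hash_algo": hash_algo,
        "digest": digest,
        "size": int(fields["size"]),  # size of the real file in bytes
    }


pointer = parse_lfs_pointer(pointer_text)
print(pointer["hash_algo"], pointer["size"])
```

The parsed size and digest can be compared against a downloaded copy of the file to confirm that the download is complete.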

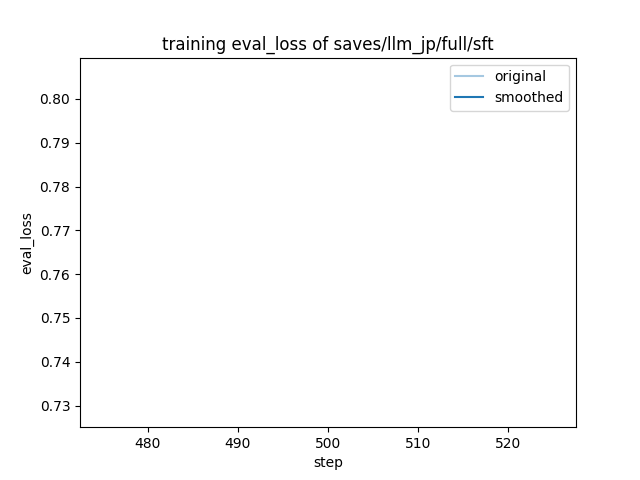

training_eval_loss.png

ADDED

|

training_loss.png

ADDED

|