---
license: creativeml-openrail-m
datasets:
- laion/laion400m
tags:
- stable-diffusion
- stable-diffusion-diffusers
- text-to-image
language:
- en
---

LDM3D model

The LDM3D model was proposed in "LDM3D: Latent Diffusion Model for 3D" by Gabriela Ben Melech Stan, Diana Wofk, Scottie Fox, Alex Redden, Will Saxton, Jean Yu, Estelle Aflalo, Shao-Yen Tseng, Fabio Nonato, Matthias Muller, Vasudev Lal.

LDM3D was accepted to CVPRW'23.

This checkpoint has been fine-tuned on panoramic images (see the Finetuning section below). A demo using this checkpoint has been open-sourced in this Space.

Model description

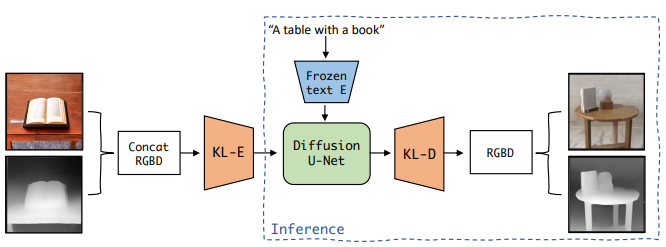

The abstract from the paper is the following: This research paper proposes a Latent Diffusion Model for 3D (LDM3D) that generates both image and depth map data from a given text prompt, allowing users to generate RGBD images from text prompts. The LDM3D model is fine-tuned on a dataset of tuples containing an RGB image, depth map and caption, and validated through extensive experiments. We also develop an application called DepthFusion, which uses the generated RGB images and depth maps to create immersive and interactive 360-degree-view experiences using TouchDesigner. This technology has the potential to transform a wide range of industries, from entertainment and gaming to architecture and design. Overall, this paper presents a significant contribution to the field of generative AI and computer vision, and showcases the potential of LDM3D and DepthFusion to revolutionize content creation and digital experiences.

LDM3D overview taken from the original paper

LDM3D overview taken from the original paper

Intended uses

You can use this model to generate an RGB image and a depth map from a text prompt. A short video summarizing the approach can be found at this URL, and a VR demo can be found here. A demo is also accessible on Spaces.
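The abstract describes the model's output as RGBD: an RGB image plus an aligned depth map. As a minimal sketch (not part of diffusers or the LDM3D code), the two outputs can be fused into one four-channel array with NumPy. The function name `make_rgbd` is hypothetical, and the min-max depth normalization is an assumption, since this card does not state the units of the depth map.

```python
import numpy as np


def make_rgbd(rgb: np.ndarray, depth: np.ndarray) -> np.ndarray:
    """Stack an RGB image (H, W, 3) and a depth map (H, W) into one (H, W, 4) float array.

    Hypothetical helper. RGB values are scaled from [0, 255] to [0, 1]; depth is
    min-max normalized to [0, 1] because its absolute scale is not assumed to be metric.
    """
    if rgb.ndim != 3 or rgb.shape[2] != 3:
        raise ValueError(f"expected RGB of shape (H, W, 3), got {rgb.shape}")
    if depth.ndim == 3:  # if depth has a channel axis, keep only the first channel
        depth = depth[..., 0]
    if depth.shape != rgb.shape[:2]:
        raise ValueError(f"depth shape {depth.shape} does not match RGB {rgb.shape[:2]}")

    rgb_f = rgb.astype(np.float32) / 255.0
    d = depth.astype(np.float32)
    d_range = d.max() - d.min()
    # Avoid division by zero for a constant depth map.
    d_norm = (d - d.min()) / d_range if d_range > 0 else np.zeros_like(d)
    return np.concatenate([rgb_f, d_norm[..., None]], axis=-1)


if __name__ == "__main__":
    # Synthetic stand-ins for the pipeline outputs (hypothetical data).
    rgb = np.full((512, 1024, 3), 128, dtype=np.uint8)
    depth = np.tile(np.arange(1024, dtype=np.uint16), (512, 1))
    rgbd = make_rgbd(rgb, depth)
    print(rgbd.shape, rgbd.dtype)  # (512, 1024, 4) float32
```

With the pipeline call in the How to use section and the default PIL output type, you could call it as `make_rgbd(np.asarray(output.rgb[0]), np.asarray(output.depth[0]))`.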

How to use

Here is how to use this model in PyTorch to generate an RGB image and a depth map from a text prompt:

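```python
from diffusers import StableDiffusionLDM3DPipeline

# Load the panoramic LDM3D checkpoint and move it to the GPU.
pipe = StableDiffusionLDM3DPipeline.from_pretrained("Intel/ldm3d-pano")
pipe.to("cuda")

prompt = "360 view of a large bedroom"
name = "bedroom_pano"

# Generate a 2:1 (width:height) panorama.
output = pipe(prompt, width=1024, height=512)

# With the default output type, these are lists of PIL images (one per prompt).
rgb_image, depth_image = output.rgb, output.depth
rgb_image[0].save(name + "_ldm3d_rgb.jpg")
depth_image[0].save(name + "_ldm3d_depth.png")
```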

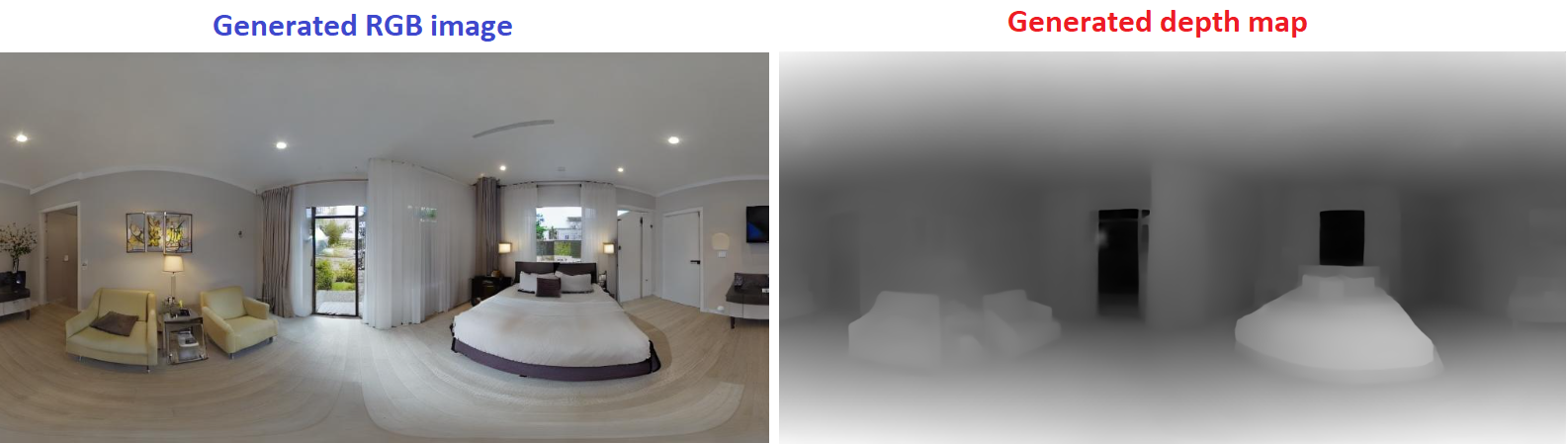

This is the result:
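Because this checkpoint targets panoramas, one way to inspect a generated RGB/depth pair in 3D is to back-project it into a point cloud. The sketch below assumes an equirectangular layout (consistent with, but not confirmed by, the 2:1 `width=1024, height=512` call above) and treats each depth value as a relative distance along the viewing ray. The function `equirect_to_points` and its axis conventions are hypothetical. This card does not say whether the saved depth encodes distance or inverse depth; DPT-style estimators such as DPT-large, which was used to build the fine-tuning data, predict relative inverse depth, so you may need to invert the map first.

```python
import numpy as np


def equirect_to_points(depth: np.ndarray) -> np.ndarray:
    """Back-project an equirectangular depth panorama (H, W) to an (H*W, 3) point array.

    Hypothetical helper. Assumes column u spans longitude [-pi, pi) and row v spans
    latitude [pi/2, -pi/2] (top row = straight up), and that depth is a distance
    along each viewing ray (relative units; not assumed to be metric).
    """
    h, w = depth.shape
    # Pixel-centre angles for every column (longitude) and row (latitude).
    lon = (np.arange(w) + 0.5) / w * 2.0 * np.pi - np.pi
    lat = np.pi / 2.0 - (np.arange(h) + 0.5) / h * np.pi
    lon, lat = np.meshgrid(lon, lat)  # both (H, W)

    # Unit viewing directions: y up, z forward at longitude 0.
    dirs = np.stack(
        [np.cos(lat) * np.sin(lon), np.sin(lat), np.cos(lat) * np.cos(lon)], axis=-1
    )
    # Scale each unit direction by its depth value, then flatten row-major.
    points = dirs * depth[..., None].astype(np.float64)
    return points.reshape(-1, 3)


if __name__ == "__main__":
    pts = equirect_to_points(np.ones((512, 1024)))  # synthetic constant depth
    print(pts.shape)  # (524288, 3)
```

Per-point colors line up in the same row-major order as `np.asarray(output.rgb[0]).reshape(-1, 3)`.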

Finetuning

This checkpoint fine-tunes the previous ldm3d-4c checkpoint on two panoramic-image datasets:

- polyhaven: 585 images for the training set, 66 images for the validation set

- ihdri: 57 outdoor images for the training set, 7 outdoor images for the validation set.

These datasets were augmented using Text2Light to create a dataset containing 13852 training samples and 1606 validation samples.

To generate the depth maps for these samples we used DPT-large, and to generate the captions we used BLIP-2.
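The dataset sizes listed above can be cross-checked with a little arithmetic. This sketch totals the source panoramas per split and computes the average number of augmented samples per source image. The card does not say how the Text2Light augmentation was distributed across images, so the ratio is only an average; the names `SOURCE`, `AUGMENTED`, and `summarize` are illustrative.

```python
# Source panoramas per split, as listed in the Finetuning section.
SOURCE = {
    "train": {"polyhaven": 585, "ihdri": 57},
    "validation": {"polyhaven": 66, "ihdri": 7},
}
# Sample counts after Text2Light augmentation, as reported in the card.
AUGMENTED = {"train": 13852, "validation": 1606}


def summarize(source, augmented):
    """Return {split: (source_images, augmented_samples, average samples per image)}."""
    summary = {}
    for split, counts in source.items():
        n_src = sum(counts.values())
        summary[split] = (n_src, augmented[split], augmented[split] / n_src)
    return summary


for split, (n_src, n_aug, ratio) in summarize(SOURCE, AUGMENTED).items():
    print(f"{split}: {n_src} source images -> {n_aug} samples (~{ratio:.1f}x)")
# train: 642 source images -> 13852 samples (~21.6x)
# validation: 73 source images -> 1606 samples (~22.0x)
```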

BibTeX entry and citation info

```bibtex
@misc{stan2023ldm3d,
  title={LDM3D: Latent Diffusion Model for 3D},
  author={Gabriela Ben Melech Stan and Diana Wofk and Scottie Fox and Alex Redden and Will Saxton and Jean Yu and Estelle Aflalo and Shao-Yen Tseng and Fabio Nonato and Matthias Muller and Vasudev Lal},
  year={2023},
  eprint={2305.10853},
  archivePrefix={arXiv},
  primaryClass={cs.CV}
}
```