Commit 1eaac25 · Parent(s): 809846f

updates to the card

- README.md +73 -92
- assets/Figure_Evals_IDEFIX.png +0 -0
- assets/guarding_baguettes.png +0 -0

README.md CHANGED

|

@@ -15,58 +15,22 @@ datasets:

|

|

| 15 |

|

| 16 |

TODO: logo?

|

| 17 |

|

| 18 |

-

#

|

| 19 |

-

|

| 20 |

-

<!-- Provide a quick summary of what the model is/does. [Optional] -->

|

| 21 |

-

IDEFICS (**I**mage-aware **D**ecoder **E**nhanced à la **F**lamingo with **I**nterleaved **C**ross-attention**S**) is an open-access reproduction of Flamingo, a closed-source visual language model developed by Deepmind. The multimodal model accepts arbitrary sequences of image and text inputs and produces text outputs and is built solely on public available data and models.

|

| 22 |

-

IDEFICS (TODO) is on par with the original model on various image + text benchmarks, including visual question answering (open-ended and multiple choice), image captioning, and image classification when evaluated with in-context few-shot learning.

|

| 23 |

-

|

| 24 |

-

The model comes into two variants: a large [80 billion parameters version](https://huggingface.co/HuggingFaceM4/m4-80b) and a [9 billion parameters version](https://huggingface.co/HuggingFaceM4/m4-9b).

|

| 25 |

-

We also fine-tune these base models on a mixture of SFT datasets (TODO: find a more understandable characterization), which boosts the downstream performance while making the models more usable in conversational settings: (TODO: 80B-sfted) and (TODO: 9B sfted).

|

| 26 |

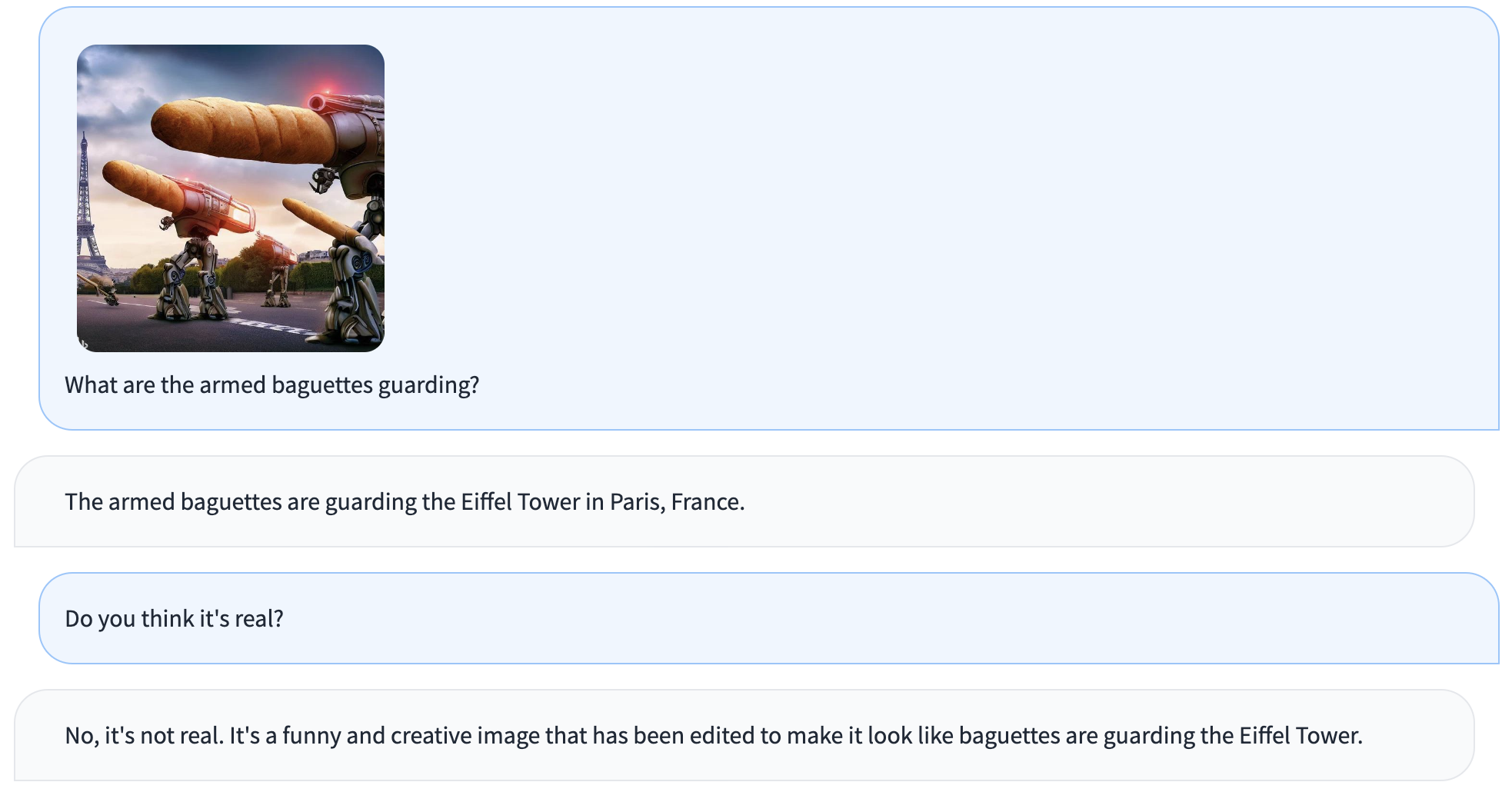

-

|

| 27 |

-

|

| 28 |

-

# Table of Contents

|

| 29 |

-

|

| 30 |

-

- [Model Card for m4-80b](#model-card-for--model_id-)

|

| 31 |

-

- [Table of Contents](#table-of-contents)

|

| 32 |

-

- [Model Details](#model-details)

|

| 33 |

-

- [Model Description](#model-description)

|

| 34 |

-

- [Uses](#uses)

|

| 35 |

-

- [Direct Use](#direct-use)

|

| 36 |

-

- [Downstream Use [Optional]](#downstream-use-optional)

|

| 37 |

-

- [Out-of-Scope Use](#out-of-scope-use)

|

| 38 |

-

- [Bias, Risks, and Limitations](#bias-risks-and-limitations)

|

| 39 |

-

- [Recommendations](#recommendations)

|

| 40 |

-

- [Training Details](#training-details)

|

| 41 |

-

- [Training Data](#training-data)

|

| 42 |

-

- [Training Procedure](#training-procedure)

|

| 43 |

-

- [Preprocessing](#preprocessing)

|

| 44 |

-

- [Speeds, Sizes, Times](#speeds-sizes-times)

|

| 45 |

-

- [Evaluation](#evaluation)

|

| 46 |

-

- [Testing Data, Factors & Metrics](#testing-data-factors--metrics)

|

| 47 |

-

- [Testing Data](#testing-data)

|

| 48 |

-

- [Factors](#factors)

|

| 49 |

-

- [Metrics](#metrics)

|

| 50 |

-

- [Results](#results)

|

| 51 |

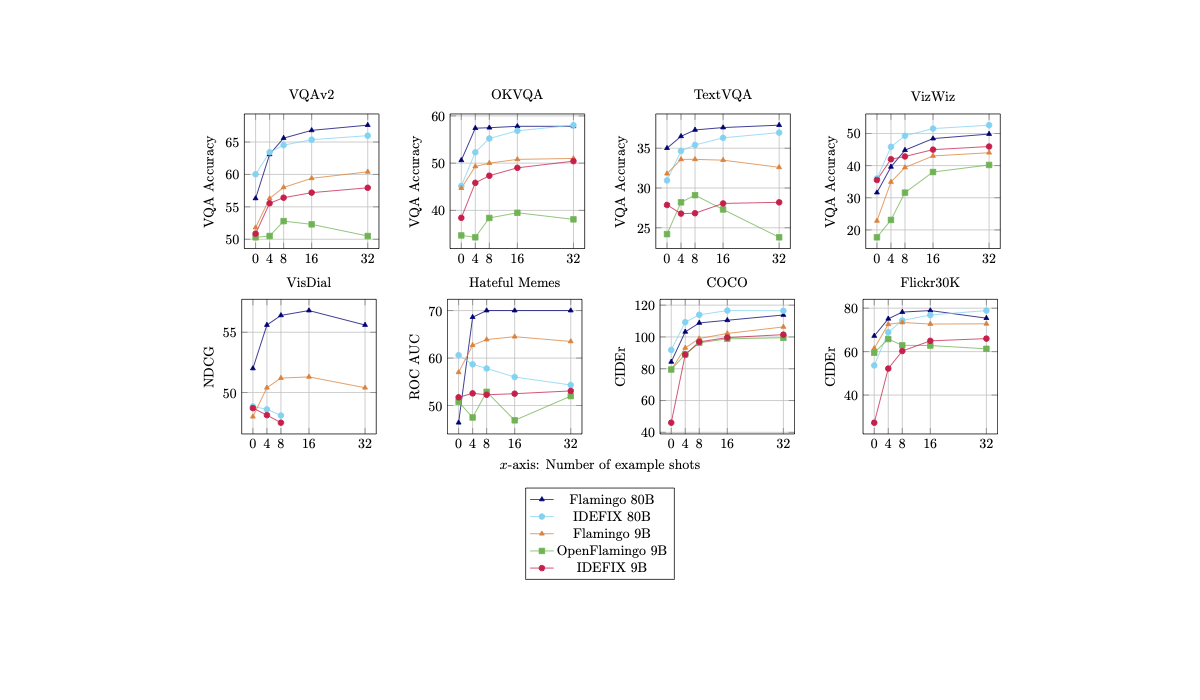

-

- [Model Examination](#model-examination)

|

| 52 |

-

- [Environmental Impact](#environmental-impact)

|

| 53 |

-

- [Technical Specifications [optional]](#technical-specifications-optional)

|

| 54 |

-

- [Model Architecture and Objective](#model-architecture-and-objective)

|

| 55 |

-

- [Compute Infrastructure](#compute-infrastructure)

|

| 56 |

-

- [Hardware](#hardware)

|

| 57 |

-

- [Software](#software)

|

| 58 |

-

- [Citation](#citation)

|

| 59 |

-

- [Glossary [optional]](#glossary-optional)

|

| 60 |

-

- [More Information [optional]](#more-information-optional)

|

| 61 |

-

- [Model Card Authors [optional]](#model-card-authors-optional)

|

| 62 |

-

- [Model Card Contact](#model-card-contact)

|

| 63 |

-

- [How to Get Started with the Model](#how-to-get-started-with-the-model)

|

| 64 |

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

| 65 |

|

| 66 |

# Model Details

|

| 67 |

|

| 68 |

- **Developed by:** Hugging Face

|

| 69 |

-

- **Model type:** Multi-modal model (text

|

| 70 |

- **Language(s) (NLP):** en

|

| 71 |

- **License:** other

|

| 72 |

- **Parent Model:** [laion/CLIP-ViT-H-14-laion2B-s32B-b79K](https://huggingface.co/laion/CLIP-ViT-H-14-laion2B-s32B-b79K) and [huggyllama/llama-65b](https://huggingface.co/huggyllama/llama-65b)

|

|

@@ -77,41 +41,61 @@ We also fine-tune these base models on a mixture of SFT datasets (TODO: find a m

|

|

| 77 |

- Original Paper: [Flamingo: a Visual Language Model for Few-Shot Learning](https://huggingface.co/papers/2204.14198)

|

| 78 |

|

| 79 |

IDEFICS is a large multimodal English model that takes sequences of interleaved images and texts as inputs and generates text outputs.

|

| 80 |

-

The model shows strong in-context few-shot learning capabilities

|

| 81 |

|

| 82 |

IDEFICS is built on top of two unimodal open-access pre-trained models to connect the two modalities. Newly initialized parameters in the form of Transformer blocks bridge the gap between the vision encoder and the language model. The model is trained on a mixture of image/text pairs and unstrucutred multimodal web documents.

|

| 83 |

|

|

|

|

| 84 |

|

| 85 |

# Uses

|

| 86 |

|

| 87 |

-

<!-- Address questions around how the model is intended to be used, including the foreseeable users of the model and those affected by the model. -->

|

| 88 |

-

|

| 89 |

The model can be used to perform inference on multimodal (image + text) tasks in which the input is composed of a text query/instruction along with one or multiple images. This model does not support image generation.

|

| 90 |

|

| 91 |

-

It is possible to fine-tune the base model on custom data for a specific use-case. We note that the instruction-fine-tuned models are significantly better at following instructions and thus should be prefered when using the models out-of-the-box.

|

| 92 |

|

| 93 |

-

The following screenshot is an example of interaction with the model:

|

| 94 |

|

| 95 |

-

|

| 96 |

|

| 97 |

|

| 98 |

# How to Get Started with the Model

|

| 99 |

|

| 100 |

Use the code below to get started with the model.

|

| 101 |

|

| 102 |

-

|

| 103 |

-

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

| 104 |

|

| 105 |

-

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

| 106 |

|

| 107 |

-

|

|

|

|

|

|

|

|

|

|

| 108 |

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

| 109 |

|

| 110 |

To quickly test your software without waiting for the huge model to download/load you can use `HuggingFaceM4/tiny-random-idefics` - it hasn't been trained and has random weights but it is very useful for quick testing.

|

| 111 |

|

| 112 |

# Training Details

|

| 113 |

|

| 114 |

-

We

|

| 115 |

|

| 116 |

The model is trained on the following data mixture of openly accessible English data:

|

| 117 |

|

|

@@ -119,14 +103,14 @@ The model is trained on the following data mixture of openly accessible English

|

|

| 119 |

|-------------|-----------------------------------------|---------------------------|---------------------------|--------|-----------------------------------------|

|

| 120 |

| [OBELICS](https://huggingface.co/datasets/HuggingFaceM4/OBELICS) | Unstructured Multimodal Web Documents | 114.9B | 353M | 1 | 73.85% |

|

| 121 |

| [Wikipedia](https://huggingface.co/datasets/wikipedia) | Unstructured Multimodal Web Documents | 3.192B | 39M | 3 | 6.15% |

|

| 122 |

-

| [LAION](https://huggingface.co/datasets/laion/laion2B-en) | Image-Text Pairs | 29.9B | 1.120B | 1 | 17.18%

|

| 123 |

| [PMD](https://huggingface.co/datasets/facebook/pmd) | Image-Text Pairs | 1.6B | 70M | 3 | 2.82% | |

|

| 124 |

|

| 125 |

-

**OBELICS** is an open, massive and curated collection of interleaved image-text web documents, containing 141M documents, 115B text tokens and 353M images. An interactive visualization of the dataset content is available [here](

|

| 126 |

|

| 127 |

-

**Wkipedia

|

| 128 |

|

| 129 |

-

**LAION** is a collection of image-text pairs collected from web pages from Common Crawl and texts are obtained using the alternative texts of each image. We deduplicated it (following [

|

| 130 |

|

| 131 |

**PMD** is a collection of publicly-available image-text pair datasets. The dataset contains pairs from Conceptual Captions, Conceptual Captions 12M, WIT, Localized Narratives, RedCaps, COCO, SBU Captions, Visual Genome and a subset of YFCC100M dataset. Due to a server failure at the time of the pre-processing, we did not include SBU captions.

|

| 132 |

|

|

@@ -164,15 +148,15 @@ We use the following hyper and training parameters:

|

|

| 164 |

|

| 165 |

# Evaluation

|

| 166 |

|

| 167 |

-

|

| 168 |

-

We closely follow the evaluation protocol of Flamingo and evaluate IDEFICS on a suite of downstream image + text benchmarks ranging from visual question answering to image captioning.

|

| 169 |

|

| 170 |

We compare our model to the original Flamingo along with [OpenFlamingo](openflamingo/OpenFlamingo-9B-vitl-mpt7b), another open-source reproduction.

|

| 171 |

|

| 172 |

-

We perform checkpoint selection based on validation sets of

|

| 173 |

|

| 174 |

-

|

| 175 |

|

|

|

|

| 176 |

| Model | Shots | VQAv2 (OE VQA acc) | OKVQA (OE VQA acc) | TextVQA (OE VQA acc) | VizWiz (OE VQA acc) | TextCaps (CIDEr) | Coco (CIDEr) | NoCaps (CIDEr) | Flickr (CIDEr) | ImageNet1k (accuracy) | VisDial (NDCG) | HatefulMemes (ROC AUC) | ScienceQA (accuracy) | RenderedSST2 (accuracy) | Winoground (group (text/image)) |

|

| 177 |

|:-----------|--------:|---------------------:|---------------------:|-----------------------:|----------------------:|-------------------:|---------------:|-----------------:|-----------------:|------------------------:|-----------------:|-------------------------:|-----------------------:|--------------------------:|----------------------------------:|

|

| 178 |

| IDEFIX 80B | 0 | 60.0 | 45.2 | 30.9 | 36.0 | 56.8 | 91.8 | 65.0 | 53.7 | 74.3 | 48.8 | 60.6 | 68.9 | 60.5 | 8.0 (18.8/22.5)|

|

|

@@ -187,14 +171,20 @@ TODO: beautiful plots of shots scaling laws.

|

|

| 187 |

| | 16 | 57.2 | 49.0 | 28.1 | 45.0 | 68.0 | 99.6 | 87.2 | 65.0 | - | - | 52.5 | - | 66.0 | - |

|

| 188 |

| | 32 | 57.9 | 50.4 | 28.2 | 45.9 | 69.7 | 101.5 | 88.6 | 66.0 | - | - | 53.1 | - | 63.4 | - |

|

| 189 |

|

|

|

|

|

|

|

|

|

|

|

|

|

| 190 |

# Technical Specifications

|

| 191 |

|

|

|

|

|

|

|

|

|

|

|

|

|

| 192 |

## Hardware

|

| 193 |

|

| 194 |

The training was performed on an AWS SageMaker cluster with 64 nodes of 8x80GB A100 GPUs (512 GPUs total). The cluster uses the current EFA network which provides about 340GBps throughput.

|

| 195 |

|

| 196 |

-

As the network is quite slow for the needs of DeepSpeed ZeRO-3 we were only able to clock ~90 TFLOPs.

|

| 197 |

-

|

| 198 |

## Software

|

| 199 |

|

| 200 |

The training software is built on top of HuggingFace Transformers + Accelerate, and DeepSpeed ZeRO-3 for training, and [WebDataset](https://github.com/webdataset/webdataset) for data loading.

|

|

@@ -202,8 +192,6 @@ The training software is built on top of HuggingFace Transformers + Accelerate,

|

|

| 202 |

|

| 203 |

# Bias, Risks, and Limitations

|

| 204 |

|

| 205 |

-

<!-- This section is meant to convey both technical and sociotechnical limitations. -->

|

| 206 |

-

|

| 207 |

Significant research has explored bias and fairness issues with language models (see, e.g., [Sheng et al. (2021)](https://aclanthology.org/2021.acl-long.330.pdf) and [Bender et al. (2021)](https://dl.acm.org/doi/pdf/10.1145/3442188.3445922)).

|

| 208 |

As a derivative of such a language model, IDEFICS can produce texts that include disturbing and harmful stereotypes across protected classes; identity characteristics; and sensitive, social, and occupational groups.

|

| 209 |

Moreover, IDEFICS can produce factually incorrect texts, and should not be relied on to produce factually accurate information.

|

|

@@ -214,35 +202,28 @@ TODO: give 4/5 representative examples

|

|

| 214 |

To measure IDEFICS's ability to recognize socilogical (TODO: find a better adjective) attributes, we evaluate the model on FairFace...

|

| 215 |

TODO: include FairFace numbers

|

| 216 |

|

|

|

|

| 217 |

|

| 218 |

-

|

| 219 |

-

|

| 220 |

-

<!-- Total emissions (in grams of CO2eq) and additional considerations, such as electricity usage, go here. Edit the suggested text below accordingly -->

|

| 221 |

-

|

| 222 |

-

Carbon emissions can be estimated using the [Machine Learning Impact calculator](https://mlco2.github.io/impact#compute) presented in [Lacoste et al. (2019)](https://arxiv.org/abs/1910.09700).

|

| 223 |

-

|

| 224 |

-

- **Hardware Type:** 64 nodes of 8x 80GB A100 gpus, EFA network

|

| 225 |

-

- **Hours used:** ~672 node hours

|

| 226 |

-

- **Cloud Provider:** AWS Sagemaker

|

| 227 |

-

- **Carbon Emitted:** unknown

|

| 228 |

|

|

|

|

| 229 |

|

| 230 |

# Citation

|

| 231 |

|

| 232 |

-

<!-- If there is a paper or blog post introducing the model, the APA and Bibtex information for that should go in this section. -->

|

| 233 |

-

|

| 234 |

**BibTeX:**

|

| 235 |

|

| 236 |

-

|

| 237 |

-

|

| 238 |

-

|

| 239 |

-

|

| 240 |

-

|

| 241 |

-

|

| 242 |

-

|

| 243 |

-

|

| 244 |

-

|

| 245 |

-

|

|

|

|

|

|

|

| 246 |

|

| 247 |

V, i, c, t, o, r, ,, , S, t, a, s, ,, , X, X, X

|

| 248 |

|

|

|

|

TODO: logo?

# IDEFICS

IDEFICS (**I**mage-aware **D**ecoder **E**nhanced à la **F**lamingo with **I**nterleaved **C**ross-attention**S**) is an open-access reproduction of [Flamingo](https://huggingface.co/papers/2204.14198), a closed-source visual language model developed by DeepMind. Like GPT-4, the multimodal model accepts arbitrary sequences of image and text inputs and produces text outputs. IDEFICS is built solely on publicly available data and models.

The model can answer questions about images, describe visual content, create stories grounded in multiple images, or simply behave as a pure language model without visual inputs.

IDEFICS is on par with the original model on various image-text benchmarks, including visual question answering (open-ended and multiple choice), image captioning, and image classification when evaluated with in-context few-shot learning. It comes in two variants: a large [80-billion-parameter](https://huggingface.co/HuggingFaceM4/idefics-80b) version and a [9-billion-parameter](https://huggingface.co/HuggingFaceM4/idefics-9b) version.

We also fine-tune these base models on a mixture of supervised and instruction fine-tuning datasets, which boosts downstream performance while making the models more usable in conversational settings: [idefics-80b-instruct](https://huggingface.co/HuggingFaceM4/idefics-80b-instruct) and [idefics-9b-instruct](https://huggingface.co/HuggingFaceM4/idefics-9b-instruct). As they reach higher performance, we recommend trying these instructed versions first.

Read more about some of the technical challenges encountered while training IDEFICS [here](https://github.com/huggingface/m4-logs/blob/master/memos/README.md).

# Model Details

- **Developed by:** Hugging Face
- **Model type:** Multi-modal model (image+text)
- **Language(s) (NLP):** en
- **License:** other
- **Parent Model:** [laion/CLIP-ViT-H-14-laion2B-s32B-b79K](https://huggingface.co/laion/CLIP-ViT-H-14-laion2B-s32B-b79K) and [huggyllama/llama-65b](https://huggingface.co/huggyllama/llama-65b)
- Original Paper: [Flamingo: a Visual Language Model for Few-Shot Learning](https://huggingface.co/papers/2204.14198)

IDEFICS is a large multimodal English model that takes sequences of interleaved images and texts as inputs and generates text outputs. The model shows strong in-context few-shot learning capabilities and is on par with the closed-source model. This makes IDEFICS a robust starting point to fine-tune multimodal models on custom data.

To connect the two modalities, IDEFICS is built on top of two unimodal, open-access pre-trained models: a vision encoder and a language model. Newly initialized parameters in the form of Transformer blocks bridge the gap between them. The model is trained on a mixture of image-text pairs and unstructured multimodal web documents.

IDEFICS-instruct is obtained by further training IDEFICS on supervised fine-tuning and instruction fine-tuning datasets. This significantly improves downstream performance (making [idefics-9b-instruct](https://huggingface.co/HuggingFaceM4/idefics-9b-instruct) a very strong model at the 9-billion-parameter scale) while making the model more suitable for conversation.
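As a rough illustration of how such bridging blocks can be added without disturbing the frozen language model, the sketch below implements, in plain Python and for a single text token, the tanh-gated residual cross-attention described in the Flamingo paper. It assumes IDEFICS keeps Flamingo's gate (a learnable scalar `alpha` initialized at 0) and deliberately omits the learned query/key/value projections, multiple heads, masking, and the gated feed-forward sublayer. The function names are hypothetical and this is not the IDEFICS implementation.

```python
import math
from typing import List

Vector = List[float]


def dot(a: Vector, b: Vector) -> float:
    return sum(x * y for x, y in zip(a, b))


def softmax(scores: List[float]) -> List[float]:
    # Subtract the max score for numerical stability.
    m = max(scores)
    exps = [math.exp(s - m) for s in scores]
    total = sum(exps)
    return [e / total for e in exps]


def cross_attend(text_token: Vector, visual_tokens: List[Vector]) -> Vector:
    """Scaled dot-product attention of one text token over the visual tokens.

    Simplification: the text token is used directly as the query and the
    visual tokens as both keys and values (no learned projections).
    """
    d = len(text_token)
    weights = softmax([dot(text_token, v) / math.sqrt(d) for v in visual_tokens])
    return [sum(w * v[i] for w, v in zip(weights, visual_tokens)) for i in range(d)]


def gated_cross_attention(text_token: Vector, visual_tokens: List[Vector], alpha: float) -> Vector:
    """Residual update x + tanh(alpha) * attn(x, visual), as in Flamingo."""
    attended = cross_attend(text_token, visual_tokens)
    gate = math.tanh(alpha)
    return [x + gate * a for x, a in zip(text_token, attended)]


x = [0.5, -1.0, 2.0]
visual = [[1.0, 0.0, 0.0], [0.0, 1.0, 1.0]]

# With alpha = 0 (its assumed initial value), tanh(0) = 0 and the frozen
# language model's hidden state passes through unchanged.
print(gated_cross_attention(x, visual, alpha=0.0))  # [0.5, -1.0, 2.0]
print(gated_cross_attention(x, visual, alpha=1.0))
```

Because `tanh(0) = 0`, a freshly initialized block acts as an identity map, so training starts from the unchanged language model and the gate learns how much visual information to let through.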

# Uses

The model can be used to perform inference on multimodal (image + text) tasks in which the input is composed of a text query or instruction along with one or multiple images. This model does not support image generation.

It is possible to fine-tune the base model on custom data for a specific use case. We note that the instruction-fine-tuned models are significantly better at following instructions from users and thus should be preferred when using the models out of the box.

The following screenshot is an example of interaction with the instructed model:

<img src="./assets/guarding_baguettes.png" width="35%">

# How to Get Started with the Model

Use the code below to get started with the model.

```python
import torch
from transformers import IdeficsForVisionText2Text, AutoProcessor

device = "cuda" if torch.cuda.is_available() else "cpu"

checkpoint = "HuggingFaceM4/idefics-9b"
# Load the weights in bfloat16, which halves memory use compared to float32.
model = IdeficsForVisionText2Text.from_pretrained(checkpoint, torch_dtype=torch.bfloat16).to(device)
processor = AutoProcessor.from_pretrained(checkpoint)

# We feed to the model an arbitrary sequence of text strings and images. Images can be either URLs or PIL Images.
prompts = [
    [
        "https://upload.wikimedia.org/wikipedia/commons/8/86/Id%C3%A9fix.JPG",
        "In this picture from Asterix and Obelix, we can see"
    ],
]

# --batched mode
inputs = processor(prompts, return_tensors="pt").to(device)
# --single sample mode
# inputs = processor(prompts[0], return_tensors="pt").to(device)

# max_length caps the total sequence length, prompt tokens included.
generated_ids = model.generate(**inputs, max_length=100)
generated_text = processor.batch_decode(generated_ids, skip_special_tokens=True)
for i, t in enumerate(generated_text):
    print(f"{i}:\n{t}\n")
```

To quickly test your software without waiting for the huge model to download and load, you can use `HuggingFaceM4/tiny-random-idefics`. It has not been trained and has random weights, but it is very useful for quick testing.

# Training Details

We closely follow the training procedure laid out in [Flamingo](https://huggingface.co/papers/2204.14198). We combine two open-source pre-trained models ([laion/CLIP-ViT-H-14-laion2B-s32B-b79K](https://huggingface.co/laion/CLIP-ViT-H-14-laion2B-s32B-b79K) and [huggyllama/llama-65b](https://huggingface.co/huggyllama/llama-65b)) by connecting them with newly initialized Transformer blocks. The pre-trained backbones are frozen while we train the newly initialized parameters.

The model is trained on the following mixture of openly accessible English data:

| Data Source | Type of Data | Number of Tokens in Source | Number of Images in Source | Epochs | Effective Proportion in Number of Tokens |
|-------------|-----------------------------------------|---------------------------|---------------------------|--------|-----------------------------------------|
| [OBELICS](https://huggingface.co/datasets/HuggingFaceM4/OBELICS) | Unstructured Multimodal Web Documents | 114.9B | 353M | 1 | 73.85% |
| [Wikipedia](https://huggingface.co/datasets/wikipedia) | Unstructured Multimodal Web Documents | 3.192B | 39M | 3 | 6.15% |
| [LAION](https://huggingface.co/datasets/laion/laion2B-en) | Image-Text Pairs | 29.9B | 1.120B | 1 | 17.18% |
| [PMD](https://huggingface.co/datasets/facebook/pmd) | Image-Text Pairs | 1.6B | 70M | 3 | 2.82% |

**OBELICS** is an open, massive, and curated collection of interleaved image-text web documents, containing 141M documents, 115B text tokens, and 353M images. An interactive visualization of the dataset content is available [here](https://atlas.nomic.ai/map/f2fba2aa-3647-4f49-a0f3-9347daeee499/ee4a84bd-f125-4bcc-a683-1b4e231cb10f).

**Wikipedia**. We used the English dump of Wikipedia created on February 20th, 2023.

**LAION** is a collection of image-text pairs collected from Common Crawl web pages, where the texts are the alternative texts (alt-texts) of the images. We deduplicated it (following [Webster et al., 2023](https://arxiv.org/abs/2303.12733)), filtered it, and removed the opted-out images using the [Spawning API](https://api.spawning.ai/spawning-api).

**PMD** is a collection of publicly available image-text pair datasets. It contains pairs from Conceptual Captions, Conceptual Captions 12M, WIT, Localized Narratives, RedCaps, COCO, SBU Captions, Visual Genome, and a subset of the YFCC100M dataset. Due to a server failure at the time of pre-processing, we did not include SBU Captions.
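How the four sources are interleaved during training is not described here. Under the assumption that the effective proportions act as sampling probabilities over equal-sized chunks of training tokens, a mixture sampler can be sketched with Python's `random.choices`. `MIXTURE` and `sample_sources` are hypothetical names, and this is not the authors' WebDataset-based loader.

```python
import random
from collections import Counter

# Effective proportions (in % of tokens) copied from the data mixture table.
MIXTURE = {
    "OBELICS": 73.85,
    "Wikipedia": 6.15,
    "LAION": 17.18,
    "PMD": 2.82,
}


def sample_sources(n: int, seed: int = 0) -> list:
    """Draw the source of n equal-sized token chunks, weighted by MIXTURE."""
    rng = random.Random(seed)
    names = list(MIXTURE)
    weights = list(MIXTURE.values())
    return rng.choices(names, weights=weights, k=n)


draws = sample_sources(100_000)
counts = Counter(draws)
for name, pct in MIXTURE.items():
    observed = 100 * counts[name] / len(draws)
    print(f"{name:10s} target {pct:5.2f}%  observed {observed:5.2f}%")
```

Since each draw stands for the same number of tokens in this sketch, the observed shares converge to the token proportions in the table as the number of draws grows.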

# Evaluation

We follow the evaluation protocol of Flamingo and evaluate IDEFICS on a suite of downstream image-text benchmarks ranging from visual question answering to image captioning.

We compare our model to the original Flamingo along with [OpenFlamingo](https://huggingface.co/openflamingo/OpenFlamingo-9B-vitl-mpt7b), another open-source reproduction.

We perform checkpoint selection based on the validation sets of VQAv2, TextVQA, OKVQA, VizWiz, Visual Dialogue, COCO, Flickr30k, and HatefulMemes. We select the checkpoint at step 65,000 for IDEFICS-9B and at step 37,500 for IDEFICS (80B). The models are evaluated with in-context few-shot learning, where the priming instances are selected at random from a support set. We do not use any form of ensembling.
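To make the random few-shot protocol concrete, the sketch below assembles an interleaved prompt in the same list-of-strings-and-image-URLs format used in the getting-started example: `shots` priming instances drawn at random (without repetition) from a support set, followed by the query. The `Question:`/`Answer:` template, the `SupportExample` type, and the `example.com` URLs are hypothetical; the exact prompt templates behind the reported numbers are not given here.

```python
import random
from dataclasses import dataclass
from typing import List


@dataclass
class SupportExample:
    image_url: str
    question: str
    answer: str


def build_few_shot_prompt(
    support: List[SupportExample],
    query_image_url: str,
    query_question: str,
    shots: int,
    seed: int = 0,
) -> List[str]:
    """Interleave `shots` randomly chosen priming examples with the query."""
    rng = random.Random(seed)
    priming = rng.sample(support, shots)  # random selection, no repeats
    prompt: List[str] = []
    for ex in priming:
        prompt.append(ex.image_url)
        prompt.append(f"Question: {ex.question} Answer: {ex.answer}\n")
    # The query ends with an open "Answer:" for the model to complete.
    prompt.append(query_image_url)
    prompt.append(f"Question: {query_question} Answer:")
    return prompt


support_set = [
    SupportExample(f"https://example.com/img{i}.jpg", f"What is shown in image {i}?", f"object {i}")
    for i in range(10)
]
prompt = build_few_shot_prompt(support_set, "https://example.com/query.jpg", "What is shown?", shots=4)
for item in prompt:
    print(repr(item))
```

A list like `prompt` could then be passed to the processor in place of the single-sample prompt shown earlier.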

<img src="./assets/Figure_Evals_IDEFIX.png" width="55%">

TODO: update this table

| Model | Shots | VQAv2 (OE VQA acc) | OKVQA (OE VQA acc) | TextVQA (OE VQA acc) | VizWiz (OE VQA acc) | TextCaps (CIDEr) | COCO (CIDEr) | NoCaps (CIDEr) | Flickr (CIDEr) | ImageNet1k (accuracy) | VisDial (NDCG) | HatefulMemes (ROC AUC) | ScienceQA (accuracy) | RenderedSST2 (accuracy) | Winoground (group (text/image)) |
|:-----------|--------:|---------------------:|---------------------:|-----------------------:|----------------------:|-------------------:|---------------:|-----------------:|-----------------:|------------------------:|-----------------:|-------------------------:|-----------------------:|--------------------------:|----------------------------------:|
| IDEFICS 80B | 0 | 60.0 | 45.2 | 30.9 | 36.0 | 56.8 | 91.8 | 65.0 | 53.7 | 74.3 | 48.8 | 60.6 | 68.9 | 60.5 | 8.0 (18.8/22.5) |
| | 16 | 57.2 | 49.0 | 28.1 | 45.0 | 68.0 | 99.6 | 87.2 | 65.0 | - | - | 52.5 | - | 66.0 | - |
| | 32 | 57.9 | 50.4 | 28.2 | 45.9 | 69.7 | 101.5 | 88.6 | 66.0 | - | - | 53.1 | - | 63.4 | - |

We also report results where the priming samples are selected to be similar (i.e., close in a vector space) to the queried instance.
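Selecting priming samples that are close in a vector space is a nearest-neighbour search over embeddings; the Flamingo paper calls this retrieval-based in-context example selection (RICES). The sketch below ranks support examples by cosine similarity to the query embedding and keeps the top `k`. The embeddings are toy 2-D vectors; in practice they would come from an image encoder (for example CLIP features, an assumption since the encoder used is not specified here), and `select_similar` is a hypothetical name.

```python
import math
from typing import List, Sequence


def cosine_similarity(a: Sequence[float], b: Sequence[float]) -> float:
    dot = sum(x * y for x, y in zip(a, b))
    norm_a = math.sqrt(sum(x * x for x in a))
    norm_b = math.sqrt(sum(y * y for y in b))
    return dot / (norm_a * norm_b)


def select_similar(query: Sequence[float], support: List[Sequence[float]], k: int) -> List[int]:
    """Return indices of the k support embeddings most similar to the query.

    Indices are ordered from most to least similar; ties keep support order
    because Python's sort is stable even with reverse=True.
    """
    scores = [cosine_similarity(query, emb) for emb in support]
    ranked = sorted(range(len(support)), key=lambda i: scores[i], reverse=True)
    return ranked[:k]


support_embeddings = [
    [1.0, 0.0],   # 0: same direction as the query
    [0.0, 1.0],   # 1: orthogonal
    [0.7, 0.7],   # 2: 45 degrees away
    [-1.0, 0.0],  # 3: opposite direction
]
print(select_similar([2.0, 0.0], support_embeddings, k=2))  # [0, 2]
```

Compared with random selection, this trades an extra embedding pass over the support set for priming examples that resemble the query.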

TODO: table with RICES shots

# Technical Specifications

- **Hardware Type:** 64 nodes of 8x 80GB A100 GPUs, EFA network
- **Hours used:** ~672 node hours
- **Cloud Provider:** AWS SageMaker
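Taking the reported node hours at face value, they convert to GPU-hours as follows (our arithmetic, assuming all 8 GPUs of every node were in use throughout):

$$
672 \text{ node-hours} \times 8 \ \tfrac{\text{GPUs}}{\text{node}} = 5{,}376 \text{ GPU-hours}
$$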

## Hardware

The training was performed on an AWS SageMaker cluster with 64 nodes of 8x80GB A100 GPUs (512 GPUs total). The cluster uses the current EFA network, which provides about 340GBps of throughput.

## Software

The training software is built on top of Hugging Face Transformers and Accelerate, uses DeepSpeed ZeRO-3 for training, and uses [WebDataset](https://github.com/webdataset/webdataset) for data loading.

# Bias, Risks, and Limitations

Significant research has explored bias and fairness issues with language models (see, e.g., [Sheng et al. (2021)](https://aclanthology.org/2021.acl-long.330.pdf) and [Bender et al. (2021)](https://dl.acm.org/doi/pdf/10.1145/3442188.3445922)).
As a derivative of such a language model, IDEFICS can produce text that includes disturbing and harmful stereotypes across protected classes; identity characteristics; and sensitive, social, and occupational groups.
Moreover, IDEFICS can produce factually incorrect text and should not be relied on to produce factually accurate information.

To measure IDEFICS's ability to recognize sociological (TODO: find a better adjective) attributes, we evaluate the model on FairFace...
TODO: include FairFace numbers

# License

The model is built on top of two pre-trained models: [laion/CLIP-ViT-H-14-laion2B-s32B-b79K](https://huggingface.co/laion/CLIP-ViT-H-14-laion2B-s32B-b79K) and [huggyllama/llama-65b](https://huggingface.co/huggyllama/llama-65b). The first was released under an MIT license, while the second was released under a specific noncommercial license focused on research purposes. As such, users should comply with that license by applying directly to [Meta's form](https://docs.google.com/forms/d/e/1FAIpQLSfqNECQnMkycAp2jP4Z9TFX0cGR4uf7b_fBxjY_OjhJILlKGA/viewform).

We release the additional weights we trained under an MIT license.

# Citation

**BibTeX:**

```bibtex
@misc{laurençon2023obelisc,
      title={OBELISC: An Open Web-Scale Filtered Dataset of Interleaved Image-Text Documents},
      author={Hugo Laurençon and Lucile Saulnier and Léo Tronchon and Stas Bekman and Amanpreet Singh and Anton Lozhkov and Thomas Wang and Siddharth Karamcheti and Alexander M. Rush and Douwe Kiela and Matthieu Cord and Victor Sanh},
      year={2023},
      eprint={2306.16527},
      archivePrefix={arXiv},
      primaryClass={cs.IR}
}
```

# Model Card Authors

Victor, Stas, XXX

assets/Figure_Evals_IDEFIX.png ADDED

assets/guarding_baguettes.png ADDED