metadata

license: apache-2.0

base_model: bert-base-multilingual-cased

datasets:

- HiTZ/multilingual-abstrct

language:

- en

- es

- fr

- it

metrics:

- f1

pipeline_tag: token-classification

library_name: transformers

widget:

- text: >-

In the comparison of responders versus patients with both SD (6m) and PD,

responders indicated better physical well-being (P=.004) and mood (P=.02)

at month 3.

- text: >-

En la comparación de los que respondieron frente a los pacientes tanto con

SD (6m) como con EP, los que respondieron indicaron un mejor bienestar

físico (P=.004) y estado de ánimo (P=.02) en el mes 3.

- text: >-

Dans la comparaison entre les répondeurs et les patients atteints de SD

(6m) et de PD, les répondeurs ont indiqué un meilleur bien-être physique

(P=.004) et une meilleure humeur (P=.02) au mois 3.

- text: >-

Nel confronto tra i responder e i pazienti con SD (6m) e PD, i responder

hanno indicato un migliore benessere fisico (P=.004) e umore (P=.02) al

terzo mese.

mBERT for multilingual Argument Detection in the Medical Domain

This model is a fine-tuned version of bert-base-multilingual-cased for the argument component detection task on AbstRCT data in English, Spanish, French and Italian (https://huggingface.co/datasets/HiTZ/multilingual-abstrct).

Performance

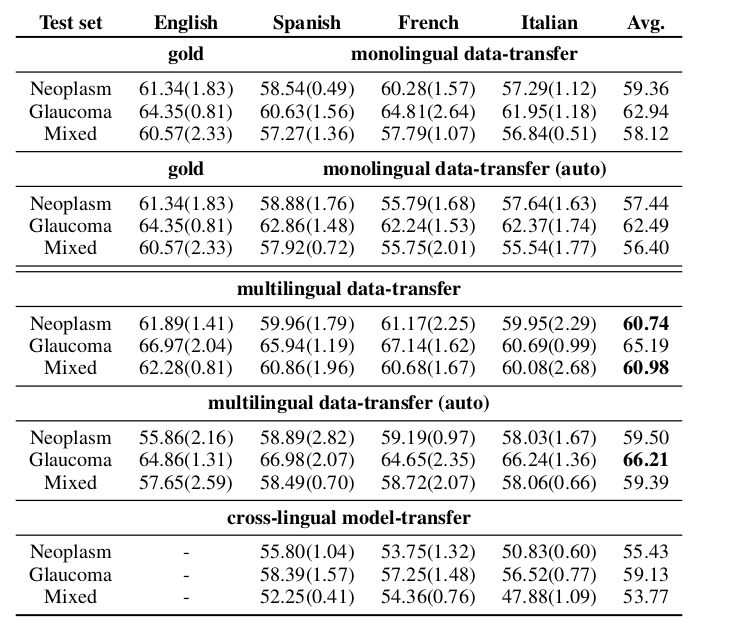

F1-macro scores (at sequence level) and their averages per test set from the argument component detection results of monolingual, monolingual automatically post-processed, multilingual, multilingual automatically post-processed, and crosslingual experiments.

Label Dictionary

"id2label": {

"0": "B-Claim",

"1": "B-Premise",

"2": "I-Claim",

"3": "I-Premise",

"4": "O"

}

Training hyperparameters

The following hyperparameters were used during training:

- learning_rate: 5e-05

- train_batch_size: 16

- eval_batch_size: 8

- seed: 42

- optimizer: Adam with betas=(0.9,0.999) and epsilon=1e-08

- lr_scheduler_type: linear

- num_epochs: 3.0

Framework versions

- Transformers 4.40.0.dev0

- Pytorch 2.1.2+cu121

- Datasets 2.16.1

- Tokenizers 0.15.2

Contact: Anar Yeginbergen and Rodrigo Agerri HiTZ Center - Ixa, University of the Basque Country UPV/EHU