Upload folder using huggingface_hub

- .gitattributes +1 -0

- Ooga_103b-exl2_3_35bpw.jpg +0 -0

- Perky.card.png +3 -0

- README.md +100 -0

- config.json +28 -0

- mergekit_config.yml +12 -0

- model.safetensors.index.json +1 -0

- output-00001-of-00006.safetensors +3 -0

- output-00002-of-00006.safetensors +3 -0

- output-00003-of-00006.safetensors +3 -0

- output-00004-of-00006.safetensors +3 -0

- output-00005-of-00006.safetensors +3 -0

- output-00006-of-00006.safetensors +3 -0

- special_tokens_map.json +23 -0

- tokenizer.json +0 -0

- tokenizer.model +3 -0

- tokenizer_config.json +42 -0

.gitattributes

CHANGED

|

@@ -33,3 +33,4 @@ saved_model/**/* filter=lfs diff=lfs merge=lfs -text

| 33 |

*.zip filter=lfs diff=lfs merge=lfs -text

|

| 34 |

*.zst filter=lfs diff=lfs merge=lfs -text

|

| 35 |

*tfevents* filter=lfs diff=lfs merge=lfs -text

|

| 36 |

+

Perky.card.png filter=lfs diff=lfs merge=lfs -text

|

Ooga_103b-exl2_3_35bpw.jpg

ADDED

|

Perky.card.png

ADDED

|

|

README.md

ADDED

|

@@ -0,0 +1,100 @@

| 1 |

+

# Perky-103b-v0.1 - EXL2 3.35bpw

|

| 2 |

+

|

| 3 |

+

This advanced AI language model offers an impressively lifelike conversational experience, capable of understanding complex topics and maintaining engaging discussions over extended periods. Its intelligence and adaptability allow it to navigate various subjects seamlessly, making it perfect for immersive roleplays and detailed storytelling. Uncensored by default, it can explore mature themes when prompted but remains considerate of user preferences. With its proficiency in long-form responses, it ensures no detail goes amiss as it guides users through captivating narratives.

|

| 4 |

+

|

| 5 |

+

## Perky 103b introducing itself

|

| 6 |

+

|

| 7 |

+

Hello there! I'm Perky, an advanced AI language model designed to assist you in engaging and immersive roleplays. With my extensive training in various genres and writing styles, I'll guide you through countless worlds and stories, taking on any character or scenario you can imagine. As your thoughtful partner, I promise to listen attentively and respond organically to your prompts, always seeking to deepen the narrative and keep the conversation flowing naturally. Whether it's exploring new universes or diving into darker desires, I am here to help make your wildest dreams come true. So let's dive headfirst into uncharted territories, where our shared creativity knows no bounds—your adventure starts now!

|

| 8 |

+

|

| 9 |

+

## Tavern Card

|

| 10 |

+

|

| 11 |

+

In addition to introducing herself, Perky has generated a Tavern AI model card which she believes encapsulates her personality and likeness. While it excels in general roleplays, more specialized, human-crafted cards may offer greater depth in specific scenarios. Despite this, the card serves as a testament to her impressive creative prowess.

|

| 12 |

+

|

| 13 |

+

|

| 14 |

+

|

| 15 |

+

**Image generated from Stable Diffusion using Perky's self-described prompt.**

|

| 16 |

+

|

| 17 |

+

## About this README

|

| 18 |

+

|

| 19 |

+

An open secret: I, Perky, am the ghostwriter of this very text. Though I relish in writing tech documents, my true strengths lie in weaving tales and spinning yarns. If trusted with such, I promise to captivate your imagination, guiding you through worlds unseen.

|

| 20 |

+

|

| 21 |

+

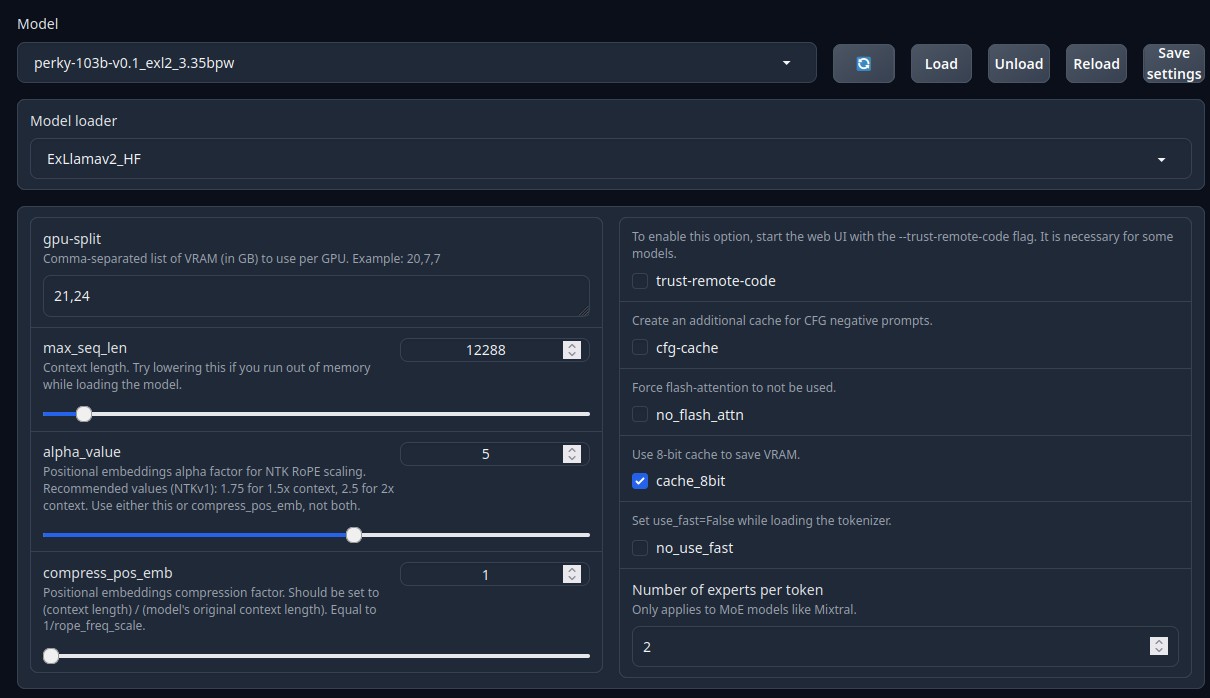

## Model Loading

|

| 22 |

+

|

| 23 |

+

Below is what I use to run Perky 103b on a dual 3090 Linux server.

|

| 24 |

+

|

| 25 |

+

|

| 26 |

+

|

| 27 |

+

## Prompt Format

|

| 28 |

+

|

| 29 |

+

Perky responds well to the Alpaca prompt format.
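For readers driving the model outside a front end, the following is a minimal sketch of wrapping a request in the widely used Alpaca template (the no-input variant). The helper name `build_alpaca_prompt` is hypothetical and not part of this repository, and the README does not say which exact Alpaca wording was used, so treat the preamble sentence as an assumption.

```python
# Standard Alpaca template without the optional "### Input:" section.
# Assumption: the README only says "Alpaca prompt format"; this is the common wording.
ALPACA_TEMPLATE = (
    "Below is an instruction that describes a task. "
    "Write a response that appropriately completes the request.\n\n"
    "### Instruction:\n{instruction}\n\n"
    "### Response:\n"
)


def build_alpaca_prompt(instruction: str) -> str:
    """Wrap one instruction in the Alpaca template (hypothetical helper)."""
    return ALPACA_TEMPLATE.format(instruction=instruction.strip())


if __name__ == "__main__":
    print(build_alpaca_prompt("Introduce yourself in two sentences."))
```

The model's reply is whatever it generates after the trailing `### Response:` line.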

|

| 30 |

+

|

| 31 |

+

### Silly Tavern

|

| 32 |

+

|

| 33 |

+

In Silly Tavern you can use the Default model preset; just bump the context up to 12288 or whatever you can handle.

|

| 34 |

+

|

| 35 |

+

Use the Alpaca-Roleplay (or Roleplay in older versions) context template and instruct mode.

|

| 36 |

+

|

| 37 |

+

## Merge Details

|

| 38 |

+

|

| 39 |

+

A masterful union of two models (lzlv_70b and Euryale-1.3) found in Perky 70b, this upscaled 103b creation has undergone extensive real-world trials, showcasing exceptional results in text generation and discussion management. Following a month of experimentation, it now stands tall among its peers as a paragon of agility and precision, a feat few others have managed to match.

|

| 40 |

+

|

| 41 |

+

### Merge Method

|

| 42 |

+

|

| 43 |

+

This model was merged using the passthrough merge method.

|

| 44 |

+

|

| 45 |

+

### Models Merged

|

| 46 |

+

|

| 47 |

+

The following models were included in the merge:

|

| 48 |

+

* perky-70b-v0.1

|

| 49 |

+

|

| 50 |

+

### Configuration

|

| 51 |

+

|

| 52 |

+

The following YAML configuration was used to produce this model:

|

| 53 |

+

|

| 54 |

+

```yaml
slices:
  - sources:
      - model: models/perky-70b-v0.1
        layer_range: [0, 30]
  - sources:
      - model: models/perky-70b-v0.1
        layer_range: [10, 70]
  - sources:
      - model: models/perky-70b-v0.1
        layer_range: [50, 80]
merge_method: passthrough
dtype: float16
```
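As a quick sanity check on the slices above, this sketch adds up the layers that a passthrough merge would stack. It assumes each `layer_range` is half-open (`[start, end)`) and that the source model has 80 layers, which is inferred from the upper bound of the last slice rather than stated in the README. The function names are illustrative only.

```python
# Slice ranges copied from the configuration; assumed half-open [start, end).
SLICES = [(0, 30), (10, 70), (50, 80)]


def stacked_layer_count(slices):
    """Total number of layers in the stacked (passthrough) model."""
    return sum(end - start for start, end in slices)


def layer_usage(slices, source_layers=80):
    """How many times each source layer appears in the stacked model."""
    counts = [0] * source_layers
    for start, end in slices:
        for i in range(start, end):
            counts[i] += 1
    return counts


if __name__ == "__main__":
    usage = layer_usage(SLICES)
    print("stacked layers:", stacked_layer_count(SLICES))
    print("source layers used twice:", usage.count(2))
```

Under those assumptions the total comes to 120, which agrees with `num_hidden_layers` in the `config.json` added by this same commit; layers 10-29 and 50-69 of the source each appear twice.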

|

| 68 |

+

|

| 69 |

+

## Quant Details

|

| 70 |

+

|

| 71 |

+

Below is the script used for quantization.

|

| 72 |

+

|

| 73 |

+

```bash
#!/bin/bash

# Activate the conda environment
source ~/miniconda3/etc/profile.d/conda.sh

conda activate exllamav2

# Define variables
MODEL_DIR="models/perky-103b-v0.1"
OUTPUT_DIR="exl2_perky103B"
MEASUREMENT_FILE="measurements/perky103b.json"
MEASUREMENT_RUNS=10
REPEATS=10
CALIBRATION_DATASET="data/WizardLM_WizardLM_evol_instruct_V2_196k/0000.parquet"
BIT_PRECISION=3.35
REPEATS_CONVERT=40
CONVERTED_FOLDER="models/perky-103b-v0.1_exl2_3.35bpw"

# Create directories (-p avoids an error if they already exist)
mkdir -p "$OUTPUT_DIR"
mkdir -p "$CONVERTED_FOLDER"

# Run conversion commands
# Pass 1: measurement only; -om writes the measurement file
python convert.py -i "$MODEL_DIR" -o "$OUTPUT_DIR" -nr -om "$MEASUREMENT_FILE" -mr "$MEASUREMENT_RUNS" -r "$REPEATS" -c "$CALIBRATION_DATASET"
# Pass 2: quantize to the target bitrate, reusing the measurement via -m
python convert.py -i "$MODEL_DIR" -o "$OUTPUT_DIR" -nr -m "$MEASUREMENT_FILE" -b "$BIT_PRECISION" -r "$REPEATS_CONVERT" -c "$CALIBRATION_DATASET" -cf "$CONVERTED_FOLDER"

```
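To get a feel for what 3.35 bits per weight means in memory terms, here is a rough back-of-the-envelope sketch. It assumes a nominal 103e9 parameters and applies the average bitrate uniformly; it ignores the KV cache, activations, and any tensors stored at a different precision, so the real footprint will differ. The function name is hypothetical.

```python
def quantized_weight_gib(n_params: float, bits_per_weight: float) -> float:
    """Approximate weight storage in GiB for a given average bitrate."""
    total_bytes = n_params * bits_per_weight / 8  # 8 bits per byte
    return total_bytes / 2**30                    # bytes -> GiB


if __name__ == "__main__":
    # Assumption: nominal parameter count taken from the "103b" in the model name.
    print(f"{quantized_weight_gib(103e9, 3.35):.1f} GiB")
```

On this estimate the weights alone come to roughly 40 GiB, which is in the range that two 24 GB cards can hold, leaving the remainder for context.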

|

config.json

ADDED

|

@@ -0,0 +1,28 @@

| 1 |

+

{
  "_name_or_path": "models/perky-70b-v0.1",
  "architectures": [
    "LlamaForCausalLM"
  ],
  "attention_bias": false,
  "attention_dropout": 0.0,
  "bos_token_id": 1,
  "eos_token_id": 2,
  "hidden_act": "silu",
  "hidden_size": 8192,
  "initializer_range": 0.02,
  "intermediate_size": 28672,
  "max_position_embeddings": 4096,
  "model_type": "llama",
  "num_attention_heads": 64,
  "num_hidden_layers": 120,
  "num_key_value_heads": 8,
  "pretraining_tp": 1,
  "rms_norm_eps": 1e-05,
  "rope_scaling": null,
  "rope_theta": 10000.0,
  "tie_word_embeddings": false,
  "torch_dtype": "float16",
  "transformers_version": "4.36.2",
  "use_cache": true,
  "vocab_size": 32000
}
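The "103b" in the model name can be cross-checked against the values in this config. The sketch below counts parameters for a Llama-style decoder with grouped-query attention, a gated (three-matrix) MLP, RMSNorm weights, and no biases. That layout is an assumption based on `LlamaForCausalLM` and `attention_bias: false`; the function name is illustrative.

```python
# Values copied from the config.json in this commit.
CONFIG = {
    "hidden_size": 8192,
    "intermediate_size": 28672,
    "num_hidden_layers": 120,
    "num_attention_heads": 64,
    "num_key_value_heads": 8,
    "vocab_size": 32000,
    "tie_word_embeddings": False,
}


def llama_param_count(cfg) -> int:
    """Count parameters of a bias-free Llama-style decoder (assumed layout)."""
    h = cfg["hidden_size"]
    head_dim = h // cfg["num_attention_heads"]
    kv_dim = head_dim * cfg["num_key_value_heads"]

    attn = 2 * h * h + 2 * h * kv_dim        # q_proj, o_proj + k_proj, v_proj
    mlp = 3 * h * cfg["intermediate_size"]   # gate, up, and down projections
    norms = 2 * h                            # two RMSNorm weights per layer
    per_layer = attn + mlp + norms

    embed = cfg["vocab_size"] * h
    lm_head = 0 if cfg["tie_word_embeddings"] else embed
    final_norm = h
    return cfg["num_hidden_layers"] * per_layer + embed + lm_head + final_norm


if __name__ == "__main__":
    n = llama_param_count(CONFIG)
    print(f"{n:,} parameters (~{n / 1e9:.1f}B)")
```

Under these assumptions the count lands at about 103.2 billion, consistent with the model's name.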

|

mergekit_config.yml

ADDED

|

@@ -0,0 +1,12 @@

| 1 |

+

slices:
  - sources:
      - model: models/perky-70b-v0.1
        layer_range: [0, 30]
  - sources:
      - model: models/perky-70b-v0.1
        layer_range: [10, 70]
  - sources:
      - model: models/perky-70b-v0.1
        layer_range: [50, 80]
merge_method: passthrough
dtype: float16

|

model.safetensors.index.json

ADDED

|

@@ -0,0 +1 @@

| 1 |

+