Commit

•

7cac5a7

1

Parent(s):

3b24a8a

add

- README.md +129 -0

- model_index.json +24 -0

- scheduler/scheduler_config.json +12 -0

- text_encoder/config.json +25 -0

- text_encoder/pytorch_model.bin +3 -0

- tokenizer/merges.txt +0 -0

- tokenizer/special_tokens_map.json +24 -0

- tokenizer/tokenizer_config.json +34 -0

- tokenizer/vocab.json +0 -0

- trinart2_step115000.ckpt +3 -0

- trinart2_step60000.ckpt +3 -0

- trinart2_step95000.ckpt +3 -0

- unet/config.json +36 -0

- unet/diffusion_pytorch_model.bin +3 -0

- vae/config.json +29 -0

- vae/diffusion_pytorch_model.bin +3 -0

README.md

ADDED

|

@@ -0,0 +1,129 @@


| 1 |

+

---

|

| 2 |

+

inference: true

|

| 3 |

+

tags:

|

| 4 |

+

- stable-diffusion

|

| 5 |

+

- stable-diffusion-diffusers

|

| 6 |

+

- text-to-image

|

| 7 |

+

license: creativeml-openrail-m

|

| 8 |

+

---

|

| 9 |

+

|

| 10 |

+

## Please Note!

|

| 11 |

+

|

| 12 |

+

This model is NOT the 19.2M-image Characters Model on TrinArt, but an improved version of the original Trin-sama Twitter bot model. It is intended to retain the original SD's aesthetics as much as possible while nudging the output toward an anime/manga style.

|

| 13 |

+

|

| 14 |

+

Other TrinArt models can be found at:

|

| 15 |

+

|

| 16 |

+

https://huggingface.co/naclbit/trinart_derrida_characters_v2_stable_diffusion

|

| 17 |

+

|

| 18 |

+

https://huggingface.co/naclbit/trinart_characters_19.2m_stable_diffusion_v1

|

| 19 |

+

|

| 20 |

+

|

| 21 |

+

## Diffusers

|

| 22 |

+

|

| 23 |

+

The model has been ported to `diffusers` by [ayan4m1](https://huggingface.co/ayan4m1)

|

| 24 |

+

and can easily be run from one of the branches:

|

| 25 |

+

- `revision="diffusers-60k"` for the checkpoint trained for 60,000 steps,

|

| 26 |

+

- `revision="diffusers-95k"` for the checkpoint trained for 95,000 steps,

|

| 27 |

+

- `revision="diffusers-115k"` for the checkpoint trained for 115,000 steps.

|

| 28 |

+

|

| 29 |

+

For more information, please have a look at [the "Three flavors" section](#three-flavors).

|
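The branch names listed above follow a simple pattern. As a minimal sketch, the lookup below maps a step count to the string passed as `revision=`; `revision_for_steps` and `REVISIONS` are hypothetical names used for illustration and are not part of `diffusers` or this repository.

```python
# Hypothetical lookup table: step count -> branch name, copied from the list above.
REVISIONS = {
    60_000: "diffusers-60k",
    95_000: "diffusers-95k",
    115_000: "diffusers-115k",
}


def revision_for_steps(steps: int) -> str:
    """Return the branch name for a checkpoint, or raise if none is listed."""
    try:
        return REVISIONS[steps]
    except KeyError:
        raise ValueError(f"no diffusers branch listed for {steps} steps") from None


print(revision_for_steps(95_000))
```

The returned string can then be passed as the `revision` argument of `from_pretrained`.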

| 30 |

+

|

| 31 |

+

## Gradio

|

| 32 |

+

|

| 33 |

+

We also support a [Gradio](https://github.com/gradio-app/gradio) web UI with diffusers that runs inside a Colab notebook: [Open in Colab](https://colab.research.google.com/drive/1RWvik_C7nViiR9bNsu3fvMR3STx6RvDx?usp=sharing)

|

| 34 |

+

|

| 35 |

+

|

| 36 |

+

### Example Text2Image

|

| 37 |

+

|

| 38 |

+

```python

|

| 39 |

+

# !pip install diffusers==0.3.0

|

| 40 |

+

from diffusers import StableDiffusionPipeline

|

| 41 |

+

|

| 42 |

+

# using the 60,000 steps checkpoint

|

| 43 |

+

pipe = StableDiffusionPipeline.from_pretrained("naclbit/trinart_stable_diffusion_v2", revision="diffusers-60k")

|

| 44 |

+

pipe.to("cuda")

|

| 45 |

+

|

| 46 |

+

image = pipe("A magical dragon flying in front of the Himalaya in manga style").images[0]

|

| 47 |

+

image

|

| 48 |

+

```

|

| 49 |

+

|

| 50 |

+

|

| 51 |

+

|

| 52 |

+

If you want to run the pipeline faster or on different hardware, please have a look at the [optimization docs](https://huggingface.co/docs/diffusers/optimization/fp16).

|

| 53 |

+

|

| 54 |

+

### Example Image2Image

|

| 55 |

+

|

| 56 |

+

```python

|

| 57 |

+

# !pip install diffusers==0.3.0

|

| 58 |

+

from diffusers import StableDiffusionImg2ImgPipeline

|

| 59 |

+

import requests

|

| 60 |

+

from PIL import Image

|

| 61 |

+

from io import BytesIO

|

| 62 |

+

|

| 63 |

+

url = "https://scitechdaily.com/images/Dog-Park.jpg"

|

| 64 |

+

|

| 65 |

+

response = requests.get(url)

|

| 66 |

+

init_image = Image.open(BytesIO(response.content)).convert("RGB")

|

| 67 |

+

init_image = init_image.resize((768, 512))

|

| 68 |

+

|

| 69 |

+

# using the 115,000 steps checkpoint

|

| 70 |

+

pipe = StableDiffusionImg2ImgPipeline.from_pretrained("naclbit/trinart_stable_diffusion_v2", revision="diffusers-115k")

|

| 71 |

+

pipe.to("cuda")

|

| 72 |

+

|

| 73 |

+

image = pipe(prompt="Manga drawing of Brad Pitt", init_image=init_image, strength=0.75, guidance_scale=7.5).images[0]

|

| 74 |

+

image

|

| 75 |

+

```

|
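The `strength` argument controls how much of the denoising schedule is applied to the input image. As a rough sketch, assuming the common img2img convention that about `int(num_inference_steps * strength)` steps are actually run and that the pipeline defaults to 50 inference steps (the exact rule, including any scheduler offset, depends on the `diffusers` version), the effect can be estimated as follows. `denoising_steps` is a hypothetical helper, not a library function.

```python
def denoising_steps(num_inference_steps: int, strength: float) -> int:
    """Estimate how many denoising steps img2img runs for a given strength.

    Assumption: steps run = int(num_inference_steps * strength), capped at
    num_inference_steps. Real pipelines may add a small scheduler offset.
    """
    if not 0.0 <= strength <= 1.0:
        raise ValueError("strength must be between 0 and 1")
    return min(int(num_inference_steps * strength), num_inference_steps)


# With the assumed default of 50 steps and strength=0.75 from the example above.
print(denoising_steps(50, 0.75))
```

Under this assumption, a lower `strength` keeps the result closer to the input image because fewer denoising steps are applied.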

| 76 |

+

|

| 77 |

+

If you want to run the pipeline faster or on different hardware, please have a look at the [optimization docs](https://huggingface.co/docs/diffusers/optimization/fp16).

|

| 78 |

+

|

| 79 |

+

|

| 80 |

+

## Stable Diffusion TrinArt/Trin-sama AI finetune v2

|

| 81 |

+

|

| 82 |

+

trinart_stable_diffusion is an SD model fine-tuned on about 40,000 assorted high-resolution manga/anime-style pictures for 8 epochs. It is the same model that runs on the Twitter bot @trinsama (https://twitter.com/trinsama).

|

| 83 |

+

|

| 84 |

+

This is the fine-tuned SD model used by the Twitter bot "Trin-sama AI" @trinsama (https://twitter.com/trinsama). It was trained for about 8 epochs on about 40,000 high-resolution anime/manga-style images selected according to fixed rules.

|

| 85 |

+

|

| 86 |

+

## Version 2

|

| 87 |

+

|

| 88 |

+

The V2 checkpoint uses dropout, 10,000 more images, and a new tagging strategy, and was trained longer to improve results while retaining the original aesthetics.

|

| 89 |

+

|

| 90 |

+

Version 2 adds 10,000 images, applies dropout, improves tagging, and trains longer, aiming to improve the output while preserving SD's style.

|

| 91 |

+

|

| 92 |

+

## Three flavors

|

| 93 |

+

|

| 94 |

+

The step 115000 and step 95000 checkpoints were trained further; if they nudge the style too much, you may use the step 60000 checkpoint instead.

|

| 95 |

+

|

| 96 |

+

If you feel the style changes too much with the step 115000/95000 checkpoints, try the step 60000 checkpoint.

|

| 97 |

+

|

| 98 |

+

#### img2img

|

| 99 |

+

|

| 100 |

+

If you want to run **latent-diffusion**'s stock ddim img2img script with this model, **use_ema** must be set to False.

|

| 101 |

+

|

| 102 |

+

When running the ddim img2img script from the **latent-diffusion** scripts folder with this model, use_ema must be set to False.

|

| 103 |

+

|

| 104 |

+

#### Hardware

|

| 105 |

+

|

| 106 |

+

- 8xNVIDIA A100 40GB

|

| 107 |

+

|

| 108 |

+

#### Training Info

|

| 109 |

+

|

| 110 |

+

- Custom dataset loader with augmentations: XFlip, center crop and aspect-ratio locked scaling

|

| 111 |

+

- LR: 1.0e-5

|

| 112 |

+

- 10% dropouts

|
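The author's dataset loader is not included in this commit, so the following is only a sketch of one common reading of "aspect-ratio locked scaling" followed by "center crop": scale the image so its shorter side equals the target size, then crop a centered square. The function name `resize_and_center_crop_box` and the square target are assumptions made for illustration.

```python
def resize_and_center_crop_box(width: int, height: int, target: int):
    """Return the scaled size and the centered crop box (left, top, right, bottom).

    Assumption: the shorter side is scaled to `target` with the aspect ratio
    preserved, then a target x target square is cropped from the center.
    """
    short = min(width, height)
    new_w = round(width * target / short)
    new_h = round(height * target / short)
    left = (new_w - target) // 2
    top = (new_h - target) // 2
    return (new_w, new_h), (left, top, left + target, top + target)


# Example: a 1024x768 source image prepared for a 512x512 training crop.
size, box = resize_and_center_crop_box(1024, 768, 512)
print(size, box)
```

The box uses the (left, top, right, bottom) order, so it could be passed to an image library's crop call after resizing.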

| 113 |

+

|

| 114 |

+

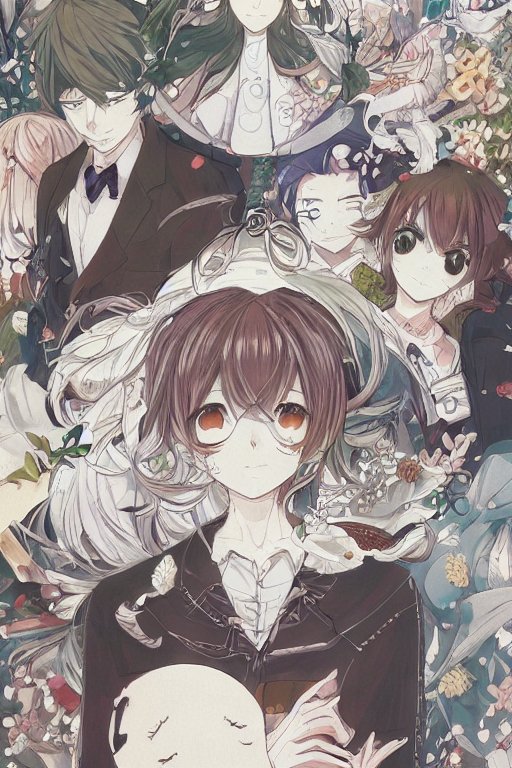

#### Examples

|

| 115 |

+

|

| 116 |

+

Each image was diffused for 50 steps using K. Crowson's k-lms method (from the k-diffusion repo).

|

| 117 |

+

|

| 118 |

+

|

| 119 |

+

|

| 120 |

+

|

| 121 |

+

|

| 122 |

+

#### Credits

|

| 123 |

+

|

| 124 |

+

- Sta, AI Novelist Dev (https://ai-novel.com/) @ Bit192, Inc.

|

| 125 |

+

- Stable Diffusion - Rombach, Robin and Blattmann, Andreas and Lorenz, Dominik and Esser, Patrick and Ommer, Bjorn

|

| 126 |

+

|

| 127 |

+

#### License

|

| 128 |

+

|

| 129 |

+

CreativeML OpenRAIL-M

|

model_index.json

ADDED

|

@@ -0,0 +1,24 @@


| 1 |

+

{

|

| 2 |

+

"_class_name": "StableDiffusionPipeline",

|

| 3 |

+

"_diffusers_version": "0.8.0.dev0",

|

| 4 |

+

"scheduler": [

|

| 5 |

+

"diffusers",

|

| 6 |

+

"PNDMScheduler"

|

| 7 |

+

],

|

| 8 |

+

"text_encoder": [

|

| 9 |

+

"transformers",

|

| 10 |

+

"CLIPTextModel"

|

| 11 |

+

],

|

| 12 |

+

"tokenizer": [

|

| 13 |

+

"transformers",

|

| 14 |

+

"CLIPTokenizer"

|

| 15 |

+

],

|

| 16 |

+

"unet": [

|

| 17 |

+

"diffusers",

|

| 18 |

+

"UNet2DConditionModel"

|

| 19 |

+

],

|

| 20 |

+

"vae": [

|

| 21 |

+

"diffusers",

|

| 22 |

+

"AutoencoderKL"

|

| 23 |

+

]

|

| 24 |

+

}

|
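The `model_index.json` above pairs each pipeline component with the library and class that load it. As a minimal sketch using only the standard library, the snippet below parses a copy of that file and lists the components; `list_components` is a hypothetical helper, and treating keys that start with `_` as metadata is an assumption based on the file shown here.

```python
import json

# Copy of the model_index.json contents from the diff above.
MODEL_INDEX = """
{
  "_class_name": "StableDiffusionPipeline",
  "_diffusers_version": "0.8.0.dev0",
  "scheduler": ["diffusers", "PNDMScheduler"],
  "text_encoder": ["transformers", "CLIPTextModel"],
  "tokenizer": ["transformers", "CLIPTokenizer"],
  "unet": ["diffusers", "UNet2DConditionModel"],
  "vae": ["diffusers", "AutoencoderKL"]
}
"""


def list_components(index_text: str) -> dict:
    """Map each component name to a (library, class name) tuple."""
    index = json.loads(index_text)
    # Assumption: keys starting with "_" are metadata, not components.
    return {
        name: tuple(value)
        for name, value in index.items()
        if not name.startswith("_")
    }


for name, (library, cls) in list_components(MODEL_INDEX).items():
    print(f"{name}: {library}.{cls}")
```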

scheduler/scheduler_config.json

ADDED

|

@@ -0,0 +1,12 @@


| 1 |

+

{

|

| 2 |

+

"_class_name": "PNDMScheduler",

|

| 3 |

+

"_diffusers_version": "0.6.0",

|

| 4 |

+

"beta_end": 0.012,

|

| 5 |

+

"beta_schedule": "scaled_linear",

|

| 6 |

+

"beta_start": 0.00085,

|

| 7 |

+

"num_train_timesteps": 1000,

|

| 8 |

+

"set_alpha_to_one": false,

|

| 9 |

+

"skip_prk_steps": true,

|

| 10 |

+

"steps_offset": 1,

|

| 11 |

+

"trained_betas": null

|

| 12 |

+

}

|
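The scheduler config above sets `beta_schedule` to `"scaled_linear"` with `beta_start`, `beta_end`, and `num_train_timesteps`. As a sketch, assuming `"scaled_linear"` means the betas are spaced linearly in square-root space and then squared (which is my understanding of how `diffusers` interprets this value), the schedule can be reproduced in plain Python. `scaled_linear_betas` is a hypothetical name.

```python
import math


def scaled_linear_betas(beta_start: float, beta_end: float, num_train_timesteps: int):
    """Betas linear in sqrt(beta), then squared (assumed 'scaled_linear' rule)."""
    start, end = math.sqrt(beta_start), math.sqrt(beta_end)
    step = (end - start) / (num_train_timesteps - 1)
    return [(start + i * step) ** 2 for i in range(num_train_timesteps)]


# Values taken from scheduler/scheduler_config.json above.
betas = scaled_linear_betas(0.00085, 0.012, 1000)
print(len(betas), betas[0], betas[-1])
```

The first and last entries match `beta_start` and `beta_end` up to floating-point rounding, and the values in between grow monotonically.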

text_encoder/config.json

ADDED

|

@@ -0,0 +1,25 @@


| 1 |

+

{

|

| 2 |

+

"_name_or_path": "openai/clip-vit-large-patch14",

|

| 3 |

+

"architectures": [

|

| 4 |

+

"CLIPTextModel"

|

| 5 |

+

],

|

| 6 |

+

"attention_dropout": 0.0,

|

| 7 |

+

"bos_token_id": 0,

|

| 8 |

+

"dropout": 0.0,

|

| 9 |

+

"eos_token_id": 2,

|

| 10 |

+

"hidden_act": "quick_gelu",

|

| 11 |

+

"hidden_size": 768,

|

| 12 |

+

"initializer_factor": 1.0,

|

| 13 |

+

"initializer_range": 0.02,

|

| 14 |

+

"intermediate_size": 3072,

|

| 15 |

+

"layer_norm_eps": 1e-05,

|

| 16 |

+

"max_position_embeddings": 77,

|

| 17 |

+

"model_type": "clip_text_model",

|

| 18 |

+

"num_attention_heads": 12,

|

| 19 |

+

"num_hidden_layers": 12,

|

| 20 |

+

"pad_token_id": 1,

|

| 21 |

+

"projection_dim": 768,

|

| 22 |

+

"torch_dtype": "float32",

|

| 23 |

+

"transformers_version": "4.23.1",

|

| 24 |

+

"vocab_size": 49408

|

| 25 |

+

}

|

text_encoder/pytorch_model.bin

ADDED

|

@@ -0,0 +1,3 @@


| 1 |

+

version https://git-lfs.github.com/spec/v1

|

| 2 |

+

oid sha256:3cc329c12fd921cda5679c859ad7025b1f3e9e492aa3cc3e87994de5287d5bd2

|

| 3 |

+

size 492305335

|
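The three lines above are a Git LFS pointer: the repository stores this small text file, while the actual weights live in LFS storage. A minimal sketch of reading such a pointer, assuming the simple `key value` line layout shown here; `parse_lfs_pointer` is a hypothetical helper, not part of any Git tooling.

```python
# Pointer text copied from text_encoder/pytorch_model.bin above.
POINTER = """\
version https://git-lfs.github.com/spec/v1
oid sha256:3cc329c12fd921cda5679c859ad7025b1f3e9e492aa3cc3e87994de5287d5bd2
size 492305335
"""


def parse_lfs_pointer(text: str) -> dict:
    """Split each 'key value' line and decode the oid and size fields."""
    fields = dict(line.split(" ", 1) for line in text.strip().splitlines())
    algorithm, digest = fields["oid"].split(":", 1)
    return {
        "version": fields["version"],
        "hash_algorithm": algorithm,
        "digest": digest,
        "size_bytes": int(fields["size"]),
    }


info = parse_lfs_pointer(POINTER)
print(info["hash_algorithm"], info["size_bytes"])
```

The `size` field is in bytes, so this pointer refers to a file of roughly 492 MB.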

tokenizer/merges.txt

ADDED

|

The diff for this file is too large to render.

See raw diff

|

|

|

tokenizer/special_tokens_map.json

ADDED

|

@@ -0,0 +1,24 @@


| 1 |

+

{

|

| 2 |

+

"bos_token": {

|

| 3 |

+

"content": "<|startoftext|>",

|

| 4 |

+

"lstrip": false,

|

| 5 |

+

"normalized": true,

|

| 6 |

+

"rstrip": false,

|

| 7 |

+

"single_word": false

|

| 8 |

+

},

|

| 9 |

+

"eos_token": {

|

| 10 |

+

"content": "<|endoftext|>",

|

| 11 |

+

"lstrip": false,

|

| 12 |

+

"normalized": true,

|

| 13 |

+

"rstrip": false,

|

| 14 |

+

"single_word": false

|

| 15 |

+

},

|

| 16 |

+

"pad_token": "<|endoftext|>",

|

| 17 |

+

"unk_token": {

|

| 18 |

+

"content": "<|endoftext|>",

|

| 19 |

+

"lstrip": false,

|

| 20 |

+

"normalized": true,

|

| 21 |

+

"rstrip": false,

|

| 22 |

+

"single_word": false

|

| 23 |

+

}

|

| 24 |

+

}

|

tokenizer/tokenizer_config.json

ADDED

|

@@ -0,0 +1,34 @@


| 1 |

+

{

|

| 2 |

+

"add_prefix_space": false,

|

| 3 |

+

"bos_token": {

|

| 4 |

+

"__type": "AddedToken",

|

| 5 |

+

"content": "<|startoftext|>",

|

| 6 |

+

"lstrip": false,

|

| 7 |

+

"normalized": true,

|

| 8 |

+

"rstrip": false,

|

| 9 |

+

"single_word": false

|

| 10 |

+

},

|

| 11 |

+

"do_lower_case": true,

|

| 12 |

+

"eos_token": {

|

| 13 |

+

"__type": "AddedToken",

|

| 14 |

+

"content": "<|endoftext|>",

|

| 15 |

+

"lstrip": false,

|

| 16 |

+

"normalized": true,

|

| 17 |

+

"rstrip": false,

|

| 18 |

+

"single_word": false

|

| 19 |

+

},

|

| 20 |

+

"errors": "replace",

|

| 21 |

+

"model_max_length": 77,

|

| 22 |

+

"name_or_path": "openai/clip-vit-large-patch14",

|

| 23 |

+

"pad_token": "<|endoftext|>",

|

| 24 |

+

"special_tokens_map_file": "./special_tokens_map.json",

|

| 25 |

+

"tokenizer_class": "CLIPTokenizer",

|

| 26 |

+

"unk_token": {

|

| 27 |

+

"__type": "AddedToken",

|

| 28 |

+

"content": "<|endoftext|>",

|

| 29 |

+

"lstrip": false,

|

| 30 |

+

"normalized": true,

|

| 31 |

+

"rstrip": false,

|

| 32 |

+

"single_word": false

|

| 33 |

+

}

|

| 34 |

+

}

|

tokenizer/vocab.json

ADDED

|

The diff for this file is too large to render.

See raw diff

|

|

|

trinart2_step115000.ckpt

ADDED

|

@@ -0,0 +1,3 @@


| 1 |

+

version https://git-lfs.github.com/spec/v1

|

| 2 |

+

oid sha256:776af18775dfccf29725a994df855e7d8f7b8ea525013e3a466f210ec15c8fd4

|

| 3 |

+

size 2132888288

|

trinart2_step60000.ckpt

ADDED

|

@@ -0,0 +1,3 @@


| 1 |

+

version https://git-lfs.github.com/spec/v1

|

| 2 |

+

oid sha256:8cfec49e460fd191e284aa7165e03bf5539b47b78bce37952b7747feb69f2d45

|

| 3 |

+

size 780140544

|

trinart2_step95000.ckpt

ADDED

|

@@ -0,0 +1,3 @@


| 1 |

+

version https://git-lfs.github.com/spec/v1

|

| 2 |

+

oid sha256:c1799d22a355ba25c9ceeb6e3c91fc61788c8e274b73508ae8a15877c5dbcf63

|

| 3 |

+

size 2132888288

|

unet/config.json

ADDED

|

@@ -0,0 +1,36 @@


| 1 |

+

{

|

| 2 |

+

"_class_name": "UNet2DConditionModel",

|

| 3 |

+

"_diffusers_version": "0.6.0",

|

| 4 |

+

"act_fn": "silu",

|

| 5 |

+

"attention_head_dim": 8,

|

| 6 |

+

"block_out_channels": [

|

| 7 |

+

320,

|

| 8 |

+

640,

|

| 9 |

+

1280,

|

| 10 |

+

1280

|

| 11 |

+

],

|

| 12 |

+

"center_input_sample": false,

|

| 13 |

+

"cross_attention_dim": 768,

|

| 14 |

+

"down_block_types": [

|

| 15 |

+

"CrossAttnDownBlock2D",

|

| 16 |

+

"CrossAttnDownBlock2D",

|

| 17 |

+

"CrossAttnDownBlock2D",

|

| 18 |

+

"DownBlock2D"

|

| 19 |

+

],

|

| 20 |

+

"downsample_padding": 1,

|

| 21 |

+

"flip_sin_to_cos": true,

|

| 22 |

+

"freq_shift": 0,

|

| 23 |

+

"in_channels": 4,

|

| 24 |

+

"layers_per_block": 2,

|

| 25 |

+

"mid_block_scale_factor": 1,

|

| 26 |

+

"norm_eps": 1e-05,

|

| 27 |

+

"norm_num_groups": 32,

|

| 28 |

+

"out_channels": 4,

|

| 29 |

+

"sample_size": 32,

|

| 30 |

+

"up_block_types": [

|

| 31 |

+

"UpBlock2D",

|

| 32 |

+

"CrossAttnUpBlock2D",

|

| 33 |

+

"CrossAttnUpBlock2D",

|

| 34 |

+

"CrossAttnUpBlock2D"

|

| 35 |

+

]

|

| 36 |

+

}

|

unet/diffusion_pytorch_model.bin

ADDED

|

@@ -0,0 +1,3 @@


| 1 |

+

version https://git-lfs.github.com/spec/v1

|

| 2 |

+

oid sha256:aaa80770bdbdc88da6dc6bbfb82120686a36796d6b73ee4d32f5622c2d6682d9

|

| 3 |

+

size 3438354725

|

vae/config.json

ADDED

|

@@ -0,0 +1,29 @@


| 1 |

+

{

|

| 2 |

+

"_class_name": "AutoencoderKL",

|

| 3 |

+

"_diffusers_version": "0.6.0",

|

| 4 |

+

"act_fn": "silu",

|

| 5 |

+

"block_out_channels": [

|

| 6 |

+

128,

|

| 7 |

+

256,

|

| 8 |

+

512,

|

| 9 |

+

512

|

| 10 |

+

],

|

| 11 |

+

"down_block_types": [

|

| 12 |

+

"DownEncoderBlock2D",

|

| 13 |

+

"DownEncoderBlock2D",

|

| 14 |

+

"DownEncoderBlock2D",

|

| 15 |

+

"DownEncoderBlock2D"

|

| 16 |

+

],

|

| 17 |

+

"in_channels": 3,

|

| 18 |

+

"latent_channels": 4,

|

| 19 |

+

"layers_per_block": 2,

|

| 20 |

+

"norm_num_groups": 32,

|

| 21 |

+

"out_channels": 3,

|

| 22 |

+

"sample_size": 256,

|

| 23 |

+

"up_block_types": [

|

| 24 |

+

"UpDecoderBlock2D",

|

| 25 |

+

"UpDecoderBlock2D",

|

| 26 |

+

"UpDecoderBlock2D",

|

| 27 |

+

"UpDecoderBlock2D"

|

| 28 |

+

]

|

| 29 |

+

}

|
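The VAE and UNet configs in this commit can be cross-checked against each other. As a sketch, assuming each VAE block after the first halves the spatial resolution (so the downscale factor is `2 ** (len(block_out_channels) - 1)`, the convention I believe recent `diffusers` pipelines use), the UNet's latent `sample_size` times that factor should equal the VAE's pixel `sample_size`. The variable and function names below are illustrative.

```python
# Values copied from vae/config.json and unet/config.json in this commit.
VAE_BLOCK_OUT_CHANNELS = [128, 256, 512, 512]
VAE_SAMPLE_SIZE = 256
UNET_SAMPLE_SIZE = 32


def vae_scale_factor(block_out_channels) -> int:
    """Assumed rule: every block after the first halves the resolution."""
    return 2 ** (len(block_out_channels) - 1)


factor = vae_scale_factor(VAE_BLOCK_OUT_CHANNELS)
print(factor, UNET_SAMPLE_SIZE * factor)
```

Under this assumption the factor is 8, so a 32x32 latent corresponds to a 256x256 image, which agrees with the `sample_size` recorded in the VAE config.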

vae/diffusion_pytorch_model.bin

ADDED

|

@@ -0,0 +1,3 @@


| 1 |

+

version https://git-lfs.github.com/spec/v1

|

| 2 |

+

oid sha256:b8cf5b49d164db18a485d392b2d9a9b4e3636d70613cb756d2e1bc460dd13161

|

| 3 |

+

size 334707217

|