Commit

•

426e0f2

1

Parent(s):

26f421d

add model

- README.md +76 -0

- added_tokens.json +1 -0

- config.json +40 -0

- preprocessor_config.json +21 -0

- pytorch_model.bin +3 -0

- special_tokens_map.json +1 -0

- tokenizer_config.json +1 -0

- vocab.json +0 -0

README.md

ADDED

|

@@ -0,0 +1,76 @@

| 1 |

+

---

|

| 2 |

+

language: en

|

| 3 |

+

datasets:

|

| 4 |

+

- librispeech_asr

|

| 5 |

+

- common_voice

|

| 6 |

+

tags:

|

| 7 |

+

- speech

|

| 8 |

+

license: apache-2.0

|

| 9 |

+

---

|

| 10 |

+

|

| 11 |

+

# M-CTC-T

|

| 12 |

+

|

| 13 |

+

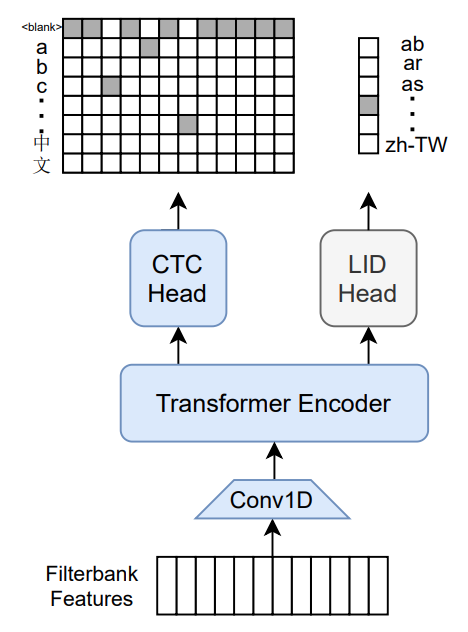

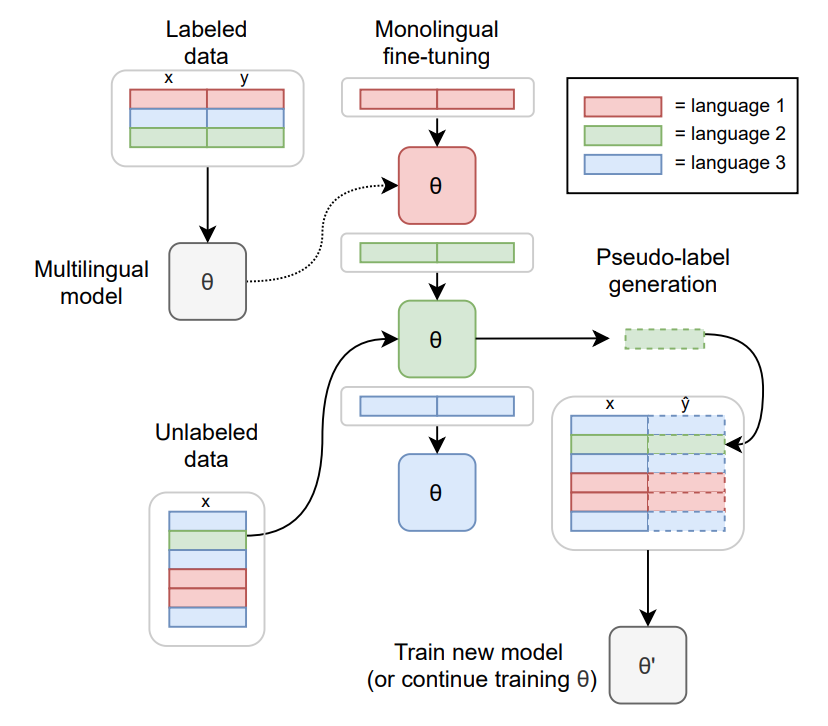

Massively multilingual speech recognizer from Meta AI. The model is a 1B-parameter transformer encoder with a CTC head over 8065 character labels and a language identification head over 60 language ID labels. It is trained on Common Voice (version 6.1, December 2020 release) and VoxPopuli; after this joint training, it is trained further on Common Voice only. The labels are unnormalized character-level transcripts (punctuation and capitalization are not removed). The model takes as input Mel filterbank features computed from a 16 kHz audio signal.

|

| 14 |

+

|

| 15 |

+

|

| 16 |

+

|

| 17 |

+

The original Flashlight code, model checkpoints, and Colab notebook can be found at https://github.com/flashlight/wav2letter/tree/main/recipes/mling_pl .

|

| 18 |

+

|

| 19 |

+

|

| 20 |

+

## Citation

|

| 21 |

+

|

| 22 |

+

[Paper](https://arxiv.org/abs/2111.00161)

|

| 23 |

+

|

| 24 |

+

Authors: Loren Lugosch, Tatiana Likhomanenko, Gabriel Synnaeve, Ronan Collobert

|

| 25 |

+

|

| 26 |

+

```

|

| 27 |

+

@article{lugosch2021pseudo,

|

| 28 |

+

title={Pseudo-Labeling for Massively Multilingual Speech Recognition},

|

| 29 |

+

author={Lugosch, Loren and Likhomanenko, Tatiana and Synnaeve, Gabriel and Collobert, Ronan},

|

| 30 |

+

journal={ICASSP},

|

| 31 |

+

year={2022}

|

| 32 |

+

}

|

| 33 |

+

```

|

| 34 |

+

|

| 35 |

+

Additional thanks to [Chan Woo Kim](https://huggingface.co/cwkeam) and [Patrick von Platen](https://huggingface.co/patrickvonplaten) for porting the model from Flashlight to PyTorch.

|

| 36 |

+

|

| 37 |

+

# Training method

|

| 38 |

+

|

| 39 |

+

TO-DO: replace with the training diagram from paper

|

| 40 |

+

|

| 41 |

+

For more information on how the model was trained, please take a look at the [official paper](https://arxiv.org/abs/2111.00161).

|

| 42 |

+

|

| 43 |

+

# Usage

|

| 44 |

+

|

| 45 |

+

To transcribe audio files, the model can be used as a standalone acoustic model as follows:

|

| 46 |

+

|

| 47 |

+

```python

|

| 48 |

+

import torch

|

| 49 |

+

import torchaudio

|

| 50 |

+

from datasets import load_dataset

|

| 51 |

+

from transformers import MCTCTForCTC, MCTCTProcessor

|

| 52 |

+

|

| 53 |

+

model = MCTCTForCTC.from_pretrained("speechbrain/mctct-large")

|

| 54 |

+

processor = MCTCTProcessor.from_pretrained("speechbrain/mctct-large")

|

| 55 |

+

|

| 56 |

+

# load dummy dataset and read soundfiles

|

| 57 |

+

ds = load_dataset("patrickvonplaten/librispeech_asr_dummy", "clean", split="validation")

|

| 58 |

+

|

| 59 |

+

# extract Mel filterbank features from the raw waveform

|

| 60 |

+

input_features = processor(ds[0]["audio"]["array"], sampling_rate=ds[0]["audio"]["sampling_rate"], return_tensors="pt").input_features

|

| 61 |

+

|

| 62 |

+

# retrieve logits

|

| 63 |

+

logits = model(input_features).logits

|

| 64 |

+

|

| 65 |

+

# take argmax and decode

|

| 66 |

+

predicted_ids = torch.argmax(logits, dim=-1)

|

| 67 |

+

transcription = processor.batch_decode(predicted_ids)

|

| 68 |

+

```

|
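The argmax-and-decode step above relies on the usual greedy CTC rule: merge consecutive repeated ids, then drop the blank id. The following is a minimal sketch of that rule in plain Python, not the library's implementation. The helper name `ctc_greedy_collapse` is hypothetical, and the blank id is passed in by the caller because this README does not state which id the model uses as blank.

```python
from itertools import groupby
from typing import List, Sequence


def ctc_greedy_collapse(frame_ids: Sequence[int], blank_id: int) -> List[int]:
    """Collapse per-frame argmax ids into a label sequence (greedy CTC)."""
    # Step 1: merge runs of identical consecutive ids into a single id.
    merged = [label for label, _ in groupby(frame_ids)]
    # Step 2: drop the blank id; a blank between two equal labels keeps both.
    return [label for label in merged if label != blank_id]


# Toy example with blank_id=0 (an assumption made only for this illustration).
frames = [0, 7, 7, 0, 7, 3, 3, 0]
print(ctc_greedy_collapse(frames, blank_id=0))
```

In the toy input the two runs of `7` are separated by a blank, so both survive the collapse, while the repeated `3` is merged into one label.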

| 69 |

+

|

| 70 |

+

Results for Common Voice, averaged over all languages:

|

| 71 |

+

|

| 72 |

+

*Character error rate (CER)*:

|

| 73 |

+

|

| 74 |

+

| Valid | Test |

|

| 75 |

+

|-------|------|

|

| 76 |

+

| 21.4 | 23.3 |

|
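For readers unfamiliar with the metric, character error rate is commonly defined as the character-level edit distance between hypothesis and reference, divided by the number of reference characters. The sketch below follows that common definition; it is not the scoring script behind the numbers above, and the function names `edit_distance` and `cer` are hypothetical.

```python
from typing import Sequence


def edit_distance(ref: str, hyp: str) -> int:
    """Levenshtein distance between two strings, computed row by row."""
    prev = list(range(len(hyp) + 1))
    for i, r in enumerate(ref, start=1):
        curr = [i]
        for j, h in enumerate(hyp, start=1):
            cost = 0 if r == h else 1
            curr.append(min(prev[j] + 1,          # deletion
                            curr[j - 1] + 1,      # insertion
                            prev[j - 1] + cost))  # substitution or match
        prev = curr
    return prev[-1]


def cer(references: Sequence[str], hypotheses: Sequence[str]) -> float:
    """CER in percent, pooled over all reference characters."""
    total = sum(len(r) for r in references)
    if total == 0:
        raise ValueError("references contain no characters")
    errors = sum(edit_distance(r, h) for r, h in zip(references, hypotheses))
    return 100.0 * errors / total


print(cer(["abcd"], ["abxd"]))
```

To mirror an average over languages, one would call `cer` once per language and take the mean of the results. No normalization is applied here, which matches the README's statement that punctuation and capitalization are kept in the labels.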

added_tokens.json

ADDED

|

@@ -0,0 +1 @@

| 1 |

+

{"<s>": 8065, "</s>": 8066}

|

config.json

ADDED

|

@@ -0,0 +1,40 @@

| 1 |

+

{

|

| 2 |

+

"architectures": [

|

| 3 |

+

"MCTCTForCTC"

|

| 4 |

+

],

|

| 5 |

+

"attention_head_dim": 384,

|

| 6 |

+

"attention_probs_dropout_prob": 0.3,

|

| 7 |

+

"bos_token_id": 0,

|

| 8 |

+

"conv_channels": null,

|

| 9 |

+

"conv_dropout": 0.3,

|

| 10 |

+

"conv_glu_dim": 1,

|

| 11 |

+

"conv_kernel": [

|

| 12 |

+

7

|

| 13 |

+

],

|

| 14 |

+

"conv_stride": [

|

| 15 |

+

3

|

| 16 |

+

],

|

| 17 |

+

"ctc_loss_reduction": "sum",

|

| 18 |

+

"ctc_zero_infinity": false,

|

| 19 |

+

"eos_token_id": 2,

|

| 20 |

+

"hidden_act": "relu",

|

| 21 |

+

"hidden_dropout_prob": 0.3,

|

| 22 |

+

"hidden_size": 1536,

|

| 23 |

+

"initializer_range": 0.02,

|

| 24 |

+

"input_channels": 1,

|

| 25 |

+

"input_feat_per_channel": 80,

|

| 26 |

+

"intermediate_size": 6144,

|

| 27 |

+

"layer_norm_eps": 1e-05,

|

| 28 |

+

"layerdrop": 0.3,

|

| 29 |

+

"max_position_embeddings": 920,

|

| 30 |

+

"model_type": "mctct",

|

| 31 |

+

"num_attention_heads": 4,

|

| 32 |

+

"num_conv_layers": 1,

|

| 33 |

+

"num_hidden_layers": 36,

|

| 34 |

+

"pad_token_id": 1,

|

| 35 |

+

"position_embedding_type": "relative_key",

|

| 36 |

+

"torch_dtype": "float32",

|

| 37 |

+

"transformers_version": "4.18.0.dev0",

|

| 38 |

+

"use_cache": true,

|

| 39 |

+

"vocab_size": 8065

|

| 40 |

+

}

|
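As a rough sanity check, the values in this config are consistent with the "1B-param" figure in the README. The sketch below counts only the large encoder weight matrices. It assumes a standard transformer layout (four `hidden_size × hidden_size` attention projections and two feed-forward matrices per layer), which is an assumption rather than something this diff confirms, and it ignores biases, layer norms, the convolutional front end, relative position embeddings, and the output heads. The byte count is the size listed in the Git LFS pointer for pytorch_model.bin further down.

```python
# Values copied from config.json in this commit.
hidden_size = 1536
intermediate_size = 6144
num_hidden_layers = 36
num_attention_heads = 4
attention_head_dim = 384

# The heads tile the hidden dimension exactly.
assert num_attention_heads * attention_head_dim == hidden_size

# Assumed layout: Q, K, V and output projections, each hidden x hidden.
attention_weights = 4 * hidden_size * hidden_size
# Assumed layout: one up-projection and one down-projection per layer.
feed_forward_weights = 2 * hidden_size * intermediate_size
encoder_weights = num_hidden_layers * (attention_weights + feed_forward_weights)

# Size of pytorch_model.bin from its LFS pointer; with float32 storage
# (torch_dtype in the config) each parameter takes 4 bytes, so this is an
# upper bound on the parameter count (the file also holds metadata).
checkpoint_bytes = 4236046301
float32_upper_bound = checkpoint_bytes // 4

print(f"estimated encoder weights: {encoder_weights:,}")
print(f"float32 values that fit in the checkpoint: {float32_upper_bound:,}")
```

Under these assumptions the estimate comes out slightly above one billion weights, and it sits below the bound implied by the checkpoint size.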

preprocessor_config.json

ADDED

|

@@ -0,0 +1,21 @@

| 1 |

+

{

|

| 2 |

+

"K": 257,

|

| 3 |

+

"do_normalize": true,

|

| 4 |

+

"feature_extractor_type": "MCTCFeatureExtractor",

|

| 5 |

+

"feature_size": 80,

|

| 6 |

+

"frame_signal_scale": 32768.0,

|

| 7 |

+

"hop_length": 10,

|

| 8 |

+

"mel_floor": 1.0,

|

| 9 |

+

"n_fft": 512,

|

| 10 |

+

"normalize_means": true,

|

| 11 |

+

"normalize_vars": true,

|

| 12 |

+

"padding_side": "right",

|

| 13 |

+

"padding_value": 0.0,

|

| 14 |

+

"preemphasis_coeff": 0.97,

|

| 15 |

+

"return_attention_mask": false,

|

| 16 |

+

"sample_size": 400,

|

| 17 |

+

"sample_stride": 160,

|

| 18 |

+

"sampling_rate": 16000,

|

| 19 |

+

"win_function": "hamming_window",

|

| 20 |

+

"win_length": 25

|

| 21 |

+

}

|
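Several fields in this preprocessor config appear to describe the same framing in two units. The short check below assumes that `win_length` and `hop_length` are given in milliseconds and that `K` is the number of one-sided FFT bins; both readings are inferred from the numbers lining up, not from any documentation in this commit.

```python
# Values copied from preprocessor_config.json in this commit.
sampling_rate = 16000   # Hz
win_length = 25         # assumed to be milliseconds
hop_length = 10         # assumed to be milliseconds
n_fft = 512
sample_size = 400
sample_stride = 160
K = 257

# Window and hop converted from milliseconds to samples.
samples_per_window = sampling_rate * win_length // 1000
samples_per_hop = sampling_rate * hop_length // 1000
# A real-valued FFT of length n_fft yields n_fft // 2 + 1 distinct bins.
one_sided_bins = n_fft // 2 + 1

print(samples_per_window == sample_size)
print(samples_per_hop == sample_stride)
print(one_sided_bins == K)
```

If these readings are right, each 25 ms frame of 400 samples is zero-padded to the 512-point FFT, and successive frames overlap by 240 samples.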

pytorch_model.bin

ADDED

|

@@ -0,0 +1,3 @@

| 1 |

+

version https://git-lfs.github.com/spec/v1

|

| 2 |

+

oid sha256:5526e34abfb3af87ffdb16a95fce53ca3ec8764e0e12a4323306d3c66496586d

|

| 3 |

+

size 4236046301

|

special_tokens_map.json

ADDED

|

@@ -0,0 +1 @@

| 1 |

+

{"bos_token": "<s>", "eos_token": "</s>", "unk_token": "<unk>", "pad_token": "<pad>", "additional_special_tokens": [{"content": "<s>", "single_word": false, "lstrip": false, "rstrip": false, "normalized": true}, {"content": "</s>", "single_word": false, "lstrip": false, "rstrip": false, "normalized": true}]}

|

tokenizer_config.json

ADDED

|

@@ -0,0 +1 @@

| 1 |

+

{"unk_token": "<unk>", "bos_token": "<s>", "eos_token": "</s>", "pad_token": "<pad>", "do_lower_case": false, "word_delimiter_token": "|", "replace_word_delimiter_char": " ", "return_attention_mask": false, "do_normalize": true, "special_tokens_map_file": "./mctc-large/special_tokens_map.json", "name_or_path": "./mctc-large", "tokenizer_class": "Wav2Vec2CTCTokenizer"}

|

vocab.json

ADDED

|

The diff for this file is too large to render.
