Update spaces

This view is limited to 50 files because it contains too many changes.


- .gitattributes +1 -0

- .gitignore +52 -0

- LICENSE +201 -0

- LICENSE_NVIDIA +99 -0

- LICENSE_WEIGHT +407 -0

- README.md +117 -0

- app.py +210 -0

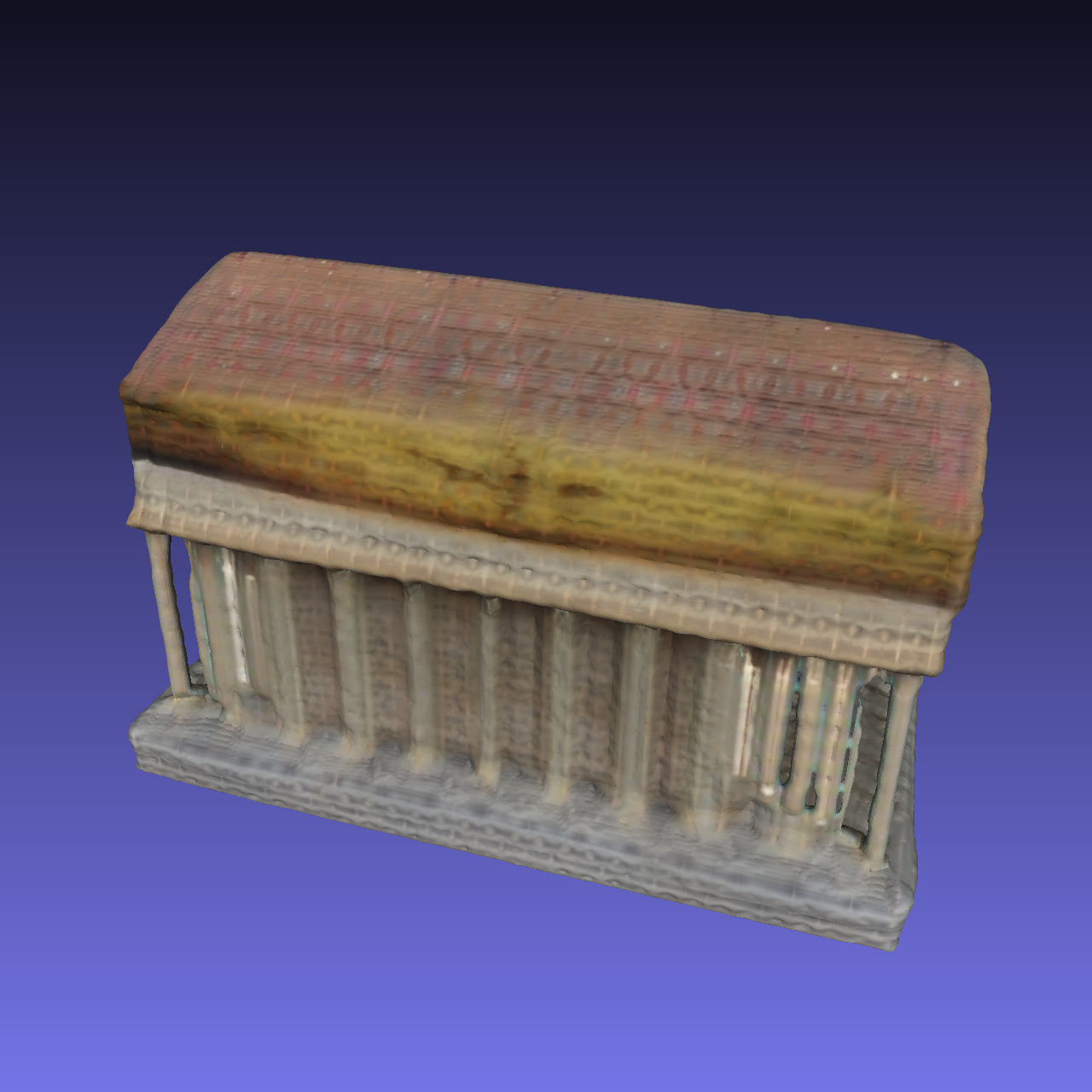

- assets/mesh_snapshot/crop.building.ply00.png +0 -0

- assets/mesh_snapshot/crop.building.ply01.png +0 -0

- assets/mesh_snapshot/crop.owl.ply00.png +0 -0

- assets/mesh_snapshot/crop.owl.ply01.png +0 -0

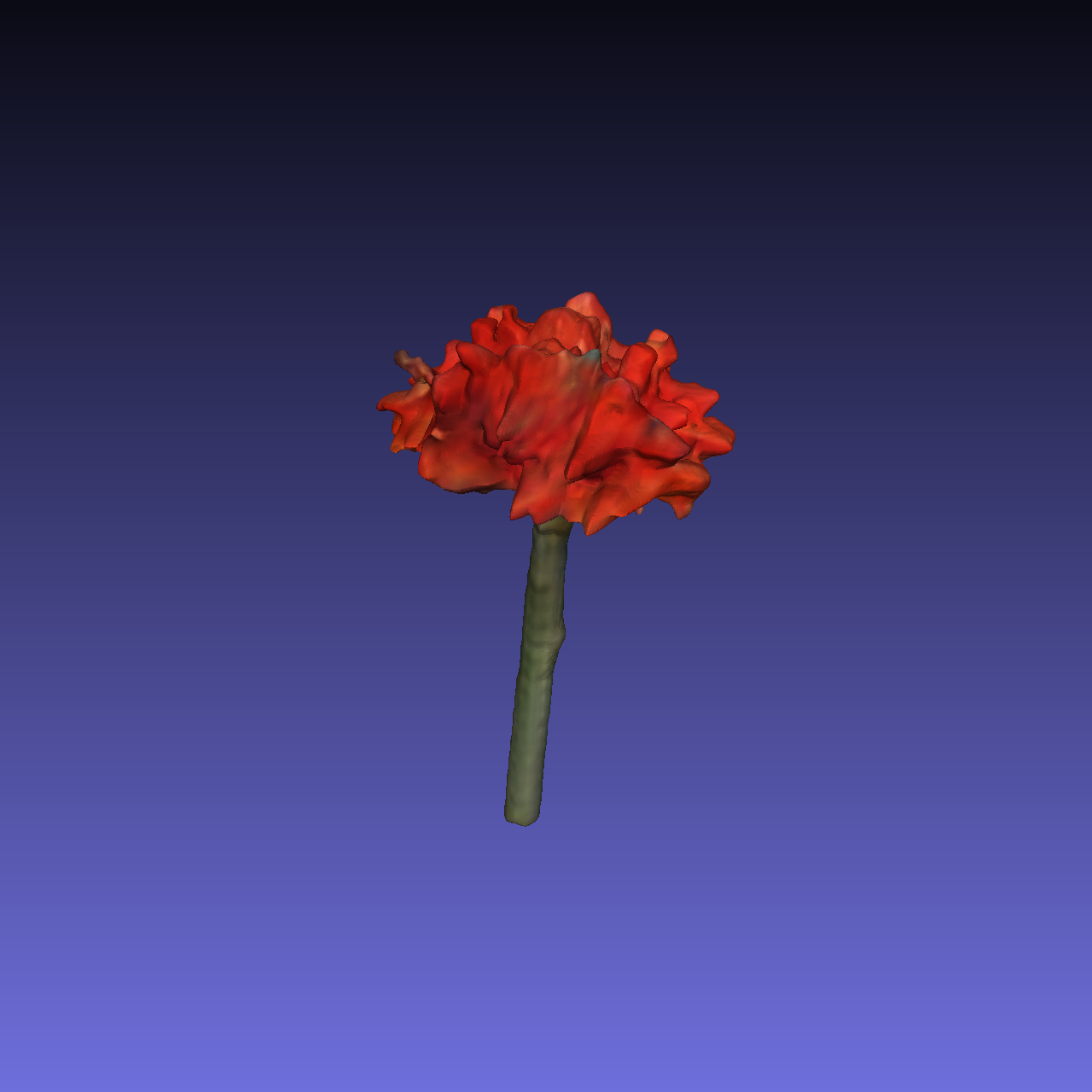

- assets/mesh_snapshot/crop.rose.ply00.png +0 -0

- assets/mesh_snapshot/crop.rose.ply01.png +0 -0

- assets/rendered_video/teaser.gif +3 -0

- assets/sample_input/building.png +0 -0

- assets/sample_input/ceramic.png +0 -0

- assets/sample_input/fire.png +0 -0

- assets/sample_input/girl.png +0 -0

- assets/sample_input/hotdogs.png +0 -0

- assets/sample_input/hydrant.png +0 -0

- assets/sample_input/lamp.png +0 -0

- assets/sample_input/mailbox.png +0 -0

- assets/sample_input/owl.png +0 -0

- assets/sample_input/traffic.png +0 -0

- configs/infer-b.yaml +8 -0

- configs/infer-gradio.yaml +7 -0

- configs/infer-l.yaml +8 -0

- configs/infer-s.yaml +8 -0

- model_card.md +67 -0

- openlrm/__init__.py +15 -0

- openlrm/datasets/__init__.py +16 -0

- openlrm/datasets/base.py +68 -0

- openlrm/datasets/cam_utils.py +179 -0

- openlrm/launch.py +36 -0

- openlrm/losses/__init__.py +18 -0

- openlrm/losses/perceptual.py +70 -0

- openlrm/losses/pixelwise.py +58 -0

- openlrm/losses/tvloss.py +55 -0

- openlrm/models/__init__.py +21 -0

- openlrm/models/block.py +124 -0

- openlrm/models/embedder.py +37 -0

- openlrm/models/encoders/__init__.py +15 -0

- openlrm/models/encoders/dino_wrapper.py +68 -0

- openlrm/models/encoders/dinov2/__init__.py +15 -0

- openlrm/models/encoders/dinov2/hub/__init__.py +4 -0

- openlrm/models/encoders/dinov2/hub/backbones.py +166 -0

- openlrm/models/encoders/dinov2/hub/classifiers.py +268 -0

- openlrm/models/encoders/dinov2/hub/depth/__init__.py +7 -0

- openlrm/models/encoders/dinov2/hub/depth/decode_heads.py +747 -0

- openlrm/models/encoders/dinov2/hub/depth/encoder_decoder.py +351 -0

.gitattributes

ADDED

@@ -0,0 +1 @@
+assets/rendered_video/teaser.gif filter=lfs diff=lfs merge=lfs -text
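The single `.gitattributes` entry above routes `teaser.gif` through Git LFS. As a rough illustration of how such a line breaks down, here is a minimal sketch in Python. The function name `parse_gitattributes_line` is hypothetical and not part of this repository, and the parser covers only the three token forms seen here (`name=value`, `-name`, bare `name`), not the full gitattributes syntax.

```python
def parse_gitattributes_line(line: str):
    """Split one .gitattributes line into (pattern, attributes).

    Hypothetical helper for illustration only; it ignores comments,
    quoting, and the "!name" (unspecified) form.
    """
    pattern, *tokens = line.split()
    attrs = {}
    for token in tokens:
        if token.startswith("-"):
            attrs[token[1:]] = False      # "-text" unsets the attribute
        elif "=" in token:
            key, value = token.split("=", 1)
            attrs[key] = value            # "filter=lfs" assigns a value
        else:
            attrs[token] = True           # a bare name sets the attribute
    return pattern, attrs


pattern, attrs = parse_gitattributes_line(
    "assets/rendered_video/teaser.gif filter=lfs diff=lfs merge=lfs -text"
)
print(pattern)
print(attrs)
```

Read this way, the entry assigns the `lfs` driver to the `filter`, `diff`, and `merge` attributes and unsets `text`, so Git treats the GIF as binary content stored via LFS.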

.gitignore

ADDED

@@ -0,0 +1,52 @@
+# Ignore Python cache files
+**/__pycache__
+
+# Ignore compiled Python files
+*.pyc
+
+# Ignore editor-specific files
+.vscode/
+.idea/
+
+# Ignore operating system files
+.DS_Store
+Thumbs.db
+
+# Ignore log files
+*.log
+
+# Ignore temporary and cache files
+*.tmp
+*.cache
+
+# Ignore build artifacts
+/build/
+/dist/
+
+# Ignore virtual environment files
+/venv/
+/.venv/
+
+# Ignore package manager files
+/node_modules/
+/yarn.lock
+/package-lock.json
+
+# Ignore database files
+*.db
+*.sqlite
+
+# Ignore secret files
+*.secret
+
+# Ignore compiled binaries
+*.exe
+*.dll
+*.so
+*.dylib
+
+# Ignore backup files
+*.bak
+*.swp
+*.swo
+*.~*
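Several of the `.gitignore` entries above are plain basename globs such as `*.pyc` or `.DS_Store`, which Git applies at any directory depth. The sketch below approximates that behaviour for this subset only, using Python's standard `fnmatch` module. The names `BASENAME_PATTERNS` and `is_ignored_by_basename` are hypothetical, and the sketch deliberately does not handle anchored entries (`/build/`), directory-only entries (`.vscode/`), or `**`. For the authoritative answer, `git check-ignore` should be used instead.

```python
from fnmatch import fnmatchcase
from pathlib import PurePosixPath

# A subset of the basename-style patterns from the .gitignore above.
BASENAME_PATTERNS = ["*.pyc", "*.log", "*.tmp", "*.bak", ".DS_Store"]


def is_ignored_by_basename(path: str) -> bool:
    """Approximate check: does the final path component match any glob?

    Only an illustration; real gitignore matching has more rules.
    """
    name = PurePosixPath(path).name
    return any(fnmatchcase(name, pattern) for pattern in BASENAME_PATTERNS)


print(is_ignored_by_basename("openlrm/models/block.pyc"))
print(is_ignored_by_basename("assets/.DS_Store"))
print(is_ignored_by_basename("app.py"))
```

`fnmatchcase` is used rather than `fnmatch` so that matching stays case-sensitive on every platform.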

LICENSE

ADDED

@@ -0,0 +1,201 @@
+Apache License
+Version 2.0, January 2004
+http://www.apache.org/licenses/
+
+TERMS AND CONDITIONS FOR USE, REPRODUCTION, AND DISTRIBUTION
+
+1. Definitions.
+
+"License" shall mean the terms and conditions for use, reproduction,
+and distribution as defined by Sections 1 through 9 of this document.
+
+"Licensor" shall mean the copyright owner or entity authorized by
+the copyright owner that is granting the License.
+
+"Legal Entity" shall mean the union of the acting entity and all
+other entities that control, are controlled by, or are under common
+control with that entity. For the purposes of this definition,
+"control" means (i) the power, direct or indirect, to cause the
+direction or management of such entity, whether by contract or
+otherwise, or (ii) ownership of fifty percent (50%) or more of the
+outstanding shares, or (iii) beneficial ownership of such entity.
+
+"You" (or "Your") shall mean an individual or Legal Entity
+exercising permissions granted by this License.
+
+"Source" form shall mean the preferred form for making modifications,
+including but not limited to software source code, documentation
+source, and configuration files.
+
+"Object" form shall mean any form resulting from mechanical
+transformation or translation of a Source form, including but
+not limited to compiled object code, generated documentation,
+and conversions to other media types.
+
+"Work" shall mean the work of authorship, whether in Source or
+Object form, made available under the License, as indicated by a
+copyright notice that is included in or attached to the work
+(an example is provided in the Appendix below).
+
+"Derivative Works" shall mean any work, whether in Source or Object
+form, that is based on (or derived from) the Work and for which the
+editorial revisions, annotations, elaborations, or other modifications
+represent, as a whole, an original work of authorship. For the purposes
+of this License, Derivative Works shall not include works that remain
+separable from, or merely link (or bind by name) to the interfaces of,
+the Work and Derivative Works thereof.
+
+"Contribution" shall mean any work of authorship, including
+the original version of the Work and any modifications or additions
+to that Work or Derivative Works thereof, that is intentionally
+submitted to Licensor for inclusion in the Work by the copyright owner
+or by an individual or Legal Entity authorized to submit on behalf of
+the copyright owner. For the purposes of this definition, "submitted"
+means any form of electronic, verbal, or written communication sent
+to the Licensor or its representatives, including but not limited to
+communication on electronic mailing lists, source code control systems,
+and issue tracking systems that are managed by, or on behalf of, the
+Licensor for the purpose of discussing and improving the Work, but
+excluding communication that is conspicuously marked or otherwise
+designated in writing by the copyright owner as "Not a Contribution."
+
+"Contributor" shall mean Licensor and any individual or Legal Entity
+on behalf of whom a Contribution has been received by Licensor and
+subsequently incorporated within the Work.
+
+2. Grant of Copyright License. Subject to the terms and conditions of
+this License, each Contributor hereby grants to You a perpetual,
+worldwide, non-exclusive, no-charge, royalty-free, irrevocable
+copyright license to reproduce, prepare Derivative Works of,
+publicly display, publicly perform, sublicense, and distribute the
+Work and such Derivative Works in Source or Object form.
+
+3. Grant of Patent License. Subject to the terms and conditions of
+this License, each Contributor hereby grants to You a perpetual,
+worldwide, non-exclusive, no-charge, royalty-free, irrevocable
+(except as stated in this section) patent license to make, have made,
+use, offer to sell, sell, import, and otherwise transfer the Work,
+where such license applies only to those patent claims licensable
+by such Contributor that are necessarily infringed by their
+Contribution(s) alone or by combination of their Contribution(s)
+with the Work to which such Contribution(s) was submitted. If You
+institute patent litigation against any entity (including a
+cross-claim or counterclaim in a lawsuit) alleging that the Work
+or a Contribution incorporated within the Work constitutes direct
+or contributory patent infringement, then any patent licenses
+granted to You under this License for that Work shall terminate
+as of the date such litigation is filed.
+
+4. Redistribution. You may reproduce and distribute copies of the
+Work or Derivative Works thereof in any medium, with or without
+modifications, and in Source or Object form, provided that You
+meet the following conditions:
+
+(a) You must give any other recipients of the Work or
+Derivative Works a copy of this License; and
+
+(b) You must cause any modified files to carry prominent notices
+stating that You changed the files; and
+
+(c) You must retain, in the Source form of any Derivative Works
+that You distribute, all copyright, patent, trademark, and
+attribution notices from the Source form of the Work,
+excluding those notices that do not pertain to any part of
+the Derivative Works; and
+
+(d) If the Work includes a "NOTICE" text file as part of its
+distribution, then any Derivative Works that You distribute must
+include a readable copy of the attribution notices contained
+within such NOTICE file, excluding those notices that do not
+pertain to any part of the Derivative Works, in at least one
+of the following places: within a NOTICE text file distributed
+as part of the Derivative Works; within the Source form or
+documentation, if provided along with the Derivative Works; or,
+within a display generated by the Derivative Works, if and
+wherever such third-party notices normally appear. The contents
+of the NOTICE file are for informational purposes only and
+do not modify the License. You may add Your own attribution
+notices within Derivative Works that You distribute, alongside
+or as an addendum to the NOTICE text from the Work, provided
+that such additional attribution notices cannot be construed
+as modifying the License.
+
+You may add Your own copyright statement to Your modifications and
+may provide additional or different license terms and conditions
+for use, reproduction, or distribution of Your modifications, or
+for any such Derivative Works as a whole, provided Your use,
+reproduction, and distribution of the Work otherwise complies with
+the conditions stated in this License.
+
+5. Submission of Contributions. Unless You explicitly state otherwise,
+any Contribution intentionally submitted for inclusion in the Work
+by You to the Licensor shall be under the terms and conditions of
+this License, without any additional terms or conditions.
+Notwithstanding the above, nothing herein shall supersede or modify
+the terms of any separate license agreement you may have executed
+with Licensor regarding such Contributions.
+
+6. Trademarks. This License does not grant permission to use the trade
+names, trademarks, service marks, or product names of the Licensor,
+except as required for reasonable and customary use in describing the
+origin of the Work and reproducing the content of the NOTICE file.
+
+7. Disclaimer of Warranty. Unless required by applicable law or
+agreed to in writing, Licensor provides the Work (and each
+Contributor provides its Contributions) on an "AS IS" BASIS,
+WITHOUT WARRANTIES OR CONDITIONS OF ANY KIND, either express or
+implied, including, without limitation, any warranties or conditions
+of TITLE, NON-INFRINGEMENT, MERCHANTABILITY, or FITNESS FOR A
+PARTICULAR PURPOSE. You are solely responsible for determining the
+appropriateness of using or redistributing the Work and assume any
+risks associated with Your exercise of permissions under this License.
+
+8. Limitation of Liability. In no event and under no legal theory,
+whether in tort (including negligence), contract, or otherwise,
+unless required by applicable law (such as deliberate and grossly
+negligent acts) or agreed to in writing, shall any Contributor be
+liable to You for damages, including any direct, indirect, special,
+incidental, or consequential damages of any character arising as a
+result of this License or out of the use or inability to use the
+Work (including but not limited to damages for loss of goodwill,
+work stoppage, computer failure or malfunction, or any and all
+other commercial damages or losses), even if such Contributor
+has been advised of the possibility of such damages.
+
+9. Accepting Warranty or Additional Liability. While redistributing
+the Work or Derivative Works thereof, You may choose to offer,
+and charge a fee for, acceptance of support, warranty, indemnity,
+or other liability obligations and/or rights consistent with this
+License. However, in accepting such obligations, You may act only
+on Your own behalf and on Your sole responsibility, not on behalf
+of any other Contributor, and only if You agree to indemnify,
+defend, and hold each Contributor harmless for any liability
+incurred by, or claims asserted against, such Contributor by reason
+of your accepting any such warranty or additional liability.
+
+END OF TERMS AND CONDITIONS
+
+APPENDIX: How to apply the Apache License to your work.
+
+To apply the Apache License to your work, attach the following
+boilerplate notice, with the fields enclosed by brackets "[]"
+replaced with your own identifying information. (Don't include
+the brackets!) The text should be enclosed in the appropriate
+comment syntax for the file format. We also recommend that a
+file or class name and description of purpose be included on the
+same "printed page" as the copyright notice for easier
+identification within third-party archives.
+
+Copyright [yyyy] [name of copyright owner]
+
+Licensed under the Apache License, Version 2.0 (the "License");
+you may not use this file except in compliance with the License.
+You may obtain a copy of the License at
+
+http://www.apache.org/licenses/LICENSE-2.0
+
+Unless required by applicable law or agreed to in writing, software
+distributed under the License is distributed on an "AS IS" BASIS,
+WITHOUT WARRANTIES OR CONDITIONS OF ANY KIND, either express or implied.
+See the License for the specific language governing permissions and
+limitations under the License.

LICENSE_NVIDIA

ADDED

@@ -0,0 +1,99 @@
+Copyright (c) 2021-2022, NVIDIA Corporation & affiliates. All rights
+reserved.
+
+
+NVIDIA Source Code License for EG3D
+
+
+=======================================================================
+
+1. Definitions
+
+"Licensor" means any person or entity that distributes its Work.
+
+"Software" means the original work of authorship made available under
+this License.
+
+"Work" means the Software and any additions to or derivative works of
+the Software that are made available under this License.
+
+The terms "reproduce," "reproduction," "derivative works," and
+"distribution" have the meaning as provided under U.S. copyright law;
+provided, however, that for the purposes of this License, derivative
+works shall not include works that remain separable from, or merely
+link (or bind by name) to the interfaces of, the Work.
+
+Works, including the Software, are "made available" under this License
+by including in or with the Work either (a) a copyright notice
+referencing the applicability of this License to the Work, or (b) a
+copy of this License.
+
+2. License Grants
+
+2.1 Copyright Grant. Subject to the terms and conditions of this
+License, each Licensor grants to you a perpetual, worldwide,
+non-exclusive, royalty-free, copyright license to reproduce,
+prepare derivative works of, publicly display, publicly perform,
+sublicense and distribute its Work and any resulting derivative
+works in any form.
+
+3. Limitations
+
+3.1 Redistribution. You may reproduce or distribute the Work only
+if (a) you do so under this License, (b) you include a complete
+copy of this License with your distribution, and (c) you retain
+without modification any copyright, patent, trademark, or
+attribution notices that are present in the Work.
+
+3.2 Derivative Works. You may specify that additional or different
+terms apply to the use, reproduction, and distribution of your
+derivative works of the Work ("Your Terms") only if (a) Your Terms
+provide that the use limitation in Section 3.3 applies to your
+derivative works, and (b) you identify the specific derivative
+works that are subject to Your Terms. Notwithstanding Your Terms,
+this License (including the redistribution requirements in Section
+3.1) will continue to apply to the Work itself.
+
+3.3 Use Limitation. The Work and any derivative works thereof only
+may be used or intended for use non-commercially. The Work or
+derivative works thereof may be used or intended for use by NVIDIA
+or it’s affiliates commercially or non-commercially. As used
+herein, "non-commercially" means for research or evaluation
+purposes only and not for any direct or indirect monetary gain.
+
+3.4 Patent Claims. If you bring or threaten to bring a patent claim
+against any Licensor (including any claim, cross-claim or
+counterclaim in a lawsuit) to enforce any patents that you allege
+are infringed by any Work, then your rights under this License from
+such Licensor (including the grants in Sections 2.1) will terminate
+immediately.
+
+3.5 Trademarks. This License does not grant any rights to use any
+Licensor’s or its affiliates’ names, logos, or trademarks, except
+as necessary to reproduce the notices described in this License.
+
+3.6 Termination. If you violate any term of this License, then your
+rights under this License (including the grants in Sections 2.1)
+will terminate immediately.
+
+4. Disclaimer of Warranty.
+
+THE WORK IS PROVIDED "AS IS" WITHOUT WARRANTIES OR CONDITIONS OF ANY
+KIND, EITHER EXPRESS OR IMPLIED, INCLUDING WARRANTIES OR CONDITIONS OF
+MERCHANTABILITY, FITNESS FOR A PARTICULAR PURPOSE, TITLE OR
+NON-INFRINGEMENT. YOU BEAR THE RISK OF UNDERTAKING ANY ACTIVITIES UNDER
+THIS LICENSE.
+
+5. Limitation of Liability.
+
+EXCEPT AS PROHIBITED BY APPLICABLE LAW, IN NO EVENT AND UNDER NO LEGAL
+THEORY, WHETHER IN TORT (INCLUDING NEGLIGENCE), CONTRACT, OR OTHERWISE
+SHALL ANY LICENSOR BE LIABLE TO YOU FOR DAMAGES, INCLUDING ANY DIRECT,
+INDIRECT, SPECIAL, INCIDENTAL, OR CONSEQUENTIAL DAMAGES ARISING OUT OF
+OR RELATED TO THIS LICENSE, THE USE OR INABILITY TO USE THE WORK
+(INCLUDING BUT NOT LIMITED TO LOSS OF GOODWILL, BUSINESS INTERRUPTION,
+LOST PROFITS OR DATA, COMPUTER FAILURE OR MALFUNCTION, OR ANY OTHER
+COMMERCIAL DAMAGES OR LOSSES), EVEN IF THE LICENSOR HAS BEEN ADVISED OF
+THE POSSIBILITY OF SUCH DAMAGES.
+
+=======================================================================

LICENSE_WEIGHT

ADDED

|

@@ -0,0 +1,407 @@

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

| 1 |

+

Attribution-NonCommercial 4.0 International

|

| 2 |

+

|

| 3 |

+

=======================================================================

|

| 4 |

+

|

| 5 |

+

Creative Commons Corporation ("Creative Commons") is not a law firm and

|

| 6 |

+

does not provide legal services or legal advice. Distribution of

|

| 7 |

+

Creative Commons public licenses does not create a lawyer-client or

|

| 8 |

+

other relationship. Creative Commons makes its licenses and related

|

| 9 |

+

information available on an "as-is" basis. Creative Commons gives no

|

| 10 |

+

warranties regarding its licenses, any material licensed under their

|

| 11 |

+

terms and conditions, or any related information. Creative Commons

|

| 12 |

+

disclaims all liability for damages resulting from their use to the

|

| 13 |

+

fullest extent possible.

|

| 14 |

+

|

| 15 |

+

Using Creative Commons Public Licenses

|

| 16 |

+

|

| 17 |

+

Creative Commons public licenses provide a standard set of terms and

|

| 18 |

+

conditions that creators and other rights holders may use to share

|

| 19 |

+

original works of authorship and other material subject to copyright

|

| 20 |

+

and certain other rights specified in the public license below. The

|

| 21 |

+

following considerations are for informational purposes only, are not

|

| 22 |

+

exhaustive, and do not form part of our licenses.

|

| 23 |

+

|

| 24 |

+

     Considerations for licensors: Our public licenses are
     intended for use by those authorized to give the public
     permission to use material in ways otherwise restricted by
     copyright and certain other rights. Our licenses are
     irrevocable. Licensors should read and understand the terms
     and conditions of the license they choose before applying it.
     Licensors should also secure all rights necessary before
     applying our licenses so that the public can reuse the
     material as expected. Licensors should clearly mark any
     material not subject to the license. This includes other CC-
     licensed material, or material used under an exception or
     limitation to copyright. More considerations for licensors:
     wiki.creativecommons.org/Considerations_for_licensors

     Considerations for the public: By using one of our public
     licenses, a licensor grants the public permission to use the
     licensed material under specified terms and conditions. If
     the licensor's permission is not necessary for any reason--for
     example, because of any applicable exception or limitation to
     copyright--then that use is not regulated by the license. Our
     licenses grant only permissions under copyright and certain
     other rights that a licensor has authority to grant. Use of
     the licensed material may still be restricted for other
     reasons, including because others have copyright or other
     rights in the material. A licensor may make special requests,
     such as asking that all changes be marked or described.
     Although not required by our licenses, you are encouraged to
     respect those requests where reasonable. More considerations
     for the public:
     wiki.creativecommons.org/Considerations_for_licensees

=======================================================================

Creative Commons Attribution-NonCommercial 4.0 International Public
License

By exercising the Licensed Rights (defined below), You accept and agree
to be bound by the terms and conditions of this Creative Commons
Attribution-NonCommercial 4.0 International Public License ("Public
License"). To the extent this Public License may be interpreted as a
contract, You are granted the Licensed Rights in consideration of Your
acceptance of these terms and conditions, and the Licensor grants You
such rights in consideration of benefits the Licensor receives from
making the Licensed Material available under these terms and
conditions.

Section 1 -- Definitions.

  a. Adapted Material means material subject to Copyright and Similar
     Rights that is derived from or based upon the Licensed Material
     and in which the Licensed Material is translated, altered,
     arranged, transformed, or otherwise modified in a manner requiring
     permission under the Copyright and Similar Rights held by the
     Licensor. For purposes of this Public License, where the Licensed
     Material is a musical work, performance, or sound recording,
     Adapted Material is always produced where the Licensed Material is
     synched in timed relation with a moving image.

  b. Adapter's License means the license You apply to Your Copyright
     and Similar Rights in Your contributions to Adapted Material in
     accordance with the terms and conditions of this Public License.

  c. Copyright and Similar Rights means copyright and/or similar rights
     closely related to copyright including, without limitation,
     performance, broadcast, sound recording, and Sui Generis Database
     Rights, without regard to how the rights are labeled or
     categorized. For purposes of this Public License, the rights
     specified in Section 2(b)(1)-(2) are not Copyright and Similar
     Rights.
  d. Effective Technological Measures means those measures that, in the
     absence of proper authority, may not be circumvented under laws
     fulfilling obligations under Article 11 of the WIPO Copyright
     Treaty adopted on December 20, 1996, and/or similar international
     agreements.

  e. Exceptions and Limitations means fair use, fair dealing, and/or
     any other exception or limitation to Copyright and Similar Rights
     that applies to Your use of the Licensed Material.

  f. Licensed Material means the artistic or literary work, database,
     or other material to which the Licensor applied this Public
     License.

  g. Licensed Rights means the rights granted to You subject to the
     terms and conditions of this Public License, which are limited to
     all Copyright and Similar Rights that apply to Your use of the
     Licensed Material and that the Licensor has authority to license.

  h. Licensor means the individual(s) or entity(ies) granting rights
     under this Public License.

  i. NonCommercial means not primarily intended for or directed towards
     commercial advantage or monetary compensation. For purposes of
     this Public License, the exchange of the Licensed Material for
     other material subject to Copyright and Similar Rights by digital
     file-sharing or similar means is NonCommercial provided there is
     no payment of monetary compensation in connection with the
     exchange.

  j. Share means to provide material to the public by any means or
     process that requires permission under the Licensed Rights, such
     as reproduction, public display, public performance, distribution,
     dissemination, communication, or importation, and to make material
     available to the public including in ways that members of the
     public may access the material from a place and at a time
     individually chosen by them.

  k. Sui Generis Database Rights means rights other than copyright
     resulting from Directive 96/9/EC of the European Parliament and of
     the Council of 11 March 1996 on the legal protection of databases,
     as amended and/or succeeded, as well as other essentially
     equivalent rights anywhere in the world.

  l. You means the individual or entity exercising the Licensed Rights
     under this Public License. Your has a corresponding meaning.

Section 2 -- Scope.

  a. License grant.

       1. Subject to the terms and conditions of this Public License,
          the Licensor hereby grants You a worldwide, royalty-free,
          non-sublicensable, non-exclusive, irrevocable license to
          exercise the Licensed Rights in the Licensed Material to:

            a. reproduce and Share the Licensed Material, in whole or
               in part, for NonCommercial purposes only; and

            b. produce, reproduce, and Share Adapted Material for
               NonCommercial purposes only.

       2. Exceptions and Limitations. For the avoidance of doubt, where
          Exceptions and Limitations apply to Your use, this Public
          License does not apply, and You do not need to comply with
          its terms and conditions.

       3. Term. The term of this Public License is specified in Section
          6(a).

       4. Media and formats; technical modifications allowed. The
          Licensor authorizes You to exercise the Licensed Rights in
          all media and formats whether now known or hereafter created,
          and to make technical modifications necessary to do so. The
          Licensor waives and/or agrees not to assert any right or
          authority to forbid You from making technical modifications
          necessary to exercise the Licensed Rights, including
          technical modifications necessary to circumvent Effective
          Technological Measures. For purposes of this Public License,
          simply making modifications authorized by this Section 2(a)
          (4) never produces Adapted Material.

       5. Downstream recipients.

            a. Offer from the Licensor -- Licensed Material. Every
               recipient of the Licensed Material automatically
               receives an offer from the Licensor to exercise the
               Licensed Rights under the terms and conditions of this
               Public License.

            b. No downstream restrictions. You may not offer or impose
               any additional or different terms or conditions on, or
               apply any Effective Technological Measures to, the
               Licensed Material if doing so restricts exercise of the
               Licensed Rights by any recipient of the Licensed
               Material.

       6. No endorsement. Nothing in this Public License constitutes or
          may be construed as permission to assert or imply that You
          are, or that Your use of the Licensed Material is, connected
          with, or sponsored, endorsed, or granted official status by,
          the Licensor or others designated to receive attribution as
          provided in Section 3(a)(1)(A)(i).

  b. Other rights.

       1. Moral rights, such as the right of integrity, are not
          licensed under this Public License, nor are publicity,
          privacy, and/or other similar personality rights; however, to
          the extent possible, the Licensor waives and/or agrees not to
          assert any such rights held by the Licensor to the limited
          extent necessary to allow You to exercise the Licensed
          Rights, but not otherwise.

       2. Patent and trademark rights are not licensed under this
          Public License.

       3. To the extent possible, the Licensor waives any right to
          collect royalties from You for the exercise of the Licensed
          Rights, whether directly or through a collecting society
          under any voluntary or waivable statutory or compulsory
          licensing scheme. In all other cases the Licensor expressly
          reserves any right to collect such royalties, including when
          the Licensed Material is used other than for NonCommercial
          purposes.

Section 3 -- License Conditions.

Your exercise of the Licensed Rights is expressly made subject to the
following conditions.

  a. Attribution.

       1. If You Share the Licensed Material (including in modified
          form), You must:

            a. retain the following if it is supplied by the Licensor
               with the Licensed Material:

                 i. identification of the creator(s) of the Licensed
                    Material and any others designated to receive
                    attribution, in any reasonable manner requested by
                    the Licensor (including by pseudonym if
                    designated);

                ii. a copyright notice;

               iii. a notice that refers to this Public License;

                iv. a notice that refers to the disclaimer of
                    warranties;

                 v. a URI or hyperlink to the Licensed Material to the
                    extent reasonably practicable;

            b. indicate if You modified the Licensed Material and
               retain an indication of any previous modifications; and

            c. indicate the Licensed Material is licensed under this
               Public License, and include the text of, or the URI or
               hyperlink to, this Public License.

       2. You may satisfy the conditions in Section 3(a)(1) in any
          reasonable manner based on the medium, means, and context in
          which You Share the Licensed Material. For example, it may be
          reasonable to satisfy the conditions by providing a URI or
          hyperlink to a resource that includes the required
          information.

       3. If requested by the Licensor, You must remove any of the
          information required by Section 3(a)(1)(A) to the extent
          reasonably practicable.

       4. If You Share Adapted Material You produce, the Adapter's
          License You apply must not prevent recipients of the Adapted
          Material from complying with this Public License.

Section 4 -- Sui Generis Database Rights.

Where the Licensed Rights include Sui Generis Database Rights that
apply to Your use of the Licensed Material:

  a. for the avoidance of doubt, Section 2(a)(1) grants You the right
     to extract, reuse, reproduce, and Share all or a substantial
     portion of the contents of the database for NonCommercial purposes
     only;

  b. if You include all or a substantial portion of the database
     contents in a database in which You have Sui Generis Database
     Rights, then the database in which You have Sui Generis Database
     Rights (but not its individual contents) is Adapted Material; and

  c. You must comply with the conditions in Section 3(a) if You Share
     all or a substantial portion of the contents of the database.

For the avoidance of doubt, this Section 4 supplements and does not
replace Your obligations under this Public License where the Licensed
Rights include other Copyright and Similar Rights.

Section 5 -- Disclaimer of Warranties and Limitation of Liability.

  a. UNLESS OTHERWISE SEPARATELY UNDERTAKEN BY THE LICENSOR, TO THE
     EXTENT POSSIBLE, THE LICENSOR OFFERS THE LICENSED MATERIAL AS-IS
     AND AS-AVAILABLE, AND MAKES NO REPRESENTATIONS OR WARRANTIES OF
     ANY KIND CONCERNING THE LICENSED MATERIAL, WHETHER EXPRESS,
     IMPLIED, STATUTORY, OR OTHER. THIS INCLUDES, WITHOUT LIMITATION,
     WARRANTIES OF TITLE, MERCHANTABILITY, FITNESS FOR A PARTICULAR
     PURPOSE, NON-INFRINGEMENT, ABSENCE OF LATENT OR OTHER DEFECTS,
     ACCURACY, OR THE PRESENCE OR ABSENCE OF ERRORS, WHETHER OR NOT
     KNOWN OR DISCOVERABLE. WHERE DISCLAIMERS OF WARRANTIES ARE NOT
     ALLOWED IN FULL OR IN PART, THIS DISCLAIMER MAY NOT APPLY TO YOU.

  b. TO THE EXTENT POSSIBLE, IN NO EVENT WILL THE LICENSOR BE LIABLE
     TO YOU ON ANY LEGAL THEORY (INCLUDING, WITHOUT LIMITATION,
     NEGLIGENCE) OR OTHERWISE FOR ANY DIRECT, SPECIAL, INDIRECT,
     INCIDENTAL, CONSEQUENTIAL, PUNITIVE, EXEMPLARY, OR OTHER LOSSES,
     COSTS, EXPENSES, OR DAMAGES ARISING OUT OF THIS PUBLIC LICENSE OR
     USE OF THE LICENSED MATERIAL, EVEN IF THE LICENSOR HAS BEEN
     ADVISED OF THE POSSIBILITY OF SUCH LOSSES, COSTS, EXPENSES, OR
     DAMAGES. WHERE A LIMITATION OF LIABILITY IS NOT ALLOWED IN FULL OR
     IN PART, THIS LIMITATION MAY NOT APPLY TO YOU.

  c. The disclaimer of warranties and limitation of liability provided
     above shall be interpreted in a manner that, to the extent
     possible, most closely approximates an absolute disclaimer and
     waiver of all liability.

Section 6 -- Term and Termination.

  a. This Public License applies for the term of the Copyright and
     Similar Rights licensed here. However, if You fail to comply with
     this Public License, then Your rights under this Public License
     terminate automatically.

  b. Where Your right to use the Licensed Material has terminated under
     Section 6(a), it reinstates:

       1. automatically as of the date the violation is cured, provided
          it is cured within 30 days of Your discovery of the
          violation; or

       2. upon express reinstatement by the Licensor.

     For the avoidance of doubt, this Section 6(b) does not affect any
     right the Licensor may have to seek remedies for Your violations
     of this Public License.

  c. For the avoidance of doubt, the Licensor may also offer the
     Licensed Material under separate terms or conditions or stop
     distributing the Licensed Material at any time; however, doing so
     will not terminate this Public License.

  d. Sections 1, 5, 6, 7, and 8 survive termination of this Public
     License.

Section 7 -- Other Terms and Conditions.

  a. The Licensor shall not be bound by any additional or different
     terms or conditions communicated by You unless expressly agreed.

  b. Any arrangements, understandings, or agreements regarding the
     Licensed Material not stated herein are separate from and