uploadfile first

This view is limited to 50 files because it contains too many changes.

- text-generation-webui/.dockerignore +9 -0

- text-generation-webui/.env.example +25 -0

- text-generation-webui/.github/FUNDING.yml +1 -0

- text-generation-webui/.github/ISSUE_TEMPLATE/bug_report_template.yml +53 -0

- text-generation-webui/.github/ISSUE_TEMPLATE/feature_request.md +16 -0

- text-generation-webui/.github/dependabot.yml +11 -0

- text-generation-webui/.github/workflows/stale.yml +22 -0

- text-generation-webui/.gitignore +27 -0

- text-generation-webui/Dockerfile.tt +68 -0

- text-generation-webui/LICENSE +661 -0

- text-generation-webui/README.md +310 -0

- text-generation-webui/api-example-stream.py +92 -0

- text-generation-webui/api-example.py +57 -0

- text-generation-webui/cache/Example.png_cache.png +0 -0

- text-generation-webui/characters/Example.png +0 -0

- text-generation-webui/characters/Example.yaml +16 -0

- text-generation-webui/characters/instruction-following/Alpaca.yaml +3 -0

- text-generation-webui/characters/instruction-following/ChatGLM.yaml +3 -0

- text-generation-webui/characters/instruction-following/Koala.yaml +3 -0

- text-generation-webui/characters/instruction-following/Open Assistant.yaml +3 -0

- text-generation-webui/characters/instruction-following/Vicuna.yaml +3 -0

- text-generation-webui/convert-to-flexgen.py +63 -0

- text-generation-webui/convert-to-safetensors.py +38 -0

- text-generation-webui/css/chat.css +43 -0

- text-generation-webui/css/chat.js +4 -0

- text-generation-webui/css/html_4chan_style.css +103 -0

- text-generation-webui/css/html_bubble_chat_style.css +86 -0

- text-generation-webui/css/html_cai_style.css +91 -0

- text-generation-webui/css/html_instruct_style.css +73 -0

- text-generation-webui/css/html_readable_style.css +28 -0

- text-generation-webui/css/main.css +79 -0

- text-generation-webui/css/main.js +18 -0

- text-generation-webui/docker-compose.yml +31 -0

- text-generation-webui/download-model.py +270 -0

- text-generation-webui/extensions/api/requirements.txt +1 -0

- text-generation-webui/extensions/api/script.py +118 -0

- text-generation-webui/extensions/character_bias/script.py +82 -0

- text-generation-webui/extensions/elevenlabs_tts/outputs/outputs-will-be-saved-here.txt +0 -0

- text-generation-webui/extensions/elevenlabs_tts/requirements.txt +3 -0

- text-generation-webui/extensions/elevenlabs_tts/script.py +122 -0

- text-generation-webui/extensions/gallery/__pycache__/script.cpython-310.pyc +0 -0

- text-generation-webui/extensions/gallery/script.py +96 -0

- text-generation-webui/extensions/google_translate/requirements.txt +1 -0

- text-generation-webui/extensions/google_translate/script.py +46 -0

- text-generation-webui/extensions/llama_prompts/script.py +21 -0

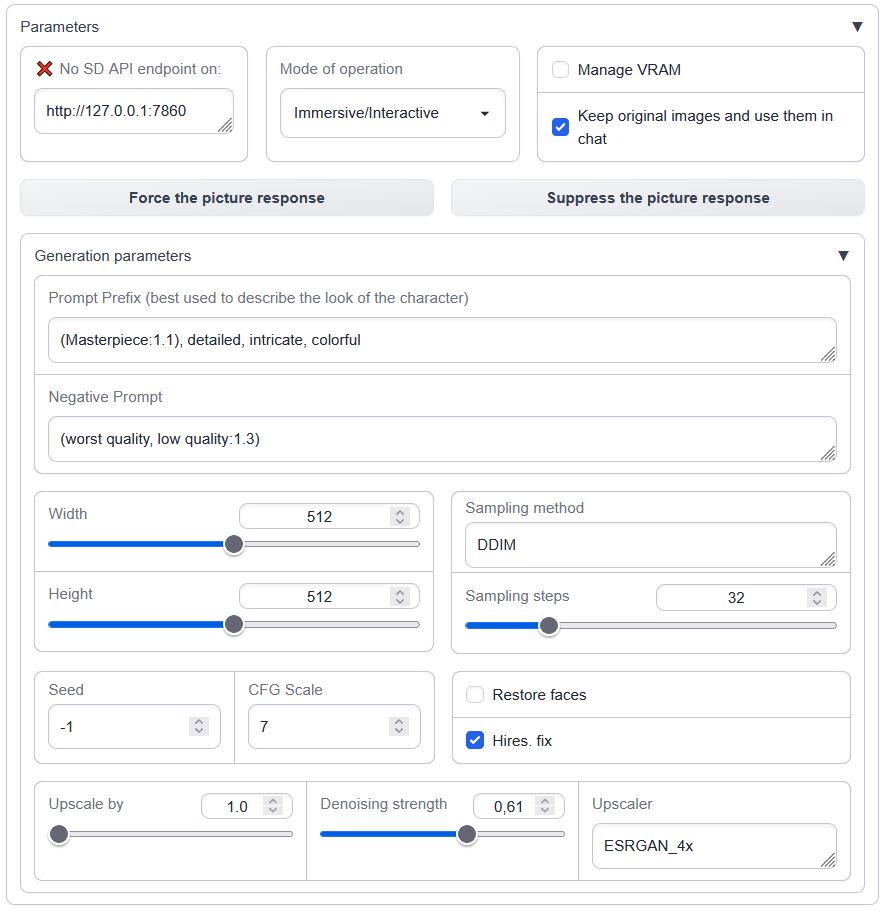

- text-generation-webui/extensions/sd_api_pictures/README.MD +90 -0

- text-generation-webui/extensions/sd_api_pictures/script.py +299 -0

- text-generation-webui/extensions/send_pictures/script.py +47 -0

- text-generation-webui/extensions/silero_tts/outputs/outputs-will-be-saved-here.txt +0 -0

- text-generation-webui/extensions/silero_tts/requirements.txt +5 -0

text-generation-webui/.dockerignore

ADDED

@@ -0,0 +1,9 @@
+.env
+Dockerfile
+/characters
+/loras
+/models
+/presets
+/prompts
+/softprompts
+/training

text-generation-webui/.env.example

ADDED

@@ -0,0 +1,25 @@
+# by default the Dockerfile specifies these versions: 3.5;5.0;6.0;6.1;7.0;7.5;8.0;8.6+PTX
+# however for me to work i had to specify the exact version for my card ( 2060 ) it was 7.5
+# https://developer.nvidia.com/cuda-gpus you can find the version for your card here
+TORCH_CUDA_ARCH_LIST=7.5
+
+# these commands worked for me with roughly 4.5GB of vram
+CLI_ARGS=--model llama-7b-4bit --wbits 4 --listen --auto-devices
+
+# the following examples have been tested with the files linked in docs/README_docker.md:
+# example running 13b with 4bit/128 groupsize : CLI_ARGS=--model llama-13b-4bit-128g --wbits 4 --listen --groupsize 128 --pre_layer 25
+# example with loading api extension and public share: CLI_ARGS=--model llama-7b-4bit --wbits 4 --listen --auto-devices --no-stream --extensions api --share
+# example running 7b with 8bit groupsize : CLI_ARGS=--model llama-7b --load-in-8bit --listen --auto-devices
+
+# the port the webui binds to on the host
+HOST_PORT=7860
+# the port the webui binds to inside the container
+CONTAINER_PORT=7860
+
+# the port the api binds to on the host
+HOST_API_PORT=5000
+# the port the api binds to inside the container
+CONTAINER_API_PORT=5000
+
+# the version used to install text-generation-webui from
+WEBUI_VERSION=HEAD
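The `.env.example` file above holds plain `KEY=VALUE` pairs. As a rough illustration only (this is not the project's loader, and it is much simpler than what Docker Compose does when it reads a `.env` file), the Python sketch below parses a few of those lines and shows how `CLI_ARGS` would split into separate arguments. The helper name `parse_env` and the `host:container` port string are assumptions made for this example; the compose file that consumes these variables is not shown in this part of the diff.

```python
import shlex

# A few lines copied from the .env.example hunk above.
ENV_TEXT = """\
# these commands worked for me with roughly 4.5GB of vram
CLI_ARGS=--model llama-7b-4bit --wbits 4 --listen --auto-devices

# the port the webui binds to on the host
HOST_PORT=7860
# the port the webui binds to inside the container
CONTAINER_PORT=7860
"""


def parse_env(text):
    """Parse simple KEY=VALUE lines, skipping blank lines and # comments.

    Hypothetical helper: quoting, escapes and variable expansion are ignored.
    """
    values = {}
    for raw in text.splitlines():
        line = raw.strip()
        if not line or line.startswith("#"):
            continue
        # Split on the first "=" only, so values may themselves contain "=".
        key, sep, value = line.partition("=")
        if sep:
            values[key.strip()] = value.strip()
    return values


env = parse_env(ENV_TEXT)

# CLI_ARGS is one string; a shell would split it into separate arguments.
cli_args = shlex.split(env["CLI_ARGS"])

# Assumed host:container pairing, based on the comments in the file.
port_mapping = f'{env["HOST_PORT"]}:{env["CONTAINER_PORT"]}'

print(cli_args)
print(port_mapping)
```

Splitting on the first `=` only is a deliberate choice here, since a value such as a URL or a flag like `--opt=value` can contain further `=` characters.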

text-generation-webui/.github/FUNDING.yml

ADDED

@@ -0,0 +1 @@
+ko_fi: oobabooga

text-generation-webui/.github/ISSUE_TEMPLATE/bug_report_template.yml

ADDED

@@ -0,0 +1,53 @@
+name: "Bug report"
+description: Report a bug
+labels: [ "bug" ]
+body:
+  - type: markdown
+    attributes:
+      value: |
+        Thanks for taking the time to fill out this bug report!
+  - type: textarea
+    id: bug-description
+    attributes:
+      label: Describe the bug
+      description: A clear and concise description of what the bug is.
+      placeholder: Bug description
+    validations:
+      required: true
+  - type: checkboxes
+    attributes:
+      label: Is there an existing issue for this?
+      description: Please search to see if an issue already exists for the issue you encountered.
+      options:
+        - label: I have searched the existing issues
+          required: true
+  - type: textarea
+    id: reproduction
+    attributes:
+      label: Reproduction
+      description: Please provide the steps necessary to reproduce your issue.
+      placeholder: Reproduction
+    validations:
+      required: true
+  - type: textarea
+    id: screenshot
+    attributes:
+      label: Screenshot
+      description: "If possible, please include screenshot(s) so that we can understand what the issue is."
+  - type: textarea
+    id: logs
+    attributes:
+      label: Logs
+      description: "Please include the full stacktrace of the errors you get in the command-line (if any)."
+      render: shell
+    validations:
+      required: true
+  - type: textarea
+    id: system-info
+    attributes:
+      label: System Info
+      description: "Please share your system info with us: operating system, GPU brand, and GPU model. If you are using a Google Colab notebook, mention that instead."
+      render: shell
+      placeholder:
+    validations:
+      required: true

text-generation-webui/.github/ISSUE_TEMPLATE/feature_request.md

ADDED

@@ -0,0 +1,16 @@
+---
+name: Feature request
+about: Suggest an improvement or new feature for the web UI
+title: ''
+labels: 'enhancement'
+assignees: ''
+
+---
+
+**Description**
+
+A clear and concise description of what you want to be implemented.
+
+**Additional Context**
+
+If applicable, please provide any extra information, external links, or screenshots that could be useful.

text-generation-webui/.github/dependabot.yml

ADDED

@@ -0,0 +1,11 @@
+# To get started with Dependabot version updates, you'll need to specify which
+# package ecosystems to update and where the package manifests are located.
+# Please see the documentation for all configuration options:
+# https://docs.github.com/github/administering-a-repository/configuration-options-for-dependency-updates
+
+version: 2
+updates:
+  - package-ecosystem: "pip" # See documentation for possible values
+    directory: "/" # Location of package manifests
+    schedule:
+      interval: "weekly"

text-generation-webui/.github/workflows/stale.yml

ADDED

@@ -0,0 +1,22 @@
+name: Close inactive issues
+on:
+  schedule:
+    - cron: "10 23 * * *"
+
+jobs:
+  close-issues:
+    runs-on: ubuntu-latest
+    permissions:
+      issues: write
+      pull-requests: write
+    steps:
+      - uses: actions/stale@v5
+        with:
+          stale-issue-message: ""
+          close-issue-message: "This issue has been closed due to inactivity for 30 days. If you believe it is still relevant, please leave a comment below."
+          days-before-issue-stale: 30
+          days-before-issue-close: 0
+          stale-issue-label: "stale"
+          days-before-pr-stale: -1
+          days-before-pr-close: -1
+          repo-token: ${{ secrets.GITHUB_TOKEN }}

text-generation-webui/.gitignore

ADDED

@@ -0,0 +1,27 @@
+cache
+characters
+training/datasets
+extensions/silero_tts/outputs
+extensions/elevenlabs_tts/outputs
+extensions/sd_api_pictures/outputs
+logs
+loras
+models
+repositories
+softprompts
+torch-dumps
+*pycache*
+*/*pycache*
+*/*/pycache*
+venv/
+.venv/
+.vscode
+*.bak
+*.ipynb
+*.log
+
+settings.json
+img_bot*
+img_me*
+prompts/[0-9]*
+models/config-user.yaml
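To see roughly how a few of the `.gitignore` patterns above behave, the sketch below approximates gitignore matching with Python's `fnmatch`. It is a simplification written for this example, not Git's matcher: the function name `matches` is hypothetical, negation (`!`), `**`, and the directory-only meaning of a trailing `/` are all ignored. It relies on two documented gitignore rules: a pattern with no slash may match a name at any depth, while a pattern containing a slash is matched relative to the directory that holds the `.gitignore`.

```python
from fnmatch import fnmatchcase


def matches(pattern, path):
    """Approximate gitignore matching for the simple patterns in this file.

    Hypothetical helper: no negation, no "**", trailing "/" treated loosely.
    """
    parts = path.strip("/").split("/")
    pat = pattern.rstrip("/")
    if "/" not in pat:
        # No slash: the pattern may match any single path component.
        return any(fnmatchcase(part, pat) for part in parts)
    # With a slash: anchored at the .gitignore directory and compared
    # component by component; matching a directory ignores its contents.
    pat_parts = pat.strip("/").split("/")
    if len(parts) < len(pat_parts):
        return False
    return all(fnmatchcase(p, q) for p, q in zip(parts, pat_parts))


cached = "extensions/gallery/__pycache__/script.cpython-310.pyc"

print(matches("*pycache*", cached))       # True: "__pycache__" contains "pycache"
print(matches("*/*/pycache*", cached))    # False: "__pycache__" does not start with "pycache"
print(matches("prompts/[0-9]*", "prompts/2023-04-01.txt"))  # True
print(matches("prompts/[0-9]*", "prompts/QA.txt"))          # False
```

Under this approximation the slash-free `*pycache*` entry already covers cache directories at any depth, and `prompts/[0-9]*` only catches prompt files whose names begin with a digit.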

text-generation-webui/Dockerfile.tt

ADDED

@@ -0,0 +1,68 @@
+FROM nvidia/cuda:11.8.0-devel-ubuntu22.04 as builder
+
+RUN apt-get update && \
+    apt-get install --no-install-recommends -y git vim build-essential python3-dev python3-venv && \
+    rm -rf /var/lib/apt/lists/*
+
+RUN git clone https://github.com/oobabooga/GPTQ-for-LLaMa /build
+
+WORKDIR /build
+
+RUN python3 -m venv /build/venv
+RUN . /build/venv/bin/activate && \
+    pip3 install --upgrade pip setuptools && \
+    pip3 install torch torchvision torchaudio && \
+    pip3 install -r requirements.txt
+
+# https://developer.nvidia.com/cuda-gpus
+# for a rtx 2060: ARG TORCH_CUDA_ARCH_LIST="7.5"
+ARG TORCH_CUDA_ARCH_LIST="3.5;5.0;6.0;6.1;7.0;7.5;8.0;8.6+PTX"
+RUN . /build/venv/bin/activate && \
+    python3 setup_cuda.py bdist_wheel -d .
+
+FROM nvidia/cuda:11.8.0-runtime-ubuntu22.04
+
+LABEL maintainer="Your Name <your.email@example.com>"
+LABEL description="Docker image for GPTQ-for-LLaMa and Text Generation WebUI"
+
+RUN apt-get update && \
+    apt-get install --no-install-recommends -y libportaudio2 libasound-dev git python3 python3-pip make g++ && \
+    rm -rf /var/lib/apt/lists/*
+
+RUN --mount=type=cache,target=/root/.cache/pip pip3 install virtualenv
+RUN mkdir /app
+
+WORKDIR /app
+
+ARG WEBUI_VERSION
+RUN test -n "${WEBUI_VERSION}" && git reset --hard ${WEBUI_VERSION} || echo "Using provided webui source"
+
+RUN virtualenv /app/venv
+RUN . /app/venv/bin/activate && \
+    pip3 install --upgrade pip setuptools && \
+    pip3 install torch torchvision torchaudio
+
+COPY --from=builder /build /app/repositories/GPTQ-for-LLaMa
+RUN . /app/venv/bin/activate && \
+    pip3 install /app/repositories/GPTQ-for-LLaMa/*.whl
+
+COPY extensions/api/requirements.txt /app/extensions/api/requirements.txt
+COPY extensions/elevenlabs_tts/requirements.txt /app/extensions/elevenlabs_tts/requirements.txt
+COPY extensions/google_translate/requirements.txt /app/extensions/google_translate/requirements.txt
+COPY extensions/silero_tts/requirements.txt /app/extensions/silero_tts/requirements.txt
+COPY extensions/whisper_stt/requirements.txt /app/extensions/whisper_stt/requirements.txt
+RUN --mount=type=cache,target=/root/.cache/pip . /app/venv/bin/activate && cd extensions/api && pip3 install -r requirements.txt
+RUN --mount=type=cache,target=/root/.cache/pip . /app/venv/bin/activate && cd extensions/elevenlabs_tts && pip3 install -r requirements.txt
+RUN --mount=type=cache,target=/root/.cache/pip . /app/venv/bin/activate && cd extensions/google_translate && pip3 install -r requirements.txt
+RUN --mount=type=cache,target=/root/.cache/pip . /app/venv/bin/activate && cd extensions/silero_tts && pip3 install -r requirements.txt
+RUN --mount=type=cache,target=/root/.cache/pip . /app/venv/bin/activate && cd extensions/whisper_stt && pip3 install -r requirements.txt
+
+COPY requirements.txt /app/requirements.txt
+RUN . /app/venv/bin/activate && \
+    pip3 install -r requirements.txt
+
+RUN cp /app/venv/lib/python3.10/site-packages/bitsandbytes/libbitsandbytes_cuda118.so /app/venv/lib/python3.10/site-packages/bitsandbytes/libbitsandbytes_cpu.so
+
+COPY . /app/
+ENV CLI_ARGS=""
+CMD . /app/venv/bin/activate && python3 server.py ${CLI_ARGS}
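The `TORCH_CUDA_ARCH_LIST` build argument above is a semicolon-separated list of CUDA compute capabilities, where a `+PTX` suffix asks the build to also embed PTX that newer GPUs can JIT-compile. The Python sketch below is only an illustration of how such a list can be read and checked against a card's capability; the function names `parse_arch_list` and `coverage` are hypothetical, and the check is deliberately simplified (it does not model binary compatibility between minor versions of the same major architecture).

```python
def parse_arch_list(value):
    """Turn "7.5;8.6+PTX" into [((7, 5), False), ((8, 6), True)]."""
    entries = []
    # The list may be separated by semicolons or spaces.
    for item in value.replace(" ", ";").split(";"):
        if not item:
            continue
        ptx = item.endswith("+PTX")
        version = item[: -len("+PTX")] if ptx else item
        major, minor = version.split(".")
        entries.append(((int(major), int(minor)), ptx))
    return entries


def coverage(arch_list, capability):
    """Classify how a GPU with the given (major, minor) capability is served.

    Simplified, hypothetical check:
      "native" - the exact capability is listed
      "ptx"    - a +PTX entry at or below the capability can be JIT-compiled
      "none"   - neither applies
    """
    entries = parse_arch_list(arch_list)
    if any(cc == capability for cc, _ in entries):
        return "native"
    if any(ptx and cc <= capability for cc, ptx in entries):
        return "ptx"
    return "none"


DEFAULT_LIST = "3.5;5.0;6.0;6.1;7.0;7.5;8.0;8.6+PTX"  # value from the Dockerfile

print(coverage(DEFAULT_LIST, (7, 5)))  # native: 7.5 (e.g. an RTX 2060) is listed
print(coverage(DEFAULT_LIST, (8, 9)))  # ptx: falls back to the 8.6+PTX entry
print(coverage("7.5", (8, 6)))         # none: a 7.5-only build has no entry for 8.6
```

A build restricted to a single capability, as in the `.env.example` setting `TORCH_CUDA_ARCH_LIST=7.5`, compiles less code but targets only that architecture.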

text-generation-webui/LICENSE

ADDED

@@ -0,0 +1,661 @@
+GNU AFFERO GENERAL PUBLIC LICENSE
+Version 3, 19 November 2007
+
+Copyright (C) 2007 Free Software Foundation, Inc. <https://fsf.org/>
+Everyone is permitted to copy and distribute verbatim copies
+of this license document, but changing it is not allowed.
+
+Preamble
+
+The GNU Affero General Public License is a free, copyleft license for
+software and other kinds of works, specifically designed to ensure
+cooperation with the community in the case of network server software.
+
+The licenses for most software and other practical works are designed
+to take away your freedom to share and change the works. By contrast,
+our General Public Licenses are intended to guarantee your freedom to
+share and change all versions of a program--to make sure it remains free
+software for all its users.
+
+When we speak of free software, we are referring to freedom, not
+price. Our General Public Licenses are designed to make sure that you
+have the freedom to distribute copies of free software (and charge for
+them if you wish), that you receive source code or can get it if you
+want it, that you can change the software or use pieces of it in new
+free programs, and that you know you can do these things.
+
+Developers that use our General Public Licenses protect your rights
+with two steps: (1) assert copyright on the software, and (2) offer
+you this License which gives you legal permission to copy, distribute
+and/or modify the software.
+
+A secondary benefit of defending all users' freedom is that
+improvements made in alternate versions of the program, if they
+receive widespread use, become available for other developers to
+incorporate. Many developers of free software are heartened and
+encouraged by the resulting cooperation. However, in the case of
+software used on network servers, this result may fail to come about.
+The GNU General Public License permits making a modified version and
+letting the public access it on a server without ever releasing its
+source code to the public.
+
+The GNU Affero General Public License is designed specifically to
+ensure that, in such cases, the modified source code becomes available
+to the community. It requires the operator of a network server to
+provide the source code of the modified version running there to the
+users of that server. Therefore, public use of a modified version, on
+a publicly accessible server, gives the public access to the source
+code of the modified version.
+
+An older license, called the Affero General Public License and
+published by Affero, was designed to accomplish similar goals. This is
+a different license, not a version of the Affero GPL, but Affero has
+released a new version of the Affero GPL which permits relicensing under
+this license.
+
+The precise terms and conditions for copying, distribution and
+modification follow.
+
+TERMS AND CONDITIONS
+
+0. Definitions.
+
+"This License" refers to version 3 of the GNU Affero General Public License.
+
+"Copyright" also means copyright-like laws that apply to other kinds of
+works, such as semiconductor masks.
+
+"The Program" refers to any copyrightable work licensed under this
+License. Each licensee is addressed as "you". "Licensees" and
+"recipients" may be individuals or organizations.
+
+To "modify" a work means to copy from or adapt all or part of the work
+in a fashion requiring copyright permission, other than the making of an
+exact copy. The resulting work is called a "modified version" of the
+earlier work or a work "based on" the earlier work.
+
+A "covered work" means either the unmodified Program or a work based
+on the Program.
+
+To "propagate" a work means to do anything with it that, without
+permission, would make you directly or secondarily liable for
+infringement under applicable copyright law, except executing it on a
+computer or modifying a private copy. Propagation includes copying,
+distribution (with or without modification), making available to the
+public, and in some countries other activities as well.
+
+To "convey" a work means any kind of propagation that enables other
+parties to make or receive copies. Mere interaction with a user through
+a computer network, with no transfer of a copy, is not conveying.
+
+An interactive user interface displays "Appropriate Legal Notices"
+to the extent that it includes a convenient and prominently visible
+feature that (1) displays an appropriate copyright notice, and (2)
+tells the user that there is no warranty for the work (except to the
+extent that warranties are provided), that licensees may convey the
+work under this License, and how to view a copy of this License. If
+the interface presents a list of user commands or options, such as a
+menu, a prominent item in the list meets this criterion.
+
+1. Source Code.
+
+The "source code" for a work means the preferred form of the work
+for making modifications to it. "Object code" means any non-source
+form of a work.
+
+A "Standard Interface" means an interface that either is an official
+standard defined by a recognized standards body, or, in the case of
+interfaces specified for a particular programming language, one that
+is widely used among developers working in that language.
+
+The "System Libraries" of an executable work include anything, other
+than the work as a whole, that (a) is included in the normal form of
+packaging a Major Component, but which is not part of that Major
+Component, and (b) serves only to enable use of the work with that
+Major Component, or to implement a Standard Interface for which an
+implementation is available to the public in source code form. A
+"Major Component", in this context, means a major essential component
+(kernel, window system, and so on) of the specific operating system
+(if any) on which the executable work runs, or a compiler used to
+produce the work, or an object code interpreter used to run it.
+
+The "Corresponding Source" for a work in object code form means all
+the source code needed to generate, install, and (for an executable
+work) run the object code and to modify the work, including scripts to
+control those activities. However, it does not include the work's
+System Libraries, or general-purpose tools or generally available free
+programs which are used unmodified in performing those activities but
+which are not part of the work. For example, Corresponding Source
+includes interface definition files associated with source files for
+the work, and the source code for shared libraries and dynamically
+linked subprograms that the work is specifically designed to require,
+such as by intimate data communication or control flow between those
+subprograms and other parts of the work.
+
+The Corresponding Source need not include anything that users
+can regenerate automatically from other parts of the Corresponding
+Source.
+
+The Corresponding Source for a work in source code form is that
+same work.
+
+2. Basic Permissions.
+
+All rights granted under this License are granted for the term of
+copyright on the Program, and are irrevocable provided the stated
+conditions are met. This License explicitly affirms your unlimited
+permission to run the unmodified Program. The output from running a
+covered work is covered by this License only if the output, given its
+content, constitutes a covered work. This License acknowledges your
+rights of fair use or other equivalent, as provided by copyright law.
+
+You may make, run and propagate covered works that you do not
+convey, without conditions so long as your license otherwise remains
+in force. You may convey covered works to others for the sole purpose
+of having them make modifications exclusively for you, or provide you
+with facilities for running those works, provided that you comply with
+the terms of this License in conveying all material for which you do
+not control copyright. Those thus making or running the covered works
+for you must do so exclusively on your behalf, under your direction
+and control, on terms that prohibit them from making any copies of
+your copyrighted material outside their relationship with you.
+
+Conveying under any other circumstances is permitted solely under
+the conditions stated below. Sublicensing is not allowed; section 10
+makes it unnecessary.
+
+3. Protecting Users' Legal Rights From Anti-Circumvention Law.
+
+No covered work shall be deemed part of an effective technological
+measure under any applicable law fulfilling obligations under article
+11 of the WIPO copyright treaty adopted on 20 December 1996, or
+similar laws prohibiting or restricting circumvention of such

|

| 173 |

+

measures.

|

| 174 |

+

|

| 175 |

+

When you convey a covered work, you waive any legal power to forbid

|

| 176 |

+

circumvention of technological measures to the extent such circumvention

|

| 177 |

+

is effected by exercising rights under this License with respect to

|

| 178 |

+

the covered work, and you disclaim any intention to limit operation or

|

| 179 |

+

modification of the work as a means of enforcing, against the work's

|

| 180 |

+

users, your or third parties' legal rights to forbid circumvention of

|

| 181 |

+

technological measures.

|

| 182 |

+

|

| 183 |

+

4. Conveying Verbatim Copies.

|

| 184 |

+

|

| 185 |

+

You may convey verbatim copies of the Program's source code as you

|

| 186 |

+

receive it, in any medium, provided that you conspicuously and

|

| 187 |

+

appropriately publish on each copy an appropriate copyright notice;

|

| 188 |

+

keep intact all notices stating that this License and any

|

| 189 |

+

non-permissive terms added in accord with section 7 apply to the code;

|

| 190 |

+

keep intact all notices of the absence of any warranty; and give all

|

| 191 |

+

recipients a copy of this License along with the Program.

|

| 192 |

+

|

| 193 |

+

You may charge any price or no price for each copy that you convey,

|

| 194 |

+

and you may offer support or warranty protection for a fee.

|

| 195 |

+

|

| 196 |

+

5. Conveying Modified Source Versions.

|

| 197 |

+

|

| 198 |

+

You may convey a work based on the Program, or the modifications to

|

| 199 |

+

produce it from the Program, in the form of source code under the

|

| 200 |

+

terms of section 4, provided that you also meet all of these conditions:

|

| 201 |

+

|

| 202 |

+

a) The work must carry prominent notices stating that you modified

|

| 203 |

+

it, and giving a relevant date.

|

| 204 |

+

|

| 205 |

+

b) The work must carry prominent notices stating that it is

|

| 206 |

+

released under this License and any conditions added under section

|

| 207 |

+

7. This requirement modifies the requirement in section 4 to

|

| 208 |

+

"keep intact all notices".

|

| 209 |

+

|

| 210 |

+

c) You must license the entire work, as a whole, under this

|

| 211 |

+

License to anyone who comes into possession of a copy. This

|

| 212 |

+

License will therefore apply, along with any applicable section 7

|

| 213 |

+

additional terms, to the whole of the work, and all its parts,

|

| 214 |

+

regardless of how they are packaged. This License gives no

|

| 215 |

+

permission to license the work in any other way, but it does not

|

| 216 |

+

invalidate such permission if you have separately received it.

|

| 217 |

+

|

| 218 |

+

d) If the work has interactive user interfaces, each must display

|

| 219 |

+

Appropriate Legal Notices; however, if the Program has interactive

|

| 220 |

+

interfaces that do not display Appropriate Legal Notices, your

|

| 221 |

+

work need not make them do so.

|

| 222 |

+

|

| 223 |

+

A compilation of a covered work with other separate and independent

|

| 224 |

+

works, which are not by their nature extensions of the covered work,

|

| 225 |

+

and which are not combined with it such as to form a larger program,

|

| 226 |

+

in or on a volume of a storage or distribution medium, is called an

|

| 227 |

+

"aggregate" if the compilation and its resulting copyright are not

|

| 228 |

+

used to limit the access or legal rights of the compilation's users

|

| 229 |

+

beyond what the individual works permit. Inclusion of a covered work

|

| 230 |

+

in an aggregate does not cause this License to apply to the other

|

| 231 |

+

parts of the aggregate.

|

| 232 |

+

|

| 233 |

+

6. Conveying Non-Source Forms.

|

| 234 |

+

|

| 235 |

+

You may convey a covered work in object code form under the terms

|

| 236 |

+

of sections 4 and 5, provided that you also convey the

|

| 237 |

+

machine-readable Corresponding Source under the terms of this License,

|

| 238 |

+

in one of these ways:

|

| 239 |

+

|

| 240 |

+

a) Convey the object code in, or embodied in, a physical product

|

| 241 |

+

(including a physical distribution medium), accompanied by the

|

| 242 |

+

Corresponding Source fixed on a durable physical medium

|

| 243 |

+

customarily used for software interchange.

|

| 244 |

+

|

| 245 |

+

b) Convey the object code in, or embodied in, a physical product

|

| 246 |

+

(including a physical distribution medium), accompanied by a

|

| 247 |

+

written offer, valid for at least three years and valid for as

|

| 248 |

+

long as you offer spare parts or customer support for that product

|

| 249 |

+

model, to give anyone who possesses the object code either (1) a

|

| 250 |

+

copy of the Corresponding Source for all the software in the

|

| 251 |

+

product that is covered by this License, on a durable physical

|

| 252 |

+

medium customarily used for software interchange, for a price no

|

| 253 |

+

more than your reasonable cost of physically performing this

|

| 254 |

+

conveying of source, or (2) access to copy the

|

| 255 |

+

Corresponding Source from a network server at no charge.

|

| 256 |

+

|

| 257 |

+

c) Convey individual copies of the object code with a copy of the

|

| 258 |

+

written offer to provide the Corresponding Source. This

|

| 259 |

+

alternative is allowed only occasionally and noncommercially, and

|

| 260 |

+

only if you received the object code with such an offer, in accord

|

| 261 |

+

with subsection 6b.

|

| 262 |

+

|

| 263 |

+

d) Convey the object code by offering access from a designated

|

| 264 |

+

place (gratis or for a charge), and offer equivalent access to the

|

| 265 |

+

Corresponding Source in the same way through the same place at no

|

| 266 |

+

further charge. You need not require recipients to copy the

|

| 267 |

+

Corresponding Source along with the object code. If the place to

|

| 268 |

+

copy the object code is a network server, the Corresponding Source

|

| 269 |

+

may be on a different server (operated by you or a third party)

|

| 270 |

+

that supports equivalent copying facilities, provided you maintain

|

| 271 |

+

clear directions next to the object code saying where to find the

|

| 272 |

+

Corresponding Source. Regardless of what server hosts the

|

| 273 |

+

Corresponding Source, you remain obligated to ensure that it is

|

| 274 |

+

available for as long as needed to satisfy these requirements.

|

| 275 |

+

|

| 276 |

+

e) Convey the object code using peer-to-peer transmission, provided

|

| 277 |

+

you inform other peers where the object code and Corresponding

|

| 278 |

+

Source of the work are being offered to the general public at no

|

| 279 |

+

charge under subsection 6d.

|

| 280 |

+

|

| 281 |

+

A separable portion of the object code, whose source code is excluded

|

| 282 |

+

from the Corresponding Source as a System Library, need not be

|

| 283 |

+

included in conveying the object code work.

|

| 284 |

+

|

| 285 |

+

A "User Product" is either (1) a "consumer product", which means any

|

| 286 |

+

tangible personal property which is normally used for personal, family,

|

| 287 |

+

or household purposes, or (2) anything designed or sold for incorporation

|

| 288 |

+

into a dwelling. In determining whether a product is a consumer product,

|

| 289 |

+

doubtful cases shall be resolved in favor of coverage. For a particular

|

| 290 |

+

product received by a particular user, "normally used" refers to a

|

| 291 |

+

typical or common use of that class of product, regardless of the status

|

| 292 |

+

of the particular user or of the way in which the particular user

|

| 293 |

+

actually uses, or expects or is expected to use, the product. A product

|

| 294 |

+

is a consumer product regardless of whether the product has substantial

|

| 295 |

+

commercial, industrial or non-consumer uses, unless such uses represent

|

| 296 |

+

the only significant mode of use of the product.

|

| 297 |

+

|

| 298 |

+

"Installation Information" for a User Product means any methods,

|

| 299 |

+

procedures, authorization keys, or other information required to install

|

| 300 |

+

and execute modified versions of a covered work in that User Product from

|

| 301 |

+

a modified version of its Corresponding Source. The information must

|

| 302 |

+

suffice to ensure that the continued functioning of the modified object

|

| 303 |

+

code is in no case prevented or interfered with solely because

|

| 304 |

+

modification has been made.

|

| 305 |

+

|

| 306 |

+

If you convey an object code work under this section in, or with, or

|

| 307 |

+

specifically for use in, a User Product, and the conveying occurs as

|

| 308 |

+

part of a transaction in which the right of possession and use of the

|

| 309 |

+

User Product is transferred to the recipient in perpetuity or for a

|

| 310 |

+

fixed term (regardless of how the transaction is characterized), the

|

| 311 |

+

Corresponding Source conveyed under this section must be accompanied

|

| 312 |

+

by the Installation Information. But this requirement does not apply

|

| 313 |

+

if neither you nor any third party retains the ability to install

|

| 314 |

+

modified object code on the User Product (for example, the work has

|

| 315 |

+

been installed in ROM).

|

| 316 |

+

|

| 317 |

+

The requirement to provide Installation Information does not include a

|

| 318 |

+

requirement to continue to provide support service, warranty, or updates

|

| 319 |

+

for a work that has been modified or installed by the recipient, or for

|

| 320 |

+

the User Product in which it has been modified or installed. Access to a

|

| 321 |

+

network may be denied when the modification itself materially and

|

| 322 |

+

adversely affects the operation of the network or violates the rules and

|

| 323 |

+

protocols for communication across the network.

|

| 324 |

+

|

| 325 |

+

Corresponding Source conveyed, and Installation Information provided,

|

| 326 |

+

in accord with this section must be in a format that is publicly

|

| 327 |

+

documented (and with an implementation available to the public in

|

| 328 |

+

source code form), and must require no special password or key for

|

| 329 |

+

unpacking, reading or copying.

|

| 330 |

+

|

| 331 |

+

7. Additional Terms.

|

| 332 |

+

|

| 333 |

+

"Additional permissions" are terms that supplement the terms of this

|

| 334 |

+

License by making exceptions from one or more of its conditions.

|

| 335 |

+

Additional permissions that are applicable to the entire Program shall

|

| 336 |

+

be treated as though they were included in this License, to the extent

|

| 337 |

+

that they are valid under applicable law. If additional permissions

|

| 338 |

+

apply only to part of the Program, that part may be used separately

|

| 339 |

+

under those permissions, but the entire Program remains governed by

|

| 340 |

+

this License without regard to the additional permissions.

|

| 341 |

+

|

| 342 |

+

When you convey a copy of a covered work, you may at your option

|

| 343 |

+

remove any additional permissions from that copy, or from any part of

|

| 344 |

+

it. (Additional permissions may be written to require their own

|

| 345 |

+

removal in certain cases when you modify the work.) You may place

|

| 346 |

+

additional permissions on material, added by you to a covered work,

|

| 347 |

+

for which you have or can give appropriate copyright permission.

|

| 348 |

+

|

| 349 |

+

Notwithstanding any other provision of this License, for material you

|

| 350 |

+

add to a covered work, you may (if authorized by the copyright holders of

|

| 351 |

+

that material) supplement the terms of this License with terms:

|

| 352 |

+

|

| 353 |

+

a) Disclaiming warranty or limiting liability differently from the

|

| 354 |

+

terms of sections 15 and 16 of this License; or

|

| 355 |

+

|

| 356 |

+

b) Requiring preservation of specified reasonable legal notices or

|

| 357 |

+

author attributions in that material or in the Appropriate Legal

|

| 358 |

+

Notices displayed by works containing it; or

|

| 359 |

+

|

| 360 |

+

c) Prohibiting misrepresentation of the origin of that material, or

|

| 361 |

+

requiring that modified versions of such material be marked in

|

| 362 |

+

reasonable ways as different from the original version; or

|

| 363 |

+

|

| 364 |

+

d) Limiting the use for publicity purposes of names of licensors or

|

| 365 |

+

authors of the material; or

|

| 366 |

+

|

| 367 |

+

e) Declining to grant rights under trademark law for use of some

|

| 368 |

+

trade names, trademarks, or service marks; or

|

| 369 |

+

|

| 370 |

+

f) Requiring indemnification of licensors and authors of that

|

| 371 |

+

material by anyone who conveys the material (or modified versions of

|

| 372 |

+

it) with contractual assumptions of liability to the recipient, for

|

| 373 |

+

any liability that these contractual assumptions directly impose on

|

| 374 |

+

those licensors and authors.

|

| 375 |

+

|

| 376 |

+

All other non-permissive additional terms are considered "further

|

| 377 |

+

restrictions" within the meaning of section 10. If the Program as you

|

| 378 |

+

received it, or any part of it, contains a notice stating that it is

|

| 379 |

+

governed by this License along with a term that is a further

|

| 380 |

+

restriction, you may remove that term. If a license document contains

|

| 381 |

+

a further restriction but permits relicensing or conveying under this

|

| 382 |

+

License, you may add to a covered work material governed by the terms

|

| 383 |

+

of that license document, provided that the further restriction does

|

| 384 |

+

not survive such relicensing or conveying.

|

| 385 |

+

|

| 386 |

+

If you add terms to a covered work in accord with this section, you

|

| 387 |

+

must place, in the relevant source files, a statement of the

|

| 388 |

+

additional terms that apply to those files, or a notice indicating

|

| 389 |

+

where to find the applicable terms.

|

| 390 |

+

|

| 391 |

+

Additional terms, permissive or non-permissive, may be stated in the

|

| 392 |

+

form of a separately written license, or stated as exceptions;

|

| 393 |

+

the above requirements apply either way.

|

| 394 |

+

|

| 395 |

+

8. Termination.

|

| 396 |

+

|

| 397 |

+

You may not propagate or modify a covered work except as expressly

|

| 398 |

+

provided under this License. Any attempt otherwise to propagate or

|

| 399 |

+

modify it is void, and will automatically terminate your rights under

|

| 400 |

+

this License (including any patent licenses granted under the third

|

| 401 |

+

paragraph of section 11).

|

| 402 |

+

|

| 403 |

+

However, if you cease all violation of this License, then your

|

| 404 |

+

license from a particular copyright holder is reinstated (a)

|

| 405 |

+

provisionally, unless and until the copyright holder explicitly and

|

| 406 |