Upload 15 files

- .gitattributes +2 -0

- app.py +45 -12

- australia.jpg +0 -0

- biden.jpg +0 -0

- elon.jpg +0 -0

- horns.jpg +0 -0

- man.png +0 -0

- nun.jpg +0 -0

- painting.png +3 -0

- pentagon.jpg +0 -0

- pollock.jpg +0 -0

- radcliffe.jpg +0 -0

- requirements.txt +2 -4

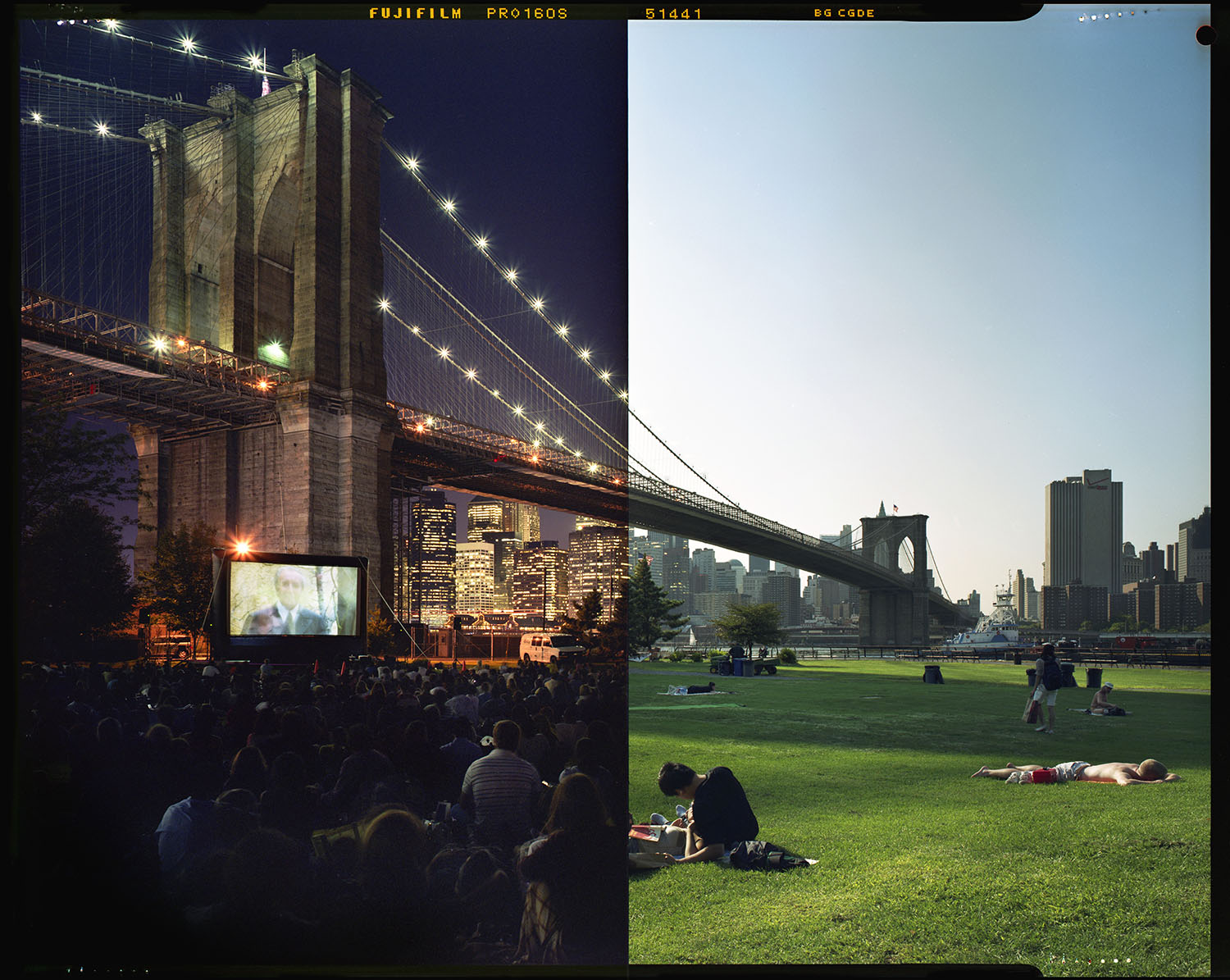

- split.jpg +0 -0

- waves.png +3 -0

- yeti.jpeg +0 -0

.gitattributes

CHANGED

    @@ -33,3 +33,5 @@ saved_model/**/* filter=lfs diff=lfs merge=lfs -text
     *.zip filter=lfs diff=lfs merge=lfs -text
     *.zst filter=lfs diff=lfs merge=lfs -text
     *tfevents* filter=lfs diff=lfs merge=lfs -text
    +painting.png filter=lfs diff=lfs merge=lfs -text
    +waves.png filter=lfs diff=lfs merge=lfs -text
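The two new `.gitattributes` entries name individual files rather than glob patterns. As a rough illustration of which added images they cover, here is a minimal sketch that matches the file names from this commit against the patterns visible in the hunk. It assumes simple basename matching with `fnmatch`, which only approximates Git's own pattern rules, and it sees only the patterns shown in this hunk, not the earlier lines of `.gitattributes`. The names `LFS_PATTERNS`, `ADDED_IMAGES`, and `lfs_tracked` are hypothetical.

```python
from fnmatch import fnmatchcase

# Patterns visible in the .gitattributes hunk (lines 33-37 of the new file).
# Assumption: earlier lines of .gitattributes are not shown and are ignored here.
LFS_PATTERNS = ["*.zip", "*.zst", "*tfevents*", "painting.png", "waves.png"]

# Image files added in this commit, taken from the file summary.
ADDED_IMAGES = [
    "australia.jpg", "biden.jpg", "elon.jpg", "horns.jpg", "man.png",
    "nun.jpg", "painting.png", "pentagon.jpg", "pollock.jpg",
    "radcliffe.jpg", "split.jpg", "waves.png", "yeti.jpeg",
]


def lfs_tracked(names, patterns):
    """Return the names matched by at least one pattern (basename match only)."""
    return [n for n in names if any(fnmatchcase(n, p) for p in patterns)]


tracked = lfs_tracked(ADDED_IMAGES, LFS_PATTERNS)
print(tracked)  # ['painting.png', 'waves.png']
```

Under these assumptions only `painting.png` and `waves.png` match, which agrees with the file summary: they are the only images listed as `+3 -0`, the size of a three-line Git LFS pointer file, while the other images show `+0 -0`.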

app.py

CHANGED

    @@ -1,20 +1,53 @@
     import gradio as gr
    +from transformers import AutoProcessor, BlipForConditionalGeneration
     
    -import
    -
    -from transformers import BlipProcessor, BlipForConditionalGeneration
    +# from transformers import AutoProcessor, AutoTokenizer, AutoImageProcessor, AutoModelForCausalLM, BlipForConditionalGeneration, Blip2ForConditionalGeneration, VisionEncoderDecoderModel
    +import torch
     
    -
    -
    +blip_processor_large = AutoProcessor.from_pretrained("umm-maybe/image-generator-identifier")
    +blip_model_large = BlipForConditionalGeneration.from_pretrained("umm-maybe/image-generator-identifier")
     
    -
    -    inputs = processor(raw_image, return_tensors="pt")
    -    out = model.generate(**inputs)
    -    return processor.decode(out[0], skip_special_tokens=True)
    +device = "cuda" if torch.cuda.is_available() else "cpu"
     
    +blip_model_large.to(device)
    +
    +def generate_caption(processor, model, image, tokenizer=None, use_float_16=False):
    +    inputs = processor(images=image, return_tensors="pt").to(device)
    +
    +    if use_float_16:
    +        inputs = inputs.to(torch.float16)
    +
    +    generated_ids = model.generate(pixel_values=inputs.pixel_values, max_length=50)
    +
    +    if tokenizer is not None:
    +        generated_caption = tokenizer.batch_decode(generated_ids, skip_special_tokens=True)[0]
    +    else:
    +        generated_caption = processor.batch_decode(generated_ids, skip_special_tokens=True)[0]
    +
    +    return generated_caption
    +
    +
    +def generate_captions(image):
    +
    +    caption_blip_large = generate_caption(blip_processor_large, blip_model_large, image)
    +
    +    return caption_blip_large
    +
    +
    +
    +examples = [["australia.jpg"], ["biden.png"], ["elon.jpg"], ["horns.jpg"], ["man.jpg"], ["nun.jpg"], ["painting.jpg"], ["pentagon.jpg"], ["pollock.jpg"], ["radcliffe.jpg"], ["split.jpg"], ["waves.jpg"], ["yeti.jpg"]]
     outputs = [
    -gr.outputs.Textbox(label="Caption
    +    gr.outputs.Textbox(label="Caption including detected generator (if applicable)"),
     ]
     
    -
    -
    +title = "Generator Identification via Image Captioning"
    +description = "Gradio Demo to illustrate the use of a fine-tuned BLIP image captioning to identify synthetic images. To use it, simply upload your image and click 'submit', or click one of the examples to load them."
    +
    +interface = gr.Interface(fn=generate_captions,
    +                         inputs=gr.inputs.Image(type="pil"),
    +                         outputs=outputs,
    +                         examples=examples,
    +                         title=title,
    +                         description=description,
    +                         enable_queue=True)
    +interface.launch()
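Several entries in the new `examples` list use a different extension from the file actually added in this commit (for example `biden.png` in the list versus `biden.jpg` in the file summary). The following is a minimal sketch of a check that could run before the interface is built, assuming the file summary of this commit is the complete set of available images. The names `UPLOADED`, `EXAMPLES`, `missing_examples`, and `suggest_fixes` are hypothetical and not part of the Space.

```python
from pathlib import PurePosixPath

# Image files added in this commit, taken from the file summary.
UPLOADED = {
    "australia.jpg", "biden.jpg", "elon.jpg", "horns.jpg", "man.png",
    "nun.jpg", "painting.png", "pentagon.jpg", "pollock.jpg",
    "radcliffe.jpg", "split.jpg", "waves.png", "yeti.jpeg",
}

# The `examples` value from the new app.py, copied verbatim.
EXAMPLES = [["australia.jpg"], ["biden.png"], ["elon.jpg"], ["horns.jpg"],
            ["man.jpg"], ["nun.jpg"], ["painting.jpg"], ["pentagon.jpg"],
            ["pollock.jpg"], ["radcliffe.jpg"], ["split.jpg"], ["waves.jpg"],
            ["yeti.jpg"]]


def missing_examples(examples, available):
    """Return example paths that have no exact match among the available files."""
    return [row[0] for row in examples if row[0] not in available]


def suggest_fixes(missing, available):
    """Map each missing example to an available file with the same stem, if any."""
    by_stem = {PurePosixPath(name).stem: name for name in available}
    return {name: by_stem.get(PurePosixPath(name).stem) for name in missing}


missing = missing_examples(EXAMPLES, UPLOADED)
fixes = suggest_fixes(missing, UPLOADED)
for wrong, right in fixes.items():
    print(f"{wrong} -> {right}")
```

With the names above, the check flags `biden.png`, `man.jpg`, `painting.jpg`, `waves.jpg`, and `yeti.jpg`, and each has a same-stem counterpart among the added files. Whether these mismatches affect the running Space is not established by this diff.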

australia.jpg

ADDED

biden.jpg

ADDED

elon.jpg

ADDED

horns.jpg

ADDED

man.png

ADDED

nun.jpg

ADDED

painting.png

ADDED (Git LFS)

pentagon.jpg

ADDED

pollock.jpg

ADDED

radcliffe.jpg

ADDED

requirements.txt

CHANGED

    @@ -1,5 +1,3 @@
    +git+https://github.com/huggingface/transformers.git@main
     torch
    -
    -requests
    -pillow
    -transformers
    +torchvision

split.jpg

ADDED

waves.png

ADDED (Git LFS)

yeti.jpeg

ADDED