initial yolov8to

This view is limited to 50 files because it contains too many changes.

- yolov8-to/CITATION.cff +20 -0

- yolov8-to/CONTRIBUTING.md +115 -0

- yolov8-to/LICENSE +661 -0

- yolov8-to/MANIFEST.in +8 -0

- yolov8-to/README.md +271 -0

- yolov8-to/README.zh-CN.md +269 -0

- yolov8-to/docker/Dockerfile +83 -0

- yolov8-to/docker/Dockerfile-arm64 +39 -0

- yolov8-to/docker/Dockerfile-cpu +49 -0

- yolov8-to/docker/Dockerfile-jetson +46 -0

- yolov8-to/docker/Dockerfile-python +49 -0

- yolov8-to/docker/Dockerfile-runner +37 -0

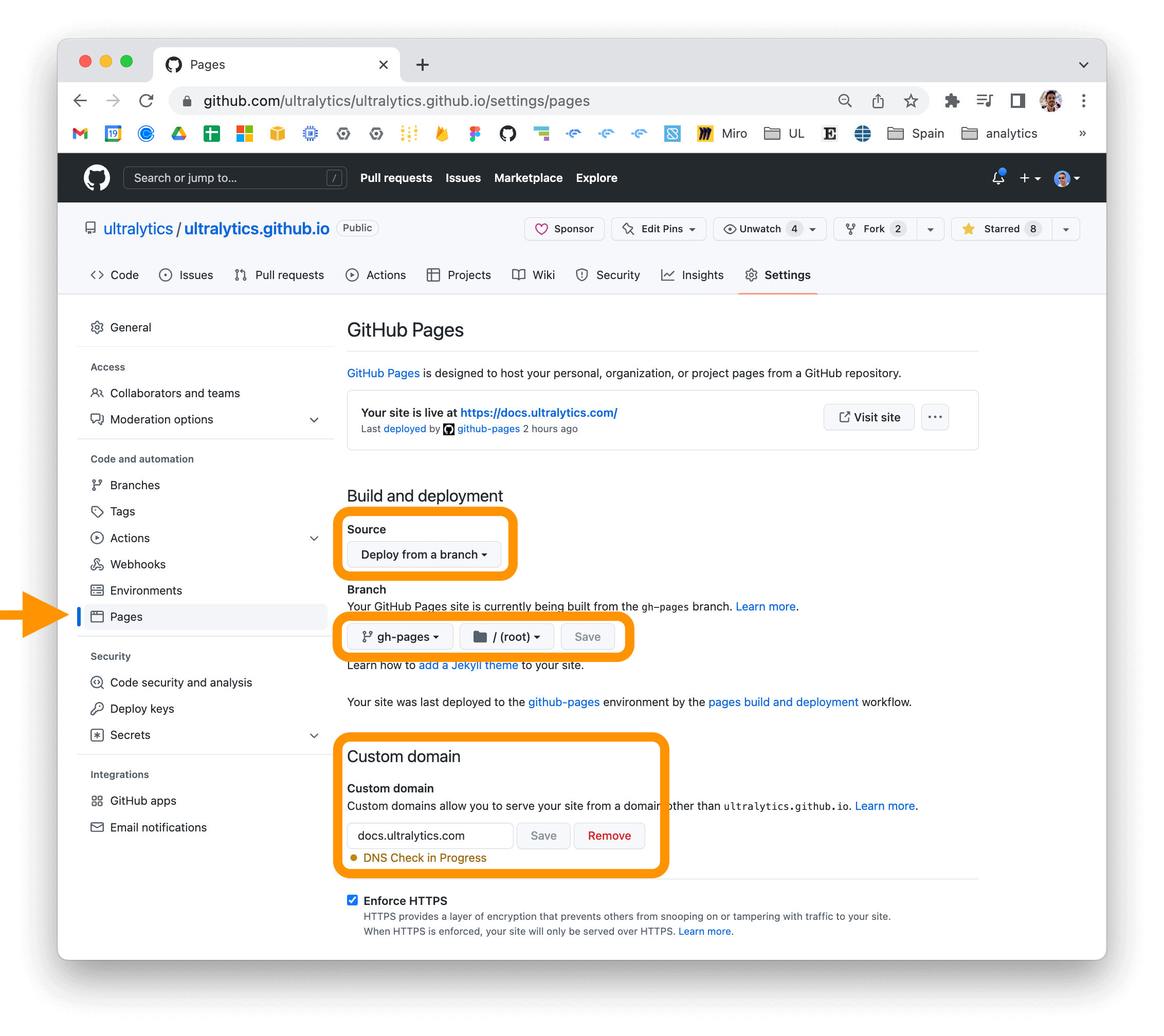

- yolov8-to/docs/CNAME +1 -0

- yolov8-to/docs/README.md +90 -0

- yolov8-to/docs/SECURITY.md +26 -0

- yolov8-to/docs/assets/favicon.ico +0 -0

- yolov8-to/docs/build_reference.py +126 -0

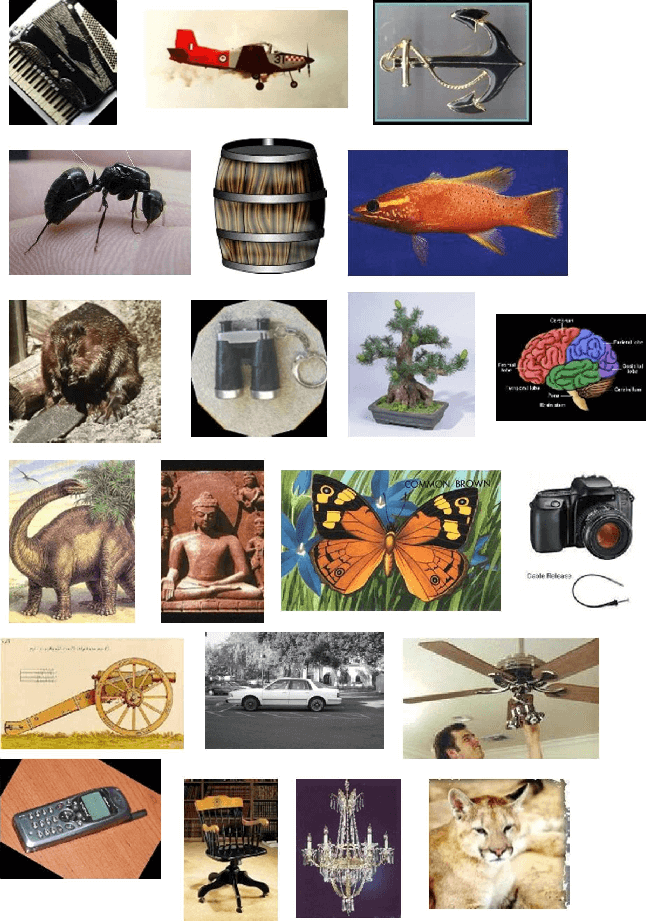

- yolov8-to/docs/datasets/classify/caltech101.md +81 -0

- yolov8-to/docs/datasets/classify/caltech256.md +78 -0

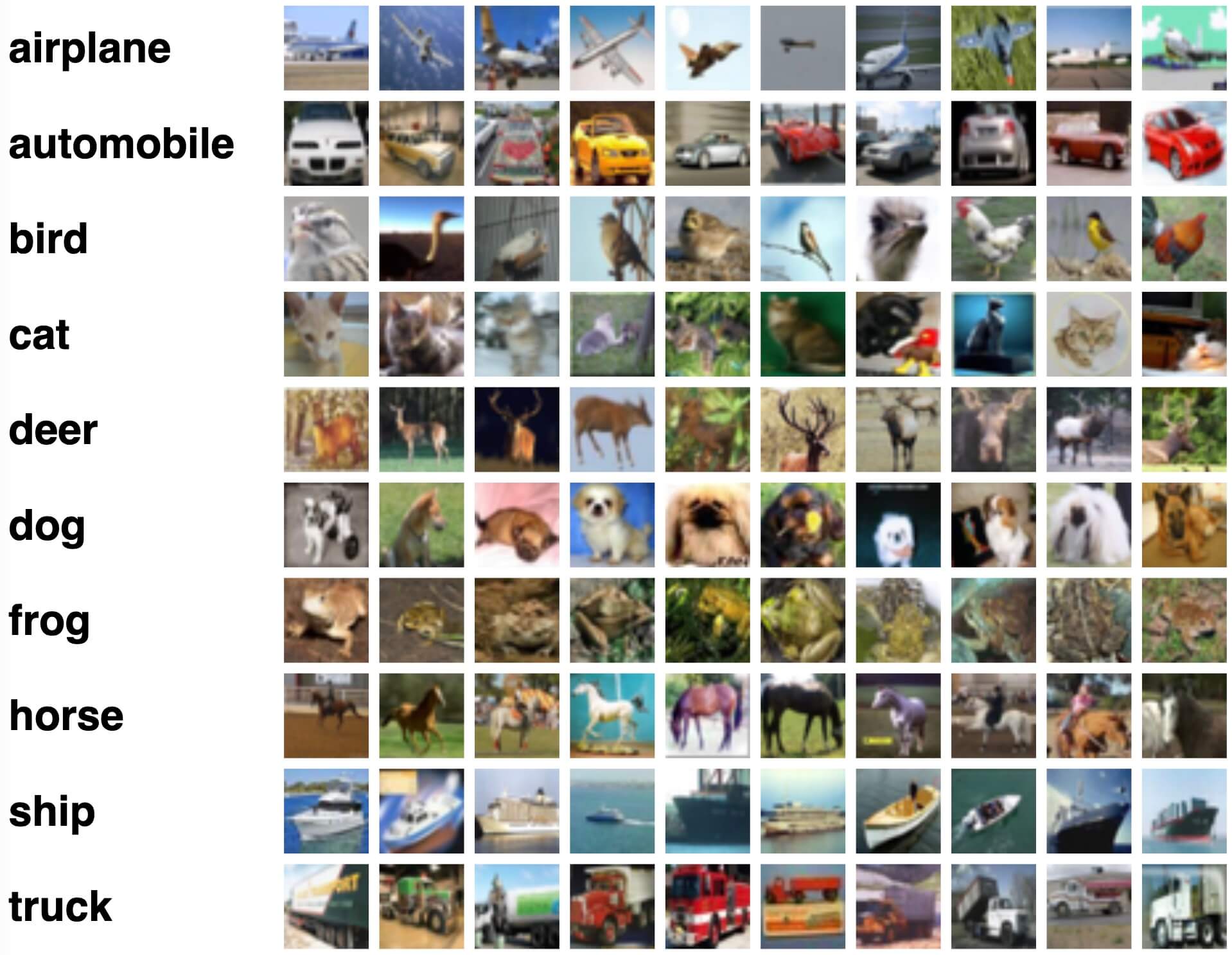

- yolov8-to/docs/datasets/classify/cifar10.md +80 -0

- yolov8-to/docs/datasets/classify/cifar100.md +80 -0

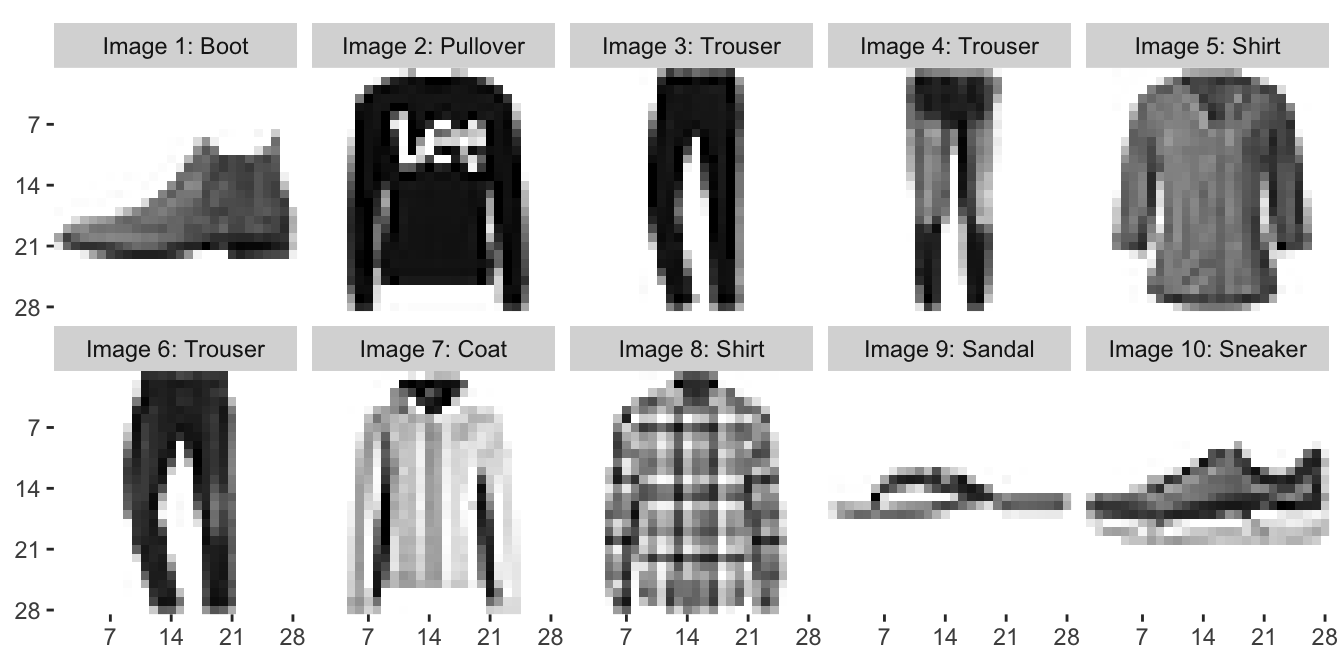

- yolov8-to/docs/datasets/classify/fashion-mnist.md +79 -0

- yolov8-to/docs/datasets/classify/imagenet.md +83 -0

- yolov8-to/docs/datasets/classify/imagenet10.md +78 -0

- yolov8-to/docs/datasets/classify/imagenette.md +113 -0

- yolov8-to/docs/datasets/classify/imagewoof.md +84 -0

- yolov8-to/docs/datasets/classify/index.md +120 -0

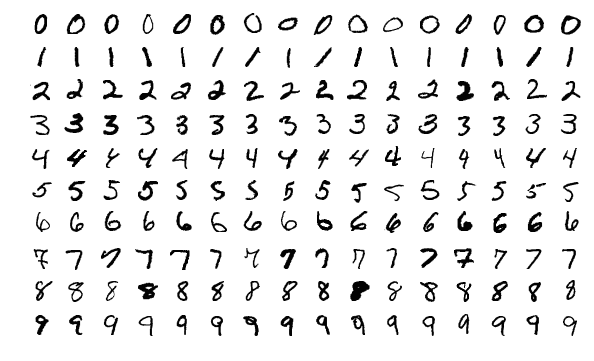

- yolov8-to/docs/datasets/classify/mnist.md +86 -0

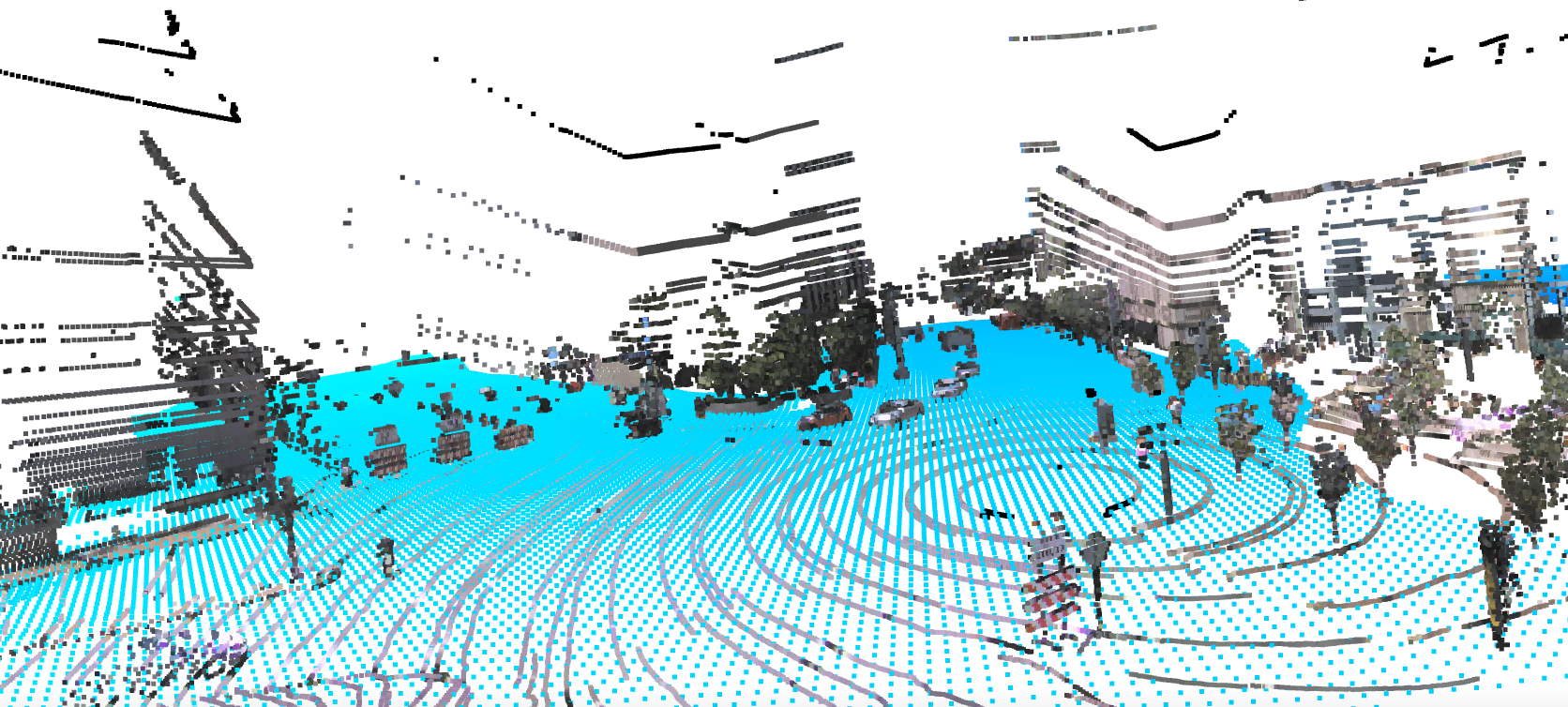

- yolov8-to/docs/datasets/detect/argoverse.md +97 -0

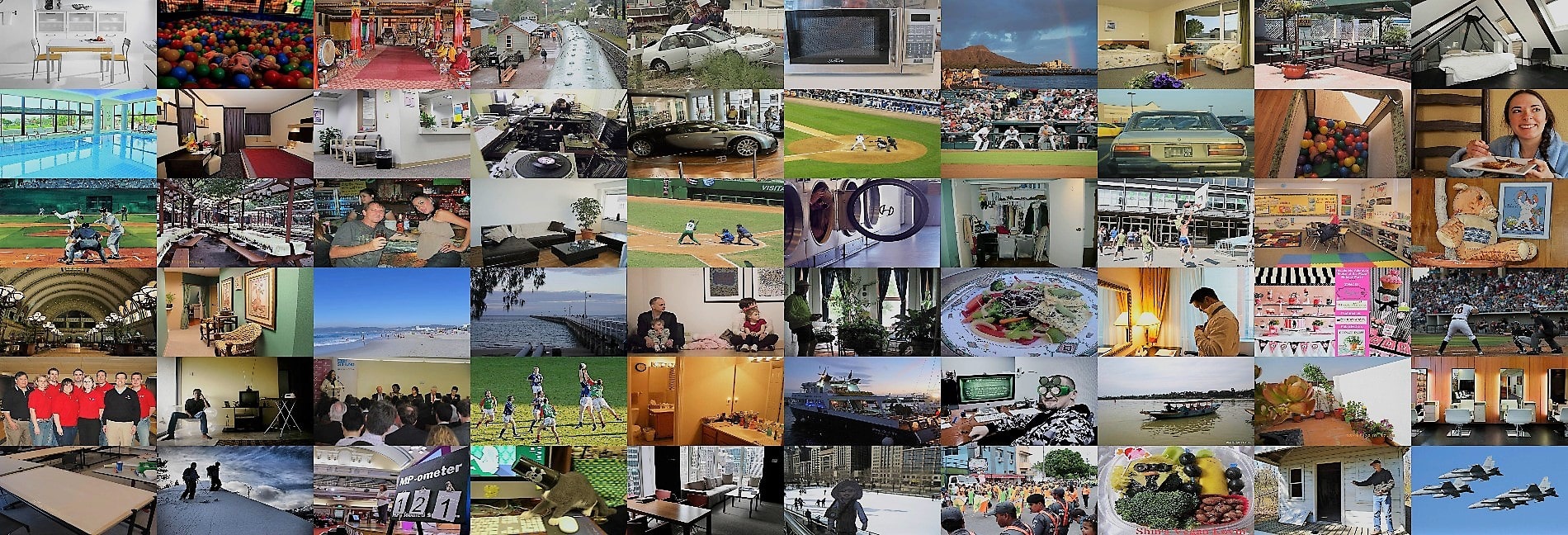

- yolov8-to/docs/datasets/detect/coco.md +94 -0

- yolov8-to/docs/datasets/detect/coco8.md +84 -0

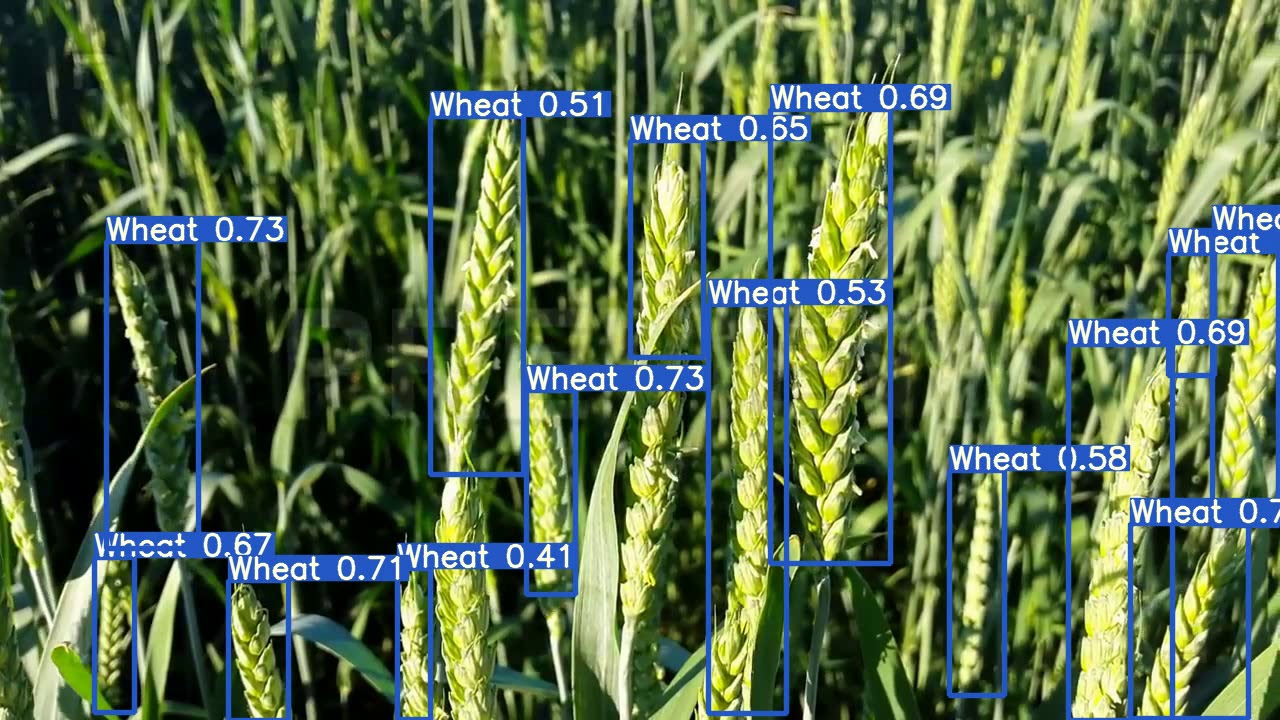

- yolov8-to/docs/datasets/detect/globalwheat2020.md +91 -0

- yolov8-to/docs/datasets/detect/index.md +108 -0

- yolov8-to/docs/datasets/detect/objects365.md +92 -0

- yolov8-to/docs/datasets/detect/open-images-v7.md +110 -0

- yolov8-to/docs/datasets/detect/sku-110k.md +93 -0

- yolov8-to/docs/datasets/detect/visdrone.md +92 -0

- yolov8-to/docs/datasets/detect/voc.md +95 -0

- yolov8-to/docs/datasets/detect/xview.md +97 -0

- yolov8-to/docs/datasets/index.md +66 -0

- yolov8-to/docs/datasets/obb/dota-v2.md +129 -0

- yolov8-to/docs/datasets/obb/index.md +84 -0

- yolov8-to/docs/datasets/pose/coco.md +95 -0

- yolov8-to/docs/datasets/pose/coco8-pose.md +84 -0

- yolov8-to/docs/datasets/pose/index.md +130 -0

- yolov8-to/docs/datasets/segment/coco.md +94 -0

- yolov8-to/docs/datasets/segment/coco8-seg.md +84 -0

- yolov8-to/docs/datasets/segment/index.md +148 -0

- yolov8-to/docs/datasets/track/index.md +30 -0

- yolov8-to/docs/guides/hyperparameter-tuning.md +96 -0

yolov8-to/CITATION.cff

ADDED

@@ -0,0 +1,20 @@
+cff-version: 1.2.0
+preferred-citation:
+  type: software
+  message: If you use this software, please cite it as below.
+  authors:
+  - family-names: Jocher
+    given-names: Glenn
+    orcid: "https://orcid.org/0000-0001-5950-6979"
+  - family-names: Chaurasia
+    given-names: Ayush
+    orcid: "https://orcid.org/0000-0002-7603-6750"
+  - family-names: Qiu
+    given-names: Jing
+    orcid: "https://orcid.org/0000-0003-3783-7069"
+  title: "YOLO by Ultralytics"
+  version: 8.0.0
+  # doi: 10.5281/zenodo.3908559 # TODO
+  date-released: 2023-1-10
+  license: AGPL-3.0
+  url: "https://github.com/ultralytics/ultralytics"
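As a rough illustration of how the `preferred-citation` fields in the CITATION.cff diff above might be consumed, the following sketch turns them into a one-line plain-text citation. The `CFF` dictionary is hand-copied from the diff (in practice it would presumably be loaded with a YAML parser), and `format_citation` and its output layout are hypothetical names chosen for this example, not part of any Ultralytics or CFF tooling.

```python
# Fields hand-copied from the CITATION.cff diff; loading them from the file
# with a YAML parser is assumed and not shown here.
CFF = {
    "cff-version": "1.2.0",
    "preferred-citation": {
        "type": "software",
        "authors": [
            {"family-names": "Jocher", "given-names": "Glenn"},
            {"family-names": "Chaurasia", "given-names": "Ayush"},
            {"family-names": "Qiu", "given-names": "Jing"},
        ],
        "title": "YOLO by Ultralytics",
        "version": "8.0.0",
        "date-released": "2023-1-10",
        "license": "AGPL-3.0",
        "url": "https://github.com/ultralytics/ultralytics",
    },
}


def format_citation(cff: dict) -> str:
    """Builds a one-line plain-text citation (hypothetical layout)."""
    ref = cff["preferred-citation"]
    # "Family, G." for each author, joined with commas.
    authors = ", ".join(
        f"{a['family-names']}, {a['given-names'][0]}." for a in ref["authors"]
    )
    # The release date is stored as year-month-day; keep only the year.
    year = ref["date-released"].split("-")[0]
    return (
        f"{authors} ({year}). {ref['title']} (Version {ref['version']}) "
        f"[{ref['type']}]. {ref['url']}"
    )


print(format_citation(CFF))
```

Keeping the formatter separate from the data means the same function would work on a dictionary parsed from the real file, provided it carries the same keys.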

yolov8-to/CONTRIBUTING.md

ADDED

@@ -0,0 +1,115 @@
+## Contributing to YOLOv8 🚀
+
+We love your input! We want to make contributing to YOLOv8 as easy and transparent as possible, whether it's:
+
+- Reporting a bug
+- Discussing the current state of the code
+- Submitting a fix
+- Proposing a new feature
+- Becoming a maintainer
+
+YOLOv8 works so well due to our combined community effort, and for every small improvement you contribute you will be
+helping push the frontiers of what's possible in AI 😃!
+
+## Submitting a Pull Request (PR) 🛠️
+
+Submitting a PR is easy! This example shows how to submit a PR for updating `requirements.txt` in 4 steps:
+
+### 1. Select File to Update
+
+Select `requirements.txt` to update by clicking on it in GitHub.
+
+<p align="center"><img width="800" alt="PR_step1" src="https://user-images.githubusercontent.com/26833433/122260847-08be2600-ced4-11eb-828b-8287ace4136c.png"></p>
+
+### 2. Click 'Edit this file'
+
+Button is in top-right corner.
+
+<p align="center"><img width="800" alt="PR_step2" src="https://user-images.githubusercontent.com/26833433/122260844-06f46280-ced4-11eb-9eec-b8a24be519ca.png"></p>
+
+### 3. Make Changes
+
+Change `matplotlib` version from `3.2.2` to `3.3`.
+
+<p align="center"><img width="800" alt="PR_step3" src="https://user-images.githubusercontent.com/26833433/122260853-0a87e980-ced4-11eb-9fd2-3650fb6e0842.png"></p>
+
+### 4. Preview Changes and Submit PR
+
+Click on the **Preview changes** tab to verify your updates. At the bottom of the screen select 'Create a **new branch**
+for this commit', assign your branch a descriptive name such as `fix/matplotlib_version` and click the green **Propose
+changes** button. All done, your PR is now submitted to YOLOv8 for review and approval 😃!
+
+<p align="center"><img width="800" alt="PR_step4" src="https://user-images.githubusercontent.com/26833433/122260856-0b208000-ced4-11eb-8e8e-77b6151cbcc3.png"></p>
+
+### PR recommendations
+
+To allow your work to be integrated as seamlessly as possible, we advise you to:
+
+- ✅ Verify your PR is **up-to-date** with `ultralytics/ultralytics` `main` branch. If your PR is behind you can update
+  your code by clicking the 'Update branch' button or by running `git pull` and `git merge main` locally.
+
+<p align="center"><img width="751" alt="Screenshot 2022-08-29 at 22 47 15" src="https://user-images.githubusercontent.com/26833433/187295893-50ed9f44-b2c9-4138-a614-de69bd1753d7.png"></p>
+
+- ✅ Verify all YOLOv8 Continuous Integration (CI) **checks are passing**.
+
+<p align="center"><img width="751" alt="Screenshot 2022-08-29 at 22 47 03" src="https://user-images.githubusercontent.com/26833433/187296922-545c5498-f64a-4d8c-8300-5fa764360da6.png"></p>
+
+- ✅ Reduce changes to the absolute **minimum** required for your bug fix or feature addition. _"It is not daily increase
+  but daily decrease, hack away the unessential. The closer to the source, the less wastage there is."_ — Bruce Lee
+
+### Docstrings
+
+Not all functions or classes require docstrings but when they do, we
+follow [google-style docstrings format](https://google.github.io/styleguide/pyguide.html#38-comments-and-docstrings).
+Here is an example:
+
+```python
+"""
+What the function does. Performs NMS on given detection predictions.
+
+Args:
+    arg1: The description of the 1st argument
+    arg2: The description of the 2nd argument
+
+Returns:
+    What the function returns. Empty if nothing is returned.
+
+Raises:
+    Exception Class: When and why this exception can be raised by the function.
+"""
+```
+
+## Submitting a Bug Report 🐛
+
+If you spot a problem with YOLOv8 please submit a Bug Report!
+
+For us to start investigating a possible problem we need to be able to reproduce it ourselves first. We've created a few
+short guidelines below to help users provide what we need in order to get started.
+
+When asking a question, people will be better able to provide help if you provide **code** that they can easily
+understand and use to **reproduce** the problem. This is referred to by community members as creating
+a [minimum reproducible example](https://docs.ultralytics.com/help/minimum_reproducible_example/). Your code that reproduces
+the problem should be:
+
+- ✅ **Minimal** – Use as little code as possible that still produces the same problem
+- ✅ **Complete** – Provide **all** parts someone else needs to reproduce your problem in the question itself
+- ✅ **Reproducible** – Test the code you're about to provide to make sure it reproduces the problem
+
+In addition to the above requirements, for [Ultralytics](https://ultralytics.com/) to provide assistance your code
+should be:
+
+- ✅ **Current** – Verify that your code is up-to-date with current
+  GitHub [main](https://github.com/ultralytics/ultralytics/tree/main) branch, and if necessary `git pull` or `git clone`
+  a new copy to ensure your problem has not already been resolved by previous commits.
+- ✅ **Unmodified** – Your problem must be reproducible without any modifications to the codebase in this
+  repository. [Ultralytics](https://ultralytics.com/) does not provide support for custom code ⚠️.
+
+If you believe your problem meets all of the above criteria, please close this issue and raise a new one using the 🐛
+**Bug Report** [template](https://github.com/ultralytics/ultralytics/issues/new/choose) and providing
+a [minimum reproducible example](https://docs.ultralytics.com/help/minimum_reproducible_example/) to help us better
+understand and diagnose your problem.
+
+## License
+
+By contributing, you agree that your contributions will be licensed under
+the [AGPL-3.0 license](https://choosealicense.com/licenses/agpl-3.0/)
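The docstring template in the CONTRIBUTING.md diff above is abstract, so a concrete case may help. The sketch below applies the same `Args` / `Returns` / `Raises` layout to a small greedy NMS routine in pure Python. The functions `box_iou` and `simple_nms`, their parameters, and the default threshold are hypothetical and written only for this illustration; this is not the Ultralytics implementation.

```python
from typing import List, Sequence


def box_iou(a: Sequence[float], b: Sequence[float]) -> float:
    """
    Computes the intersection-over-union of two axis-aligned boxes.

    Args:
        a: First box as (x1, y1, x2, y2).
        b: Second box as (x1, y1, x2, y2).

    Returns:
        The IoU in the range [0, 1], or 0.0 if the union has no area.
    """
    inter_w = max(0.0, min(a[2], b[2]) - max(a[0], b[0]))
    inter_h = max(0.0, min(a[3], b[3]) - max(a[1], b[1]))
    inter = inter_w * inter_h
    union = (a[2] - a[0]) * (a[3] - a[1]) + (b[2] - b[0]) * (b[3] - b[1]) - inter
    return inter / union if union > 0 else 0.0


def simple_nms(
    boxes: Sequence[Sequence[float]],
    scores: Sequence[float],
    iou_threshold: float = 0.5,
) -> List[int]:
    """
    Performs greedy NMS on given detection predictions.

    Args:
        boxes: Boxes as (x1, y1, x2, y2) tuples.
        scores: One confidence score per box.
        iou_threshold: Boxes overlapping a kept box by more than this are dropped.

    Returns:
        Indices of the kept boxes, ordered from highest to lowest score.

    Raises:
        ValueError: If boxes and scores differ in length.
    """
    if len(boxes) != len(scores):
        raise ValueError("boxes and scores must have the same length")
    # Visit boxes from highest to lowest score.
    order = sorted(range(len(scores)), key=lambda i: scores[i], reverse=True)
    keep: List[int] = []
    for i in order:
        # Keep a box only if it does not overlap any already-kept box too much.
        if all(box_iou(boxes[i], boxes[j]) <= iou_threshold for j in keep):
            keep.append(i)
    return keep
```

For example, calling `simple_nms([(0, 0, 10, 10), (1, 1, 11, 11), (20, 20, 30, 30)], [0.9, 0.8, 0.7])` keeps the first and third boxes, since the second overlaps the first with an IoU of about 0.68.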

yolov8-to/LICENSE

ADDED

@@ -0,0 +1,661 @@
+GNU AFFERO GENERAL PUBLIC LICENSE
+Version 3, 19 November 2007
+
+Copyright (C) 2007 Free Software Foundation, Inc. <https://fsf.org/>
+Everyone is permitted to copy and distribute verbatim copies
+of this license document, but changing it is not allowed.
+
+Preamble
+
+The GNU Affero General Public License is a free, copyleft license for
+software and other kinds of works, specifically designed to ensure
+cooperation with the community in the case of network server software.
+
+The licenses for most software and other practical works are designed
+to take away your freedom to share and change the works. By contrast,
+our General Public Licenses are intended to guarantee your freedom to
+share and change all versions of a program--to make sure it remains free
+software for all its users.
+
+When we speak of free software, we are referring to freedom, not
+price. Our General Public Licenses are designed to make sure that you
+have the freedom to distribute copies of free software (and charge for
+them if you wish), that you receive source code or can get it if you
+want it, that you can change the software or use pieces of it in new
+free programs, and that you know you can do these things.
+
+Developers that use our General Public Licenses protect your rights
+with two steps: (1) assert copyright on the software, and (2) offer
+you this License which gives you legal permission to copy, distribute
+and/or modify the software.
+
+A secondary benefit of defending all users' freedom is that
+improvements made in alternate versions of the program, if they
+receive widespread use, become available for other developers to
+incorporate. Many developers of free software are heartened and
+encouraged by the resulting cooperation. However, in the case of
+software used on network servers, this result may fail to come about.
+The GNU General Public License permits making a modified version and
+letting the public access it on a server without ever releasing its
+source code to the public.
+
+The GNU Affero General Public License is designed specifically to
+ensure that, in such cases, the modified source code becomes available
+to the community. It requires the operator of a network server to
+provide the source code of the modified version running there to the
+users of that server. Therefore, public use of a modified version, on
+a publicly accessible server, gives the public access to the source
+code of the modified version.
+
+An older license, called the Affero General Public License and
+published by Affero, was designed to accomplish similar goals. This is
+a different license, not a version of the Affero GPL, but Affero has
+released a new version of the Affero GPL which permits relicensing under
+this license.
+
+The precise terms and conditions for copying, distribution and
+modification follow.
+
+TERMS AND CONDITIONS
+
+0. Definitions.
+
+"This License" refers to version 3 of the GNU Affero General Public License.
+
+"Copyright" also means copyright-like laws that apply to other kinds of
+works, such as semiconductor masks.
+
+"The Program" refers to any copyrightable work licensed under this
+License. Each licensee is addressed as "you". "Licensees" and
+"recipients" may be individuals or organizations.
+
+To "modify" a work means to copy from or adapt all or part of the work
+in a fashion requiring copyright permission, other than the making of an
+exact copy. The resulting work is called a "modified version" of the
+earlier work or a work "based on" the earlier work.
+
+A "covered work" means either the unmodified Program or a work based
+on the Program.
+
+To "propagate" a work means to do anything with it that, without
+permission, would make you directly or secondarily liable for
+infringement under applicable copyright law, except executing it on a
+computer or modifying a private copy. Propagation includes copying,
+distribution (with or without modification), making available to the
+public, and in some countries other activities as well.
+
+To "convey" a work means any kind of propagation that enables other
+parties to make or receive copies. Mere interaction with a user through
+a computer network, with no transfer of a copy, is not conveying.
+
+An interactive user interface displays "Appropriate Legal Notices"
+to the extent that it includes a convenient and prominently visible
+feature that (1) displays an appropriate copyright notice, and (2)
+tells the user that there is no warranty for the work (except to the
+extent that warranties are provided), that licensees may convey the
+work under this License, and how to view a copy of this License. If
+the interface presents a list of user commands or options, such as a
+menu, a prominent item in the list meets this criterion.
+
+1. Source Code.
+
+The "source code" for a work means the preferred form of the work
+for making modifications to it. "Object code" means any non-source
+form of a work.
+
+A "Standard Interface" means an interface that either is an official
+standard defined by a recognized standards body, or, in the case of
+interfaces specified for a particular programming language, one that
+is widely used among developers working in that language.
+
+The "System Libraries" of an executable work include anything, other
+than the work as a whole, that (a) is included in the normal form of
+packaging a Major Component, but which is not part of that Major
+Component, and (b) serves only to enable use of the work with that
+Major Component, or to implement a Standard Interface for which an
+implementation is available to the public in source code form. A
+"Major Component", in this context, means a major essential component
+(kernel, window system, and so on) of the specific operating system
+(if any) on which the executable work runs, or a compiler used to
+produce the work, or an object code interpreter used to run it.
+
+The "Corresponding Source" for a work in object code form means all
+the source code needed to generate, install, and (for an executable
+work) run the object code and to modify the work, including scripts to
+control those activities. However, it does not include the work's
+System Libraries, or general-purpose tools or generally available free
+programs which are used unmodified in performing those activities but
+which are not part of the work. For example, Corresponding Source
+includes interface definition files associated with source files for
+the work, and the source code for shared libraries and dynamically
+linked subprograms that the work is specifically designed to require,
+such as by intimate data communication or control flow between those
+subprograms and other parts of the work.
+
+The Corresponding Source need not include anything that users
+can regenerate automatically from other parts of the Corresponding
+Source.
+
+The Corresponding Source for a work in source code form is that
+same work.
+
+2. Basic Permissions.
+
+All rights granted under this License are granted for the term of
+copyright on the Program, and are irrevocable provided the stated
+conditions are met. This License explicitly affirms your unlimited
+permission to run the unmodified Program. The output from running a
+covered work is covered by this License only if the output, given its
+content, constitutes a covered work. This License acknowledges your
+rights of fair use or other equivalent, as provided by copyright law.
+
+You may make, run and propagate covered works that you do not
+convey, without conditions so long as your license otherwise remains
+in force. You may convey covered works to others for the sole purpose
+of having them make modifications exclusively for you, or provide you
+with facilities for running those works, provided that you comply with
+the terms of this License in conveying all material for which you do
+not control copyright. Those thus making or running the covered works
+for you must do so exclusively on your behalf, under your direction
+and control, on terms that prohibit them from making any copies of
+your copyrighted material outside their relationship with you.
+
+Conveying under any other circumstances is permitted solely under
+the conditions stated below. Sublicensing is not allowed; section 10
+makes it unnecessary.
+
+3. Protecting Users' Legal Rights From Anti-Circumvention Law.
+
+No covered work shall be deemed part of an effective technological
+measure under any applicable law fulfilling obligations under article
+11 of the WIPO copyright treaty adopted on 20 December 1996, or
+similar laws prohibiting or restricting circumvention of such
+measures.
+
+When you convey a covered work, you waive any legal power to forbid
+circumvention of technological measures to the extent such circumvention
+is effected by exercising rights under this License with respect to
+the covered work, and you disclaim any intention to limit operation or
+modification of the work as a means of enforcing, against the work's
+users, your or third parties' legal rights to forbid circumvention of
+technological measures.
+
+4. Conveying Verbatim Copies.
+
+You may convey verbatim copies of the Program's source code as you
+receive it, in any medium, provided that you conspicuously and
+appropriately publish on each copy an appropriate copyright notice;
+keep intact all notices stating that this License and any
+non-permissive terms added in accord with section 7 apply to the code;
+keep intact all notices of the absence of any warranty; and give all
+recipients a copy of this License along with the Program.
+
+You may charge any price or no price for each copy that you convey,
+and you may offer support or warranty protection for a fee.
+
+5. Conveying Modified Source Versions.
+
+You may convey a work based on the Program, or the modifications to
+produce it from the Program, in the form of source code under the
+terms of section 4, provided that you also meet all of these conditions:
+
+a) The work must carry prominent notices stating that you modified
+it, and giving a relevant date.
+
+b) The work must carry prominent notices stating that it is
+released under this License and any conditions added under section
+7. This requirement modifies the requirement in section 4 to
+"keep intact all notices".
+
+c) You must license the entire work, as a whole, under this
+License to anyone who comes into possession of a copy. This
+License will therefore apply, along with any applicable section 7
+additional terms, to the whole of the work, and all its parts,
+regardless of how they are packaged. This License gives no
+permission to license the work in any other way, but it does not
+invalidate such permission if you have separately received it.
+
+d) If the work has interactive user interfaces, each must display
+Appropriate Legal Notices; however, if the Program has interactive
+interfaces that do not display Appropriate Legal Notices, your
+work need not make them do so.
+
+A compilation of a covered work with other separate and independent
+works, which are not by their nature extensions of the covered work,
+and which are not combined with it such as to form a larger program,
+in or on a volume of a storage or distribution medium, is called an
+"aggregate" if the compilation and its resulting copyright are not
+used to limit the access or legal rights of the compilation's users
+beyond what the individual works permit. Inclusion of a covered work
+in an aggregate does not cause this License to apply to the other
+parts of the aggregate.
+
+6. Conveying Non-Source Forms.
+
+You may convey a covered work in object code form under the terms
+of sections 4 and 5, provided that you also convey the
+machine-readable Corresponding Source under the terms of this License,
+in one of these ways:
+
+a) Convey the object code in, or embodied in, a physical product
+(including a physical distribution medium), accompanied by the
+Corresponding Source fixed on a durable physical medium
+customarily used for software interchange.
+
+b) Convey the object code in, or embodied in, a physical product
+(including a physical distribution medium), accompanied by a
+written offer, valid for at least three years and valid for as
+long as you offer spare parts or customer support for that product
+model, to give anyone who possesses the object code either (1) a
+copy of the Corresponding Source for all the software in the

|

| 251 |

+

product that is covered by this License, on a durable physical

|

| 252 |

+

medium customarily used for software interchange, for a price no

|

| 253 |

+

more than your reasonable cost of physically performing this

|

| 254 |

+

conveying of source, or (2) access to copy the

|

| 255 |

+

Corresponding Source from a network server at no charge.

|

| 256 |

+

|

| 257 |

+

c) Convey individual copies of the object code with a copy of the

|

| 258 |

+

written offer to provide the Corresponding Source. This

|

| 259 |

+

alternative is allowed only occasionally and noncommercially, and

|

| 260 |

+

only if you received the object code with such an offer, in accord

|

| 261 |

+

with subsection 6b.

|

| 262 |

+

|

| 263 |

+

d) Convey the object code by offering access from a designated

|

| 264 |

+

place (gratis or for a charge), and offer equivalent access to the

|

| 265 |

+

Corresponding Source in the same way through the same place at no

|

| 266 |

+

further charge. You need not require recipients to copy the

|

| 267 |

+

Corresponding Source along with the object code. If the place to

|

| 268 |

+

copy the object code is a network server, the Corresponding Source

|

| 269 |

+

may be on a different server (operated by you or a third party)

|

| 270 |

+

that supports equivalent copying facilities, provided you maintain

|

| 271 |

+

clear directions next to the object code saying where to find the

|

| 272 |

+

Corresponding Source. Regardless of what server hosts the

|

| 273 |

+

Corresponding Source, you remain obligated to ensure that it is

|

| 274 |

+

available for as long as needed to satisfy these requirements.

|

| 275 |

+

|

| 276 |

+

e) Convey the object code using peer-to-peer transmission, provided

|

| 277 |

+

you inform other peers where the object code and Corresponding

|

| 278 |

+

Source of the work are being offered to the general public at no

|

| 279 |

+

charge under subsection 6d.

|

| 280 |

+

|

| 281 |

+

A separable portion of the object code, whose source code is excluded

|

| 282 |

+

from the Corresponding Source as a System Library, need not be

|

| 283 |

+

included in conveying the object code work.

|

| 284 |

+

|

| 285 |

+

A "User Product" is either (1) a "consumer product", which means any

|

| 286 |

+

tangible personal property which is normally used for personal, family,

|

| 287 |

+

or household purposes, or (2) anything designed or sold for incorporation

|

| 288 |

+

into a dwelling. In determining whether a product is a consumer product,

|

| 289 |

+

doubtful cases shall be resolved in favor of coverage. For a particular

|

| 290 |

+

product received by a particular user, "normally used" refers to a

|

| 291 |

+

typical or common use of that class of product, regardless of the status

|

| 292 |

+

of the particular user or of the way in which the particular user

|

| 293 |

+

actually uses, or expects or is expected to use, the product. A product

|

| 294 |

+

is a consumer product regardless of whether the product has substantial

|

| 295 |

+

commercial, industrial or non-consumer uses, unless such uses represent

|

| 296 |

+

the only significant mode of use of the product.

|

| 297 |

+

|

| 298 |

+

"Installation Information" for a User Product means any methods,

|

| 299 |

+

procedures, authorization keys, or other information required to install

|

| 300 |

+

and execute modified versions of a covered work in that User Product from

|

| 301 |

+

a modified version of its Corresponding Source. The information must

|

| 302 |

+

suffice to ensure that the continued functioning of the modified object

|

| 303 |

+

code is in no case prevented or interfered with solely because

|

| 304 |

+

modification has been made.

|

| 305 |

+

|

| 306 |

+

If you convey an object code work under this section in, or with, or

|

| 307 |

+

specifically for use in, a User Product, and the conveying occurs as

|

| 308 |

+

part of a transaction in which the right of possession and use of the

|

| 309 |

+

User Product is transferred to the recipient in perpetuity or for a

|

| 310 |

+

fixed term (regardless of how the transaction is characterized), the

|

| 311 |

+

Corresponding Source conveyed under this section must be accompanied

|

| 312 |

+

by the Installation Information. But this requirement does not apply

|

| 313 |

+

if neither you nor any third party retains the ability to install

|

| 314 |

+

modified object code on the User Product (for example, the work has

|

| 315 |

+

been installed in ROM).

|

| 316 |

+

|

| 317 |

+

The requirement to provide Installation Information does not include a

|

| 318 |

+

requirement to continue to provide support service, warranty, or updates

|

| 319 |

+

for a work that has been modified or installed by the recipient, or for

|

| 320 |

+

the User Product in which it has been modified or installed. Access to a

|

| 321 |

+

network may be denied when the modification itself materially and

|

| 322 |

+

adversely affects the operation of the network or violates the rules and

|

| 323 |

+

protocols for communication across the network.

|

| 324 |

+

|

| 325 |

+

Corresponding Source conveyed, and Installation Information provided,

|

| 326 |

+

in accord with this section must be in a format that is publicly

|

| 327 |

+

documented (and with an implementation available to the public in

|

| 328 |

+

source code form), and must require no special password or key for

|

| 329 |

+

unpacking, reading or copying.

|

| 330 |

+

|

| 331 |

+

7. Additional Terms.

|

| 332 |

+

|

| 333 |

+

"Additional permissions" are terms that supplement the terms of this

|

| 334 |

+

License by making exceptions from one or more of its conditions.

|

| 335 |

+

Additional permissions that are applicable to the entire Program shall

|

| 336 |

+

be treated as though they were included in this License, to the extent

|

| 337 |

+

that they are valid under applicable law. If additional permissions

|

| 338 |

+

apply only to part of the Program, that part may be used separately

|

| 339 |

+

under those permissions, but the entire Program remains governed by

|

| 340 |

+

this License without regard to the additional permissions.

|

| 341 |

+

|

| 342 |

+

When you convey a copy of a covered work, you may at your option

|

| 343 |

+

remove any additional permissions from that copy, or from any part of

|

| 344 |

+

it. (Additional permissions may be written to require their own

|

| 345 |

+

removal in certain cases when you modify the work.) You may place

|

| 346 |

+

additional permissions on material, added by you to a covered work,

|

| 347 |

+

for which you have or can give appropriate copyright permission.

|

| 348 |

+

|

| 349 |

+

Notwithstanding any other provision of this License, for material you

|

| 350 |

+

add to a covered work, you may (if authorized by the copyright holders of

|

| 351 |

+

that material) supplement the terms of this License with terms:

|

| 352 |

+

|

| 353 |

+

a) Disclaiming warranty or limiting liability differently from the

|

| 354 |

+

terms of sections 15 and 16 of this License; or

|

| 355 |

+

|

| 356 |

+

b) Requiring preservation of specified reasonable legal notices or

|

| 357 |

+

author attributions in that material or in the Appropriate Legal

|

| 358 |

+

Notices displayed by works containing it; or

|

| 359 |

+

|

| 360 |

+

c) Prohibiting misrepresentation of the origin of that material, or

|

| 361 |

+

requiring that modified versions of such material be marked in

|

| 362 |

+

reasonable ways as different from the original version; or

|

| 363 |

+

|

| 364 |

+

d) Limiting the use for publicity purposes of names of licensors or

|

| 365 |

+

authors of the material; or

|

| 366 |

+

|

| 367 |

+

e) Declining to grant rights under trademark law for use of some

|

| 368 |

+

trade names, trademarks, or service marks; or

|

| 369 |

+

|

| 370 |

+

f) Requiring indemnification of licensors and authors of that

|

| 371 |

+

material by anyone who conveys the material (or modified versions of

|

| 372 |

+

it) with contractual assumptions of liability to the recipient, for

|

| 373 |

+

any liability that these contractual assumptions directly impose on

|

| 374 |

+

those licensors and authors.

|

| 375 |

+

|

| 376 |

+

All other non-permissive additional terms are considered "further

|

| 377 |

+

restrictions" within the meaning of section 10. If the Program as you

|

| 378 |

+

received it, or any part of it, contains a notice stating that it is

|

| 379 |

+

governed by this License along with a term that is a further

|

| 380 |

+

restriction, you may remove that term. If a license document contains

|

| 381 |

+

a further restriction but permits relicensing or conveying under this

|

| 382 |

+

License, you may add to a covered work material governed by the terms

|

| 383 |

+

of that license document, provided that the further restriction does

|

| 384 |

+

not survive such relicensing or conveying.

|

| 385 |

+

|

| 386 |

+

If you add terms to a covered work in accord with this section, you

|

| 387 |

+

must place, in the relevant source files, a statement of the

|

| 388 |

+

additional terms that apply to those files, or a notice indicating

|

| 389 |

+

where to find the applicable terms.

|

| 390 |

+

|

| 391 |

+

Additional terms, permissive or non-permissive, may be stated in the

|

| 392 |

+

form of a separately written license, or stated as exceptions;

|

| 393 |

+

the above requirements apply either way.

|

| 394 |

+

|

| 395 |

+

8. Termination.

|

| 396 |

+

|

| 397 |

+

You may not propagate or modify a covered work except as expressly

|

| 398 |

+

provided under this License. Any attempt otherwise to propagate or

|

| 399 |

+

modify it is void, and will automatically terminate your rights under

|

| 400 |

+

this License (including any patent licenses granted under the third

|

| 401 |

+

paragraph of section 11).

|

| 402 |

+

|

| 403 |

+

However, if you cease all violation of this License, then your

|

| 404 |

+

license from a particular copyright holder is reinstated (a)

|

| 405 |

+

provisionally, unless and until the copyright holder explicitly and

|

| 406 |

+

finally terminates your license, and (b) permanently, if the copyright

|

| 407 |

+

holder fails to notify you of the violation by some reasonable means

|

| 408 |

+

prior to 60 days after the cessation.

|

| 409 |

+

|

| 410 |

+

Moreover, your license from a particular copyright holder is

|

| 411 |

+

reinstated permanently if the copyright holder notifies you of the

|

| 412 |

+

violation by some reasonable means, this is the first time you have

|

| 413 |

+

received notice of violation of this License (for any work) from that

|

| 414 |

+

copyright holder, and you cure the violation prior to 30 days after

|

| 415 |

+

your receipt of the notice.

|

| 416 |

+

|

| 417 |

+

Termination of your rights under this section does not terminate the

|

| 418 |

+

licenses of parties who have received copies or rights from you under

|

| 419 |

+

this License. If your rights have been terminated and not permanently

|

| 420 |

+

reinstated, you do not qualify to receive new licenses for the same

|

| 421 |

+

material under section 10.

|

| 422 |

+

|

| 423 |

+

9. Acceptance Not Required for Having Copies.

|

| 424 |

+

|

| 425 |

+

You are not required to accept this License in order to receive or

|

| 426 |

+

run a copy of the Program. Ancillary propagation of a covered work

|

| 427 |

+

occurring solely as a consequence of using peer-to-peer transmission

|

| 428 |

+

to receive a copy likewise does not require acceptance. However,

|

| 429 |

+

nothing other than this License grants you permission to propagate or

|

| 430 |

+

modify any covered work. These actions infringe copyright if you do

|

| 431 |

+

not accept this License. Therefore, by modifying or propagating a

|

| 432 |

+

covered work, you indicate your acceptance of this License to do so.

|

| 433 |

+

|

| 434 |

+

10. Automatic Licensing of Downstream Recipients.

|

| 435 |

+

|

| 436 |

+

Each time you convey a covered work, the recipient automatically

|

| 437 |

+

receives a license from the original licensors, to run, modify and

|

| 438 |

+

propagate that work, subject to this License. You are not responsible

|

| 439 |

+

for enforcing compliance by third parties with this License.

|

| 440 |

+

|

| 441 |

+

An "entity transaction" is a transaction transferring control of an

|

| 442 |

+

organization, or substantially all assets of one, or subdividing an

|

| 443 |

+

organization, or merging organizations. If propagation of a covered

|

| 444 |

+

work results from an entity transaction, each party to that

|

| 445 |

+

transaction who receives a copy of the work also receives whatever

|

| 446 |

+

licenses to the work the party's predecessor in interest had or could

|

| 447 |

+

give under the previous paragraph, plus a right to possession of the

|

| 448 |

+

Corresponding Source of the work from the predecessor in interest, if

|

| 449 |

+

the predecessor has it or can get it with reasonable efforts.

|

| 450 |

+

|

| 451 |

+

You may not impose any further restrictions on the exercise of the

|

| 452 |

+

rights granted or affirmed under this License. For example, you may

|

| 453 |

+

not impose a license fee, royalty, or other charge for exercise of

|

| 454 |

+

rights granted under this License, and you may not initiate litigation

|

| 455 |

+

(including a cross-claim or counterclaim in a lawsuit) alleging that

|

| 456 |

+

any patent claim is infringed by making, using, selling, offering for

|

| 457 |

+

sale, or importing the Program or any portion of it.

|

| 458 |

+

|

| 459 |

+

11. Patents.

|

| 460 |

+

|

| 461 |

+

A "contributor" is a copyright holder who authorizes use under this

|

| 462 |

+

License of the Program or a work on which the Program is based. The

|

| 463 |

+

work thus licensed is called the contributor's "contributor version".

|

| 464 |

+

|

| 465 |

+

A contributor's "essential patent claims" are all patent claims

|

| 466 |

+

owned or controlled by the contributor, whether already acquired or

|

| 467 |

+

hereafter acquired, that would be infringed by some manner, permitted

|

| 468 |

+

by this License, of making, using, or selling its contributor version,

|

| 469 |

+

but do not include claims that would be infringed only as a

|

| 470 |

+

consequence of further modification of the contributor version. For

|

| 471 |

+

purposes of this definition, "control" includes the right to grant

|

| 472 |

+

patent sublicenses in a manner consistent with the requirements of

|

| 473 |

+

this License.

|

| 474 |

+

|

| 475 |

+

Each contributor grants you a non-exclusive, worldwide, royalty-free

|

| 476 |

+

patent license under the contributor's essential patent claims, to

|

| 477 |

+

make, use, sell, offer for sale, import and otherwise run, modify and

|

| 478 |

+

propagate the contents of its contributor version.

|

| 479 |

+

|

| 480 |

+

In the following three paragraphs, a "patent license" is any express

|

| 481 |

+

agreement or commitment, however denominated, not to enforce a patent

|

| 482 |

+

(such as an express permission to practice a patent or covenant not to

|

| 483 |

+

sue for patent infringement). To "grant" such a patent license to a

|

| 484 |

+

party means to make such an agreement or commitment not to enforce a

|

| 485 |

+

patent against the party.

|

| 486 |

+

|

| 487 |

+

If you convey a covered work, knowingly relying on a patent license,

|

| 488 |

+

and the Corresponding Source of the work is not available for anyone

|

| 489 |

+

to copy, free of charge and under the terms of this License, through a

|

| 490 |

+

publicly available network server or other readily accessible means,

|

| 491 |

+

then you must either (1) cause the Corresponding Source to be so

|

| 492 |

+

available, or (2) arrange to deprive yourself of the benefit of the

|

| 493 |

+

patent license for this particular work, or (3) arrange, in a manner

|

| 494 |

+

consistent with the requirements of this License, to extend the patent

|

| 495 |

+

license to downstream recipients. "Knowingly relying" means you have

|

| 496 |

+

actual knowledge that, but for the patent license, your conveying the

|

| 497 |

+

covered work in a country, or your recipient's use of the covered work

|

| 498 |

+

in a country, would infringe one or more identifiable patents in that

|

| 499 |

+

country that you have reason to believe are valid.

|

| 500 |

+

|

| 501 |

+

If, pursuant to or in connection with a single transaction or

|

| 502 |

+

arrangement, you convey, or propagate by procuring conveyance of, a

|

| 503 |

+

covered work, and grant a patent license to some of the parties

|

| 504 |

+

receiving the covered work authorizing them to use, propagate, modify

|

| 505 |

+

or convey a specific copy of the covered work, then the patent license

|

| 506 |

+

you grant is automatically extended to all recipients of the covered

|

| 507 |

+

work and works based on it.

|

| 508 |

+

|

| 509 |

+

A patent license is "discriminatory" if it does not include within