Upload 7 files

Browse files- README.md +5 -5

- app.py +122 -0

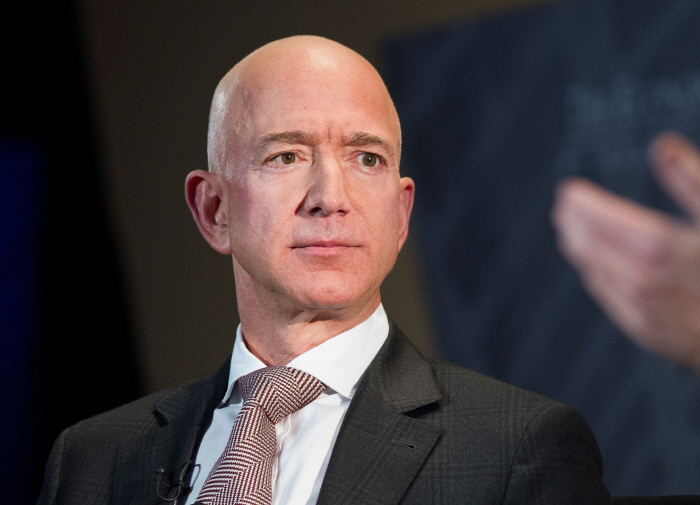

- bezos.jpeg +0 -0

- biden.jpeg +0 -0

- elon.jpg +0 -0

- requirements.txt +6 -0

- zuckerberg.jpeg +0 -0

README.md

CHANGED

    @@ -1,10 +1,10 @@
     ---
    -title:
    -emoji:
    -colorFrom:
    -colorTo:
    +title: Segmentation
    +emoji: 👀
    +colorFrom: red
    +colorTo: blue
     sdk: gradio
    -sdk_version:
    +sdk_version: 3.44.4
     app_file: app.py
     pinned: false
     ---

app.py

ADDED

@@ -0,0 +1,122 @@

    +import gradio as gr
    +
    +from matplotlib import gridspec
    +import matplotlib.pyplot as plt
    +import numpy as np
    +from PIL import Image
    +import tensorflow as tf
    +from transformers import SegformerFeatureExtractor, TFSegformerForSemanticSegmentation
    +
    +feature_extractor = SegformerFeatureExtractor.from_pretrained(
    +    "jonathandinu/face-parsing"
    +)
    +model = TFSegformerForSemanticSegmentation.from_pretrained("jonathandinu/face-parsing")
    +
    +
    +def ade_palette():
    +    """ADE20K palette that maps each class to RGB values."""
    +    return [
    +        [125, 237, 123],
    +        [25, 97, 48],
    +        [59, 11, 81],
    +        [163, 123, 42],
    +        [239, 41, 136],
    +        [224, 4, 115],
    +        [114, 84, 169],
    +        [16, 137, 208],
    +        [153, 91, 30],
    +        [48, 90, 221],
    +        [91, 245, 206],
    +        [108, 87, 175],
    +        [232, 181, 231],
    +        [153, 70, 176],
    +        [32, 25, 179],
    +        [118, 177, 239],
    +        [246, 75, 15],
    +        [183, 17, 190],
    +        [79, 235, 51],
    +    ]
    +
    +
    +labels_list = []
    +
    +with open(r"labels.txt", "r") as fp:
    +    for line in fp:
    +        labels_list.append(line[:-1])
    +
    +colormap = np.asarray(ade_palette())
    +
    +
    +def label_to_color_image(label):
    +    if label.ndim != 2:
    +        raise ValueError("Expect 2-D input label")
    +
    +    if np.max(label) >= len(colormap):
    +        raise ValueError("label value too large.")
    +    return colormap[label]
    +
    +
    +def draw_plot(pred_img, seg):
    +    fig = plt.figure(figsize=(20, 15))
    +
    +    grid_spec = gridspec.GridSpec(1, 2, width_ratios=[6, 1])
    +
    +    plt.subplot(grid_spec[0])
    +    plt.imshow(pred_img)
    +    plt.axis("off")
    +    LABEL_NAMES = np.asarray(labels_list)
    +    FULL_LABEL_MAP = np.arange(len(LABEL_NAMES)).reshape(len(LABEL_NAMES), 1)
    +    FULL_COLOR_MAP = label_to_color_image(FULL_LABEL_MAP)
    +
    +    unique_labels = np.unique(seg.numpy().astype("uint8"))
    +    ax = plt.subplot(grid_spec[1])
    +    plt.imshow(FULL_COLOR_MAP[unique_labels].astype(np.uint8), interpolation="nearest")
    +    ax.yaxis.tick_right()
    +    plt.yticks(range(len(unique_labels)), LABEL_NAMES[unique_labels])
    +    plt.xticks([], [])
    +    ax.tick_params(width=0.0, labelsize=25)
    +    return fig
    +
    +
    +def sepia(input_img):
    +    input_img = Image.fromarray(input_img)
    +
    +    inputs = feature_extractor(images=input_img, return_tensors="tf")
    +    outputs = model(**inputs)
    +    logits = outputs.logits
    +
    +    logits = tf.transpose(logits, [0, 2, 3, 1])
    +    logits = tf.image.resize(
    +        logits, input_img.size[::-1]
    +    )  # We reverse the shape of `image` because `image.size` returns width and height.
    +    seg = tf.math.argmax(logits, axis=-1)[0]
    +
    +    color_seg = np.zeros(
    +        (seg.shape[0], seg.shape[1], 3), dtype=np.uint8
    +    )  # height, width, 3
    +    for label, color in enumerate(colormap):
    +        color_seg[seg.numpy() == label, :] = color
    +
    +    # Show image + mask
    +    pred_img = np.array(input_img) * 0.5 + color_seg * 0.5
    +    pred_img = pred_img.astype(np.uint8)
    +
    +    fig = draw_plot(pred_img, seg)
    +    return fig
    +
    +
    +demo = gr.Interface(
    +    fn=sepia,
    +    inputs=gr.Image(shape=(400, 600)),
    +    outputs=["plot"],
    +    examples=[
    +        "elon.jpg",
    +        "biden.jpeg",
    +        "bezos.jpeg",
    +        "zuckerberg.jpeg",
    +    ],
    +    allow_flagging="never",
    +)
    +
    +
    +demo.launch()
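Note on the mask overlay in `sepia`: the per-label loop that fills `color_seg` could probably be replaced by a single palette lookup, the same indexing that `label_to_color_image` already uses. The sketch below is not part of this commit. The helper name `overlay_mask` and its `alpha` parameter are hypothetical, and it assumes the segmentation map has already been converted to a NumPy integer array (for example with `seg.numpy()`).

```python
import numpy as np


def overlay_mask(image, seg, palette, alpha=0.5):
    """Blend an RGB image with a color-coded segmentation mask.

    Hypothetical helper, not part of the commit. `seg` is a 2-D array of
    integer class ids and `palette` holds one RGB triple per class.
    """
    image = np.asarray(image)
    seg = np.asarray(seg)
    palette = np.asarray(palette, dtype=np.uint8)

    if seg.ndim != 2:
        raise ValueError("Expect 2-D input label")
    if seg.max() >= len(palette):
        raise ValueError("label value too large.")

    # Integer-array indexing maps every pixel's class id to its RGB color
    # in one step, giving an array of shape (height, width, 3).
    color_seg = palette[seg]

    # Weighted blend of image and mask, truncated back to 8-bit values.
    blended = image * (1.0 - alpha) + color_seg * alpha
    return blended.astype(np.uint8)


# Tiny example: a uniform gray 2x2 image and a two-class mask.
palette = [[125, 237, 123], [25, 97, 48]]
image = np.full((2, 2, 3), 100, dtype=np.uint8)
seg = np.array([[0, 1], [1, 0]])
result = overlay_mask(image, seg, palette)
```

With `alpha=0.5` this matches the 50/50 blend used in `sepia`. The lookup avoids one boolean mask per class, which may matter for large images.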

bezos.jpeg

ADDED

biden.jpeg

ADDED

elon.jpg

ADDED

requirements.txt

ADDED

    @@ -0,0 +1,6 @@
    +torch
    +transformers
    +tensorflow
    +numpy
    +Image
    +matplotlib

zuckerberg.jpeg

ADDED
