Spaces:

Runtime error

Runtime error

Alex Strick van Linschoten

commited on

Commit

·

b0f2ac0

1

Parent(s):

8de9fe5

update app

Browse files- LICENSE +201 -0

- README.md +1 -13

- allsynthetic-imgsize768.pth +3 -0

- app.py +0 -4

- article.md +79 -0

- fastai-classification-model.pkl +3 -0

- packages.txt +2 -0

- requirements.txt +11 -0

- streamlit_app.py +247 -0

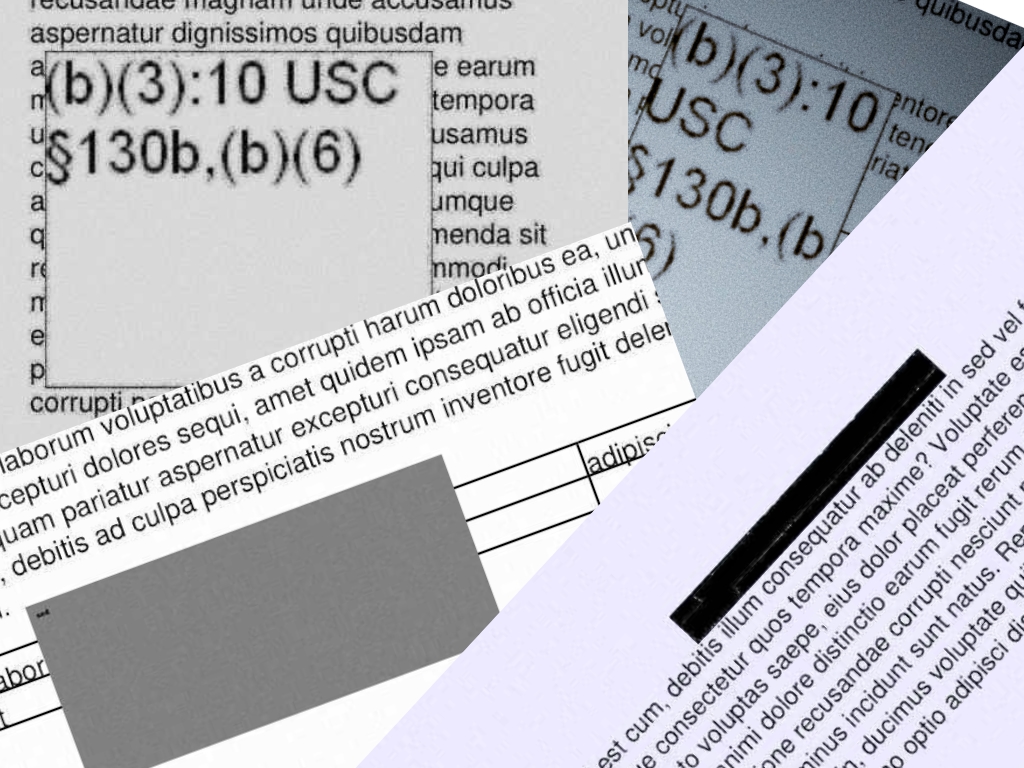

- synthetic-redactions.jpg +0 -0

LICENSE (ADDED, @@ -0,0 +1,201 @@)

```text
                                 Apache License
                           Version 2.0, January 2004
                        http://www.apache.org/licenses/

   TERMS AND CONDITIONS FOR USE, REPRODUCTION, AND DISTRIBUTION

   1. Definitions.

      "License" shall mean the terms and conditions for use, reproduction,
      and distribution as defined by Sections 1 through 9 of this document.

      "Licensor" shall mean the copyright owner or entity authorized by
      the copyright owner that is granting the License.

      "Legal Entity" shall mean the union of the acting entity and all
      other entities that control, are controlled by, or are under common
      control with that entity. For the purposes of this definition,
      "control" means (i) the power, direct or indirect, to cause the
      direction or management of such entity, whether by contract or
      otherwise, or (ii) ownership of fifty percent (50%) or more of the
      outstanding shares, or (iii) beneficial ownership of such entity.

      "You" (or "Your") shall mean an individual or Legal Entity
      exercising permissions granted by this License.

      "Source" form shall mean the preferred form for making modifications,
      including but not limited to software source code, documentation
      source, and configuration files.

      "Object" form shall mean any form resulting from mechanical
      transformation or translation of a Source form, including but
      not limited to compiled object code, generated documentation,
      and conversions to other media types.

      "Work" shall mean the work of authorship, whether in Source or
      Object form, made available under the License, as indicated by a
      copyright notice that is included in or attached to the work
      (an example is provided in the Appendix below).

      "Derivative Works" shall mean any work, whether in Source or Object
      form, that is based on (or derived from) the Work and for which the
      editorial revisions, annotations, elaborations, or other modifications
      represent, as a whole, an original work of authorship. For the purposes
      of this License, Derivative Works shall not include works that remain
      separable from, or merely link (or bind by name) to the interfaces of,
      the Work and Derivative Works thereof.

      "Contribution" shall mean any work of authorship, including
      the original version of the Work and any modifications or additions
      to that Work or Derivative Works thereof, that is intentionally
      submitted to Licensor for inclusion in the Work by the copyright owner
      or by an individual or Legal Entity authorized to submit on behalf of
      the copyright owner. For the purposes of this definition, "submitted"
      means any form of electronic, verbal, or written communication sent
      to the Licensor or its representatives, including but not limited to
      communication on electronic mailing lists, source code control systems,
      and issue tracking systems that are managed by, or on behalf of, the
      Licensor for the purpose of discussing and improving the Work, but
      excluding communication that is conspicuously marked or otherwise
      designated in writing by the copyright owner as "Not a Contribution."

      "Contributor" shall mean Licensor and any individual or Legal Entity
      on behalf of whom a Contribution has been received by Licensor and
      subsequently incorporated within the Work.

   2. Grant of Copyright License. Subject to the terms and conditions of
      this License, each Contributor hereby grants to You a perpetual,
      worldwide, non-exclusive, no-charge, royalty-free, irrevocable
      copyright license to reproduce, prepare Derivative Works of,
      publicly display, publicly perform, sublicense, and distribute the
      Work and such Derivative Works in Source or Object form.

   3. Grant of Patent License. Subject to the terms and conditions of
      this License, each Contributor hereby grants to You a perpetual,
      worldwide, non-exclusive, no-charge, royalty-free, irrevocable
      (except as stated in this section) patent license to make, have made,
      use, offer to sell, sell, import, and otherwise transfer the Work,
      where such license applies only to those patent claims licensable
      by such Contributor that are necessarily infringed by their
      Contribution(s) alone or by combination of their Contribution(s)
      with the Work to which such Contribution(s) was submitted. If You
      institute patent litigation against any entity (including a
      cross-claim or counterclaim in a lawsuit) alleging that the Work
      or a Contribution incorporated within the Work constitutes direct
      or contributory patent infringement, then any patent licenses
      granted to You under this License for that Work shall terminate
      as of the date such litigation is filed.

   4. Redistribution. You may reproduce and distribute copies of the
      Work or Derivative Works thereof in any medium, with or without
      modifications, and in Source or Object form, provided that You
      meet the following conditions:

      (a) You must give any other recipients of the Work or
          Derivative Works a copy of this License; and

      (b) You must cause any modified files to carry prominent notices
          stating that You changed the files; and

      (c) You must retain, in the Source form of any Derivative Works
          that You distribute, all copyright, patent, trademark, and
          attribution notices from the Source form of the Work,
          excluding those notices that do not pertain to any part of
          the Derivative Works; and

      (d) If the Work includes a "NOTICE" text file as part of its
          distribution, then any Derivative Works that You distribute must
          include a readable copy of the attribution notices contained
          within such NOTICE file, excluding those notices that do not
          pertain to any part of the Derivative Works, in at least one
          of the following places: within a NOTICE text file distributed
          as part of the Derivative Works; within the Source form or
          documentation, if provided along with the Derivative Works; or,
          within a display generated by the Derivative Works, if and
          wherever such third-party notices normally appear. The contents
          of the NOTICE file are for informational purposes only and
          do not modify the License. You may add Your own attribution
          notices within Derivative Works that You distribute, alongside
          or as an addendum to the NOTICE text from the Work, provided
          that such additional attribution notices cannot be construed
          as modifying the License.

      You may add Your own copyright statement to Your modifications and
      may provide additional or different license terms and conditions
      for use, reproduction, or distribution of Your modifications, or
      for any such Derivative Works as a whole, provided Your use,
      reproduction, and distribution of the Work otherwise complies with
      the conditions stated in this License.

   5. Submission of Contributions. Unless You explicitly state otherwise,
      any Contribution intentionally submitted for inclusion in the Work
      by You to the Licensor shall be under the terms and conditions of
      this License, without any additional terms or conditions.
      Notwithstanding the above, nothing herein shall supersede or modify
      the terms of any separate license agreement you may have executed
      with Licensor regarding such Contributions.

   6. Trademarks. This License does not grant permission to use the trade
      names, trademarks, service marks, or product names of the Licensor,
      except as required for reasonable and customary use in describing the
      origin of the Work and reproducing the content of the NOTICE file.

   7. Disclaimer of Warranty. Unless required by applicable law or
      agreed to in writing, Licensor provides the Work (and each
      Contributor provides its Contributions) on an "AS IS" BASIS,
      WITHOUT WARRANTIES OR CONDITIONS OF ANY KIND, either express or
      implied, including, without limitation, any warranties or conditions
      of TITLE, NON-INFRINGEMENT, MERCHANTABILITY, or FITNESS FOR A
      PARTICULAR PURPOSE. You are solely responsible for determining the
      appropriateness of using or redistributing the Work and assume any
      risks associated with Your exercise of permissions under this License.

   8. Limitation of Liability. In no event and under no legal theory,
      whether in tort (including negligence), contract, or otherwise,
      unless required by applicable law (such as deliberate and grossly
      negligent acts) or agreed to in writing, shall any Contributor be
      liable to You for damages, including any direct, indirect, special,
      incidental, or consequential damages of any character arising as a
      result of this License or out of the use or inability to use the
      Work (including but not limited to damages for loss of goodwill,
      work stoppage, computer failure or malfunction, or any and all
      other commercial damages or losses), even if such Contributor
      has been advised of the possibility of such damages.

   9. Accepting Warranty or Additional Liability. While redistributing
      the Work or Derivative Works thereof, You may choose to offer,
      and charge a fee for, acceptance of support, warranty, indemnity,
      or other liability obligations and/or rights consistent with this
      License. However, in accepting such obligations, You may act only
      on Your own behalf and on Your sole responsibility, not on behalf
      of any other Contributor, and only if You agree to indemnify,
      defend, and hold each Contributor harmless for any liability
      incurred by, or claims asserted against, such Contributor by reason
      of your accepting any such warranty or additional liability.

   END OF TERMS AND CONDITIONS

   APPENDIX: How to apply the Apache License to your work.

      To apply the Apache License to your work, attach the following
      boilerplate notice, with the fields enclosed by brackets "[]"
      replaced with your own identifying information. (Don't include
      the brackets!)  The text should be enclosed in the appropriate
      comment syntax for the file format. We also recommend that a
      file or class name and description of purpose be included on the
      same "printed page" as the copyright notice for easier
      identification within third-party archives.

   Copyright [yyyy] [name of copyright owner]

   Licensed under the Apache License, Version 2.0 (the "License");
   you may not use this file except in compliance with the License.
   You may obtain a copy of the License at

       http://www.apache.org/licenses/LICENSE-2.0

   Unless required by applicable law or agreed to in writing, software
   distributed under the License is distributed on an "AS IS" BASIS,
   WITHOUT WARRANTIES OR CONDITIONS OF ANY KIND, either express or implied.
   See the License for the specific language governing permissions and
   limitations under the License.
```
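The appendix asks for the boilerplate notice to be wrapped in the comment syntax of the target file format. For a Python source file that means a `#` prefix on every line. A minimal sketch, with a hypothetical helper `apache_header` that fills the two bracketed fields and keeps the rest of the notice verbatim:

```python
def apache_header(year: int, owner: str) -> str:
    """Return the Apache-2.0 boilerplate notice as a block of Python comments."""
    notice = [
        f"Copyright {year} {owner}",
        "",
        'Licensed under the Apache License, Version 2.0 (the "License");',
        "you may not use this file except in compliance with the License.",
        "You may obtain a copy of the License at",
        "",
        "    http://www.apache.org/licenses/LICENSE-2.0",
        "",
        "Unless required by applicable law or agreed to in writing, software",
        'distributed under the License is distributed on an "AS IS" BASIS,',
        "WITHOUT WARRANTIES OR CONDITIONS OF ANY KIND, either express or implied.",
        "See the License for the specific language governing permissions and",
        "limitations under the License.",
    ]
    # Blank notice lines become a bare "#" so no trailing whitespace is emitted.
    return "\n".join(f"# {line}" if line else "#" for line in notice) + "\n"
```

For example, `apache_header(2024, "Example Owner")` yields a 13-line comment block beginning `# Copyright 2024 Example Owner`, ready to paste above a module's imports; the year and owner are placeholders.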

README.md (CHANGED, @@ -1,13 +1 @@)

```diff
----
-title: Redaction Detector
-emoji: 🔍
-colorFrom: yellow
-colorTo: purple
-sdk: streamlit
-sdk_version: 1.2.0
-app_file: app.py
-pinned: false
-license: apache-2.0
----
-
-Check out the configuration reference at https://huggingface.co/docs/hub/spaces#reference
+# redaction-detector-streamlit
```
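The thirteen removed lines are the YAML front matter described by the Hugging Face Spaces configuration reference linked on removed line 13; it is where keys such as `sdk` and `app_file` were declared. A rough sketch for checking which keys a README declares, assuming flat `key: value` pairs only (nested YAML would need a real parser such as PyYAML); `read_front_matter` is a hypothetical helper name:

```python
from __future__ import annotations


def read_front_matter(readme: str) -> dict[str, str]:
    """Parse a flat `key: value` block fenced by '---' lines at the top of a README.

    Returns {} when the README has no complete front-matter block.
    """
    lines = readme.splitlines()
    if not lines or lines[0].strip() != "---":
        return {}
    config: dict[str, str] = {}
    for line in lines[1:]:
        if line.strip() == "---":
            return config  # closing delimiter found
        key, sep, value = line.partition(":")
        if sep:
            config[key.strip()] = value.strip()
    return {}  # the opening '---' was never closed
```

Applied to the new one-line README (`# redaction-detector-streamlit`), it returns `{}`: after this commit the README no longer declares `sdk` or `app_file`.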

allsynthetic-imgsize768.pth (ADDED, @@ -0,0 +1,3 @@)

```text
version https://git-lfs.github.com/spec/v1
oid sha256:65362ca715c08973334b0488fb0cada234bff39a20cfa6a6d79eb111e744864e
size 131183383
```
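The three added lines are a Git LFS pointer rather than the roughly 131 MB checkpoint itself: the `oid` is the SHA-256 of the real file and `size` is its length in bytes. A minimal sketch for parsing such a pointer and checking a downloaded file against it, standard library only; `parse_lfs_pointer` and `matches_pointer` are hypothetical names:

```python
import hashlib


def parse_lfs_pointer(text: str) -> dict:
    """Split a Git LFS pointer ("version ...", "oid sha256:...", "size ...") into fields."""
    fields = {}
    for line in text.strip().splitlines():
        key, _, value = line.partition(" ")
        fields[key] = value
    algo, _, digest = fields["oid"].partition(":")
    return {
        "version": fields["version"],
        "algo": algo,
        "digest": digest,
        "size": int(fields["size"]),
    }


def matches_pointer(path: str, pointer: dict, chunk_size: int = 1 << 20) -> bool:
    """Stream a local file and compare its byte count and SHA-256 with the pointer."""
    if pointer["algo"] != "sha256":
        raise ValueError(f"unsupported oid algorithm: {pointer['algo']}")
    digest = hashlib.sha256()
    size = 0
    with open(path, "rb") as f:
        # Read in fixed-size chunks so large checkpoints never sit fully in memory.
        for chunk in iter(lambda: f.read(chunk_size), b""):
            digest.update(chunk)
            size += len(chunk)
    return size == pointer["size"] and digest.hexdigest() == pointer["digest"]
```

If a clone made without Git LFS leaves the three-line pointer in place of the weights, the size check fails immediately, which is a quick way to tell a missing download from a corrupt one.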

app.py (DELETED, @@ -1,4 +0,0 @@)

```python
import streamlit as st

x = st.slider('Select a value')
st.write(x, 'squared is', x * x)
```

article.md (ADDED, @@ -0,0 +1,79 @@)

```markdown
I've been working through the first two lessons of
[the fastai course](https://course.fast.ai/). For lesson one I trained a model
to recognise my cat, Mr Blupus. For lesson two the emphasis is on getting those
models out in the world as some kind of demo or application.
[Gradio](https://gradio.app) and
[Huggingface Spaces](https://huggingface.co/spaces) makes it super easy to get a
prototype of your model on the internet.

This MVP app runs two models to mimic the experience of what a final deployed
version of the project might look like.

- The first model (a classification model trained with fastai, available on the
  Huggingface Hub
  [here](https://huggingface.co/strickvl/redaction-classifier-fastai) and
  testable as a standalone demo
  [here](https://huggingface.co/spaces/strickvl/fastai_redaction_classifier)),
  classifies and determines which pages of the PDF are redacted. I've written
  about how I trained this model [here](https://mlops.systems/fastai/redactionmodel/computervision/datalabelling/2021/09/06/redaction-classification-chapter-2.html).
- The second model (an object detection model trained using [IceVision](https://airctic.com/) (itself
  built partly on top of fastai)) detects which parts of the image are redacted.
  This is a model I've been working on for a while and I described my process in
  a series of blog posts (see below).

This MVP app does several things:

- it extracts any pages it considers to contain redactions and displays that
  subset as an [image carousel](https://gradio.app/docs/#o_carousel). It also
  displays some text alerting you to which specific pages were redacted.
- if you click the "Analyse and extract redacted images" checkbox, it will:
  - pass the pages it considered redacted through the object detection model
  - calculate what proportion of the total area of the image was redacted as
    well as what proportion of the actual content (i.e. excluding margins etc
    where there is no content)
  - create a PDF that you can download that contains only the redacted images,
    with an overlay of the redactions that it was able to identify along with
    the confidence score for each item.

## The Dataset

I downloaded a few thousand publicly-available FOIA documents from a government
website. I split the PDFs up into individual `.jpg` files and then used
[Prodigy](https://prodi.gy/) to annotate the data. (This process was described
in
[a blogpost written last
year](https://mlops.systems/fastai/redactionmodel/computervision/datalabelling/2021/09/06/redaction-classification-chapter-2.html).)
For the object detection model, the process was quite a bit more involved and I
direct you to the series of articles referenced below in the 'Further Reading' section.

## Training the model

I trained the classification model with fastai's flexible `vision_learner`, fine-tuning
`resnet18` which was both smaller than `resnet34` (no surprises there) and less
liable to early overfitting. I trained the model for 10 epochs.

The object detection model is trained using IceVision, with VFNet as the
model and `resnet50` as the backbone. I trained the model for 50 epochs and
reached 89% accuracy on the validation data.

## Further Reading

This initial dataset spurred an ongoing interest in the domain and I've since
been working on the problem of object detection, i.e. identifying exactly which
parts of the image contain redactions.

Some of the key blogs I've written about this project:

- How to annotate data for an object detection problem with Prodigy
  ([link](https://mlops.systems/redactionmodel/computervision/datalabelling/2021/11/29/prodigy-object-detection-training.html))
- How to create synthetic images to supplement a small dataset
  ([link](https://mlops.systems/redactionmodel/computervision/python/tools/2022/02/10/synthetic-image-data.html))
- How to use error analysis and visual tools like FiftyOne to improve model
  performance
  ([link](https://mlops.systems/redactionmodel/computervision/tools/debugging/jupyter/2022/03/12/fiftyone-computervision.html))
- Creating more synthetic data focused on the tasks my model finds hard
  ([link](https://mlops.systems/tools/redactionmodel/computervision/2022/04/06/synthetic-data-results.html))
- Data validation for object detection / computer vision (a three part series —
  [part 1](https://mlops.systems/tools/redactionmodel/computervision/datavalidation/2022/04/19/data-validation-great-expectations-part-1.html),
  [part 2](https://mlops.systems/tools/redactionmodel/computervision/datavalidation/2022/04/26/data-validation-great-expectations-part-2.html),
  [part 3](https://mlops.systems/tools/redactionmodel/computervision/datavalidation/2022/04/28/data-validation-great-expectations-part-3.html))
```
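The article says the app reports what proportion of the whole page, and of the content region alone, was redacted. The same `(xmax - xmin) * (ymax - ymin)` products appear in `get_content_area` and `get_redaction_area` in `streamlit_app.py`. A sketch of the two ratios, assuming plain `(xmin, ymin, xmax, ymax)` tuples in pixels instead of IceVision's box objects, and assuming redaction boxes do not overlap; the function names are hypothetical:

```python
from typing import Iterable, Tuple

Box = Tuple[float, float, float, float]  # (xmin, ymin, xmax, ymax) in pixels


def box_area(box: Box) -> float:
    xmin, ymin, xmax, ymax = box
    # Clamp at zero so a degenerate box never contributes negative area.
    return max(0.0, xmax - xmin) * max(0.0, ymax - ymin)


def redaction_proportions(
    page_size: Tuple[float, float], content: Box, redactions: Iterable[Box]
) -> Tuple[float, float]:
    """Return (fraction of the whole page redacted, fraction of the content box redacted).

    A plain sum double-counts overlapping redaction boxes, so this assumes none overlap.
    """
    width, height = page_size
    redacted = sum(box_area(b) for b in redactions)
    page_share = redacted / (width * height)
    content_area = box_area(content)
    # Mirror get_content_area's behaviour of reporting 0 when no content box exists.
    content_share = redacted / content_area if content_area else 0.0
    return page_share, content_share
```

For a 100 x 200 page with content box `(10, 10, 90, 190)` and redactions of 800 and 600 square pixels, this gives 0.07 of the page and about 0.097 of the content area.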

fastai-classification-model.pkl (ADDED, @@ -0,0 +1,3 @@)

```text
version https://git-lfs.github.com/spec/v1
oid sha256:2ec0645d5b4428fa3da139e9138653657bb7b1fcad2a138937dcdeb5bb51cf0c
size 87496043
```

packages.txt (ADDED, @@ -0,0 +1,2 @@)

```text
python3-opencv
python-tk
```

requirements.txt (ADDED, @@ -0,0 +1,11 @@)

```text
--find-links https://download.openmmlab.com/mmcv/dist/cpu/torch1.10.0/index.html
mmcv-full==1.3.17
mmdet==2.17.0
gradio==2.7.5
icevision[all]==0.12.0

fastai
scikit-image
pymupdf
fpdf

```

streamlit_app.py (ADDED, @@ -0,0 +1,247 @@)

```python
from io import BytesIO
import os
import pathlib
import tempfile
import time

import fitz
import gradio as gr
import PIL
import skimage
import streamlit as st
from fastai.learner import load_learner
from fastai.vision.all import *
from fpdf import FPDF
from icevision.all import *
from icevision.models.checkpoint import *
from PIL import Image as PILImage


CHECKPOINT_PATH = "./allsynthetic-imgsize768.pth"


@st.cache
def load_icevision_model():
    return model_from_checkpoint(CHECKPOINT_PATH)


@st.cache
def load_fastai_model():
    return load_learner("fastai-classification-model.pkl")


checkpoint_and_model = load_icevision_model()
model = checkpoint_and_model["model"]
model_type = checkpoint_and_model["model_type"]
class_map = checkpoint_and_model["class_map"]

img_size = checkpoint_and_model["img_size"]
valid_tfms = tfms.A.Adapter(
    [*tfms.A.resize_and_pad(img_size), tfms.A.Normalize()]
)


learn = load_fastai_model()
labels = learn.dls.vocab
```
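`valid_tfms` resizes and pads every page to the checkpoint's `img_size` (judging by the filename `allsynthetic-imgsize768.pth`, probably 768) before normalising it. A sketch of the geometry only, assuming the longest side is scaled to `img_size` with the aspect ratio kept and the shorter side padded evenly to a square; IceVision's actual rounding and padding placement may differ, and `resize_and_pad_geometry` is a hypothetical name:

```python
from typing import Tuple


def resize_and_pad_geometry(width: int, height: int, size: int) -> Tuple[int, int, int, int]:
    """Return (new_w, new_h, pad_x, pad_y) for fitting an image into a size x size square.

    Assumption: scale the longest side to `size`, then split the leftover space
    evenly, with any odd pixel going to the right/bottom edge.
    """
    scale = size / max(width, height)
    new_w, new_h = round(width * scale), round(height * scale)
    pad_x = (size - new_w) // 2  # left padding; the right side gets the remainder
    pad_y = (size - new_h) // 2  # top padding; the bottom gets the remainder
    return new_w, new_h, pad_x, pad_y
```

For a 595 x 842 page image (roughly A4 at 72 dpi) and a size of 768, this gives a 543 x 768 resized page with 112 pixels of padding on the left, so about 29% of the model's input is padding on portrait pages.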

```python
@st.experimental_memo
def get_content_area(pred_dict) -> int:
    if "content" not in pred_dict["detection"]["labels"]:
        return 0
    content_bboxes = [
        pred_dict["detection"]["bboxes"][idx]
        for idx, label in enumerate(pred_dict["detection"]["labels"])
        if label == "content"
    ]
    cb = content_bboxes[0]
    return (cb.xmax - cb.xmin) * (cb.ymax - cb.ymin)


@st.experimental_memo
def get_redaction_area(pred_dict) -> int:
    if "redaction" not in pred_dict["detection"]["labels"]:
        return 0
    redaction_bboxes = [
        pred_dict["detection"]["bboxes"][idx]
        for idx, label in enumerate(pred_dict["detection"]["labels"])
        if label == "redaction"
    ]
    return sum(
        (bbox.xmax - bbox.xmin) * (bbox.ymax - bbox.ymin)
        for bbox in redaction_bboxes
    )
```
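`get_redaction_area` sums the area of every `redaction` box, so two detections that overlap are counted twice, and `get_content_area` keeps only the first `content` box. If overlaps matter, the area the boxes cover together can be computed as a union instead. The sketch below swaps in coordinate compression (not what the app does), works on plain `(xmin, ymin, xmax, ymax)` tuples rather than IceVision box objects, and uses hypothetical names; it is O(n³) in the number of boxes, which is fine for the handful a page produces:

```python
from typing import List, Tuple

Box = Tuple[float, float, float, float]  # (xmin, ymin, xmax, ymax)


def summed_area(boxes: List[Box]) -> float:
    """What a plain sum gives: overlapping regions are counted once per box."""
    return sum((b[2] - b[0]) * (b[3] - b[1]) for b in boxes)


def union_area(boxes: List[Box]) -> float:
    """Area covered by the union of axis-aligned boxes, counting overlaps once."""
    xs = sorted({x for b in boxes for x in (b[0], b[2])})
    ys = sorted({y for b in boxes for y in (b[1], b[3])})
    total = 0.0
    # Every grid cell between consecutive edges is either fully inside a box or not.
    for x0, x1 in zip(xs, xs[1:]):
        for y0, y1 in zip(ys, ys[1:]):
            if any(b[0] <= x0 and x1 <= b[2] and b[1] <= y0 and y1 <= b[3] for b in boxes):
                total += (x1 - x0) * (y1 - y0)
    return total
```

Two 10 x 10 boxes offset by 5 pixels in each direction sum to 200 but cover only 175.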

```python
st.title("Redaction Detector")

st.image(
    "./synthetic-redactions.jpg",
    width=300,
)
uploaded_pdf = st.file_uploader(
    "Upload a PDF...",
    type="pdf",
    accept_multiple_files=False,
    help="This application processes PDF files. Please upload a document you believe to contain redactions.",
    on_change=None,
)

# Add a selectbox to the sidebar:
st.sidebar.header("Customisation Options")

graph_checkbox = st.sidebar.checkbox(
    "Show analysis charts",
    value=True,
    help="Display charts analysising the redactions found in the document.",
)

extract_images_checkbox = st.sidebar.checkbox(
    "Extract redacted images",
    value=True,
    help="Create a PDF file containing the redacted images with an object detection overlay highlighting their locations and the confidence the model had when detecting the redactions.",
)

# Add a slider to the sidebar:
confidence = st.sidebar.slider(
    "Confidence level (%)",
    min_value=0,
    max_value=100,
    value=80,
)
```
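The slider yields an integer percentage, which the app divides by 100 both for the detector's `detection_threshold` and for the classifier. In the page loop, `probs[0] > (confidence / 100)` assumes the first entry of `learn.dls.vocab` is the redacted class (whether that holds depends on the vocab stored in the `.pkl`), while `redacted_pages` looks the probability up by the `"redacted"` key. A sketch that selects pages by label name so both paths agree, assuming results shaped like the app's `results` list of `{label: probability}` dicts; `pages_above_threshold` is a hypothetical helper, and it returns ints where the app builds strings:

```python
from typing import Dict, List


def pages_above_threshold(
    results: List[Dict[str, float]], confidence_pct: int, label: str = "redacted"
) -> List[int]:
    """Return 1-based page numbers whose probability for `label` exceeds the slider value."""
    threshold = confidence_pct / 100  # the slider gives 0-100, probabilities are 0-1
    return [
        page_num
        for page_num, probs in enumerate(results, start=1)
        if probs.get(label, 0.0) > threshold
    ]
```

Looking the class up by name keeps the selection correct even if the vocab order changes when the classifier is retrained.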

```python
@st.cache
def get_pdf_document(input):
    with open(
        pathlib.Path(filename_without_extension / "output.pdf"), "wb"
    ) as f:
        f.write(uploaded_pdf.getbuffer())
    return fitz.open("output.pdf")


@st.cache
def get_image_predictions(img):
    return model_type.end2end_detect(
        img,
        valid_tfms,
        model,
        class_map=class_map,
        detection_threshold=confidence / 100,
        display_label=True,
        display_bbox=True,
        return_img=True,
        font_size=16,
        label_color="#FF59D6",
    )
```
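As committed, `get_pdf_document` has several problems. `filename_without_extension / "output.pdf"` applies `/` to two `str` objects, which raises `TypeError`. Even with a valid path, the function reopens `"output.pdf"` from the current working directory rather than the file it just wrote. It also ignores its `input` argument in favour of the global `uploaded_pdf`. A sketch of the file-handling half, written against the standard library so it can be checked in isolation; the helper name `save_upload` and the `<tmp>/<stem>/output.pdf` layout are assumptions:

```python
import pathlib
import tempfile


def save_upload(data: bytes, filename: str, base_dir: str = tempfile.gettempdir()) -> pathlib.Path:
    """Write uploaded PDF bytes to <base_dir>/<stem>/output.pdf and return that path.

    pathlib's .stem drops the extension whatever its length, unlike slicing
    off the last four characters of the name.
    """
    folder = pathlib.Path(base_dir) / pathlib.Path(filename).stem
    folder.mkdir(parents=True, exist_ok=True)  # the folder may not exist yet
    pdf_path = folder / "output.pdf"
    pdf_path.write_bytes(data)
    return pdf_path  # reopen this exact path, e.g. fitz.open(str(pdf_path))
```

In the app this would be called as `save_upload(uploaded_pdf.getbuffer(), uploaded_pdf.name)`, with the returned path passed to `fitz.open`, so the document that is read back is always the one just written.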

| 139 |

+

if uploaded_pdf is None:
    st.markdown(pathlib.Path("article.md").read_text())
else:
    st.text("Opening PDF...")
    filename_without_extension = uploaded_pdf.name[:-4]
    results = []
    images = []
    document = get_pdf_document(uploaded_pdf)
    total_image_areas = 0
    total_content_areas = 0
    total_redaction_area = 0
    tmp_dir = tempfile.gettempdir()

    for page_num, page in enumerate(document, start=1):
        image_pixmap = page.get_pixmap()
        image = image_pixmap.tobytes()
        _, _, probs = learn.predict(image)
        results.append(
            {labels[i]: float(probs[i]) for i in range(len(labels))}
        )
        if probs[0] > (confidence / 100):
            redaction_count = len(images)
            # Create the per-document image folder if it does not exist yet
            os.makedirs(
                os.path.join(tmp_dir, filename_without_extension), exist_ok=True
            )
            image_pixmap.save(
                os.path.join(
                    tmp_dir, filename_without_extension, f"page-{page_num}.png"
                )
            )
            images.append(
                [
                    f"Redacted page #{redaction_count + 1} on page {page_num}",
                    os.path.join(
                        tmp_dir,
                        filename_without_extension,
                        f"page-{page_num}.png",
                    ),
                ]
            )

    redacted_pages = [
        str(page + 1)
        for page in range(len(results))
        if results[page]["redacted"] > (confidence / 100)
    ]
    report = os.path.join(
        tmp_dir, filename_without_extension, "redacted_pages.pdf"
    )

    if extract_images_checkbox and images:
        pdf = FPDF(unit="cm", format="A4")
        pdf.set_auto_page_break(0)
        # Page images saved during this run, already in page order
        imagelist = [os.path.basename(path) for _, path in images]
        for image in imagelist:
            with PILImage.open(
                os.path.join(tmp_dir, filename_without_extension, image)
            ) as img:
                width, height = img.size
                if width > height:
                    pdf.add_page(orientation="L")
                else:
                    pdf.add_page(orientation="P")
                pred_dict = get_image_predictions(img)

                total_image_areas += pred_dict["width"] * pred_dict["height"]
                total_content_areas += get_content_area(pred_dict)
                total_redaction_area += get_redaction_area(pred_dict)

                pred_dict["img"].save(
                    os.path.join(
                        tmp_dir, filename_without_extension, f"pred-{image}"
                    ),
                )
                pdf.image(
                    os.path.join(
                        tmp_dir, filename_without_extension, f"pred-{image}"
                    ),
                    w=pdf.w,
                    h=pdf.h,
                )
        pdf.output(report, "F")

    text_output = f"A total of {len(redacted_pages)} pages were redacted. \n\nThe redacted page numbers were: {', '.join(redacted_pages)}. \n\n"

    if not extract_images_checkbox:
        st.text(text_output)
        # DISPLAY IMAGES
    else:
        # The area totals stay at zero when no redacted pages were analysed
        if total_image_areas and total_content_areas:
            total_redaction_proportion = round(
                (total_redaction_area / total_image_areas) * 100, 1
            )
            content_redaction_proportion = round(
                (total_redaction_area / total_content_areas) * 100, 1
            )
            redaction_analysis = f"- {total_redaction_proportion}% of the total area of the redacted pages was redacted. \n- {content_redaction_proportion}% of the actual content of those redacted pages was redacted."
        else:
            redaction_analysis = ""

        st.text(text_output + redaction_analysis)
        # DISPLAY IMAGES
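The sidebar slider holds the confidence as a whole-number percentage, so the app divides it by 100 before comparing it with each page's `redacted` probability, and a page only counts when its score is strictly greater than that threshold. A minimal sketch of that filtering step as a standalone function follows; the helper name `redacted_page_numbers` and the sample scores (including the `unredacted` label) are illustrative assumptions, not values taken from the app.

```python
from typing import Dict, List


def redacted_page_numbers(
    results: List[Dict[str, float]], confidence_percent: int
) -> List[str]:
    """Return 1-based page numbers, as strings, whose "redacted" score beats the threshold.

    Hypothetical helper: the slider value is a percentage, so it is divided by
    100 before being compared with a probability in the range 0.0 to 1.0.
    """
    threshold = confidence_percent / 100
    return [
        str(index + 1)
        for index, page_scores in enumerate(results)
        if page_scores["redacted"] > threshold
    ]


# Illustrative scores for a three-page document (label names assumed)
scores = [
    {"redacted": 0.10, "unredacted": 0.90},
    {"redacted": 0.95, "unredacted": 0.05},
    {"redacted": 0.81, "unredacted": 0.19},
]
pages = redacted_page_numbers(scores, 80)
print(f"The redacted page numbers were: {', '.join(pages)}.")
# prints: The redacted page numbers were: 2, 3.
```

Because the comparison is strict, a page scored exactly at the threshold (0.80 with the default slider value of 80) is not reported as redacted.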
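When building the report, each page is added in landscape (`"L"`) if the image is wider than it is tall and in portrait (`"P"`) otherwise, and the annotated image is then stretched to `w=pdf.w, h=pdf.h`. That fills the sheet but can distort images whose aspect ratio differs from A4. As a sketch only, assuming A4 measures 21.0 by 29.7 cm (the `FPDF` object is created with `unit="cm", format="A4"`), the hypothetical helper `page_layout` below applies the same orientation rule and then computes a size that fits the page without distortion; its width and height could be passed to `pdf.image` in place of `pdf.w` and `pdf.h`.

```python
from typing import Tuple

# Assumed A4 sheet size in centimetres (portrait width, height)
A4_PORTRAIT_CM = (21.0, 29.7)


def page_layout(width_px: int, height_px: int) -> Tuple[str, float, float]:
    """Choose an FPDF orientation and an aspect-preserving image size in cm.

    The orientation rule matches the app: landscape ("L") when the image is
    wider than it is tall, portrait ("P") otherwise, including square images.
    """
    orientation = "L" if width_px > height_px else "P"
    page_w, page_h = (
        A4_PORTRAIT_CM if orientation == "P" else A4_PORTRAIT_CM[::-1]
    )
    # Largest uniform scale that keeps the whole image on the page
    scale = min(page_w / width_px, page_h / height_px)
    return orientation, round(width_px * scale, 2), round(height_px * scale, 2)


# A US Letter page rendered at 72 dpi is 612 by 792 pixels
print(page_layout(612, 792))  # ('P', 21.0, 27.18)
print(page_layout(1000, 500))
```

With this approach a Letter-sized page ends up slightly shorter than the A4 sheet instead of being stretched vertically to fill it.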
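The two percentages in the summary divide the same total redacted area by different denominators: the full area of the analysed page images, and the area occupied by content on those pages (as measured by `get_content_area`). Both totals stay at zero when no page image is analysed, so the division needs a guard. A minimal sketch of the calculation follows; the function name `redaction_proportions` and the pixel areas in the example are made up for illustration.

```python
from typing import Optional, Tuple


def redaction_proportions(
    total_redaction_area: float,
    total_image_area: float,
    total_content_area: float,
) -> Optional[Tuple[float, float]]:
    """Percent of page area and of content area covered by redactions, to 1 dp.

    Returns None when either denominator is zero, i.e. nothing was analysed.
    """
    if total_image_area <= 0 or total_content_area <= 0:
        return None
    page_pct = round(total_redaction_area / total_image_area * 100, 1)
    content_pct = round(total_redaction_area / total_content_area * 100, 1)
    return page_pct, content_pct


print(redaction_proportions(120_000, 1_000_000, 400_000))  # (12.0, 30.0)
print(redaction_proportions(0, 0, 0))  # None
```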

synthetic-redactions.jpg

ADDED

|