Commit c3dc61c

1 Parent(s): 134920c

Upload 176 files

This view is limited to 50 files because it contains too many changes.

- yolov5/.dockerignore +222 -0

- yolov5/.gitattributes +2 -0

- yolov5/.github/ISSUE_TEMPLATE/bug-report.yml +85 -0

- yolov5/.github/ISSUE_TEMPLATE/config.yml +11 -0

- yolov5/.github/ISSUE_TEMPLATE/feature-request.yml +50 -0

- yolov5/.github/ISSUE_TEMPLATE/question.yml +33 -0

- yolov5/.github/PULL_REQUEST_TEMPLATE.md +13 -0

- yolov5/.github/dependabot.yml +23 -0

- yolov5/.github/workflows/ci-testing.yml +164 -0

- yolov5/.github/workflows/codeql-analysis.yml +55 -0

- yolov5/.github/workflows/docker.yml +58 -0

- yolov5/.github/workflows/greetings.yml +65 -0

- yolov5/.github/workflows/links.yml +40 -0

- yolov5/.github/workflows/stale.yml +47 -0

- yolov5/.github/workflows/translate-readme.yml +26 -0

- yolov5/.gitignore +257 -0

- yolov5/.pre-commit-config.yaml +73 -0

- yolov5/CITATION.cff +14 -0

- yolov5/CONTRIBUTING.md +93 -0

- yolov5/LICENSE +661 -0

- yolov5/README.md +497 -0

- yolov5/README.zh-CN.md +490 -0

- yolov5/__pycache__/export.cpython-310.pyc +0 -0

- yolov5/__pycache__/export.cpython-38.pyc +0 -0

- yolov5/__pycache__/hubconf.cpython-310.pyc +0 -0

- yolov5/__pycache__/hubconf.cpython-38.pyc +0 -0

- yolov5/benchmarks.py +174 -0

- yolov5/classify/predict.py +226 -0

- yolov5/classify/train.py +333 -0

- yolov5/classify/tutorial.ipynb +0 -0

- yolov5/classify/val.py +170 -0

- yolov5/data/Argoverse.yaml +74 -0

- yolov5/data/GlobalWheat2020.yaml +54 -0

- yolov5/data/ImageNet.yaml +1022 -0

- yolov5/data/Objects365.yaml +438 -0

- yolov5/data/SKU-110K.yaml +53 -0

- yolov5/data/VOC.yaml +100 -0

- yolov5/data/VisDrone.yaml +70 -0

- yolov5/data/coco.yaml +116 -0

- yolov5/data/coco128-seg.yaml +101 -0

- yolov5/data/coco128.yaml +101 -0

- yolov5/data/hyps/hyp.Objects365.yaml +34 -0

- yolov5/data/hyps/hyp.VOC.yaml +40 -0

- yolov5/data/hyps/hyp.no-augmentation.yaml +35 -0

- yolov5/data/hyps/hyp.scratch-high.yaml +34 -0

- yolov5/data/hyps/hyp.scratch-low.yaml +34 -0

- yolov5/data/hyps/hyp.scratch-med.yaml +34 -0

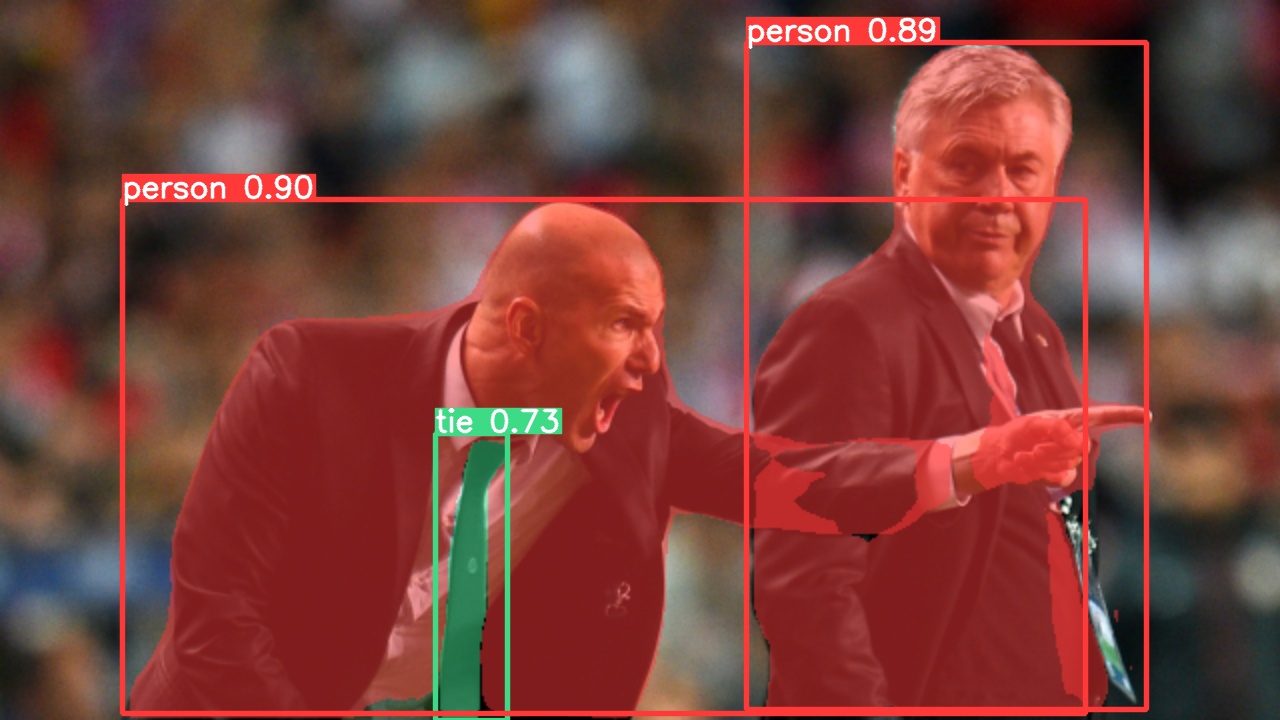

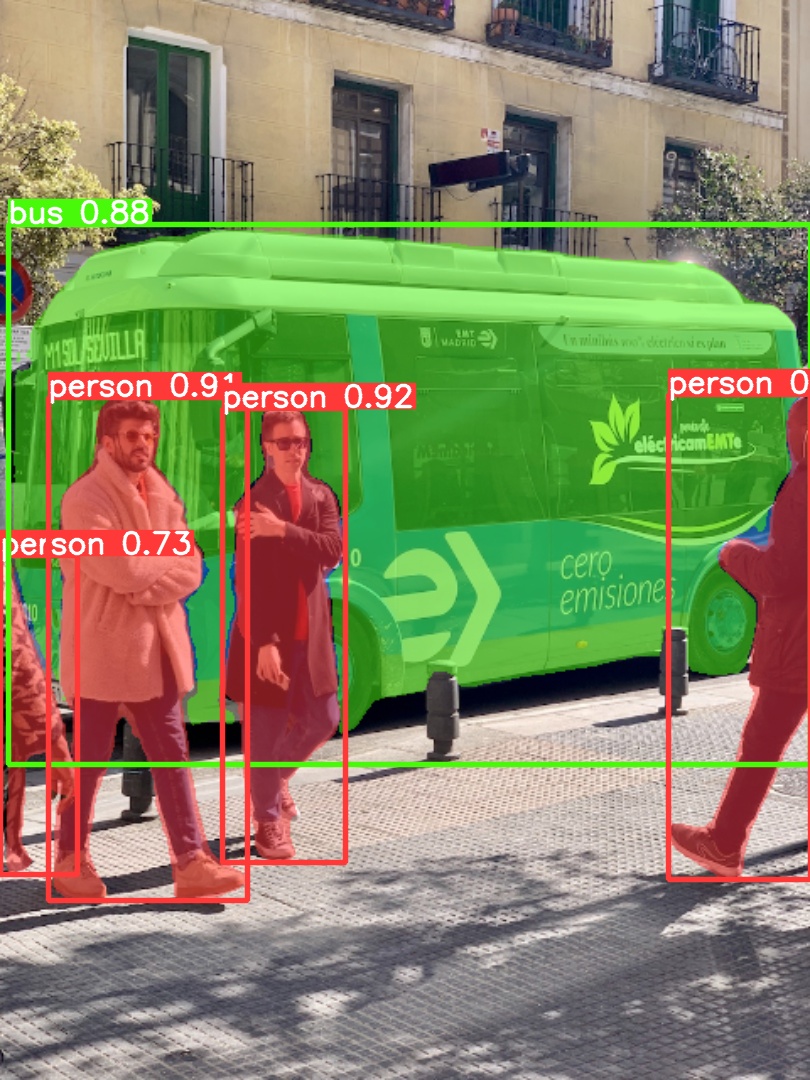

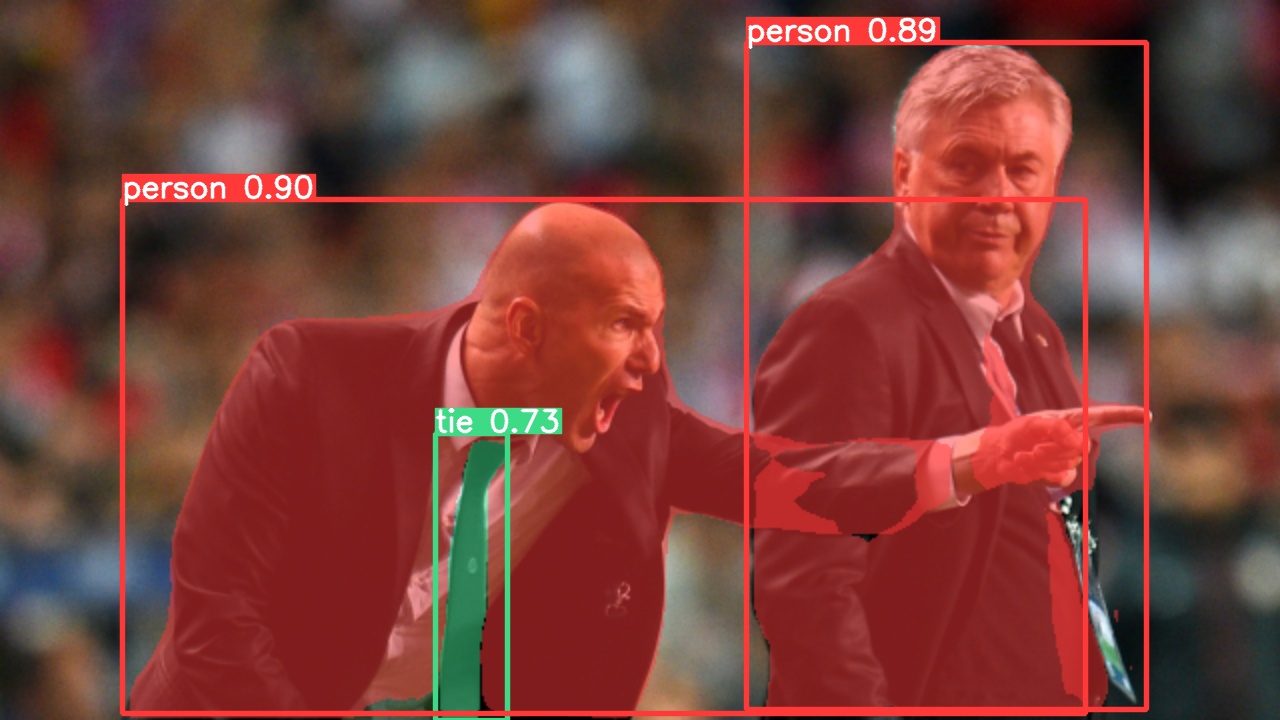

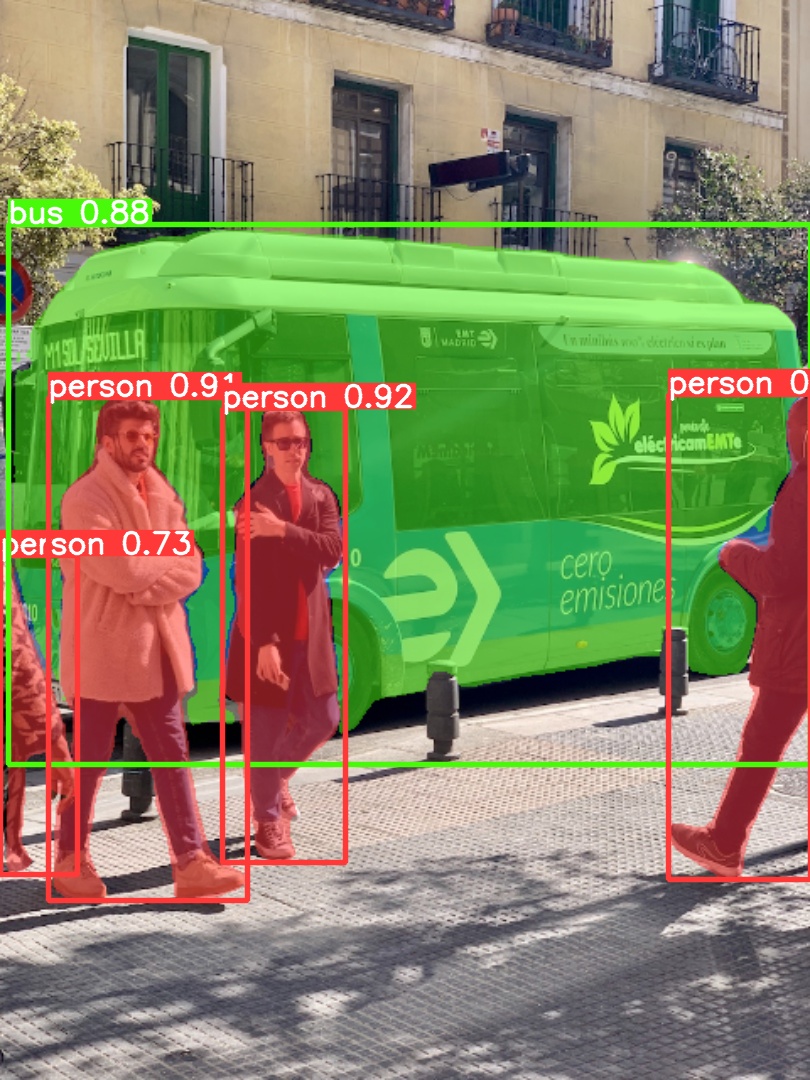

- yolov5/data/images/bus.jpg +0 -0

- yolov5/data/images/zidane.jpg +0 -0

- yolov5/data/scripts/download_weights.sh +22 -0

yolov5/.dockerignore

ADDED

@@ -0,0 +1,222 @@
+# Repo-specific DockerIgnore -------------------------------------------------------------------------------------------
+.git
+.cache
+.idea
+runs
+output
+coco
+storage.googleapis.com
+
+data/samples/*
+**/results*.csv
+*.jpg
+
+# Neural Network weights -----------------------------------------------------------------------------------------------
+**/*.pt
+**/*.pth
+**/*.onnx
+**/*.engine
+**/*.mlmodel
+**/*.torchscript
+**/*.torchscript.pt
+**/*.tflite
+**/*.h5
+**/*.pb
+*_saved_model/
+*_web_model/
+*_openvino_model/
+
+# Below Copied From .gitignore -----------------------------------------------------------------------------------------
+# Below Copied From .gitignore -----------------------------------------------------------------------------------------
+
+
+# GitHub Python GitIgnore ----------------------------------------------------------------------------------------------
+# Byte-compiled / optimized / DLL files
+__pycache__/
+*.py[cod]
+*$py.class
+
+# C extensions
+*.so
+
+# Distribution / packaging
+.Python
+env/
+build/
+develop-eggs/
+dist/
+downloads/
+eggs/
+.eggs/
+lib/
+lib64/
+parts/
+sdist/
+var/
+wheels/
+*.egg-info/
+wandb/
+.installed.cfg
+*.egg
+
+# PyInstaller
+# Usually these files are written by a python script from a template
+# before PyInstaller builds the exe, so as to inject date/other infos into it.
+*.manifest
+*.spec
+
+# Installer logs
+pip-log.txt
+pip-delete-this-directory.txt
+
+# Unit test / coverage reports
+htmlcov/
+.tox/
+.coverage
+.coverage.*
+.cache
+nosetests.xml
+coverage.xml
+*.cover
+.hypothesis/
+
+# Translations
+*.mo
+*.pot
+
+# Django stuff:
+*.log
+local_settings.py
+
+# Flask stuff:
+instance/
+.webassets-cache
+
+# Scrapy stuff:
+.scrapy
+
+# Sphinx documentation
+docs/_build/
+
+# PyBuilder
+target/
+
+# Jupyter Notebook
+.ipynb_checkpoints
+
+# pyenv
+.python-version
+
+# celery beat schedule file
+celerybeat-schedule
+
+# SageMath parsed files
+*.sage.py
+
+# dotenv
+.env
+
+# virtualenv
+.venv*
+venv*/
+ENV*/
+
+# Spyder project settings
+.spyderproject
+.spyproject
+
+# Rope project settings
+.ropeproject
+
+# mkdocs documentation
+/site
+
+# mypy
+.mypy_cache/
+
+
+# https://github.com/github/gitignore/blob/master/Global/macOS.gitignore -----------------------------------------------
+
+# General
+.DS_Store
+.AppleDouble
+.LSOverride
+
+# Icon must end with two \r
+Icon
+Icon?
+
+# Thumbnails
+._*
+
+# Files that might appear in the root of a volume
+.DocumentRevisions-V100
+.fseventsd
+.Spotlight-V100
+.TemporaryItems
+.Trashes
+.VolumeIcon.icns
+.com.apple.timemachine.donotpresent
+
+# Directories potentially created on remote AFP share
+.AppleDB
+.AppleDesktop
+Network Trash Folder
+Temporary Items
+.apdisk
+
+
+# https://github.com/github/gitignore/blob/master/Global/JetBrains.gitignore
+# Covers JetBrains IDEs: IntelliJ, RubyMine, PhpStorm, AppCode, PyCharm, CLion, Android Studio and WebStorm
+# Reference: https://intellij-support.jetbrains.com/hc/en-us/articles/206544839
+
+# User-specific stuff:
+.idea/*
+.idea/**/workspace.xml
+.idea/**/tasks.xml
+.idea/dictionaries
+.html # Bokeh Plots
+.pg # TensorFlow Frozen Graphs
+.avi # videos
+
+# Sensitive or high-churn files:
+.idea/**/dataSources/
+.idea/**/dataSources.ids
+.idea/**/dataSources.local.xml
+.idea/**/sqlDataSources.xml
+.idea/**/dynamic.xml
+.idea/**/uiDesigner.xml
+
+# Gradle:
+.idea/**/gradle.xml
+.idea/**/libraries
+
+# CMake
+cmake-build-debug/
+cmake-build-release/
+
+# Mongo Explorer plugin:
+.idea/**/mongoSettings.xml
+
+## File-based project format:
+*.iws
+
+## Plugin-specific files:
+
+# IntelliJ
+out/
+
+# mpeltonen/sbt-idea plugin
+.idea_modules/
+
+# JIRA plugin
+atlassian-ide-plugin.xml
+
+# Cursive Clojure plugin
+.idea/replstate.xml
+
+# Crashlytics plugin (for Android Studio and IntelliJ)
+com_crashlytics_export_strings.xml
+crashlytics.properties
+crashlytics-build.properties
+fabric.properties
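Reviewer note on the `.dockerignore` patterns above: as far as the Docker documentation describes it, patterns are matched against paths relative to the build-context root, so a bare `*.jpg` only covers files directly in the root, while `**/*.pt` covers any depth. The sketch below is a simplified approximation of that behaviour, not Docker's actual matcher. The helper names `dockerignore_to_regex` and `is_ignored` are hypothetical, and `!` exception rules and comment lines are deliberately not handled.

```python
import re


def dockerignore_to_regex(pattern: str) -> "re.Pattern[str]":
    """Translate one ignore pattern into a regex (simplified approximation)."""
    pattern = pattern.strip().strip("/")  # treat "/site" and "build/" as root-relative names
    out = []
    i = 0
    while i < len(pattern):
        if pattern.startswith("**/", i):
            out.append(r"(?:.*/)?")  # zero or more leading directories
            i += 3
        elif pattern.startswith("**", i):
            out.append(r".*")  # anything, including path separators
            i += 2
        elif pattern[i] == "*":
            out.append(r"[^/]*")  # anything within a single path segment
            i += 1
        elif pattern[i] == "?":
            out.append(r"[^/]")  # exactly one non-separator character
            i += 1
        elif pattern[i] == "[":
            end = pattern.find("]", i + 1)
            if end == -1:
                out.append(re.escape("["))  # unmatched bracket: literal
                i += 1
            else:
                out.append(pattern[i:end + 1])  # copy the character class as-is
                i = end + 1
        else:
            out.append(re.escape(pattern[i]))
            i += 1
    # A match on a directory also excludes everything beneath it.
    return re.compile("^" + "".join(out) + r"(?:/.*)?$")


def is_ignored(path: str, patterns: list) -> bool:
    """Return True if any pattern matches the root-relative path."""
    return any(dockerignore_to_regex(p).match(path) for p in patterns)


# A few patterns taken from the diff above.
patterns = [".git", "runs", "*.jpg", "**/*.pt", "*.py[cod]"]
for candidate in ["bus.jpg", "data/images/bus.jpg", "weights/yolov5n.pt", ".git/config", "train.py"]:
    print(candidate, is_ignored(candidate, patterns))
```

Under these assumptions `data/images/bus.jpg` would not be excluded by `*.jpg`, which is worth checking against a real `docker build` before relying on it.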

yolov5/.gitattributes

ADDED

@@ -0,0 +1,2 @@
+# this drop notebooks from GitHub language stats
+*.ipynb linguist-vendored

yolov5/.github/ISSUE_TEMPLATE/bug-report.yml

ADDED

@@ -0,0 +1,85 @@
+name: 🐛 Bug Report
+# title: " "
+description: Problems with YOLOv5
+labels: [bug, triage]
+body:
+  - type: markdown
+    attributes:
+      value: |
+        Thank you for submitting a YOLOv5 🐛 Bug Report!
+
+  - type: checkboxes
+    attributes:
+      label: Search before asking
+      description: >
+        Please search the [issues](https://github.com/ultralytics/yolov5/issues) to see if a similar bug report already exists.
+      options:
+        - label: >
+            I have searched the YOLOv5 [issues](https://github.com/ultralytics/yolov5/issues) and found no similar bug report.
+          required: true
+
+  - type: dropdown
+    attributes:
+      label: YOLOv5 Component
+      description: |
+        Please select the part of YOLOv5 where you found the bug.
+      multiple: true
+      options:
+        - "Training"
+        - "Validation"
+        - "Detection"
+        - "Export"
+        - "PyTorch Hub"
+        - "Multi-GPU"
+        - "Evolution"
+        - "Integrations"
+        - "Other"
+    validations:
+      required: false
+
+  - type: textarea
+    attributes:
+      label: Bug
+      description: Provide console output with error messages and/or screenshots of the bug.
+      placeholder: |
+        💡 ProTip! Include as much information as possible (screenshots, logs, tracebacks etc.) to receive the most helpful response.
+    validations:
+      required: true
+
+  - type: textarea
+    attributes:
+      label: Environment
+      description: Please specify the software and hardware you used to produce the bug.
+      placeholder: |
+        - YOLO: YOLOv5 🚀 v6.0-67-g60e42e1 torch 1.9.0+cu111 CUDA:0 (A100-SXM4-40GB, 40536MiB)
+        - OS: Ubuntu 20.04
+        - Python: 3.9.0
+    validations:
+      required: false
+
+  - type: textarea
+    attributes:
+      label: Minimal Reproducible Example
+      description: >
+        When asking a question, people will be better able to provide help if you provide code that they can easily understand and use to **reproduce** the problem.
+        This is referred to by community members as creating a [minimal reproducible example](https://docs.ultralytics.com/help/minimum_reproducible_example/).
+      placeholder: |
+        ```
+        # Code to reproduce your issue here
+        ```
+    validations:
+      required: false
+
+  - type: textarea
+    attributes:
+      label: Additional
+      description: Anything else you would like to share?
+
+  - type: checkboxes
+    attributes:
+      label: Are you willing to submit a PR?
+      description: >
+        (Optional) We encourage you to submit a [Pull Request](https://github.com/ultralytics/yolov5/pulls) (PR) to help improve YOLOv5 for everyone, especially if you have a good understanding of how to implement a fix or feature.
+        See the YOLOv5 [Contributing Guide](https://docs.ultralytics.com/help/contributing) to get started.
+      options:
+        - label: Yes I'd like to help by submitting a PR!

yolov5/.github/ISSUE_TEMPLATE/config.yml

ADDED

@@ -0,0 +1,11 @@
+blank_issues_enabled: true
+contact_links:
+  - name: 📄 Docs
+    url: https://docs.ultralytics.com/yolov5
+    about: View Ultralytics YOLOv5 Docs
+  - name: 💬 Forum
+    url: https://community.ultralytics.com/
+    about: Ask on Ultralytics Community Forum
+  - name: 🎧 Discord
+    url: https://discord.gg/2wNGbc6g9X
+    about: Ask on Ultralytics Discord

yolov5/.github/ISSUE_TEMPLATE/feature-request.yml

ADDED

@@ -0,0 +1,50 @@
+name: 🚀 Feature Request
+description: Suggest a YOLOv5 idea
+# title: " "
+labels: [enhancement]
+body:
+  - type: markdown
+    attributes:
+      value: |
+        Thank you for submitting a YOLOv5 🚀 Feature Request!
+
+  - type: checkboxes
+    attributes:
+      label: Search before asking
+      description: >
+        Please search the [issues](https://github.com/ultralytics/yolov5/issues) to see if a similar feature request already exists.
+      options:
+        - label: >
+            I have searched the YOLOv5 [issues](https://github.com/ultralytics/yolov5/issues) and found no similar feature requests.
+          required: true
+
+  - type: textarea
+    attributes:
+      label: Description
+      description: A short description of your feature.
+      placeholder: |
+        What new feature would you like to see in YOLOv5?
+    validations:
+      required: true
+
+  - type: textarea
+    attributes:
+      label: Use case
+      description: |
+        Describe the use case of your feature request. It will help us understand and prioritize the feature request.
+      placeholder: |
+        How would this feature be used, and who would use it?
+
+  - type: textarea
+    attributes:
+      label: Additional
+      description: Anything else you would like to share?
+
+  - type: checkboxes
+    attributes:
+      label: Are you willing to submit a PR?
+      description: >
+        (Optional) We encourage you to submit a [Pull Request](https://github.com/ultralytics/yolov5/pulls) (PR) to help improve YOLOv5 for everyone, especially if you have a good understanding of how to implement a fix or feature.
+        See the YOLOv5 [Contributing Guide](https://docs.ultralytics.com/help/contributing) to get started.
+      options:
+        - label: Yes I'd like to help by submitting a PR!

yolov5/.github/ISSUE_TEMPLATE/question.yml

ADDED

@@ -0,0 +1,33 @@
+name: ❓ Question
+description: Ask a YOLOv5 question
+# title: " "
+labels: [question]
+body:
+  - type: markdown
+    attributes:
+      value: |
+        Thank you for asking a YOLOv5 ❓ Question!
+
+  - type: checkboxes
+    attributes:
+      label: Search before asking
+      description: >
+        Please search the [issues](https://github.com/ultralytics/yolov5/issues) and [discussions](https://github.com/ultralytics/yolov5/discussions) to see if a similar question already exists.
+      options:
+        - label: >
+            I have searched the YOLOv5 [issues](https://github.com/ultralytics/yolov5/issues) and [discussions](https://github.com/ultralytics/yolov5/discussions) and found no similar questions.
+          required: true
+
+  - type: textarea
+    attributes:
+      label: Question
+      description: What is your question?
+      placeholder: |
+        💡 ProTip! Include as much information as possible (screenshots, logs, tracebacks etc.) to receive the most helpful response.
+    validations:
+      required: true
+
+  - type: textarea
+    attributes:
+      label: Additional
+      description: Anything else you would like to share?

yolov5/.github/PULL_REQUEST_TEMPLATE.md

ADDED

@@ -0,0 +1,13 @@
+<!--
+Thank you for submitting a YOLOv5 🚀 Pull Request! We want to make contributing to YOLOv5 as easy and transparent as possible. A few tips to get you started:
+
+- Search existing YOLOv5 [PRs](https://github.com/ultralytics/yolov5/pull) to see if a similar PR already exists.
+- Link this PR to a YOLOv5 [issue](https://github.com/ultralytics/yolov5/issues) to help us understand what bug fix or feature is being implemented.
+- Provide before and after profiling/inference/training results to help us quantify the improvement your PR provides (if applicable).
+
+Please see our ✅ [Contributing Guide](https://docs.ultralytics.com/help/contributing) for more details.
+
+Note that Copilot will summarize this PR below, do not modify the 'copilot:all' line.
+-->
+
+copilot:all

yolov5/.github/dependabot.yml

ADDED

@@ -0,0 +1,23 @@
+version: 2
+updates:
+  - package-ecosystem: pip
+    directory: "/"
+    schedule:
+      interval: weekly
+      time: "04:00"
+    open-pull-requests-limit: 10
+    reviewers:
+      - glenn-jocher
+    labels:
+      - dependencies
+
+  - package-ecosystem: github-actions
+    directory: "/"
+    schedule:
+      interval: weekly
+      time: "04:00"
+    open-pull-requests-limit: 5
+    reviewers:
+      - glenn-jocher
+    labels:
+      - dependencies

yolov5/.github/workflows/ci-testing.yml

ADDED

|

@@ -0,0 +1,164 @@

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

| 1 |

+

# YOLOv5 🚀 by Ultralytics, AGPL-3.0 license

|

| 2 |

+

# YOLOv5 Continuous Integration (CI) GitHub Actions tests

|

| 3 |

+

|

| 4 |

+

name: YOLOv5 CI

|

| 5 |

+

|

| 6 |

+

on:

|

| 7 |

+

push:

|

| 8 |

+

branches: [ master ]

|

| 9 |

+

pull_request:

|

| 10 |

+

branches: [ master ]

|

| 11 |

+

schedule:

|

| 12 |

+

- cron: '0 0 * * *' # runs at 00:00 UTC every day

|

| 13 |

+

|

| 14 |

+

jobs:

|

| 15 |

+

Benchmarks:

|

| 16 |

+

runs-on: ${{ matrix.os }}

|

| 17 |

+

strategy:

|

| 18 |

+

fail-fast: false

|

| 19 |

+

matrix:

|

| 20 |

+

os: [ ubuntu-latest ]

|

| 21 |

+

python-version: [ '3.10' ] # requires python<=3.10

|

| 22 |

+

model: [ yolov5n ]

|

| 23 |

+

steps:

|

| 24 |

+

- uses: actions/checkout@v3

|

| 25 |

+

- uses: actions/setup-python@v4

|

| 26 |

+

with:

|

| 27 |

+

python-version: ${{ matrix.python-version }}

|

| 28 |

+

cache: 'pip' # caching pip dependencies

|

| 29 |

+

- name: Install requirements

|

| 30 |

+

run: |

|

| 31 |

+

python -m pip install --upgrade pip wheel

|

| 32 |

+

pip install -r requirements.txt coremltools openvino-dev tensorflow-cpu --extra-index-url https://download.pytorch.org/whl/cpu

|

| 33 |

+

python --version

|

| 34 |

+

pip --version

|

| 35 |

+

pip list

|

| 36 |

+

- name: Benchmark DetectionModel

|

| 37 |

+

run: |

|

| 38 |

+

python benchmarks.py --data coco128.yaml --weights ${{ matrix.model }}.pt --img 320 --hard-fail 0.29

|

| 39 |

+

- name: Benchmark SegmentationModel

|

| 40 |

+

run: |

|

| 41 |

+

python benchmarks.py --data coco128-seg.yaml --weights ${{ matrix.model }}-seg.pt --img 320 --hard-fail 0.22

|

| 42 |

+

- name: Test predictions

|

| 43 |

+

run: |

|

| 44 |

+

python export.py --weights ${{ matrix.model }}-cls.pt --include onnx --img 224

|

| 45 |

+

python detect.py --weights ${{ matrix.model }}.onnx --img 320

|

| 46 |

+

python segment/predict.py --weights ${{ matrix.model }}-seg.onnx --img 320

|

| 47 |

+

python classify/predict.py --weights ${{ matrix.model }}-cls.onnx --img 224

|

| 48 |

+

|

| 49 |

+

Tests:

|

| 50 |

+

timeout-minutes: 60

|

| 51 |

+

runs-on: ${{ matrix.os }}

|

| 52 |

+

strategy:

|

| 53 |

+

fail-fast: false

|

| 54 |

+

matrix:

|

| 55 |

+

os: [ ubuntu-latest, windows-latest ] # macos-latest bug https://github.com/ultralytics/yolov5/pull/9049

|

| 56 |

+

python-version: [ '3.10' ]

|

| 57 |

+

model: [ yolov5n ]

|

| 58 |

+

include:

|

| 59 |

+

- os: ubuntu-latest

|

| 60 |

+

python-version: '3.8' # '3.6.8' min

|

| 61 |

+

model: yolov5n

|

| 62 |

+

- os: ubuntu-latest

|

| 63 |

+

python-version: '3.9'

|

| 64 |

+

model: yolov5n

|

| 65 |

+

- os: ubuntu-latest

|

| 66 |

+

python-version: '3.8' # torch 1.7.0 requires python >=3.6, <=3.8

|

| 67 |

+

model: yolov5n

|

| 68 |

+

torch: '1.7.0' # min torch version CI https://pypi.org/project/torchvision/

|

| 69 |

+

steps:

|

| 70 |

+

- uses: actions/checkout@v3

|

| 71 |

+

- uses: actions/setup-python@v4

|

| 72 |

+

with:

|

| 73 |

+

python-version: ${{ matrix.python-version }}

|

| 74 |

+

cache: 'pip' # caching pip dependencies

|

| 75 |

+

- name: Install requirements

|

| 76 |

+

run: |

|

| 77 |

+

python -m pip install --upgrade pip wheel

|

| 78 |

+

if [ "${{ matrix.torch }}" == "1.7.0" ]; then

|

| 79 |

+

pip install -r requirements.txt torch==1.7.0 torchvision==0.8.1 --extra-index-url https://download.pytorch.org/whl/cpu

|

| 80 |

+

else

|

| 81 |

+

pip install -r requirements.txt --extra-index-url https://download.pytorch.org/whl/cpu

|

| 82 |

+

fi

|

| 83 |

+

shell: bash # for Windows compatibility

|

| 84 |

+

- name: Check environment

|

| 85 |

+

run: |

|

| 86 |

+

python -c "import utils; utils.notebook_init()"

|

| 87 |

+

echo "RUNNER_OS is ${{ runner.os }}"

|

| 88 |

+

echo "GITHUB_EVENT_NAME is ${{ github.event_name }}"

|

| 89 |

+

echo "GITHUB_WORKFLOW is ${{ github.workflow }}"

|

| 90 |

+

echo "GITHUB_ACTOR is ${{ github.actor }}"

|

| 91 |

+

echo "GITHUB_REPOSITORY is ${{ github.repository }}"

|

| 92 |

+

echo "GITHUB_REPOSITORY_OWNER is ${{ github.repository_owner }}"

|

| 93 |

+

python --version

|

| 94 |

+

pip --version

|

| 95 |

+

pip list

|

| 96 |

+

- name: Test detection

|

| 97 |

+

shell: bash # for Windows compatibility

|

| 98 |

+

run: |

|

| 99 |

+

# export PYTHONPATH="$PWD" # to run '$ python *.py' files in subdirectories

|

| 100 |

+

m=${{ matrix.model }} # official weights

|

| 101 |

+

b=runs/train/exp/weights/best # best.pt checkpoint

|

| 102 |

+

python train.py --imgsz 64 --batch 32 --weights $m.pt --cfg $m.yaml --epochs 1 --device cpu # train

|

| 103 |

+

for d in cpu; do # devices

|

| 104 |

+

for w in $m $b; do # weights

|

| 105 |

+

python val.py --imgsz 64 --batch 32 --weights $w.pt --device $d # val

|

| 106 |

+

python detect.py --imgsz 64 --weights $w.pt --device $d # detect

|

| 107 |

+

done

|

| 108 |

+

done

|

| 109 |

+

python hubconf.py --model $m # hub

|

| 110 |

+

# python models/tf.py --weights $m.pt # build TF model

|

| 111 |

+

python models/yolo.py --cfg $m.yaml # build PyTorch model

|

| 112 |

+

python export.py --weights $m.pt --img 64 --include torchscript # export

|

| 113 |

+

python - <<EOF

|

| 114 |

+

import torch

|

| 115 |

+

im = torch.zeros([1, 3, 64, 64])

|

| 116 |

+

for path in '$m', '$b':

|

| 117 |

+

model = torch.hub.load('.', 'custom', path=path, source='local')

|

| 118 |

+

print(model('data/images/bus.jpg'))

|

| 119 |

+

model(im) # warmup, build grids for trace

|

| 120 |

+

torch.jit.trace(model, [im])

|

| 121 |

+

EOF

|

| 122 |

+

- name: Test segmentation

|

| 123 |

+

shell: bash # for Windows compatibility

|

| 124 |

+

run: |

|

| 125 |

+

m=${{ matrix.model }}-seg # official weights

|

| 126 |

+

b=runs/train-seg/exp/weights/best # best.pt checkpoint

|

| 127 |

+

python segment/train.py --imgsz 64 --batch 32 --weights $m.pt --cfg $m.yaml --epochs 1 --device cpu # train

|

| 128 |

+

python segment/train.py --imgsz 64 --batch 32 --weights '' --cfg $m.yaml --epochs 1 --device cpu # train

|

| 129 |

+

for d in cpu; do # devices

|

| 130 |

+

for w in $m $b; do # weights

|

| 131 |

+

python segment/val.py --imgsz 64 --batch 32 --weights $w.pt --device $d # val

|

| 132 |

+

python segment/predict.py --imgsz 64 --weights $w.pt --device $d # predict

|

| 133 |

+

python export.py --weights $w.pt --img 64 --include torchscript --device $d # export

|

| 134 |

+

done

|

| 135 |

+

done

|

| 136 |

+

- name: Test classification

|

| 137 |

+

shell: bash # for Windows compatibility

|

| 138 |

+

run: |

|

| 139 |

+

m=${{ matrix.model }}-cls.pt # official weights

|

| 140 |

+

b=runs/train-cls/exp/weights/best.pt # best.pt checkpoint

|

| 141 |

+

python classify/train.py --imgsz 32 --model $m --data mnist160 --epochs 1 # train

|

| 142 |

+

python classify/val.py --imgsz 32 --weights $b --data ../datasets/mnist160 # val

|

| 143 |

+

python classify/predict.py --imgsz 32 --weights $b --source ../datasets/mnist160/test/7/60.png # predict

|

| 144 |

+

python classify/predict.py --imgsz 32 --weights $m --source data/images/bus.jpg # predict

|

| 145 |

+

python export.py --weights $b --img 64 --include torchscript # export

|

| 146 |

+

python - <<EOF

|

| 147 |

+

import torch

|

| 148 |

+

for path in '$m', '$b':

|

| 149 |

+

    model = torch.hub.load('.', 'custom', path=path, source='local')

|

| 150 |

+

EOF

|

| 151 |

+

|

| 152 |

+

Summary:

|

| 153 |

+

runs-on: ubuntu-latest

|

| 154 |

+

needs: [Benchmarks, Tests] # Add job names that you want to check for failure

|

| 155 |

+

if: always() # This ensures the job runs even if previous jobs fail

|

| 156 |

+

steps:

|

| 157 |

+

- name: Check for failure and notify

|

| 158 |

+

if: (needs.Benchmarks.result == 'failure' || needs.Tests.result == 'failure' || needs.Benchmarks.result == 'cancelled' || needs.Tests.result == 'cancelled') && github.repository == 'ultralytics/yolov5' && (github.event_name == 'schedule' || github.event_name == 'push')

|

| 159 |

+

uses: slackapi/slack-github-action@v1.24.0

|

| 160 |

+

with:

|

| 161 |

+

payload: |

|

| 162 |

+

{"text": "<!channel> GitHub Actions error for ${{ github.workflow }} ❌\n\n\n*Repository:* https://github.com/${{ github.repository }}\n*Action:* https://github.com/${{ github.repository }}/actions/runs/${{ github.run_id }}\n*Author:* ${{ github.actor }}\n*Event:* ${{ github.event_name }}\n"}

|

| 163 |

+

env:

|

| 164 |

+

SLACK_WEBHOOK_URL: ${{ secrets.SLACK_WEBHOOK_URL_YOLO }}

|
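The `if:` guard on the "Check for failure and notify" step combines three conditions, which can be easier to review when spelled out as ordinary code. The following is a minimal Python sketch of that condition and is not part of the workflow; the function name `should_notify` and its parameter names are hypothetical, chosen only for illustration.

```python
def should_notify(benchmarks_result: str, tests_result: str,
                  repository: str, event_name: str) -> bool:
    """Mirror the `if:` expression of the notify step (illustrative only)."""
    bad = {"failure", "cancelled"}
    # Either upstream job failed or was cancelled
    upstream_failed = benchmarks_result in bad or tests_result in bad
    # Only the main repository notifies, so forks stay silent
    is_main_repo = repository == "ultralytics/yolov5"
    # Only scheduled runs and pushes notify, not pull requests
    is_tracked_event = event_name in {"schedule", "push"}
    return upstream_failed and is_main_repo and is_tracked_event


print(should_notify("failure", "success", "ultralytics/yolov5", "push"))
```

Because the `Summary` job itself runs under `if: always()`, this step-level guard is what actually decides whether a Slack message is sent.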

yolov5/.github/workflows/codeql-analysis.yml

ADDED

|

@@ -0,0 +1,55 @@

|

| 1 |

+

# This action runs GitHub's industry-leading static analysis engine, CodeQL, against a repository's source code to find security vulnerabilities.

|

| 2 |

+

# https://github.com/github/codeql-action

|

| 3 |

+

|

| 4 |

+

name: "CodeQL"

|

| 5 |

+

|

| 6 |

+

on:

|

| 7 |

+

schedule:

|

| 8 |

+

- cron: '0 0 1 * *' # Runs at 00:00 UTC on the 1st of every month

|

| 9 |

+

workflow_dispatch:

|

| 10 |

+

|

| 11 |

+

jobs:

|

| 12 |

+

analyze:

|

| 13 |

+

name: Analyze

|

| 14 |

+

runs-on: ubuntu-latest

|

| 15 |

+

|

| 16 |

+

strategy:

|

| 17 |

+

fail-fast: false

|

| 18 |

+

matrix:

|

| 19 |

+

language: ['python']

|

| 20 |

+

# CodeQL supports [ 'cpp', 'csharp', 'go', 'java', 'javascript', 'python' ]

|

| 21 |

+

# Learn more:

|

| 22 |

+

# https://docs.github.com/en/free-pro-team@latest/github/finding-security-vulnerabilities-and-errors-in-your-code/configuring-code-scanning#changing-the-languages-that-are-analyzed

|

| 23 |

+

|

| 24 |

+

steps:

|

| 25 |

+

- name: Checkout repository

|

| 26 |

+

uses: actions/checkout@v3

|

| 27 |

+

|

| 28 |

+

# Initializes the CodeQL tools for scanning.

|

| 29 |

+

- name: Initialize CodeQL

|

| 30 |

+

uses: github/codeql-action/init@v2

|

| 31 |

+

with:

|

| 32 |

+

languages: ${{ matrix.language }}

|

| 33 |

+

# If you wish to specify custom queries, you can do so here or in a config file.

|

| 34 |

+

# By default, queries listed here will override any specified in a config file.

|

| 35 |

+

# Prefix the list here with "+" to use these queries and those in the config file.

|

| 36 |

+

# queries: ./path/to/local/query, your-org/your-repo/queries@main

|

| 37 |

+

|

| 38 |

+

# Autobuild attempts to build any compiled languages (C/C++, C#, or Java).

|

| 39 |

+

# If this step fails, then you should remove it and run the build manually (see below)

|

| 40 |

+

- name: Autobuild

|

| 41 |

+

uses: github/codeql-action/autobuild@v2

|

| 42 |

+

|

| 43 |

+

# ℹ️ Command-line programs to run using the OS shell.

|

| 44 |

+

# 📚 https://git.io/JvXDl

|

| 45 |

+

|

| 46 |

+

# ✏️ If the Autobuild fails above, remove it and uncomment the following three lines

|

| 47 |

+

# and modify them (or add more) to build your code if your project

|

| 48 |

+

# uses a compiled language

|

| 49 |

+

|

| 50 |

+

#- run: |

|

| 51 |

+

# make bootstrap

|

| 52 |

+

# make release

|

| 53 |

+

|

| 54 |

+

- name: Perform CodeQL Analysis

|

| 55 |

+

uses: github/codeql-action/analyze@v2

|
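The cron expression `'0 0 1 * *'` in this workflow fires at 00:00 UTC on the 1st of each month. As a rough illustration of what that schedule means, the sketch below computes the next nominal trigger time from a given moment. The helper name `next_monthly_run` is hypothetical, it expects a timezone-aware `datetime`, and actual GitHub scheduled runs may start later than the nominal time.

```python
from datetime import datetime, timezone


def next_monthly_run(now: datetime) -> datetime:
    """Return the next 00:00 UTC on the 1st of a month strictly after `now`."""
    now = now.astimezone(timezone.utc)  # `now` is assumed to be timezone-aware
    if now.month == 12:
        year, month = now.year + 1, 1  # roll over into January
    else:
        year, month = now.year, now.month + 1
    return datetime(year, month, 1, tzinfo=timezone.utc)


print(next_monthly_run(datetime(2023, 6, 15, 12, 0, tzinfo=timezone.utc)))
```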

yolov5/.github/workflows/docker.yml

ADDED

|

@@ -0,0 +1,58 @@

|

| 1 |

+

# YOLOv5 🚀 by Ultralytics, AGPL-3.0 license

|

| 2 |

+

# Builds ultralytics/yolov5:latest images on DockerHub https://hub.docker.com/r/ultralytics/yolov5

|

| 3 |

+

|

| 4 |

+

name: Publish Docker Images

|

| 5 |

+

|

| 6 |

+

on:

|

| 7 |

+

push:

|

| 8 |

+

branches: [ master ]

|

| 9 |

+

workflow_dispatch:

|

| 10 |

+

|

| 11 |

+

jobs:

|

| 12 |

+

docker:

|

| 13 |

+

if: github.repository == 'ultralytics/yolov5'

|

| 14 |

+

name: Push Docker image to Docker Hub

|

| 15 |

+

runs-on: ubuntu-latest

|

| 16 |

+

steps:

|

| 17 |

+

- name: Checkout repo

|

| 18 |

+

uses: actions/checkout@v3

|

| 19 |

+

|

| 20 |

+

- name: Set up QEMU

|

| 21 |

+

uses: docker/setup-qemu-action@v2

|

| 22 |

+

|

| 23 |

+

- name: Set up Docker Buildx

|

| 24 |

+

uses: docker/setup-buildx-action@v2

|

| 25 |

+

|

| 26 |

+

- name: Login to Docker Hub

|

| 27 |

+

uses: docker/login-action@v2

|

| 28 |

+

with:

|

| 29 |

+

username: ${{ secrets.DOCKERHUB_USERNAME }}

|

| 30 |

+

password: ${{ secrets.DOCKERHUB_TOKEN }}

|

| 31 |

+

|

| 32 |

+

- name: Build and push arm64 image

|

| 33 |

+

uses: docker/build-push-action@v4

|

| 34 |

+

continue-on-error: true

|

| 35 |

+

with:

|

| 36 |

+

context: .

|

| 37 |

+

platforms: linux/arm64

|

| 38 |

+

file: utils/docker/Dockerfile-arm64

|

| 39 |

+

push: true

|

| 40 |

+

tags: ultralytics/yolov5:latest-arm64

|

| 41 |

+

|

| 42 |

+

- name: Build and push CPU image

|

| 43 |

+

uses: docker/build-push-action@v4

|

| 44 |

+

continue-on-error: true

|

| 45 |

+

with:

|

| 46 |

+

context: .

|

| 47 |

+

file: utils/docker/Dockerfile-cpu

|

| 48 |

+

push: true

|

| 49 |

+

tags: ultralytics/yolov5:latest-cpu

|

| 50 |

+

|

| 51 |

+

- name: Build and push GPU image

|

| 52 |

+

uses: docker/build-push-action@v4

|

| 53 |

+

continue-on-error: true

|

| 54 |

+

with:

|

| 55 |

+

context: .

|

| 56 |

+

file: utils/docker/Dockerfile

|

| 57 |

+

push: true

|

| 58 |

+

tags: ultralytics/yolov5:latest

|
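Each of the three build steps in this workflow sets `continue-on-error: true`, so a failed build of one image does not prevent the remaining images from being attempted. The sketch below models that behaviour in plain Python; it does not invoke Docker. The `run_builds` function and the `build` callback are hypothetical stand-ins, while the Dockerfile paths and tags are copied from the workflow.

```python
from typing import Callable, Dict, List, Tuple

# (Dockerfile path, image tag) pairs taken from the three build steps
BUILDS: List[Tuple[str, str]] = [
    ("utils/docker/Dockerfile-arm64", "ultralytics/yolov5:latest-arm64"),
    ("utils/docker/Dockerfile-cpu", "ultralytics/yolov5:latest-cpu"),
    ("utils/docker/Dockerfile", "ultralytics/yolov5:latest"),
]


def run_builds(build: Callable[[str, str], None]) -> Dict[str, bool]:
    """Attempt every build and record failures instead of stopping early."""
    results: Dict[str, bool] = {}
    for dockerfile, tag in BUILDS:
        try:
            build(dockerfile, tag)  # hypothetical callback standing in for a build step
            results[tag] = True
        except Exception:  # analogous to continue-on-error: true
            results[tag] = False
    return results


def demo_build(dockerfile: str, tag: str) -> None:
    """Pretend the CPU image fails to build, for demonstration."""
    if tag.endswith("-cpu"):
        raise RuntimeError("simulated build failure")


print(run_builds(demo_build))
```

One consequence of this design is that the job as a whole can finish successfully even when an individual image was not pushed, so the per-step results are what need checking.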

yolov5/.github/workflows/greetings.yml

ADDED

|

@@ -0,0 +1,65 @@

|

| 1 |

+

# YOLOv5 🚀 by Ultralytics, AGPL-3.0 license

|

| 2 |

+

|

| 3 |

+

name: Greetings

|

| 4 |

+

|

| 5 |

+

on:

|

| 6 |

+

pull_request_target:

|

| 7 |

+

types: [opened]

|

| 8 |

+

issues:

|

| 9 |

+

types: [opened]

|

| 10 |

+

|

| 11 |

+

jobs:

|

| 12 |

+

greeting:

|

| 13 |

+

runs-on: ubuntu-latest

|

| 14 |

+

steps:

|

| 15 |

+

- uses: actions/first-interaction@v1

|

| 16 |

+

with:

|

| 17 |

+

repo-token: ${{ secrets.GITHUB_TOKEN }}

|

| 18 |

+

pr-message: |

|

| 19 |

+

👋 Hello @${{ github.actor }}, thank you for submitting a YOLOv5 🚀 PR! To allow your work to be integrated as seamlessly as possible, we advise you to:

|

| 20 |

+

|

| 21 |

+

- ✅ Verify your PR is **up-to-date** with `ultralytics/yolov5` `master` branch. If your PR is behind you can update your code by clicking the 'Update branch' button or by running `git pull` and `git merge master` locally.

|

| 22 |

+

- ✅ Verify all YOLOv5 Continuous Integration (CI) **checks are passing**.

|

| 23 |

+

- ✅ Reduce changes to the absolute **minimum** required for your bug fix or feature addition. _"It is not daily increase but daily decrease, hack away the unessential. The closer to the source, the less wastage there is."_ — Bruce Lee

|

| 24 |

+

|

| 25 |

+

issue-message: |

|

| 26 |

+

👋 Hello @${{ github.actor }}, thank you for your interest in YOLOv5 🚀! Please visit our ⭐️ [Tutorials](https://docs.ultralytics.com/yolov5/) to get started, where you can find quickstart guides for simple tasks like [Custom Data Training](https://docs.ultralytics.com/yolov5/tutorials/train_custom_data/) all the way to advanced concepts like [Hyperparameter Evolution](https://docs.ultralytics.com/yolov5/tutorials/hyperparameter_evolution/).

|

| 27 |

+

|

| 28 |

+

If this is a 🐛 Bug Report, please provide a **minimum reproducible example** to help us debug it.

|

| 29 |

+

|

| 30 |

+

If this is a custom training ❓ Question, please provide as much information as possible, including dataset image examples and training logs, and verify you are following our [Tips for Best Training Results](https://docs.ultralytics.com/yolov5/tutorials/tips_for_best_training_results/).

|

| 31 |

+

|

| 32 |

+

## Requirements

|

| 33 |

+

|

| 34 |

+

[**Python>=3.7.0**](https://www.python.org/) with all [requirements.txt](https://github.com/ultralytics/yolov5/blob/master/requirements.txt) installed including [**PyTorch>=1.7**](https://pytorch.org/get-started/locally/). To get started:

|

| 35 |

+

```bash

|

| 36 |

+

git clone https://github.com/ultralytics/yolov5 # clone

|

| 37 |

+

cd yolov5

|

| 38 |

+

pip install -r requirements.txt # install

|

| 39 |

+

```

|

| 40 |

+

|

| 41 |

+

## Environments

|

| 42 |

+

|

| 43 |

+

YOLOv5 may be run in any of the following up-to-date verified environments (with all dependencies including [CUDA](https://developer.nvidia.com/cuda)/[CUDNN](https://developer.nvidia.com/cudnn), [Python](https://www.python.org/) and [PyTorch](https://pytorch.org/) preinstalled):

|

| 44 |

+

|

| 45 |

+

- **Notebooks** with free GPU: <a href="https://bit.ly/yolov5-paperspace-notebook"><img src="https://assets.paperspace.io/img/gradient-badge.svg" alt="Run on Gradient"></a> <a href="https://colab.research.google.com/github/ultralytics/yolov5/blob/master/tutorial.ipynb"><img src="https://colab.research.google.com/assets/colab-badge.svg" alt="Open In Colab"></a> <a href="https://www.kaggle.com/ultralytics/yolov5"><img src="https://kaggle.com/static/images/open-in-kaggle.svg" alt="Open In Kaggle"></a>

|

| 46 |

+

- **Google Cloud** Deep Learning VM. See [GCP Quickstart Guide](https://docs.ultralytics.com/yolov5/environments/google_cloud_quickstart_tutorial/)

|

| 47 |

+

- **Amazon** Deep Learning AMI. See [AWS Quickstart Guide](https://docs.ultralytics.com/yolov5/environments/aws_quickstart_tutorial/)

|

| 48 |

+

- **Docker Image**. See [Docker Quickstart Guide](https://docs.ultralytics.com/yolov5/environments/docker_image_quickstart_tutorial/) <a href="https://hub.docker.com/r/ultralytics/yolov5"><img src="https://img.shields.io/docker/pulls/ultralytics/yolov5?logo=docker" alt="Docker Pulls"></a>

|

| 49 |

+

|

| 50 |

+

## Status

|

| 51 |

+

|

| 52 |

+

<a href="https://github.com/ultralytics/yolov5/actions/workflows/ci-testing.yml"><img src="https://github.com/ultralytics/yolov5/actions/workflows/ci-testing.yml/badge.svg" alt="YOLOv5 CI"></a>

|

| 53 |

+

|

| 54 |

+

If this badge is green, all [YOLOv5 GitHub Actions](https://github.com/ultralytics/yolov5/actions) Continuous Integration (CI) tests are currently passing. CI tests verify correct operation of YOLOv5 [training](https://github.com/ultralytics/yolov5/blob/master/train.py), [validation](https://github.com/ultralytics/yolov5/blob/master/val.py), [inference](https://github.com/ultralytics/yolov5/blob/master/detect.py), [export](https://github.com/ultralytics/yolov5/blob/master/export.py) and [benchmarks](https://github.com/ultralytics/yolov5/blob/master/benchmarks.py) on macOS, Windows, and Ubuntu every 24 hours and on every commit.

|

| 55 |

+

|

| 56 |

+

## Introducing YOLOv8 🚀

|

| 57 |

+

|

| 58 |

+

We're excited to announce the launch of our latest state-of-the-art (SOTA) object detection model for 2023 - [YOLOv8](https://github.com/ultralytics/ultralytics) 🚀!

|

| 59 |

+

|

| 60 |

+

Designed to be fast, accurate, and easy to use, YOLOv8 is an ideal choice for a wide range of object detection, image segmentation and image classification tasks. With YOLOv8, you'll be able to quickly and accurately detect objects in real-time, streamline your workflows, and achieve new levels of accuracy in your projects.

|

| 61 |

+

|

| 62 |

+

Check out our [YOLOv8 Docs](https://docs.ultralytics.com/) for details and get started with:

|

| 63 |

+

```bash

|

| 64 |

+

pip install ultralytics

|

| 65 |

+

```

|

yolov5/.github/workflows/links.yml

ADDED

|

@@ -0,0 +1,40 @@

|

| 1 |

+

# Ultralytics YOLO 🚀, AGPL-3.0 license

|

| 2 |

+

# YOLO Continuous Integration (CI) GitHub Actions tests broken link checker

|

| 3 |

+

# Accept 429(Instagram, 'too many requests'), 999(LinkedIn, 'unknown status code'), Timeout(Twitter)

|

| 4 |