Spaces:

Sleeping

Sleeping

Upload 97 files

Browse filesThis view is limited to 50 files because it contains too many changes.

See raw diff

- LICENSE +203 -0

- Pose_Anything_Teaser.png +0 -0

- README.md +145 -13

- app.py +320 -0

- configs/1shot-swin/base_split1_config.py +190 -0

- configs/1shot-swin/base_split2_config.py +190 -0

- configs/1shot-swin/base_split3_config.py +190 -0

- configs/1shot-swin/base_split4_config.py +190 -0

- configs/1shot-swin/base_split5_config.py +190 -0

- configs/1shot-swin/graph_split1_config.py +191 -0

- configs/1shot-swin/graph_split2_config.py +191 -0

- configs/1shot-swin/graph_split3_config.py +191 -0

- configs/1shot-swin/graph_split4_config.py +191 -0

- configs/1shot-swin/graph_split5_config.py +191 -0

- configs/1shots/base_split1_config.py +190 -0

- configs/1shots/base_split2_config.py +190 -0

- configs/1shots/base_split3_config.py +190 -0

- configs/1shots/base_split4_config.py +190 -0

- configs/1shots/base_split5_config.py +190 -0

- configs/1shots/graph_split1_config.py +191 -0

- configs/1shots/graph_split2_config.py +191 -0

- configs/1shots/graph_split3_config.py +191 -0

- configs/1shots/graph_split4_config.py +191 -0

- configs/1shots/graph_split5_config.py +191 -0

- configs/5shot-swin/base_split1_config.py +190 -0

- configs/5shot-swin/base_split2_config.py +190 -0

- configs/5shot-swin/base_split3_config.py +190 -0

- configs/5shot-swin/base_split4_config.py +190 -0

- configs/5shot-swin/base_split5_config.py +190 -0

- configs/5shot-swin/graph_split1_config.py +191 -0

- configs/5shot-swin/graph_split2_config.py +191 -0

- configs/5shot-swin/graph_split3_config.py +191 -0

- configs/5shot-swin/graph_split4_config.py +191 -0

- configs/5shot-swin/graph_split5_config.py +191 -0

- configs/5shots/base_split1_config.py +190 -0

- configs/5shots/base_split2_config.py +190 -0

- configs/5shots/base_split3_config.py +190 -0

- configs/5shots/base_split4_config.py +190 -0

- configs/5shots/base_split5_config.py +190 -0

- configs/5shots/graph_split1_config.py +191 -0

- configs/5shots/graph_split2_config.py +191 -0

- configs/5shots/graph_split3_config.py +191 -0

- configs/5shots/graph_split4_config.py +191 -0

- configs/5shots/graph_split5_config.py +191 -0

- configs/demo.py +194 -0

- configs/demo_b.py +191 -0

- demo.py +289 -0

- docker/Dockerfile +50 -0

- gradio_teaser.png +0 -0

- models/VERSION +1 -0

LICENSE

ADDED

|

@@ -0,0 +1,203 @@

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

| 1 |

+

Copyright (c) 2022 SenseTime. All Rights Reserved.

|

| 2 |

+

|

| 3 |

+

Apache License

|

| 4 |

+

Version 2.0, January 2004

|

| 5 |

+

http://www.apache.org/licenses/

|

| 6 |

+

|

| 7 |

+

TERMS AND CONDITIONS FOR USE, REPRODUCTION, AND DISTRIBUTION

|

| 8 |

+

|

| 9 |

+

1. Definitions.

|

| 10 |

+

|

| 11 |

+

"License" shall mean the terms and conditions for use, reproduction,

|

| 12 |

+

and distribution as defined by Sections 1 through 9 of this document.

|

| 13 |

+

|

| 14 |

+

"Licensor" shall mean the copyright owner or entity authorized by

|

| 15 |

+

the copyright owner that is granting the License.

|

| 16 |

+

|

| 17 |

+

"Legal Entity" shall mean the union of the acting entity and all

|

| 18 |

+

other entities that control, are controlled by, or are under common

|

| 19 |

+

control with that entity. For the purposes of this definition,

|

| 20 |

+

"control" means (i) the power, direct or indirect, to cause the

|

| 21 |

+

direction or management of such entity, whether by contract or

|

| 22 |

+

otherwise, or (ii) ownership of fifty percent (50%) or more of the

|

| 23 |

+

outstanding shares, or (iii) beneficial ownership of such entity.

|

| 24 |

+

|

| 25 |

+

"You" (or "Your") shall mean an individual or Legal Entity

|

| 26 |

+

exercising permissions granted by this License.

|

| 27 |

+

|

| 28 |

+

"Source" form shall mean the preferred form for making modifications,

|

| 29 |

+

including but not limited to software source code, documentation

|

| 30 |

+

source, and configuration files.

|

| 31 |

+

|

| 32 |

+

"Object" form shall mean any form resulting from mechanical

|

| 33 |

+

transformation or translation of a Source form, including but

|

| 34 |

+

not limited to compiled object code, generated documentation,

|

| 35 |

+

and conversions to other media types.

|

| 36 |

+

|

| 37 |

+

"Work" shall mean the work of authorship, whether in Source or

|

| 38 |

+

Object form, made available under the License, as indicated by a

|

| 39 |

+

copyright notice that is included in or attached to the work

|

| 40 |

+

(an example is provided in the Appendix below).

|

| 41 |

+

|

| 42 |

+

"Derivative Works" shall mean any work, whether in Source or Object

|

| 43 |

+

form, that is based on (or derived from) the Work and for which the

|

| 44 |

+

editorial revisions, annotations, elaborations, or other modifications

|

| 45 |

+

represent, as a whole, an original work of authorship. For the purposes

|

| 46 |

+

of this License, Derivative Works shall not include works that remain

|

| 47 |

+

separable from, or merely link (or bind by name) to the interfaces of,

|

| 48 |

+

the Work and Derivative Works thereof.

|

| 49 |

+

|

| 50 |

+

"Contribution" shall mean any work of authorship, including

|

| 51 |

+

the original version of the Work and any modifications or additions

|

| 52 |

+

to that Work or Derivative Works thereof, that is intentionally

|

| 53 |

+

submitted to Licensor for inclusion in the Work by the copyright owner

|

| 54 |

+

or by an individual or Legal Entity authorized to submit on behalf of

|

| 55 |

+

the copyright owner. For the purposes of this definition, "submitted"

|

| 56 |

+

means any form of electronic, verbal, or written communication sent

|

| 57 |

+

to the Licensor or its representatives, including but not limited to

|

| 58 |

+

communication on electronic mailing lists, source code control systems,

|

| 59 |

+

and issue tracking systems that are managed by, or on behalf of, the

|

| 60 |

+

Licensor for the purpose of discussing and improving the Work, but

|

| 61 |

+

excluding communication that is conspicuously marked or otherwise

|

| 62 |

+

designated in writing by the copyright owner as "Not a Contribution."

|

| 63 |

+

|

| 64 |

+

"Contributor" shall mean Licensor and any individual or Legal Entity

|

| 65 |

+

on behalf of whom a Contribution has been received by Licensor and

|

| 66 |

+

subsequently incorporated within the Work.

|

| 67 |

+

|

| 68 |

+

2. Grant of Copyright License. Subject to the terms and conditions of

|

| 69 |

+

this License, each Contributor hereby grants to You a perpetual,

|

| 70 |

+

worldwide, non-exclusive, no-charge, royalty-free, irrevocable

|

| 71 |

+

copyright license to reproduce, prepare Derivative Works of,

|

| 72 |

+

publicly display, publicly perform, sublicense, and distribute the

|

| 73 |

+

Work and such Derivative Works in Source or Object form.

|

| 74 |

+

|

| 75 |

+

3. Grant of Patent License. Subject to the terms and conditions of

|

| 76 |

+

this License, each Contributor hereby grants to You a perpetual,

|

| 77 |

+

worldwide, non-exclusive, no-charge, royalty-free, irrevocable

|

| 78 |

+

(except as stated in this section) patent license to make, have made,

|

| 79 |

+

use, offer to sell, sell, import, and otherwise transfer the Work,

|

| 80 |

+

where such license applies only to those patent claims licensable

|

| 81 |

+

by such Contributor that are necessarily infringed by their

|

| 82 |

+

Contribution(s) alone or by combination of their Contribution(s)

|

| 83 |

+

with the Work to which such Contribution(s) was submitted. If You

|

| 84 |

+

institute patent litigation against any entity (including a

|

| 85 |

+

cross-claim or counterclaim in a lawsuit) alleging that the Work

|

| 86 |

+

or a Contribution incorporated within the Work constitutes direct

|

| 87 |

+

or contributory patent infringement, then any patent licenses

|

| 88 |

+

granted to You under this License for that Work shall terminate

|

| 89 |

+

as of the date such litigation is filed.

|

| 90 |

+

|

| 91 |

+

4. Redistribution. You may reproduce and distribute copies of the

|

| 92 |

+

Work or Derivative Works thereof in any medium, with or without

|

| 93 |

+

modifications, and in Source or Object form, provided that You

|

| 94 |

+

meet the following conditions:

|

| 95 |

+

|

| 96 |

+

(a) You must give any other recipients of the Work or

|

| 97 |

+

Derivative Works a copy of this License; and

|

| 98 |

+

|

| 99 |

+

(b) You must cause any modified files to carry prominent notices

|

| 100 |

+

stating that You changed the files; and

|

| 101 |

+

|

| 102 |

+

(c) You must retain, in the Source form of any Derivative Works

|

| 103 |

+

that You distribute, all copyright, patent, trademark, and

|

| 104 |

+

attribution notices from the Source form of the Work,

|

| 105 |

+

excluding those notices that do not pertain to any part of

|

| 106 |

+

the Derivative Works; and

|

| 107 |

+

|

| 108 |

+

(d) If the Work includes a "NOTICE" text file as part of its

|

| 109 |

+

distribution, then any Derivative Works that You distribute must

|

| 110 |

+

include a readable copy of the attribution notices contained

|

| 111 |

+

within such NOTICE file, excluding those notices that do not

|

| 112 |

+

pertain to any part of the Derivative Works, in at least one

|

| 113 |

+

of the following places: within a NOTICE text file distributed

|

| 114 |

+

as part of the Derivative Works; within the Source form or

|

| 115 |

+

documentation, if provided along with the Derivative Works; or,

|

| 116 |

+

within a display generated by the Derivative Works, if and

|

| 117 |

+

wherever such third-party notices normally appear. The contents

|

| 118 |

+

of the NOTICE file are for informational purposes only and

|

| 119 |

+

do not modify the License. You may add Your own attribution

|

| 120 |

+

notices within Derivative Works that You distribute, alongside

|

| 121 |

+

or as an addendum to the NOTICE text from the Work, provided

|

| 122 |

+

that such additional attribution notices cannot be construed

|

| 123 |

+

as modifying the License.

|

| 124 |

+

|

| 125 |

+

You may add Your own copyright statement to Your modifications and

|

| 126 |

+

may provide additional or different license terms and conditions

|

| 127 |

+

for use, reproduction, or distribution of Your modifications, or

|

| 128 |

+

for any such Derivative Works as a whole, provided Your use,

|

| 129 |

+

reproduction, and distribution of the Work otherwise complies with

|

| 130 |

+

the conditions stated in this License.

|

| 131 |

+

|

| 132 |

+

5. Submission of Contributions. Unless You explicitly state otherwise,

|

| 133 |

+

any Contribution intentionally submitted for inclusion in the Work

|

| 134 |

+

by You to the Licensor shall be under the terms and conditions of

|

| 135 |

+

this License, without any additional terms or conditions.

|

| 136 |

+

Notwithstanding the above, nothing herein shall supersede or modify

|

| 137 |

+

the terms of any separate license agreement you may have executed

|

| 138 |

+

with Licensor regarding such Contributions.

|

| 139 |

+

|

| 140 |

+

6. Trademarks. This License does not grant permission to use the trade

|

| 141 |

+

names, trademarks, service marks, or product names of the Licensor,

|

| 142 |

+

except as required for reasonable and customary use in describing the

|

| 143 |

+

origin of the Work and reproducing the content of the NOTICE file.

|

| 144 |

+

|

| 145 |

+

7. Disclaimer of Warranty. Unless required by applicable law or

|

| 146 |

+

agreed to in writing, Licensor provides the Work (and each

|

| 147 |

+

Contributor provides its Contributions) on an "AS IS" BASIS,

|

| 148 |

+

WITHOUT WARRANTIES OR CONDITIONS OF ANY KIND, either express or

|

| 149 |

+

implied, including, without limitation, any warranties or conditions

|

| 150 |

+

of TITLE, NON-INFRINGEMENT, MERCHANTABILITY, or FITNESS FOR A

|

| 151 |

+

PARTICULAR PURPOSE. You are solely responsible for determining the

|

| 152 |

+

appropriateness of using or redistributing the Work and assume any

|

| 153 |

+

risks associated with Your exercise of permissions under this License.

|

| 154 |

+

|

| 155 |

+

8. Limitation of Liability. In no event and under no legal theory,

|

| 156 |

+

whether in tort (including negligence), contract, or otherwise,

|

| 157 |

+

unless required by applicable law (such as deliberate and grossly

|

| 158 |

+

negligent acts) or agreed to in writing, shall any Contributor be

|

| 159 |

+

liable to You for damages, including any direct, indirect, special,

|

| 160 |

+

incidental, or consequential damages of any character arising as a

|

| 161 |

+

result of this License or out of the use or inability to use the

|

| 162 |

+

Work (including but not limited to damages for loss of goodwill,

|

| 163 |

+

work stoppage, computer failure or malfunction, or any and all

|

| 164 |

+

other commercial damages or losses), even if such Contributor

|

| 165 |

+

has been advised of the possibility of such damages.

|

| 166 |

+

|

| 167 |

+

9. Accepting Warranty or Additional Liability. While redistributing

|

| 168 |

+

the Work or Derivative Works thereof, You may choose to offer,

|

| 169 |

+

and charge a fee for, acceptance of support, warranty, indemnity,

|

| 170 |

+

or other liability obligations and/or rights consistent with this

|

| 171 |

+

License. However, in accepting such obligations, You may act only

|

| 172 |

+

on Your own behalf and on Your sole responsibility, not on behalf

|

| 173 |

+

of any other Contributor, and only if You agree to indemnify,

|

| 174 |

+

defend, and hold each Contributor harmless for any liability

|

| 175 |

+

incurred by, or claims asserted against, such Contributor by reason

|

| 176 |

+

of your accepting any such warranty or additional liability.

|

| 177 |

+

|

| 178 |

+

END OF TERMS AND CONDITIONS

|

| 179 |

+

|

| 180 |

+

APPENDIX: How to apply the Apache License to your work.

|

| 181 |

+

|

| 182 |

+

To apply the Apache License to your work, attach the following

|

| 183 |

+

boilerplate notice, with the fields enclosed by brackets "[]"

|

| 184 |

+

replaced with your own identifying information. (Don't include

|

| 185 |

+

the brackets!) The text should be enclosed in the appropriate

|

| 186 |

+

comment syntax for the file format. We also recommend that a

|

| 187 |

+

file or class name and description of purpose be included on the

|

| 188 |

+

same "printed page" as the copyright notice for easier

|

| 189 |

+

identification within third-party archives.

|

| 190 |

+

|

| 191 |

+

Copyright 2020 MMClassification Authors.

|

| 192 |

+

|

| 193 |

+

Licensed under the Apache License, Version 2.0 (the "License");

|

| 194 |

+

you may not use this file except in compliance with the License.

|

| 195 |

+

You may obtain a copy of the License at

|

| 196 |

+

|

| 197 |

+

http://www.apache.org/licenses/LICENSE-2.0

|

| 198 |

+

|

| 199 |

+

Unless required by applicable law or agreed to in writing, software

|

| 200 |

+

distributed under the License is distributed on an "AS IS" BASIS,

|

| 201 |

+

WITHOUT WARRANTIES OR CONDITIONS OF ANY KIND, either express or implied.

|

| 202 |

+

See the License for the specific language governing permissions and

|

| 203 |

+

limitations under the License.

|

Pose_Anything_Teaser.png

ADDED

|

README.md

CHANGED

|

@@ -1,13 +1,145 @@

|

|

| 1 |

-

|

| 2 |

-

|

| 3 |

-

|

| 4 |

-

|

| 5 |

-

|

| 6 |

-

|

| 7 |

-

|

| 8 |

-

|

| 9 |

-

|

| 10 |

-

|

| 11 |

-

|

| 12 |

-

|

| 13 |

-

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

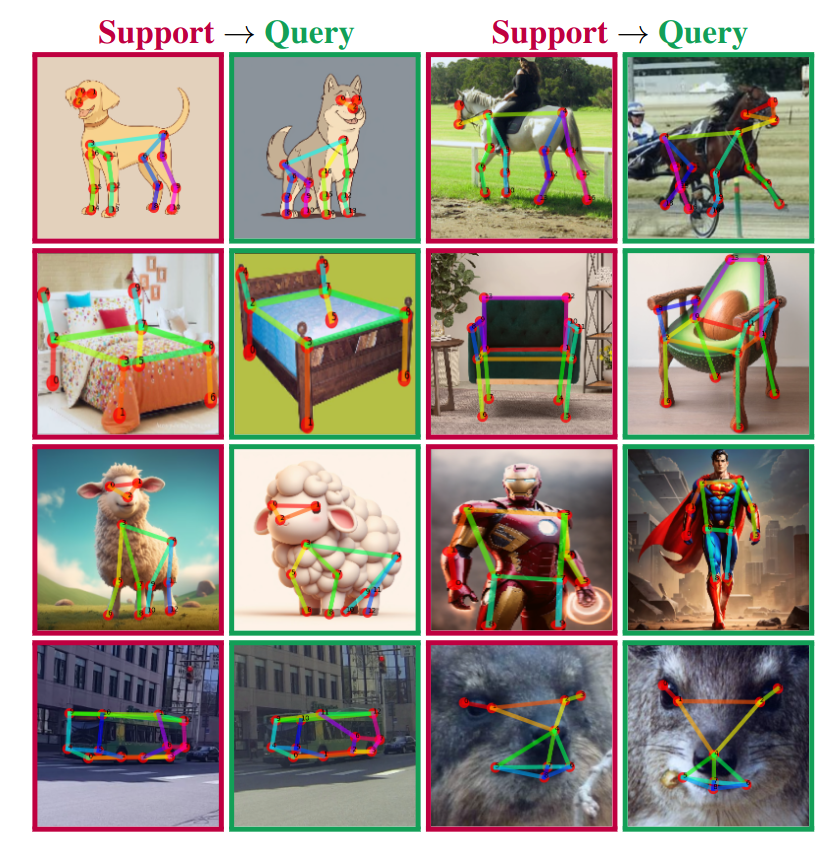

| 1 |

+

# Pose Anything: A Graph-Based Approach for Category-Agnostic Pose Estimation

|

| 2 |

+

<a href="https://orhir.github.io/pose-anything/"><img src="https://img.shields.io/static/v1?label=Project&message=Website&color=blue"></a>

|

| 3 |

+

<a href="https://arxiv.org/abs/2311.17891"><img src="https://img.shields.io/badge/arXiv-2311.17891-b31b1b.svg"></a>

|

| 4 |

+

<a href="https://www.apache.org/licenses/LICENSE-2.0.txt"><img src="https://img.shields.io/badge/License-Apache-yellow"></a>

|

| 5 |

+

[](https://paperswithcode.com/sota/2d-pose-estimation-on-mp-100?p=pose-anything-a-graph-based-approach-for)

|

| 6 |

+

|

| 7 |

+

By [Or Hirschorn](https://scholar.google.co.il/citations?user=GgFuT_QAAAAJ&hl=iw&oi=ao) and [Shai Avidan](https://scholar.google.co.il/citations?hl=iw&user=hpItE1QAAAAJ)

|

| 8 |

+

|

| 9 |

+

This repo is the official implementation of "[Pose Anything: A Graph-Based Approach for Category-Agnostic Pose Estimation](https://arxiv.org/pdf/2311.17891.pdf)".

|

| 10 |

+

<p align="center">

|

| 11 |

+

<img src="Pose_Anything_Teaser.png" width="384">

|

| 12 |

+

</p>

|

| 13 |

+

|

| 14 |

+

## Introduction

|

| 15 |

+

|

| 16 |

+

We present a novel approach to CAPE that leverages the inherent geometrical relations between keypoints through a newly designed Graph Transformer Decoder. By capturing and incorporating this crucial structural information, our method enhances the accuracy of keypoint localization, marking a significant departure from conventional CAPE techniques that treat keypoints as isolated entities.

|

| 17 |

+

|

| 18 |

+

## Citation

|

| 19 |

+

If you find this useful, please cite this work as follows:

|

| 20 |

+

```bibtex

|

| 21 |

+

@misc{hirschorn2023pose,

|

| 22 |

+

title={Pose Anything: A Graph-Based Approach for Category-Agnostic Pose Estimation},

|

| 23 |

+

author={Or Hirschorn and Shai Avidan},

|

| 24 |

+

year={2023},

|

| 25 |

+

eprint={2311.17891},

|

| 26 |

+

archivePrefix={arXiv},

|

| 27 |

+

primaryClass={cs.CV}

|

| 28 |

+

}

|

| 29 |

+

```

|

| 30 |

+

|

| 31 |

+

## Getting Started

|

| 32 |

+

|

| 33 |

+

### Docker [Recommended]

|

| 34 |

+

We provide a docker image for easy use.

|

| 35 |

+

You can simply pull the docker image from docker hub, containing all the required libraries and packages:

|

| 36 |

+

|

| 37 |

+

```

|

| 38 |

+

docker pull orhir/pose_anything

|

| 39 |

+

docker run --name pose_anything -v {DATA_DIR}:/workspace/PoseAnything/PoseAnything/data/mp100 -it orhir/pose_anything /bin/bash

|

| 40 |

+

```

|

| 41 |

+

### Conda Environment

|

| 42 |

+

We train and evaluate our model on Python 3.8 and Pytorch 2.0.1 with CUDA 12.1.

|

| 43 |

+

|

| 44 |

+

Please first install pytorch and torchvision following official documentation Pytorch.

|

| 45 |

+

Then, follow [MMPose](https://mmpose.readthedocs.io/en/latest/installation.html) to install the following packages:

|

| 46 |

+

```

|

| 47 |

+

mmcv-full=1.6.2

|

| 48 |

+

mmpose=0.29.0

|

| 49 |

+

```

|

| 50 |

+

Having installed these packages, run:

|

| 51 |

+

```

|

| 52 |

+

python setup.py develop

|

| 53 |

+

```

|

| 54 |

+

|

| 55 |

+

## Demo on Custom Images

|

| 56 |

+

We provide a demo code to test our code on custom images.

|

| 57 |

+

|

| 58 |

+

***A bigger and more accurate version of the model - COMING SOON!***

|

| 59 |

+

|

| 60 |

+

### Gradio Demo

|

| 61 |

+

We first require to install gradio:

|

| 62 |

+

```

|

| 63 |

+

pip install gradio==3.44.0

|

| 64 |

+

```

|

| 65 |

+

Then, Download the [pretrained model](https://drive.google.com/file/d/1RT1Q8AMEa1kj6k9ZqrtWIKyuR4Jn4Pqc/view?usp=drive_link) and run:

|

| 66 |

+

```

|

| 67 |

+

python app.py --checkpoint [path_to_pretrained_ckpt]

|

| 68 |

+

```

|

| 69 |

+

### Terminal Demo

|

| 70 |

+

Download

|

| 71 |

+

the [pretrained model](https://drive.google.com/file/d/1RT1Q8AMEa1kj6k9ZqrtWIKyuR4Jn4Pqc/view?usp=drive_link)

|

| 72 |

+

and run:

|

| 73 |

+

|

| 74 |

+

```

|

| 75 |

+

python demo.py --support [path_to_support_image] --query [path_to_query_image] --config configs/demo_b.py --checkpoint [path_to_pretrained_ckpt]

|

| 76 |

+

```

|

| 77 |

+

***Note:*** The demo code supports any config with suitable checkpoint file. More pre-trained models can be found in the evaluation section.

|

| 78 |

+

|

| 79 |

+

|

| 80 |

+

## MP-100 Dataset

|

| 81 |

+

Please follow the [official guide](https://github.com/luminxu/Pose-for-Everything/blob/main/mp100/README.md) to prepare the MP-100 dataset for training and evaluation, and organize the data structure properly.

|

| 82 |

+

|

| 83 |

+

We provide an updated annotation file, which includes skeleton definitions, in the following [link](https://drive.google.com/drive/folders/1uRyGB-P5Tc_6TmAZ6RnOi0SWjGq9b28T?usp=sharing).

|

| 84 |

+

|

| 85 |

+

**Please note:**

|

| 86 |

+

|

| 87 |

+

Current version of the MP-100 dataset includes some discrepancies and filenames errors:

|

| 88 |

+

1. Note that the mentioned DeepFasion dataset is actually DeepFashion2 dataset. The link in the official repo is wrong. Use this [repo](https://github.com/switchablenorms/DeepFashion2/tree/master) instead.

|

| 89 |

+

2. We provide a script to fix CarFusion filename errors, which can be run by:

|

| 90 |

+

```

|

| 91 |

+

python tools/fix_carfusion.py [path_to_CarFusion_dataset] [path_to_mp100_annotation]

|

| 92 |

+

```

|

| 93 |

+

|

| 94 |

+

## Training

|

| 95 |

+

|

| 96 |

+

### Backbone Options

|

| 97 |

+

To use pre-trained Swin-Transformer as used in our paper, we provide the weights, taken from this [repo](https://github.com/microsoft/Swin-Transformer/blob/main/MODELHUB.md), in the following [link](https://drive.google.com/drive/folders/1-q4mSxlNAUwDlevc3Hm5Ij0l_2OGkrcg?usp=sharing).

|

| 98 |

+

These should be placed in the `./pretrained` folder.

|

| 99 |

+

|

| 100 |

+

We also support DINO and ResNet backbones. To use them, you can easily change the config file to use the desired backbone.

|

| 101 |

+

This can be done by changing the `pretrained` field in the config file to `dinov2`, `dino` or `resnet` respectively (this will automatically load the pretrained weights from the official repo).

|

| 102 |

+

|

| 103 |

+

### Training

|

| 104 |

+

To train the model, run:

|

| 105 |

+

```

|

| 106 |

+

python train.py --config [path_to_config_file] --work-dir [path_to_work_dir]

|

| 107 |

+

```

|

| 108 |

+

|

| 109 |

+

## Evaluation and Pretrained Models

|

| 110 |

+

You can download the pretrained checkpoints from following [link](https://drive.google.com/drive/folders/1RmrqzE3g0qYRD5xn54-aXEzrIkdYXpEW?usp=sharing).

|

| 111 |

+

|

| 112 |

+

Here we provide the evaluation results of our pretrained models on MP-100 dataset along with the config files and checkpoints:

|

| 113 |

+

|

| 114 |

+

### 1-Shot Models

|

| 115 |

+

| Setting | split 1 | split 2 | split 3 | split 4 | split 5 |

|

| 116 |

+

|:-------:|:---------------------------------------------------------------------------------------------------------------------------------------------------:|:---------------------------------------------------------------------------------------------------------------------------------------------------:|:---------------------------------------------------------------------------------------------------------------------------------------------------:|:---------------------------------------------------------------------------------------------------------------------------------------------------:|:---------------------------------------------------------------------------------------------------------------------------------------------------:|

|

| 117 |

+

| Tiny | 91.06 | 88024 | 86.09 | 86.17 | 85.78 |

|

| 118 |

+

| | [link](https://drive.google.com/file/d/1GubmkVkqybs-eD4hiRkgBzkUVGE_rIFX/view?usp=drive_link) / [config](configs/1shots/graph_split1_config.py) | [link](https://drive.google.com/file/d/1EEekDF3xV_wJOVk7sCQWUA8ygUKzEm2l/view?usp=drive_link) / [config](configs/1shots/graph_split2_config.py) | [link](https://drive.google.com/file/d/1FuwpNBdPI3mfSovta2fDGKoqJynEXPZQ/view?usp=drive_link) / [config](configs/1shots/graph_split3_config.py) | [link](https://drive.google.com/file/d/1_SSqSANuZlbC0utzIfzvZihAW9clefcR/view?usp=drive_link) / [config](configs/1shots/graph_split4_config.py) | [link](https://drive.google.com/file/d/1nUHr07W5F55u-FKQEPFq_CECgWZOKKLF/view?usp=drive_link) / [config](configs/1shots/graph_split5_config.py) |

|

| 119 |

+

| Small | 93.66 | 90.42 | 89.79 | 88.68 | 89.61 |

|

| 120 |

+

| | [link](https://drive.google.com/file/d/1RT1Q8AMEa1kj6k9ZqrtWIKyuR4Jn4Pqc/view?usp=drive_link) / [config](configs/1shot-swin/graph_split1_config.py) | [link](https://drive.google.com/file/d/1BT5b8MlnkflcdhTFiBROIQR3HccLsPQd/view?usp=drive_link) / [config](configs/1shot-swin/graph_split2_config.py) | [link](https://drive.google.com/file/d/1Z64cw_1CSDGObabSAWKnMK0BA_bqDHxn/view?usp=drive_link) / [config](configs/1shot-swin/graph_split3_config.py) | [link](https://drive.google.com/file/d/1vf82S8LAjIzpuBcbEoDCa26cR8DqNriy/view?usp=drive_link) / [config](configs/1shot-swin/graph_split4_config.py) | [link](https://drive.google.com/file/d/14FNx0JNbkS2CvXQMiuMU_kMZKFGO2rDV/view?usp=drive_link) / [config](configs/1shot-swin/graph_split5_config.py) |

|

| 121 |

+

|

| 122 |

+

### 5-Shot Models

|

| 123 |

+

| Setting | split 1 | split 2 | split 3 | split 4 | split 5 |

|

| 124 |

+

|:-------:|:---------------------------------------------------------------------------------------------------------------------------------------------------:|:---------------------------------------------------------------------------------------------------------------------------------------------------:|:---------------------------------------------------------------------------------------------------------------------------------------------------:|:---------------------------------------------------------------------------------------------------------------------------------------------------:|:---------------------------------------------------------------------------------------------------------------------------------------------------:|

|

| 125 |

+

| Tiny | 94.18 | 91.46 | 90.50 | 90.18 | 89.47 |

|

| 126 |

+

| | [link](https://drive.google.com/file/d/1PeMuwv5YwiF3UCE5oN01Qchu5K3BaQ9L/view?usp=drive_link) / [config](configs/5shots/graph_split1_config.py) | [link](https://drive.google.com/file/d/1enIapPU1D8lZOET7q_qEjnhC1HFy3jWK/view?usp=drive_link) / [config](configs/5shots/graph_split2_config.py) | [link](https://drive.google.com/file/d/1MTeZ9Ba-ucLuqX0KBoLbBD5PaEct7VUp/view?usp=drive_link) / [config](configs/5shots/graph_split3_config.py) | [link](https://drive.google.com/file/d/1U2N7DI2F0v7NTnPCEEAgx-WKeBZNAFoa/view?usp=drive_link) / [config](configs/5shots/graph_split4_config.py) | [link](https://drive.google.com/file/d/1wapJDgtBWtmz61JNY7ktsFyvckRKiR2C/view?usp=drive_link) / [config](configs/5shots/graph_split5_config.py) |

|

| 127 |

+

| Small | 96.51 | 92.15 | 91.99 | 92.01 | 92.36 |

|

| 128 |

+

| | [link](https://drive.google.com/file/d/1p5rnA0MhmndSKEbyXMk49QXvNE03QV2p/view?usp=drive_link) / [config](configs/5shot-swin/graph_split1_config.py) | [link](https://drive.google.com/file/d/1Q3KNyUW_Gp3JytYxUPhkvXFiDYF6Hv8w/view?usp=drive_link) / [config](configs/5shot-swin/graph_split2_config.py) | [link](https://drive.google.com/file/d/1gWgTk720fSdAf_ze1FkfXTW0t7k-69dV/view?usp=drive_link) / [config](configs/5shot-swin/graph_split3_config.py) | [link](https://drive.google.com/file/d/1LuaRQ8a6AUPrkr7l5j2W6Fe_QbgASkwY/view?usp=drive_link) / [config](configs/5shot-swin/graph_split4_config.py) | [link](https://drive.google.com/file/d/1z--MAOPCwMG_GQXru9h2EStbnIvtHv1L/view?usp=drive_link) / [config](configs/5shot-swin/graph_split5_config.py) |

|

| 129 |

+

|

| 130 |

+

### Evaluation

|

| 131 |

+

The evaluation on a single GPU will take approximately 30 min.

|

| 132 |

+

|

| 133 |

+

To evaluate the pretrained model, run:

|

| 134 |

+

```

|

| 135 |

+

python test.py [path_to_config_file] [path_to_pretrained_ckpt]

|

| 136 |

+

```

|

| 137 |

+

## Acknowledgement

|

| 138 |

+

|

| 139 |

+

Our code is based on code from:

|

| 140 |

+

- [MMPose](https://github.com/open-mmlab/mmpose)

|

| 141 |

+

- [CapeFormer](https://github.com/flyinglynx/CapeFormer)

|

| 142 |

+

|

| 143 |

+

|

| 144 |

+

## License

|

| 145 |

+

This project is released under the Apache 2.0 license.

|

app.py

ADDED

|

@@ -0,0 +1,320 @@

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

| 1 |

+

import argparse

|

| 2 |

+

# Copyright (c) OpenMMLab. All rights reserved.

|

| 3 |

+

import os

|

| 4 |

+

import random

|

| 5 |

+

|

| 6 |

+

# os.system('python -m pip install timm')

|

| 7 |

+

# os.system('python -m pip install -U openxlab')

|

| 8 |

+

# os.system('python -m pip install -U pillow')

|

| 9 |

+

# os.system('python -m pip install Openmim')

|

| 10 |

+

# os.system('python -m mim install mmengine')

|

| 11 |

+

os.system('python -m mim install "mmcv-full==1.6.2"')

|

| 12 |

+

os.system('python -m mim install "mmpose==0.29.0"')

|

| 13 |

+

os.system('python -m mim install "gradio==3.44.0"')

|

| 14 |

+

os.system('python setup.py develop')

|

| 15 |

+

|

| 16 |

+

import gradio as gr

|

| 17 |

+

import numpy as np

|

| 18 |

+

import torch

|

| 19 |

+

from PIL import ImageDraw, Image

|

| 20 |

+

from matplotlib import pyplot as plt

|

| 21 |

+

from mmcv import Config

|

| 22 |

+

from mmcv.runner import load_checkpoint

|

| 23 |

+

from mmpose.core import wrap_fp16_model

|

| 24 |

+

from mmpose.models import build_posenet

|

| 25 |

+

from torchvision import transforms

|

| 26 |

+

from demo import Resize_Pad

|

| 27 |

+

from models import *

|

| 28 |

+

import matplotlib

|

| 29 |

+

|

| 30 |

+

matplotlib.use('agg')

|

| 31 |

+

|

| 32 |

+

|

| 33 |

+

def plot_results(support_img, query_img, support_kp, support_w, query_kp,

|

| 34 |

+

query_w, skeleton,

|

| 35 |

+

initial_proposals, prediction, radius=6):

|

| 36 |

+

h, w, c = support_img.shape

|

| 37 |

+

prediction = prediction[-1].cpu().numpy() * h

|

| 38 |

+

query_img = (query_img - np.min(query_img)) / (

|

| 39 |

+

np.max(query_img) - np.min(query_img))

|

| 40 |

+

for id, (img, w, keypoint) in enumerate(zip([query_img],

|

| 41 |

+

[query_w],

|

| 42 |

+

[prediction])):

|

| 43 |

+

f, axes = plt.subplots()

|

| 44 |

+

plt.imshow(img)

|

| 45 |

+

for k in range(keypoint.shape[0]):

|

| 46 |

+

if w[k] > 0:

|

| 47 |

+

kp = keypoint[k, :2]

|

| 48 |

+

c = (1, 0, 0, 0.75) if w[k] == 1 else (0, 0, 1, 0.6)

|

| 49 |

+

patch = plt.Circle(kp, radius, color=c)

|

| 50 |

+

axes.add_patch(patch)

|

| 51 |

+

axes.text(kp[0], kp[1], k)

|

| 52 |

+

plt.draw()

|

| 53 |

+

for l, limb in enumerate(skeleton):

|

| 54 |

+

kp = keypoint[:, :2]

|

| 55 |

+

if l > len(COLORS) - 1:

|

| 56 |

+

c = [x / 255 for x in random.sample(range(0, 255), 3)]

|

| 57 |

+

else:

|

| 58 |

+

c = [x / 255 for x in COLORS[l]]

|

| 59 |

+

if w[limb[0]] > 0 and w[limb[1]] > 0:

|

| 60 |

+

patch = plt.Line2D([kp[limb[0], 0], kp[limb[1], 0]],

|

| 61 |

+

[kp[limb[0], 1], kp[limb[1], 1]],

|

| 62 |

+

linewidth=6, color=c, alpha=0.6)

|

| 63 |

+

axes.add_artist(patch)

|

| 64 |

+

plt.axis('off') # command for hiding the axis.

|

| 65 |

+

plt.subplots_adjust(0, 0, 1, 1, 0, 0)

|

| 66 |

+

return plt

|

| 67 |

+

|

| 68 |

+

|

| 69 |

+

COLORS = [

|

| 70 |

+

[255, 85, 0], [255, 170, 0], [255, 255, 0], [170, 255, 0],

|

| 71 |

+

[85, 255, 0], [0, 255, 0], [0, 255, 85], [0, 255, 170], [0, 255, 255],

|

| 72 |

+

[0, 170, 255], [0, 85, 255], [0, 0, 255], [85, 0, 255], [170, 0, 255],

|

| 73 |

+

[255, 0, 255], [255, 0, 170], [255, 0, 85], [255, 0, 0]

|

| 74 |

+

]

|

| 75 |

+

|

| 76 |

+

kp_src = []

|

| 77 |

+

skeleton = []

|

| 78 |

+

count = 0

|

| 79 |

+

color_idx = 0

|

| 80 |

+

prev_pt = None

|

| 81 |

+

prev_pt_idx = None

|

| 82 |

+

prev_clicked = None

|

| 83 |

+

original_support_image = None

|

| 84 |

+

checkpoint_path = ''

|

| 85 |

+

|

| 86 |

+

def process(query_img,

|

| 87 |

+

cfg_path='configs/demo_b.py'):

|

| 88 |

+

global skeleton

|

| 89 |

+

cfg = Config.fromfile(cfg_path)

|

| 90 |

+

kp_src_np = np.array(kp_src).copy().astype(np.float32)

|

| 91 |

+

kp_src_np[:, 0] = kp_src_np[:, 0] / 128. * cfg.model.encoder_config.img_size

|

| 92 |

+

kp_src_np[:, 1] = kp_src_np[:, 1] / 128. * cfg.model.encoder_config.img_size

|

| 93 |

+

kp_src_np = np.flip(kp_src_np, 1).copy()

|

| 94 |

+

kp_src_tensor = torch.tensor(kp_src_np).float()

|

| 95 |

+

preprocess = transforms.Compose([

|

| 96 |

+

transforms.ToTensor(),

|

| 97 |

+

transforms.Normalize((0.485, 0.456, 0.406), (0.229, 0.224, 0.225)),

|

| 98 |

+

Resize_Pad(cfg.model.encoder_config.img_size,

|

| 99 |

+

cfg.model.encoder_config.img_size)])

|

| 100 |

+

|

| 101 |

+

if len(skeleton) == 0:

|

| 102 |

+

skeleton = [(0, 0)]

|

| 103 |

+

|

| 104 |

+

support_img = preprocess(original_support_image).flip(0)[None]

|

| 105 |

+

np_query = np.array(query_img)[:, :, ::-1].copy()

|

| 106 |

+

q_img = preprocess(np_query).flip(0)[None]

|

| 107 |

+

# Create heatmap from keypoints

|

| 108 |

+

genHeatMap = TopDownGenerateTargetFewShot()

|

| 109 |

+

data_cfg = cfg.data_cfg

|

| 110 |

+

data_cfg['image_size'] = np.array([cfg.model.encoder_config.img_size,

|

| 111 |

+

cfg.model.encoder_config.img_size])

|

| 112 |

+

data_cfg['joint_weights'] = None

|

| 113 |

+

data_cfg['use_different_joint_weights'] = False

|

| 114 |

+

kp_src_3d = torch.concatenate(

|

| 115 |

+

(kp_src_tensor, torch.zeros(kp_src_tensor.shape[0], 1)), dim=-1)

|

| 116 |

+

kp_src_3d_weight = torch.concatenate(

|

| 117 |

+

(torch.ones_like(kp_src_tensor),

|

| 118 |

+

torch.zeros(kp_src_tensor.shape[0], 1)), dim=-1)

|

| 119 |

+

target_s, target_weight_s = genHeatMap._msra_generate_target(data_cfg,

|

| 120 |

+

kp_src_3d,

|

| 121 |

+

kp_src_3d_weight,

|

| 122 |

+

sigma=1)

|

| 123 |

+

target_s = torch.tensor(target_s).float()[None]

|

| 124 |

+

target_weight_s = torch.ones_like(

|

| 125 |

+

torch.tensor(target_weight_s).float()[None])

|

| 126 |

+

|

| 127 |

+

data = {

|

| 128 |

+

'img_s': [support_img],

|

| 129 |

+

'img_q': q_img,

|

| 130 |

+

'target_s': [target_s],

|

| 131 |

+

'target_weight_s': [target_weight_s],

|

| 132 |

+

'target_q': None,

|

| 133 |

+

'target_weight_q': None,

|

| 134 |

+

'return_loss': False,

|

| 135 |

+

'img_metas': [{'sample_skeleton': [skeleton],

|

| 136 |

+

'query_skeleton': skeleton,

|

| 137 |

+

'sample_joints_3d': [kp_src_3d],

|

| 138 |

+

'query_joints_3d': kp_src_3d,

|

| 139 |

+

'sample_center': [kp_src_tensor.mean(dim=0)],

|

| 140 |

+

'query_center': kp_src_tensor.mean(dim=0),

|

| 141 |

+

'sample_scale': [

|

| 142 |

+

kp_src_tensor.max(dim=0)[0] -

|

| 143 |

+

kp_src_tensor.min(dim=0)[0]],

|

| 144 |

+

'query_scale': kp_src_tensor.max(dim=0)[0] -

|

| 145 |

+

kp_src_tensor.min(dim=0)[0],

|

| 146 |

+

'sample_rotation': [0],

|

| 147 |

+

'query_rotation': 0,

|

| 148 |

+

'sample_bbox_score': [1],

|

| 149 |

+

'query_bbox_score': 1,

|

| 150 |

+

'query_image_file': '',

|

| 151 |

+

'sample_image_file': [''],

|

| 152 |

+

}]

|

| 153 |

+

}

|

| 154 |

+

# Load model

|

| 155 |

+

model = build_posenet(cfg.model)

|

| 156 |

+

fp16_cfg = cfg.get('fp16', None)

|

| 157 |

+

if fp16_cfg is not None:

|

| 158 |

+

wrap_fp16_model(model)

|

| 159 |

+

load_checkpoint(model, checkpoint_path, map_location='cpu')

|

| 160 |

+

model.eval()

|

| 161 |

+

with torch.no_grad():

|

| 162 |

+

outputs = model(**data)

|

| 163 |

+

# visualize results

|

| 164 |

+

vis_s_weight = target_weight_s[0]

|

| 165 |

+

vis_q_weight = target_weight_s[0]

|

| 166 |

+

vis_s_image = support_img[0].detach().cpu().numpy().transpose(1, 2, 0)

|

| 167 |

+

vis_q_image = q_img[0].detach().cpu().numpy().transpose(1, 2, 0)

|

| 168 |

+

support_kp = kp_src_3d

|

| 169 |

+

out = plot_results(vis_s_image,

|

| 170 |

+

vis_q_image,

|

| 171 |

+

support_kp,

|

| 172 |

+

vis_s_weight,

|

| 173 |

+

None,

|

| 174 |

+

vis_q_weight,

|

| 175 |

+

skeleton,

|

| 176 |

+

None,

|

| 177 |

+

torch.tensor(outputs['points']).squeeze(0),

|

| 178 |

+

)

|

| 179 |

+

return out

|

| 180 |

+

|

| 181 |

+

|

| 182 |

+

with gr.Blocks() as demo:

|

| 183 |

+

gr.Markdown('''

|

| 184 |

+

# Pose Anything Demo

|

| 185 |

+

We present a novel approach to category agnostic pose estimation that leverages the inherent geometrical relations between keypoints through a newly designed Graph Transformer Decoder. By capturing and incorporating this crucial structural information, our method enhances the accuracy of keypoint localization, marking a significant departure from conventional CAPE techniques that treat keypoints as isolated entities.

|

| 186 |

+

### [Paper](https://arxiv.org/abs/2311.17891) | [Official Repo](https://github.com/orhir/PoseAnything)

|

| 187 |

+

|

| 188 |

+

## Instructions

|

| 189 |

+

1. Upload an image of the object you want to pose on the **left** image.

|

| 190 |

+

2. Click on the **left** image to mark keypoints.

|

| 191 |

+

3. Click on the keypoints on the **right** image to mark limbs.

|

| 192 |

+

4. Upload an image of the object you want to pose to the query image (**bottom**).

|

| 193 |

+

5. Click **Evaluate** to pose the query image.

|

| 194 |

+

''')

|

| 195 |

+

with gr.Row():

|

| 196 |

+

support_img = gr.Image(label="Support Image",

|

| 197 |

+

type="pil",

|

| 198 |

+

info='Click to mark keypoints').style(

|

| 199 |

+

height=256, width=256)

|

| 200 |

+

posed_support = gr.Image(label="Posed Support Image",

|

| 201 |

+

type="pil",

|

| 202 |

+

interactive=False).style(height=256, width=256)

|

| 203 |

+

with gr.Row():

|

| 204 |

+

query_img = gr.Image(label="Query Image",

|

| 205 |

+

type="pil").style(height=256, width=256)

|

| 206 |

+

with gr.Row():

|

| 207 |

+

eval_btn = gr.Button(value="Evaluate")

|

| 208 |

+

with gr.Row():

|

| 209 |

+

output_img = gr.Plot(label="Output Image", height=256, width=256)

|

| 210 |

+

|

| 211 |

+

|

| 212 |

+

def get_select_coords(kp_support,

|

| 213 |

+

limb_support,

|

| 214 |

+

evt: gr.SelectData,

|

| 215 |

+

r=0.015):

|

| 216 |

+

pixels_in_queue = set()

|

| 217 |

+

pixels_in_queue.add((evt.index[1], evt.index[0]))

|

| 218 |

+

while len(pixels_in_queue) > 0:

|

| 219 |

+

pixel = pixels_in_queue.pop()

|

| 220 |

+

if pixel[0] is not None and pixel[

|

| 221 |

+

1] is not None and pixel not in kp_src:

|

| 222 |

+

kp_src.append(pixel)

|

| 223 |

+

else:

|

| 224 |

+

print("Invalid pixel")

|

| 225 |

+

if limb_support is None:

|

| 226 |

+

canvas_limb = kp_support

|

| 227 |

+

else:

|

| 228 |

+

canvas_limb = limb_support

|

| 229 |

+

canvas_kp = kp_support

|

| 230 |

+

w, h = canvas_kp.size

|

| 231 |

+

draw_pose = ImageDraw.Draw(canvas_kp)

|

| 232 |

+

draw_limb = ImageDraw.Draw(canvas_limb)

|

| 233 |

+

r = int(r * w)

|

| 234 |

+

leftUpPoint = (pixel[1] - r, pixel[0] - r)

|

| 235 |

+

rightDownPoint = (pixel[1] + r, pixel[0] + r)

|

| 236 |

+

twoPointList = [leftUpPoint, rightDownPoint]

|

| 237 |

+

draw_pose.ellipse(twoPointList, fill=(255, 0, 0, 255))

|

| 238 |

+

draw_limb.ellipse(twoPointList, fill=(255, 0, 0, 255))

|

| 239 |

+

|

| 240 |

+

return canvas_kp, canvas_limb

|

| 241 |

+

|

| 242 |

+

|

| 243 |

+

def get_limbs(kp_support,

|

| 244 |

+

evt: gr.SelectData,

|

| 245 |

+

r=0.02, width=0.02):

|

| 246 |

+

global count, color_idx, prev_pt, skeleton, prev_pt_idx, prev_clicked

|

| 247 |

+

curr_pixel = (evt.index[1], evt.index[0])

|

| 248 |

+

pixels_in_queue = set()

|

| 249 |

+

pixels_in_queue.add((evt.index[1], evt.index[0]))

|

| 250 |

+

canvas_kp = kp_support

|

| 251 |

+

w, h = canvas_kp.size

|

| 252 |

+

r = int(r * w)

|

| 253 |

+

width = int(width * w)

|

| 254 |

+

while (len(pixels_in_queue) > 0 and

|

| 255 |

+

curr_pixel != prev_clicked and

|

| 256 |

+

len(kp_src) > 0):

|

| 257 |

+

pixel = pixels_in_queue.pop()

|

| 258 |

+

prev_clicked = pixel

|

| 259 |

+

closest_point = min(kp_src,

|

| 260 |

+

key=lambda p: (p[0] - pixel[0]) ** 2 +

|

| 261 |

+

(p[1] - pixel[1]) ** 2)

|

| 262 |

+

closest_point_index = kp_src.index(closest_point)

|

| 263 |

+

draw_limb = ImageDraw.Draw(canvas_kp)

|

| 264 |

+

if color_idx < len(COLORS):

|

| 265 |

+

c = COLORS[color_idx]

|

| 266 |

+

else:

|

| 267 |

+

c = random.choices(range(256), k=3)

|

| 268 |

+

leftUpPoint = (closest_point[1] - r, closest_point[0] - r)

|

| 269 |

+

rightDownPoint = (closest_point[1] + r, closest_point[0] + r)

|

| 270 |

+

twoPointList = [leftUpPoint, rightDownPoint]

|

| 271 |

+

draw_limb.ellipse(twoPointList, fill=tuple(c))

|

| 272 |

+

if count == 0:

|

| 273 |

+

prev_pt = closest_point[1], closest_point[0]

|

| 274 |

+

prev_pt_idx = closest_point_index

|

| 275 |

+

count = count + 1

|

| 276 |

+

else:

|

| 277 |

+

if prev_pt_idx != closest_point_index:

|

| 278 |

+

# Create Line and add Limb

|

| 279 |

+

draw_limb.line([prev_pt, (closest_point[1], closest_point[0])],

|

| 280 |

+

fill=tuple(c),

|

| 281 |

+

width=width)

|

| 282 |

+

skeleton.append((prev_pt_idx, closest_point_index))

|

| 283 |

+

color_idx = color_idx + 1

|

| 284 |

+

else:

|

| 285 |

+

draw_limb.ellipse(twoPointList, fill=(255, 0, 0, 255))

|

| 286 |

+

count = 0

|

| 287 |

+

return canvas_kp

|

| 288 |

+

|

| 289 |

+

|

| 290 |

+

def set_query(support_img):

|

| 291 |

+

global original_support_image

|

| 292 |

+

skeleton.clear()

|

| 293 |

+

kp_src.clear()

|

| 294 |

+

original_support_image = np.array(support_img)[:, :, ::-1].copy()

|

| 295 |

+

support_img = support_img.resize((128, 128), Image.Resampling.LANCZOS)

|

| 296 |

+

return support_img, support_img

|

| 297 |

+

|

| 298 |

+

|

| 299 |

+

support_img.select(get_select_coords,

|

| 300 |

+

[support_img, posed_support],

|

| 301 |

+

[support_img, posed_support],

|

| 302 |

+

)

|

| 303 |

+

support_img.upload(set_query,

|

| 304 |

+

inputs=support_img,

|

| 305 |

+

outputs=[support_img,posed_support])

|

| 306 |

+

posed_support.select(get_limbs,

|

| 307 |

+

posed_support,

|

| 308 |

+

posed_support)

|

| 309 |

+

eval_btn.click(fn=process,

|

| 310 |

+

inputs=[query_img],

|

| 311 |

+

outputs=output_img)

|

| 312 |

+

|

| 313 |

+

if __name__ == "__main__":

|

| 314 |

+

parser = argparse.ArgumentParser(description='Pose Anything Demo')

|

| 315 |

+

parser.add_argument('--checkpoint',

|

| 316 |

+

help='checkpoint path',

|

| 317 |

+

default='https://huggingface.co/orhir/PoseAnything/blob/main/1shot-swin_graph_split1.pth')

|

| 318 |

+

args = parser.parse_args()

|

| 319 |

+

checkpoint_path = args.checkpoint

|

| 320 |

+

demo.launch()

|

configs/1shot-swin/base_split1_config.py

ADDED

|

@@ -0,0 +1,190 @@

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|