Spaces:

Build error

Build error

Add application file

Browse files- .gitattributes +1 -0

- .gitignore +14 -0

- LICENSE +201 -0

- README.md +111 -11

- app.py +295 -0

- assets/OpenSans-Bold.ttf +0 -0

- assets/basketball.gif +0 -0

- assets/basketball.png +3 -0

- assets/dog.jpg +0 -0

- assets/ram.png +3 -0

- assets/ram_logo.png +3 -0

- assets/soccer.png +3 -0

- environment.yml +16 -0

- ram_train_eval.py +417 -0

- segment_anything/__init__.py +15 -0

- segment_anything/automatic_mask_generator.py +374 -0

- segment_anything/build_sam.py +107 -0

- segment_anything/modeling/__init__.py +11 -0

- segment_anything/modeling/common.py +43 -0

- segment_anything/modeling/image_encoder.py +395 -0

- segment_anything/modeling/mask_decoder.py +177 -0

- segment_anything/modeling/prompt_encoder.py +214 -0

- segment_anything/modeling/sam.py +175 -0

- segment_anything/modeling/transformer.py +240 -0

- segment_anything/predictor.py +269 -0

- segment_anything/utils/__init__.py +5 -0

- segment_anything/utils/amg.py +346 -0

- segment_anything/utils/onnx.py +144 -0

- segment_anything/utils/transforms.py +102 -0

- utils.py +152 -0

.gitattributes

CHANGED

|

@@ -32,3 +32,4 @@ saved_model/**/* filter=lfs diff=lfs merge=lfs -text

|

|

| 32 |

*.zip filter=lfs diff=lfs merge=lfs -text

|

| 33 |

*.zst filter=lfs diff=lfs merge=lfs -text

|

| 34 |

*tfevents* filter=lfs diff=lfs merge=lfs -text

|

|

|

|

|

|

| 32 |

*.zip filter=lfs diff=lfs merge=lfs -text

|

| 33 |

*.zst filter=lfs diff=lfs merge=lfs -text

|

| 34 |

*tfevents* filter=lfs diff=lfs merge=lfs -text

|

| 35 |

+

*.png filter=lfs diff=lfs merge=lfs -text

|

.gitignore

ADDED

|

@@ -0,0 +1,14 @@

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

| 1 |

+

*.h

|

| 2 |

+

*.cpp

|

| 3 |

+

*.o

|

| 4 |

+

*.so

|

| 5 |

+

*.pyc

|

| 6 |

+

*.pth

|

| 7 |

+

.vscode/*

|

| 8 |

+

|

| 9 |

+

.ipynb_checkpoints/

|

| 10 |

+

data

|

| 11 |

+

feats/

|

| 12 |

+

*.npz

|

| 13 |

+

share/

|

| 14 |

+

|

LICENSE

ADDED

|

@@ -0,0 +1,201 @@

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

| 1 |

+

Apache License

|

| 2 |

+

Version 2.0, January 2004

|

| 3 |

+

http://www.apache.org/licenses/

|

| 4 |

+

|

| 5 |

+

TERMS AND CONDITIONS FOR USE, REPRODUCTION, AND DISTRIBUTION

|

| 6 |

+

|

| 7 |

+

1. Definitions.

|

| 8 |

+

|

| 9 |

+

"License" shall mean the terms and conditions for use, reproduction,

|

| 10 |

+

and distribution as defined by Sections 1 through 9 of this document.

|

| 11 |

+

|

| 12 |

+

"Licensor" shall mean the copyright owner or entity authorized by

|

| 13 |

+

the copyright owner that is granting the License.

|

| 14 |

+

|

| 15 |

+

"Legal Entity" shall mean the union of the acting entity and all

|

| 16 |

+

other entities that control, are controlled by, or are under common

|

| 17 |

+

control with that entity. For the purposes of this definition,

|

| 18 |

+

"control" means (i) the power, direct or indirect, to cause the

|

| 19 |

+

direction or management of such entity, whether by contract or

|

| 20 |

+

otherwise, or (ii) ownership of fifty percent (50%) or more of the

|

| 21 |

+

outstanding shares, or (iii) beneficial ownership of such entity.

|

| 22 |

+

|

| 23 |

+

"You" (or "Your") shall mean an individual or Legal Entity

|

| 24 |

+

exercising permissions granted by this License.

|

| 25 |

+

|

| 26 |

+

"Source" form shall mean the preferred form for making modifications,

|

| 27 |

+

including but not limited to software source code, documentation

|

| 28 |

+

source, and configuration files.

|

| 29 |

+

|

| 30 |

+

"Object" form shall mean any form resulting from mechanical

|

| 31 |

+

transformation or translation of a Source form, including but

|

| 32 |

+

not limited to compiled object code, generated documentation,

|

| 33 |

+

and conversions to other media types.

|

| 34 |

+

|

| 35 |

+

"Work" shall mean the work of authorship, whether in Source or

|

| 36 |

+

Object form, made available under the License, as indicated by a

|

| 37 |

+

copyright notice that is included in or attached to the work

|

| 38 |

+

(an example is provided in the Appendix below).

|

| 39 |

+

|

| 40 |

+

"Derivative Works" shall mean any work, whether in Source or Object

|

| 41 |

+

form, that is based on (or derived from) the Work and for which the

|

| 42 |

+

editorial revisions, annotations, elaborations, or other modifications

|

| 43 |

+

represent, as a whole, an original work of authorship. For the purposes

|

| 44 |

+

of this License, Derivative Works shall not include works that remain

|

| 45 |

+

separable from, or merely link (or bind by name) to the interfaces of,

|

| 46 |

+

the Work and Derivative Works thereof.

|

| 47 |

+

|

| 48 |

+

"Contribution" shall mean any work of authorship, including

|

| 49 |

+

the original version of the Work and any modifications or additions

|

| 50 |

+

to that Work or Derivative Works thereof, that is intentionally

|

| 51 |

+

submitted to Licensor for inclusion in the Work by the copyright owner

|

| 52 |

+

or by an individual or Legal Entity authorized to submit on behalf of

|

| 53 |

+

the copyright owner. For the purposes of this definition, "submitted"

|

| 54 |

+

means any form of electronic, verbal, or written communication sent

|

| 55 |

+

to the Licensor or its representatives, including but not limited to

|

| 56 |

+

communication on electronic mailing lists, source code control systems,

|

| 57 |

+

and issue tracking systems that are managed by, or on behalf of, the

|

| 58 |

+

Licensor for the purpose of discussing and improving the Work, but

|

| 59 |

+

excluding communication that is conspicuously marked or otherwise

|

| 60 |

+

designated in writing by the copyright owner as "Not a Contribution."

|

| 61 |

+

|

| 62 |

+

"Contributor" shall mean Licensor and any individual or Legal Entity

|

| 63 |

+

on behalf of whom a Contribution has been received by Licensor and

|

| 64 |

+

subsequently incorporated within the Work.

|

| 65 |

+

|

| 66 |

+

2. Grant of Copyright License. Subject to the terms and conditions of

|

| 67 |

+

this License, each Contributor hereby grants to You a perpetual,

|

| 68 |

+

worldwide, non-exclusive, no-charge, royalty-free, irrevocable

|

| 69 |

+

copyright license to reproduce, prepare Derivative Works of,

|

| 70 |

+

publicly display, publicly perform, sublicense, and distribute the

|

| 71 |

+

Work and such Derivative Works in Source or Object form.

|

| 72 |

+

|

| 73 |

+

3. Grant of Patent License. Subject to the terms and conditions of

|

| 74 |

+

this License, each Contributor hereby grants to You a perpetual,

|

| 75 |

+

worldwide, non-exclusive, no-charge, royalty-free, irrevocable

|

| 76 |

+

(except as stated in this section) patent license to make, have made,

|

| 77 |

+

use, offer to sell, sell, import, and otherwise transfer the Work,

|

| 78 |

+

where such license applies only to those patent claims licensable

|

| 79 |

+

by such Contributor that are necessarily infringed by their

|

| 80 |

+

Contribution(s) alone or by combination of their Contribution(s)

|

| 81 |

+

with the Work to which such Contribution(s) was submitted. If You

|

| 82 |

+

institute patent litigation against any entity (including a

|

| 83 |

+

cross-claim or counterclaim in a lawsuit) alleging that the Work

|

| 84 |

+

or a Contribution incorporated within the Work constitutes direct

|

| 85 |

+

or contributory patent infringement, then any patent licenses

|

| 86 |

+

granted to You under this License for that Work shall terminate

|

| 87 |

+

as of the date such litigation is filed.

|

| 88 |

+

|

| 89 |

+

4. Redistribution. You may reproduce and distribute copies of the

|

| 90 |

+

Work or Derivative Works thereof in any medium, with or without

|

| 91 |

+

modifications, and in Source or Object form, provided that You

|

| 92 |

+

meet the following conditions:

|

| 93 |

+

|

| 94 |

+

(a) You must give any other recipients of the Work or

|

| 95 |

+

Derivative Works a copy of this License; and

|

| 96 |

+

|

| 97 |

+

(b) You must cause any modified files to carry prominent notices

|

| 98 |

+

stating that You changed the files; and

|

| 99 |

+

|

| 100 |

+

(c) You must retain, in the Source form of any Derivative Works

|

| 101 |

+

that You distribute, all copyright, patent, trademark, and

|

| 102 |

+

attribution notices from the Source form of the Work,

|

| 103 |

+

excluding those notices that do not pertain to any part of

|

| 104 |

+

the Derivative Works; and

|

| 105 |

+

|

| 106 |

+

(d) If the Work includes a "NOTICE" text file as part of its

|

| 107 |

+

distribution, then any Derivative Works that You distribute must

|

| 108 |

+

include a readable copy of the attribution notices contained

|

| 109 |

+

within such NOTICE file, excluding those notices that do not

|

| 110 |

+

pertain to any part of the Derivative Works, in at least one

|

| 111 |

+

of the following places: within a NOTICE text file distributed

|

| 112 |

+

as part of the Derivative Works; within the Source form or

|

| 113 |

+

documentation, if provided along with the Derivative Works; or,

|

| 114 |

+

within a display generated by the Derivative Works, if and

|

| 115 |

+

wherever such third-party notices normally appear. The contents

|

| 116 |

+

of the NOTICE file are for informational purposes only and

|

| 117 |

+

do not modify the License. You may add Your own attribution

|

| 118 |

+

notices within Derivative Works that You distribute, alongside

|

| 119 |

+

or as an addendum to the NOTICE text from the Work, provided

|

| 120 |

+

that such additional attribution notices cannot be construed

|

| 121 |

+

as modifying the License.

|

| 122 |

+

|

| 123 |

+

You may add Your own copyright statement to Your modifications and

|

| 124 |

+

may provide additional or different license terms and conditions

|

| 125 |

+

for use, reproduction, or distribution of Your modifications, or

|

| 126 |

+

for any such Derivative Works as a whole, provided Your use,

|

| 127 |

+

reproduction, and distribution of the Work otherwise complies with

|

| 128 |

+

the conditions stated in this License.

|

| 129 |

+

|

| 130 |

+

5. Submission of Contributions. Unless You explicitly state otherwise,

|

| 131 |

+

any Contribution intentionally submitted for inclusion in the Work

|

| 132 |

+

by You to the Licensor shall be under the terms and conditions of

|

| 133 |

+

this License, without any additional terms or conditions.

|

| 134 |

+

Notwithstanding the above, nothing herein shall supersede or modify

|

| 135 |

+

the terms of any separate license agreement you may have executed

|

| 136 |

+

with Licensor regarding such Contributions.

|

| 137 |

+

|

| 138 |

+

6. Trademarks. This License does not grant permission to use the trade

|

| 139 |

+

names, trademarks, service marks, or product names of the Licensor,

|

| 140 |

+

except as required for reasonable and customary use in describing the

|

| 141 |

+

origin of the Work and reproducing the content of the NOTICE file.

|

| 142 |

+

|

| 143 |

+

7. Disclaimer of Warranty. Unless required by applicable law or

|

| 144 |

+

agreed to in writing, Licensor provides the Work (and each

|

| 145 |

+

Contributor provides its Contributions) on an "AS IS" BASIS,

|

| 146 |

+

WITHOUT WARRANTIES OR CONDITIONS OF ANY KIND, either express or

|

| 147 |

+

implied, including, without limitation, any warranties or conditions

|

| 148 |

+

of TITLE, NON-INFRINGEMENT, MERCHANTABILITY, or FITNESS FOR A

|

| 149 |

+

PARTICULAR PURPOSE. You are solely responsible for determining the

|

| 150 |

+

appropriateness of using or redistributing the Work and assume any

|

| 151 |

+

risks associated with Your exercise of permissions under this License.

|

| 152 |

+

|

| 153 |

+

8. Limitation of Liability. In no event and under no legal theory,

|

| 154 |

+

whether in tort (including negligence), contract, or otherwise,

|

| 155 |

+

unless required by applicable law (such as deliberate and grossly

|

| 156 |

+

negligent acts) or agreed to in writing, shall any Contributor be

|

| 157 |

+

liable to You for damages, including any direct, indirect, special,

|

| 158 |

+

incidental, or consequential damages of any character arising as a

|

| 159 |

+

result of this License or out of the use or inability to use the

|

| 160 |

+

Work (including but not limited to damages for loss of goodwill,

|

| 161 |

+

work stoppage, computer failure or malfunction, or any and all

|

| 162 |

+

other commercial damages or losses), even if such Contributor

|

| 163 |

+

has been advised of the possibility of such damages.

|

| 164 |

+

|

| 165 |

+

9. Accepting Warranty or Additional Liability. While redistributing

|

| 166 |

+

the Work or Derivative Works thereof, You may choose to offer,

|

| 167 |

+

and charge a fee for, acceptance of support, warranty, indemnity,

|

| 168 |

+

or other liability obligations and/or rights consistent with this

|

| 169 |

+

License. However, in accepting such obligations, You may act only

|

| 170 |

+

on Your own behalf and on Your sole responsibility, not on behalf

|

| 171 |

+

of any other Contributor, and only if You agree to indemnify,

|

| 172 |

+

defend, and hold each Contributor harmless for any liability

|

| 173 |

+

incurred by, or claims asserted against, such Contributor by reason

|

| 174 |

+

of your accepting any such warranty or additional liability.

|

| 175 |

+

|

| 176 |

+

END OF TERMS AND CONDITIONS

|

| 177 |

+

|

| 178 |

+

APPENDIX: How to apply the Apache License to your work.

|

| 179 |

+

|

| 180 |

+

To apply the Apache License to your work, attach the following

|

| 181 |

+

boilerplate notice, with the fields enclosed by brackets "[]"

|

| 182 |

+

replaced with your own identifying information. (Don't include

|

| 183 |

+

the brackets!) The text should be enclosed in the appropriate

|

| 184 |

+

comment syntax for the file format. We also recommend that a

|

| 185 |

+

file or class name and description of purpose be included on the

|

| 186 |

+

same "printed page" as the copyright notice for easier

|

| 187 |

+

identification within third-party archives.

|

| 188 |

+

|

| 189 |

+

Copyright 2020 - present, Facebook, Inc

|

| 190 |

+

|

| 191 |

+

Licensed under the Apache License, Version 2.0 (the "License");

|

| 192 |

+

you may not use this file except in compliance with the License.

|

| 193 |

+

You may obtain a copy of the License at

|

| 194 |

+

|

| 195 |

+

http://www.apache.org/licenses/LICENSE-2.0

|

| 196 |

+

|

| 197 |

+

Unless required by applicable law or agreed to in writing, software

|

| 198 |

+

distributed under the License is distributed on an "AS IS" BASIS,

|

| 199 |

+

WITHOUT WARRANTIES OR CONDITIONS OF ANY KIND, either express or implied.

|

| 200 |

+

See the License for the specific language governing permissions and

|

| 201 |

+

limitations under the License.

|

README.md

CHANGED

|

@@ -1,13 +1,113 @@

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

| 1 |

---

|

| 2 |

-

title: Relate Anything

|

| 3 |

-

emoji: 📈

|

| 4 |

-

colorFrom: green

|

| 5 |

-

colorTo: red

|

| 6 |

-

sdk: gradio

|

| 7 |

-

sdk_version: 3.27.0

|

| 8 |

-

app_file: app.py

|

| 9 |

-

pinned: false

|

| 10 |

-

license: mit

|

| 11 |

-

---

|

| 12 |

|

| 13 |

-

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

| 1 |

+

<p align="center" width="100%">

|

| 2 |

+

<img src="assets/ram_logo.png" width="60%" height="30%">

|

| 3 |

+

</p>

|

| 4 |

+

|

| 5 |

+

# RAM: Relate-Anything-Model

|

| 6 |

+

|

| 7 |

+

The following developers have equally contributed to this project in their spare time, the names are in alphabetical order.

|

| 8 |

+

|

| 9 |

+

[Zujin Guo](https://scholar.google.com/citations?user=G8DPsoUAAAAJ&hl=zh-CN),

|

| 10 |

+

[Bo Li](https://brianboli.com/),

|

| 11 |

+

[Jingkang Yang](https://jingkang50.github.io/),

|

| 12 |

+

[Zijian Zhou](https://sites.google.com/view/zijian-zhou/home).

|

| 13 |

+

|

| 14 |

+

**Affiliate: [MMLab@NTU](https://www.mmlab-ntu.com/)** & **[VisCom Lab, KCL/TongJi](https://viscom.nms.kcl.ac.uk/)**

|

| 15 |

+

|

| 16 |

---

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

| 17 |

|

| 18 |

+

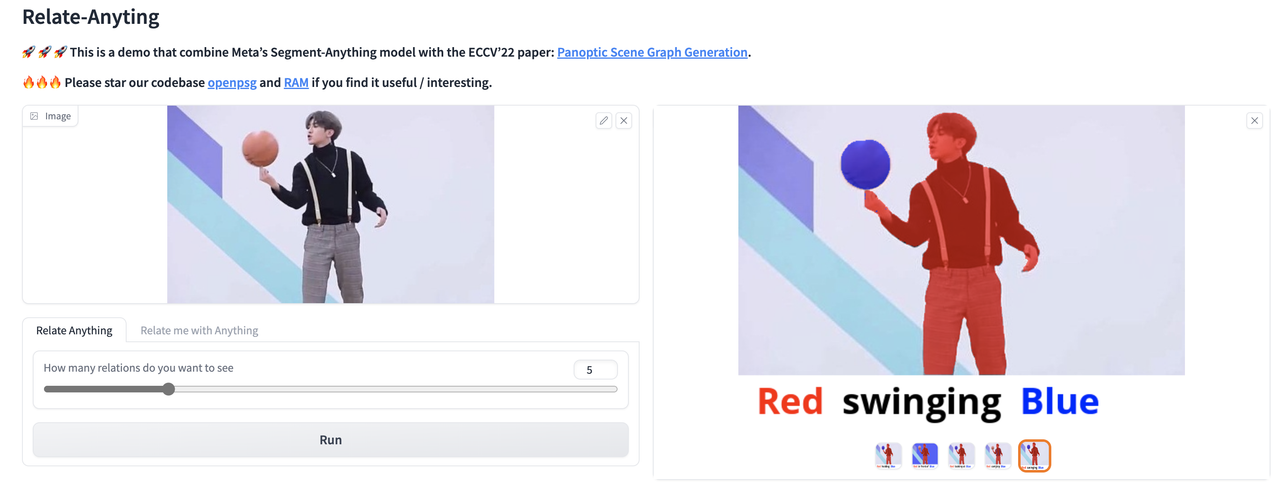

🚀 🚀 🚀 This is a demo that combine Meta's [Segment-Anything](https://segment-anything.com/) model with the ECCV'22 paper: [Panoptic Scene Graph Generation](https://psgdataset.org/).

|

| 19 |

+

|

| 20 |

+

🔥🔥🔥 Please star our codebase [OpenPSG](https://github.com/Jingkang50/OpenPSG) and [RAM](https://github.com/Luodian/RelateAnything) if you find it useful/interesting.

|

| 21 |

+

|

| 22 |

+

[[`Huggingface Demo`](#method)]

|

| 23 |

+

|

| 24 |

+

[[`Dataset`](https://psgdataset.org/)]

|

| 25 |

+

|

| 26 |

+

Relate Anything Model is capable of taking an image as input and utilizing SAM to identify the corresponding mask within the image. Subsequently, RAM can provide an analysis of the relationship between any arbitrary objects mask.

|

| 27 |

+

|

| 28 |

+

The object masks are generated using SAM. RAM was trained to detect the relationships between the object masks using the OpenPSG dataset, and the specifics of this method are outlined in a subsequent section.

|

| 29 |

+

|

| 30 |

+

[](https://postimg.cc/k2HDRryV)

|

| 31 |

+

|

| 32 |

+

## Examples

|

| 33 |

+

|

| 34 |

+

Our current demo supports:

|

| 35 |

+

|

| 36 |

+

(1) generate arbitary objects masks and reason relationships in between.

|

| 37 |

+

|

| 38 |

+

(2) given coordinates then generate object masks and reason the relationship between given objects and other objects in the image.

|

| 39 |

+

|

| 40 |

+

We will soon add support for detecting semantic labels of objects with the help of [OVSeg](https://github.com/facebookresearch/ov-seg).

|

| 41 |

+

|

| 42 |

+

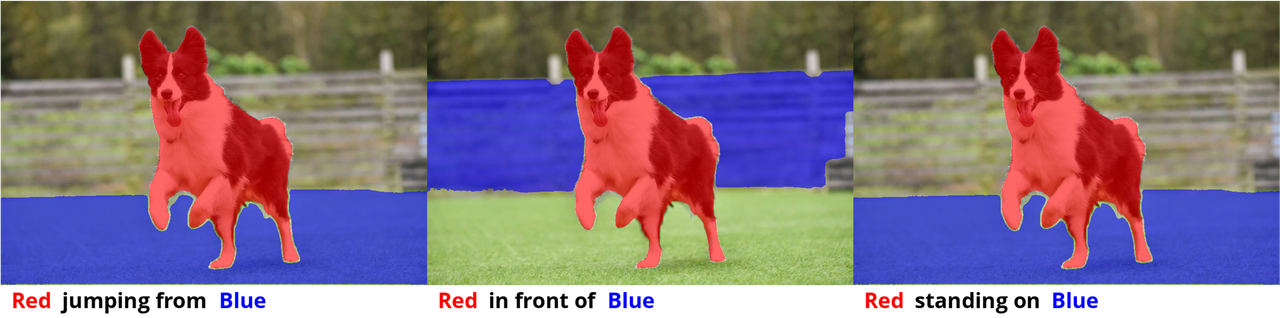

Here are some examples of the Relate Anything Model in action about playing soccer, dancing, and playing basketball.

|

| 43 |

+

|

| 44 |

+

<!--  -->

|

| 45 |

+

|

| 46 |

+

|

| 47 |

+

|

| 48 |

+

|

| 49 |

+

|

| 50 |

+

|

| 51 |

+

|

| 52 |

+

|

| 53 |

+

|

| 54 |

+

|

| 55 |

+

|

| 56 |

+

## Method

|

| 57 |

+

|

| 58 |

+

RAM utilizes the Segment Anything Model (SAM) to accurately mask objects within an image, and subsequently extract features corresponding to the segmented regions. Employ a Transformer module to facilitate feature interaction among distinct objects, and ultimately compute pairwise object relationships, thereby categorizing their interrelations.

|

| 59 |

+

|

| 60 |

+

## Setup

|

| 61 |

+

|

| 62 |

+

To set up the environment, we use Conda to manage dependencies.

|

| 63 |

+

To specify the appropriate version of cudatoolkit to install on your machine, you can modify the environment.yml file, and then create the Conda environment by running the following command:

|

| 64 |

+

|

| 65 |

+

```bash

|

| 66 |

+

conda env create -f environment.yml

|

| 67 |

+

```

|

| 68 |

+

|

| 69 |

+

Make sure to use `segment_anything` in this repository, which includes the mask feature extraction operation.

|

| 70 |

+

|

| 71 |

+

Download the pretrained model

|

| 72 |

+

1. SAM: [link](https://dl.fbaipublicfiles.com/segment_anything/sam_vit_h_4b8939.pth)

|

| 73 |

+

2. RAM: [link](https://1drv.ms/u/s!AgCc-d5Aw1cumQapZwcaKob8InQm?e=qyMeTS)

|

| 74 |

+

|

| 75 |

+

Place these two models in `./checkpoints/` from the root directory.

|

| 76 |

+

|

| 77 |

+

Run our demo locally by running the following command:

|

| 78 |

+

|

| 79 |

+

```bash

|

| 80 |

+

python app.py

|

| 81 |

+

```

|

| 82 |

+

|

| 83 |

+

<!-- ## Developers

|

| 84 |

+

|

| 85 |

+

We have equally contributed to this project in our spare time, in alphabetical order.

|

| 86 |

+

[Zujin Guo](https://scholar.google.com/citations?user=G8DPsoUAAAAJ&hl=zh-CN),

|

| 87 |

+

[Bo Li](https://brianboli.com/),

|

| 88 |

+

[Jingkang Yang](https://jingkang50.github.io/),

|

| 89 |

+

[Zijian Zhou](https://sites.google.com/view/zijian-zhou/home).

|

| 90 |

+

|

| 91 |

+

**[MMLab@NTU](https://www.mmlab-ntu.com/)** & **[VisCom Lab, KCL](https://viscom.nms.kcl.ac.uk/)** -->

|

| 92 |

+

|

| 93 |

+

## Acknowledgement

|

| 94 |

+

|

| 95 |

+

We thank [Chunyuan Li](https://chunyuan.li/) for his help in setting up the demo.

|

| 96 |

+

|

| 97 |

+

## Citation

|

| 98 |

+

If you find this project helpful for your research, please consider citing the following BibTeX entry.

|

| 99 |

+

```BibTex

|

| 100 |

+

@inproceedings{yang2022psg,

|

| 101 |

+

author = {Yang, Jingkang and Ang, Yi Zhe and Guo, Zujin and Zhou, Kaiyang and Zhang, Wayne and Liu, Ziwei},

|

| 102 |

+

title = {Panoptic Scene Graph Generation},

|

| 103 |

+

booktitle = {ECCV}

|

| 104 |

+

year = {2022}

|

| 105 |

+

}

|

| 106 |

+

|

| 107 |

+

@inproceedings{yang2023pvsg,

|

| 108 |

+

author = {Yang, Jingkang and Peng, Wenxuan and Li, Xiangtai and Guo, Zujin and Chen, Liangyu and Li, Bo and Ma, Zheng and Zhou, Kaiyang and Zhang, Wayne and Loy, Chen Change and Liu, Ziwei},

|

| 109 |

+

title = {Panoptic Video Scene Graph Generation},

|

| 110 |

+

booktitle = {CVPR},

|

| 111 |

+

year = {2023},

|

| 112 |

+

}

|

| 113 |

+

```

|

app.py

ADDED

|

@@ -0,0 +1,295 @@

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

| 1 |

+

import sys

|

| 2 |

+

sys.path.append('.')

|

| 3 |

+

|

| 4 |

+

from segment_anything import build_sam, SamPredictor, SamAutomaticMaskGenerator

|

| 5 |

+

import numpy as np

|

| 6 |

+

import gradio as gr

|

| 7 |

+

from PIL import Image, ImageDraw, ImageFont

|

| 8 |

+

from utils import iou, sort_and_deduplicate, relation_classes, MLP, show_anns, show_mask

|

| 9 |

+

import torch

|

| 10 |

+

|

| 11 |

+

from ram_train_eval import RamModel,RamPredictor

|

| 12 |

+

from mmengine.config import Config

|

| 13 |

+

|

| 14 |

+

device = torch.device('cuda' if torch.cuda.is_available() else 'cpu')

|

| 15 |

+

|

| 16 |

+

input_size = 512

|

| 17 |

+

hidden_size = 256

|

| 18 |

+

num_classes = 56

|

| 19 |

+

|

| 20 |

+

# load sam model

|

| 21 |

+

sam = build_sam(checkpoint="./checkpoints/sam_vit_h_4b8939.pth").to(device)

|

| 22 |

+

predictor = SamPredictor(sam)

|

| 23 |

+

mask_generator = SamAutomaticMaskGenerator(sam)

|

| 24 |

+

|

| 25 |

+

# load ram model

|

| 26 |

+

model_path = "./checkpoints/ram_epoch12.pth"

|

| 27 |

+

config = dict(

|

| 28 |

+

model=dict(

|

| 29 |

+

pretrained_model_name_or_path='bert-base-uncased',

|

| 30 |

+

load_pretrained_weights=False,

|

| 31 |

+

num_transformer_layer=2,

|

| 32 |

+

input_feature_size=256,

|

| 33 |

+

output_feature_size=768,

|

| 34 |

+

cls_feature_size=512,

|

| 35 |

+

num_relation_classes=56,

|

| 36 |

+

pred_type='attention',

|

| 37 |

+

loss_type='multi_label_ce',

|

| 38 |

+

),

|

| 39 |

+

load_from=model_path,

|

| 40 |

+

)

|

| 41 |

+

config = Config(config)

|

| 42 |

+

|

| 43 |

+

class Predictor(RamPredictor):

|

| 44 |

+

def __init__(self,config):

|

| 45 |

+

self.config = config

|

| 46 |

+

self.device = torch.device(

|

| 47 |

+

'cuda' if torch.cuda.is_available() else 'cpu')

|

| 48 |

+

self._build_model()

|

| 49 |

+

|

| 50 |

+

def _build_model(self):

|

| 51 |

+

self.model = RamModel(**self.config.model).to(self.device)

|

| 52 |

+

if self.config.load_from is not None:

|

| 53 |

+

self.model.load_state_dict(torch.load(self.config.load_from, map_location=self.device))

|

| 54 |

+

self.model.train()

|

| 55 |

+

|

| 56 |

+

model = Predictor(config)

|

| 57 |

+

|

| 58 |

+

|

| 59 |

+

# visualization

|

| 60 |

+

def draw_selected_mask(mask, draw):

|

| 61 |

+

color = (255, 0, 0, 153)

|

| 62 |

+

nonzero_coords = np.transpose(np.nonzero(mask))

|

| 63 |

+

for coord in nonzero_coords:

|

| 64 |

+

draw.point(coord[::-1], fill=color)

|

| 65 |

+

|

| 66 |

+

def draw_object_mask(mask, draw):

|

| 67 |

+

color = (0, 0, 255, 153)

|

| 68 |

+

nonzero_coords = np.transpose(np.nonzero(mask))

|

| 69 |

+

for coord in nonzero_coords:

|

| 70 |

+

draw.point(coord[::-1], fill=color)

|

| 71 |

+

|

| 72 |

+

|

| 73 |

+

def vis_selected(pil_image, coords):

|

| 74 |

+

# get coords

|

| 75 |

+

coords_x, coords_y = coords.split(',')

|

| 76 |

+

input_point = np.array([[int(coords_x), int(coords_y)]])

|

| 77 |

+

input_label = np.array([1])

|

| 78 |

+

# load image

|

| 79 |

+

image = np.array(pil_image)

|

| 80 |

+

predictor.set_image(image)

|

| 81 |

+

mask1, score1, logit1, feat1 = predictor.predict(

|

| 82 |

+

point_coords=input_point,

|

| 83 |

+

point_labels=input_label,

|

| 84 |

+

multimask_output=False,

|

| 85 |

+

)

|

| 86 |

+

pil_image = pil_image.convert('RGBA')

|

| 87 |

+

mask_image = Image.new('RGBA', pil_image.size, color=(0, 0, 0, 0))

|

| 88 |

+

mask_draw = ImageDraw.Draw(mask_image)

|

| 89 |

+

draw_selected_mask(mask1[0], mask_draw)

|

| 90 |

+

pil_image.alpha_composite(mask_image)

|

| 91 |

+

|

| 92 |

+

yield [pil_image]

|

| 93 |

+

|

| 94 |

+

|

| 95 |

+

def create_title_image(word1, word2, word3, width, font_path='./assets/OpenSans-Bold.ttf'):

|

| 96 |

+

# Define the colors to use for each word

|

| 97 |

+

color_red = (255, 0, 0)

|

| 98 |

+

color_black = (0, 0, 0)

|

| 99 |

+

color_blue = (0, 0, 255)

|

| 100 |

+

|

| 101 |

+

# Define the initial font size and spacing between words

|

| 102 |

+

font_size = 40

|

| 103 |

+

|

| 104 |

+

# Create a new image with the specified width and white background

|

| 105 |

+

image = Image.new('RGB', (width, 60), (255, 255, 255))

|

| 106 |

+

|

| 107 |

+

# Load the specified font

|

| 108 |

+

font = ImageFont.truetype(font_path, font_size)

|

| 109 |

+

|

| 110 |

+

# Keep increasing the font size until all words fit within the desired width

|

| 111 |

+

while True:

|

| 112 |

+

# Create a draw object for the image

|

| 113 |

+

draw = ImageDraw.Draw(image)

|

| 114 |

+

|

| 115 |

+

word_spacing = font_size / 2

|

| 116 |

+

# Draw each word in the appropriate color

|

| 117 |

+

x_offset = word_spacing

|

| 118 |

+

draw.text((x_offset, 0), word1, color_red, font=font)

|

| 119 |

+

x_offset += font.getsize(word1)[0] + word_spacing

|

| 120 |

+

draw.text((x_offset, 0), word2, color_black, font=font)

|

| 121 |

+

x_offset += font.getsize(word2)[0] + word_spacing

|

| 122 |

+

draw.text((x_offset, 0), word3, color_blue, font=font)

|

| 123 |

+

|

| 124 |

+

word_sizes = [font.getsize(word) for word in [word1, word2, word3]]

|

| 125 |

+

total_width = sum([size[0] for size in word_sizes]) + word_spacing * 3

|

| 126 |

+

|

| 127 |

+

# Stop increasing font size if the image is within the desired width

|

| 128 |

+

if total_width <= width:

|

| 129 |

+

break

|

| 130 |

+

|

| 131 |

+

# Increase font size and reset the draw object

|

| 132 |

+

font_size -= 1

|

| 133 |

+

image = Image.new('RGB', (width, 50), (255, 255, 255))

|

| 134 |

+

font = ImageFont.truetype(font_path, font_size)

|

| 135 |

+

draw = None

|

| 136 |

+

|

| 137 |

+

return image

|

| 138 |

+

|

| 139 |

+

|

| 140 |

+

def concatenate_images_vertical(image1, image2):

|

| 141 |

+

# Get the dimensions of the two images

|

| 142 |

+

width1, height1 = image1.size

|

| 143 |

+

width2, height2 = image2.size

|

| 144 |

+

|

| 145 |

+

# Create a new image with the combined height and the maximum width

|

| 146 |

+

new_image = Image.new('RGBA', (max(width1, width2), height1 + height2))

|

| 147 |

+

|

| 148 |

+

# Paste the first image at the top of the new image

|

| 149 |

+

new_image.paste(image1, (0, 0))

|

| 150 |

+

|

| 151 |

+

# Paste the second image below the first image

|

| 152 |

+

new_image.paste(image2, (0, height1))

|

| 153 |

+

|

| 154 |

+

return new_image

|

| 155 |

+

|

| 156 |

+

|

| 157 |

+

def relate_selected(input_image, k, coords):

|

| 158 |

+

# load image

|

| 159 |

+

pil_image = input_image.convert('RGBA')

|

| 160 |

+

|

| 161 |

+

w, h = pil_image.size

|

| 162 |

+

if w > 800:

|

| 163 |

+

pil_image.thumbnail((800, 800*h/w))

|

| 164 |

+

input_image.thumbnail((800, 800*h/w))

|

| 165 |

+

coords = str(int(int(coords.split(',')[0]) * 800 / w)) + ',' + str(int(int(coords.split(',')[1]) * 800 / w))

|

| 166 |

+

|

| 167 |

+

image = np.array(input_image)

|

| 168 |

+

sam_masks = mask_generator.generate(image)

|

| 169 |

+

# get old mask

|

| 170 |

+

coords_x, coords_y = coords.split(',')

|

| 171 |

+

input_point = np.array([[int(coords_x), int(coords_y)]])

|

| 172 |

+

input_label = np.array([1])

|

| 173 |

+

mask1, score1, logit1, feat1 = predictor.predict(

|

| 174 |

+

point_coords=input_point,

|

| 175 |

+

point_labels=input_label,

|

| 176 |

+

multimask_output=False,

|

| 177 |

+

)

|

| 178 |

+

|

| 179 |

+

filtered_masks = sort_and_deduplicate(sam_masks)

|

| 180 |

+

filtered_masks = [d for d in sam_masks if iou(d['segmentation'], mask1[0]) < 0.95][:k]

|

| 181 |

+

pil_image_list = []

|

| 182 |

+

|

| 183 |

+

# run model

|

| 184 |

+

feat = feat1

|

| 185 |

+

for fm in filtered_masks:

|

| 186 |

+

feat2 = torch.Tensor(fm['feat']).unsqueeze(0).unsqueeze(0).to(device)

|

| 187 |

+

feat = torch.cat((feat, feat2), dim=1)

|

| 188 |

+

matrix_output, rel_triplets = model.predict(feat)

|

| 189 |

+

subject_output = matrix_output.permute([0,2,3,1])[:,0,1:]

|

| 190 |

+

|

| 191 |

+

for i in range(len(filtered_masks)):

|

| 192 |

+

|

| 193 |

+

output = subject_output[:,i]

|

| 194 |

+

|

| 195 |

+

topk_indices = torch.argsort(-output).flatten()

|

| 196 |

+

relation = relation_classes[topk_indices[:1][0]]

|

| 197 |

+

|

| 198 |

+

mask_image = Image.new('RGBA', pil_image.size, color=(0, 0, 0, 0))

|

| 199 |

+

mask_draw = ImageDraw.Draw(mask_image)

|

| 200 |

+

|

| 201 |

+

draw_selected_mask(mask1[0], mask_draw)

|

| 202 |

+

draw_object_mask(filtered_masks[i]['segmentation'], mask_draw)

|

| 203 |

+

|

| 204 |

+

current_pil_image = pil_image.copy()

|

| 205 |

+

current_pil_image.alpha_composite(mask_image)

|

| 206 |

+

|

| 207 |

+

title_image = create_title_image('Red', relation, 'Blue', current_pil_image.size[0])

|

| 208 |

+

concate_pil_image = concatenate_images_vertical(current_pil_image, title_image)

|

| 209 |

+

pil_image_list.append(concate_pil_image)

|

| 210 |

+

|

| 211 |

+

yield pil_image_list

|

| 212 |

+

|

| 213 |

+

|

| 214 |

+

def relate_anything(input_image, k):

|

| 215 |

+

# load image

|

| 216 |

+

pil_image = input_image.convert('RGBA')

|

| 217 |

+

w, h = pil_image.size

|

| 218 |

+

if w > 800:

|

| 219 |

+

pil_image.thumbnail((800, 800*h/w))

|

| 220 |

+

input_image.thumbnail((800, 800*h/w))

|

| 221 |

+

image = np.array(input_image)

|

| 222 |

+

sam_masks = mask_generator.generate(image)

|

| 223 |

+

filtered_masks = sort_and_deduplicate(sam_masks)

|

| 224 |

+

|

| 225 |

+

feat_list = []

|

| 226 |

+

for fm in filtered_masks:

|

| 227 |

+

feat = torch.Tensor(fm['feat']).unsqueeze(0).unsqueeze(0).to(device)

|

| 228 |

+

feat_list.append(feat)

|

| 229 |

+

feat = torch.cat(feat_list, dim=1).to(device)

|

| 230 |

+

matrix_output, rel_triplets = model.predict(feat)

|

| 231 |

+

|

| 232 |

+

pil_image_list = []

|

| 233 |

+

for i, rel in enumerate(rel_triplets[:k]):

|

| 234 |

+

s,o,r = int(rel[0]),int(rel[1]),int(rel[2])

|

| 235 |

+

relation = relation_classes[r]

|

| 236 |

+

|

| 237 |

+

mask_image = Image.new('RGBA', pil_image.size, color=(0, 0, 0, 0))

|

| 238 |

+

mask_draw = ImageDraw.Draw(mask_image)

|

| 239 |

+

|

| 240 |

+

draw_selected_mask(filtered_masks[s]['segmentation'], mask_draw)

|

| 241 |

+

draw_object_mask(filtered_masks[o]['segmentation'], mask_draw)

|

| 242 |

+

|

| 243 |

+

current_pil_image = pil_image.copy()

|

| 244 |

+

current_pil_image.alpha_composite(mask_image)

|

| 245 |

+

|

| 246 |

+

title_image = create_title_image('Red', relation, 'Blue', current_pil_image.size[0])

|

| 247 |

+

concate_pil_image = concatenate_images_vertical(current_pil_image, title_image)

|

| 248 |

+

pil_image_list.append(concate_pil_image)

|

| 249 |

+

|

| 250 |

+

yield pil_image_list

|

| 251 |

+

|

| 252 |

+

DESCRIPTION = '''# Relate-Anyting

|

| 253 |

+

|

| 254 |

+

### 🚀 🚀 🚀 This is a demo that combine Meta's Segment-Anything model with the ECCV'22 paper: [Panoptic Scene Graph Generation](https://psgdataset.org/).

|

| 255 |

+

|

| 256 |

+

### 🔥🔥🔥 Please star our codebase [openpsg](https://github.com/Jingkang50/OpenPSG) and [RAM](https://github.com/Luodian/RelateAnything) if you find it useful / interesting.

|

| 257 |

+

'''

|

| 258 |

+

|

| 259 |

+

block = gr.Blocks()

|

| 260 |

+

block = block.queue()

|

| 261 |

+

with block:

|

| 262 |

+

gr.Markdown(DESCRIPTION)

|

| 263 |

+

with gr.Row():

|

| 264 |

+

with gr.Column():

|

| 265 |

+

input_image = gr.Image(source="upload", type="pil", value="assets/dog.jpg")

|

| 266 |

+

|

| 267 |

+

with gr.Tab("Relate Anything"):

|

| 268 |

+

num_relation = gr.Slider(label="How many relations do you want to see", minimum=1, maximum=20, value=5, step=1)

|

| 269 |

+

relate_all_button = gr.Button(label="Relate Anything!")

|

| 270 |

+

|

| 271 |

+

with gr.Tab("Relate me with Anything"):

|

| 272 |

+

img_input_coords = gr.Textbox(label="Click anything to get input coords")

|

| 273 |

+

|

| 274 |

+

def select_handler(evt: gr.SelectData):

|

| 275 |

+

coords = evt.index

|

| 276 |

+

return f"{coords[0]},{coords[1]}"

|

| 277 |

+

|

| 278 |

+

input_image.select(select_handler, None, img_input_coords)

|

| 279 |

+

run_button_vis = gr.Button(label="Visualize the Select Thing")

|

| 280 |

+

selected_gallery = gr.Gallery(label="Selected Thing", show_label=True, elem_id="gallery").style(preview=True, grid=2, object_fit="scale-down")

|

| 281 |

+

|

| 282 |

+

k = gr.Slider(label="Number of things you want to relate", minimum=1, maximum=20, value=5, step=1)

|

| 283 |

+

relate_selected_button = gr.Button(value="Relate it with Anything", interactive=True)

|

| 284 |

+

|

| 285 |

+

with gr.Column():

|

| 286 |

+

image_gallery = gr.Gallery(label="Your Result", show_label=True, elem_id="gallery").style(preview=True, columns=5, object_fit="scale-down")

|

| 287 |

+

|

| 288 |

+

# relate anything

|

| 289 |

+

relate_all_button.click(fn=relate_anything, inputs=[input_image, num_relation], outputs=[image_gallery], show_progress=True, queue=True)

|

| 290 |

+

|

| 291 |

+

# relate selected

|

| 292 |

+

run_button_vis.click(fn=vis_selected, inputs=[input_image, img_input_coords], outputs=[selected_gallery], show_progress=True, queue=True)

|

| 293 |

+

relate_selected_button.click(fn=relate_selected, inputs=[input_image, k, img_input_coords], outputs=[image_gallery], show_progress=True, queue=True)

|

| 294 |

+

|

| 295 |

+

block.launch(debug=True, share=True)

|

assets/OpenSans-Bold.ttf

ADDED

|

Binary file (225 kB). View file

|

|

|

assets/basketball.gif

ADDED

|

assets/basketball.png

ADDED

|

Git LFS Details

|

assets/dog.jpg

ADDED

|

assets/ram.png

ADDED

|

Git LFS Details

|

assets/ram_logo.png

ADDED

|

Git LFS Details

|

assets/soccer.png

ADDED

|

Git LFS Details

|

environment.yml

ADDED

|

@@ -0,0 +1,16 @@

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

| 1 |

+

name: relate_anything

|

| 2 |

+

channels:

|

| 3 |

+

- pytorch

|

| 4 |

+

- conda-forge

|

| 5 |

+

dependencies:

|

| 6 |

+

- python=3.8

|

| 7 |

+

- pytorch=1.7.0

|

| 8 |

+

- torchvision=0.8.0

|

| 9 |

+

- torchaudio==0.7.0

|

| 10 |

+

- cudatoolkit=10.1

|

| 11 |

+

- pip

|

| 12 |

+

- pip:

|

| 13 |

+

- openmim

|

| 14 |

+

- mmcv==2.0.0

|

| 15 |

+

- pre-commit

|

| 16 |

+

- git+https://github.com/Jingkang50/OpenPSG

|

ram_train_eval.py

ADDED

|

@@ -0,0 +1,417 @@

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

| 1 |

+

import os

|

| 2 |

+

import time

|

| 3 |

+

from datetime import timedelta

|

| 4 |

+