Commit 9aaf024

1 Parent(s): 8f28809

Upload folder using huggingface_hub

This view is limited to 50 files because it contains too many changes.

- .gitattributes +23 -0

- .gitignore +174 -0

- .ipynb_checkpoints/Untitled-checkpoint.ipynb +6 -0

- LICENSE +21 -0

- README.md +268 -13

- Untitled.ipynb +533 -0

- app_sadtalker.py +111 -0

- checkpoints/SadTalker_V0.0.2_256.safetensors +3 -0

- checkpoints/SadTalker_V0.0.2_512.safetensors +3 -0

- checkpoints/mapping_00109-model.pth.tar +3 -0

- checkpoints/mapping_00229-model.pth.tar +3 -0

- cog.yaml +35 -0

- docs/FAQ.md +46 -0

- docs/best_practice.md +94 -0

- docs/changlelog.md +29 -0

- docs/example_crop.gif +3 -0

- docs/example_crop_still.gif +3 -0

- docs/example_full.gif +3 -0

- docs/example_full_crop.gif +0 -0

- docs/example_full_enhanced.gif +3 -0

- docs/face3d.md +48 -0

- docs/free_view_result.gif +3 -0

- docs/install.md +47 -0

- docs/resize_good.gif +3 -0

- docs/resize_no.gif +3 -0

- docs/sadtalker_logo.png +0 -0

- docs/using_ref_video.gif +3 -0

- docs/webui_extension.md +50 -0

- examples/driven_audio/RD_Radio31_000.wav +0 -0

- examples/driven_audio/RD_Radio34_002.wav +0 -0

- examples/driven_audio/RD_Radio36_000.wav +0 -0

- examples/driven_audio/RD_Radio40_000.wav +0 -0

- examples/driven_audio/bus_chinese.wav +0 -0

- examples/driven_audio/chinese_news.wav +3 -0

- examples/driven_audio/chinese_poem1.wav +0 -0

- examples/driven_audio/chinese_poem2.wav +0 -0

- examples/driven_audio/deyu.wav +3 -0

- examples/driven_audio/eluosi.wav +3 -0

- examples/driven_audio/fayu.wav +3 -0

- examples/driven_audio/imagine.wav +3 -0

- examples/driven_audio/itosinger1.wav +0 -0

- examples/driven_audio/japanese.wav +3 -0

- examples/ref_video/WDA_AlexandriaOcasioCortez_000.mp4 +3 -0

- examples/ref_video/WDA_KatieHill_000.mp4 +3 -0

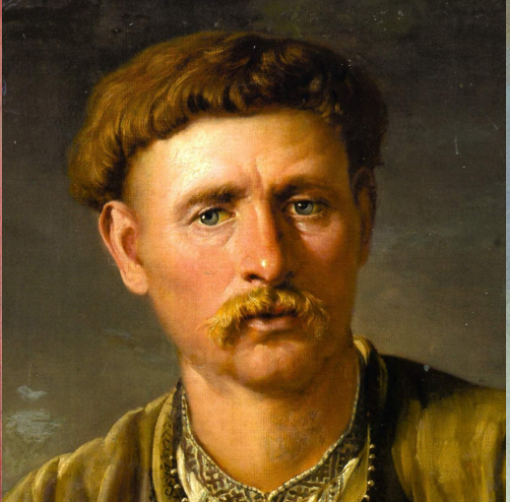

- examples/source_image/art_0.png +0 -0

- examples/source_image/art_1.png +0 -0

- examples/source_image/art_10.png +0 -0

- examples/source_image/art_11.png +0 -0

- examples/source_image/art_12.png +0 -0

- examples/source_image/art_13.png +0 -0

.gitattributes

CHANGED

|

@@ -33,3 +33,26 @@ saved_model/**/* filter=lfs diff=lfs merge=lfs -text

|

|

| 33 |

*.zip filter=lfs diff=lfs merge=lfs -text

|

| 34 |

*.zst filter=lfs diff=lfs merge=lfs -text

|

| 35 |

*tfevents* filter=lfs diff=lfs merge=lfs -text

|

| 36 |

+

docs/example_crop.gif filter=lfs diff=lfs merge=lfs -text

|

| 37 |

+

docs/example_crop_still.gif filter=lfs diff=lfs merge=lfs -text

|

| 38 |

+

docs/example_full.gif filter=lfs diff=lfs merge=lfs -text

|

| 39 |

+

docs/example_full_enhanced.gif filter=lfs diff=lfs merge=lfs -text

|

| 40 |

+

docs/free_view_result.gif filter=lfs diff=lfs merge=lfs -text

|

| 41 |

+

docs/resize_good.gif filter=lfs diff=lfs merge=lfs -text

|

| 42 |

+

docs/resize_no.gif filter=lfs diff=lfs merge=lfs -text

|

| 43 |

+

docs/using_ref_video.gif filter=lfs diff=lfs merge=lfs -text

|

| 44 |

+

examples/driven_audio/chinese_news.wav filter=lfs diff=lfs merge=lfs -text

|

| 45 |

+

examples/driven_audio/deyu.wav filter=lfs diff=lfs merge=lfs -text

|

| 46 |

+

examples/driven_audio/eluosi.wav filter=lfs diff=lfs merge=lfs -text

|

| 47 |

+

examples/driven_audio/fayu.wav filter=lfs diff=lfs merge=lfs -text

|

| 48 |

+

examples/driven_audio/imagine.wav filter=lfs diff=lfs merge=lfs -text

|

| 49 |

+

examples/driven_audio/japanese.wav filter=lfs diff=lfs merge=lfs -text

|

| 50 |

+

examples/ref_video/WDA_AlexandriaOcasioCortez_000.mp4 filter=lfs diff=lfs merge=lfs -text

|

| 51 |

+

examples/ref_video/WDA_KatieHill_000.mp4 filter=lfs diff=lfs merge=lfs -text

|

| 52 |

+

examples/source_image/art_16.png filter=lfs diff=lfs merge=lfs -text

|

| 53 |

+

examples/source_image/art_17.png filter=lfs diff=lfs merge=lfs -text

|

| 54 |

+

examples/source_image/art_3.png filter=lfs diff=lfs merge=lfs -text

|

| 55 |

+

examples/source_image/art_4.png filter=lfs diff=lfs merge=lfs -text

|

| 56 |

+

examples/source_image/art_5.png filter=lfs diff=lfs merge=lfs -text

|

| 57 |

+

examples/source_image/art_8.png filter=lfs diff=lfs merge=lfs -text

|

| 58 |

+

examples/source_image/art_9.png filter=lfs diff=lfs merge=lfs -text

|
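As a rough illustration of what these attribute lines do, the Python sketch below collects the patterns marked `filter=lfs` and checks two paths from this commit against them. It uses `fnmatch` as a stand-in for Git's own pattern matching, which is an assumption: real `.gitattributes` matching follows gitignore-style rules that `fnmatch` only approximates. The helper names `lfs_patterns` and `is_lfs_tracked` are hypothetical.

```python
from fnmatch import fnmatch

# A few rules copied from the .gitattributes diff above.
GITATTRIBUTES = """\
*.zip filter=lfs diff=lfs merge=lfs -text
*tfevents* filter=lfs diff=lfs merge=lfs -text
docs/example_crop.gif filter=lfs diff=lfs merge=lfs -text
examples/driven_audio/deyu.wav filter=lfs diff=lfs merge=lfs -text
"""


def lfs_patterns(text):
    """Return the patterns whose attribute list contains filter=lfs."""
    patterns = []
    for line in text.splitlines():
        parts = line.split()
        if len(parts) >= 2 and "filter=lfs" in parts[1:]:
            patterns.append(parts[0])
    return patterns


def is_lfs_tracked(path, patterns):
    """Approximate check (assumption): match the full path or its basename."""
    name = path.rsplit("/", 1)[-1]
    return any(fnmatch(path, p) or fnmatch(name, p) for p in patterns)


patterns = lfs_patterns(GITATTRIBUTES)
print(is_lfs_tracked("examples/driven_audio/deyu.wav", patterns))         # True
print(is_lfs_tracked("examples/driven_audio/bus_chinese.wav", patterns))  # False
```

In the file list above, `deyu.wav` appears with `+3 -0` and `bus_chinese.wav` with `+0 -0`, which is consistent with the former being stored as a three-line LFS pointer file.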

.gitignore

ADDED

|

@@ -0,0 +1,174 @@

|

| 1 |

+

# Byte-compiled / optimized / DLL files

|

| 2 |

+

__pycache__/

|

| 3 |

+

*.py[cod]

|

| 4 |

+

*$py.class

|

| 5 |

+

|

| 6 |

+

# C extensions

|

| 7 |

+

*.so

|

| 8 |

+

|

| 9 |

+

# Distribution / packaging

|

| 10 |

+

.Python

|

| 11 |

+

build/

|

| 12 |

+

develop-eggs/

|

| 13 |

+

dist/

|

| 14 |

+

downloads/

|

| 15 |

+

eggs/

|

| 16 |

+

.eggs/

|

| 17 |

+

lib/

|

| 18 |

+

lib64/

|

| 19 |

+

parts/

|

| 20 |

+

sdist/

|

| 21 |

+

var/

|

| 22 |

+

wheels/

|

| 23 |

+

share/python-wheels/

|

| 24 |

+

*.egg-info/

|

| 25 |

+

.installed.cfg

|

| 26 |

+

*.egg

|

| 27 |

+

MANIFEST

|

| 28 |

+

|

| 29 |

+

# PyInstaller

|

| 30 |

+

# Usually these files are written by a python script from a template

|

| 31 |

+

# before PyInstaller builds the exe, so as to inject date/other infos into it.

|

| 32 |

+

*.manifest

|

| 33 |

+

*.spec

|

| 34 |

+

|

| 35 |

+

# Installer logs

|

| 36 |

+

pip-log.txt

|

| 37 |

+

pip-delete-this-directory.txt

|

| 38 |

+

|

| 39 |

+

# Unit test / coverage reports

|

| 40 |

+

htmlcov/

|

| 41 |

+

.tox/

|

| 42 |

+

.nox/

|

| 43 |

+

.coverage

|

| 44 |

+

.coverage.*

|

| 45 |

+

.cache

|

| 46 |

+

nosetests.xml

|

| 47 |

+

coverage.xml

|

| 48 |

+

*.cover

|

| 49 |

+

*.py,cover

|

| 50 |

+

.hypothesis/

|

| 51 |

+

.pytest_cache/

|

| 52 |

+

cover/

|

| 53 |

+

|

| 54 |

+

# Translations

|

| 55 |

+

*.mo

|

| 56 |

+

*.pot

|

| 57 |

+

|

| 58 |

+

# Django stuff:

|

| 59 |

+

*.log

|

| 60 |

+

local_settings.py

|

| 61 |

+

db.sqlite3

|

| 62 |

+

db.sqlite3-journal

|

| 63 |

+

|

| 64 |

+

# Flask stuff:

|

| 65 |

+

instance/

|

| 66 |

+

.webassets-cache

|

| 67 |

+

|

| 68 |

+

# Scrapy stuff:

|

| 69 |

+

.scrapy

|

| 70 |

+

|

| 71 |

+

# Sphinx documentation

|

| 72 |

+

docs/_build/

|

| 73 |

+

|

| 74 |

+

# PyBuilder

|

| 75 |

+

.pybuilder/

|

| 76 |

+

target/

|

| 77 |

+

|

| 78 |

+

# Jupyter Notebook

|

| 79 |

+

.ipynb_checkpoints

|

| 80 |

+

|

| 81 |

+

# IPython

|

| 82 |

+

profile_default/

|

| 83 |

+

ipython_config.py

|

| 84 |

+

|

| 85 |

+

# pyenv

|

| 86 |

+

# For a library or package, you might want to ignore these files since the code is

|

| 87 |

+

# intended to run in multiple environments; otherwise, check them in:

|

| 88 |

+

# .python-version

|

| 89 |

+

|

| 90 |

+

# pipenv

|

| 91 |

+

# According to pypa/pipenv#598, it is recommended to include Pipfile.lock in version control.

|

| 92 |

+

# However, in case of collaboration, if having platform-specific dependencies or dependencies

|

| 93 |

+

# having no cross-platform support, pipenv may install dependencies that don't work, or not

|

| 94 |

+

# install all needed dependencies.

|

| 95 |

+

#Pipfile.lock

|

| 96 |

+

|

| 97 |

+

# poetry

|

| 98 |

+

# Similar to Pipfile.lock, it is generally recommended to include poetry.lock in version control.

|

| 99 |

+

# This is especially recommended for binary packages to ensure reproducibility, and is more

|

| 100 |

+

# commonly ignored for libraries.

|

| 101 |

+

# https://python-poetry.org/docs/basic-usage/#commit-your-poetrylock-file-to-version-control

|

| 102 |

+

#poetry.lock

|

| 103 |

+

|

| 104 |

+

# pdm

|

| 105 |

+

# Similar to Pipfile.lock, it is generally recommended to include pdm.lock in version control.

|

| 106 |

+

#pdm.lock

|

| 107 |

+

# pdm stores project-wide configurations in .pdm.toml, but it is recommended to not include it

|

| 108 |

+

# in version control.

|

| 109 |

+

# https://pdm.fming.dev/#use-with-ide

|

| 110 |

+

.pdm.toml

|

| 111 |

+

|

| 112 |

+

# PEP 582; used by e.g. github.com/David-OConnor/pyflow and github.com/pdm-project/pdm

|

| 113 |

+

__pypackages__/

|

| 114 |

+

|

| 115 |

+

# Celery stuff

|

| 116 |

+

celerybeat-schedule

|

| 117 |

+

celerybeat.pid

|

| 118 |

+

|

| 119 |

+

# SageMath parsed files

|

| 120 |

+

*.sage.py

|

| 121 |

+

|

| 122 |

+

# Environments

|

| 123 |

+

.env

|

| 124 |

+

.venv

|

| 125 |

+

env/

|

| 126 |

+

venv/

|

| 127 |

+

ENV/

|

| 128 |

+

env.bak/

|

| 129 |

+

venv.bak/

|

| 130 |

+

|

| 131 |

+

# Spyder project settings

|

| 132 |

+

.spyderproject

|

| 133 |

+

.spyproject

|

| 134 |

+

|

| 135 |

+

# Rope project settings

|

| 136 |

+

.ropeproject

|

| 137 |

+

|

| 138 |

+

# mkdocs documentation

|

| 139 |

+

/site

|

| 140 |

+

|

| 141 |

+

# mypy

|

| 142 |

+

.mypy_cache/

|

| 143 |

+

.dmypy.json

|

| 144 |

+

dmypy.json

|

| 145 |

+

|

| 146 |

+

# Pyre type checker

|

| 147 |

+

.pyre/

|

| 148 |

+

|

| 149 |

+

# pytype static type analyzer

|

| 150 |

+

.pytype/

|

| 151 |

+

|

| 152 |

+

# Cython debug symbols

|

| 153 |

+

cython_debug/

|

| 154 |

+

|

| 155 |

+

# PyCharm

|

| 156 |

+

# JetBrains specific template is maintained in a separate JetBrains.gitignore that can

|

| 157 |

+

# be found at https://github.com/github/gitignore/blob/main/Global/JetBrains.gitignore

|

| 158 |

+

# and can be added to the global gitignore or merged into this file. For a more nuclear

|

| 159 |

+

# option (not recommended) you can uncomment the following to ignore the entire idea folder.

|

| 160 |

+

.idea/

|

| 161 |

+

|

| 162 |

+

examples/results/*

|

| 163 |

+

gfpgan/*

|

| 164 |

+

checkpoints/*

|

| 165 |

+

assets/*

|

| 166 |

+

results/*

|

| 167 |

+

Dockerfile

|

| 168 |

+

start_docker.sh

|

| 169 |

+

start.sh

|

| 170 |

+

|

| 171 |

+

checkpoints

|

| 172 |

+

|

| 173 |

+

# Mac

|

| 174 |

+

.DS_Store

|
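The project-specific rules at the end of this `.gitignore` (lines 162 to 171) can be tried against paths from this commit with a small Python sketch. It is only an approximation under a stated assumption: `fnmatch` stands in for Git's ignore matching, which has extra rules (negation, anchoring, directory-only patterns) not modelled here. `matching_rule` is a hypothetical helper.

```python
from fnmatch import fnmatch

# Project-specific rules copied from the end of the .gitignore diff above.
CUSTOM_RULES = [
    "examples/results/*",
    "gfpgan/*",
    "checkpoints/*",
    "assets/*",
    "results/*",
]


def matching_rule(path, rules=CUSTOM_RULES):
    """Return the first rule that matches the path, or None if none does."""
    for rule in rules:
        if fnmatch(path, rule):
            return rule
    return None


print(matching_rule("checkpoints/SadTalker_V0.0.2_256.safetensors"))  # checkpoints/*
print(matching_rule("docs/FAQ.md"))                                   # None
```

Note that the commit's file list still contains files under `checkpoints/`, so whatever produced this upload evidently did not filter on these rules.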

.ipynb_checkpoints/Untitled-checkpoint.ipynb

ADDED

|

@@ -0,0 +1,6 @@

|

| 1 |

+

{

|

| 2 |

+

"cells": [],

|

| 3 |

+

"metadata": {},

|

| 4 |

+

"nbformat": 4,

|

| 5 |

+

"nbformat_minor": 5

|

| 6 |

+

}

|

LICENSE

ADDED

|

@@ -0,0 +1,21 @@

|

| 1 |

+

MIT License

|

| 2 |

+

|

| 3 |

+

Copyright (c) 2023 Tencent AI Lab

|

| 4 |

+

|

| 5 |

+

Permission is hereby granted, free of charge, to any person obtaining a copy

|

| 6 |

+

of this software and associated documentation files (the "Software"), to deal

|

| 7 |

+

in the Software without restriction, including without limitation the rights

|

| 8 |

+

to use, copy, modify, merge, publish, distribute, sublicense, and/or sell

|

| 9 |

+

copies of the Software, and to permit persons to whom the Software is

|

| 10 |

+

furnished to do so, subject to the following conditions:

|

| 11 |

+

|

| 12 |

+

The above copyright notice and this permission notice shall be included in all

|

| 13 |

+

copies or substantial portions of the Software.

|

| 14 |

+

|

| 15 |

+

THE SOFTWARE IS PROVIDED "AS IS", WITHOUT WARRANTY OF ANY KIND, EXPRESS OR

|

| 16 |

+

IMPLIED, INCLUDING BUT NOT LIMITED TO THE WARRANTIES OF MERCHANTABILITY,

|

| 17 |

+

FITNESS FOR A PARTICULAR PURPOSE AND NONINFRINGEMENT. IN NO EVENT SHALL THE

|

| 18 |

+

AUTHORS OR COPYRIGHT HOLDERS BE LIABLE FOR ANY CLAIM, DAMAGES OR OTHER

|

| 19 |

+

LIABILITY, WHETHER IN AN ACTION OF CONTRACT, TORT OR OTHERWISE, ARISING FROM,

|

| 20 |

+

OUT OF OR IN CONNECTION WITH THE SOFTWARE OR THE USE OR OTHER DEALINGS IN THE

|

| 21 |

+

SOFTWARE.

|

README.md

CHANGED

|

@@ -1,13 +1,268 @@

|

|

| 1 |

-

|

| 2 |

-

|

| 3 |

-

|

| 4 |

-

|

| 5 |

-

|

| 6 |

-

|

| 7 |

-

|

| 8 |

-

|

| 9 |

-

|

| 10 |

-

|

| 11 |

-

|

| 12 |

-

|

| 13 |

-

|

| 1 |

+

<div align="center">

|

| 2 |

+

|

| 3 |

+

<img src='https://user-images.githubusercontent.com/4397546/229094115-862c747e-7397-4b54-ba4a-bd368bfe2e0f.png' width='500px'/>

|

| 4 |

+

|

| 5 |

+

|

| 6 |

+

<!--<h2> 😭 SadTalker: <span style="font-size:12px">Learning Realistic 3D Motion Coefficients for Stylized Audio-Driven Single Image Talking Face Animation </span> </h2> -->

|

| 7 |

+

|

| 8 |

+

<a href='https://arxiv.org/abs/2211.12194'><img src='https://img.shields.io/badge/ArXiv-PDF-red'></a> <a href='https://sadtalker.github.io'><img src='https://img.shields.io/badge/Project-Page-Green'></a> [](https://colab.research.google.com/github/Winfredy/SadTalker/blob/main/quick_demo.ipynb) [](https://huggingface.co/spaces/vinthony/SadTalker) [](https://colab.research.google.com/github/camenduru/stable-diffusion-webui-colab/blob/main/video/stable/stable_diffusion_1_5_video_webui_colab.ipynb) [](https://replicate.com/cjwbw/sadtalker)

|

| 9 |

+

|

| 10 |

+

<div>

|

| 11 |

+

<a target='_blank'>Wenxuan Zhang <sup>*,1,2</sup> </a>

|

| 12 |

+

<a href='https://vinthony.github.io/' target='_blank'>Xiaodong Cun <sup>*,2</sup></a>

|

| 13 |

+

<a href='https://xuanwangvc.github.io/' target='_blank'>Xuan Wang <sup>3</sup></a>

|

| 14 |

+

<a href='https://yzhang2016.github.io/' target='_blank'>Yong Zhang <sup>2</sup></a>

|

| 15 |

+

<a href='https://xishen0220.github.io/' target='_blank'>Xi Shen <sup>2</sup></a>  </br>

|

| 16 |

+

<a href='https://yuguo-xjtu.github.io/' target='_blank'>Yu Guo<sup>1</sup> </a>

|

| 17 |

+

<a href='https://scholar.google.com/citations?hl=zh-CN&user=4oXBp9UAAAAJ' target='_blank'>Ying Shan <sup>2</sup> </a>

|

| 18 |

+

<a target='_blank'>Fei Wang <sup>1</sup> </a>

|

| 19 |

+

</div>

|

| 20 |

+

<br>

|

| 21 |

+

<div>

|

| 22 |

+

<sup>1</sup> Xi'an Jiaotong University   <sup>2</sup> Tencent AI Lab   <sup>3</sup> Ant Group

|

| 23 |

+

</div>

|

| 24 |

+

<br>

|

| 25 |

+

<i><strong><a href='https://arxiv.org/abs/2211.12194' target='_blank'>CVPR 2023</a></strong></i>

|

| 26 |

+

<br>

|

| 27 |

+

<br>

|

| 28 |

+

|

| 29 |

+

|

| 30 |

+

|

| 31 |

+

|

| 32 |

+

<b>TL;DR: single portrait image 🙎♂️ + audio 🎤 = talking head video 🎞.</b>

|

| 33 |

+

|

| 34 |

+

<br>

|

| 35 |

+

|

| 36 |

+

</div>

|

| 37 |

+

|

| 38 |

+

|

| 39 |

+

|

| 40 |

+

## 🔥 Highlight

|

| 41 |

+

|

| 42 |

+

- 🔥 The extension for [stable-diffusion-webui](https://github.com/AUTOMATIC1111/stable-diffusion-webui) is online. Check out more details [here](docs/webui_extension.md).

|

| 43 |

+

|

| 44 |

+

https://user-images.githubusercontent.com/4397546/231495639-5d4bb925-ea64-4a36-a519-6389917dac29.mp4

|

| 45 |

+

|

| 46 |

+

- 🔥 `full image mode` is online! Check out [here](https://github.com/Winfredy/SadTalker#full-bodyimage-generation) for more details.

|

| 47 |

+

|

| 48 |

+

| still+enhancer in v0.0.1 | still + enhancer in v0.0.2 | [input image @bagbag1815](https://twitter.com/bagbag1815/status/1642754319094108161) |

|

| 49 |

+

|:--------------------: |:--------------------: | :----: |

|

| 50 |

+

| <video src="https://user-images.githubusercontent.com/48216707/229484996-5d7be64f-2553-4c9e-a452-c5cf0b8ebafe.mp4" type="video/mp4"> </video> | <video src="https://user-images.githubusercontent.com/4397546/230717873-355b7bf3-d3de-49f9-a439-9220e623fce7.mp4" type="video/mp4"> </video> | <img src='./examples/source_image/full_body_2.png' width='380'>

|

| 51 |

+

|

| 52 |

+

- 🔥 Several new modes, e.g., `still mode`, `reference mode`, and `resize mode`, are online for better and custom applications.

|

| 53 |

+

|

| 54 |

+

- 🔥 Happy to see more community demos at [bilibili](https://search.bilibili.com/all?keyword=sadtalker&from_source=webtop_search&spm_id_from=333.1007&search_source=3

|

| 55 |

+

), [Youtube](https://www.youtube.com/results?search_query=sadtalker&sp=CAM%253D) and [twitter #sadtalker](https://twitter.com/search?q=%23sadtalker&src=typed_query).

|

| 56 |

+

|

| 57 |

+

## 📋 Changelog (the previous changelog can be found [here](docs/changlelog.md))

|

| 58 |

+

|

| 59 |

+

- __[2023.06.12]__: Added more new features to the WebUI extension; see the discussion [here](https://github.com/OpenTalker/SadTalker/discussions/386).

|

| 60 |

+

|

| 61 |

+

- __[2023.06.05]__: Released a new 512 beta face model, fixed some bugs, and improved performance.

|

| 62 |

+

|

| 63 |

+

- __[2023.04.15]__: Adding automatic1111 colab by @camenduru, thanks for this awesome colab: [](https://colab.research.google.com/github/camenduru/stable-diffusion-webui-colab/blob/main/video/stable/stable_diffusion_1_5_video_webui_colab.ipynb).

|

| 64 |

+

|

| 65 |

+

- __[2023.04.12]__: Added a more detailed sd-webui installation document and fixed the reinstallation problem.

|

| 66 |

+

|

| 67 |

+

- __[2023.04.12]__: Fixed the sd-webui safety issues caused by third-party packages and optimized the output path in `sd-webui-extension`.

|

| 68 |

+

|

| 69 |

+

- __[2023.04.08]__: ❗️❗️❗️ In v0.0.2, we add a logo watermark to the generated video to prevent abuse, since the output is very realistic.

|

| 70 |

+

|

| 71 |

+

- __[2023.04.08]__: v0.0.2: full image animation, a Baidu drive link for downloading checkpoints, and optimized enhancer logic.

|

| 72 |

+

|

| 73 |

+

|

| 74 |

+

## 🚧 TODO: See the Discussion https://github.com/OpenTalker/SadTalker/issues/280

|

| 75 |

+

|

| 76 |

+

## If you have any problems, please read our [FAQ](docs/FAQ.md) before opening an issue.

|

| 77 |

+

|

| 78 |

+

|

| 79 |

+

|

| 80 |

+

## ⚙️ 1. Installation.

|

| 81 |

+

|

| 82 |

+

Tutorials from the community: [Chinese Windows tutorial](https://www.bilibili.com/video/BV1Dc411W7V6/) | [Japanese course](https://br-d.fanbox.cc/posts/5685086?utm_campaign=manage_post_page&utm_medium=share&utm_source=twitter)

|

| 83 |

+

|

| 84 |

+

### Linux:

|

| 85 |

+

|

| 86 |

+

1. Install [anaconda](https://www.anaconda.com/), python and git.

|

| 87 |

+

|

| 88 |

+

2. Create the env and install the requirements.

|

| 89 |

+

```bash

|

| 90 |

+

git clone https://github.com/Winfredy/SadTalker.git

|

| 91 |

+

|

| 92 |

+

cd SadTalker

|

| 93 |

+

|

| 94 |

+

conda create -n sadtalker python=3.8

|

| 95 |

+

|

| 96 |

+

conda activate sadtalker

|

| 97 |

+

|

| 98 |

+

pip install torch==1.12.1+cu113 torchvision==0.13.1+cu113 torchaudio==0.12.1 --extra-index-url https://download.pytorch.org/whl/cu113

|

| 99 |

+

|

| 100 |

+

conda install ffmpeg

|

| 101 |

+

|

| 102 |

+

pip install -r requirements.txt

|

| 103 |

+

|

| 104 |

+

### tts is optional for gradio demo.

|

| 105 |

+

### pip install TTS

|

| 106 |

+

|

| 107 |

+

```

|
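After running the Linux steps above, a quick sanity check of the environment can save a failed first run. The sketch below is an assumption-laden helper, not part of SadTalker: `check_environment` is a hypothetical name, and it only reports whether the packages and tools named in the install commands can be located, not whether their versions match.

```python
import importlib.util
import shutil
import sys


def check_environment(modules=("torch", "torchvision", "torchaudio"),
                      tools=("ffmpeg", "git")):
    """Report which Python modules and command-line tools can be found."""
    report = {"python": "%d.%d" % sys.version_info[:2]}
    for name in modules:
        # find_spec returns None when a top-level module is not installed.
        report[name] = importlib.util.find_spec(name) is not None
    for tool in tools:
        # shutil.which returns None when the executable is not on PATH.
        report[tool] = shutil.which(tool) is not None
    return report


for key, value in check_environment().items():
    print(f"{key}: {value}")
```

Run it inside the activated `sadtalker` conda environment; any `False` entry points at a step that still needs to be completed.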

| 108 |

+

### Windows ([Chinese Windows tutorial](https://www.bilibili.com/video/BV1Dc411W7V6/)):

|

| 109 |

+

|

| 110 |

+

1. Install [Python 3.10.6](https://www.python.org/downloads/windows/), checking "Add Python to PATH".

|

| 111 |

+

2. Install [git](https://git-scm.com/download/win) manually (OR `scoop install git` via [scoop](https://scoop.sh/)).

|

| 112 |

+

3. Install `ffmpeg`, following [this instruction](https://www.wikihow.com/Install-FFmpeg-on-Windows) (OR using `scoop install ffmpeg` via [scoop](https://scoop.sh/)).

|

| 113 |

+

4. Download our SadTalker repository, for example by running `git clone https://github.com/Winfredy/SadTalker.git`.

|

| 114 |

+

5. Download the `checkpoint` and `gfpgan` [below↓](https://github.com/Winfredy/SadTalker#-2-download-trained-models).

|

| 115 |

+

6. Run `start.bat` from Windows Explorer as a normal, non-administrator user; a gradio WebUI demo will be started.

|

| 116 |

+

|

| 117 |

+

### Macbook:

|

| 118 |

+

|

| 119 |

+

More tips about installation on Macbook and the Docker file can be found [here](docs/install.md).

|

| 120 |

+

|

| 121 |

+

## 📥 2. Download Trained Models.

|

| 122 |

+

|

| 123 |

+

You can run the following script to put all the models in the right place.

|

| 124 |

+

|

| 125 |

+

```bash

|

| 126 |

+

bash scripts/download_models.sh

|

| 127 |

+

```

|

| 128 |

+

|

| 129 |

+

Other alternatives:

|

| 130 |

+

> We also provide an offline patch (`gfpgan/`), so no model needs to be downloaded when generating.

|

| 131 |

+

|

| 132 |

+

**Google Drive**: download our pre-trained models from [this link (main checkpoints)](https://drive.google.com/file/d/1gwWh45pF7aelNP_P78uDJL8Sycep-K7j/view?usp=sharing) and [gfpgan (offline patch)](https://drive.google.com/file/d/19AIBsmfcHW6BRJmeqSFlG5fL445Xmsyi?usp=sharing).

|

| 133 |

+

|

| 134 |

+

**GitHub Release Page**: download all the files from the [latest GitHub release page](https://github.com/Winfredy/SadTalker/releases), and then put them in `./checkpoints`.

|

| 135 |

+

|

| 136 |

+

**Baidu Netdisk (百度云盘)**: we provide the downloaded models in [checkpoints, extraction code: sadt](https://pan.baidu.com/s/1P4fRgk9gaSutZnn8YW034Q?pwd=sadt) and [gfpgan, extraction code: sadt](https://pan.baidu.com/s/1kb1BCPaLOWX1JJb9Czbn6w?pwd=sadt).

|

| 137 |

+

|

| 138 |

+

|

| 139 |

+

|

| 140 |

+

<details><summary>Model Details</summary>

|

| 141 |

+

|

| 142 |

+

|

| 143 |

+

Model descriptions:

|

| 144 |

+

|

| 145 |

+

##### New version

|

| 146 |

+

| Model | Description

|

| 147 |

+

| :--- | :----------

|

| 148 |

+

|checkpoints/mapping_00229-model.pth.tar | Pre-trained MappingNet in Sadtalker.

|

| 149 |

+

|checkpoints/mapping_00109-model.pth.tar | Pre-trained MappingNet in Sadtalker.

|

| 150 |

+

|checkpoints/SadTalker_V0.0.2_256.safetensors | Packaged SadTalker checkpoints of the old version (256 face render).

|

| 151 |

+

|checkpoints/SadTalker_V0.0.2_512.safetensors | Packaged SadTalker checkpoints of the old version (512 face render).

|

| 152 |

+

|gfpgan/weights | Face detection and enhancement models used in `facexlib` and `gfpgan`.

|

| 153 |

+

|

| 154 |

+

|

| 155 |

+

##### Old version

|

| 156 |

+

| Model | Description

|

| 157 |

+

| :--- | :----------

|

| 158 |

+

|checkpoints/auido2exp_00300-model.pth | Pre-trained ExpNet in Sadtalker.

|

| 159 |

+

|checkpoints/auido2pose_00140-model.pth | Pre-trained PoseVAE in Sadtalker.

|

| 160 |

+

|checkpoints/mapping_00229-model.pth.tar | Pre-trained MappingNet in Sadtalker.

|

| 161 |

+

|checkpoints/mapping_00109-model.pth.tar | Pre-trained MappingNet in Sadtalker.

|

| 162 |

+

|checkpoints/facevid2vid_00189-model.pth.tar | Pre-trained face-vid2vid model from [the reproduction of face-vid2vid](https://github.com/zhanglonghao1992/One-Shot_Free-View_Neural_Talking_Head_Synthesis).

|

| 163 |

+

|checkpoints/epoch_20.pth | Pre-trained 3DMM extractor in [Deep3DFaceReconstruction](https://github.com/microsoft/Deep3DFaceReconstruction).

|

| 164 |

+

|checkpoints/wav2lip.pth | Highly accurate lip-sync model in [Wav2lip](https://github.com/Rudrabha/Wav2Lip).

|

| 165 |

+

|checkpoints/shape_predictor_68_face_landmarks.dat | Face landmark model used in [dlib](http://dlib.net/).

|

| 166 |

+

|checkpoints/BFM | 3DMM library file.

|

| 167 |

+

|checkpoints/hub | Face detection models used in [face alignment](https://github.com/1adrianb/face-alignment).

|

| 168 |

+

|gfpgan/weights | Face detection and enhancement models used in `facexlib` and `gfpgan`.

|

| 169 |

+

|

| 170 |

+

The final folder should look like this:

|

| 171 |

+

|

| 172 |

+

<img width="331" alt="image" src="https://user-images.githubusercontent.com/4397546/232511411-4ca75cbf-a434-48c5-9ae0-9009e8316484.png">

|

| 173 |

+

|

| 174 |

+

|

| 175 |

+

</details>

|
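Whichever download route is used, it can help to confirm that the files from the "New version" table ended up where the README expects them. The following Python sketch does that under the assumption that it is run from the repository root; `missing_models` is a hypothetical helper, and the paths are copied from the table above.

```python
from pathlib import Path

# Paths taken from the "New version" model table above.
EXPECTED = [
    "checkpoints/mapping_00229-model.pth.tar",
    "checkpoints/mapping_00109-model.pth.tar",
    "checkpoints/SadTalker_V0.0.2_256.safetensors",
    "checkpoints/SadTalker_V0.0.2_512.safetensors",
    "gfpgan/weights",
]


def missing_models(root="."):
    """Return the expected paths that do not exist under root."""
    root = Path(root)
    return [rel for rel in EXPECTED if not (root / rel).exists()]


missing = missing_models()
print("all models present" if not missing else f"missing: {missing}")
```

The check only tests for existence; it does not verify file sizes or checksums, so a truncated download would still pass.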

| 176 |

+

|

| 177 |

+

## 🔮 3. Quick Start ([Best Practice](docs/best_practice.md)).

|

| 178 |

+

|

| 179 |

+

### WebUI Demos:

|

| 180 |

+

|

| 181 |

+

**Online**: [Huggingface](https://huggingface.co/spaces/vinthony/SadTalker) | [SDWebUI-Colab](https://colab.research.google.com/github/camenduru/stable-diffusion-webui-colab/blob/main/video/stable/stable_diffusion_1_5_video_webui_colab.ipynb) | [Colab](https://colab.research.google.com/github/Winfredy/SadTalker/blob/main/quick_demo.ipynb)

|

| 182 |

+

|

| 183 |

+

**Local Automatic1111 stable-diffusion webui extension**: please refer to the [Automatic1111 stable-diffusion webui docs](docs/webui_extension.md).

|

| 184 |

+

|

| 185 |

+

**Local gradio demo (highly recommended!)**: a demo similar to our [hugging-face demo](https://huggingface.co/spaces/vinthony/SadTalker) can be run by:

|

| 186 |

+

|

| 187 |

+

```bash

|

| 188 |

+

## you need to manually install TTS (https://github.com/coqui-ai/TTS) via `pip install tts` in advance.

|

| 189 |

+

python app.py

|

| 190 |

+

```

|

| 191 |

+

|

| 192 |

+

**Local gradio demo (highly recommended!)**:

|

| 193 |

+

|

| 194 |

+

- Windows: just double-click `webui.bat`; the requirements will be installed automatically.

|

| 195 |

+

- Linux/Mac OS: run `bash webui.sh` to start the webui.

|

| 196 |

+

|

| 197 |

+

|

| 198 |

+

### Manual usage:

|

| 199 |

+

|

| 200 |

+

##### Animating a portrait image from default config:

|

| 201 |

+

```bash

|

| 202 |

+

python inference.py --driven_audio <audio.wav> \

|

| 203 |

+

--source_image <video.mp4 or picture.png> \

|

| 204 |

+

--enhancer gfpgan

|

| 205 |

+

```

|

| 206 |

+

The results will be saved in `results/$SOME_TIMESTAMP/*.mp4`.

|
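Because each run writes into a new timestamped folder under `results/`, it is handy to locate the newest video programmatically. This is a minimal sketch assuming the `results/<timestamp>/*.mp4` layout described above; `latest_result` is a hypothetical helper that picks the file with the most recent modification time.

```python
from pathlib import Path


def latest_result(results_dir="results"):
    """Return the most recently modified .mp4 one level below results_dir, or None."""
    videos = list(Path(results_dir).glob("*/*.mp4"))
    if not videos:
        return None
    # Pick by modification time rather than by parsing the folder name.
    return max(videos, key=lambda p: p.stat().st_mtime)


print(latest_result())
```

Sorting by modification time avoids depending on the exact timestamp format of the folder names, which the README does not specify.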

| 207 |

+

|

| 208 |

+

##### Full body/image Generation:

|

| 209 |

+

|

| 210 |

+

Use `--still` to generate a natural full body video. You can add `--enhancer` to improve the quality of the generated video.

|

| 211 |

+

|

| 212 |

+

```bash

|

| 213 |

+

python inference.py --driven_audio <audio.wav> \

|

| 214 |

+

--source_image <video.mp4 or picture.png> \

|

| 215 |

+

--result_dir <a folder to store results> \

|

| 216 |

+

--still \

|

| 217 |

+

--preprocess full \

|

| 218 |

+

--enhancer gfpgan

|

| 219 |

+

```

|
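When scripting several runs, the two command lines above can be assembled from Python instead of being typed by hand. The sketch below only builds the argument list using the flags shown in this README (`--driven_audio`, `--source_image`, `--result_dir`, `--still`, `--preprocess`, `--enhancer`); `build_command` is a hypothetical helper, and the example paths are files listed in this commit.

```python
import shlex


def build_command(driven_audio, source_image, result_dir=None,
                  still=False, preprocess=None, enhancer=None):
    """Assemble an inference.py command line from the flags shown in the README."""
    cmd = ["python", "inference.py",
           "--driven_audio", driven_audio,
           "--source_image", source_image]
    if result_dir:
        cmd += ["--result_dir", result_dir]
    if still:
        cmd.append("--still")
    if preprocess:
        cmd += ["--preprocess", preprocess]
    if enhancer:
        cmd += ["--enhancer", enhancer]
    return cmd


cmd = build_command(
    "examples/driven_audio/bus_chinese.wav",
    "examples/source_image/art_0.png",
    result_dir="results",
    still=True,
    preprocess="full",
    enhancer="gfpgan",
)
print(shlex.join(cmd))
```

The resulting list can be passed to `subprocess.run(cmd, check=True)` from the repository root; passing a list rather than a shell string avoids quoting problems with paths that contain spaces.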

| 220 |

+

|

| 221 |

+

More examples, configurations, and tips can be found in the [ >>> best practice documents <<<](docs/best_practice.md).

|

| 222 |

+

|

| 223 |

+

## 🛎 Citation

|

| 224 |

+

|

| 225 |

+

If you find our work useful in your research, please consider citing:

|

| 226 |

+

|

| 227 |

+

```bibtex

|

| 228 |

+

@article{zhang2022sadtalker,

|

| 229 |

+

title={SadTalker: Learning Realistic 3D Motion Coefficients for Stylized Audio-Driven Single Image Talking Face Animation},

|

| 230 |

+

author={Zhang, Wenxuan and Cun, Xiaodong and Wang, Xuan and Zhang, Yong and Shen, Xi and Guo, Yu and Shan, Ying and Wang, Fei},

|

| 231 |

+

journal={arXiv preprint arXiv:2211.12194},

|

| 232 |

+

year={2022}

|

| 233 |

+

}

|

| 234 |

+

```

|

| 235 |

+

|

| 236 |

+

|

| 237 |

+

|

| 238 |

+

## 💗 Acknowledgements

|

| 239 |

+

|

| 240 |

+

Facerender code borrows heavily from [zhanglonghao's reproduction of face-vid2vid](https://github.com/zhanglonghao1992/One-Shot_Free-View_Neural_Talking_Head_Synthesis) and [PIRender](https://github.com/RenYurui/PIRender). We thank the authors for sharing their wonderful code. In the training process, we also use the models from [Deep3DFaceReconstruction](https://github.com/microsoft/Deep3DFaceReconstruction) and [Wav2lip](https://github.com/Rudrabha/Wav2Lip). We thank them for their wonderful work.

|

| 241 |

+

|

| 242 |

+

See also these wonderful third-party libraries we use:

|

| 243 |

+

|

| 244 |

+

- **Face Utils**: https://github.com/xinntao/facexlib

|

| 245 |

+

- **Face Enhancement**: https://github.com/TencentARC/GFPGAN

|

| 246 |

+

- **Image/Video Enhancement**:https://github.com/xinntao/Real-ESRGAN

|

| 247 |

+

|

| 248 |

+

## 🥂 Extensions:

- [SadTalker-Video-Lip-Sync](https://github.com/Zz-ww/SadTalker-Video-Lip-Sync) from [@Zz-ww](https://github.com/Zz-ww): SadTalker for Video Lip Editing

## 🥂 Related Works

- [StyleHEAT: One-Shot High-Resolution Editable Talking Face Generation via Pre-trained StyleGAN (ECCV 2022)](https://github.com/FeiiYin/StyleHEAT)
- [CodeTalker: Speech-Driven 3D Facial Animation with Discrete Motion Prior (CVPR 2023)](https://github.com/Doubiiu/CodeTalker)
- [VideoReTalking: Audio-based Lip Synchronization for Talking Head Video Editing In the Wild (SIGGRAPH Asia 2022)](https://github.com/vinthony/video-retalking)
- [DPE: Disentanglement of Pose and Expression for General Video Portrait Editing (CVPR 2023)](https://github.com/Carlyx/DPE)
- [3D GAN Inversion with Facial Symmetry Prior (CVPR 2023)](https://github.com/FeiiYin/SPI/)
- [T2M-GPT: Generating Human Motion from Textual Descriptions with Discrete Representations (CVPR 2023)](https://github.com/Mael-zys/T2M-GPT)

## 📢 Disclaimer

This is not an official product of Tencent. This repository can only be used for personal/research/non-commercial purposes.

LOGO: color and font suggestion: [ChatGPT](ai.com), logo font: [Montserrat Alternates](https://fonts.google.com/specimen/Montserrat+Alternates?preview.text=SadTalker&preview.text_type=custom&query=mont).

All demo images and audio come from community users or were generated with Stable Diffusion. Feel free to contact us if you feel uncomfortable.

Untitled.ipynb

ADDED


@@ -0,0 +1,533 @@


{
 "cells": [
  {
   "cell_type": "code",
   "execution_count": 1,
   "id": "c69a901b-8c3f-4188-97bd-5594c4496ec5",
   "metadata": {},
   "outputs": [],
   "source": [
    "from huggingface_hub import HfApi\n",
    "api = HfApi()"
   ]
  },

  {
   "cell_type": "code",
   "execution_count": 3,
   "id": "8b249adc-ccd0-4145-86ce-64509ad276cf",
   "metadata": {},
   "outputs": [
    {
     "data": {
      "application/vnd.jupyter.widget-view+json": {
       "model_id": "faf7b41b81e54705bae8921f2a86e9fd",
       "version_major": 2,
       "version_minor": 0
      },
      "text/plain": [
       "VBox(children=(HTML(value='<center> <img\\nsrc=https://huggingface.co/front/assets/huggingface_logo-noborder.sv…"
      ]
     },
     "metadata": {},
     "output_type": "display_data"
    }
   ],
   "source": [
    "from huggingface_hub import login\n",
    "login()"
   ]
  },

  {
   "cell_type": "code",
   "execution_count": null,
   "id": "41815b66-b12b-45da-9a5e-92c527fe2b4a",
   "metadata": {},
   "outputs": [
    {
     "data": {
      "application/vnd.jupyter.widget-view+json": {
       "model_id": "6bb4c00dda664acd9f70e029e15dbd57",
       "version_major": 2,
       "version_minor": 0
      },
      "text/plain": [
       "SadTalker_V0.0.2_512.safetensors: 0%| | 0.00/725M [00:00<?, ?B/s]"
      ]
     },
     "metadata": {},
     "output_type": "display_data"
    },
    {
     "data": {
      "application/vnd.jupyter.widget-view+json": {
       "model_id": "b9a78d85c20642ac86da6b6d0ab97031",
       "version_major": 2,
       "version_minor": 0
      },
      "text/plain": [
       "mapping_00109-model.pth.tar: 0%| | 0.00/156M [00:00<?, ?B/s]"
      ]
     },
     "metadata": {},
     "output_type": "display_data"
    },
    {
     "data": {
      "application/vnd.jupyter.widget-view+json": {
       "model_id": "d396b1372ea04ae79dd82677c1c33352",
       "version_major": 2,
       "version_minor": 0
      },
      "text/plain": [
       "Upload 31 LFS files: 0%| | 0/31 [00:00<?, ?it/s]"
      ]
     },
     "metadata": {},
     "output_type": "display_data"
    },
    {
     "data": {
      "application/vnd.jupyter.widget-view+json": {
       "model_id": "7b924c6f560b40b78152a87f5c388d6b",
       "version_major": 2,
       "version_minor": 0
      },
      "text/plain": [
       "mapping_00229-model.pth.tar: 0%| | 0.00/156M [00:00<?, ?B/s]"
      ]
     },
     "metadata": {},
     "output_type": "display_data"
    },
    {
     "data": {
      "application/vnd.jupyter.widget-view+json": {
       "model_id": "4245c014a4d04d6daacd47d7d81c6249",
       "version_major": 2,
       "version_minor": 0
      },
      "text/plain": [
       "SadTalker_V0.0.2_256.safetensors: 0%| | 0.00/725M [00:00<?, ?B/s]"
      ]
     },
     "metadata": {},
     "output_type": "display_data"
    },
    {
     "data": {
      "application/vnd.jupyter.widget-view+json": {
       "model_id": "d7f2b0a6b3ed4d5e895da21237f42577",
       "version_major": 2,
       "version_minor": 0
      },
      "text/plain": [
       "example_crop.gif: 0%| | 0.00/1.55M [00:00<?, ?B/s]"
      ]
     },
     "metadata": {},
     "output_type": "display_data"
    },
    {
     "data": {
      "application/vnd.jupyter.widget-view+json": {
       "model_id": "24ea098c821c4fc79ffa38b2fe5a1575",
       "version_major": 2,
       "version_minor": 0
      },
      "text/plain": [
       "example_crop_still.gif: 0%| | 0.00/1.25M [00:00<?, ?B/s]"
      ]
     },
     "metadata": {},
     "output_type": "display_data"
    },
    {
     "data": {
      "application/vnd.jupyter.widget-view+json": {
       "model_id": "b828fea9017846748cbe423f1b5fada7",
       "version_major": 2,
       "version_minor": 0
      },
      "text/plain": [
       "example_full.gif: 0%| | 0.00/1.46M [00:00<?, ?B/s]"
      ]
     },
     "metadata": {},
     "output_type": "display_data"
    },
    {
     "data": {
      "application/vnd.jupyter.widget-view+json": {
       "model_id": "a0b664fbf3f8465d9b286290e5f14d8f",
       "version_major": 2,
       "version_minor": 0
      },
      "text/plain": [
       "example_full_enhanced.gif: 0%| | 0.00/5.78M [00:00<?, ?B/s]"
      ]
     },
     "metadata": {},
     "output_type": "display_data"
    },
    {
     "data": {
      "application/vnd.jupyter.widget-view+json": {
       "model_id": "e7483527580845429ce33f4f987e7ddb",
       "version_major": 2,
       "version_minor": 0
      },
      "text/plain": [
       "free_view_result.gif: 0%| | 0.00/5.61M [00:00<?, ?B/s]"
      ]
     },
     "metadata": {},
     "output_type": "display_data"
    },
    {
     "data": {
      "application/vnd.jupyter.widget-view+json": {
       "model_id": "7662c74dc37e4e8a9e756da854c7267a",
       "version_major": 2,
       "version_minor": 0
      },
      "text/plain": [
       "resize_good.gif: 0%| | 0.00/1.73M [00:00<?, ?B/s]"
      ]
     },
     "metadata": {},
     "output_type": "display_data"
    },
    {
     "data": {
      "application/vnd.jupyter.widget-view+json": {