
Commit e88cdb9 · Parent(s): 61b5cf5

upload space files

Files changed:

- README.md +3 -3
- app.py +53 -0
- requirements.txt +5 -0
- test_images/IMG_1879_jpg.rf.c0e9cd93962f7cf2df6fbeef63ab6ed9.jpg +0 -0
- test_images/IMG_1906_jpg.rf.e3d1ef9a4c55d6576e95ba057984d204.jpg +0 -0
- test_images/IMG_1931_jpg.rf.16f42f6d309c3de5661625af454aeb0c.jpg +0 -0
- test_images/IMG_2016_jpg.rf.6013a2119e90b56bb2a07d83954dc637.jpg +0 -0
- test_images/IMG_2023_jpg.rf.01d180bc2ed3d5bab7b0026cd8e4c09a.jpg +0 -0
- test_images/IMG_2034_jpg.rf.40ab3489487d018d0112f49622f0f9a0.jpg +0 -0

README.md (CHANGED)

```diff
@@ -1,7 +1,7 @@
 ---
-title: Clash
-emoji:
-colorFrom:
+title: Clash of Clans Object Detection
+emoji: 🎮
+colorFrom: red
 colorTo: gray
 sdk: gradio
 sdk_version: 3.15.0
```
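The Space configuration lives in the README's YAML front matter. To check those fields locally (for example, that `sdk_version` agrees with the `gradio==3.15.0` pin in requirements.txt), a minimal stdlib-only sketch can pull out the flat `key: value` pairs. The function name `parse_front_matter` is hypothetical, and it deliberately handles only single-line scalar values, not full YAML; a real YAML parser is the safer choice for anything more complex.

```python
from typing import Dict


def parse_front_matter(text: str) -> Dict[str, str]:
    """Extract flat `key: value` pairs from a leading `---` block.

    Sketch only: single-line scalars are supported; lists, nested
    mappings and multi-line YAML values are not.
    """
    lines = text.splitlines()
    if not lines or lines[0].strip() != "---":
        return {}  # no front matter at the top of the file
    meta: Dict[str, str] = {}
    for line in lines[1:]:
        if line.strip() == "---":
            break  # closing delimiter ends the front matter
        key, sep, value = line.partition(":")  # split on the first colon only
        if sep:
            meta[key.strip()] = value.strip()
    return meta
```

If the README does not start with `---`, the function returns an empty dict; if the closing delimiter is missing, it reads to the end of the text.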

app.py (ADDED, `@@ -0,0 +1,53 @@`)

```python
import json
import gradio as gr
import yolov5
from PIL import Image
from huggingface_hub import hf_hub_download

app_title = "Clash of Clans Object Detection"
models_ids = ['keremberke/yolov5n-clash-of-clans', 'keremberke/yolov5s-clash-of-clans', 'keremberke/yolov5m-clash-of-clans']
article = f"<p style='text-align: center'> <a href='https://huggingface.co/{models_ids[-1]}'>huggingface.co/{models_ids[-1]}</a> | <a href='https://huggingface.co/keremberke/clash-of-clans-object-detection'>huggingface.co/keremberke/clash-of-clans-object-detection</a> | <a href='https://github.com/keremberke/awesome-yolov5-models'>awesome-yolov5-models</a> </p>"

current_model_id = models_ids[-1]
model = yolov5.load(current_model_id)

examples = [['test_images/IMG_1879_jpg.rf.c0e9cd93962f7cf2df6fbeef63ab6ed9.jpg', 0.25, 'keremberke/yolov5m-clash-of-clans'], ['test_images/IMG_1906_jpg.rf.e3d1ef9a4c55d6576e95ba057984d204.jpg', 0.25, 'keremberke/yolov5m-clash-of-clans'], ['test_images/IMG_1931_jpg.rf.16f42f6d309c3de5661625af454aeb0c.jpg', 0.25, 'keremberke/yolov5m-clash-of-clans'], ['test_images/IMG_2016_jpg.rf.6013a2119e90b56bb2a07d83954dc637.jpg', 0.25, 'keremberke/yolov5m-clash-of-clans'], ['test_images/IMG_2023_jpg.rf.01d180bc2ed3d5bab7b0026cd8e4c09a.jpg', 0.25, 'keremberke/yolov5m-clash-of-clans'], ['test_images/IMG_2034_jpg.rf.40ab3489487d018d0112f49622f0f9a0.jpg', 0.25, 'keremberke/yolov5m-clash-of-clans']]


def predict(image, threshold=0.25, model_id=None):
    # update model if required
    global current_model_id
    global model
    if model_id != current_model_id:
        model = yolov5.load(model_id)
        current_model_id = model_id

    # get model input size
    config_path = hf_hub_download(repo_id=model_id, filename="config.json")
    with open(config_path, "r") as f:
        config = json.load(f)
    input_size = config["input_size"]

    # perform inference
    model.conf = threshold
    results = model(image, size=input_size)
    numpy_image = results.render()[0]
    output_image = Image.fromarray(numpy_image)
    return output_image


gr.Interface(
    title=app_title,
    description="Created by 'keremberke'",
    article=article,
    fn=predict,
    inputs=[
        gr.Image(type="pil"),
        gr.Slider(maximum=1, step=0.01, value=0.25),
        gr.Dropdown(models_ids, value=models_ids[-1]),
    ],
    outputs=gr.Image(type="pil"),
    examples=examples,
    cache_examples=True if examples else False,
).launch(enable_queue=True)
```
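`predict` swaps a single global model whenever the dropdown changes and downloads and parses `config.json` on every request. It also forwards `model_id=None` unchanged to `yolov5.load` when called without a model id (the Gradio dropdown always supplies one, so this only matters for direct calls). One alternative is a per-id cache that loads each model and its `input_size` once. The sketch below is an assumption, not the author's code: `ModelCache` is a hypothetical name, and the loader and config reader are injected callables so the logic can be exercised without downloading weights. In app.py they would wrap `yolov5.load` and `hf_hub_download`, as the comment shows.

```python
from typing import Any, Callable, Dict, Optional, Tuple


class ModelCache:
    """Load each model id (and its input size) at most once.

    Hypothetical wiring in app.py (not part of the original commit):
        def read_config(model_id):
            path = hf_hub_download(repo_id=model_id, filename="config.json")
            with open(path) as f:
                return json.load(f)
        cache = ModelCache(yolov5.load, read_config, models_ids[-1])
        model, input_size = cache.get(model_id)
    """

    def __init__(
        self,
        load_model: Callable[[str], Any],
        read_config: Callable[[str], Dict[str, Any]],
        default_id: str,
    ) -> None:
        self._load_model = load_model
        self._read_config = read_config
        self.default_id = default_id
        self._cache: Dict[str, Tuple[Any, int]] = {}

    def get(self, model_id: Optional[str] = None) -> Tuple[Any, int]:
        # Fall back to the default id instead of passing None to the loader.
        model_id = model_id or self.default_id
        if model_id not in self._cache:
            model = self._load_model(model_id)
            input_size = int(self._read_config(model_id)["input_size"])
            self._cache[model_id] = (model, input_size)
        return self._cache[model_id]
```

The trade-off is memory. The original keeps one model resident at a time, while this cache keeps every model that has been requested. With only the three YOLOv5 variants listed in `models_ids`, that is bounded, but it still costs more than the single-model approach.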

requirements.txt (ADDED, `@@ -0,0 +1,5 @@`)

```text
yolov5==7.0.5
gradio==3.15.0
torch
huggingface-hub
```
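Only `yolov5` and `gradio` are pinned; `torch` and `huggingface-hub` resolve to whatever versions are current when the Space is built, so a rebuild can pick up different versions than the ones this commit was tested with. A minimal sketch for comparing the file against an environment uses `importlib.metadata` from the standard library. The helper names are hypothetical, and the parser deliberately handles only bare names and exact `==` pins, which is all this requirements.txt uses.

```python
from importlib import metadata
from typing import Dict, Optional


def parse_pins(requirements: str) -> Dict[str, Optional[str]]:
    """Map each requirement name to its `==` pin, or None if unpinned.

    Sketch only: other specifiers (>=, extras, markers) are not parsed.
    """
    pins: Dict[str, Optional[str]] = {}
    for raw in requirements.splitlines():
        line = raw.split("#", 1)[0].strip()  # drop comments and whitespace
        if not line:
            continue
        name, sep, version = line.partition("==")
        pins[name.strip()] = version.strip() if sep else None
    return pins


def report(pins: Dict[str, Optional[str]]) -> Dict[str, str]:
    """Describe each requirement relative to the installed distributions."""
    status: Dict[str, str] = {}
    for name, pinned in pins.items():
        try:
            installed = metadata.version(name)
        except metadata.PackageNotFoundError:
            status[name] = "not installed"
            continue
        if pinned is None:
            status[name] = f"unpinned (installed {installed})"
        elif installed == pinned:
            status[name] = "ok"
        else:
            status[name] = f"pinned {pinned}, installed {installed}"
    return status
```

For example, `report(parse_pins(open("requirements.txt").read()))` inside the Space's environment shows which torch and huggingface-hub versions the build actually resolved.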

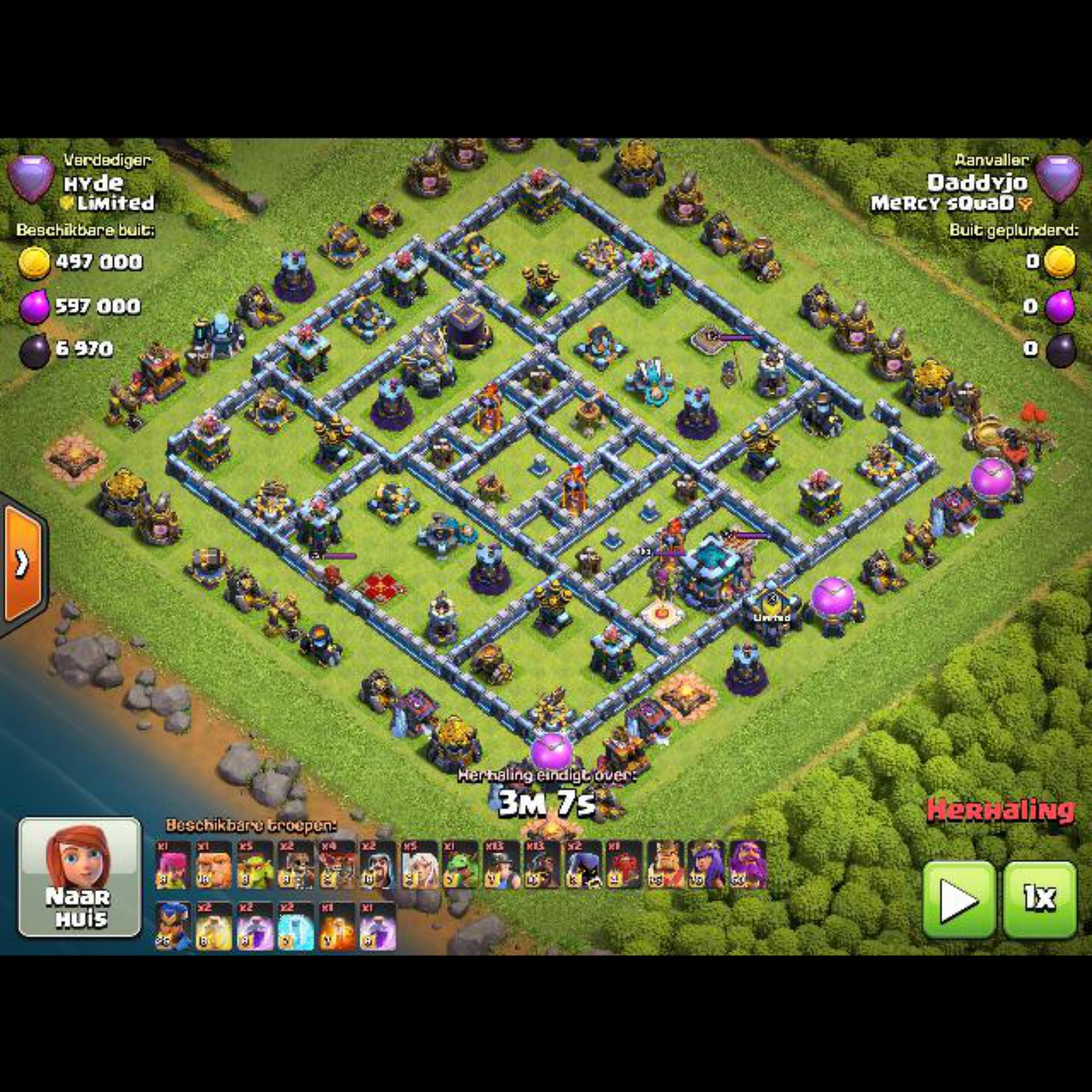

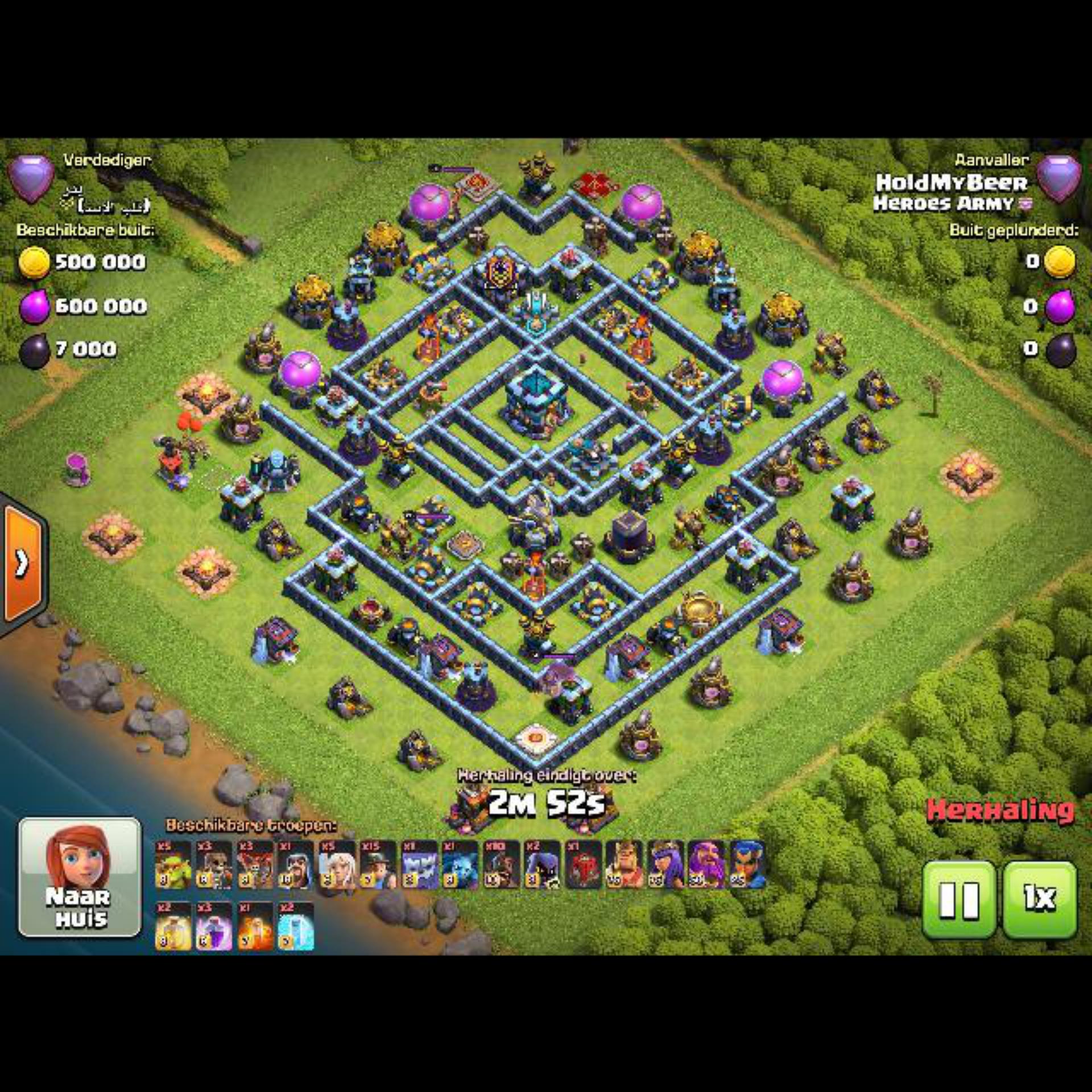

Binary files (ADDED, no text diff):

- test_images/IMG_1879_jpg.rf.c0e9cd93962f7cf2df6fbeef63ab6ed9.jpg
- test_images/IMG_1906_jpg.rf.e3d1ef9a4c55d6576e95ba057984d204.jpg
- test_images/IMG_1931_jpg.rf.16f42f6d309c3de5661625af454aeb0c.jpg
- test_images/IMG_2016_jpg.rf.6013a2119e90b56bb2a07d83954dc637.jpg
- test_images/IMG_2023_jpg.rf.01d180bc2ed3d5bab7b0026cd8e4c09a.jpg
- test_images/IMG_2034_jpg.rf.40ab3489487d018d0112f49622f0f9a0.jpg
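app.py hard-codes one `examples` row per test image, each with threshold `0.25` and the `yolov5m` model id. A small sketch can build the same rows by scanning the directory, so adding or removing an image does not require editing the list. `build_examples` is a hypothetical helper, not part of the commit; it matches `*.jpg` case-sensitively and sorts by path for a stable order.

```python
from pathlib import Path
from typing import List, Union


def build_examples(
    image_dir: Union[str, Path], threshold: float, model_id: str
) -> List[list]:
    """Return example rows of the form [image_path, threshold, model_id]."""
    paths = sorted(Path(image_dir).glob("*.jpg"))  # sorted for a stable order
    return [[str(p), threshold, model_id] for p in paths]
```

Because the six committed filenames already sort in the order they appear in the hard-coded list, `build_examples("test_images", 0.25, models_ids[-1])` would produce the same rows on a POSIX filesystem.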