Jason Adrian committed on

Commit 95b697c

Parent(s): c80976f

Changes on layout

Browse files- app.py +52 -2

- figures/resnet-residual-block-for-resnet18-from-scratch-using-pytorch.png +0 -0

- figures/resnet18-basic-blocks-1.png +0 -0

- index.html +51 -0

- sample/1.2.392.200036.9125.4.0.1964921730.2349552188.1786966286.dcm.jpeg +0 -0

- sample/10.127.133.1137.156.1251.20190404101039.dcm.jpeg +0 -0

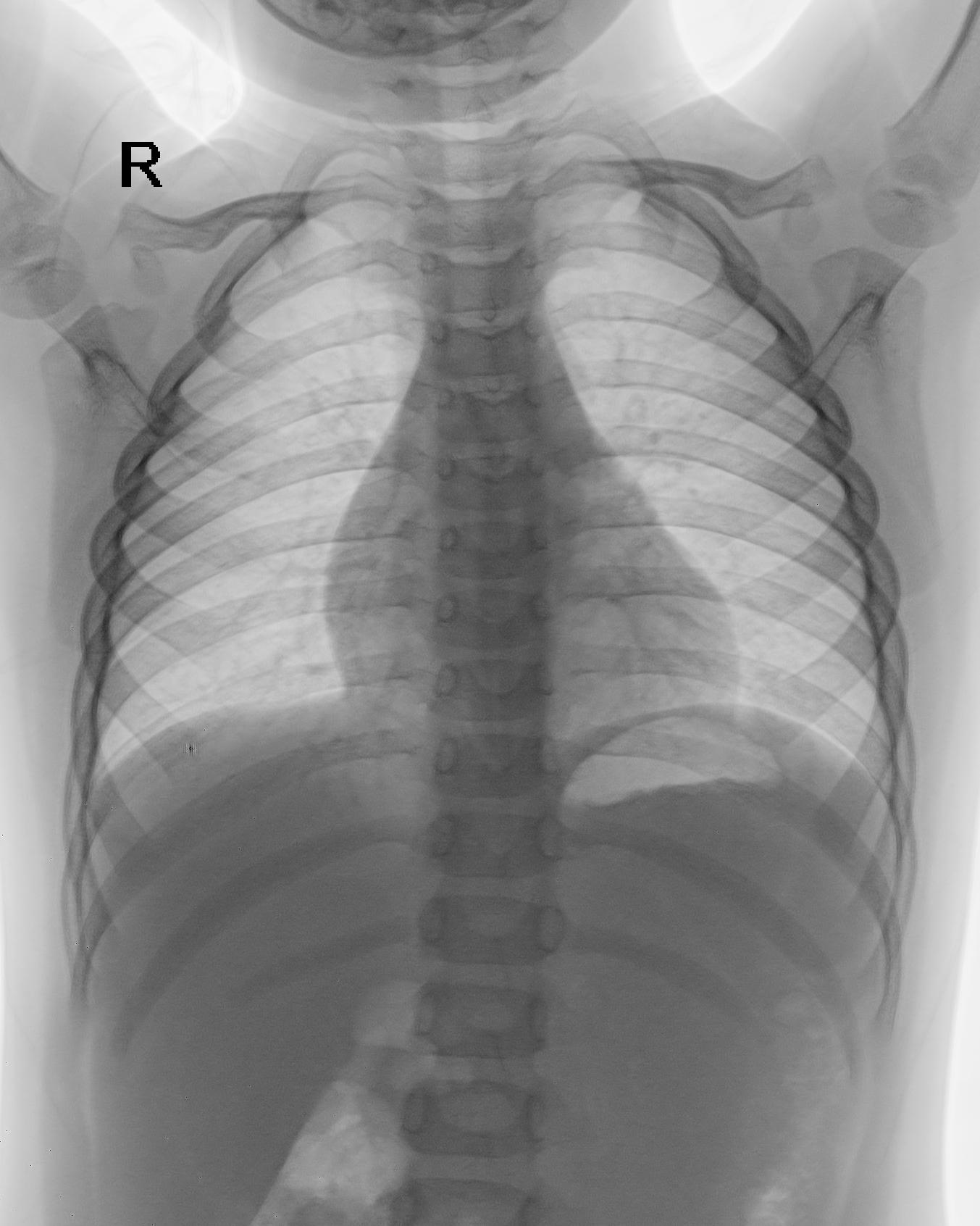

- sample/1b6a707131f787fe37d3ea40d2011d43.dicom.jpeg +0 -0

- sample/2e3204c2bb7a8fcdd6ec1ed547e2967e.dicom.jpeg +0 -0

- sample/badaec3e4d5f382ebf0b51ba2c917cea.dicom.jpeg +0 -0

- style.css +83 -0

- utils/page_utils.py +51 -0

app.py

CHANGED

@@ -4,6 +4,7 @@ from torchvision.transforms import transforms
 import numpy as np
 from typing import Optional
 import torch.nn as nn
+import os

 class BasicBlock(nn.Module):
     """ResNet Basic Block.
@@ -148,6 +149,8 @@ model.eval()
 class_names = ['abdominal', 'adult', 'others', 'pediatric', 'spine']
 class_names.sort()

+examples_dir = "sample"
+
 transformation_pipeline = transforms.Compose([
     transforms.ToPILImage(),
     transforms.Grayscale(num_output_channels=1),
@@ -206,5 +209,52 @@ def image_classifier(inp):

     return labeled_result

-
-
+# gradio code block for input and output
+with gr.Blocks() as app:
+    gr.Markdown("# Lung Cancer Classification")
+
+with open('index.html', encoding="utf-8") as f:
+    description = f.read()
+
+# gradio code block for input and output
+with gr.Blocks(theme=gr.themes.Default(primary_hue=page_utils.KALBE_THEME_COLOR, secondary_hue=page_utils.KALBE_THEME_COLOR).set(
+    button_primary_background_fill="*primary_600",
+    button_primary_background_fill_hover="*primary_500",
+    button_primary_text_color="white",
+)) as app:
+    with gr.Column():
+        gr.HTML(description)
+
+    with gr.Row():
+        with gr.Column():
+            inp_img = gr.Image()
+            with gr.Row():
+                clear_btn = gr.Button(value="Clear")
+                process_btn = gr.Button(value="Process", variant="primary")
+        with gr.Column():
+            out_txt = gr.Label(label="Probabilities", num_top_classes=3)
+
+    process_btn.click(image_classifier, inputs=inp_img, outputs=out_txt)
+    clear_btn.click(lambda:(
+        gr.update(value=None),
+        gr.update(value=None)
+    ),
+    inputs=None,
+    outputs=[inp_img, out_txt])
+
+    gr.Markdown("## Image Examples")
+    gr.Examples(
+        examples=[os.path.join(examples_dir, "1.2.392.200036.9125.4.0.1964921730.2349552188.1786966286.dcm.jpeg"),
+                  os.path.join(examples_dir, "1b6a707131f787fe37d3ea40d2011d43.dicom.jpeg"),
+                  os.path.join(examples_dir, "2e3204c2bb7a8fcdd6ec1ed547e2967e.dicom.jpeg"),
+                  os.path.join(examples_dir, "10.127.133.1137.156.1251.20190404101039.dcm.jpeg"),
+                  os.path.join(examples_dir, "badaec3e4d5f382ebf0b51ba2c917cea.dicom.jpeg"),
+                  ],
+        inputs=inp_img,
+        outputs=out_txt,
+        fn=image_classifier,
+        cache_examples=False,
+    )
+
+# demo = gr.Interface(fn=image_classifier, inputs="image", outputs="label")
+app.launch(share=True)
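The `gr.Examples` call in this hunk lists five file names under `examples_dir` by hand. As a minimal sketch, assuming every image stored directly in the `sample` folder is meant to be offered as an example, the same list could be collected from disk instead. The helper name `list_example_images` and its extension filter are hypothetical and not part of this commit.

```python
import os
from typing import List, Tuple


def list_example_images(
    examples_dir: str,
    extensions: Tuple[str, ...] = (".jpeg", ".jpg", ".png"),
) -> List[str]:
    """Return sorted paths of image files found directly in examples_dir.

    Hypothetical helper for illustration; the commit lists each file by hand.
    """
    if not os.path.isdir(examples_dir):
        # A missing folder yields no examples instead of raising.
        return []
    paths = []
    for name in sorted(os.listdir(examples_dir)):
        full_path = os.path.join(examples_dir, name)
        # Keep regular files whose extension matches (case-insensitive).
        if os.path.isfile(full_path) and name.lower().endswith(extensions):
            paths.append(full_path)
    return paths
```

The returned flat list of paths has the same shape as the hand-written `examples=[...]` argument, so it could be passed in its place. The trade-off is that any image dropped into the folder would then appear in the demo, which may or may not be desired.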

figures/resnet-residual-block-for-resnet18-from-scratch-using-pytorch.png

ADDED

figures/resnet18-basic-blocks-1.png

ADDED

index.html

ADDED

|

@@ -0,0 +1,51 @@

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

| 1 |

+

<!DOCTYPE html>

|

| 2 |

+

<html>

|

| 3 |

+

<head>

|

| 4 |

+

<link rel="stylesheet" href="file/style.css" />

|

| 5 |

+

<link rel="preconnect" href="https://fonts.googleapis.com" />

|

| 6 |

+

<link rel="preconnect" href="https://fonts.gstatic.com" crossorigin />

|

| 7 |

+

<link href="https://fonts.googleapis.com/css2?family=Source+Sans+Pro:wght@400;600;700&display=swap" rel="stylesheet" />

|

| 8 |

+

<title><strong>Body Part Classification</strong></title>

|

| 9 |

+

</head>

|

| 10 |

+

<body>

|

| 11 |

+

<div class="container">

|

| 12 |

+

<h1 class="title"><strong> Body Part Classification</strong></h1>

|

| 13 |

+

<h2 class="subtitle"><strong>Kalbe Digital Lab</strong></h2>

|

| 14 |

+

<section class="overview">

|

| 15 |

+

<div class="grid-container">

|

| 16 |

+

<h3 class="overview-heading"><span class="vl">Overview</span></h3>

|

| 17 |

+

<p class="overview-content">

|

| 18 |

+

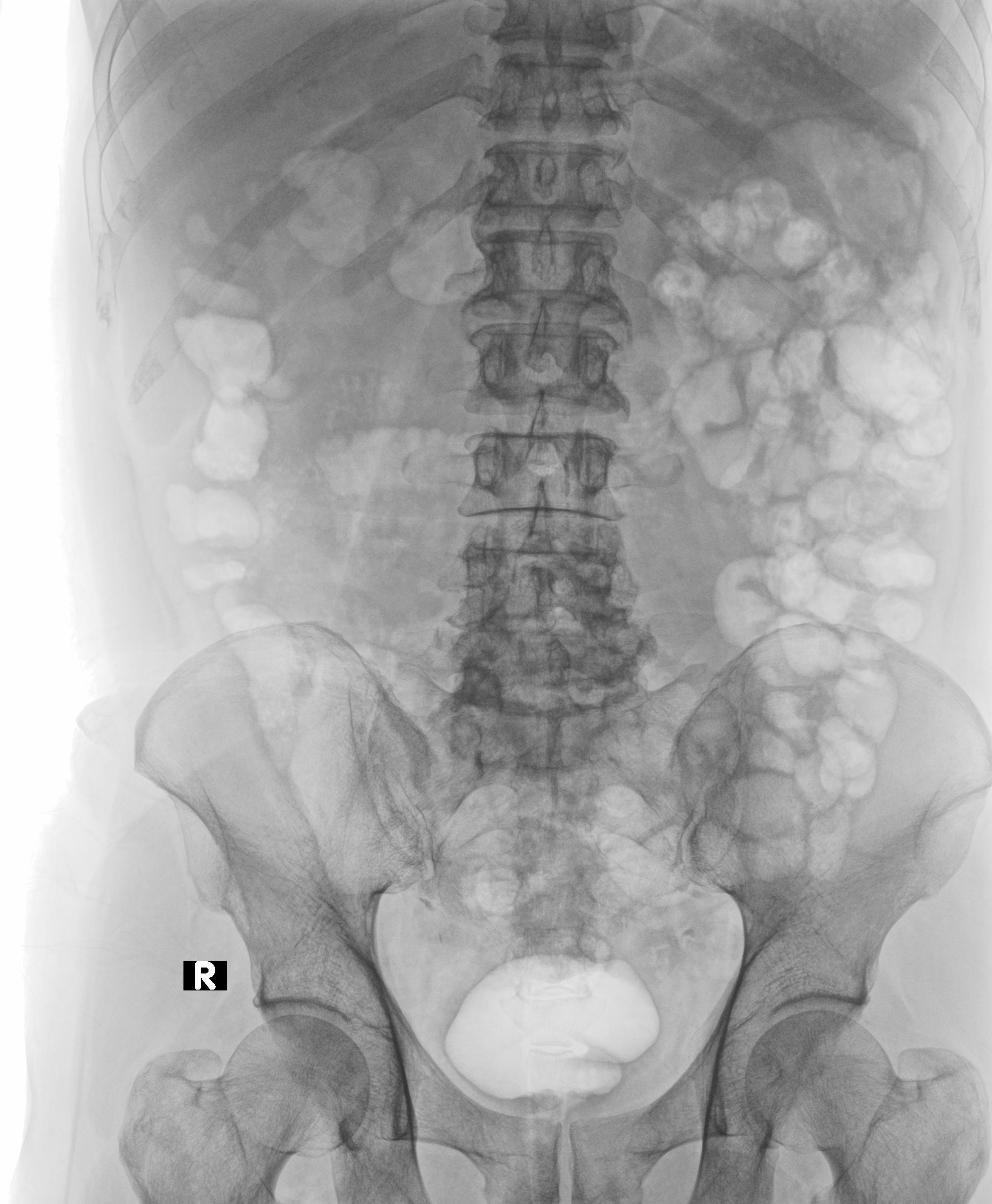

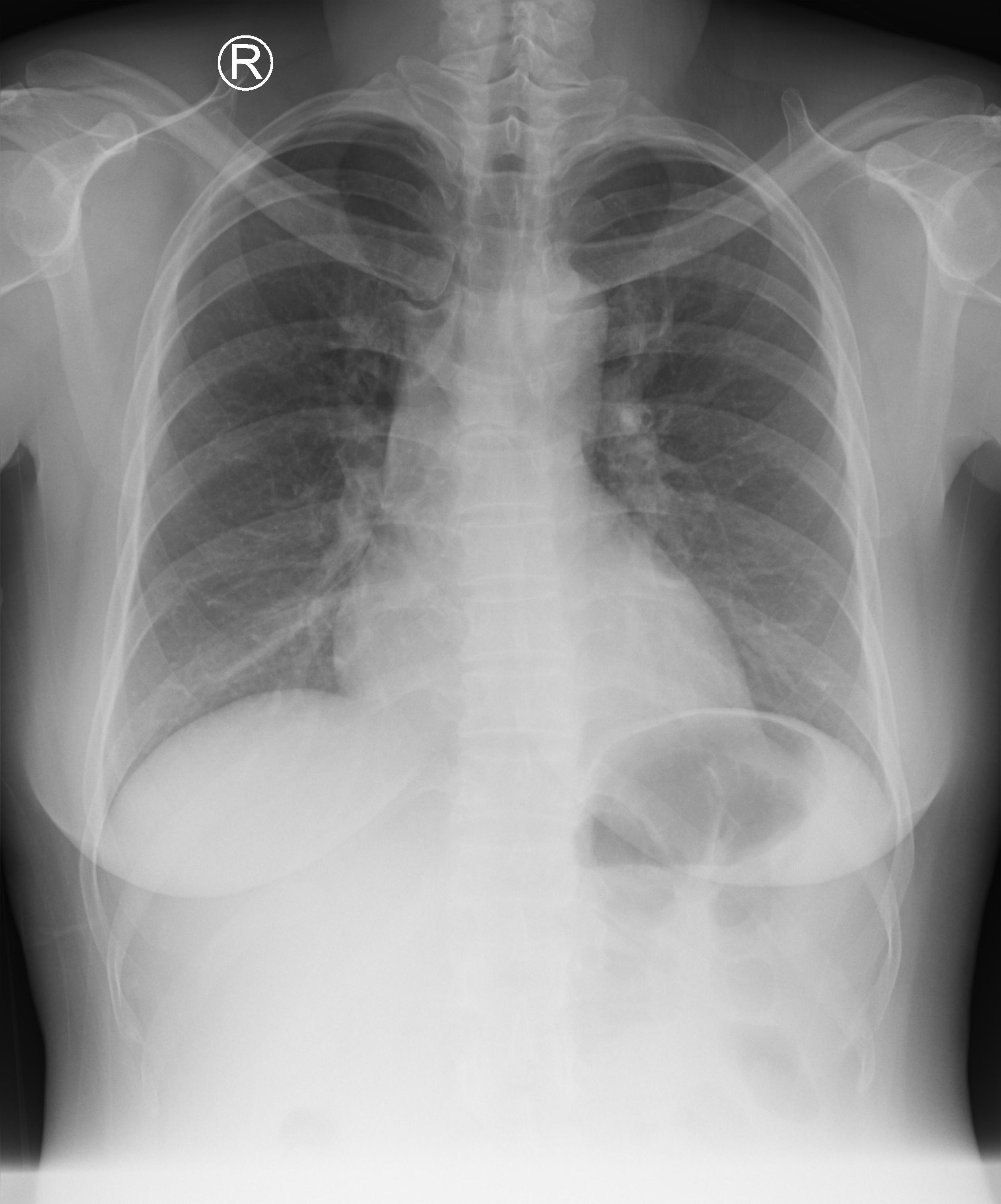

The Body Part Classification program serves the critical purpose of categorizing body parts from DICOM x-ray scans into five distinct classes: abdominal, adult chest, pediatric chest, spine, and others. This program trained using ResNet18 model.

|

| 19 |

+

</p>

|

| 20 |

+

</div>

|

| 21 |

+

<div class="grid-container">

|

| 22 |

+

<h3 class="overview-heading"><span class="vl">Dataset</span></h3>

|

| 23 |

+

<div>

|

| 24 |

+

<p class="overview-content">

|

| 25 |

+

The program has been meticulously trained on a robust and diverse dataset, specifically <a href="https://vindr.ai/datasets/bodypartxr" target="_blank">VinDrBodyPartXR Dataset.</a>.

|

| 26 |

+

<br/>

|

| 27 |

+

This dataset is introduced by Vingroup of Big Data Institute which include 16,093 x-ray images that are collected and manually annotated. It is a highly valuable resource that has been instrumental in the training of our model.

|

| 28 |

+

</p>

|

| 29 |

+

<ul>

|

| 30 |

+

<li>Objective: Body Part Identification</li>

|

| 31 |

+

<li>Task: Classification</li>

|

| 32 |

+

<li>Modality: Grayscale Images</li>

|

| 33 |

+

</ul>

|

| 34 |

+

</div>

|

| 35 |

+

</div>

|

| 36 |

+

<div class="grid-container">

|

| 37 |

+

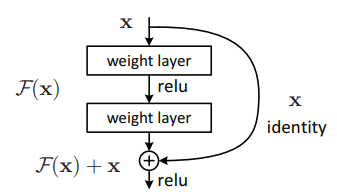

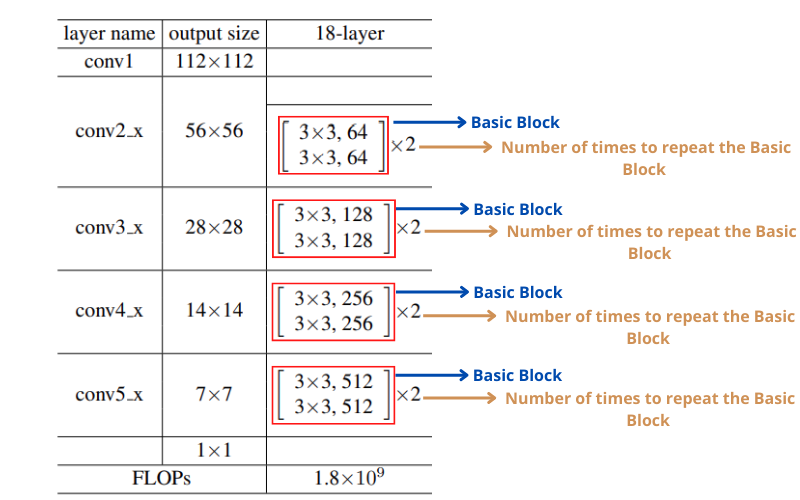

<h3 class="overview-heading"><span class="vl">Model Architecture</span></h3>

|

| 38 |

+

<div>

|

| 39 |

+

<p class="overview-content">

|

| 40 |

+

The model architecture of ResNet18 to train x-ray images for classifying body part.

|

| 41 |

+

</p>

|

| 42 |

+

<img class="content-image" src="file/figures/resnet18-basic-blocks-1.png" alt="model-architecture" />

|

| 43 |

+

<img class="content-image" src="file/figures/resnet-residual-block-for-resnet18-from-scratch-using-pytorch.png" alt="model-architecture" />

|

| 44 |

+

</div>

|

| 45 |

+

</div>

|

| 46 |

+

</section>

|

| 47 |

+

<h3 class="overview-heading"><span class="vl">Demo</span></h3>

|

| 48 |

+

<p class="overview-content">Please select or upload a body part x-ray scan image to see the capabilities of body part classification with this model</p>

|

| 49 |

+

</div>

|

| 50 |

+

</body>

|

| 51 |

+

</html>

|

sample/1.2.392.200036.9125.4.0.1964921730.2349552188.1786966286.dcm.jpeg

ADDED

sample/10.127.133.1137.156.1251.20190404101039.dcm.jpeg

ADDED

sample/1b6a707131f787fe37d3ea40d2011d43.dicom.jpeg

ADDED

sample/2e3204c2bb7a8fcdd6ec1ed547e2967e.dicom.jpeg

ADDED

sample/badaec3e4d5f382ebf0b51ba2c917cea.dicom.jpeg

ADDED

style.css

ADDED

@@ -0,0 +1,83 @@
+* {
+  box-sizing: border-box;
+}
+
+body {
+  font-family: 'Source Sans Pro', sans-serif;
+  font-size: 16px;
+}
+
+.container {
+  width: 100%;
+  margin: 0 auto;
+}
+
+.title {
+  font-size: 24px !important;
+  font-weight: 600 !important;
+  letter-spacing: 0em;
+  text-align: center;
+  color: #374159 !important;
+}
+
+.subtitle {
+  font-size: 24px !important;
+  font-style: italic;
+  font-weight: 400 !important;
+  letter-spacing: 0em;
+  text-align: center;
+  color: #1d652a !important;
+  padding-bottom: 0.5em;
+}
+
+.overview-heading {
+  font-size: 24px !important;
+  font-weight: 600 !important;
+  letter-spacing: 0em;
+  text-align: left;
+}
+
+.overview-content {
+  font-size: 14px !important;
+  font-weight: 400 !important;
+  line-height: 30px !important;
+  letter-spacing: 0em;
+  text-align: left;
+}
+
+.content-image {
+  width: 100% !important;
+  height: auto !important;
+}
+
+.vl {
+  border-left: 5px solid #1d652a;
+  padding-left: 20px;
+  color: #1d652a !important;
+}
+
+.grid-container {
+  display: grid;
+  grid-template-columns: 1fr 2fr;
+  gap: 20px;
+  align-items: flex-start;
+  margin-bottom: 0.7em;
+}
+
+.grid-container:nth-child(2) {
+  align-items: center;
+}
+
+@media screen and (max-width: 768px) {
+  .container {
+    width: 90%;
+  }
+
+  .grid-container {
+    display: block;
+  }
+
+  .overview-heading {
+    font-size: 18px !important;
+  }
+}

utils/page_utils.py

ADDED

@@ -0,0 +1,51 @@
+from typing import Optional
+
+
+class ColorPalette:
+    """Color Palette Container."""
+    all = []
+
+    def __init__(
+        self,
+        c50: str,
+        c100: str,
+        c200: str,
+        c300: str,
+        c400: str,
+        c500: str,
+        c600: str,
+        c700: str,
+        c800: str,
+        c900: str,
+        c950: str,
+        name: Optional[str] = None,
+    ):
+        self.c50 = c50
+        self.c100 = c100
+        self.c200 = c200
+        self.c300 = c300
+        self.c400 = c400
+        self.c500 = c500
+        self.c600 = c600
+        self.c700 = c700
+        self.c800 = c800
+        self.c900 = c900
+        self.c950 = c950
+        self.name = name
+        ColorPalette.all.append(self)
+
+
+KALBE_THEME_COLOR = ColorPalette(
+    name='kalbe',
+    c50='#f2f9e8',
+    c100='#dff3c4',
+    c200='#c2e78d',
+    c300='#9fd862',
+    c400='#7fc93f',
+    c500='#3F831C',
+    c600='#31661a',
+    c700='#244c13',
+    c800='#18340c',
+    c900='#0c1b06',
+    c950='#050a02',
+)
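The `ColorPalette` class in this file stores eleven shades plus an optional name and appends every instance to a class-level list. A minimal sketch of the same container written as a dataclass follows, assuming Python 3.7 or later. The names `ColorPaletteSketch` and `shades`, and the hex-format check in `__post_init__`, are illustrative additions and not part of this commit.

```python
import re
from dataclasses import dataclass, fields
from typing import ClassVar, Dict, List, Optional

# Matches 6-digit hex colors such as '#3F831C' (an assumed format rule).
_HEX_COLOR = re.compile(r"^#[0-9a-fA-F]{6}$")


@dataclass(frozen=True)
class ColorPaletteSketch:
    """Hypothetical dataclass variant of ColorPalette (illustration only)."""

    c50: str
    c100: str
    c200: str
    c300: str
    c400: str
    c500: str
    c600: str
    c700: str
    c800: str
    c900: str
    c950: str
    name: Optional[str] = None

    # Class-level registry, mirroring ColorPalette.all in the commit.
    all: ClassVar[List["ColorPaletteSketch"]] = []

    def __post_init__(self) -> None:
        # Validate every shade before registering the instance.
        for shade, value in self.shades().items():
            if not _HEX_COLOR.match(value):
                raise ValueError(f"{shade} is not a hex color: {value!r}")
        ColorPaletteSketch.all.append(self)

    def shades(self) -> Dict[str, str]:
        """Map shade names ('c50' ... 'c950') to their hex values."""
        return {
            f.name: getattr(self, f.name)
            for f in fields(self)
            if f.name != "name"
        }


# Same values as KALBE_THEME_COLOR in the commit.
kalbe = ColorPaletteSketch(
    name="kalbe",
    c50="#f2f9e8", c100="#dff3c4", c200="#c2e78d", c300="#9fd862",
    c400="#7fc93f", c500="#3F831C", c600="#31661a", c700="#244c13",
    c800="#18340c", c900="#0c1b06", c950="#050a02",
)
```

The dataclass generates the constructor from the field list, so the eleven assignments do not have to be written out, and `frozen=True` keeps a registered palette from being modified afterwards. Whether the validation is wanted depends on how the palette is used; it is shown here only as one possible design choice.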