
Upload 12 files

- .gitignore +10 -0

- Dockerfile +21 -0

- Dockerfile.cpu +21 -0

- LICENSE +21 -0

- README.md +227 -11

- docker-compose.yml +40 -0

- icon.png +0 -0

- launcher.py +168 -0

- requirements.txt +10 -0

- subgen.env +6 -0

- subgen.py +1045 -0

- subgen.xml +56 -0

.gitignore (added)

```gitignore
.vscode/*

# Local History for Visual Studio Code
.history/

# Built Visual Studio Code Extensions
*.vsix

#ignore our settings
subgen.env
```

Dockerfile (added)

```dockerfile
FROM nvidia/cuda:12.2.2-cudnn8-runtime-ubuntu22.04

WORKDIR /subgen

ADD https://raw.githubusercontent.com/McCloudS/subgen/main/requirements.txt /subgen/requirements.txt

RUN apt-get update \
    && apt-get install -y \
        python3 \
        python3-pip \
        ffmpeg \
    && apt-get clean \
    && rm -rf /var/lib/apt/lists/* \
    && pip3 install -r requirements.txt

ENV PYTHONUNBUFFERED=1

ADD https://raw.githubusercontent.com/McCloudS/subgen/main/launcher.py /subgen/launcher.py
ADD https://raw.githubusercontent.com/McCloudS/subgen/main/subgen.py /subgen/subgen.py

CMD [ "bash", "-c", "python3 -u launcher.py" ]
```

Dockerfile.cpu (added)

```dockerfile
FROM python:3.11-slim-bullseye

WORKDIR /subgen

ADD https://raw.githubusercontent.com/McCloudS/subgen/main/requirements.txt /subgen/requirements.txt

RUN apt-get update \
    && apt-get install -y \
        python3 \
        python3-pip \
        ffmpeg \
    && apt-get clean \
    && rm -rf /var/lib/apt/lists/* \
    && pip install -r requirements.txt

ENV PYTHONUNBUFFERED=1

ADD https://raw.githubusercontent.com/McCloudS/subgen/main/launcher.py /subgen/launcher.py
ADD https://raw.githubusercontent.com/McCloudS/subgen/main/subgen.py /subgen/subgen.py

CMD [ "bash", "-c", "python3 -u launcher.py" ]
```

LICENSE (added)

```text
MIT License

Copyright (c) 2023 McCloudS

Permission is hereby granted, free of charge, to any person obtaining a copy
of this software and associated documentation files (the "Software"), to deal
in the Software without restriction, including without limitation the rights
to use, copy, modify, merge, publish, distribute, sublicense, and/or sell
copies of the Software, and to permit persons to whom the Software is
furnished to do so, subject to the following conditions:

The above copyright notice and this permission notice shall be included in all
copies or substantial portions of the Software.

THE SOFTWARE IS PROVIDED "AS IS", WITHOUT WARRANTY OF ANY KIND, EXPRESS OR
IMPLIED, INCLUDING BUT NOT LIMITED TO THE WARRANTIES OF MERCHANTABILITY,
FITNESS FOR A PARTICULAR PURPOSE AND NONINFRINGEMENT. IN NO EVENT SHALL THE
AUTHORS OR COPYRIGHT HOLDERS BE LIABLE FOR ANY CLAIM, DAMAGES OR OTHER
LIABILITY, WHETHER IN AN ACTION OF CONTRACT, TORT OR OTHERWISE, ARISING FROM,
OUT OF OR IN CONNECTION WITH THE SOFTWARE OR THE USE OR OTHER DEALINGS IN THE
SOFTWARE.
```

README.md (changed; 11 lines removed, 227 added)

[](https://www.paypal.com/donate/?hosted_button_id=SU4QQP6LH5PF6)
<img src="https://raw.githubusercontent.com/McCloudS/subgen/main/icon.png" width="200">

<details>
<summary>Updates:</summary>

21 Apr 2024: Fixed queuing, with thanks to https://github.com/xhzhu0628 @ https://github.com/McCloudS/subgen/pull/85. Bazarr intentionally doesn't follow `CONCURRENT_TRANSCRIPTIONS` because it needs a time-sensitive response.

31 Mar 2024: Removed the `/subsync` endpoint and did some general refactoring. Open an issue if you were using it!

24 Mar 2024: Added a 'webui' to configure environment variables. You can use it instead of manually editing the script or setting environment variables in your OS or Docker (if you want). The config prioritizes OS environment variables, then the .env file, then the defaults. You can access it at `http://subgen:9000/`.

23 Mar 2024: Added `CUSTOM_REGROUP` to try to 'clean up' subtitles a bit.

22 Mar 2024: Added LRC capability; see: `'LRC_FOR_AUDIO_FILES' | True | Will generate LRC (instead of SRT) files for filetypes: '.mp3', '.flac', '.wav', '.alac', '.ape', '.ogg', '.wma', '.m4a', '.m4b', '.aac', '.aiff' |`

21 Mar 2024: Added a 'wizard' to the launcher that helps standalone users get common Bazarr variables configured. See the Launcher section below. Removed 'Transformers' as an option. While I usually don't like to remove features, I don't think anyone is using it, and the results are wildly unpredictable and often cause out-of-memory errors. Added two new environment variables called `USE_MODEL_PROMPT` and `CUSTOM_MODEL_PROMPT`. If `USE_MODEL_PROMPT` is `True`, it will use `CUSTOM_MODEL_PROMPT` if set; otherwise it defaults to the pre-configured language pairings, such as `"en": "Hello, welcome to my lecture.", "zh": "你好,欢迎来到我的讲座。"`. These pre-configured translations are geared towards fixing audio that may not have punctuation: we can prompt the model to try to force the use of punctuation during transcription.

19 Mar 2024: Added a `MONITOR` environment variable. It will 'watch' or 'monitor' your `TRANSCRIBE_FOLDERS` for changes and run on them. Useful if you just want to paste files into a folder and get subtitles.

6 Mar 2024: Added a `/subsync` endpoint that can attempt to align/synchronize subtitles to a file. It takes audio_file, subtitle_file and language (2-letter code), and outputs an SRT.

5 Mar 2024: Cleaned up logging. Added a timestamps option (if `DEBUG` = True, timestamps will print in logs).

4 Mar 2024: Updated the Dockerfile CUDA to 12.2.2 (from CTranslate2). Added endpoint `/status` to return the Subgen version. Can also use distil models now! See variables below!

29 Feb 2024: Changed the default port to align with whisper-asr and deconflict other consumers of the previous port.

11 Feb 2024: Added a 'launcher.py' file for Docker to prevent huge image downloads. Set UPDATE to True if you want to pull the latest version; otherwise it defaults to what was in the image on build. Docker images will still be auto-built on any commit. If you don't want to use the auto-update function, no action is needed on your part; continue to update Docker images as before. Fixed a bug where detect-language could return an empty result. Reduced useless debug output that was spamming logs and defaulted DEBUG to True. Added APPEND, which will add f"Transcribed by whisperAI with faster-whisper ({whisper_model}) on {datetime.now()}" at the end of a subtitle.

10 Feb 2024: Added some features from JaiZed's branch, such as skipping if SDH subtitles are detected, updating functions so they can also transcribe audio files, allowing individual files to be manually transcribed, and a better implementation of forceLanguage. Added the `/batch` endpoint (thanks JaiZed). You can navigate in a browser to http://subgen_ip:9000/docs and call the batch endpoint, which can take a file or a folder to manually transcribe files. Added CLEAR_VRAM_ON_COMPLETE, HF_TRANSFORMERS, HF_BATCH_SIZE. Hugging Face Transformers boast a '9x increase', but my limited testing shows it's comparable to faster-whisper or slightly slower. I also have an older 8 GB GPU. The simplest way to persist HF Transformer models is to set "HF_HUB_CACHE" to "/subgen/models" for Docker (assuming you have the matching volume).

8 Feb 2024: Added FORCE_DETECTED_LANGUAGE_TO to force a wrongly detected language. Fixed asr to actually use the language passed to it.

5 Feb 2024: General housekeeping, minor tweaks to the TRANSCRIBE_FOLDERS function.

28 Jan 2024: Fixed an issue with the ffmpeg Python module not importing correctly. Removed separate GPU/CPU containers. Also stopped the script from installing packages, which should help with odd updates I can't control (from other packages/modules). The image is a couple of gigabytes larger, but allows easier maintenance.

19 Dec 2023: Added the ability for Plex and Jellyfin to automatically update metadata so the subtitles show up properly on playback. (See https://github.com/McCloudS/subgen/pull/33 from Rikiar73574)

31 Oct 2023: Added Bazarr support via the Whisper provider.

25 Oct 2023: Added Emby support (e.g. http://192.168.1.111:9000/emby) and TRANSCRIBE_FOLDERS, which will recurse through the provided folders and generate subtitles. It's geared towards transcribing existing media without using a webhook.

23 Oct 2023: There are now two Docker images: one for CPU (it's smaller), mccloud/subgen:latest and mccloud/subgen:cpu, and the other for CUDA/GPU, mccloud/subgen:cuda. I also added Jellyfin support and did considerable cleanup in the script. I also renamed the webhooks, so they will require new configuration/updates on your end. Instead of /webhook they are now /plex, /tautulli, and /jellyfin.

22 Oct 2023: The script should be backwards compatible with previous environment settings, but just to be sure, look at the new options below. If you don't want to manually edit your environment variables, just edit the script manually. While I have added GPU support, I haven't tested it yet.

19 Oct 2023: And we're back! Uses faster-whisper and stable-ts. Shouldn't break anything from previous settings, but adds a couple of new options that aren't documented at this point in time. As of now, this is not a Docker image on Docker Hub. The potential intent is to eventually move this to a pure Python script, primarily to simplify my efforts. Quick and dirty to meet dependencies: pip or `pip3 install flask requests stable-ts faster-whisper`

This potentially has the ability to use CUDA/Nvidia GPUs, but I don't have one set up yet. Tesla T4 is in the mail!

2 Feb 2023: Added Tautulli webhooks back in. Didn't realize Plex webhooks were PlexPass only. See below for instructions to add it back in.

31 Jan 2023: Rewrote the script substantially to remove Tautulli and fix some variable handling. For some reason my implementation requires the container to be in host mode. My Plex was giving "401 Unauthorized" when attempting to query from Docker subnets during API calls. (**Fixed now, it can be in bridge**)

</details>

# What is this?

This will transcribe your personal media on a Plex, Emby, or Jellyfin server to create subtitles (.srt) from audio/video files in the following languages: https://github.com/McCloudS/subgen#audio-languages-supported-via-openai, and transcribe or translate them into English. It can also be used as a Whisper provider in Bazarr (see the instructions below). It technically supports transcribing from a foreign language to itself (e.g. Japanese > Japanese, see [TRANSCRIBE_OR_TRANSLATE](https://github.com/McCloudS/subgen#variables)). It currently relies on webhooks from Jellyfin, Emby, Plex, or Tautulli. It uses stable-ts and faster-whisper, which can run on both Nvidia GPUs and CPUs.

# Why?

Honestly, I built this for me, but saw the utility in other people maybe using it. This works well for my use case. Since having children, I'm either deaf or wanting to have everything quiet. We watch EVERYTHING with subtitles now, and I feel like I can't even understand the show without them. I use Bazarr to auto-download, and gap-fill with Plex's built-in capability. This is for everything else. Some shows just won't have subtitles available for one reason or another, or in some cases on my H265 media, they are wildly out of sync.

# What can it do?

* Create .srt subtitles when a media file is added or played, triggered by Jellyfin, Plex, or Tautulli webhooks. It can also be called via the Whisper provider inside Bazarr.

# How do I set it up?

## Install/Setup

You can now configure all environment variables via `http://subgen:9000/` (substitute your own IP and port). You can still use Docker variables or OS environment variables if you prefer.

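The 24 Mar 2024 update above describes a lookup order for settings: OS environment variables first, then the .env file, then the built-in defaults. Below is a minimal sketch of that order. It is not subgen's actual code. `parse_env_file` and `resolve_setting` are hypothetical names, and the parser assumes a `subgen.env`-style file of plain `KEY=VALUE` lines with `#` comments. `convert_to_bool` copies the helper of the same name in launcher.py.

```python
from typing import Mapping


def parse_env_file(text: str) -> dict:
    """Parse KEY=VALUE lines (assumed .env format), skipping blanks and # comments."""
    values = {}
    for raw in text.splitlines():
        line = raw.strip()
        if not line or line.startswith("#") or "=" not in line:
            continue
        # Split on the first '=' only, so values like cm_sl=84_sl=42++++++1 survive
        key, _, value = line.partition("=")
        # Drop optional surrounding quotes, e.g. NAMESUBLANG='aa'
        values[key.strip()] = value.strip().strip('"').strip("'")
    return values


def convert_to_bool(in_bool) -> bool:
    # Same truthy values as convert_to_bool() in launcher.py
    return str(in_bool).lower() in ("true", "on", "1", "y", "yes")


def resolve_setting(name: str, default: str,
                    os_env: Mapping[str, str],
                    dotenv: Mapping[str, str]) -> str:
    """OS environment wins, then the .env file, then the built-in default."""
    if name in os_env:
        return os_env[name]
    if name in dotenv:
        return dotenv[name]
    return default


dotenv = parse_env_file("# my settings\nWHISPER_MODEL=small\nDEBUG=False\n")
print(resolve_setting("WHISPER_MODEL", "medium", {"WHISPER_MODEL": "large-v3"}, dotenv))  # large-v3
print(resolve_setting("WHISPER_MODEL", "medium", {}, dotenv))                             # small
print(convert_to_bool(resolve_setting("DEBUG", "True", {}, dotenv)))                      # False
```

In a real run you would pass `os.environ` as `os_env`. A value set on the container (for example in docker-compose) would then override the same key in the .env file.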
### Standalone/Without Docker

Install python3 and ffmpeg ~~and run `pip3 install numpy stable-ts fastapi requests faster-whisper uvicorn python-multipart python-ffmpeg whisper transformers optimum accelerate watchdog`~~. Then run it: `python3 launcher.py -u -i -s`. You need to have matching paths relative to your Plex server/folders, or use USE_PATH_MAPPING. Paths are not needed if you are only using Bazarr. You will need the appropriate NVIDIA drivers installed (12.2.0): https://developer.nvidia.com/cuda-12-2-0-download-archive?target_os=Windows&target_arch=x86_64

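`USE_PATH_MAPPING` swaps a single path prefix. A path reported by your media server (`PATH_MAPPING_FROM`) then points at the same file on the machine running subgen (`PATH_MAPPING_TO`). Below is a minimal sketch of that idea using the defaults from the Variables table. `map_path` is a hypothetical helper. Matching only whole path components is an assumption, since subgen.py may do a plain string replacement instead.

```python
def map_path(path: str, use_mapping: bool,
             mapping_from: str = "/tv", mapping_to: str = "/Volumes/TV") -> str:
    """Rewrite a media-server path into the local path (one mapping only)."""
    if not use_mapping:
        return path
    prefix = mapping_from.rstrip("/")
    # Only match whole path components, so "/tv" does not rewrite "/tvshows/..."
    if path == prefix or path.startswith(prefix + "/"):
        return mapping_to.rstrip("/") + path[len(prefix):]
    return path


print(map_path("/tv/Show/S01E01.mkv", True))    # /Volumes/TV/Show/S01E01.mkv
print(map_path("/tvshows/Other.mkv", True))     # /tvshows/Other.mkv (unchanged)
print(map_path("/tv/Show/S01E01.mkv", False))   # /tv/Show/S01E01.mkv
```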
#### Using Launcher

launcher.py can launch subgen for you, automate the setup, and take the following options:

Using `-s` for Bazarr setup:

### Docker

The Dockerfile is in the repo along with an example docker-compose file, and the image is also posted on Docker Hub (mccloud/subgen).

If using Subgen without Bazarr, you MUST mount your media volumes in subgen the same way Plex (or your media server) sees them. For example, if Plex uses "/Share/media/TV:/tv", you must have that identical volume in subgen.

`"${APPDATA}/subgen/models:/subgen/models"` is just for storage of the language models. It isn't necessary, but without it you will have to redownload the models on every new image pull.

`"${APPDATA}/subgen/subgen.py:/subgen/subgen.py"` lets you control the version of subgen.py yourself. Launcher.py can still be used to download a newer version.

If you want to use a GPU, you need to map it accordingly.

## Plex

Create a webhook in Plex that calls back to your subgen address, e.g. http://192.168.1.111:9000/plex (see https://support.plex.tv/articles/115002267687-webhooks/). You will also need to generate a token to use it.

## Emby

All you need to do is create a webhook in Emby pointing to your subgen, e.g. http://192.168.154:9000/emby

Emby provides good information in its webhook payloads, so we don't need to add an API token or server URL to query for more information.

## Bazarr

|

| 117 |

+

|

| 118 |

+

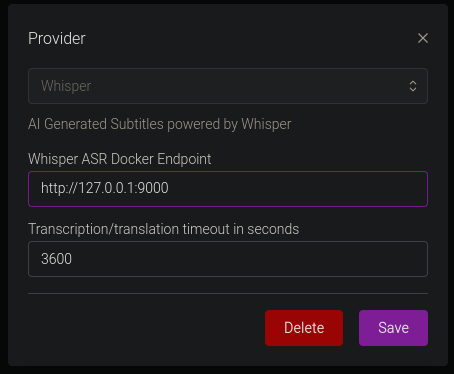

You only need to confiure the Whisper Provider as shown below: <br>

|

| 119 |

+

<br>

|

| 120 |

+

The Docker Endpoint is the ip address and port of your subgen container (IE http://192.168.1.111:9000) See https://wiki.bazarr.media/Additional-Configuration/Whisper-Provider/ for more info. I recomend not enabling this with other webhooks, or you will likely be generating duplicate subtitles. If you are using Bazarr, path mapping isn't necessary, as Bazarr sends the file over http.

|

| 121 |

+

|

| 122 |

+

## Tautulli

|

| 123 |

+

|

| 124 |

+

Create the webhooks in Tautulli with the following settings:

|

| 125 |

+

Webhook URL: http://yourdockerip:9000/tautulli

|

| 126 |

+

Webhook Method: Post

|

| 127 |

+

Triggers: Whatever you want, but you'll likely want "Playback Start" and "Recently Added"

|

| 128 |

+

Data: Under Playback Start, JSON Header will be:

|

| 129 |

+

```json

|

| 130 |

+

{ "source":"Tautulli" }

|

| 131 |

+

```

|

| 132 |

+

Data:

|

| 133 |

+

```json

|

| 134 |

+

{

|

| 135 |

+

"event":"played",

|

| 136 |

+

"file":"{file}",

|

| 137 |

+

"filename":"{filename}",

|

| 138 |

+

"mediatype":"{media_type}"

|

| 139 |

+

}

|

| 140 |

+

```

|

| 141 |

+

Similarly, under Recently Added, Header is:

|

| 142 |

+

```json

|

| 143 |

+

{ "source":"Tautulli" }

|

| 144 |

+

```

|

| 145 |

+

Data:

|

| 146 |

+

```json

|

| 147 |

+

{

|

| 148 |

+

"event":"added",

|

| 149 |

+

"file":"{file}",

|

| 150 |

+

"filename":"{filename}",

|

| 151 |

+

"mediatype":"{media_type}"

|

| 152 |

+

}

|

| 153 |

+

```
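To check the Tautulli wiring without waiting for a real event, you could send subgen a request shaped like the payload above. This is a hedged sketch using only the Python standard library. It assumes Tautulli delivers the JSON Header entries as HTTP headers, and that subgen accepts a JSON body on `/tautulli`. The helper name, the `Content-Type` header and the sample path are illustrative assumptions. The function only builds the request; sending is left commented out.

```python
import json
import urllib.request


def build_tautulli_test_request(subgen_url: str, event: str, file_path: str,
                                media_type: str = "episode") -> urllib.request.Request:
    """Build a POST shaped like the Tautulli webhook data shown above (not sent)."""
    payload = {
        "event": event,                            # "played" or "added"
        "file": file_path,                         # stands in for Tautulli's {file}
        "filename": file_path.rsplit("/", 1)[-1],  # stands in for {filename}
        "mediatype": media_type,                   # stands in for {media_type}
    }
    return urllib.request.Request(
        url=subgen_url.rstrip("/") + "/tautulli",
        data=json.dumps(payload).encode("utf-8"),
        headers={"source": "Tautulli", "Content-Type": "application/json"},
        method="POST",
    )


req = build_tautulli_test_request("http://192.168.1.111:9000", "added", "/tv/Show/S01E01.mkv")
# To actually send it to a running subgen instance:
# with urllib.request.urlopen(req, timeout=30) as resp:
#     print(resp.status)
```

The file path must be one subgen can see through its own volume mounts, as described in the Docker section.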

## Jellyfin

First, install the Jellyfin webhooks plugin. Then click "Add Generic Destination", name it anything you want, and set the webhook URL to your subgen address (e.g. http://192.168.1.154:9000/jellyfin). Next, check Item Added, Playback Start, and Send All Properties. Last, click "Add Request Header" and add the Key: `Content-Type` Value: `application/json`<br><br>Click Save and you should be all set!

## Variables

You can define the port via environment variables, but the endpoints are static.

The following environment variables are available in Docker. They default to the values listed below.

| Variable | Default Value | Description |
|---|---|---|
| TRANSCRIBE_DEVICE | 'cpu' | Can transcribe via GPU (CUDA only) or CPU. Takes the options "cpu", "gpu", or "cuda". |
| WHISPER_MODEL | 'medium' | Can be: 'tiny', 'tiny.en', 'base', 'base.en', 'small', 'small.en', 'medium', 'medium.en', 'large-v1', 'large-v2', 'large-v3', 'large', 'distil-large-v2', 'distil-large-v3', 'distil-medium.en', 'distil-small.en' |
| CONCURRENT_TRANSCRIPTIONS | 2 | Number of files it will transcribe in parallel |
| WHISPER_THREADS | 4 | Number of threads to use during computation |
| MODEL_PATH | './models' | Where the WHISPER_MODEL will be stored. Defaults to a 'models' folder in the directory where you execute the script |
| PROCADDEDMEDIA | True | Will generate subtitles for all added media regardless of existing external/embedded subtitles (based off of SKIPIFINTERNALSUBLANG) |
| PROCMEDIAONPLAY | True | Will generate subtitles for all played media regardless of existing external/embedded subtitles (based off of SKIPIFINTERNALSUBLANG) |
| NAMESUBLANG | 'aa' | Lets you pick what it will name the subtitle. Instead of using EN, I'm using AA, so it doesn't mix with existing external EN subs, and AA will populate higher on the list in Plex. |
| SKIPIFINTERNALSUBLANG | 'eng' | Will not generate a subtitle if the file has an internal sub matching the 3-letter (ISO 639-2) code of this variable (see https://en.wikipedia.org/wiki/List_of_ISO_639-1_codes) |
| WORD_LEVEL_HIGHLIGHT | False | Highlights each word as it's spoken in the subtitle. See example video @ https://github.com/jianfch/stable-ts |
| PLEXSERVER | 'http://plex:32400' | Set this to your local Plex server address/port |
| PLEXTOKEN | 'token here' | Set this to your Plex token, found via https://support.plex.tv/articles/204059436-finding-an-authentication-token-x-plex-token/ |
| JELLYFINSERVER | 'http://jellyfin:8096' | Set to your Jellyfin server address/port |
| JELLYFINTOKEN | 'token here' | Generate a token inside the Jellyfin interface |
| WEBHOOKPORT | 9000 | Change this if you need a different port for your webhook |
| USE_PATH_MAPPING | False | Similar to Sonarr and Radarr path mapping, this will attempt to replace paths on file systems that don't have identical paths. Currently supports only one path replacement. Examples below. |
| PATH_MAPPING_FROM | '/tv' | The path of my media relative to my Plex server |
| PATH_MAPPING_TO | '/Volumes/TV' | The path of that same folder relative to my Mac Mini that will run the script |
| TRANSCRIBE_FOLDERS | '' | Takes a pipe '\|' separated list (for example: /tv\|/movies\|/familyvideos), iterates through it, and queues those files for subtitle generation if they don't have internal subtitles |
| TRANSCRIBE_OR_TRANSLATE | 'transcribe' | Takes either 'transcribe' or 'translate'. Transcribe will transcribe the audio in the same language as the input. Translate will transcribe and translate into English. |
| COMPUTE_TYPE | 'auto' | Set compute-type using the following information: https://github.com/OpenNMT/CTranslate2/blob/master/docs/quantization.md |
| DEBUG | True | Provides some debug data that can be helpful to troubleshoot path mapping and other issues. Fun fact: if this is set to True, any modifications to the script will auto-reload it (if it isn't actively transcribing). Useful for making small tweaks without re-downloading the whole file. |
| FORCE_DETECTED_LANGUAGE_TO | '' | Forces the model to a language instead of the detected one; takes a 2-letter language code. For example, if your audio is French but keeps being detected as English, you would set it to 'fr' |
| CLEAR_VRAM_ON_COMPLETE | True | Deletes the model and does garbage collection when the queue is empty. Good if you need to use the VRAM for something else. |
| UPDATE | False | Will pull the latest subgen.py from the repository if True. False will use the original subgen.py built into the Docker image. Standalone users can use this with launcher.py to get updates. |
| APPEND | False | Will add the following at the end of a subtitle: "Transcribed by whisperAI with faster-whisper ({whisper_model}) on {datetime.now()}" |
| MONITOR | False | Will monitor `TRANSCRIBE_FOLDERS` for real-time changes to see if we need to generate subtitles |
| USE_MODEL_PROMPT | False | When set to `True`, will use the default prompt stored in greetings_translations, "Hello, welcome to my lecture.", to try to force the use of punctuation in transcriptions that lack it. The automatic `CUSTOM_MODEL_PROMPT` will only work with ASR, but can still be set manually like so: `USE_MODEL_PROMPT=True and CUSTOM_MODEL_PROMPT=Hello, welcome to my lecture.` |
| CUSTOM_MODEL_PROMPT | '' | If `USE_MODEL_PROMPT` is `True`, you can override the default prompt (see https://medium.com/axinc-ai/prompt-engineering-in-whisper-6bb18003562d for great examples). |
| LRC_FOR_AUDIO_FILES | True | Will generate LRC (instead of SRT) files for filetypes: '.mp3', '.flac', '.wav', '.alac', '.ape', '.ogg', '.wma', '.m4a', '.m4b', '.aac', '.aiff' |
| CUSTOM_REGROUP | 'cm_sl=84_sl=42++++++1' | Attempts to regroup some of the segments to make a cleaner-looking subtitle. See https://github.com/McCloudS/subgen/issues/68 for discussion. Set to blank if you want to use the Stable-TS default regroup algorithm of `cm_sp=,* /,_sg=.5_mg=.3+3_sp=.* /。/?/?` |
| DETECT_LANGUAGE_LENGTH | 30 | Detect language on the first x seconds of the audio. |

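`TRANSCRIBE_FOLDERS` and `LRC_FOR_AUDIO_FILES` both come down to small string checks. Below is a sketch under stated assumptions: empty entries are skipped, and extensions are compared case-insensitively. subgen.py may behave differently. In the actual environment variable the separator is a plain `|`; the `\|` in the table above is only Markdown escaping. `split_folders` and `subtitle_suffix` are hypothetical names.

```python
from pathlib import Path

# Extensions listed for LRC_FOR_AUDIO_FILES in the Variables table
AUDIO_EXTENSIONS = {".mp3", ".flac", ".wav", ".alac", ".ape", ".ogg",
                    ".wma", ".m4a", ".m4b", ".aac", ".aiff"}


def split_folders(value: str) -> list:
    """Split a TRANSCRIBE_FOLDERS value on '|' and drop empty entries."""
    return [part.strip() for part in value.split("|") if part.strip()]


def subtitle_suffix(media_file: str, lrc_for_audio_files: bool = True) -> str:
    """Return '.lrc' for the listed audio types when enabled, otherwise '.srt'."""
    if lrc_for_audio_files and Path(media_file).suffix.lower() in AUDIO_EXTENSIONS:
        return ".lrc"
    return ".srt"


for folder in split_folders("/tv|/movies|/familyvideos"):
    print(folder)
print(subtitle_suffix("/music/Album/01 - Track.flac"))   # .lrc
print(subtitle_suffix("/tv/Show/S01E01.mkv"))            # .srt
```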
### Images:

`mccloud/subgen:latest` is GPU or CPU <br>
`mccloud/subgen:cpu` is for CPU only (slightly smaller image)
<br><br>

# What are the limitations/problems?

* I made it and know nothing about formal deployment for Python coding.
* It's using trained AI models to transcribe, so it WILL mess up

# What's next?

Fix documentation and make it prettier!

# Audio Languages Supported (via OpenAI)

Afrikaans, Arabic, Armenian, Azerbaijani, Belarusian, Bosnian, Bulgarian, Catalan, Chinese, Croatian, Czech, Danish, Dutch, English, Estonian, Finnish, French, Galician, German, Greek, Hebrew, Hindi, Hungarian, Icelandic, Indonesian, Italian, Japanese, Kannada, Kazakh, Korean, Latvian, Lithuanian, Macedonian, Malay, Marathi, Maori, Nepali, Norwegian, Persian, Polish, Portuguese, Romanian, Russian, Serbian, Slovak, Slovenian, Spanish, Swahili, Swedish, Tagalog, Tamil, Thai, Turkish, Ukrainian, Urdu, Vietnamese, and Welsh.

# Additional reading:

* https://github.com/openai/whisper (Original OpenAI project)
* https://en.wikipedia.org/wiki/List_of_ISO_639-1_codes (2-letter subtitle codes)

# Credits:

* Whisper.cpp (https://github.com/ggerganov/whisper.cpp) for the original implementation
* Google
* ffmpeg
* https://github.com/jianfch/stable-ts
* https://github.com/guillaumekln/faster-whisper
* Whisper ASR Webservice (https://github.com/ahmetoner/whisper-asr-webservice) for how to implement Bazarr webhooks.

docker-compose.yml (added)

```yaml
#docker-compose.yml
version: '2'
services:
  subgen:
    container_name: subgen
    tty: true
    image: mccloud/subgen
    environment:
      - "WHISPER_MODEL=medium"
      - "WHISPER_THREADS=4"
      - "PROCADDEDMEDIA=True"
      - "PROCMEDIAONPLAY=False"
      - "NAMESUBLANG=aa"
      - "SKIPIFINTERNALSUBLANG=eng"
      - "PLEXTOKEN=plextoken"
      - "PLEXSERVER=http://plexserver:32400"
      - "JELLYFINTOKEN=token here"
      - "JELLYFINSERVER=http://jellyfin:8096"
      - "WEBHOOKPORT=9000"
      - "CONCURRENT_TRANSCRIPTIONS=2"
      - "WORD_LEVEL_HIGHLIGHT=False"
      - "DEBUG=True"
      - "USE_PATH_MAPPING=False"
      - "PATH_MAPPING_FROM=/tv"
      - "PATH_MAPPING_TO=/Volumes/TV"
      - "TRANSCRIBE_DEVICE=cpu"
      - "CLEAR_VRAM_ON_COMPLETE=True"
      - "MODEL_PATH=./models"
      - "UPDATE=False"
      - "APPEND=False"
      - "USE_MODEL_PROMPT=False"
      - "CUSTOM_MODEL_PROMPT="
      - "LRC_FOR_AUDIO_FILES=True"
      - "CUSTOM_REGROUP=cm_sl=84_sl=42++++++1"
    volumes:
      - "${TV}:/tv"
      - "${MOVIES}:/movies"
      - "${APPDATA}/subgen/models:/subgen/models"
    ports:
      - "9000:9000"
```

icon.png

ADDED

|

|

launcher.py

ADDED

|

@@ -0,0 +1,168 @@


import os
import sys
import urllib.error
import urllib.request
import subprocess
import argparse

def convert_to_bool(in_bool):
    # Convert the input to string and lower case, then check against true values
    return str(in_bool).lower() in ('true', 'on', '1', 'y', 'yes')

def install_packages_from_requirements(requirements_file):
    try:
        # Try installing with pip3
        subprocess.run(['pip3', 'install', '-r', requirements_file, '--upgrade'], check=True)
        print("Packages installed successfully using pip3.")
    except (subprocess.CalledProcessError, FileNotFoundError):
        # FileNotFoundError covers the case where pip3 is not on PATH at all
        try:
            # If pip3 fails, try installing with pip
            subprocess.run(['pip', 'install', '-r', requirements_file, '--upgrade'], check=True)
            print("Packages installed successfully using pip.")
        except (subprocess.CalledProcessError, FileNotFoundError):
            print("Failed to install packages using both pip3 and pip.")

def download_from_github(url, output_file):
    try:
        with urllib.request.urlopen(url) as response, open(output_file, 'wb') as out_file:
            data = response.read()  # a `bytes` object
            out_file.write(data)
        print(f"File downloaded successfully to {output_file}")
    except urllib.error.HTTPError as e:
        print(f"Failed to download file from {url}. HTTP Error Code: {e.code}")
    except urllib.error.URLError as e:
        print(f"URL Error: {e.reason}")
    except Exception as e:
        print(f"An error occurred: {e}")

def prompt_and_save_bazarr_env_variables():
    """
    Prompts the user for Bazarr related environment variables with descriptions and saves them to a file.
    If the user does not input anything, default values are used.
    """
    # Instructions for the user
    instructions = (
        "You will be prompted for several configuration values.\n"
        "If you wish to use the default value for any of them, simply press Enter without typing anything.\n"
        "The default values are shown in brackets [] next to the prompts.\n"
        "Items can be the value of true, on, 1, y, yes, false, off, 0, n, no, or an appropriate text response.\n"
    )
    print(instructions)
    env_vars = {
        'WHISPER_MODEL': ('Whisper Model', 'Enter the Whisper model you want to run: tiny, tiny.en, base, base.en, small, small.en, medium, medium.en, large, distil-large-v2, distil-medium.en, distil-small.en', 'medium'),
        'WEBHOOKPORT': ('Webhook Port', 'Default listening port for subgen.py', '9000'),
        'TRANSCRIBE_DEVICE': ('Transcribe Device', 'Set as cpu or gpu', 'gpu'),
        'DEBUG': ('Debug', 'Enable debug logging', 'True'),
        'CLEAR_VRAM_ON_COMPLETE': ('Clear VRAM', 'Attempt to clear VRAM when complete (Windows users may need to set this to False)', 'False'),
        'APPEND': ('Append', 'Append \'Transcribed by whisper\' to generated subtitle', 'False'),
    }

    # Dictionary to hold the user's input
    user_input = {}

    # Prompt the user for each environment variable and write to .env file
    with open('subgen.env', 'w') as file:
        for var, (description, prompt, default) in env_vars.items():
            value = input(f"{prompt} [{default}]: ") or default
            file.write(f"{var}={value}\n")

    print("Environment variables have been saved to subgen.env")

def load_env_variables(env_filename='subgen.env'):
    """
    Loads environment variables from a specified .env file and sets them.
    """
    try:
        with open(env_filename, 'r') as file:
            for line in file:
                var, value = line.strip().split('=', 1)
                os.environ[var] = value

        print(f"Environment variables have been loaded from {env_filename}")

    except FileNotFoundError:
        print(f"{env_filename} file not found. Please run prompt_and_save_bazarr_env_variables() first.")

def main():
    # Check if the script is run with 'python' or 'python3'
    if 'python3' in sys.executable:
        python_cmd = 'python3'
    elif 'python' in sys.executable:
        python_cmd = 'python'
    else:
        print("Script started with an unknown command")
        sys.exit(1)
    if sys.version_info[0] < 3:
        print(f"This script requires Python 3 or higher, you are running {sys.version}")
        sys.exit(1)  # Terminate the script

    # Make sure we're saving subgen.py and subgen.env in the right folder
    os.chdir(os.path.dirname(os.path.abspath(__file__)))

    # Construct the argument parser
    parser = argparse.ArgumentParser(prog="python launcher.py", formatter_class=argparse.ArgumentDefaultsHelpFormatter)
    parser.add_argument('-d', '--debug', default=False, action='store_true', help="Enable console debugging")
    parser.add_argument('-i', '--install', default=False, action='store_true', help="Install/update all necessary packages")
    parser.add_argument('-a', '--append', default=False, action='store_true', help="Append 'Transcribed by whisper' to generated subtitle")
    parser.add_argument('-u', '--update', default=False, action='store_true', help="Update Subgen")
    parser.add_argument('-x', '--exit-early', default=False, action='store_true', help="Exit without running subgen.py")
    parser.add_argument('-s', '--setup-bazarr', default=False, action='store_true', help="Prompt for common Bazarr setup parameters and save them for future runs")
    parser.add_argument('-b', '--branch', type=str, default='main', help='Specify the branch to download from')
    parser.add_argument('-l', '--launcher-update', default=False, action='store_true', help="Update launcher.py and re-launch")

    args = parser.parse_args()

    # Get the branch name from the BRANCH environment variable or default to 'main'
    branch_name = args.branch if args.branch != 'main' else os.getenv('BRANCH', 'main')
    # Determine the script name based on the branch name
    script_name = f"-{branch_name}.py" if branch_name != "main" else ".py"

    # Check whether we need to update the launcher
    if args.launcher_update or convert_to_bool(os.getenv('LAUNCHER_UPDATE')):
        print(f"Updating launcher.py from GitHub branch {branch_name}...")
        download_from_github(f"https://raw.githubusercontent.com/McCloudS/subgen/{branch_name}/launcher.py", f'launcher{script_name}')

        # Prepare the arguments to exclude update triggers
        excluded_args = ['--launcher-update', '-l']
        new_args = [arg for arg in sys.argv[1:] if arg not in excluded_args]
        if branch_name == 'main' and args.launcher_update:
            print("Running launcher.py for the 'main' branch.")
            os.execl(sys.executable, sys.executable, "launcher.py", *new_args)
        elif args.launcher_update:
            print(f"Running launcher-{branch_name}.py for the '{branch_name}' branch.")
            os.execl(sys.executable, sys.executable, f"launcher{script_name}", *new_args)

    # Set environment variables based on the parsed arguments
    os.environ['DEBUG'] = str(args.debug)
    os.environ['APPEND'] = str(args.append)

    if args.setup_bazarr:
        prompt_and_save_bazarr_env_variables()
        load_env_variables()

    # URL to the requirements.txt file on GitHub
    requirements_url = "https://raw.githubusercontent.com/McCloudS/subgen/main/requirements.txt"
    requirements_file = "requirements.txt"

    # Install packages from requirements.txt if the --install argument is set
    if args.install:
        download_from_github(requirements_url, requirements_file)
        install_packages_from_requirements(requirements_file)

    # Check if the script exists or if the UPDATE environment variable is set to True
    if not os.path.exists(f'subgen{script_name}') or args.update or convert_to_bool(os.getenv('UPDATE')):
        print(f"Downloading subgen.py from GitHub branch {branch_name}...")
        download_from_github(f"https://raw.githubusercontent.com/McCloudS/subgen/{branch_name}/subgen.py", f'subgen{script_name}')
    else:
        print("subgen.py exists and UPDATE is set to False, skipping download.")

    if not args.exit_early:
        print(f'Launching subgen{script_name}')
        if branch_name != 'main':
            subprocess.run([f'{python_cmd}', '-u', f'subgen{script_name}'], check=True)
        else:
            subprocess.run([f'{python_cmd}', '-u', 'subgen.py'], check=True)
    else:
        print("Not running subgen.py: -x or --exit-early set")

if __name__ == "__main__":
    main()
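The branch handling in `main()` decides which files are downloaded and launched: on `main` the launcher uses `subgen.py`, on any other branch it uses `subgen-<branch>.py`, and a `-b` value wins over the `BRANCH` environment variable only when it is not `main`. A minimal, side-effect-free sketch of that logic makes the precedence easy to check; the function names are hypothetical and `dev` is only an example branch name.

```python
import os


def resolve_branch(cli_branch, environ=os.environ):
    """Mirror launcher.py: a non-default -b wins, else $BRANCH, else 'main'."""
    return cli_branch if cli_branch != 'main' else environ.get('BRANCH', 'main')


def script_suffix(branch_name):
    """'.py' on main, '-<branch>.py' on any other branch (e.g. subgen-dev.py)."""
    return f"-{branch_name}.py" if branch_name != "main" else ".py"


def raw_url(branch_name, filename):
    """Raw GitHub URL pattern that launcher.py downloads from."""
    return f"https://raw.githubusercontent.com/McCloudS/subgen/{branch_name}/{filename}"


if __name__ == "__main__":
    branch = resolve_branch('main', {'BRANCH': 'dev'})
    print(branch, f"subgen{script_suffix(branch)}", raw_url(branch, "subgen.py"))
```

One consequence is visible here: passing `-b main` explicitly cannot override a `BRANCH` variable, because `main` is also the flag's default.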

requirements.txt

ADDED


@@ -0,0 +1,10 @@


numpy
stable-ts
fastapi
requests
faster-whisper
uvicorn
python-multipart
python-ffmpeg
whisper
watchdog
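Every entry in requirements.txt is an unpinned distribution name, so a quick way to see what `launcher.py -i` actually installed is to ask `importlib.metadata` for each name. A minimal sketch, assuming Python 3.8+ and that the file keeps using bare names without version specifiers; `read_requirement_names` and `installed_versions` are hypothetical helpers.

```python
from importlib import metadata


def read_requirement_names(path="requirements.txt"):
    """Read bare package names, ignoring blank lines and '#' comments."""
    with open(path, encoding="utf-8") as handle:
        return [line.strip() for line in handle
                if line.strip() and not line.lstrip().startswith('#')]


def installed_versions(requirement_names):
    """Map each distribution name to its installed version, or None if missing."""
    versions = {}
    for name in requirement_names:
        try:
            versions[name] = metadata.version(name)
        except metadata.PackageNotFoundError:
            versions[name] = None
    return versions


if __name__ == "__main__":
    for name, version in installed_versions(read_requirement_names()).items():
        print(f"{name}: {version or 'NOT INSTALLED'}")
```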

subgen.env

ADDED


@@ -0,0 +1,6 @@


WHISPER_MODEL=medium
WEBHOOKPORT=9000
TRANSCRIBE_DEVICE=gpu
DEBUG=True
CLEAR_VRAM_ON_COMPLETE=False
APPEND=False
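These six lines match what `prompt_and_save_bazarr_env_variables()` in launcher.py writes when every prompt is accepted with its default. launcher.py's `load_env_variables()` unpacks `line.strip().split('=', 1)` for every line, so a blank or comment-only line in this file would raise `ValueError`. A slightly more tolerant loader is sketched below; the name `load_env_file` and the comment-skipping rule are assumptions, not part of subgen.

```python
import os


def load_env_file(path="subgen.env", environ=os.environ):
    """Load KEY=VALUE lines into `environ`, skipping blanks and '#' comments.

    Returns the number of variables that were set.
    """
    count = 0
    with open(path, encoding="utf-8") as handle:
        for raw in handle:
            line = raw.strip()
            if not line or line.startswith('#') or '=' not in line:
                continue  # tolerate blank lines, comments and malformed lines
            key, value = line.split('=', 1)  # values may contain '='
            environ[key.strip()] = value.strip()
            count += 1
    return count


if __name__ == "__main__":
    print(f"Loaded {load_env_file()} variables from subgen.env")
```

Passing a plain dict as `environ` lets the loader be exercised without touching the real process environment.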

subgen.py

ADDED


@@ -0,0 +1,1045 @@
