Spaces:

Runtime error

Runtime error

Commit

•

2e4274a

1

Parent(s):

663fe1d

add all application files

Browse files- LICENSE +201 -0

- README.md +48 -7

- apps/.DS_Store +0 -0

- apps/app.py +386 -0

- apps/app_utils.py +262 -0

- apps/data_utils.py +150 -0

- apps/visualization_utils.py +159 -0

- requirements.txt +10 -0

- scripts/download_models.py +70 -0

- scripts/install_dependencies.py +41 -0

- scripts/launch_app.py +41 -0

- setup.py +50 -0

- src/.DS_Store +0 -0

- src/__init__.py +0 -0

- src/content_preservation.py +366 -0

- src/style_classification.py +245 -0

- src/style_transfer.py +94 -0

- src/transformer_interpretability.py +148 -0

- static/images/app_screenshot.png +0 -0

- static/images/cldr-favicon.ico +0 -0

- static/images/ffllogo2@1x.png +0 -0

- tests/__init__.py +0 -0

- tests/test_model_classes.py +164 -0

LICENSE

ADDED

|

@@ -0,0 +1,201 @@

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

| 1 |

+

Apache License

|

| 2 |

+

Version 2.0, January 2004

|

| 3 |

+

http://www.apache.org/licenses/

|

| 4 |

+

|

| 5 |

+

TERMS AND CONDITIONS FOR USE, REPRODUCTION, AND DISTRIBUTION

|

| 6 |

+

|

| 7 |

+

1. Definitions.

|

| 8 |

+

|

| 9 |

+

"License" shall mean the terms and conditions for use, reproduction,

|

| 10 |

+

and distribution as defined by Sections 1 through 9 of this document.

|

| 11 |

+

|

| 12 |

+

"Licensor" shall mean the copyright owner or entity authorized by

|

| 13 |

+

the copyright owner that is granting the License.

|

| 14 |

+

|

| 15 |

+

"Legal Entity" shall mean the union of the acting entity and all

|

| 16 |

+

other entities that control, are controlled by, or are under common

|

| 17 |

+

control with that entity. For the purposes of this definition,

|

| 18 |

+

"control" means (i) the power, direct or indirect, to cause the

|

| 19 |

+

direction or management of such entity, whether by contract or

|

| 20 |

+

otherwise, or (ii) ownership of fifty percent (50%) or more of the

|

| 21 |

+

outstanding shares, or (iii) beneficial ownership of such entity.

|

| 22 |

+

|

| 23 |

+

"You" (or "Your") shall mean an individual or Legal Entity

|

| 24 |

+

exercising permissions granted by this License.

|

| 25 |

+

|

| 26 |

+

"Source" form shall mean the preferred form for making modifications,

|

| 27 |

+

including but not limited to software source code, documentation

|

| 28 |

+

source, and configuration files.

|

| 29 |

+

|

| 30 |

+

"Object" form shall mean any form resulting from mechanical

|

| 31 |

+

transformation or translation of a Source form, including but

|

| 32 |

+

not limited to compiled object code, generated documentation,

|

| 33 |

+

and conversions to other media types.

|

| 34 |

+

|

| 35 |

+

"Work" shall mean the work of authorship, whether in Source or

|

| 36 |

+

Object form, made available under the License, as indicated by a

|

| 37 |

+

copyright notice that is included in or attached to the work

|

| 38 |

+

(an example is provided in the Appendix below).

|

| 39 |

+

|

| 40 |

+

"Derivative Works" shall mean any work, whether in Source or Object

|

| 41 |

+

form, that is based on (or derived from) the Work and for which the

|

| 42 |

+

editorial revisions, annotations, elaborations, or other modifications

|

| 43 |

+

represent, as a whole, an original work of authorship. For the purposes

|

| 44 |

+

of this License, Derivative Works shall not include works that remain

|

| 45 |

+

separable from, or merely link (or bind by name) to the interfaces of,

|

| 46 |

+

the Work and Derivative Works thereof.

|

| 47 |

+

|

| 48 |

+

"Contribution" shall mean any work of authorship, including

|

| 49 |

+

the original version of the Work and any modifications or additions

|

| 50 |

+

to that Work or Derivative Works thereof, that is intentionally

|

| 51 |

+

submitted to Licensor for inclusion in the Work by the copyright owner

|

| 52 |

+

or by an individual or Legal Entity authorized to submit on behalf of

|

| 53 |

+

the copyright owner. For the purposes of this definition, "submitted"

|

| 54 |

+

means any form of electronic, verbal, or written communication sent

|

| 55 |

+

to the Licensor or its representatives, including but not limited to

|

| 56 |

+

communication on electronic mailing lists, source code control systems,

|

| 57 |

+

and issue tracking systems that are managed by, or on behalf of, the

|

| 58 |

+

Licensor for the purpose of discussing and improving the Work, but

|

| 59 |

+

excluding communication that is conspicuously marked or otherwise

|

| 60 |

+

designated in writing by the copyright owner as "Not a Contribution."

|

| 61 |

+

|

| 62 |

+

"Contributor" shall mean Licensor and any individual or Legal Entity

|

| 63 |

+

on behalf of whom a Contribution has been received by Licensor and

|

| 64 |

+

subsequently incorporated within the Work.

|

| 65 |

+

|

| 66 |

+

2. Grant of Copyright License. Subject to the terms and conditions of

|

| 67 |

+

this License, each Contributor hereby grants to You a perpetual,

|

| 68 |

+

worldwide, non-exclusive, no-charge, royalty-free, irrevocable

|

| 69 |

+

copyright license to reproduce, prepare Derivative Works of,

|

| 70 |

+

publicly display, publicly perform, sublicense, and distribute the

|

| 71 |

+

Work and such Derivative Works in Source or Object form.

|

| 72 |

+

|

| 73 |

+

3. Grant of Patent License. Subject to the terms and conditions of

|

| 74 |

+

this License, each Contributor hereby grants to You a perpetual,

|

| 75 |

+

worldwide, non-exclusive, no-charge, royalty-free, irrevocable

|

| 76 |

+

(except as stated in this section) patent license to make, have made,

|

| 77 |

+

use, offer to sell, sell, import, and otherwise transfer the Work,

|

| 78 |

+

where such license applies only to those patent claims licensable

|

| 79 |

+

by such Contributor that are necessarily infringed by their

|

| 80 |

+

Contribution(s) alone or by combination of their Contribution(s)

|

| 81 |

+

with the Work to which such Contribution(s) was submitted. If You

|

| 82 |

+

institute patent litigation against any entity (including a

|

| 83 |

+

cross-claim or counterclaim in a lawsuit) alleging that the Work

|

| 84 |

+

or a Contribution incorporated within the Work constitutes direct

|

| 85 |

+

or contributory patent infringement, then any patent licenses

|

| 86 |

+

granted to You under this License for that Work shall terminate

|

| 87 |

+

as of the date such litigation is filed.

|

| 88 |

+

|

| 89 |

+

4. Redistribution. You may reproduce and distribute copies of the

|

| 90 |

+

Work or Derivative Works thereof in any medium, with or without

|

| 91 |

+

modifications, and in Source or Object form, provided that You

|

| 92 |

+

meet the following conditions:

|

| 93 |

+

|

| 94 |

+

(a) You must give any other recipients of the Work or

|

| 95 |

+

Derivative Works a copy of this License; and

|

| 96 |

+

|

| 97 |

+

(b) You must cause any modified files to carry prominent notices

|

| 98 |

+

stating that You changed the files; and

|

| 99 |

+

|

| 100 |

+

(c) You must retain, in the Source form of any Derivative Works

|

| 101 |

+

that You distribute, all copyright, patent, trademark, and

|

| 102 |

+

attribution notices from the Source form of the Work,

|

| 103 |

+

excluding those notices that do not pertain to any part of

|

| 104 |

+

the Derivative Works; and

|

| 105 |

+

|

| 106 |

+

(d) If the Work includes a "NOTICE" text file as part of its

|

| 107 |

+

distribution, then any Derivative Works that You distribute must

|

| 108 |

+

include a readable copy of the attribution notices contained

|

| 109 |

+

within such NOTICE file, excluding those notices that do not

|

| 110 |

+

pertain to any part of the Derivative Works, in at least one

|

| 111 |

+

of the following places: within a NOTICE text file distributed

|

| 112 |

+

as part of the Derivative Works; within the Source form or

|

| 113 |

+

documentation, if provided along with the Derivative Works; or,

|

| 114 |

+

within a display generated by the Derivative Works, if and

|

| 115 |

+

wherever such third-party notices normally appear. The contents

|

| 116 |

+

of the NOTICE file are for informational purposes only and

|

| 117 |

+

do not modify the License. You may add Your own attribution

|

| 118 |

+

notices within Derivative Works that You distribute, alongside

|

| 119 |

+

or as an addendum to the NOTICE text from the Work, provided

|

| 120 |

+

that such additional attribution notices cannot be construed

|

| 121 |

+

as modifying the License.

|

| 122 |

+

|

| 123 |

+

You may add Your own copyright statement to Your modifications and

|

| 124 |

+

may provide additional or different license terms and conditions

|

| 125 |

+

for use, reproduction, or distribution of Your modifications, or

|

| 126 |

+

for any such Derivative Works as a whole, provided Your use,

|

| 127 |

+

reproduction, and distribution of the Work otherwise complies with

|

| 128 |

+

the conditions stated in this License.

|

| 129 |

+

|

| 130 |

+

5. Submission of Contributions. Unless You explicitly state otherwise,

|

| 131 |

+

any Contribution intentionally submitted for inclusion in the Work

|

| 132 |

+

by You to the Licensor shall be under the terms and conditions of

|

| 133 |

+

this License, without any additional terms or conditions.

|

| 134 |

+

Notwithstanding the above, nothing herein shall supersede or modify

|

| 135 |

+

the terms of any separate license agreement you may have executed

|

| 136 |

+

with Licensor regarding such Contributions.

|

| 137 |

+

|

| 138 |

+

6. Trademarks. This License does not grant permission to use the trade

|

| 139 |

+

names, trademarks, service marks, or product names of the Licensor,

|

| 140 |

+

except as required for reasonable and customary use in describing the

|

| 141 |

+

origin of the Work and reproducing the content of the NOTICE file.

|

| 142 |

+

|

| 143 |

+

7. Disclaimer of Warranty. Unless required by applicable law or

|

| 144 |

+

agreed to in writing, Licensor provides the Work (and each

|

| 145 |

+

Contributor provides its Contributions) on an "AS IS" BASIS,

|

| 146 |

+

WITHOUT WARRANTIES OR CONDITIONS OF ANY KIND, either express or

|

| 147 |

+

implied, including, without limitation, any warranties or conditions

|

| 148 |

+

of TITLE, NON-INFRINGEMENT, MERCHANTABILITY, or FITNESS FOR A

|

| 149 |

+

PARTICULAR PURPOSE. You are solely responsible for determining the

|

| 150 |

+

appropriateness of using or redistributing the Work and assume any

|

| 151 |

+

risks associated with Your exercise of permissions under this License.

|

| 152 |

+

|

| 153 |

+

8. Limitation of Liability. In no event and under no legal theory,

|

| 154 |

+

whether in tort (including negligence), contract, or otherwise,

|

| 155 |

+

unless required by applicable law (such as deliberate and grossly

|

| 156 |

+

negligent acts) or agreed to in writing, shall any Contributor be

|

| 157 |

+

liable to You for damages, including any direct, indirect, special,

|

| 158 |

+

incidental, or consequential damages of any character arising as a

|

| 159 |

+

result of this License or out of the use or inability to use the

|

| 160 |

+

Work (including but not limited to damages for loss of goodwill,

|

| 161 |

+

work stoppage, computer failure or malfunction, or any and all

|

| 162 |

+

other commercial damages or losses), even if such Contributor

|

| 163 |

+

has been advised of the possibility of such damages.

|

| 164 |

+

|

| 165 |

+

9. Accepting Warranty or Additional Liability. While redistributing

|

| 166 |

+

the Work or Derivative Works thereof, You may choose to offer,

|

| 167 |

+

and charge a fee for, acceptance of support, warranty, indemnity,

|

| 168 |

+

or other liability obligations and/or rights consistent with this

|

| 169 |

+

License. However, in accepting such obligations, You may act only

|

| 170 |

+

on Your own behalf and on Your sole responsibility, not on behalf

|

| 171 |

+

of any other Contributor, and only if You agree to indemnify,

|

| 172 |

+

defend, and hold each Contributor harmless for any liability

|

| 173 |

+

incurred by, or claims asserted against, such Contributor by reason

|

| 174 |

+

of your accepting any such warranty or additional liability.

|

| 175 |

+

|

| 176 |

+

END OF TERMS AND CONDITIONS

|

| 177 |

+

|

| 178 |

+

APPENDIX: How to apply the Apache License to your work.

|

| 179 |

+

|

| 180 |

+

To apply the Apache License to your work, attach the following

|

| 181 |

+

boilerplate notice, with the fields enclosed by brackets "[]"

|

| 182 |

+

replaced with your own identifying information. (Don't include

|

| 183 |

+

the brackets!) The text should be enclosed in the appropriate

|

| 184 |

+

comment syntax for the file format. We also recommend that a

|

| 185 |

+

file or class name and description of purpose be included on the

|

| 186 |

+

same "printed page" as the copyright notice for easier

|

| 187 |

+

identification within third-party archives.

|

| 188 |

+

|

| 189 |

+

Copyright [yyyy] [name of copyright owner]

|

| 190 |

+

|

| 191 |

+

Licensed under the Apache License, Version 2.0 (the "License");

|

| 192 |

+

you may not use this file except in compliance with the License.

|

| 193 |

+

You may obtain a copy of the License at

|

| 194 |

+

|

| 195 |

+

http://www.apache.org/licenses/LICENSE-2.0

|

| 196 |

+

|

| 197 |

+

Unless required by applicable law or agreed to in writing, software

|

| 198 |

+

distributed under the License is distributed on an "AS IS" BASIS,

|

| 199 |

+

WITHOUT WARRANTIES OR CONDITIONS OF ANY KIND, either express or implied.

|

| 200 |

+

See the License for the specific language governing permissions and

|

| 201 |

+

limitations under the License.

|

README.md

CHANGED

|

@@ -1,13 +1,54 @@

|

|

| 1 |

---

|

| 2 |

-

title: Exploring Intelligent Writing Assistance

|

| 3 |

-

emoji:

|

| 4 |

-

colorFrom:

|

| 5 |

-

colorTo:

|

| 6 |

sdk: streamlit

|

| 7 |

sdk_version: 1.10.0

|

| 8 |

-

app_file: app.py

|

| 9 |

-

|

|

|

|

| 10 |

license: apache-2.0

|

| 11 |

---

|

| 12 |

|

| 13 |

-

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

| 1 |

---

|

| 2 |

+

title: Exploring Intelligent Writing Assistance with Text Style Transfer

|

| 3 |

+

emoji: :twisted_rightwards_arrows:

|

| 4 |

+

colorFrom: blue

|

| 5 |

+

colorTo: green

|

| 6 |

sdk: streamlit

|

| 7 |

sdk_version: 1.10.0

|

| 8 |

+

app_file: apps/app.py

|

| 9 |

+

models: ["sentence-transformers/all-MiniLM-L6-v2", "cffl/bert-base-styleclassification-subjective-neutral", "cffl/bart-base-styletransfer-subjective-to-neutral", "cointegrated/roberta-base-formality", "prithivida/informal_to_formal_styletransfer"]

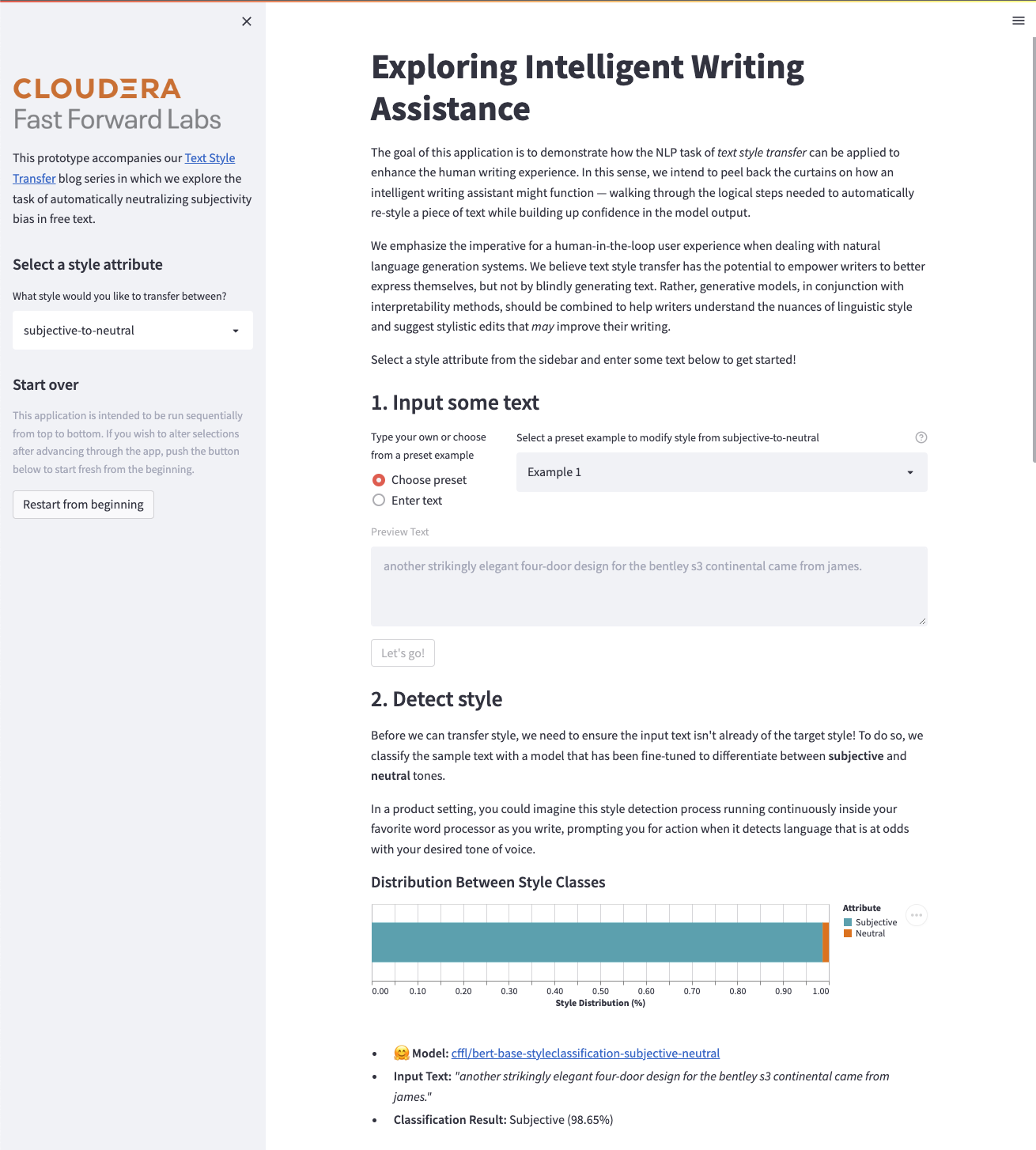

|

| 10 |

+

pinned: true

|

| 11 |

license: apache-2.0

|

| 12 |

---

|

| 13 |

|

| 14 |

+

# Exploring Intelligent Writing Assistance

|

| 15 |

+

|

| 16 |

+

A demonstration of how the NLP task of _text style transfer_ can be applied to enhance the human writing experience using [HuggingFace Transformers](https://huggingface.co/) and [Streamlit](https://streamlit.io/).

|

| 17 |

+

|

| 18 |

+

|

| 19 |

+

|

| 20 |

+

> This repo accompanies Cloudera Fast Forward Labs' [blog series](https://blog.fastforwardlabs.com/2022/03/22/an-introduction-to-text-style-transfer.html) in which we explore the task of automatically neutralizing subjectivity bias in free text.

|

| 21 |

+

|

| 22 |

+

The goal of this application is to demonstrate how the NLP task of text style transfer can be applied to enhance the human writing experience. In this sense, we intend to peel back the curtains on how an intelligent writing assistant might function — walking through the logical steps needed to automatically re-style a piece of text (from informal-to-formal **or** subjective-to-neutral) while building up confidence in the model output.

|

| 23 |

+

|

| 24 |

+

Through the application, we emphasize the imperative for a human-in-the-loop user experience when designing natural language generation systems. We believe text style transfer has the potential to empower writers to better express themselves, but not by blindly generating text. Rather, generative models, in conjunction with interpretability methods, should be combined to help writers understand the nuances of linguistic style and suggest stylistic edits that may improve their writing.

|

| 25 |

+

|

| 26 |

+

## Project Structure

|

| 27 |

+

|

| 28 |

+

```

|

| 29 |

+

.

|

| 30 |

+

├── LICENSE

|

| 31 |

+

├── README.md

|

| 32 |

+

├── apps

|

| 33 |

+

│ ├── app.py

|

| 34 |

+

│ ├── app_utils.py

|

| 35 |

+

│ ├── data_utils.py

|

| 36 |

+

│ └── visualization_utils.py

|

| 37 |

+

├── requirements.txt

|

| 38 |

+

├── scripts

|

| 39 |

+

│ ├── download_models.py

|

| 40 |

+

│ ├── install_dependencies.py

|

| 41 |

+

│ └── launch_app.py

|

| 42 |

+

├── setup.py

|

| 43 |

+

├── src

|

| 44 |

+

│ ├── __init__.py

|

| 45 |

+

│ ├── content_preservation.py

|

| 46 |

+

│ ├── style_classification.py

|

| 47 |

+

│ ├── style_transfer.py

|

| 48 |

+

│ └── transformer_interpretability.py

|

| 49 |

+

├── static

|

| 50 |

+

│ └── images

|

| 51 |

+

└── tests

|

| 52 |

+

├── __init__.py

|

| 53 |

+

└── test_model_classes.py

|

| 54 |

+

```

|

apps/.DS_Store

ADDED

|

Binary file (6.15 kB). View file

|

|

|

apps/app.py

ADDED

|

@@ -0,0 +1,386 @@

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

| 1 |

+

# ###########################################################################

|

| 2 |

+

#

|

| 3 |

+

# CLOUDERA APPLIED MACHINE LEARNING PROTOTYPE (AMP)

|

| 4 |

+

# (C) Cloudera, Inc. 2022

|

| 5 |

+

# All rights reserved.

|

| 6 |

+

#

|

| 7 |

+

# Applicable Open Source License: Apache 2.0

|

| 8 |

+

#

|

| 9 |

+

# NOTE: Cloudera open source products are modular software products

|

| 10 |

+

# made up of hundreds of individual components, each of which was

|

| 11 |

+

# individually copyrighted. Each Cloudera open source product is a

|

| 12 |

+

# collective work under U.S. Copyright Law. Your license to use the

|

| 13 |

+

# collective work is as provided in your written agreement with

|

| 14 |

+

# Cloudera. Used apart from the collective work, this file is

|

| 15 |

+

# licensed for your use pursuant to the open source license

|

| 16 |

+

# identified above.

|

| 17 |

+

#

|

| 18 |

+

# This code is provided to you pursuant a written agreement with

|

| 19 |

+

# (i) Cloudera, Inc. or (ii) a third-party authorized to distribute

|

| 20 |

+

# this code. If you do not have a written agreement with Cloudera nor

|

| 21 |

+

# with an authorized and properly licensed third party, you do not

|

| 22 |

+

# have any rights to access nor to use this code.

|

| 23 |

+

#

|

| 24 |

+

# Absent a written agreement with Cloudera, Inc. (“Cloudera”) to the

|

| 25 |

+

# contrary, A) CLOUDERA PROVIDES THIS CODE TO YOU WITHOUT WARRANTIES OF ANY

|

| 26 |

+

# KIND; (B) CLOUDERA DISCLAIMS ANY AND ALL EXPRESS AND IMPLIED

|

| 27 |

+

# WARRANTIES WITH RESPECT TO THIS CODE, INCLUDING BUT NOT LIMITED TO

|

| 28 |

+

# IMPLIED WARRANTIES OF TITLE, NON-INFRINGEMENT, MERCHANTABILITY AND

|

| 29 |

+

# FITNESS FOR A PARTICULAR PURPOSE; (C) CLOUDERA IS NOT LIABLE TO YOU,

|

| 30 |

+

# AND WILL NOT DEFEND, INDEMNIFY, NOR HOLD YOU HARMLESS FOR ANY CLAIMS

|

| 31 |

+

# ARISING FROM OR RELATED TO THE CODE; AND (D)WITH RESPECT TO YOUR EXERCISE

|

| 32 |

+

# OF ANY RIGHTS GRANTED TO YOU FOR THE CODE, CLOUDERA IS NOT LIABLE FOR ANY

|

| 33 |

+

# DIRECT, INDIRECT, INCIDENTAL, SPECIAL, EXEMPLARY, PUNITIVE OR

|

| 34 |

+

# CONSEQUENTIAL DAMAGES INCLUDING, BUT NOT LIMITED TO, DAMAGES

|

| 35 |

+

# RELATED TO LOST REVENUE, LOST PROFITS, LOSS OF INCOME, LOSS OF

|

| 36 |

+

# BUSINESS ADVANTAGE OR UNAVAILABILITY, OR LOSS OR CORRUPTION OF

|

| 37 |

+

# DATA.

|

| 38 |

+

#

|

| 39 |

+

# ###########################################################################

|

| 40 |

+

|

| 41 |

+

import pandas as pd

|

| 42 |

+

from PIL import Image

|

| 43 |

+

import streamlit as st

|

| 44 |

+

import streamlit.components.v1 as components

|

| 45 |

+

|

| 46 |

+

from apps.data_utils import (

|

| 47 |

+

DATA_PACKET,

|

| 48 |

+

format_classification_results,

|

| 49 |

+

)

|

| 50 |

+

from apps.app_utils import (

|

| 51 |

+

DisableableButton,

|

| 52 |

+

reset_page_progress_state,

|

| 53 |

+

get_cached_style_intensity_classifier,

|

| 54 |

+

get_cached_word_attributions,

|

| 55 |

+

get_sti_metric,

|

| 56 |

+

get_cps_metric,

|

| 57 |

+

generate_style_transfer,

|

| 58 |

+

)

|

| 59 |

+

from apps.visualization_utils import build_altair_classification_plot

|

| 60 |

+

|

| 61 |

+

# SESSION STATE UTILS

|

| 62 |

+

if "page_progress" not in st.session_state:

|

| 63 |

+

st.session_state.page_progress = 1

|

| 64 |

+

|

| 65 |

+

if "st_result" not in st.session_state:

|

| 66 |

+

st.session_state.st_result = False

|

| 67 |

+

|

| 68 |

+

|

| 69 |

+

# PAGE CONFIG

|

| 70 |

+

ffl_favicon = Image.open("static/images/cldr-favicon.ico")

|

| 71 |

+

st.set_page_config(

|

| 72 |

+

page_title="CFFL: Text Style Transfer",

|

| 73 |

+

page_icon=ffl_favicon,

|

| 74 |

+

layout="centered",

|

| 75 |

+

initial_sidebar_state="expanded",

|

| 76 |

+

)

|

| 77 |

+

|

| 78 |

+

# SIDEBAR

|

| 79 |

+

ffl_logo = Image.open("static/images/ffllogo2@1x.png")

|

| 80 |

+

st.sidebar.image(ffl_logo)

|

| 81 |

+

|

| 82 |

+

st.sidebar.write(

|

| 83 |

+

"This prototype accompanies our [Text Style Transfer](https://blog.fastforwardlabs.com/2022/03/22/an-introduction-to-text-style-transfer.html)\

|

| 84 |

+

blog series in which we explore the task of automatically neutralizing subjectivity bias in free text."

|

| 85 |

+

)

|

| 86 |

+

|

| 87 |

+

st.sidebar.markdown("## Select a style attribute")

|

| 88 |

+

style_attribute = st.sidebar.selectbox(

|

| 89 |

+

"What style would you like to transfer between?", options=DATA_PACKET.keys()

|

| 90 |

+

)

|

| 91 |

+

STYLE_ATTRIBUTE_DATA = DATA_PACKET[style_attribute]

|

| 92 |

+

|

| 93 |

+

st.sidebar.markdown("## Start over")

|

| 94 |

+

st.sidebar.caption(

|

| 95 |

+

"This application is intended to be run sequentially from top to bottom. If you wish to alter selections after \

|

| 96 |

+

advancing through the app, push the button below to start fresh from the beginning."

|

| 97 |

+

)

|

| 98 |

+

st.sidebar.button("Restart from beginning", on_click=reset_page_progress_state)

|

| 99 |

+

|

| 100 |

+

# MAIN CONTENT

|

| 101 |

+

st.markdown("# Exploring Intelligent Writing Assistance")

|

| 102 |

+

|

| 103 |

+

st.write(

|

| 104 |

+

"""

|

| 105 |

+

The goal of this application is to demonstrate how the NLP task of _text style transfer_ can be applied to enhance the human writing experience.

|

| 106 |

+

In this sense, we intend to peel back the curtains on how an intelligent writing assistant might function — walking through the logical steps needed to

|

| 107 |

+

automatically re-style a piece of text while building up confidence in the model output.

|

| 108 |

+

|

| 109 |

+

We emphasize the imperative for a human-in-the-loop user experience when designing natural language generation systems. We believe text style

|

| 110 |

+

transfer has the potential to empower writers to better express themselves, but not by blindly generating text. Rather, generative models, in conjunction with

|

| 111 |

+

interpretability methods, should be combined to help writers understand the nuances of linguistic style and suggest stylistic edits that _may_ improve their writing.

|

| 112 |

+

|

| 113 |

+

Select a style attribute from the sidebar and enter some text below to get started!

|

| 114 |

+

"""

|

| 115 |

+

)

|

| 116 |

+

|

| 117 |

+

## 1. INPUT EXAMPLE

|

| 118 |

+

if st.session_state.page_progress > 0:

|

| 119 |

+

st.write("### 1. Input some text")

|

| 120 |

+

|

| 121 |

+

with st.container():

|

| 122 |

+

|

| 123 |

+

col1_1, col1_2 = st.columns([1, 3])

|

| 124 |

+

with col1_1:

|

| 125 |

+

input_type = st.radio(

|

| 126 |

+

"Type your own or choose from a preset example",

|

| 127 |

+

("Choose preset", "Enter text"),

|

| 128 |

+

horizontal=False,

|

| 129 |

+

)

|

| 130 |

+

with col1_2:

|

| 131 |

+

if input_type == "Enter text":

|

| 132 |

+

text_sample = st.text_input(

|

| 133 |

+

f"Enter some text to modify style from {style_attribute}",

|

| 134 |

+

help="You can also select one of our preset examples by toggling the radio button to the left.",

|

| 135 |

+

)

|

| 136 |

+

else:

|

| 137 |

+

option = st.selectbox(

|

| 138 |

+

f"Select a preset example to modify style from {style_attribute}",

|

| 139 |

+

[

|

| 140 |

+

f"Example {i+1}"

|

| 141 |

+

for i in range(len(STYLE_ATTRIBUTE_DATA.examples))

|

| 142 |

+

],

|

| 143 |

+

help="You can also enter your own text by toggling the radio button to the left.",

|

| 144 |

+

)

|

| 145 |

+

|

| 146 |

+

idx_key = int(option.split(" ")[-1]) - 1

|

| 147 |

+

text_sample = STYLE_ATTRIBUTE_DATA.examples[idx_key]

|

| 148 |

+

|

| 149 |

+

st.text_area(

|

| 150 |

+

"Preview Text",

|

| 151 |

+

value=text_sample,

|

| 152 |

+

placeholder="Enter some text above or toggle to choose a preset!",

|

| 153 |

+

disabled=True,

|

| 154 |

+

)

|

| 155 |

+

|

| 156 |

+

if text_sample != "":

|

| 157 |

+

db1 = DisableableButton(1, "Let's go!")

|

| 158 |

+

db1.create_enabled_button()

|

| 159 |

+

|

| 160 |

+

## 2. CLASSIFY INPUT

|

| 161 |

+

if st.session_state.page_progress > 1:

|

| 162 |

+

db1.disable()

|

| 163 |

+

|

| 164 |

+

st.write("### 2. Detect style")

|

| 165 |

+

st.write(

|

| 166 |

+

f"""

|

| 167 |

+

Before we can transfer style, we need to ensure the input text isn't already of the target style! To do so,

|

| 168 |

+

we classify the sample text with a model that has been fine-tuned to differentiate between

|

| 169 |

+

{STYLE_ATTRIBUTE_DATA.attribute_AND_string} tones.

|

| 170 |

+

|

| 171 |

+

In a product setting, you could imagine this style detection process running continuously inside your favorite word processor as you write,

|

| 172 |

+

prompting you for action when it detects language that is at odds with your desired tone of voice.

|

| 173 |

+

"""

|

| 174 |

+

)

|

| 175 |

+

|

| 176 |

+

with st.spinner("Detecting style, hang tight!"):

|

| 177 |

+

|

| 178 |

+

sic = get_cached_style_intensity_classifier(style_data=STYLE_ATTRIBUTE_DATA)

|

| 179 |

+

cls_result = sic.score(text_sample)

|

| 180 |

+

|

| 181 |

+

cls_result_df = pd.DataFrame(

|

| 182 |

+

cls_result[0]["distribution"],

|

| 183 |

+

columns=["Score"],

|

| 184 |

+

index=[v for k, v in sic.pipeline.model.config.id2label.items()],

|

| 185 |

+

)

|

| 186 |

+

|

| 187 |

+

with st.container():

|

| 188 |

+

|

| 189 |

+

format_cls_result = format_classification_results(

|

| 190 |

+

id2label=sic.pipeline.model.config.id2label, cls_result=cls_result

|

| 191 |

+

)

|

| 192 |

+

st.markdown("##### Distribution Between Style Classes")

|

| 193 |

+

chart = build_altair_classification_plot(format_cls_result)

|

| 194 |

+

st.altair_chart(chart.interactive(), use_container_width=True)

|

| 195 |

+

|

| 196 |

+

st.markdown(

|

| 197 |

+

f"""

|

| 198 |

+

- **:hugging_face: Model:** [{STYLE_ATTRIBUTE_DATA.cls_model_path}]({STYLE_ATTRIBUTE_DATA.build_model_url("cls")})

|

| 199 |

+

- **Input Text:** *"{text_sample}"*

|

| 200 |

+

- **Classification Result:** \t {cls_result[0]["label"].capitalize()} ({round(cls_result[0]["score"]*100, 2)}%)

|

| 201 |

+

"""

|

| 202 |

+

)

|

| 203 |

+

st.write(" ")

|

| 204 |

+

|

| 205 |

+

if cls_result[0]["label"].lower() != STYLE_ATTRIBUTE_DATA.target_attribute:

|

| 206 |

+

st.info(

|

| 207 |

+

f"""

|

| 208 |

+

**Looks like we have room for improvement!**

|

| 209 |

+

|

| 210 |

+

The distribution of classification scores suggests that the input text is more {STYLE_ATTRIBUTE_DATA.attribute_THAN_string}. Therefore,

|

| 211 |

+

an automated style transfer may improve our intended tone of voice."""

|

| 212 |

+

)

|

| 213 |

+

db2 = DisableableButton(2, "Let's see why")

|

| 214 |

+

db2.create_enabled_button()

|

| 215 |

+

else:

|

| 216 |

+

st.success(

|

| 217 |

+

f"""**No work to be done!** \n\n\n The distribution of classification scores suggests that the input text is less \

|

| 218 |

+

{STYLE_ATTRIBUTE_DATA.attribute_THAN_string}. Therefore, there's no need to transfer style. \

|

| 219 |

+

Enter a different text prompt or select one of the preset examples to re-run the analysis with."""

|

| 220 |

+

)

|

| 221 |

+

|

| 222 |

+

## 3. Here's why

|

| 223 |

+

if st.session_state.page_progress > 2:

|

| 224 |

+

db2.disable()

|

| 225 |

+

st.write("### 3. Interpret the classification result")

|

| 226 |

+

st.write(

|

| 227 |

+

f"""

|

| 228 |

+

Interpreting our model's output is a crucial practice that helps build trust and justify taking real-world action from the

|

| 229 |

+

model predictions. In this case, we apply a popular model interpretability technique called [Integrated Gradients](https://arxiv.org/pdf/1703.01365.pdf)

|

| 230 |

+

to the Transformer-based classifier to explain the model's prediction in terms of its features."""

|

| 231 |

+

)

|

| 232 |

+

|

| 233 |

+

with st.spinner("Interpreting the prediction, hang tight!"):

|

| 234 |

+

word_attributions_visual = get_cached_word_attributions(

|

| 235 |

+

text_sample=text_sample, style_data=STYLE_ATTRIBUTE_DATA

|

| 236 |

+

)

|

| 237 |

+

components.html(html=word_attributions_visual, height=200, scrolling=True)

|

| 238 |

+

|

| 239 |

+

st.write(

|

| 240 |

+

f"""

|

| 241 |

+

The visual above displays word attributions using the [Transformers Interpret](https://github.com/cdpierse/transformers-interpret) library.

|

| 242 |

+

Positive attribution values (green) indicate tokens that contribute positively towards the

|

| 243 |

+

predicted class ({STYLE_ATTRIBUTE_DATA.source_attribute}), while negative values (red) indicate tokens that contribute negatively towards the predicted class.

|

| 244 |

+

|

| 245 |

+

Visualizing word attributions is a helpful way to build intuition about what makes the input text _{STYLE_ATTRIBUTE_DATA.source_attribute}_!"""

|

| 246 |

+

)

|

| 247 |

+

db3 = DisableableButton(3, "Next")

|

| 248 |

+

db3.create_enabled_button()

|

| 249 |

+

|

| 250 |

+

|

| 251 |

+

## 4. SUGGEST GENERATED EDIT

|

| 252 |

+

if st.session_state.page_progress > 3:

|

| 253 |

+

db3.disable()

|

| 254 |

+

|

| 255 |

+

st.write("### 4. Generate a suggestion")

|

| 256 |

+

st.write(

|

| 257 |

+

f"Now that we've verified the input text is in fact *{STYLE_ATTRIBUTE_DATA.source_attribute}* and understand why that's the case, we can utilize a \

|

| 258 |

+

text style transfer model to generate a suggested replacement that retains the same semantic meaning, but achieves the *{STYLE_ATTRIBUTE_DATA.target_attribute}* target style.\

|

| 259 |

+

\n\n Expand the accordian below to toggle generation parameters, then click the button to transfer style!"

|

| 260 |

+

)

|

| 261 |

+

|

| 262 |

+

with st.expander("Toggle generation parameters"):

|

| 263 |

+

|

| 264 |

+

# st.markdown("##### Text generation parameters")

|

| 265 |

+

st.write("**max_gen_length**")

|

| 266 |

+

max_gen_length = st.slider(

|

| 267 |

+

"Whats the maximum generation length desired?", 1, 250, 200, 10

|

| 268 |

+

)

|

| 269 |

+

st.write("**num_beams**")

|

| 270 |

+

num_beams = st.slider(

|

| 271 |

+

"How many beams to use for beam-search decoding?", 1, 8, 4

|

| 272 |

+

)

|

| 273 |

+

st.write("**temperature**")

|

| 274 |

+

temperature = st.slider(

|

| 275 |

+

"What sensitivity value to model next token probabilities?",

|

| 276 |

+

0.0,

|

| 277 |

+

1.0,

|

| 278 |

+

1.0,

|

| 279 |

+

)

|

| 280 |

+

|

| 281 |

+

st.markdown(

|

| 282 |

+

f"""

|

| 283 |

+

**:hugging_face: Model:** [{STYLE_ATTRIBUTE_DATA.seq2seq_model_path}]({STYLE_ATTRIBUTE_DATA.build_model_url("seq2seq")})

|

| 284 |

+

"""

|

| 285 |

+

)

|

| 286 |

+

|

| 287 |

+

col4_1, col4_2, col4_3 = st.columns([1, 5, 4])

|

| 288 |

+

with col4_2:

|

| 289 |

+

st.markdown(

|

| 290 |

+

f"""

|

| 291 |

+

- **Max Generation Length:** {max_gen_length}

|

| 292 |

+

- **Number of Beams:** {num_beams}

|

| 293 |

+

- **Temperature:** {temperature}

|

| 294 |

+

"""

|

| 295 |

+

)

|

| 296 |

+

with col4_3:

|

| 297 |

+

with st.container():

|

| 298 |

+

st.write("")

|

| 299 |

+

st.button(

|

| 300 |

+

"Generate style transfer",

|

| 301 |

+

key="generate_text",

|

| 302 |

+

on_click=generate_style_transfer,

|

| 303 |

+

kwargs={

|

| 304 |

+

"text_sample": text_sample,

|

| 305 |

+

"style_data": STYLE_ATTRIBUTE_DATA,

|

| 306 |

+

"max_gen_length": max_gen_length,

|

| 307 |

+

"num_beams": num_beams,

|

| 308 |

+

"temperature": temperature,

|

| 309 |

+

},

|

| 310 |

+

)

|

| 311 |

+

|

| 312 |

+

if st.session_state.st_result:

|

| 313 |

+

st.warning(

|

| 314 |

+

f"""**{STYLE_ATTRIBUTE_DATA.source_attribute.capitalize()} Input:** "{text_sample}" """

|

| 315 |

+

)

|

| 316 |

+

st.info(

|

| 317 |

+

f"""

|

| 318 |

+

**{STYLE_ATTRIBUTE_DATA.target_attribute.capitalize()} Suggestion:** "{st.session_state.st_result[0]}" """

|

| 319 |

+

)

|

| 320 |

+

db4 = DisableableButton(4, "Next")

|

| 321 |

+

db4.create_enabled_button()

|

| 322 |

+

|

| 323 |

+

## 5. EVALUATE THE SUGGESTION

|

| 324 |

+

if st.session_state.page_progress > 4:

|

| 325 |

+

db4.disable()

|

| 326 |

+

st.write("### 5. Evaluate the suggestion")

|

| 327 |

+

st.markdown(

|

| 328 |

+

"""

|

| 329 |

+

Blindly prompting a writer with style suggestions without first checking quality would make for a noisy, error-prone product

|

| 330 |

+

with a poor user experience. Ultimately, we only want to suggest high quality edits. But what makes for a suggestion-worthy edit?

|

| 331 |

+

|

| 332 |

+

A comprehensive quality evaluation for text style transfer output should consider three criteria:

|

| 333 |

+

1. **Style strength** - To what degree does the generated text achieve the target style?

|

| 334 |

+

2. **Content preservation** - To what degree does the generated text retain the semantic meaning of the source text?

|

| 335 |

+

3. **Fluency** - To what degree does the generated text appear as if it were produced naturally by a human?

|

| 336 |

+

|

| 337 |

+

Below, we apply automated evaluation metrics - _Style Transfer Intensity (STI)_ & _Content Preservation Score (CPS)_ - to

|

| 338 |

+

measure the first two of these criteria by comparing the input text to the generated suggestion.

|

| 339 |

+

"""

|

| 340 |

+

)

|

| 341 |

+

|

| 342 |

+

with st.spinner("Evaluating text style transfer, hang tight!"):

|

| 343 |

+

|

| 344 |

+

sti = get_sti_metric(

|

| 345 |

+

input_text=text_sample,

|

| 346 |

+

output_text=st.session_state.st_result[0],

|

| 347 |

+

style_data=STYLE_ATTRIBUTE_DATA,

|

| 348 |

+

)

|

| 349 |

+

cps = get_cps_metric(

|

| 350 |

+

input_text=text_sample,

|

| 351 |

+

output_text=st.session_state.st_result[0],

|

| 352 |

+

style_data=STYLE_ATTRIBUTE_DATA,

|

| 353 |

+

)

|

| 354 |

+

|

| 355 |

+

st.markdown(

|

| 356 |

+

"""<hr style="height:2px;border:none;color:#333;background-color:#333;" /> """,

|

| 357 |

+

unsafe_allow_html=True,

|

| 358 |

+

)

|

| 359 |

+

|

| 360 |

+

col5_1, col5_2, col5_3 = st.columns([3, 1, 3])

|

| 361 |

+

|

| 362 |

+

with col5_1:

|

| 363 |

+

st.metric(

|

| 364 |

+

label="Style Transfer Intensity (STI)",

|

| 365 |

+

value=f"{round(sti[0]*100,2)}%",

|

| 366 |

+

)

|

| 367 |

+

st.caption(

|

| 368 |

+

f"""

|

| 369 |

+

STI compares the style class distributions (determined by the [style classifier]({STYLE_ATTRIBUTE_DATA.build_model_url("cls")}))

|

| 370 |

+

between the input text and generated suggestion using Earth Mover's Distance. Therefore, STI can be thought of as the percentage of the possible target style distribution

|

| 371 |

+

that was captured during the transfer.

|

| 372 |

+

"""

|

| 373 |

+

)

|

| 374 |

+

|

| 375 |

+

with col5_3:

|

| 376 |

+

st.metric(

|

| 377 |

+

label="Content Preservation Score (CPS)",

|

| 378 |

+

value=f"{round(cps[0]*100,2)}%",

|

| 379 |

+

)

|

| 380 |

+

st.caption(

|

| 381 |

+

f"""

|

| 382 |

+

CPS compares the latent space embeddings (determined by [SentenceBERT]({STYLE_ATTRIBUTE_DATA.build_model_url("sbert")}))

|

| 383 |

+

between the input text and generated suggestion using cosine similarity. Therefore, CPS can be thought of as the percentage of content that was perserved

|

| 384 |

+

during the style transfer.

|

| 385 |

+

"""

|

| 386 |

+

)

|

apps/app_utils.py

ADDED

|

@@ -0,0 +1,262 @@

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

| 1 |

+

# ###########################################################################

|

| 2 |

+

#

|

| 3 |

+

# CLOUDERA APPLIED MACHINE LEARNING PROTOTYPE (AMP)

|

| 4 |

+

# (C) Cloudera, Inc. 2022

|

| 5 |

+

# All rights reserved.

|

| 6 |

+

#

|

| 7 |

+

# Applicable Open Source License: Apache 2.0

|

| 8 |

+

#

|

| 9 |

+

# NOTE: Cloudera open source products are modular software products

|

| 10 |

+

# made up of hundreds of individual components, each of which was

|

| 11 |

+

# individually copyrighted. Each Cloudera open source product is a

|

| 12 |

+

# collective work under U.S. Copyright Law. Your license to use the

|

| 13 |

+

# collective work is as provided in your written agreement with

|

| 14 |

+

# Cloudera. Used apart from the collective work, this file is

|

| 15 |

+

# licensed for your use pursuant to the open source license

|

| 16 |

+

# identified above.

|

| 17 |

+

#

|

| 18 |

+

# This code is provided to you pursuant a written agreement with

|

| 19 |

+

# (i) Cloudera, Inc. or (ii) a third-party authorized to distribute

|

| 20 |

+

# this code. If you do not have a written agreement with Cloudera nor

|

| 21 |

+

# with an authorized and properly licensed third party, you do not

|

| 22 |

+

# have any rights to access nor to use this code.

|

| 23 |

+

#

|

| 24 |

+

# Absent a written agreement with Cloudera, Inc. (“Cloudera”) to the

|

| 25 |

+

# contrary, A) CLOUDERA PROVIDES THIS CODE TO YOU WITHOUT WARRANTIES OF ANY

|

| 26 |

+

# KIND; (B) CLOUDERA DISCLAIMS ANY AND ALL EXPRESS AND IMPLIED

|

| 27 |

+

# WARRANTIES WITH RESPECT TO THIS CODE, INCLUDING BUT NOT LIMITED TO

|

| 28 |

+

# IMPLIED WARRANTIES OF TITLE, NON-INFRINGEMENT, MERCHANTABILITY AND

|

| 29 |

+

# FITNESS FOR A PARTICULAR PURPOSE; (C) CLOUDERA IS NOT LIABLE TO YOU,

|

| 30 |

+

# AND WILL NOT DEFEND, INDEMNIFY, NOR HOLD YOU HARMLESS FOR ANY CLAIMS

|

| 31 |

+

# ARISING FROM OR RELATED TO THE CODE; AND (D)WITH RESPECT TO YOUR EXERCISE

|

| 32 |

+

# OF ANY RIGHTS GRANTED TO YOU FOR THE CODE, CLOUDERA IS NOT LIABLE FOR ANY

|

| 33 |

+

# DIRECT, INDIRECT, INCIDENTAL, SPECIAL, EXEMPLARY, PUNITIVE OR

|

| 34 |

+

# CONSEQUENTIAL DAMAGES INCLUDING, BUT NOT LIMITED TO, DAMAGES

|

| 35 |

+

# RELATED TO LOST REVENUE, LOST PROFITS, LOSS OF INCOME, LOSS OF

|

| 36 |

+

# BUSINESS ADVANTAGE OR UNAVAILABILITY, OR LOSS OR CORRUPTION OF

|

| 37 |

+

# DATA.

|

| 38 |

+

#

|

| 39 |

+

# ###########################################################################

|

| 40 |

+

|

| 41 |

+

from typing import List

|

| 42 |

+

|

| 43 |