Upload folder using huggingface_hub

This view is limited to 50 files because it contains too many changes.

- .gitignore +8 -0

- LICENSE +333 -0

- README.md +109 -8

- __pycache__/api.cpython-311.pyc +0 -0

- __pycache__/api.cpython-39.pyc +0 -0

- __pycache__/attentions.cpython-311.pyc +0 -0

- __pycache__/attentions.cpython-39.pyc +0 -0

- __pycache__/commons.cpython-311.pyc +0 -0

- __pycache__/commons.cpython-39.pyc +0 -0

- __pycache__/mel_processing.cpython-311.pyc +0 -0

- __pycache__/mel_processing.cpython-39.pyc +0 -0

- __pycache__/models.cpython-311.pyc +0 -0

- __pycache__/models.cpython-39.pyc +0 -0

- __pycache__/modules.cpython-311.pyc +0 -0

- __pycache__/modules.cpython-39.pyc +0 -0

- __pycache__/se_extractor.cpython-311.pyc +0 -0

- __pycache__/se_extractor.cpython-39.pyc +0 -0

- __pycache__/transforms.cpython-311.pyc +0 -0

- __pycache__/transforms.cpython-39.pyc +0 -0

- __pycache__/utils.cpython-311.pyc +0 -0

- __pycache__/utils.cpython-39.pyc +0 -0

- api.py +201 -0

- attentions.py +465 -0

- checkpoints/base_speakers/EN/checkpoint.pth +3 -0

- checkpoints/base_speakers/EN/config.json +145 -0

- checkpoints/base_speakers/EN/en_default_se.pth +3 -0

- checkpoints/base_speakers/EN/en_style_se.pth +3 -0

- checkpoints/base_speakers/ZH/checkpoint.pth +3 -0

- checkpoints/base_speakers/ZH/config.json +137 -0

- checkpoints/base_speakers/ZH/zh_default_se.pth +3 -0

- checkpoints/converter/checkpoint.pth +3 -0

- checkpoints/converter/config.json +57 -0

- checkpoints_1226.zip +3 -0

- commons.py +160 -0

- demo_part1.ipynb +236 -0

- demo_part2.ipynb +195 -0

- mel_processing.py +183 -0

- models.py +497 -0

- modules.py +598 -0

- openvoice_app.py +307 -0

- requirements.txt +15 -0

- resources/demo_speaker0.mp3 +0 -0

- resources/demo_speaker1.mp3 +0 -0

- resources/demo_speaker2.mp3 +0 -0

- resources/example_reference.mp3 +0 -0

- resources/framework-ipa.png +0 -0

- resources/framework.jpg +0 -0

- resources/lepton.jpg +0 -0

- resources/myshell.jpg +0 -0

- resources/openvoicelogo.jpg +0 -0

.gitignore

ADDED

|

@@ -0,0 +1,8 @@

|

| 1 |

+

__pycache__/

|

| 2 |

+

.ipynb_checkpoints/

|

| 3 |

+

processed

|

| 4 |

+

outputs

|

| 5 |

+

checkpoints

|

| 6 |

+

trash

|

| 7 |

+

examples*

|

| 8 |

+

.env

|

LICENSE

ADDED

|

@@ -0,0 +1,333 @@

|

| 1 |

+

Creative Commons Attribution-NonCommercial 4.0 International Public

|

| 2 |

+

License

|

| 3 |

+

|

| 4 |

+

By exercising the Licensed Rights (defined below), You accept and agree

|

| 5 |

+

to be bound by the terms and conditions of this Creative Commons

|

| 6 |

+

Attribution-NonCommercial 4.0 International Public License ("Public

|

| 7 |

+

License"). To the extent this Public License may be interpreted as a

|

| 8 |

+

contract, You are granted the Licensed Rights in consideration of Your

|

| 9 |

+

acceptance of these terms and conditions, and the Licensor grants You

|

| 10 |

+

such rights in consideration of benefits the Licensor receives from

|

| 11 |

+

making the Licensed Material available under these terms and

|

| 12 |

+

conditions.

|

| 13 |

+

|

| 14 |

+

|

| 15 |

+

Section 1 -- Definitions.

|

| 16 |

+

|

| 17 |

+

a. Adapted Material means material subject to Copyright and Similar

|

| 18 |

+

Rights that is derived from or based upon the Licensed Material

|

| 19 |

+

and in which the Licensed Material is translated, altered,

|

| 20 |

+

arranged, transformed, or otherwise modified in a manner requiring

|

| 21 |

+

permission under the Copyright and Similar Rights held by the

|

| 22 |

+

Licensor. For purposes of this Public License, where the Licensed

|

| 23 |

+

Material is a musical work, performance, or sound recording,

|

| 24 |

+

Adapted Material is always produced where the Licensed Material is

|

| 25 |

+

synched in timed relation with a moving image.

|

| 26 |

+

|

| 27 |

+

b. Adapter's License means the license You apply to Your Copyright

|

| 28 |

+

and Similar Rights in Your contributions to Adapted Material in

|

| 29 |

+

accordance with the terms and conditions of this Public License.

|

| 30 |

+

|

| 31 |

+

c. Copyright and Similar Rights means copyright and/or similar rights

|

| 32 |

+

closely related to copyright including, without limitation,

|

| 33 |

+

performance, broadcast, sound recording, and Sui Generis Database

|

| 34 |

+

Rights, without regard to how the rights are labeled or

|

| 35 |

+

categorized. For purposes of this Public License, the rights

|

| 36 |

+

specified in Section 2(b)(1)-(2) are not Copyright and Similar

|

| 37 |

+

Rights.

|

| 38 |

+

d. Effective Technological Measures means those measures that, in the

|

| 39 |

+

absence of proper authority, may not be circumvented under laws

|

| 40 |

+

fulfilling obligations under Article 11 of the WIPO Copyright

|

| 41 |

+

Treaty adopted on December 20, 1996, and/or similar international

|

| 42 |

+

agreements.

|

| 43 |

+

|

| 44 |

+

e. Exceptions and Limitations means fair use, fair dealing, and/or

|

| 45 |

+

any other exception or limitation to Copyright and Similar Rights

|

| 46 |

+

that applies to Your use of the Licensed Material.

|

| 47 |

+

|

| 48 |

+

f. Licensed Material means the artistic or literary work, database,

|

| 49 |

+

or other material to which the Licensor applied this Public

|

| 50 |

+

License.

|

| 51 |

+

|

| 52 |

+

g. Licensed Rights means the rights granted to You subject to the

|

| 53 |

+

terms and conditions of this Public License, which are limited to

|

| 54 |

+

all Copyright and Similar Rights that apply to Your use of the

|

| 55 |

+

Licensed Material and that the Licensor has authority to license.

|

| 56 |

+

|

| 57 |

+

h. Licensor means the individual(s) or entity(ies) granting rights

|

| 58 |

+

under this Public License.

|

| 59 |

+

|

| 60 |

+

i. NonCommercial means not primarily intended for or directed towards

|

| 61 |

+

commercial advantage or monetary compensation. For purposes of

|

| 62 |

+

this Public License, the exchange of the Licensed Material for

|

| 63 |

+

other material subject to Copyright and Similar Rights by digital

|

| 64 |

+

file-sharing or similar means is NonCommercial provided there is

|

| 65 |

+

no payment of monetary compensation in connection with the

|

| 66 |

+

exchange.

|

| 67 |

+

|

| 68 |

+

j. Share means to provide material to the public by any means or

|

| 69 |

+

process that requires permission under the Licensed Rights, such

|

| 70 |

+

as reproduction, public display, public performance, distribution,

|

| 71 |

+

dissemination, communication, or importation, and to make material

|

| 72 |

+

available to the public including in ways that members of the

|

| 73 |

+

public may access the material from a place and at a time

|

| 74 |

+

individually chosen by them.

|

| 75 |

+

|

| 76 |

+

k. Sui Generis Database Rights means rights other than copyright

|

| 77 |

+

resulting from Directive 96/9/EC of the European Parliament and of

|

| 78 |

+

the Council of 11 March 1996 on the legal protection of databases,

|

| 79 |

+

as amended and/or succeeded, as well as other essentially

|

| 80 |

+

equivalent rights anywhere in the world.

|

| 81 |

+

|

| 82 |

+

l. You means the individual or entity exercising the Licensed Rights

|

| 83 |

+

under this Public License. Your has a corresponding meaning.

|

| 84 |

+

|

| 85 |

+

|

| 86 |

+

Section 2 -- Scope.

|

| 87 |

+

|

| 88 |

+

a. License grant.

|

| 89 |

+

|

| 90 |

+

1. Subject to the terms and conditions of this Public License,

|

| 91 |

+

the Licensor hereby grants You a worldwide, royalty-free,

|

| 92 |

+

non-sublicensable, non-exclusive, irrevocable license to

|

| 93 |

+

exercise the Licensed Rights in the Licensed Material to:

|

| 94 |

+

|

| 95 |

+

a. reproduce and Share the Licensed Material, in whole or

|

| 96 |

+

in part, for NonCommercial purposes only; and

|

| 97 |

+

|

| 98 |

+

b. produce, reproduce, and Share Adapted Material for

|

| 99 |

+

NonCommercial purposes only.

|

| 100 |

+

|

| 101 |

+

2. Exceptions and Limitations. For the avoidance of doubt, where

|

| 102 |

+

Exceptions and Limitations apply to Your use, this Public

|

| 103 |

+

License does not apply, and You do not need to comply with

|

| 104 |

+

its terms and conditions.

|

| 105 |

+

|

| 106 |

+

3. Term. The term of this Public License is specified in Section

|

| 107 |

+

6(a).

|

| 108 |

+

|

| 109 |

+

4. Media and formats; technical modifications allowed. The

|

| 110 |

+

Licensor authorizes You to exercise the Licensed Rights in

|

| 111 |

+

all media and formats whether now known or hereafter created,

|

| 112 |

+

and to make technical modifications necessary to do so. The

|

| 113 |

+

Licensor waives and/or agrees not to assert any right or

|

| 114 |

+

authority to forbid You from making technical modifications

|

| 115 |

+

necessary to exercise the Licensed Rights, including

|

| 116 |

+

technical modifications necessary to circumvent Effective

|

| 117 |

+

Technological Measures. For purposes of this Public License,

|

| 118 |

+

simply making modifications authorized by this Section 2(a)

|

| 119 |

+

(4) never produces Adapted Material.

|

| 120 |

+

|

| 121 |

+

5. Downstream recipients.

|

| 122 |

+

|

| 123 |

+

a. Offer from the Licensor -- Licensed Material. Every

|

| 124 |

+

recipient of the Licensed Material automatically

|

| 125 |

+

receives an offer from the Licensor to exercise the

|

| 126 |

+

Licensed Rights under the terms and conditions of this

|

| 127 |

+

Public License.

|

| 128 |

+

|

| 129 |

+

b. No downstream restrictions. You may not offer or impose

|

| 130 |

+

any additional or different terms or conditions on, or

|

| 131 |

+

apply any Effective Technological Measures to, the

|

| 132 |

+

Licensed Material if doing so restricts exercise of the

|

| 133 |

+

Licensed Rights by any recipient of the Licensed

|

| 134 |

+

Material.

|

| 135 |

+

|

| 136 |

+

6. No endorsement. Nothing in this Public License constitutes or

|

| 137 |

+

may be construed as permission to assert or imply that You

|

| 138 |

+

are, or that Your use of the Licensed Material is, connected

|

| 139 |

+

with, or sponsored, endorsed, or granted official status by,

|

| 140 |

+

the Licensor or others designated to receive attribution as

|

| 141 |

+

provided in Section 3(a)(1)(A)(i).

|

| 142 |

+

|

| 143 |

+

b. Other rights.

|

| 144 |

+

|

| 145 |

+

1. Moral rights, such as the right of integrity, are not

|

| 146 |

+

licensed under this Public License, nor are publicity,

|

| 147 |

+

privacy, and/or other similar personality rights; however, to

|

| 148 |

+

the extent possible, the Licensor waives and/or agrees not to

|

| 149 |

+

assert any such rights held by the Licensor to the limited

|

| 150 |

+

extent necessary to allow You to exercise the Licensed

|

| 151 |

+

Rights, but not otherwise.

|

| 152 |

+

|

| 153 |

+

2. Patent and trademark rights are not licensed under this

|

| 154 |

+

Public License.

|

| 155 |

+

|

| 156 |

+

3. To the extent possible, the Licensor waives any right to

|

| 157 |

+

collect royalties from You for the exercise of the Licensed

|

| 158 |

+

Rights, whether directly or through a collecting society

|

| 159 |

+

under any voluntary or waivable statutory or compulsory

|

| 160 |

+

licensing scheme. In all other cases the Licensor expressly

|

| 161 |

+

reserves any right to collect such royalties, including when

|

| 162 |

+

the Licensed Material is used other than for NonCommercial

|

| 163 |

+

purposes.

|

| 164 |

+

|

| 165 |

+

|

| 166 |

+

Section 3 -- License Conditions.

|

| 167 |

+

|

| 168 |

+

Your exercise of the Licensed Rights is expressly made subject to the

|

| 169 |

+

following conditions.

|

| 170 |

+

|

| 171 |

+

a. Attribution.

|

| 172 |

+

|

| 173 |

+

1. If You Share the Licensed Material (including in modified

|

| 174 |

+

form), You must:

|

| 175 |

+

|

| 176 |

+

a. retain the following if it is supplied by the Licensor

|

| 177 |

+

with the Licensed Material:

|

| 178 |

+

|

| 179 |

+

i. identification of the creator(s) of the Licensed

|

| 180 |

+

Material and any others designated to receive

|

| 181 |

+

attribution, in any reasonable manner requested by

|

| 182 |

+

the Licensor (including by pseudonym if

|

| 183 |

+

designated);

|

| 184 |

+

|

| 185 |

+

ii. a copyright notice;

|

| 186 |

+

|

| 187 |

+

iii. a notice that refers to this Public License;

|

| 188 |

+

|

| 189 |

+

iv. a notice that refers to the disclaimer of

|

| 190 |

+

warranties;

|

| 191 |

+

|

| 192 |

+

v. a URI or hyperlink to the Licensed Material to the

|

| 193 |

+

extent reasonably practicable;

|

| 194 |

+

|

| 195 |

+

b. indicate if You modified the Licensed Material and

|

| 196 |

+

retain an indication of any previous modifications; and

|

| 197 |

+

|

| 198 |

+

c. indicate the Licensed Material is licensed under this

|

| 199 |

+

Public License, and include the text of, or the URI or

|

| 200 |

+

hyperlink to, this Public License.

|

| 201 |

+

|

| 202 |

+

2. You may satisfy the conditions in Section 3(a)(1) in any

|

| 203 |

+

reasonable manner based on the medium, means, and context in

|

| 204 |

+

which You Share the Licensed Material. For example, it may be

|

| 205 |

+

reasonable to satisfy the conditions by providing a URI or

|

| 206 |

+

hyperlink to a resource that includes the required

|

| 207 |

+

information.

|

| 208 |

+

|

| 209 |

+

3. If requested by the Licensor, You must remove any of the

|

| 210 |

+

information required by Section 3(a)(1)(A) to the extent

|

| 211 |

+

reasonably practicable.

|

| 212 |

+

|

| 213 |

+

4. If You Share Adapted Material You produce, the Adapter's

|

| 214 |

+

License You apply must not prevent recipients of the Adapted

|

| 215 |

+

Material from complying with this Public License.

|

| 216 |

+

|

| 217 |

+

|

| 218 |

+

Section 4 -- Sui Generis Database Rights.

|

| 219 |

+

|

| 220 |

+

Where the Licensed Rights include Sui Generis Database Rights that

|

| 221 |

+

apply to Your use of the Licensed Material:

|

| 222 |

+

|

| 223 |

+

a. for the avoidance of doubt, Section 2(a)(1) grants You the right

|

| 224 |

+

to extract, reuse, reproduce, and Share all or a substantial

|

| 225 |

+

portion of the contents of the database for NonCommercial purposes

|

| 226 |

+

only;

|

| 227 |

+

|

| 228 |

+

b. if You include all or a substantial portion of the database

|

| 229 |

+

contents in a database in which You have Sui Generis Database

|

| 230 |

+

Rights, then the database in which You have Sui Generis Database

|

| 231 |

+

Rights (but not its individual contents) is Adapted Material; and

|

| 232 |

+

|

| 233 |

+

c. You must comply with the conditions in Section 3(a) if You Share

|

| 234 |

+

all or a substantial portion of the contents of the database.

|

| 235 |

+

|

| 236 |

+

For the avoidance of doubt, this Section 4 supplements and does not

|

| 237 |

+

replace Your obligations under this Public License where the Licensed

|

| 238 |

+

Rights include other Copyright and Similar Rights.

|

| 239 |

+

|

| 240 |

+

|

| 241 |

+

Section 5 -- Disclaimer of Warranties and Limitation of Liability.

|

| 242 |

+

|

| 243 |

+

a. UNLESS OTHERWISE SEPARATELY UNDERTAKEN BY THE LICENSOR, TO THE

|

| 244 |

+

EXTENT POSSIBLE, THE LICENSOR OFFERS THE LICENSED MATERIAL AS-IS

|

| 245 |

+

AND AS-AVAILABLE, AND MAKES NO REPRESENTATIONS OR WARRANTIES OF

|

| 246 |

+

ANY KIND CONCERNING THE LICENSED MATERIAL, WHETHER EXPRESS,

|

| 247 |

+

IMPLIED, STATUTORY, OR OTHER. THIS INCLUDES, WITHOUT LIMITATION,

|

| 248 |

+

WARRANTIES OF TITLE, MERCHANTABILITY, FITNESS FOR A PARTICULAR

|

| 249 |

+

PURPOSE, NON-INFRINGEMENT, ABSENCE OF LATENT OR OTHER DEFECTS,

|

| 250 |

+

ACCURACY, OR THE PRESENCE OR ABSENCE OF ERRORS, WHETHER OR NOT

|

| 251 |

+

KNOWN OR DISCOVERABLE. WHERE DISCLAIMERS OF WARRANTIES ARE NOT

|

| 252 |

+

ALLOWED IN FULL OR IN PART, THIS DISCLAIMER MAY NOT APPLY TO YOU.

|

| 253 |

+

|

| 254 |

+

b. TO THE EXTENT POSSIBLE, IN NO EVENT WILL THE LICENSOR BE LIABLE

|

| 255 |

+

TO YOU ON ANY LEGAL THEORY (INCLUDING, WITHOUT LIMITATION,

|

| 256 |

+

NEGLIGENCE) OR OTHERWISE FOR ANY DIRECT, SPECIAL, INDIRECT,

|

| 257 |

+

INCIDENTAL, CONSEQUENTIAL, PUNITIVE, EXEMPLARY, OR OTHER LOSSES,

|

| 258 |

+

COSTS, EXPENSES, OR DAMAGES ARISING OUT OF THIS PUBLIC LICENSE OR

|

| 259 |

+

USE OF THE LICENSED MATERIAL, EVEN IF THE LICENSOR HAS BEEN

|

| 260 |

+

ADVISED OF THE POSSIBILITY OF SUCH LOSSES, COSTS, EXPENSES, OR

|

| 261 |

+

DAMAGES. WHERE A LIMITATION OF LIABILITY IS NOT ALLOWED IN FULL OR

|

| 262 |

+

IN PART, THIS LIMITATION MAY NOT APPLY TO YOU.

|

| 263 |

+

|

| 264 |

+

c. The disclaimer of warranties and limitation of liability provided

|

| 265 |

+

above shall be interpreted in a manner that, to the extent

|

| 266 |

+

possible, most closely approximates an absolute disclaimer and

|

| 267 |

+

waiver of all liability.

|

| 268 |

+

|

| 269 |

+

|

| 270 |

+

Section 6 -- Term and Termination.

|

| 271 |

+

|

| 272 |

+

a. This Public License applies for the term of the Copyright and

|

| 273 |

+

Similar Rights licensed here. However, if You fail to comply with

|

| 274 |

+

this Public License, then Your rights under this Public License

|

| 275 |

+

terminate automatically.

|

| 276 |

+

|

| 277 |

+

b. Where Your right to use the Licensed Material has terminated under

|

| 278 |

+

Section 6(a), it reinstates:

|

| 279 |

+

|

| 280 |

+

1. automatically as of the date the violation is cured, provided

|

| 281 |

+

it is cured within 30 days of Your discovery of the

|

| 282 |

+

violation; or

|

| 283 |

+

|

| 284 |

+

2. upon express reinstatement by the Licensor.

|

| 285 |

+

|

| 286 |

+

For the avoidance of doubt, this Section 6(b) does not affect any

|

| 287 |

+

right the Licensor may have to seek remedies for Your violations

|

| 288 |

+

of this Public License.

|

| 289 |

+

|

| 290 |

+

c. For the avoidance of doubt, the Licensor may also offer the

|

| 291 |

+

Licensed Material under separate terms or conditions or stop

|

| 292 |

+

distributing the Licensed Material at any time; however, doing so

|

| 293 |

+

will not terminate this Public License.

|

| 294 |

+

|

| 295 |

+

d. Sections 1, 5, 6, 7, and 8 survive termination of this Public

|

| 296 |

+

License.

|

| 297 |

+

|

| 298 |

+

|

| 299 |

+

Section 7 -- Other Terms and Conditions.

|

| 300 |

+

|

| 301 |

+

a. The Licensor shall not be bound by any additional or different

|

| 302 |

+

terms or conditions communicated by You unless expressly agreed.

|

| 303 |

+

|

| 304 |

+

b. Any arrangements, understandings, or agreements regarding the

|

| 305 |

+

Licensed Material not stated herein are separate from and

|

| 306 |

+

independent of the terms and conditions of this Public License.

|

| 307 |

+

|

| 308 |

+

|

| 309 |

+

Section 8 -- Interpretation.

|

| 310 |

+

|

| 311 |

+

a. For the avoidance of doubt, this Public License does not, and

|

| 312 |

+

shall not be interpreted to, reduce, limit, restrict, or impose

|

| 313 |

+

conditions on any use of the Licensed Material that could lawfully

|

| 314 |

+

be made without permission under this Public License.

|

| 315 |

+

|

| 316 |

+

b. To the extent possible, if any provision of this Public License is

|

| 317 |

+

deemed unenforceable, it shall be automatically reformed to the

|

| 318 |

+

minimum extent necessary to make it enforceable. If the provision

|

| 319 |

+

cannot be reformed, it shall be severed from this Public License

|

| 320 |

+

without affecting the enforceability of the remaining terms and

|

| 321 |

+

conditions.

|

| 322 |

+

|

| 323 |

+

c. No term or condition of this Public License will be waived and no

|

| 324 |

+

failure to comply consented to unless expressly agreed to by the

|

| 325 |

+

Licensor.

|

| 326 |

+

|

| 327 |

+

d. Nothing in this Public License constitutes or may be interpreted

|

| 328 |

+

as a limitation upon, or waiver of, any privileges and immunities

|

| 329 |

+

that apply to the Licensor or You, including from the legal

|

| 330 |

+

processes of any jurisdiction or authority.

|

| 331 |

+

|

| 332 |

+

=======================================================================

|

| 333 |

+

|

README.md

CHANGED

|

@@ -1,12 +1,113 @@

|

|

| 1 |

---

|

| 2 |

-

title: OpenVoice

|

| 3 |

-

|

| 4 |

-

colorFrom: red

|

| 5 |

-

colorTo: purple

|

| 6 |

sdk: gradio

|

| 7 |

-

sdk_version:

|

| 8 |

-

app_file: app.py

|

| 9 |

-

pinned: false

|

| 10 |

---

|

| 11 |

|

| 12 |

-

|

| 1 |

---

|

| 2 |

+

title: OpenVoice-main

|

| 3 |

+

app_file: openvoice_app.py

|

| 4 |

sdk: gradio

|

| 5 |

+

sdk_version: 3.50.2

|

| 6 |

---

|

| 7 |

+

<div align="center">

|

| 8 |

+

<div> </div>

|

| 9 |

+

<img src="resources/openvoicelogo.jpg" width="400"/>

|

| 10 |

|

| 11 |

+

[Paper](https://arxiv.org/abs/2312.01479) |

|

| 12 |

+

[Website](https://research.myshell.ai/open-voice)

|

| 13 |

+

|

| 14 |

+

</div>

|

| 15 |

+

|

| 16 |

+

## Join Our Community

|

| 17 |

+

|

| 18 |

+

Join our [Discord community](https://discord.gg/myshell) and select the `Developer` role upon joining to gain exclusive access to our developer-only channel! Don't miss out on valuable discussions and collaboration opportunities.

|

| 19 |

+

|

| 20 |

+

## Introduction

|

| 21 |

+

As we detailed in our [paper](https://arxiv.org/abs/2312.01479) and [website](https://research.myshell.ai/open-voice), the advantages of OpenVoice are three-fold:

|

| 22 |

+

|

| 23 |

+

**1. Accurate Tone Color Cloning.**

|

| 24 |

+

OpenVoice can accurately clone the reference tone color and generate speech in multiple languages and accents.

|

| 25 |

+

|

| 26 |

+

**2. Flexible Voice Style Control.**

|

| 27 |

+

OpenVoice enables granular control over voice styles, such as emotion and accent, as well as other style parameters including rhythm, pauses, and intonation.

|

| 28 |

+

|

| 29 |

+

**3. Zero-shot Cross-lingual Voice Cloning.**

|

| 30 |

+

Neither the language of the generated speech nor the language of the reference speech needs to be present in the massive-speaker multi-lingual training dataset.

|

| 31 |

+

|

| 32 |

+

[Video](https://github.com/myshell-ai/OpenVoice/assets/40556743/3cba936f-82bf-476c-9e52-09f0f417bb2f)

|

| 33 |

+

|

| 34 |

+

<div align="center">

|

| 35 |

+

<div> </div>

|

| 36 |

+

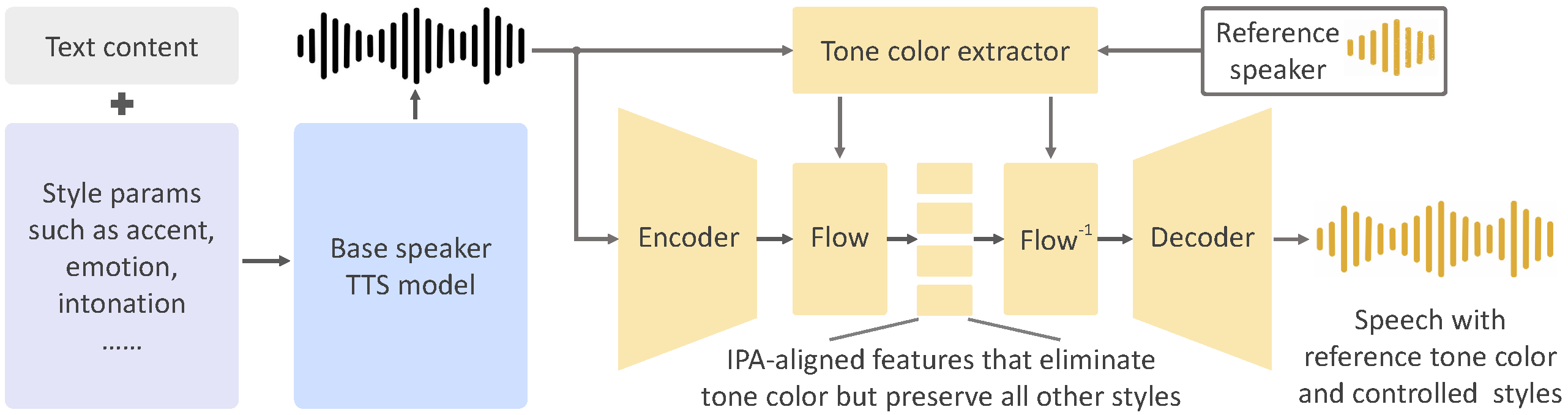

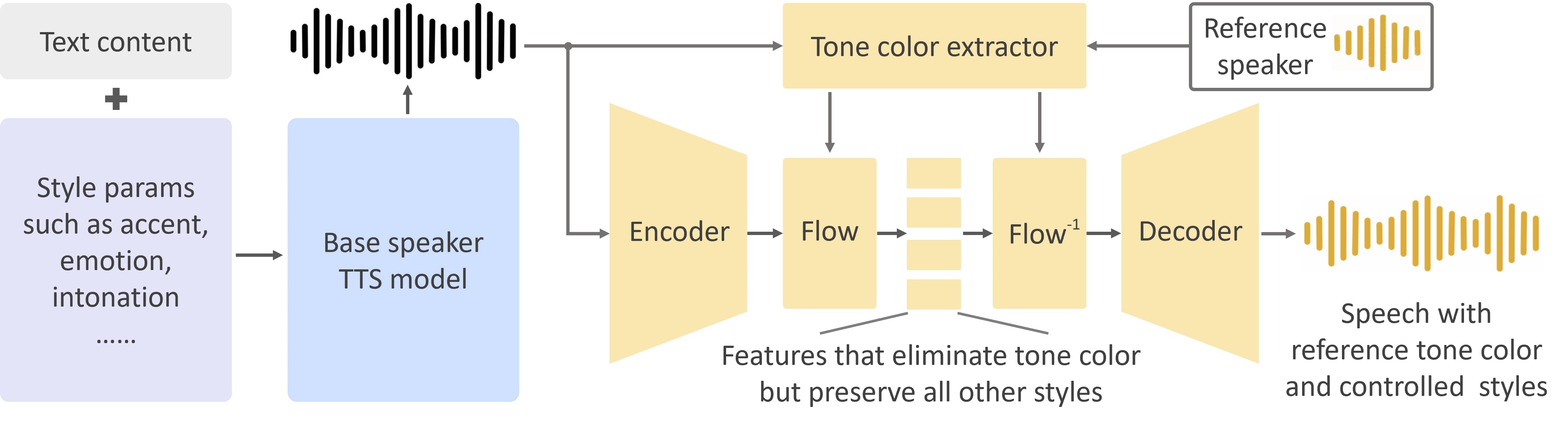

<img src="resources/framework-ipa.png" width="800"/>

|

| 37 |

+

<div> </div>

|

| 38 |

+

</div>

|

| 39 |

+

|

| 40 |

+

OpenVoice has been powering the instant voice cloning capability of [myshell.ai](https://app.myshell.ai/explore) since May 2023. As of Nov 2023, the voice cloning model had been used tens of millions of times by users worldwide and had witnessed explosive user growth on the platform.

|

| 41 |

+

|

| 42 |

+

## Main Contributors

|

| 43 |

+

|

| 44 |

+

- [Zengyi Qin](https://www.qinzy.tech) at MIT and MyShell

|

| 45 |

+

- [Wenliang Zhao](https://wl-zhao.github.io) at Tsinghua University

|

| 46 |

+

- [Xumin Yu](https://yuxumin.github.io) at Tsinghua University

|

| 47 |

+

- [Ethan Sun](https://twitter.com/ethan_myshell) at MyShell

|

| 48 |

+

|

| 49 |

+

## Live Demo

|

| 50 |

+

|

| 51 |

+

<div align="center">

|

| 52 |

+

<a href="https://www.lepton.ai/playground/openvoice"><img src="resources/lepton.jpg"></a>

|

| 53 |

+

|

| 54 |

+

<a href="https://app.myshell.ai/bot/z6Bvua/1702636181"><img src="resources/myshell.jpg"></a>

|

| 55 |

+

</div>

|

| 56 |

+

|

| 57 |

+

## Disclaimer

|

| 58 |

+

|

| 59 |

+

This is an implementation that approximates the performance of the internal voice clone technology of [myshell.ai](https://app.myshell.ai/explore). The online version in myshell.ai has better 1) audio quality, 2) voice cloning similarity, 3) speech naturalness and 4) computational efficiency.

|

| 60 |

+

|

| 61 |

+

## Installation

|

| 62 |

+

Clone this repo, and run

|

| 63 |

+

```

|

| 64 |

+

conda create -n openvoice python=3.9

|

| 65 |

+

conda activate openvoice

|

| 66 |

+

conda install pytorch==1.13.1 torchvision==0.14.1 torchaudio==0.13.1 pytorch-cuda=11.7 -c pytorch -c nvidia

|

| 67 |

+

pip install -r requirements.txt

|

| 68 |

+

```

|

| 69 |

+

Download the checkpoint from [here](https://myshell-public-repo-hosting.s3.amazonaws.com/checkpoints_1226.zip) and extract it to the `checkpoints` folder.

|
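The extraction step above can be sketched in Python. This is only an illustration under stated assumptions: the helper names `extract_checkpoints` and `missing_checkpoint_files` are hypothetical and not part of OpenVoice, and the expected paths are taken from the file list of this commit. The README does not say whether the archive already contains a top-level `checkpoints/` directory, so check the resulting layout after extracting.

```python
import zipfile
from pathlib import Path

# Relative paths taken from the file list of this commit (under checkpoints/).
EXPECTED_FILES = [
    "base_speakers/EN/checkpoint.pth",
    "base_speakers/EN/config.json",
    "converter/checkpoint.pth",
    "converter/config.json",
]


def extract_checkpoints(zip_path, dest_dir="checkpoints"):
    """Extract the archive into dest_dir and return the extracted file paths.

    Hypothetical helper: it does not try to guess or fix the archive layout.
    """
    dest = Path(dest_dir)
    dest.mkdir(parents=True, exist_ok=True)
    with zipfile.ZipFile(zip_path) as archive:
        archive.extractall(dest)
        # Skip directory entries; keep only regular files.
        names = [n for n in archive.namelist() if not n.endswith("/")]
    return sorted(dest / n for n in names)


def missing_checkpoint_files(root="checkpoints"):
    """Return the expected relative paths that do not exist under root."""
    base = Path(root)
    return [name for name in EXPECTED_FILES if not (base / name).is_file()]
```

After downloading the zip manually, `extract_checkpoints("checkpoints_1226.zip")` followed by `missing_checkpoint_files()` gives a quick check; a non-empty result may simply mean the archive nests its files one directory deeper than assumed here.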

| 70 |

+

|

| 71 |

+

## Usage

|

| 72 |

+

|

| 73 |

+

**1. Flexible Voice Style Control.**

|

| 74 |

+

Please see [`demo_part1.ipynb`](demo_part1.ipynb) for an example usage of how OpenVoice enables flexible style control over the cloned voice.

|

| 75 |

+

|

| 76 |

+

**2. Cross-Lingual Voice Cloning.**

|

| 77 |

+

Please see [`demo_part2.ipynb`](demo_part2.ipynb) for an example covering languages seen or unseen in the MSML training set.

|

| 78 |

+

|

| 79 |

+

**3. Gradio Demo.**

|

| 80 |

+

Launch a local gradio demo with [`python -m openvoice_app --share`](openvoice_app.py).

|

| 81 |

+

|

| 82 |

+

**4. Advanced Usage.**

|

| 83 |

+

The base speaker model can be replaced with any model (in any language and style) that the user prefers. Please use the `se_extractor.get_se` function as demonstrated in the demo to extract the tone color embedding for the new base speaker.

|

| 84 |

+

|

| 85 |

+

**5. Tips to Generate Natural Speech.**

|

| 86 |

+

Many single- or multi-speaker TTS methods that generate natural speech are readily available. By simply replacing the base speaker model with the model you prefer, you can push the speech naturalness to a level you desire.

|

| 87 |

+

|

| 88 |

+

## Roadmap

|

| 89 |

+

|

| 90 |

+

- [x] Inference code

|

| 91 |

+

- [x] Tone color converter model

|

| 92 |

+

- [x] Multi-style base speaker model

|

| 93 |

+

- [x] Multi-style and multi-lingual demo

|

| 94 |

+

- [x] Base speaker model in other languages

|

| 95 |

+

- [x] EN base speaker model with better naturalness

|

| 96 |

+

|

| 97 |

+

|

| 98 |

+

## Citation

|

| 99 |

+

```

|

| 100 |

+

@article{qin2023openvoice,

|

| 101 |

+

title={OpenVoice: Versatile Instant Voice Cloning},

|

| 102 |

+

author={Qin, Zengyi and Zhao, Wenliang and Yu, Xumin and Sun, Xin},

|

| 103 |

+

journal={arXiv preprint arXiv:2312.01479},

|

| 104 |

+

year={2023}

|

| 105 |

+

}

|

| 106 |

+

```

|

| 107 |

+

|

| 108 |

+

## License

|

| 109 |

+

This repository is licensed under a Creative Commons Attribution-NonCommercial 4.0 International License, which prohibits commercial usage. **MyShell reserves the ability to detect whether an audio clip was generated by OpenVoice**, regardless of whether a watermark was added.

|

| 110 |

+

|

| 111 |

+

|

| 112 |

+

## Acknowledgements

|

| 113 |

+

This implementation is based on several excellent projects, [TTS](https://github.com/coqui-ai/TTS), [VITS](https://github.com/jaywalnut310/vits), and [VITS2](https://github.com/daniilrobnikov/vits2). Thanks for their awesome work!

|

__pycache__/api.cpython-311.pyc

ADDED

|

Binary file (15.4 kB). View file

|

|

|

__pycache__/api.cpython-39.pyc

ADDED

|

Binary file (7.16 kB). View file

|

|

|

__pycache__/attentions.cpython-311.pyc

ADDED

|

Binary file (23.4 kB). View file

|

|

|

__pycache__/attentions.cpython-39.pyc

ADDED

|

Binary file (11.1 kB). View file

|

|

|

__pycache__/commons.cpython-311.pyc

ADDED

|

Binary file (10.3 kB). View file

|

|

|

__pycache__/commons.cpython-39.pyc

ADDED

|

Binary file (5.76 kB). View file

|

|

|

__pycache__/mel_processing.cpython-311.pyc

ADDED

|

Binary file (9.16 kB). View file

|

|

|

__pycache__/mel_processing.cpython-39.pyc

ADDED

|

Binary file (4.16 kB). View file

|

|

|

__pycache__/models.cpython-311.pyc

ADDED

|

Binary file (27.2 kB). View file

|

|

|

__pycache__/models.cpython-39.pyc

ADDED

|

Binary file (12.6 kB). View file

|

|

|

__pycache__/modules.cpython-311.pyc

ADDED

|

Binary file (27.1 kB). View file

|

|

|

__pycache__/modules.cpython-39.pyc

ADDED

|

Binary file (13 kB). View file

|

|

|

__pycache__/se_extractor.cpython-311.pyc

ADDED

|

Binary file (7.62 kB). View file

|

|

|

__pycache__/se_extractor.cpython-39.pyc

ADDED

|

Binary file (3.74 kB). View file

|

|

|

__pycache__/transforms.cpython-311.pyc

ADDED

|

Binary file (7.6 kB). View file

|

|

|

__pycache__/transforms.cpython-39.pyc

ADDED

|

Binary file (3.91 kB). View file

|

|

|

__pycache__/utils.cpython-311.pyc

ADDED

|

Binary file (11.1 kB). View file

|

|

|

__pycache__/utils.cpython-39.pyc

ADDED

|

Binary file (6.24 kB). View file

|

|

|

api.py

ADDED

|

@@ -0,0 +1,201 @@

|


| 1 |

+

import torch

|

| 2 |

+

import numpy as np

|

| 3 |

+

import re

|

| 4 |

+

import soundfile

|

| 5 |

+

import utils

|

| 6 |

+

import commons

|

| 7 |

+

import os

|

| 8 |

+

import librosa

|

| 9 |

+

from text import text_to_sequence

|

| 10 |

+

from mel_processing import spectrogram_torch

|

| 11 |

+

from models import SynthesizerTrn

|

| 12 |

+

|

| 13 |

+

|

| 14 |

+

class OpenVoiceBaseClass(object):

|

| 15 |

+

def __init__(self,

|

| 16 |

+

config_path,

|

| 17 |

+

device='cuda:0'):

|

| 18 |

+

if 'cuda' in device:

|

| 19 |

+

assert torch.cuda.is_available()

|

| 20 |

+

|

| 21 |

+

hps = utils.get_hparams_from_file(config_path)

|

| 22 |

+

|

| 23 |

+

model = SynthesizerTrn(

|

| 24 |

+

len(getattr(hps, 'symbols', [])),

|

| 25 |

+

hps.data.filter_length // 2 + 1,

|

| 26 |

+

n_speakers=hps.data.n_speakers,

|

| 27 |

+

**hps.model,

|

| 28 |

+

).to(device)

|

| 29 |

+

|

| 30 |

+

model.eval()

|

| 31 |

+

self.model = model

|

| 32 |

+

self.hps = hps

|

| 33 |

+

self.device = device

|

| 34 |

+

|

| 35 |

+

def load_ckpt(self, ckpt_path):

|

| 36 |

+

checkpoint_dict = torch.load(ckpt_path, map_location=torch.device(self.device))

|

| 37 |

+

a, b = self.model.load_state_dict(checkpoint_dict['model'], strict=False)

|

| 38 |

+

print("Loaded checkpoint '{}'".format(ckpt_path))

|

| 39 |

+

print('missing/unexpected keys:', a, b)

|

| 40 |

+

|

| 41 |

+

|

| 42 |

+

class BaseSpeakerTTS(OpenVoiceBaseClass):

|

| 43 |

+

language_marks = {

|

| 44 |

+

"english": "EN",

|

| 45 |

+

"chinese": "ZH",

|

| 46 |

+

}

|

| 47 |

+

|

| 48 |

+

@staticmethod

|

| 49 |

+

def get_text(text, hps, is_symbol):

|

| 50 |

+

text_norm = text_to_sequence(text, hps.symbols, [] if is_symbol else hps.data.text_cleaners)

|

| 51 |

+

if hps.data.add_blank:

|

| 52 |

+

text_norm = commons.intersperse(text_norm, 0)

|

| 53 |

+

text_norm = torch.LongTensor(text_norm)

|

| 54 |

+

return text_norm

|

| 55 |

+

|

| 56 |

+

@staticmethod

|

| 57 |

+

def audio_numpy_concat(segment_data_list, sr, speed=1.):

|

| 58 |

+

audio_segments = []

|

| 59 |

+

for segment_data in segment_data_list:

|

| 60 |

+

audio_segments += segment_data.reshape(-1).tolist()

|

| 61 |

+

audio_segments += [0] * int((sr * 0.05)/speed)

|

| 62 |

+

audio_segments = np.array(audio_segments).astype(np.float32)

|

| 63 |

+

return audio_segments

|

| 64 |

+

|

| 65 |

+

@staticmethod

|

| 66 |

+

def split_sentences_into_pieces(text, language_str):

|

| 67 |

+

texts = utils.split_sentence(text, language_str=language_str)

|

| 68 |

+

print(" > Text split into sentences.")

|

| 69 |

+

print('\n'.join(texts))

|

| 70 |

+

print(" > ===========================")

|

| 71 |

+

return texts

|

| 72 |

+

|

| 73 |

+

def tts(self, text, output_path, speaker, language='English', speed=1.0):

|

| 74 |

+

mark = self.language_marks.get(language.lower(), None)

|

| 75 |

+

assert mark is not None, f"language {language} is not supported"

|

| 76 |

+

|

| 77 |

+

texts = self.split_sentences_into_pieces(text, mark)

|

| 78 |

+

|

| 79 |

+

audio_list = []

|

| 80 |

+

for t in texts:

|

| 81 |

+

t = re.sub(r'([a-z])([A-Z])', r'\1 \2', t)

|

| 82 |

+

t = f'[{mark}]{t}[{mark}]'

|

| 83 |

+

stn_tst = self.get_text(t, self.hps, False)

|

| 84 |

+

device = self.device

|

| 85 |

+

speaker_id = self.hps.speakers[speaker]

|

| 86 |

+

with torch.no_grad():

|

| 87 |

+

x_tst = stn_tst.unsqueeze(0).to(device)

|

| 88 |

+

x_tst_lengths = torch.LongTensor([stn_tst.size(0)]).to(device)

|

| 89 |

+

sid = torch.LongTensor([speaker_id]).to(device)

|

| 90 |

+

audio = self.model.infer(x_tst, x_tst_lengths, sid=sid, noise_scale=0.667, noise_scale_w=0.6,

|

| 91 |

+

length_scale=1.0 / speed)[0][0, 0].data.cpu().float().numpy()

|

| 92 |

+

audio_list.append(audio)

|

| 93 |

+

audio = self.audio_numpy_concat(audio_list, sr=self.hps.data.sampling_rate, speed=speed)

|

| 94 |

+

|

| 95 |

+

if output_path is None:

|

| 96 |

+

return audio

|

| 97 |

+

else:

|

| 98 |

+

soundfile.write(output_path, audio, self.hps.data.sampling_rate)

|

| 99 |

+

|

| 100 |

+

|

| 101 |

+

class ToneColorConverter(OpenVoiceBaseClass):

|

| 102 |

+

def __init__(self, *args, **kwargs):

|

| 103 |

+

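super().__init__(*args, **{k: v for k, v in kwargs.items() if k != 'enable_watermark'})  # the base class does not accept enable_watermark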

|

| 104 |

+

|

| 105 |

+

if kwargs.get('enable_watermark', True):

|

| 106 |

+

import wavmark

|

| 107 |

+

self.watermark_model = wavmark.load_model().to(self.device)

|

| 108 |

+

else:

|

| 109 |

+

self.watermark_model = None

|

| 110 |

+

|

| 111 |

+

|

| 112 |

+

|

| 113 |

+

def extract_se(self, ref_wav_list, se_save_path=None):

|

| 114 |

+

if isinstance(ref_wav_list, str):

|

| 115 |

+

ref_wav_list = [ref_wav_list]

|

| 116 |

+

|

| 117 |

+

device = self.device

|

| 118 |

+

hps = self.hps

|

| 119 |

+

gs = []

|

| 120 |

+

|

| 121 |

+

for fname in ref_wav_list:

|

| 122 |

+

audio_ref, sr = librosa.load(fname, sr=hps.data.sampling_rate)

|

| 123 |

+

y = torch.FloatTensor(audio_ref)

|

| 124 |

+

y = y.to(device)

|

| 125 |

+

y = y.unsqueeze(0)

|

| 126 |

+

y = spectrogram_torch(y, hps.data.filter_length,

|

| 127 |

+

hps.data.sampling_rate, hps.data.hop_length, hps.data.win_length,

|

| 128 |

+

center=False).to(device)

|

| 129 |

+

with torch.no_grad():

|

| 130 |

+

g = self.model.ref_enc(y.transpose(1, 2)).unsqueeze(-1)

|

| 131 |

+

gs.append(g.detach())

|

| 132 |

+

gs = torch.stack(gs).mean(0)

|

| 133 |

+

|

| 134 |

+

if se_save_path is not None:

|

| 135 |

+

os.makedirs(os.path.dirname(se_save_path), exist_ok=True)

|

| 136 |

+

torch.save(gs.cpu(), se_save_path)

|

| 137 |

+

|

| 138 |

+

return gs

|

| 139 |

+

|

| 140 |

+

def convert(self, audio_src_path, src_se, tgt_se, output_path=None, tau=0.3, message="default"):

|

| 141 |

+

hps = self.hps

|

| 142 |

+

# load audio

|

| 143 |

+

audio, sample_rate = librosa.load(audio_src_path, sr=hps.data.sampling_rate)

|

| 144 |

+

audio = torch.tensor(audio).float()

|

| 145 |

+

|

| 146 |

+

with torch.no_grad():

|

| 147 |

+

y = torch.FloatTensor(audio).to(self.device)

|

| 148 |

+

y = y.unsqueeze(0)

|

| 149 |

+

spec = spectrogram_torch(y, hps.data.filter_length,

|

| 150 |

+

hps.data.sampling_rate, hps.data.hop_length, hps.data.win_length,

|

| 151 |

+

center=False).to(self.device)

|

| 152 |

+

spec_lengths = torch.LongTensor([spec.size(-1)]).to(self.device)

|

| 153 |

+

audio = self.model.voice_conversion(spec, spec_lengths, sid_src=src_se, sid_tgt=tgt_se, tau=tau)[0][

|

| 154 |

+

0, 0].data.cpu().float().numpy()

|

| 155 |

+

audio = self.add_watermark(audio, message)

|

| 156 |

+

if output_path is None:

|

| 157 |

+

return audio

|

| 158 |

+

else:

|

| 159 |

+

soundfile.write(output_path, audio, hps.data.sampling_rate)

|

| 160 |

+

|

| 161 |

+

def add_watermark(self, audio, message):

|

| 162 |

+

if self.watermark_model is None:

|

| 163 |

+

return audio

|

| 164 |

+

device = self.device

|

| 165 |

+

bits = utils.string_to_bits(message).reshape(-1)

|

| 166 |

+

n_repeat = len(bits) // 32

|

| 167 |

+

|

| 168 |

+

K = 16000

|

| 169 |

+

coeff = 2

|

| 170 |

+

for n in range(n_repeat):

|

| 171 |

+

trunck = audio[(coeff * n) * K: (coeff * n + 1) * K]

|

| 172 |

+

if len(trunck) != K:

|

| 173 |

+

print('Audio too short, failed to add watermark')

|

| 174 |

+

break

|

| 175 |

+

message_npy = bits[n * 32: (n + 1) * 32]

|

| 176 |

+

|

| 177 |

+

with torch.no_grad():

|

| 178 |

+

signal = torch.FloatTensor(trunck).to(device)[None]

|

| 179 |

+

message_tensor = torch.FloatTensor(message_npy).to(device)[None]

|

| 180 |

+

signal_wmd_tensor = self.watermark_model.encode(signal, message_tensor)

|

| 181 |

+

signal_wmd_npy = signal_wmd_tensor.detach().cpu().squeeze().numpy()

|

| 182 |

+

audio[(coeff * n) * K: (coeff * n + 1) * K] = signal_wmd_npy

|

| 183 |

+

return audio

|

| 184 |

+

|

| 185 |

+

def detect_watermark(self, audio, n_repeat):

|

| 186 |

+

bits = []

|

| 187 |

+

K = 16000

|

| 188 |

+

coeff = 2

|

| 189 |

+

for n in range(n_repeat):

|

| 190 |

+

trunck = audio[(coeff * n) * K: (coeff * n + 1) * K]

|

| 191 |

+

if len(trunck) != K:

|

| 192 |

+

print('Audio too short, failed to detect watermark')

|

| 193 |

+

return 'Fail'

|

| 194 |

+

with torch.no_grad():

|

| 195 |

+

signal = torch.FloatTensor(trunck).to(self.device).unsqueeze(0)

|

| 196 |

+

message_decoded_npy = (self.watermark_model.decode(signal) >= 0.5).int().detach().cpu().numpy().squeeze()

|

| 197 |

+

bits.append(message_decoded_npy)

|

| 198 |

+

bits = np.stack(bits).reshape(-1, 8)

|

| 199 |

+

message = utils.bits_to_string(bits)

|

| 200 |

+

return message

|

| 201 |

+
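
The per-sentence text preparation inside `BaseSpeakerTTS.tts` can be tried in isolation. The following is a minimal sketch that copies the regular expression and the language-mark wrapping from the method above. The helper name `prepare_piece` is hypothetical, and it raises `ValueError` where the original uses an `assert`.

```python
import re

# Same mapping as BaseSpeakerTTS.language_marks in api.py.
LANGUAGE_MARKS = {"english": "EN", "chinese": "ZH"}


def prepare_piece(piece, language="English"):
    """Return one sentence piece in the form passed to get_text() by tts()."""
    mark = LANGUAGE_MARKS.get(language.lower())
    if mark is None:
        raise ValueError(f"language {language} is not supported")
    # Insert a space at every lowercase-to-uppercase boundary,
    # so "OpenVoice" becomes "Open Voice".
    piece = re.sub(r'([a-z])([A-Z])', r'\1 \2', piece)
    # Wrap the piece in language marks, e.g. [EN]...[EN].
    return f'[{mark}]{piece}[{mark}]'


print(prepare_piece("OpenVoice clones voices"))  # [EN]Open Voice clones voices[EN]
```

The language lookup is case-insensitive, so `"english"`, `"English"` and `"ENGLISH"` all map to the `EN` mark.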

|
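
The chunk layout used by `add_watermark` and `detect_watermark` determines how long a clip must be before the whole message fits. The sketch below reproduces only that indexing arithmetic (`K = 16000`, `coeff = 2`, 32 bits per chunk) in plain Python. The helper names `watermark_slices` and `min_samples_for` are hypothetical, and the bit count is passed in directly because `utils.string_to_bits` is not shown here.

```python
K = 16000            # samples per watermarked chunk, as in add_watermark
COEFF = 2            # chunk n starts at sample COEFF * n * K
BITS_PER_CHUNK = 32  # bits embedded into each chunk


def watermark_slices(n_bits, n_samples):
    """List the (start, end) sample ranges that would receive a watermark."""
    n_repeat = n_bits // BITS_PER_CHUNK
    slices = []
    for n in range(n_repeat):
        start = COEFF * n * K
        end = start + K
        if end > n_samples:
            # Equivalent to the `len(trunck) != K` check: the chunk is incomplete.
            break
        slices.append((start, end))
    return slices


def min_samples_for(n_bits):
    """Smallest clip length (in samples) that can hold all chunks of the message."""
    n_repeat = n_bits // BITS_PER_CHUNK
    if n_repeat == 0:
        return 0
    return (COEFF * (n_repeat - 1) + 1) * K


print(watermark_slices(64, 48000))  # [(0, 16000), (32000, 48000)]
print(min_samples_for(64))          # 48000
```

Because only every second `K`-sample window is used, a 64-bit message needs three windows' worth of audio. A clip one sample shorter would carry only the first 32 bits, and `detect_watermark` would return `'Fail'` when asked for two chunks.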

attentions.py

ADDED

|

@@ -0,0 +1,465 @@

|
