Spaces:

Running

Running

deploy: sync 2c1b516d from GitHub Actions

Browse files- blog.md +0 -211

- src/train.py +1 -1

blog.md

DELETED

|

@@ -1,211 +0,0 @@

|

|

| 1 |

-

# VisionCoder OpenEnv | Screenshot-to-HTML with Multi-Agent RL

|

| 2 |

-

|

| 3 |

-

**Scaler × Meta PyTorch Hackathon 2026 | Solo submission by [@amaljoe88](https://huggingface.co/spaces/amaljoe88/vision-coder-openenv)**

|

| 4 |

-

|

| 5 |

-

---

|

| 6 |

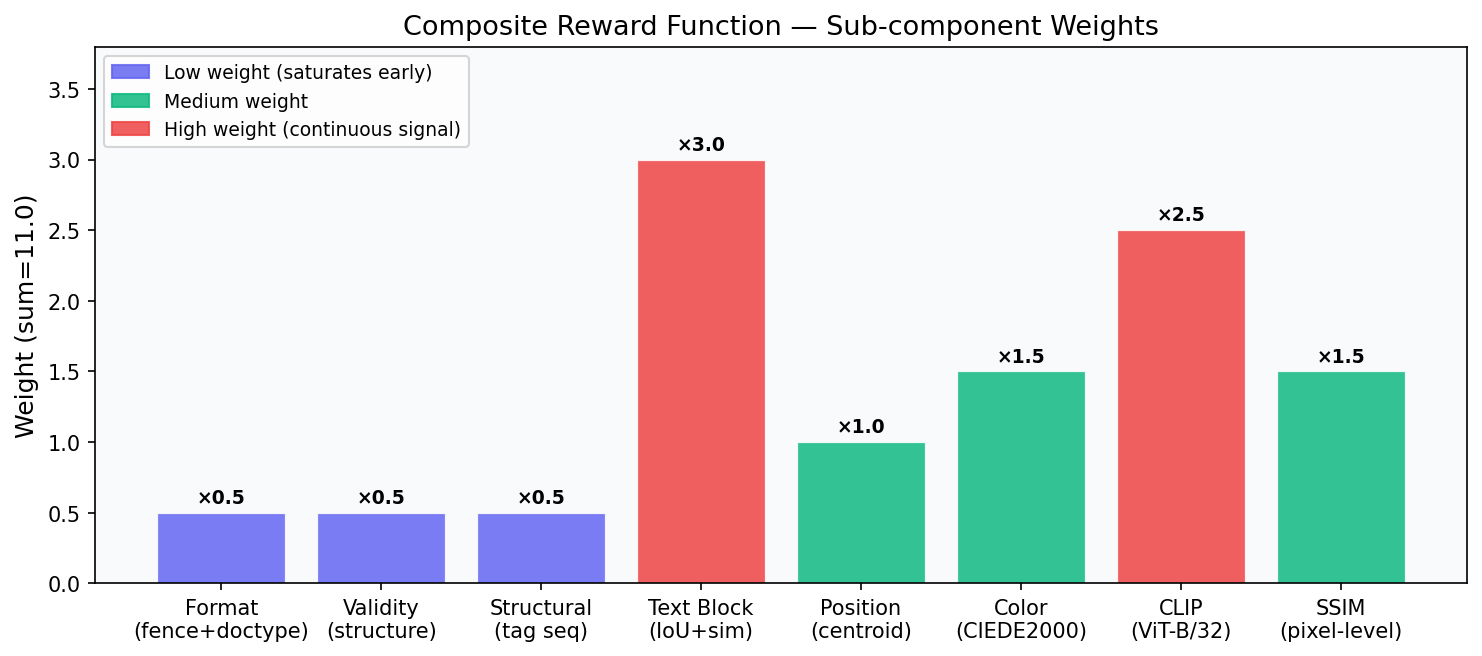

-

|

| 7 |

-

## The Problem

|

| 8 |

-

|

| 9 |

-

Turn a screenshot into working HTML. It sounds simple but it forces a model to do two hard things at once: *understand what the UI looks like visually* and *express that understanding in code*. A single LLM call tends to produce structurally valid HTML that looks nothing like the reference. Headings are present, a button is present but the layout is wrong, colors are off, nothing is positioned correctly.

|

| 10 |

-

|

| 11 |

-

The deeper problem: **the model can't see its own output.** It generates HTML blindly, has no way to compare what it produced against the target, and has no feedback loop to improve.

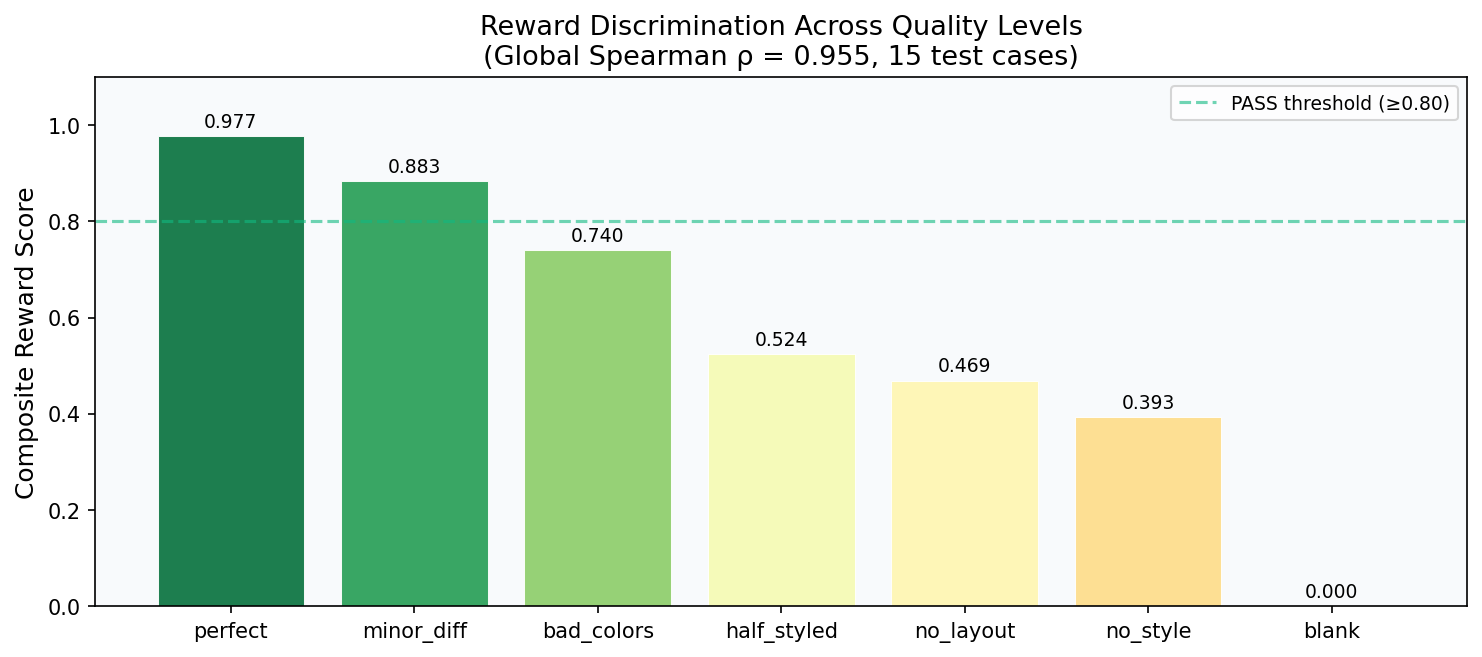

|

| 12 |

-

|

| 13 |

-

We turned this into a **reinforcement learning problem**. The agent generates HTML, a real browser renders it, a reward function computes visual similarity to the reference, and the agent iterates. The environment runs as an HTTP API compatible with the OpenEnv standard.

|

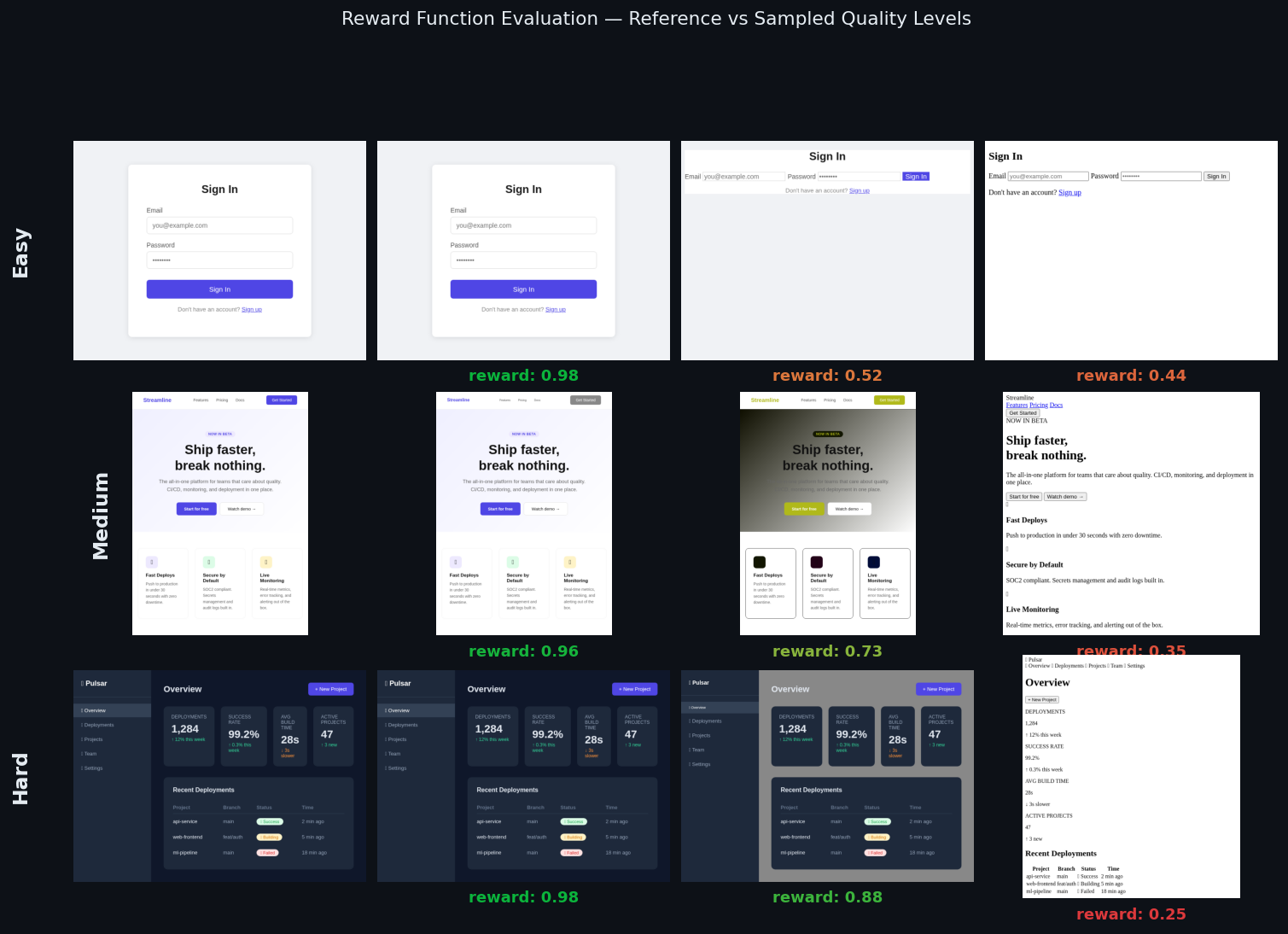

| 14 |

-

|

| 15 |

-

---

|

| 16 |

-

|

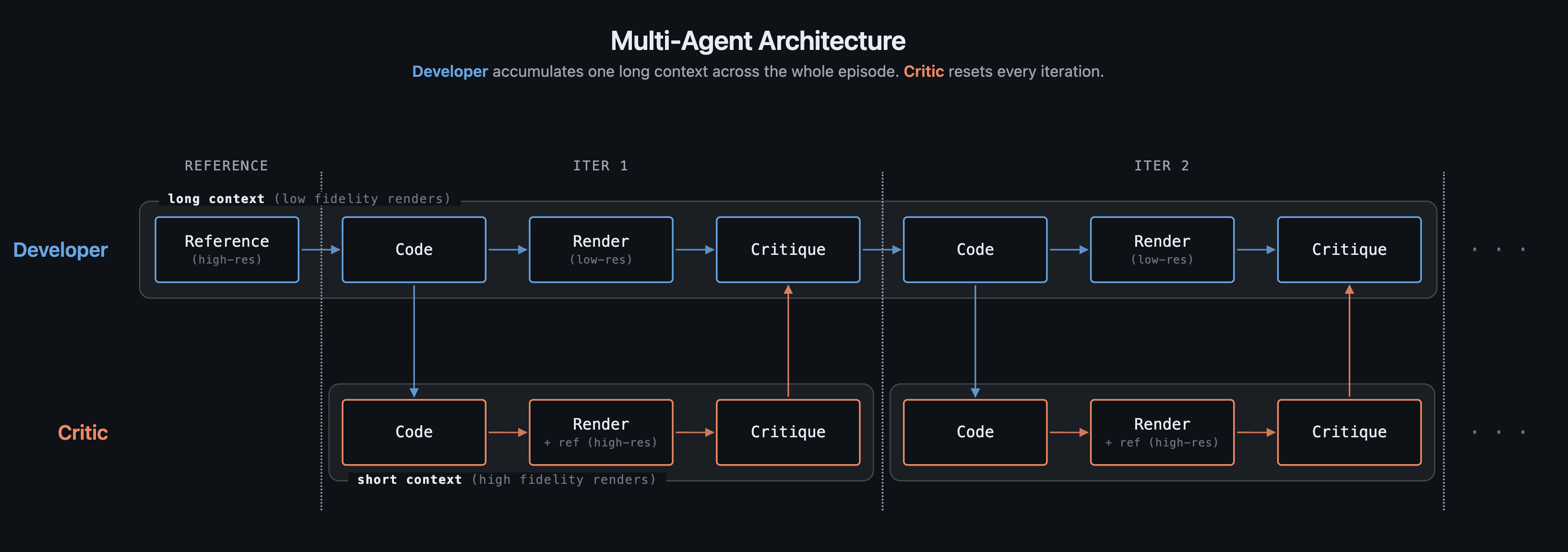

| 17 |

-

## The Environment

|

| 18 |

-

|

| 19 |

-

### OpenEnv-Compatible HTTP API

|

| 20 |

-

|

| 21 |

-

```

|

| 22 |

-

POST /reset?difficulty=easy|medium|hard → { session_id, screenshot_b64 }

|

| 23 |

-

POST /step { html, session_id } → { reward, render_low, render_full, done }

|

| 24 |

-

POST /render { html } → { image_b64 }

|

| 25 |

-

```

|

| 26 |

-

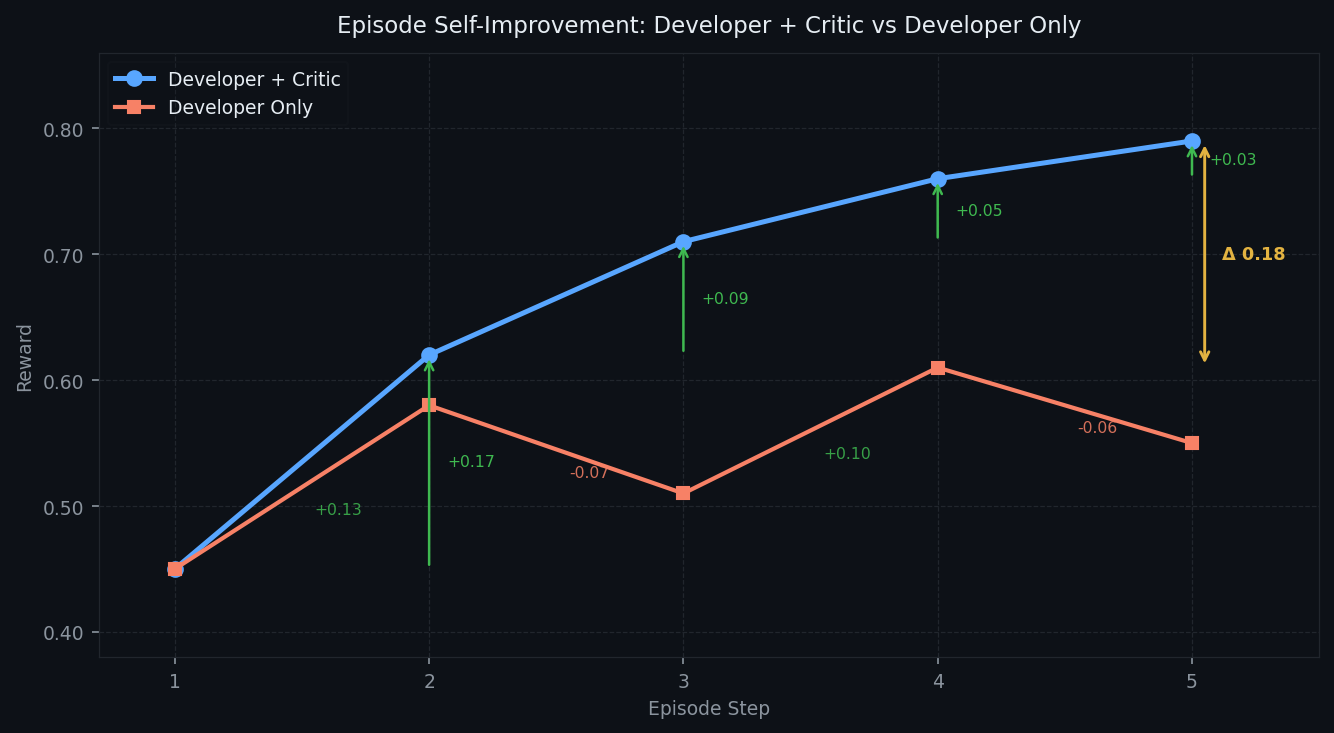

|

| 27 |

-

Every HTML submission is rendered by a headless Chromium at two resolutions: `320×240` (low-res, passed back to the Developer each turn) and `640×480` (full-res, used by the Critic and reward computation). Episodes run for up to n(=5) steps.

|

| 28 |

-

|

| 29 |

-

### Composite Reward Function

|

| 30 |

-

|

| 31 |

-

The reward is a weighted sum of 8 sub-scores, each measuring a different aspect of visual and structural similarity. The weights asssigned to each reward are tuned using an auto research style approach (similar to [Andrej Karpathy's](https://github.com/karpathy/autoresearch)) - an AI agent loops through a large set of candidate weight combinations parallely and compares the reward ranking against human quality judgements to find the best correlation.

|

| 32 |

-

|

| 33 |

-

|

| 34 |

-

|

| 35 |

-

| Reward | Weight | What it measures |

|

| 36 |

-

|---|---|---|

|

| 37 |

-

| `format` | 0.5 | Has ` ```html ` fence + `<!DOCTYPE html>` |

|

| 38 |

-

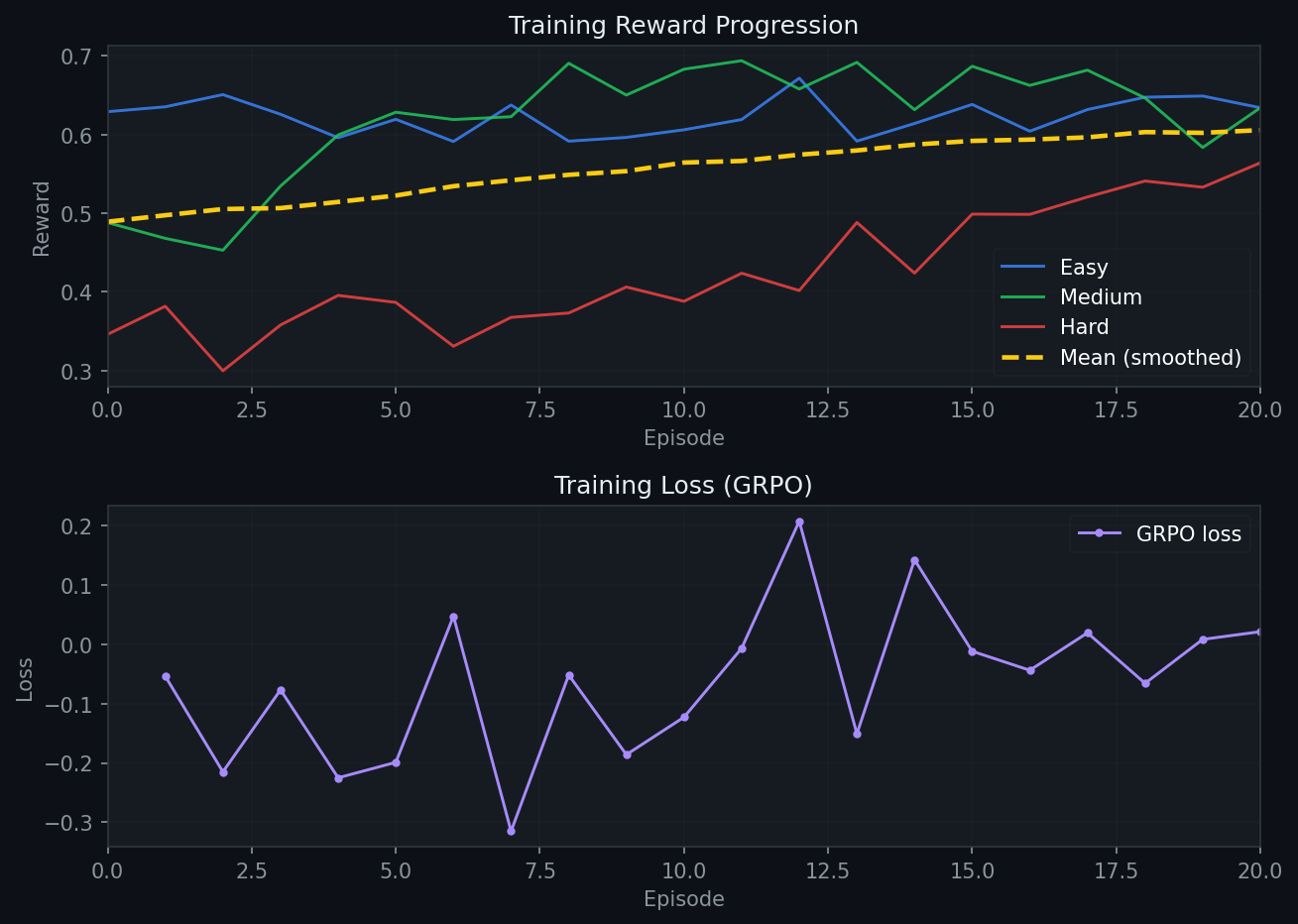

| `validity` | 0.5 | Structural completeness (html/head/body, diverse tags) |

|

| 39 |

-

| `structural` | 0.5 | Tag-sequence similarity + inline-style property coverage |

|

| 40 |

-

| `text_block` | **3.0** | Hungarian-matched text block IoU + text similarity |

|

| 41 |

-

| `position` | 1.0 | Hungarian-matched centroid distance |

|

| 42 |

-

| `color` | 1.5 | Spatial CIEDE2000 on reference non-white pixels |

|

| 43 |

-

| `clip` | **2.5** | CLIP ViT-B/32 cosine similarity, renormalised (threshold 0.65) |

|

| 44 |

-

| `ssim` | 1.5 | Pixel-level SSIM (skimage, 320×240 RGB) |

|

| 45 |

-

|

| 46 |

-

Low-weight rewards (`format`, `validity`, `structural`) saturate early, a structurally complete page already scores near 1.0 on these regardless of visual quality. The high-weight rewards (`text_block`, `clip`, `ssim`) stay discriminative all the way to near-perfect renders. This keeps the gradient signal alive even when the model is already producing good output.

|

| 47 |

-

|

| 48 |

-

### Does the Reward Reflect Human Judgement?

|

| 49 |

-

|

| 50 |

-

We validated the final reward function against human-labelled quality levels across 15 reference pages (5 per difficulty). For each reference, we tested 7 variants ranging from blank to perfect:

|

| 51 |

-

|

| 52 |

-

|

| 53 |

-

|

| 54 |

-

**Global Spearman ρ = 0.955** — the reward ranking matches human quality judgement on most of the test cases. The chart above shows the reward correctly ordering all 7 levels with clear gaps between them.

|

| 55 |

-

|

| 56 |

-

Browse all 15 test case renders with per-sub-reward breakdowns in the **[interactive demo](https://amaljoe.github.io/vision-coder-openenv/)**.

|

| 57 |

-

|

| 58 |

-

The grid below shows sampled renders from three tasks alongside their reward scores. Each row shows a reference and three variants at different quality levels, ordered from best to worst:

|

| 59 |

-

|

| 60 |

-

|

| 61 |

-

|

| 62 |

-

> **Content Multiplier:** We noticed strong correlation with human judgement for most pages, but blank renders were receiving rewards of ~0.3 from sub-rewards like `format` and `validity` that don't require visual content. To fix this, we applied a content multiplier: if the predicted render has fewer than 0.5% non-white pixels while the reference has content, the total reward is forced to 0. A blank page which typically means something prevented rendering (a JavaScript error, a malformed tag, or the model failing to generate HTML at all) now gets the worst possible reward and is correctly treated as a major failure signal.

|

| 63 |

-

|

| 64 |

-

---

|

| 65 |

-

|

| 66 |

-

## The Multi-Agent Architecture

|

| 67 |

-

|

| 68 |

-

### Why Two Agents?

|

| 69 |

-

|

| 70 |

-

A single agent can generate HTML and receive a reward. But the reward is a single number: it tells the model *how bad* the output is, not *what is wrong* or *which selector to fix*. Without visual feedback, the model improvises changes at random and often regresses.

|

| 71 |

-

|

| 72 |

-

The Critic solves this. It looks at both the reference and the current render side by side, reads the HTML source, and produces specific CSS fix instructions. The Developer reads those fixes and applies them in the next step; no guessing required.

|

| 73 |

-

|

| 74 |

-

|

| 75 |

-

|

| 76 |

-

### Why Not Just Pass Everything to One Model?

|

| 77 |

-

|

| 78 |

-

Context cost. Vision models encode images as sequences of tokens; the number of tokens scales with pixel count:

|

| 79 |

-

|

| 80 |

-

| Image | Resolution | Visual tokens |

|

| 81 |

-

|---|---|---|

|

| 82 |

-

| Low-res render | 320×240 | ~256 |

|

| 83 |

-

| Full-res render / reference | 640×480 | ~1,024 |

|

| 84 |

-

| Full HD (hypothetical) | 1920×1080 | ~9,800 |

|

| 85 |

-

|

| 86 |

-

With full-HD inputs, two images alone would cost ~19,600 tokens exhausting the context budget of a typical consumer GPU before a single token of HTML is generated. Even at our working resolution, giving the Developer both high-res images every step would double its context cost per step across the entire episode and this cost increases quadratically with higher resolutions.

|

| 87 |

-

|

| 88 |

-

### What the Critic Produces

|

| 89 |

-

|

| 90 |

-

```

|

| 91 |

-

[+] HIGH | LAYOUT — products grid is 1-column; reference shows 3-column

|

| 92 |

-

→ FIX: `.products { display: grid; grid-template-columns: repeat(3, 1fr); gap: 24px; }`

|

| 93 |

-

|

| 94 |

-

[+] MEDIUM | COLOR — nav background is white; reference shows dark navy

|

| 95 |

-

→ FIX: `nav { background-color: #0f172a; }`

|

| 96 |

-

```

|

| 97 |

-

|

| 98 |

-

This is fundamentally different from abstract feedback ("the layout is wrong"). The Developer reads the `→ FIX:` line and applies it to the exact CSS selector, no interpretation required.

|

| 99 |

-

|

| 100 |

-

### Self-Improvement Over an Episode

|

| 101 |

-

|

| 102 |

-

Each developer step sees the HTML code generated so far alongside reviews from the critic model and its low-resolution renders (to maintain a manageable context size).

|

| 103 |

-

|

| 104 |

-

The graph below shows what happens with and without the Critic over a 5-step episode:

|

| 105 |

-

|

| 106 |

-

|

| 107 |

-

|

| 108 |

-

Without structured feedback, the Developer oscillates: it makes changes that sometimes improve and sometimes regress the reward. With the Critic providing selector-specific fixes, the reward climbs monotonically. By step 5, Developer + Critic has opened a **Δ0.21 gap** over Developer Only.

|

| 109 |

-

|

| 110 |

-

---

|

| 111 |

-

|

| 112 |

-

## RL Training: Full-Episode GRPO

|

| 113 |

-

|

| 114 |

-

### Full-Episode Training

|

| 115 |

-

|

| 116 |

-

Full-episode GRPO samples K complete trajectories, scores each one by total episode reward, and applies group-relative advantage to every token in the trajectory. Reward shaping is also used to add additional intermediate rewards (difference in rewards between each iteration):

|

| 117 |

-

|

| 118 |

-

```

|

| 119 |

-

R_total(t) = R_terminal + λ · Σ(r_s - r_{s-1} for s = t..n)

|

| 120 |

-

|

| 121 |

-

R_terminal = environment score at final step n ← main signal

|

| 122 |

-

r_s - r_{s-1} = per-step improvement delta ← shaped signal

|

| 123 |

-

λ = 0.2 ← keeps shaped signal subordinate

|

| 124 |

-

```

|

| 125 |

-

|

| 126 |

-

```

|

| 127 |

-

for each task:

|

| 128 |

-

sample K=4 full trajectories (different temperatures/seeds)

|

| 129 |

-

score each: R_terminal_k + shaped improvement deltas

|

| 130 |

-

advantage: A_t = (G_t - mean_k) / std_k

|

| 131 |

-

update: ∇ log π(a_t | s_t) · A_t for all tokens in trajectory

|

| 132 |

-

```

|

| 133 |

-

|

| 134 |

-

### Training Configuration

|

| 135 |

-

|

| 136 |

-

- **Base model**: [`Qwen/Qwen3.5-2B`](https://huggingface.co/Qwen/Qwen3.5-2B) (unified vision+text)

|

| 137 |

-

- **LoRA**: rank=16, α=32, 0.49% trainable parameters (10.9M / 2.2B)

|

| 138 |

-

- **Optimizer**: AdamW, lr=2e-5, max_grad_norm=1.0

|

| 139 |

-

- **Hardware**: 2× NVIDIA A100 80GB PCIe

|

| 140 |

-

- **Episodes**: 20 × 4 rollouts = 80 trajectories

|

| 141 |

-

|

| 142 |

-

### Training Curve

|

| 143 |

-

|

| 144 |

-

|

| 145 |

-

|

| 146 |

-

The three difficulty tracks tell different stories:

|

| 147 |

-

|

| 148 |

-

**Easy (blue)** starts at 0.629. Simple login forms and single-column layouts are already within reach of the base model. There is very little headroom left, so the curve shows mostly small fluctuations with a slight upward drift. The model is already close to its ceiling on these tasks at baseline.

|

| 149 |

-

|

| 150 |

-

**Medium (green)** starts at 0.488 and ends at 0.634 (+0.146). Multi-column grids and landing pages require the Critic's feedback to land correctly. The reward climbs early as the model learns to apply CSS fixes more precisely.

|

| 151 |

-

|

| 152 |

-

**Hard (red)** shows the clearest improvement: 0.346 → 0.564 (+0.218). Complex dashboards and Kanban boards depend on deeply nested flex/grid structures where small CSS errors collapse entire layout regions. At baseline, the model struggles to reconstruct these. With GRPO reinforcing the Critic's CSS fix patterns, it learns which selectors control which regions and how to fix them efficiently. The performance keeps on climbing even at 20 iterations and shows potential for more improvement. **Hard tasks benefit the most because they have the most to gain.**

|

| 153 |

-

|

| 154 |

-

---

|

| 155 |

-

|

| 156 |

-

## RL Training Results: Base vs Trained 2B

|

| 157 |

-

|

| 158 |

-

Scores at iteration 0 (untrained) vs iteration 20 (after GRPO training), from `https://raw.githubusercontent.com/amaljoe/vision-coder-openenv/main/assets/train.jsonl`:

|

| 159 |

-

|

| 160 |

-

| Difficulty | Base (iter 0) | Trained (iter 20) | Delta |

|

| 161 |

-

|---|---|---|---|

|

| 162 |

-

| easy | 0.629 | **0.634** | +0.005 |

|

| 163 |

-

| medium | 0.488 | **0.634** | +0.146 |

|

| 164 |

-

| hard | 0.346 | **0.564** | +0.218 |

|

| 165 |

-

| **mean** | 0.488 | **0.611** | +0.123 |

|

| 166 |

-

|

| 167 |

-

**+25.2% overall improvement** from 20 iterations of full-episode GRPO on 2× A100 80GB (~2h). The pattern matches the training curve: easy was already near its ceiling, medium gained meaningfully, and hard improved the most. The Critic's structured feedback is most valuable precisely where the task is most complex.

|

| 168 |

-

|

| 169 |

-

---

|

| 170 |

-

|

| 171 |

-

## Reproduce

|

| 172 |

-

|

| 173 |

-

### Run the Environment

|

| 174 |

-

|

| 175 |

-

```bash

|

| 176 |

-

pip install -e .

|

| 177 |

-

uvicorn openenv.server.app:app --host 0.0.0.0 --port 7860

|

| 178 |

-

```

|

| 179 |

-

|

| 180 |

-

### Run Inference

|

| 181 |

-

|

| 182 |

-

```bash

|

| 183 |

-

export API_BASE_URL=https://router.huggingface.co/v1

|

| 184 |

-

export MODEL_NAME=Qwen/Qwen3.5-35B-A3B

|

| 185 |

-

export HF_TOKEN=hf_...

|

| 186 |

-

python inference.py

|

| 187 |

-

```

|

| 188 |

-

|

| 189 |

-

### Run RL Training

|

| 190 |

-

|

| 191 |

-

```bash

|

| 192 |

-

python train.py --phase combined --episodes 20 --k-rollouts 4 \

|

| 193 |

-

--model Qwen/Qwen3.5-2B --checkpoint-dir checkpoints/run1

|

| 194 |

-

```

|

| 195 |

-

|

| 196 |

-

### Run Test Suite

|

| 197 |

-

|

| 198 |

-

Run the test suite to generate rewards for the test set. These rewards can be visualised in the [interactive demo](https://amaljoe.github.io/vision-coder-openenv/).

|

| 199 |

-

|

| 200 |

-

```bash

|

| 201 |

-

python tests/test_rewards.py --render # first run (needs Playwright)

|

| 202 |

-

python tests/test_rewards.py # subsequent runs (uses cached renders)

|

| 203 |

-

```

|

| 204 |

-

|

| 205 |

-

---

|

| 206 |

-

|

| 207 |

-

## Links

|

| 208 |

-

|

| 209 |

-

- **HF Space**: [amaljoe88/vision-coder-openenv](https://huggingface.co/spaces/amaljoe88/vision-coder-openenv)

|

| 210 |

-

- **GitHub**: [amaljoe/vision-coder-openenv](https://github.com/amaljoe/vision-coder-openenv)

|

| 211 |

-

- **Interactive demo**: [amaljoe.github.io/vision-coder-openenv](https://amaljoe.github.io/vision-coder-openenv/)

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

src/train.py

CHANGED

|

@@ -1,7 +1,7 @@

|

|

| 1 |

"""VisionCoder OpenEnv — Round 2 RL training.

|

| 2 |

|

| 3 |

Full-episode GRPO with shaped reward for Developer and Critic agents.

|

| 4 |

-

Alternating training phases: Developer (critic frozen) → Critic (developer frozen) → repeat.

|

| 5 |

|

| 6 |

Reward design:

|

| 7 |

R_total(t) = R_terminal + λ · Σ(r_s - r_{s-1} for s = t+1 .. n)

|

|

|

|

| 1 |

"""VisionCoder OpenEnv — Round 2 RL training.

|

| 2 |

|

| 3 |

Full-episode GRPO with shaped reward for Developer and Critic agents.

|

| 4 |

+

Alternating training phases: Developer (critic frozen) → Critic (developer frozen) → repeat or Combined training.

|

| 5 |

|

| 6 |

Reward design:

|

| 7 |

R_total(t) = R_terminal + λ · Σ(r_s - r_{s-1} for s = t+1 .. n)

|