Spaces:

Runtime error

Runtime error

Add screenshot text location feature

Browse filesAlso add option to try more detailed captioning.

- app.py +99 -24

- assets/localization_example_1.jpeg +0 -0

app.py

CHANGED

|

@@ -1,9 +1,8 @@

|

|

| 1 |

import gradio as gr

|

|

|

|

| 2 |

import torch

|

| 3 |

-

from transformers import FuyuForCausalLM, AutoTokenizer

|

| 4 |

-

from transformers.models.fuyu.processing_fuyu import FuyuProcessor

|

| 5 |

-

from transformers.models.fuyu.image_processing_fuyu import FuyuImageProcessor

|

| 6 |

from PIL import Image

|

|

|

|

| 7 |

|

| 8 |

model_id = "adept/fuyu-8b"

|

| 9 |

dtype = torch.bfloat16

|

|

@@ -13,36 +12,89 @@ tokenizer = AutoTokenizer.from_pretrained(model_id)

|

|

| 13 |

model = FuyuForCausalLM.from_pretrained(model_id, device_map="auto", torch_dtype=dtype)

|

| 14 |

processor = FuyuProcessor(image_processor=FuyuImageProcessor(), tokenizer=tokenizer)

|

| 15 |

|

| 16 |

-

|

| 17 |

-

|

| 18 |

-

def resize_to_max(image, max_width=1080, max_height=1080):

|

| 19 |

-

width, height = image.size

|

| 20 |

-

if width <= max_width and height <= max_height:

|

| 21 |

-

return image

|

| 22 |

-

|

| 23 |

-

scale = min(max_width/width, max_height/height)

|

| 24 |

-

width = int(width*scale)

|

| 25 |

-

height = int(height*scale)

|

| 26 |

-

|

| 27 |

-

return image.resize((width, height), Image.LANCZOS)

|

| 28 |

|

| 29 |

def predict(image, prompt):

|

| 30 |

# image = image.convert('RGB')

|

| 31 |

-

image = resize_to_max(image)

|

| 32 |

-

|

| 33 |

model_inputs = processor(text=prompt, images=[image])

|

| 34 |

model_inputs = {k: v.to(dtype=dtype if torch.is_floating_point(v) else v.dtype, device=device) for k,v in model_inputs.items()}

|

| 35 |

|

| 36 |

-

generation_output = model.generate(**model_inputs, max_new_tokens=

|

| 37 |

prompt_len = model_inputs["input_ids"].shape[-1]

|

| 38 |

return tokenizer.decode(generation_output[0][prompt_len:], skip_special_tokens=True)

|

| 39 |

|

| 40 |

-

def caption(image):

|

| 41 |

-

|

|

|

|

|

|

|

|

|

|

|

|

|

| 42 |

|

| 43 |

def set_example_image(example: list) -> dict:

|

| 44 |

return gr.Image.update(value=example[0])

|

| 45 |

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

| 46 |

|

| 47 |

|

| 48 |

css = """

|

|

@@ -88,21 +140,44 @@ with gr.Blocks(css=css) as demo:

|

|

| 88 |

|

| 89 |

with gr.Tab("Image Captioning"):

|

| 90 |

with gr.Row():

|

| 91 |

-

|

|

|

|

|

|

|

| 92 |

captioning_output = gr.Textbox(label="Output")

|

| 93 |

captioning_btn = gr.Button("Generate Caption")

|

| 94 |

|

| 95 |

gr.Examples(

|

| 96 |

-

[["assets/captioning_example_1.png"], ["assets/captioning_example_2.png"]],

|

| 97 |

-

inputs = [captioning_input],

|

| 98 |

outputs = [captioning_output],

|

| 99 |

fn=caption,

|

| 100 |

cache_examples=True,

|

| 101 |

label='Click on any Examples below to get captioning results quickly 👇'

|

| 102 |

)

|

| 103 |

|

| 104 |

-

captioning_btn.click(fn=caption, inputs=captioning_input, outputs=captioning_output)

|

| 105 |

vqa_btn.click(fn=predict, inputs=[image_input, text_input], outputs=vqa_output)

|

| 106 |

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

| 107 |

|

| 108 |

demo.launch(server_name="0.0.0.0")

|

|

|

|

| 1 |

import gradio as gr

|

| 2 |

+

import re

|

| 3 |

import torch

|

|

|

|

|

|

|

|

|

|

| 4 |

from PIL import Image

|

| 5 |

+

from transformers import AutoTokenizer, FuyuForCausalLM, FuyuImageProcessor, FuyuProcessor

|

| 6 |

|

| 7 |

model_id = "adept/fuyu-8b"

|

| 8 |

dtype = torch.bfloat16

|

|

|

|

| 12 |

model = FuyuForCausalLM.from_pretrained(model_id, device_map="auto", torch_dtype=dtype)

|

| 13 |

processor = FuyuProcessor(image_processor=FuyuImageProcessor(), tokenizer=tokenizer)

|

| 14 |

|

| 15 |

+

CAPTION_PROMPT = "Generate a coco-style caption.\n"

|

| 16 |

+

DETAILED_CAPTION_PROMPT = "What is happening in this image?"

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

| 17 |

|

| 18 |

def predict(image, prompt):

|

| 19 |

# image = image.convert('RGB')

|

|

|

|

|

|

|

| 20 |

model_inputs = processor(text=prompt, images=[image])

|

| 21 |

model_inputs = {k: v.to(dtype=dtype if torch.is_floating_point(v) else v.dtype, device=device) for k,v in model_inputs.items()}

|

| 22 |

|

| 23 |

+

generation_output = model.generate(**model_inputs, max_new_tokens=50)

|

| 24 |

prompt_len = model_inputs["input_ids"].shape[-1]

|

| 25 |

return tokenizer.decode(generation_output[0][prompt_len:], skip_special_tokens=True)

|

| 26 |

|

| 27 |

+

def caption(image, detailed_captioning):

|

| 28 |

+

if detailed_captioning:

|

| 29 |

+

caption_prompt = DETAILED_CAPTION_PROMPT

|

| 30 |

+

else:

|

| 31 |

+

caption_prompt = CAPTION_PROMPT

|

| 32 |

+

return predict(image, caption_prompt).lstrip()

|

| 33 |

|

| 34 |

def set_example_image(example: list) -> dict:

|

| 35 |

return gr.Image.update(value=example[0])

|

| 36 |

|

| 37 |

+

def scale_factor_to_fit(original_size, target_size=(1920, 1080)):

|

| 38 |

+

width, height = original_size

|

| 39 |

+

max_width, max_height = target_size

|

| 40 |

+

if width <= max_width and height <= max_height:

|

| 41 |

+

return 1.0

|

| 42 |

+

return min(max_width/width, max_height/height)

|

| 43 |

+

|

| 44 |

+

def tokens_to_box(tokens, original_size):

|

| 45 |

+

bbox_start = tokenizer.convert_tokens_to_ids("<0x00>")

|

| 46 |

+

bbox_end = tokenizer.convert_tokens_to_ids("<0x01>")

|

| 47 |

+

try:

|

| 48 |

+

# Assumes a single box

|

| 49 |

+

bbox_start_pos = (tokens == bbox_start).nonzero(as_tuple=True)[0].item()

|

| 50 |

+

bbox_end_pos = (tokens == bbox_end).nonzero(as_tuple=True)[0].item()

|

| 51 |

+

|

| 52 |

+

if bbox_end_pos != bbox_start_pos + 5:

|

| 53 |

+

return tokens

|

| 54 |

+

|

| 55 |

+

# Retrieve transformed coordinates from tokens

|

| 56 |

+

coords = tokenizer.convert_ids_to_tokens(tokens[bbox_start_pos+1:bbox_end_pos])

|

| 57 |

+

|

| 58 |

+

# Scale back to original image size and multiply by 2

|

| 59 |

+

scale = scale_factor_to_fit(original_size)

|

| 60 |

+

top, left, bottom, right = [2 * int(float(c)/scale) for c in coords]

|

| 61 |

+

|

| 62 |

+

# Replace the IDs so they get detokenized right

|

| 63 |

+

replacement = f" <box>{top}, {left}, {bottom}, {right}</box>"

|

| 64 |

+

replacement = tokenizer.tokenize(replacement)[1:]

|

| 65 |

+

replacement = tokenizer.convert_tokens_to_ids(replacement)

|

| 66 |

+

replacement = torch.tensor(replacement).to(tokens)

|

| 67 |

+

|

| 68 |

+

tokens = torch.cat([tokens[:bbox_start_pos], replacement, tokens[bbox_end_pos+1:]], 0)

|

| 69 |

+

return tokens

|

| 70 |

+

except:

|

| 71 |

+

gr.Error("Can't convert tokens.")

|

| 72 |

+

return tokens

|

| 73 |

+

|

| 74 |

+

def coords_from_response(response):

|

| 75 |

+

# y1, x1, y2, x2

|

| 76 |

+

pattern = r"<box>(\d+),\s*(\d+),\s*(\d+),\s*(\d+)</box>"

|

| 77 |

+

|

| 78 |

+

match = re.search(pattern, response)

|

| 79 |

+

if match:

|

| 80 |

+

# Unpack and change order

|

| 81 |

+

y1, x1, y2, x2 = [int(coord) for coord in match.groups()]

|

| 82 |

+

return (x1, y1, x2, y2)

|

| 83 |

+

else:

|

| 84 |

+

gr.Error("The string is malformed or does not match the expected pattern.")

|

| 85 |

+

|

| 86 |

+

def localize(image, query):

|

| 87 |

+

prompt= f"When presented with a box, perform OCR to extract text contained within it. If provided with text, generate the corresponding bounding box.\n{query}"

|

| 88 |

+

model_inputs = processor(text=prompt, images=[image])

|

| 89 |

+

model_inputs = {k: v.to(dtype=dtype if torch.is_floating_point(v) else v.dtype, device=device) for k,v in model_inputs.items()}

|

| 90 |

+

|

| 91 |

+

generation_output = model.generate(**model_inputs, max_new_tokens=40)

|

| 92 |

+

prompt_len = model_inputs["input_ids"].shape[-1]

|

| 93 |

+

tokens = generation_output[0][prompt_len:]

|

| 94 |

+

tokens = tokens_to_box(tokens, image.size)

|

| 95 |

+

decoded = tokenizer.decode(tokens, skip_special_tokens=True)

|

| 96 |

+

coords = coords_from_response(decoded)

|

| 97 |

+

return image, [(coords, f"Location of \"{query}\"")]

|

| 98 |

|

| 99 |

|

| 100 |

css = """

|

|

|

|

| 140 |

|

| 141 |

with gr.Tab("Image Captioning"):

|

| 142 |

with gr.Row():

|

| 143 |

+

with gr.Column():

|

| 144 |

+

captioning_input = gr.Image(label="Upload your Image", type="pil")

|

| 145 |

+

detailed_captioning_checkbox = gr.Checkbox(label="Enable detailed captioning")

|

| 146 |

captioning_output = gr.Textbox(label="Output")

|

| 147 |

captioning_btn = gr.Button("Generate Caption")

|

| 148 |

|

| 149 |

gr.Examples(

|

| 150 |

+

[["assets/captioning_example_1.png", False], ["assets/captioning_example_2.png", True]],

|

| 151 |

+

inputs = [captioning_input, detailed_captioning_checkbox],

|

| 152 |

outputs = [captioning_output],

|

| 153 |

fn=caption,

|

| 154 |

cache_examples=True,

|

| 155 |

label='Click on any Examples below to get captioning results quickly 👇'

|

| 156 |

)

|

| 157 |

|

| 158 |

+

captioning_btn.click(fn=caption, inputs=[captioning_input, detailed_captioning_checkbox], outputs=captioning_output)

|

| 159 |

vqa_btn.click(fn=predict, inputs=[image_input, text_input], outputs=vqa_output)

|

| 160 |

|

| 161 |

+

with gr.Tab("Find Text in Screenshots"):

|

| 162 |

+

gr.Markdown("This demo is designed to locate text in desktop screenshots. Please, ensure to upload images of 1920x1080 for best results!")

|

| 163 |

+

with gr.Row():

|

| 164 |

+

with gr.Column():

|

| 165 |

+

localization_input = gr.Image(label="Upload your Image", type="pil")

|

| 166 |

+

query_input = gr.Textbox(label="Text to find")

|

| 167 |

+

localization_btn = gr.Button("Locate Text")

|

| 168 |

+

with gr.Column():

|

| 169 |

+

with gr.Row(height=800):

|

| 170 |

+

localization_output = gr.AnnotatedImage(label="Text Position")

|

| 171 |

+

|

| 172 |

+

gr.Examples(

|

| 173 |

+

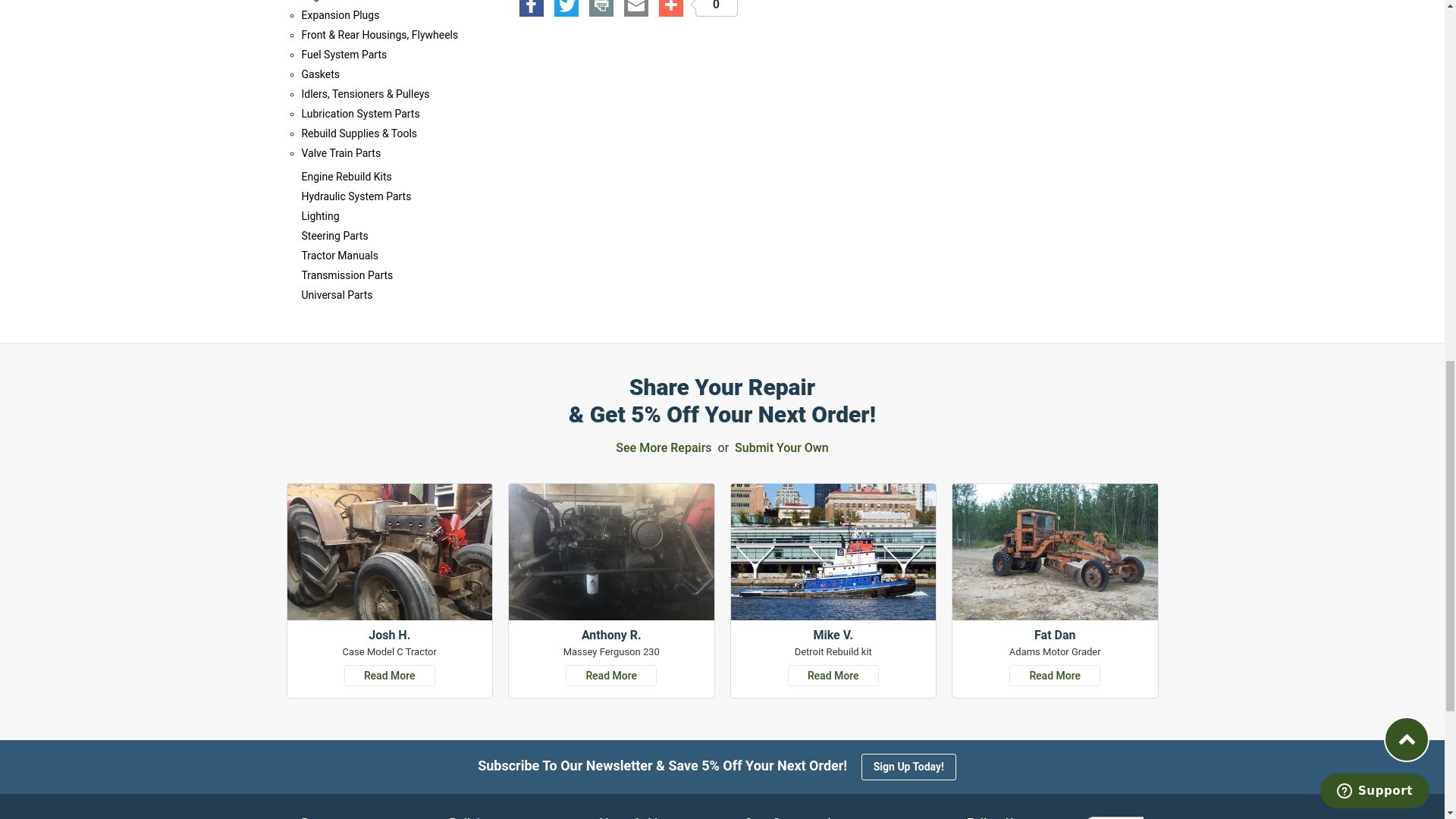

[["assets/localization_example_1.jpeg", "Share your repair"]],

|

| 174 |

+

inputs = [localization_input, query_input],

|

| 175 |

+

outputs = [localization_output],

|

| 176 |

+

fn=localize,

|

| 177 |

+

cache_examples=True,

|

| 178 |

+

label='Click on any Examples below to get localization results quickly 👇'

|

| 179 |

+

)

|

| 180 |

+

|

| 181 |

+

localization_btn.click(fn=localize, inputs=[localization_input, query_input], outputs=localization_output)

|

| 182 |

|

| 183 |

demo.launch(server_name="0.0.0.0")

|

assets/localization_example_1.jpeg

ADDED

|