Spaces:

Runtime error

Runtime error

Upload 68 files

Browse filesThis view is limited to 50 files because it contains too many changes.

See raw diff

- .gitattributes +5 -0

- Dockerfile +25 -0

- LICENSE +21 -0

- README.md +288 -11

- app.py +239 -0

- assets/BBOX_SHIFT.md +26 -0

- assets/demo/man/man.png +3 -0

- assets/demo/monalisa/monalisa.png +0 -0

- assets/demo/musk/musk.png +0 -0

- assets/demo/sit/sit.jpeg +0 -0

- assets/demo/sun1/sun.png +0 -0

- assets/demo/sun2/sun.png +0 -0

- assets/demo/video1/video1.png +0 -0

- assets/demo/yongen/yongen.jpeg +0 -0

- assets/figs/landmark_ref.png +0 -0

- assets/figs/musetalk_arc.jpg +0 -0

- configs/inference/test.yaml +10 -0

- data/audio/sun.wav +3 -0

- data/audio/yongen.wav +3 -0

- data/video/sun.mp4 +3 -0

- data/video/yongen.mp4 +3 -0

- musetalk/models/unet.py +47 -0

- musetalk/models/vae.py +148 -0

- musetalk/utils/__init__.py +5 -0

- musetalk/utils/blending.py +59 -0

- musetalk/utils/dwpose/default_runtime.py +54 -0

- musetalk/utils/dwpose/rtmpose-l_8xb32-270e_coco-ubody-wholebody-384x288.py +257 -0

- musetalk/utils/face_detection/README.md +1 -0

- musetalk/utils/face_detection/__init__.py +7 -0

- musetalk/utils/face_detection/api.py +240 -0

- musetalk/utils/face_detection/detection/__init__.py +1 -0

- musetalk/utils/face_detection/detection/core.py +130 -0

- musetalk/utils/face_detection/detection/sfd/__init__.py +1 -0

- musetalk/utils/face_detection/detection/sfd/bbox.py +129 -0

- musetalk/utils/face_detection/detection/sfd/detect.py +114 -0

- musetalk/utils/face_detection/detection/sfd/net_s3fd.py +129 -0

- musetalk/utils/face_detection/detection/sfd/sfd_detector.py +59 -0

- musetalk/utils/face_detection/models.py +261 -0

- musetalk/utils/face_detection/utils.py +313 -0

- musetalk/utils/face_parsing/__init__.py +56 -0

- musetalk/utils/face_parsing/model.py +283 -0

- musetalk/utils/face_parsing/resnet.py +109 -0

- musetalk/utils/preprocessing.py +113 -0

- musetalk/utils/utils.py +61 -0

- musetalk/whisper/audio2feature.py +124 -0

- musetalk/whisper/whisper/__init__.py +116 -0

- musetalk/whisper/whisper/__main__.py +4 -0

- musetalk/whisper/whisper/assets/gpt2/merges.txt +0 -0

- musetalk/whisper/whisper/assets/gpt2/special_tokens_map.json +1 -0

- musetalk/whisper/whisper/assets/gpt2/tokenizer_config.json +1 -0

.gitattributes

CHANGED

|

@@ -33,3 +33,8 @@ saved_model/**/* filter=lfs diff=lfs merge=lfs -text

|

|

| 33 |

*.zip filter=lfs diff=lfs merge=lfs -text

|

| 34 |

*.zst filter=lfs diff=lfs merge=lfs -text

|

| 35 |

*tfevents* filter=lfs diff=lfs merge=lfs -text

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

| 33 |

*.zip filter=lfs diff=lfs merge=lfs -text

|

| 34 |

*.zst filter=lfs diff=lfs merge=lfs -text

|

| 35 |

*tfevents* filter=lfs diff=lfs merge=lfs -text

|

| 36 |

+

assets/demo/man/man.png filter=lfs diff=lfs merge=lfs -text

|

| 37 |

+

data/audio/sun.wav filter=lfs diff=lfs merge=lfs -text

|

| 38 |

+

data/audio/yongen.wav filter=lfs diff=lfs merge=lfs -text

|

| 39 |

+

data/video/sun.mp4 filter=lfs diff=lfs merge=lfs -text

|

| 40 |

+

data/video/yongen.mp4 filter=lfs diff=lfs merge=lfs -text

|

Dockerfile

ADDED

|

@@ -0,0 +1,25 @@

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

| 1 |

+

FROM anchorxia/musev:1.0.0

|

| 2 |

+

|

| 3 |

+

#MAINTAINER 维护者信息

|

| 4 |

+

LABEL MAINTAINER="anchorxia"

|

| 5 |

+

LABEL Email="anchorxia@tencent.com"

|

| 6 |

+

LABEL Description="musev gpu runtime image, base docker is pytorch/pytorch:2.0.1-cuda11.7-cudnn8-devel"

|

| 7 |

+

ARG DEBIAN_FRONTEND=noninteractive

|

| 8 |

+

|

| 9 |

+

USER root

|

| 10 |

+

|

| 11 |

+

SHELL ["/bin/bash", "--login", "-c"]

|

| 12 |

+

|

| 13 |

+

RUN . /opt/conda/etc/profile.d/conda.sh \

|

| 14 |

+

&& echo "source activate musev" >> ~/.bashrc \

|

| 15 |

+

&& conda activate musev \

|

| 16 |

+

&& conda env list \

|

| 17 |

+

&& pip install -r requirements.txt \

|

| 18 |

+

&& pip install --no-cache-dir -U openmim \

|

| 19 |

+

&& mim install mmengine \

|

| 20 |

+

&& mim install "mmcv>=2.0.1" \

|

| 21 |

+

&& mim install "mmdet>=3.1.0" \

|

| 22 |

+

&& mim install "mmpose>=1.1.0" \

|

| 23 |

+

|

| 24 |

+

USER root

|

| 25 |

+

|

LICENSE

ADDED

|

@@ -0,0 +1,21 @@

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

| 1 |

+

MIT License

|

| 2 |

+

|

| 3 |

+

Copyright (c) 2024 TMElyralab

|

| 4 |

+

|

| 5 |

+

Permission is hereby granted, free of charge, to any person obtaining a copy

|

| 6 |

+

of this software and associated documentation files (the "Software"), to deal

|

| 7 |

+

in the Software without restriction, including without limitation the rights

|

| 8 |

+

to use, copy, modify, merge, publish, distribute, sublicense, and/or sell

|

| 9 |

+

copies of the Software, and to permit persons to whom the Software is

|

| 10 |

+

furnished to do so, subject to the following conditions:

|

| 11 |

+

|

| 12 |

+

The above copyright notice and this permission notice shall be included in all

|

| 13 |

+

copies or substantial portions of the Software.

|

| 14 |

+

|

| 15 |

+

THE SOFTWARE IS PROVIDED "AS IS", WITHOUT WARRANTY OF ANY KIND, EXPRESS OR

|

| 16 |

+

IMPLIED, INCLUDING BUT NOT LIMITED TO THE WARRANTIES OF MERCHANTABILITY,

|

| 17 |

+

FITNESS FOR A PARTICULAR PURPOSE AND NONINFRINGEMENT. IN NO EVENT SHALL THE

|

| 18 |

+

AUTHORS OR COPYRIGHT HOLDERS BE LIABLE FOR ANY CLAIM, DAMAGES OR OTHER

|

| 19 |

+

LIABILITY, WHETHER IN AN ACTION OF CONTRACT, TORT OR OTHERWISE, ARISING FROM,

|

| 20 |

+

OUT OF OR IN CONNECTION WITH THE SOFTWARE OR THE USE OR OTHER DEALINGS IN THE

|

| 21 |

+

SOFTWARE.

|

README.md

CHANGED

|

@@ -1,11 +1,288 @@

|

|

| 1 |

-

|

| 2 |

-

|

| 3 |

-

|

| 4 |

-

|

| 5 |

-

|

| 6 |

-

|

| 7 |

-

|

| 8 |

-

|

| 9 |

-

|

| 10 |

-

|

| 11 |

-

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

| 1 |

+

# MuseTalk

|

| 2 |

+

|

| 3 |

+

MuseTalk: Real-Time High Quality Lip Synchronization with Latent Space Inpainting

|

| 4 |

+

</br>

|

| 5 |

+

Yue Zhang <sup>\*</sup>,

|

| 6 |

+

Minhao Liu<sup>\*</sup>,

|

| 7 |

+

Zhaokang Chen,

|

| 8 |

+

Bin Wu<sup>†</sup>,

|

| 9 |

+

Yingjie He,

|

| 10 |

+

Chao Zhan,

|

| 11 |

+

Wenjiang Zhou

|

| 12 |

+

(<sup>*</sup>Equal Contribution, <sup>†</sup>Corresponding Author, benbinwu@tencent.com)

|

| 13 |

+

|

| 14 |

+

**[github](https://github.com/TMElyralab/MuseTalk)** **[huggingface](https://huggingface.co/TMElyralab/MuseTalk)** **Project (comming soon)** **Technical report (comming soon)**

|

| 15 |

+

|

| 16 |

+

We introduce `MuseTalk`, a **real-time high quality** lip-syncing model (30fps+ on an NVIDIA Tesla V100). MuseTalk can be applied with input videos, e.g., generated by [MuseV](https://github.com/TMElyralab/MuseV), as a complete virtual human solution.

|

| 17 |

+

|

| 18 |

+

# Overview

|

| 19 |

+

`MuseTalk` is a real-time high quality audio-driven lip-syncing model trained in the latent space of `ft-mse-vae`, which

|

| 20 |

+

|

| 21 |

+

1. modifies an unseen face according to the input audio, with a size of face region of `256 x 256`.

|

| 22 |

+

1. supports audio in various languages, such as Chinese, English, and Japanese.

|

| 23 |

+

1. supports real-time inference with 30fps+ on an NVIDIA Tesla V100.

|

| 24 |

+

1. supports modification of the center point of the face region proposes, which **SIGNIFICANTLY** affects generation results.

|

| 25 |

+

1. checkpoint available trained on the HDTF dataset.

|

| 26 |

+

1. training codes (comming soon).

|

| 27 |

+

|

| 28 |

+

# News

|

| 29 |

+

- [04/02/2024] Released MuseTalk project and pretrained models.

|

| 30 |

+

|

| 31 |

+

## Model

|

| 32 |

+

|

| 33 |

+

MuseTalk was trained in latent spaces, where the images were encoded by a freezed VAE. The audio was encoded by a freezed `whisper-tiny` model. The architecture of the generation network was borrowed from the UNet of the `stable-diffusion-v1-4`, where the audio embeddings were fused to the image embeddings by cross-attention.

|

| 34 |

+

|

| 35 |

+

## Cases

|

| 36 |

+

### MuseV + MuseTalk make human photos alive!

|

| 37 |

+

<table class="center">

|

| 38 |

+

<tr style="font-weight: bolder;text-align:center;">

|

| 39 |

+

<td width="33%">Image</td>

|

| 40 |

+

<td width="33%">MuseV</td>

|

| 41 |

+

<td width="33%">+MuseTalk</td>

|

| 42 |

+

</tr>

|

| 43 |

+

<tr>

|

| 44 |

+

<td>

|

| 45 |

+

<img src=assets/demo/musk/musk.png width="95%">

|

| 46 |

+

</td>

|

| 47 |

+

<td >

|

| 48 |

+

<video src=https://github.com/TMElyralab/MuseTalk/assets/163980830/4a4bb2d1-9d14-4ca9-85c8-7f19c39f712e controls preload></video>

|

| 49 |

+

</td>

|

| 50 |

+

<td >

|

| 51 |

+

<video src=https://github.com/TMElyralab/MuseTalk/assets/163980830/b2a879c2-e23a-4d39-911d-51f0343218e4 controls preload></video>

|

| 52 |

+

</td>

|

| 53 |

+

</tr>

|

| 54 |

+

<tr>

|

| 55 |

+

<td>

|

| 56 |

+

<img src=assets/demo/yongen/yongen.jpeg width="95%">

|

| 57 |

+

</td>

|

| 58 |

+

<td >

|

| 59 |

+

<video src=https://github.com/TMElyralab/MuseTalk/assets/163980830/57ef9dee-a9fd-4dc8-839b-3fbbbf0ff3f4 controls preload></video>

|

| 60 |

+

</td>

|

| 61 |

+

<td >

|

| 62 |

+

<video src=https://github.com/TMElyralab/MuseTalk/assets/163980830/94d8dcba-1bcd-4b54-9d1d-8b6fc53228f0 controls preload></video>

|

| 63 |

+

</td>

|

| 64 |

+

</tr>

|

| 65 |

+

<tr>

|

| 66 |

+

<td>

|

| 67 |

+

<img src=assets/demo/sit/sit.jpeg width="95%">

|

| 68 |

+

</td>

|

| 69 |

+

<td >

|

| 70 |

+

<video src=https://github.com/TMElyralab/MuseTalk/assets/163980830/5fbab81b-d3f2-4c75-abb5-14c76e51769e controls preload></video>

|

| 71 |

+

</td>

|

| 72 |

+

<td >

|

| 73 |

+

<video src=https://github.com/TMElyralab/MuseTalk/assets/163980830/f8100f4a-3df8-4151-8de2-291b09269f66 controls preload></video>

|

| 74 |

+

</td>

|

| 75 |

+

</tr>

|

| 76 |

+

<tr>

|

| 77 |

+

<td>

|

| 78 |

+

<img src=assets/demo/man/man.png width="95%">

|

| 79 |

+

</td>

|

| 80 |

+

<td >

|

| 81 |

+

<video src=https://github.com/TMElyralab/MuseTalk/assets/163980830/a6e7d431-5643-4745-9868-8b423a454153 controls preload></video>

|

| 82 |

+

</td>

|

| 83 |

+

<td >

|

| 84 |

+

<video src=https://github.com/TMElyralab/MuseTalk/assets/163980830/6ccf7bc7-cb48-42de-85bd-076d5ee8a623 controls preload></video>

|

| 85 |

+

</td>

|

| 86 |

+

</tr>

|

| 87 |

+

<tr>

|

| 88 |

+

<td>

|

| 89 |

+

<img src=assets/demo/monalisa/monalisa.png width="95%">

|

| 90 |

+

</td>

|

| 91 |

+

<td >

|

| 92 |

+

<video src=https://github.com/TMElyralab/MuseTalk/assets/163980830/1568f604-a34f-4526-a13a-7d282aa2e773 controls preload></video>

|

| 93 |

+

</td>

|

| 94 |

+

<td >

|

| 95 |

+

<video src=https://github.com/TMElyralab/MuseTalk/assets/163980830/a40784fc-a885-4c1f-9b7e-8f87b7caf4e0 controls preload></video>

|

| 96 |

+

</td>

|

| 97 |

+

</tr>

|

| 98 |

+

<tr>

|

| 99 |

+

<td>

|

| 100 |

+

<img src=assets/demo/sun1/sun.png width="95%">

|

| 101 |

+

</td>

|

| 102 |

+

<td >

|

| 103 |

+

<video src=https://github.com/TMElyralab/MuseTalk/assets/163980830/37a3a666-7b90-4244-8d3a-058cb0e44107 controls preload></video>

|

| 104 |

+

</td>

|

| 105 |

+

<td >

|

| 106 |

+

<video src=https://github.com/TMElyralab/MuseTalk/assets/163980830/172f4ff1-d432-45bd-a5a7-a07dec33a26b controls preload></video>

|

| 107 |

+

</td>

|

| 108 |

+

</tr>

|

| 109 |

+

<tr>

|

| 110 |

+

<td>

|

| 111 |

+

<img src=assets/demo/sun2/sun.png width="95%">

|

| 112 |

+

</td>

|

| 113 |

+

<td >

|

| 114 |

+

<video src=https://github.com/TMElyralab/MuseTalk/assets/163980830/37a3a666-7b90-4244-8d3a-058cb0e44107 controls preload></video>

|

| 115 |

+

</td>

|

| 116 |

+

<td >

|

| 117 |

+

<video src=https://github.com/TMElyralab/MuseTalk/assets/163980830/85a6873d-a028-4cce-af2b-6c59a1f2971d controls preload></video>

|

| 118 |

+

</td>

|

| 119 |

+

</tr>

|

| 120 |

+

</table >

|

| 121 |

+

|

| 122 |

+

* The character of the last two rows, `Xinying Sun`, is a supermodel KOL. You can follow her on [douyin](https://www.douyin.com/user/MS4wLjABAAAAWDThbMPN_6Xmm_JgXexbOii1K-httbu2APdG8DvDyM8).

|

| 123 |

+

|

| 124 |

+

## Video dubbing

|

| 125 |

+

<table class="center">

|

| 126 |

+

<tr style="font-weight: bolder;text-align:center;">

|

| 127 |

+

<td width="70%">MuseTalk</td>

|

| 128 |

+

<td width="30%">Original videos</td>

|

| 129 |

+

</tr>

|

| 130 |

+

<tr>

|

| 131 |

+

<td>

|

| 132 |

+

<video src=https://github.com/TMElyralab/MuseTalk/assets/163980830/4d7c5fa1-3550-4d52-8ed2-52f158150f24 controls preload></video>

|

| 133 |

+

</td>

|

| 134 |

+

<td>

|

| 135 |

+

<a href="//www.bilibili.com/video/BV1wT411b7HU">Link</a>

|

| 136 |

+

<href src=""></href>

|

| 137 |

+

</td>

|

| 138 |

+

</tr>

|

| 139 |

+

</table>

|

| 140 |

+

|

| 141 |

+

* For video dubbing, we applied a self-developed tool which can identify the talking person.

|

| 142 |

+

|

| 143 |

+

## Some interesting videos!

|

| 144 |

+

<table class="center">

|

| 145 |

+

<tr style="font-weight: bolder;text-align:center;">

|

| 146 |

+

<td width="50%">Image</td>

|

| 147 |

+

<td width="50%">MuseV + MuseTalk</td>

|

| 148 |

+

</tr>

|

| 149 |

+

<tr>

|

| 150 |

+

<td>

|

| 151 |

+

<img src=assets/demo/video1/video1.png width="95%">

|

| 152 |

+

</td>

|

| 153 |

+

<td>

|

| 154 |

+

<video src=https://github.com/TMElyralab/MuseTalk/assets/163980830/1f02f9c6-8b98-475e-86b8-82ebee82fe0d controls preload></video>

|

| 155 |

+

</td>

|

| 156 |

+

</tr>

|

| 157 |

+

</table>

|

| 158 |

+

|

| 159 |

+

# TODO:

|

| 160 |

+

- [x] trained models and inference codes.

|

| 161 |

+

- [ ] technical report.

|

| 162 |

+

- [ ] training codes.

|

| 163 |

+

- [ ] online UI.

|

| 164 |

+

- [ ] a better model (may take longer).

|

| 165 |

+

|

| 166 |

+

|

| 167 |

+

# Getting Started

|

| 168 |

+

We provide a detailed tutorial about the installation and the basic usage of MuseTalk for new users:

|

| 169 |

+

## Installation

|

| 170 |

+

To prepare the Python environment and install additional packages such as opencv, diffusers, mmcv, etc., please follow the steps below:

|

| 171 |

+

### Build environment

|

| 172 |

+

|

| 173 |

+

We recommend a python version >=3.10 and cuda version =11.7. Then build environment as follows:

|

| 174 |

+

|

| 175 |

+

```shell

|

| 176 |

+

pip install -r requirements.txt

|

| 177 |

+

```

|

| 178 |

+

|

| 179 |

+

### mmlab packages

|

| 180 |

+

```bash

|

| 181 |

+

pip install --no-cache-dir -U openmim

|

| 182 |

+

mim install mmengine

|

| 183 |

+

mim install "mmcv>=2.0.1"

|

| 184 |

+

mim install "mmdet>=3.1.0"

|

| 185 |

+

mim install "mmpose>=1.1.0"

|

| 186 |

+

```

|

| 187 |

+

|

| 188 |

+

### Download ffmpeg-static

|

| 189 |

+

Download the ffmpeg-static and

|

| 190 |

+

```

|

| 191 |

+

export FFMPEG_PATH=/path/to/ffmpeg

|

| 192 |

+

```

|

| 193 |

+

for example:

|

| 194 |

+

```

|

| 195 |

+

export FFMPEG_PATH=/musetalk/ffmpeg-4.4-amd64-static

|

| 196 |

+

```

|

| 197 |

+

### Download weights

|

| 198 |

+

You can download weights manually as follows:

|

| 199 |

+

|

| 200 |

+

1. Download our trained [weights](https://huggingface.co/TMElyralab/MuseTalk).

|

| 201 |

+

|

| 202 |

+

2. Download the weights of other components:

|

| 203 |

+

- [sd-vae-ft-mse](https://huggingface.co/stabilityai/sd-vae-ft-mse)

|

| 204 |

+

- [whisper](https://openaipublic.azureedge.net/main/whisper/models/65147644a518d12f04e32d6f3b26facc3f8dd46e5390956a9424a650c0ce22b9/tiny.pt)

|

| 205 |

+

- [dwpose](https://huggingface.co/yzd-v/DWPose/tree/main)

|

| 206 |

+

- [face-parse-bisent](https://github.com/zllrunning/face-parsing.PyTorch)

|

| 207 |

+

- [resnet18](https://download.pytorch.org/models/resnet18-5c106cde.pth)

|

| 208 |

+

|

| 209 |

+

|

| 210 |

+

Finally, these weights should be organized in `models` as follows:

|

| 211 |

+

```

|

| 212 |

+

./models/

|

| 213 |

+

├── musetalk

|

| 214 |

+

│ └── musetalk.json

|

| 215 |

+

│ └── pytorch_model.bin

|

| 216 |

+

├── dwpose

|

| 217 |

+

│ └── dw-ll_ucoco_384.pth

|

| 218 |

+

├── face-parse-bisent

|

| 219 |

+

│ ├── 79999_iter.pth

|

| 220 |

+

│ └── resnet18-5c106cde.pth

|

| 221 |

+

├── sd-vae-ft-mse

|

| 222 |

+

│ ├── config.json

|

| 223 |

+

│ └── diffusion_pytorch_model.bin

|

| 224 |

+

└── whisper

|

| 225 |

+

└── tiny.pt

|

| 226 |

+

```

|

| 227 |

+

## Quickstart

|

| 228 |

+

|

| 229 |

+

### Inference

|

| 230 |

+

Here, we provide the inference script.

|

| 231 |

+

```

|

| 232 |

+

python -m scripts.inference --inference_config configs/inference/test.yaml

|

| 233 |

+

```

|

| 234 |

+

configs/inference/test.yaml is the path to the inference configuration file, including video_path and audio_path.

|

| 235 |

+

The video_path should be either a video file or a directory of images.

|

| 236 |

+

|

| 237 |

+

You are recommended to input video with `25fps`, the same fps used when training the model. If your video is far less than 25fps, you are recommended to apply frame interpolation or directly convert the video to 25fps using ffmpeg.

|

| 238 |

+

|

| 239 |

+

#### Use of bbox_shift to have adjustable results

|

| 240 |

+

:mag_right: We have found that upper-bound of the mask has an important impact on mouth openness. Thus, to control the mask region, we suggest using the `bbox_shift` parameter. Positive values (moving towards the lower half) increase mouth openness, while negative values (moving towards the upper half) decrease mouth openness.

|

| 241 |

+

|

| 242 |

+

You can start by running with the default configuration to obtain the adjustable value range, and then re-run the script within this range.

|

| 243 |

+

|

| 244 |

+

For example, in the case of `Xinying Sun`, after running the default configuration, it shows that the adjustable value rage is [-9, 9]. Then, to decrease the mouth openness, we set the value to be `-7`.

|

| 245 |

+

```

|

| 246 |

+

python -m scripts.inference --inference_config configs/inference/test.yaml --bbox_shift -7

|

| 247 |

+

```

|

| 248 |

+

:pushpin: More technical details can be found in [bbox_shift](assets/BBOX_SHIFT.md).

|

| 249 |

+

|

| 250 |

+

#### Combining MuseV and MuseTalk

|

| 251 |

+

|

| 252 |

+

As a complete solution to virtual human generation, you are suggested to first apply [MuseV](https://github.com/TMElyralab/MuseV) to generate a video (text-to-video, image-to-video or pose-to-video) by referring [this](https://github.com/TMElyralab/MuseV?tab=readme-ov-file#text2video). Frame interpolation is suggested to increase frame rate. Then, you can use `MuseTalk` to generate a lip-sync video by referring [this](https://github.com/TMElyralab/MuseTalk?tab=readme-ov-file#inference).

|

| 253 |

+

|

| 254 |

+

# Note

|

| 255 |

+

|

| 256 |

+

If you want to launch online video chats, you are suggested to generate videos using MuseV and apply necessary pre-processing such as face detection and face parsing in advance. During online chatting, only UNet and the VAE decoder are involved, which makes MuseTalk real-time.

|

| 257 |

+

|

| 258 |

+

|

| 259 |

+

# Acknowledgement

|

| 260 |

+

1. We thank open-source components like [whisper](https://github.com/openai/whisper), [dwpose](https://github.com/IDEA-Research/DWPose), [face-alignment](https://github.com/1adrianb/face-alignment), [face-parsing](https://github.com/zllrunning/face-parsing.PyTorch), [S3FD](https://github.com/yxlijun/S3FD.pytorch).

|

| 261 |

+

1. MuseTalk has referred much to [diffusers](https://github.com/huggingface/diffusers) and [isaacOnline/whisper](https://github.com/isaacOnline/whisper/tree/extract-embeddings).

|

| 262 |

+

1. MuseTalk has been built on [HDTF](https://github.com/MRzzm/HDTF) datasets.

|

| 263 |

+

|

| 264 |

+

Thanks for open-sourcing!

|

| 265 |

+

|

| 266 |

+

# Limitations

|

| 267 |

+

- Resolution: Though MuseTalk uses a face region size of 256 x 256, which make it better than other open-source methods, it has not yet reached the theoretical resolution bound. We will continue to deal with this problem.

|

| 268 |

+

If you need higher resolution, you could apply super resolution models such as [GFPGAN](https://github.com/TencentARC/GFPGAN) in combination with MuseTalk.

|

| 269 |

+

|

| 270 |

+

- Identity preservation: Some details of the original face are not well preserved, such as mustache, lip shape and color.

|

| 271 |

+

|

| 272 |

+

- Jitter: There exists some jitter as the current pipeline adopts single-frame generation.

|

| 273 |

+

|

| 274 |

+

# Citation

|

| 275 |

+

```bib

|

| 276 |

+

@article{musetalk,

|

| 277 |

+

title={MuseTalk: Real-Time High Quality Lip Synchorization with Latent Space Inpainting},

|

| 278 |

+

author={Zhang, Yue and Liu, Minhao and Chen, Zhaokang and Wu, Bin and He, Yingjie and Zhan, Chao and Zhou, Wenjiang},

|

| 279 |

+

journal={arxiv},

|

| 280 |

+

year={2024}

|

| 281 |

+

}

|

| 282 |

+

```

|

| 283 |

+

# Disclaimer/License

|

| 284 |

+

1. `code`: The code of MuseTalk is released under the MIT License. There is no limitation for both academic and commercial usage.

|

| 285 |

+

1. `model`: The trained model are available for any purpose, even commercially.

|

| 286 |

+

1. `other opensource model`: Other open-source models used must comply with their license, such as `whisper`, `ft-mse-vae`, `dwpose`, `S3FD`, etc..

|

| 287 |

+

1. The testdata are collected from internet, which are available for non-commercial research purposes only.

|

| 288 |

+

1. `AIGC`: This project strives to impact the domain of AI-driven video generation positively. Users are granted the freedom to create videos using this tool, but they are expected to comply with local laws and utilize it responsibly. The developers do not assume any responsibility for potential misuse by users.

|

app.py

ADDED

|

@@ -0,0 +1,239 @@

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

| 1 |

+

import os

|

| 2 |

+

import time

|

| 3 |

+

import pdb

|

| 4 |

+

|

| 5 |

+

import gradio as gr

|

| 6 |

+

import spaces

|

| 7 |

+

import numpy as np

|

| 8 |

+

import sys

|

| 9 |

+

import subprocess

|

| 10 |

+

|

| 11 |

+

from huggingface_hub import snapshot_download

|

| 12 |

+

|

| 13 |

+

import argparse

|

| 14 |

+

import os

|

| 15 |

+

from omegaconf import OmegaConf

|

| 16 |

+

import numpy as np

|

| 17 |

+

import cv2

|

| 18 |

+

import torch

|

| 19 |

+

import glob

|

| 20 |

+

import pickle

|

| 21 |

+

from tqdm import tqdm

|

| 22 |

+

import copy

|

| 23 |

+

from argparse import Namespace

|

| 24 |

+

|

| 25 |

+

from musetalk.utils.utils import get_file_type,get_video_fps,datagen

|

| 26 |

+

from musetalk.utils.preprocessing import get_landmark_and_bbox,read_imgs,coord_placeholder

|

| 27 |

+

from musetalk.utils.blending import get_image

|

| 28 |

+

from musetalk.utils.utils import load_all_model

|

| 29 |

+

import shutil

|

| 30 |

+

|

| 31 |

+

|

| 32 |

+

|

| 33 |

+

ProjectDir = os.path.abspath(os.path.dirname(__file__))

|

| 34 |

+

CheckpointsDir = os.path.join(ProjectDir, "checkpoints")

|

| 35 |

+

|

| 36 |

+

def download_model():

|

| 37 |

+

if not os.path.exists(CheckpointsDir):

|

| 38 |

+

os.makedirs(CheckpointsDir)

|

| 39 |

+

print("Checkpoint Not Downloaded, start downloading...")

|

| 40 |

+

tic = time.time()

|

| 41 |

+

snapshot_download(

|

| 42 |

+

repo_id="TMElyralab/MuseTalk",

|

| 43 |

+

local_dir=CheckpointsDir,

|

| 44 |

+

max_workers=8,

|

| 45 |

+

local_dir_use_symlinks=True,

|

| 46 |

+

)

|

| 47 |

+

toc = time.time()

|

| 48 |

+

print(f"download cost {toc-tic} seconds")

|

| 49 |

+

else:

|

| 50 |

+

print("Already download the model.")

|

| 51 |

+

|

| 52 |

+

@spaces.GPU(duration=600)

|

| 53 |

+

@torch.no_grad()

|

| 54 |

+

def inference(audio_path,video_path,bbox_shift,progress=gr.Progress(track_tqdm=True)):

|

| 55 |

+

args_dict={"result_dir":'./results', "fps":25, "batch_size":8, "output_vid_name":'', "use_saved_coord":False}#same with inferenece script

|

| 56 |

+

args = Namespace(**args_dict)

|

| 57 |

+

|

| 58 |

+

input_basename = os.path.basename(video_path).split('.')[0]

|

| 59 |

+

audio_basename = os.path.basename(audio_path).split('.')[0]

|

| 60 |

+

output_basename = f"{input_basename}_{audio_basename}"

|

| 61 |

+

result_img_save_path = os.path.join(args.result_dir, output_basename) # related to video & audio inputs

|

| 62 |

+

crop_coord_save_path = os.path.join(result_img_save_path, input_basename+".pkl") # only related to video input

|

| 63 |

+

os.makedirs(result_img_save_path,exist_ok =True)

|

| 64 |

+

|

| 65 |

+

if args.output_vid_name=="":

|

| 66 |

+

output_vid_name = os.path.join(args.result_dir, output_basename+".mp4")

|

| 67 |

+

else:

|

| 68 |

+

output_vid_name = os.path.join(args.result_dir, args.output_vid_name)

|

| 69 |

+

############################################## extract frames from source video ##############################################

|

| 70 |

+

if get_file_type(video_path)=="video":

|

| 71 |

+

save_dir_full = os.path.join(args.result_dir, input_basename)

|

| 72 |

+

os.makedirs(save_dir_full,exist_ok = True)

|

| 73 |

+

cmd = f"ffmpeg -v fatal -i {video_path} -start_number 0 {save_dir_full}/%08d.png"

|

| 74 |

+

os.system(cmd)

|

| 75 |

+

input_img_list = sorted(glob.glob(os.path.join(save_dir_full, '*.[jpJP][pnPN]*[gG]')))

|

| 76 |

+

fps = get_video_fps(video_path)

|

| 77 |

+

else: # input img folder

|

| 78 |

+

input_img_list = glob.glob(os.path.join(video_path, '*.[jpJP][pnPN]*[gG]'))

|

| 79 |

+

input_img_list = sorted(input_img_list, key=lambda x: int(os.path.splitext(os.path.basename(x))[0]))

|

| 80 |

+

fps = args.fps

|

| 81 |

+

#print(input_img_list)

|

| 82 |

+

############################################## extract audio feature ##############################################

|

| 83 |

+

whisper_feature = audio_processor.audio2feat(audio_path)

|

| 84 |

+

whisper_chunks = audio_processor.feature2chunks(feature_array=whisper_feature,fps=fps)

|

| 85 |

+

############################################## preprocess input image ##############################################

|

| 86 |

+

if os.path.exists(crop_coord_save_path) and args.use_saved_coord:

|

| 87 |

+

print("using extracted coordinates")

|

| 88 |

+

with open(crop_coord_save_path,'rb') as f:

|

| 89 |

+

coord_list = pickle.load(f)

|

| 90 |

+

frame_list = read_imgs(input_img_list)

|

| 91 |

+

else:

|

| 92 |

+

print("extracting landmarks...time consuming")

|

| 93 |

+

coord_list, frame_list = get_landmark_and_bbox(input_img_list, bbox_shift)

|

| 94 |

+

with open(crop_coord_save_path, 'wb') as f:

|

| 95 |

+

pickle.dump(coord_list, f)

|

| 96 |

+

|

| 97 |

+

i = 0

|

| 98 |

+

input_latent_list = []

|

| 99 |

+

for bbox, frame in zip(coord_list, frame_list):

|

| 100 |

+

if bbox == coord_placeholder:

|

| 101 |

+

continue

|

| 102 |

+

x1, y1, x2, y2 = bbox

|

| 103 |

+

crop_frame = frame[y1:y2, x1:x2]

|

| 104 |

+

crop_frame = cv2.resize(crop_frame,(256,256),interpolation = cv2.INTER_LANCZOS4)

|

| 105 |

+

latents = vae.get_latents_for_unet(crop_frame)

|

| 106 |

+

input_latent_list.append(latents)

|

| 107 |

+

|

| 108 |

+

# to smooth the first and the last frame

|

| 109 |

+

frame_list_cycle = frame_list + frame_list[::-1]

|

| 110 |

+

coord_list_cycle = coord_list + coord_list[::-1]

|

| 111 |

+

input_latent_list_cycle = input_latent_list + input_latent_list[::-1]

|

| 112 |

+

############################################## inference batch by batch ##############################################

|

| 113 |

+

print("start inference")

|

| 114 |

+

video_num = len(whisper_chunks)

|

| 115 |

+

batch_size = args.batch_size

|

| 116 |

+

gen = datagen(whisper_chunks,input_latent_list_cycle,batch_size)

|

| 117 |

+

res_frame_list = []

|

| 118 |

+

for i, (whisper_batch,latent_batch) in enumerate(tqdm(gen,total=int(np.ceil(float(video_num)/batch_size)))):

|

| 119 |

+

|

| 120 |

+

tensor_list = [torch.FloatTensor(arr) for arr in whisper_batch]

|

| 121 |

+

audio_feature_batch = torch.stack(tensor_list).to(unet.device) # torch, B, 5*N,384

|

| 122 |

+

audio_feature_batch = pe(audio_feature_batch)

|

| 123 |

+

|

| 124 |

+

pred_latents = unet.model(latent_batch, timesteps, encoder_hidden_states=audio_feature_batch).sample

|

| 125 |

+

recon = vae.decode_latents(pred_latents)

|

| 126 |

+

for res_frame in recon:

|

| 127 |

+

res_frame_list.append(res_frame)

|

| 128 |

+

|

| 129 |

+

############################################## pad to full image ##############################################

|

| 130 |

+

print("pad talking image to original video")

|

| 131 |

+

for i, res_frame in enumerate(tqdm(res_frame_list)):

|

| 132 |

+

bbox = coord_list_cycle[i%(len(coord_list_cycle))]

|

| 133 |

+

ori_frame = copy.deepcopy(frame_list_cycle[i%(len(frame_list_cycle))])

|

| 134 |

+

x1, y1, x2, y2 = bbox

|

| 135 |

+

try:

|

| 136 |

+

res_frame = cv2.resize(res_frame.astype(np.uint8),(x2-x1,y2-y1))

|

| 137 |

+

except:

|

| 138 |

+

# print(bbox)

|

| 139 |

+

continue

|

| 140 |

+

|

| 141 |

+

combine_frame = get_image(ori_frame,res_frame,bbox)

|

| 142 |

+

cv2.imwrite(f"{result_img_save_path}/{str(i).zfill(8)}.png",combine_frame)

|

| 143 |

+

|

| 144 |

+

cmd_img2video = f"ffmpeg -y -v fatal -r {fps} -f image2 -i {result_img_save_path}/%08d.png -vcodec libx264 -vf format=rgb24,scale=out_color_matrix=bt709,format=yuv420p -crf 18 temp.mp4"

|

| 145 |

+

print(cmd_img2video)

|

| 146 |

+

os.system(cmd_img2video)

|

| 147 |

+

|

| 148 |

+

cmd_combine_audio = f"ffmpeg -y -v fatal -i {audio_path} -i temp.mp4 {output_vid_name}"

|

| 149 |

+

print(cmd_combine_audio)

|

| 150 |

+

os.system(cmd_combine_audio)

|

| 151 |

+

|

| 152 |

+

os.remove("temp.mp4")

|

| 153 |

+

shutil.rmtree(result_img_save_path)

|

| 154 |

+

print(f"result is save to {output_vid_name}")

|

| 155 |

+

return output_vid_name

|

| 156 |

+

|

| 157 |

+

download_model() # for huggingface deployment.

|

| 158 |

+

|

| 159 |

+

|

| 160 |

+

# load model weights

|

| 161 |

+

audio_processor,vae,unet,pe = load_all_model()

|

| 162 |

+

device = torch.device("cuda" if torch.cuda.is_available() else "cpu")

|

| 163 |

+

timesteps = torch.tensor([0], device=device)

|

| 164 |

+

|

| 165 |

+

|

| 166 |

+

|

| 167 |

+

|

| 168 |

+

def check_video(video):

|

| 169 |

+

# Define the output video file name

|

| 170 |

+

dir_path, file_name = os.path.split(video)

|

| 171 |

+

if file_name.startswith("outputxxx_"):

|

| 172 |

+

return video

|

| 173 |

+

# Add the output prefix to the file name

|

| 174 |

+

output_file_name = "outputxxx_" + file_name

|

| 175 |

+

|

| 176 |

+

# Combine the directory path and the new file name

|

| 177 |

+

output_video = os.path.join(dir_path, output_file_name)

|

| 178 |

+

|

| 179 |

+

|

| 180 |

+

# Run the ffmpeg command to change the frame rate to 25fps

|

| 181 |

+

command = f"ffmpeg -i {video} -r 25 {output_video} -y"

|

| 182 |

+

subprocess.run(command, shell=True, check=True)

|

| 183 |

+

return output_video

|

| 184 |

+

|

| 185 |

+

|

| 186 |

+

|

| 187 |

+

|

| 188 |

+

css = """#input_img {max-width: 1024px !important} #output_vid {max-width: 1024px; max-height: 576px}"""

|

| 189 |

+

|

| 190 |

+

with gr.Blocks(css=css) as demo:

|

| 191 |

+

gr.Markdown(

|

| 192 |

+

"<div align='center'> <h1>MuseTalk: Real-Time High Quality Lip Synchronization with Latent Space Inpainting </span> </h1> \

|

| 193 |

+

<h2 style='font-weight: 450; font-size: 1rem; margin: 0rem'>\

|

| 194 |

+

</br>\

|

| 195 |

+

Yue Zhang <sup>\*</sup>,\

|

| 196 |

+

Minhao Liu<sup>\*</sup>,\

|

| 197 |

+

Zhaokang Chen,\

|

| 198 |

+

Bin Wu<sup>†</sup>,\

|

| 199 |

+

Yingjie He,\

|

| 200 |

+

Chao Zhan,\

|

| 201 |

+

Wenjiang Zhou\

|

| 202 |

+

(<sup>*</sup>Equal Contribution, <sup>†</sup>Corresponding Author, benbinwu@tencent.com)\

|

| 203 |

+

Lyra Lab, Tencent Music Entertainment\

|

| 204 |

+

</h2> \

|

| 205 |

+

<a style='font-size:18px;color: #000000' href='https://github.com/TMElyralab/MuseTalk'>[Github Repo]</a>\

|

| 206 |

+

<a style='font-size:18px;color: #000000' href='https://github.com/TMElyralab/MuseTalk'>[Huggingface]</a>\

|

| 207 |

+

<a style='font-size:18px;color: #000000' href=''> [Technical report(Coming Soon)] </a>\

|

| 208 |

+

<a style='font-size:18px;color: #000000' href=''> [Project Page(Coming Soon)] </a> </div>"

|

| 209 |

+

)

|

| 210 |

+

|

| 211 |

+

with gr.Row():

|

| 212 |

+

with gr.Column():

|

| 213 |

+

audio = gr.Audio(label="Driven Audio",type="filepath")

|

| 214 |

+

video = gr.Video(label="Reference Video")

|

| 215 |

+

bbox_shift = gr.Number(label="BBox_shift,[-9,9]", value=-1)

|

| 216 |

+

btn = gr.Button("Generate")

|

| 217 |

+

out1 = gr.Video()

|

| 218 |

+

|

| 219 |

+

video.change(

|

| 220 |

+

fn=check_video, inputs=[video], outputs=[video]

|

| 221 |

+

)

|

| 222 |

+

btn.click(

|

| 223 |

+

fn=inference,

|

| 224 |

+

inputs=[

|

| 225 |

+

audio,

|

| 226 |

+

video,

|

| 227 |

+

bbox_shift,

|

| 228 |

+

],

|

| 229 |

+

outputs=out1,

|

| 230 |

+

)

|

| 231 |

+

|

| 232 |

+

# Set the IP and port

|

| 233 |

+

ip_address = "0.0.0.0" # Replace with your desired IP address

|

| 234 |

+

port_number = 7860 # Replace with your desired port number

|

| 235 |

+

|

| 236 |

+

|

| 237 |

+

demo.queue().launch(

|

| 238 |

+

share=False , debug=True, server_name=ip_address, server_port=port_number

|

| 239 |

+

)

|

assets/BBOX_SHIFT.md

ADDED

|

@@ -0,0 +1,26 @@

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

| 1 |

+

## Why is there a "bbox_shift" parameter?

|

| 2 |

+

When processing training data, we utilize the combination of face detection results (bbox) and facial landmarks to determine the region of the head segmentation box. Specifically, we use the upper bound of the bbox as the upper boundary of the segmentation box, the maximum y value of the facial landmarks coordinates as the lower boundary of the segmentation box, and the minimum and maximum x values of the landmarks coordinates as the left and right boundaries of the segmentation box. By processing the dataset in this way, we can ensure the integrity of the face.

|

| 3 |

+

|

| 4 |

+

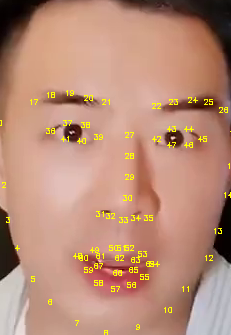

However, we have observed that the masked ratio on the face varies across different images due to the varying face shapes of subjects. Furthermore, we found that the upper-bound of the mask mainly lies close to the landmark28, landmark29 and landmark30 landmark points (as shown in Fig.1), which correspond to proportions of 15%, 63%, and 22% in the dataset, respectively.

|

| 5 |

+

|

| 6 |

+

During the inference process, we discover that as the upper-bound of the mask gets closer to the mouth (near landmark30), the audio features contribute more to lip movements. Conversely, as the upper-bound of the mask moves away from the mouth (near landmark28), the audio features contribute more to generating details of facial appearance. Hence, we define this characteristic as a parameter that can adjust the contribution of audio features to generating lip movements, which users can modify according to their specific needs in practical scenarios.

|

| 7 |

+

|

| 8 |

+

|

| 9 |

+

|

| 10 |

+

Fig.1. Facial landmarks

|

| 11 |

+

### Step 0.

|

| 12 |

+

Running with the default configuration to obtain the adjustable value range.

|

| 13 |

+

```

|

| 14 |

+

python -m scripts.inference --inference_config configs/inference/test.yaml

|

| 15 |

+

```

|

| 16 |

+

```

|

| 17 |

+

********************************************bbox_shift parameter adjustment**********************************************************

|

| 18 |

+

Total frame:「838」 Manually adjust range : [ -9~9 ] , the current value: 0

|

| 19 |

+

*************************************************************************************************************************************

|

| 20 |

+

```

|

| 21 |

+

### Step 1.

|

| 22 |

+

Re-run the script within the above range.

|

| 23 |

+

```

|

| 24 |

+

python -m scripts.inference --inference_config configs/inference/test.yaml --bbox_shift xx # where xx is in [-9, 9].

|

| 25 |

+

```

|

| 26 |

+

In our experimental observations, we found that positive values (moving towards the lower half) generally increase mouth openness, while negative values (moving towards the upper half) generally decrease mouth openness. However, it's important to note that this is not an absolute rule, and users may need to adjust the parameter according to their specific needs and the desired effect.

|

assets/demo/man/man.png

ADDED

|

Git LFS Details

|

assets/demo/monalisa/monalisa.png

ADDED

|

assets/demo/musk/musk.png

ADDED

|

assets/demo/sit/sit.jpeg

ADDED

|

assets/demo/sun1/sun.png

ADDED

|

assets/demo/sun2/sun.png

ADDED

|

assets/demo/video1/video1.png

ADDED

|

assets/demo/yongen/yongen.jpeg

ADDED

|

assets/figs/landmark_ref.png

ADDED

|

assets/figs/musetalk_arc.jpg

ADDED

|

configs/inference/test.yaml

ADDED

|

@@ -0,0 +1,10 @@

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

| 1 |

+

task_0:

|

| 2 |

+

video_path: "data/video/yongen.mp4"

|

| 3 |

+

audio_path: "data/audio/yongen.wav"

|

| 4 |

+

|

| 5 |

+

task_1:

|

| 6 |

+

video_path: "data/video/sun.mp4"

|

| 7 |

+

audio_path: "data/audio/sun.wav"

|

| 8 |

+

bbox_shift: -7

|

| 9 |

+

|

| 10 |

+

|

data/audio/sun.wav

ADDED

|

@@ -0,0 +1,3 @@

|

|

|

|

|

|

|

|

|

|

|

|

|

| 1 |

+

version https://git-lfs.github.com/spec/v1

|

| 2 |

+

oid sha256:3f163b0fe2f278504c15cab74cd37b879652749e2a8a69f7848ad32c847d8007

|

| 3 |

+

size 1983572

|

data/audio/yongen.wav

ADDED

|

@@ -0,0 +1,3 @@

|

|

|

|

|

|

|

|

|

|

|

|

|

| 1 |

+

version https://git-lfs.github.com/spec/v1

|

| 2 |

+

oid sha256:2b775c363c968428d1d6df4456495e4c11f00e3204d3082e51caff415ec0e2ba

|

| 3 |

+

size 1536078

|

data/video/sun.mp4

ADDED

|

@@ -0,0 +1,3 @@

|

|

|

|

|

|

|

|

|

|

|

|

|

| 1 |

+

version https://git-lfs.github.com/spec/v1

|

| 2 |

+

oid sha256:9f240982090f4255a7589e3cd67b4219be7820f9eb9a7461fc915eb5f0c8e075

|

| 3 |

+

size 2217973

|

data/video/yongen.mp4

ADDED

|

@@ -0,0 +1,3 @@

|

|

|

|

|

|

|

|

|

|

|

|

|

| 1 |

+

version https://git-lfs.github.com/spec/v1

|

| 2 |

+

oid sha256:1effa976d410571cd185554779d6d43a6ba636e0e3401385db1d607daa46441f

|

| 3 |

+

size 1870923

|

musetalk/models/unet.py

ADDED

|

@@ -0,0 +1,47 @@

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

| 1 |

+

import torch

|

| 2 |

+

import torch.nn as nn

|

| 3 |

+

import math

|

| 4 |

+

import json

|

| 5 |

+

|

| 6 |

+

from diffusers import UNet2DConditionModel

|

| 7 |

+

import sys

|

| 8 |

+

import time

|

| 9 |

+

import numpy as np

|

| 10 |

+

import os

|

| 11 |

+

|

| 12 |

+

class PositionalEncoding(nn.Module):

|

| 13 |

+

def __init__(self, d_model=384, max_len=5000):

|

| 14 |

+

super(PositionalEncoding, self).__init__()

|

| 15 |

+

pe = torch.zeros(max_len, d_model)

|

| 16 |

+

position = torch.arange(0, max_len, dtype=torch.float).unsqueeze(1)

|

| 17 |

+

div_term = torch.exp(torch.arange(0, d_model, 2).float() * (-math.log(10000.0) / d_model))

|

| 18 |

+

pe[:, 0::2] = torch.sin(position * div_term)

|

| 19 |

+

pe[:, 1::2] = torch.cos(position * div_term)

|

| 20 |

+

pe = pe.unsqueeze(0)

|

| 21 |

+

self.register_buffer('pe', pe)

|

| 22 |

+

|

| 23 |

+

def forward(self, x):

|

| 24 |

+

b, seq_len, d_model = x.size()

|

| 25 |

+

pe = self.pe[:, :seq_len, :]

|

| 26 |

+

x = x + pe.to(x.device)

|

| 27 |

+

return x

|

| 28 |

+

|

| 29 |

+

class UNet():

|

| 30 |

+

def __init__(self,

|

| 31 |

+

unet_config,

|

| 32 |

+

model_path,

|

| 33 |

+

use_float16=False,

|

| 34 |

+

):

|

| 35 |

+

with open(unet_config, 'r') as f:

|

| 36 |

+

unet_config = json.load(f)

|

| 37 |

+

self.model = UNet2DConditionModel(**unet_config)

|

| 38 |

+

self.pe = PositionalEncoding(d_model=384)

|

| 39 |

+

self.device = torch.device("cuda" if torch.cuda.is_available() else "cpu")

|

| 40 |

+

self.weights = torch.load(model_path) if torch.cuda.is_available() else torch.load(model_path, map_location=self.device)

|

| 41 |

+

self.model.load_state_dict(self.weights)

|

| 42 |

+

if use_float16:

|

| 43 |

+

self.model = self.model.half()

|

| 44 |

+

self.model.to(self.device)

|

| 45 |

+

|

| 46 |

+

if __name__ == "__main__":

|

| 47 |

+

unet = UNet()

|

musetalk/models/vae.py

ADDED

|

@@ -0,0 +1,148 @@

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

| 1 |

+

from diffusers import AutoencoderKL

|

| 2 |

+

import torch

|

| 3 |

+

import torchvision.transforms as transforms

|

| 4 |

+

import torch.nn.functional as F

|

| 5 |

+

import cv2

|

| 6 |

+

import numpy as np

|

| 7 |

+

from PIL import Image

|

| 8 |

+

import os

|

| 9 |

+

|

| 10 |

+

class VAE():

|

| 11 |

+

"""

|

| 12 |

+

VAE (Variational Autoencoder) class for image processing.

|

| 13 |

+

"""

|

| 14 |

+

|

| 15 |

+

def __init__(self, model_path="./models/sd-vae-ft-mse/", resized_img=256, use_float16=False):