one

Browse files- .DS_Store +0 -0

- LICENSE +21 -0

- __pycache__/model.cpython-310.pyc +0 -0

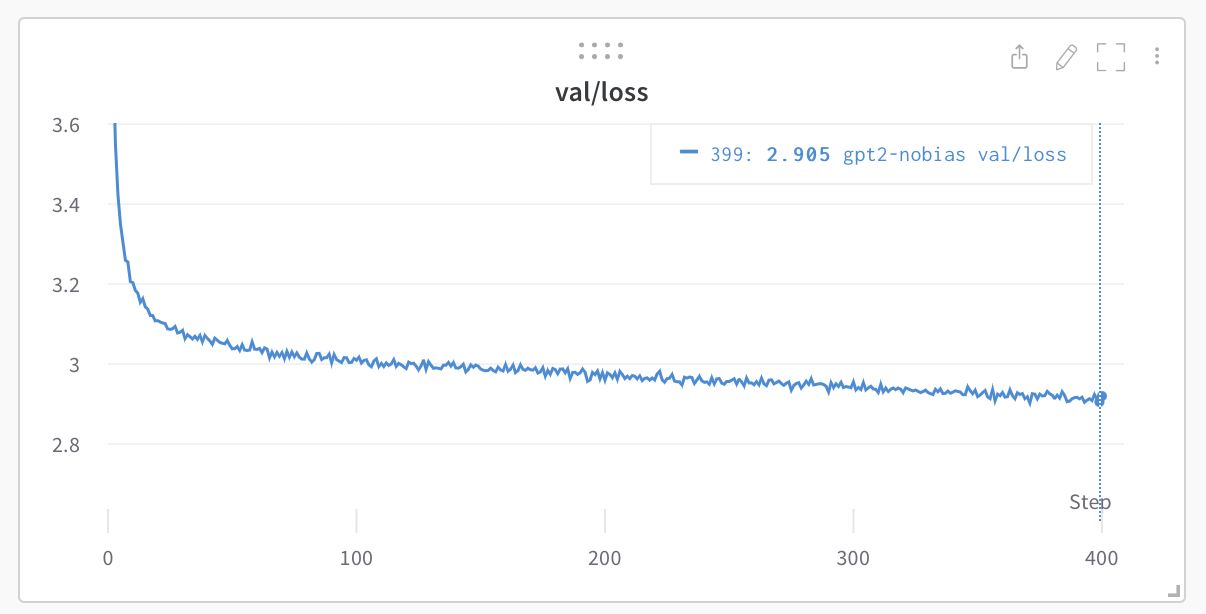

- assets/gpt2_124M_loss.png +0 -0

- assets/nanogpt.jpg +0 -0

- bench.py +117 -0

- config/eval_gpt2.py +8 -0

- config/eval_gpt2_large.py +8 -0

- config/eval_gpt2_medium.py +8 -0

- config/eval_gpt2_xl.py +8 -0

- config/finetune_shakespeare.py +25 -0

- config/train_gpt2.py +25 -0

- config/train_shakespeare_char.py +37 -0

- configurator.py +47 -0

- data/.DS_Store +0 -0

- data/openwebtext/prepare.py +80 -0

- data/openwebtext/readme.md +15 -0

- data/shakespeare/prepare.py +33 -0

- data/shakespeare/readme.md +9 -0

- data/shakespeare_char/.DS_Store +0 -0

- data/shakespeare_char/input.txt +0 -0

- data/shakespeare_char/meta.pkl +0 -0

- data/shakespeare_char/prepare.py +68 -0

- data/shakespeare_char/readme.md +9 -0

- model.py +330 -0

- out-shakespeare-char/.DS_Store +0 -0

- sample.py +89 -0

- scaling_laws.ipynb +0 -0

- train.py +333 -0

- transformer_sizing.ipynb +402 -0

.DS_Store

ADDED

|

Binary file (6.15 kB). View file

|

|

|

LICENSE

ADDED

|

@@ -0,0 +1,21 @@

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

| 1 |

+

MIT License

|

| 2 |

+

|

| 3 |

+

Copyright (c) 2022 Andrej Karpathy

|

| 4 |

+

|

| 5 |

+

Permission is hereby granted, free of charge, to any person obtaining a copy

|

| 6 |

+

of this software and associated documentation files (the "Software"), to deal

|

| 7 |

+

in the Software without restriction, including without limitation the rights

|

| 8 |

+

to use, copy, modify, merge, publish, distribute, sublicense, and/or sell

|

| 9 |

+

copies of the Software, and to permit persons to whom the Software is

|

| 10 |

+

furnished to do so, subject to the following conditions:

|

| 11 |

+

|

| 12 |

+

The above copyright notice and this permission notice shall be included in all

|

| 13 |

+

copies or substantial portions of the Software.

|

| 14 |

+

|

| 15 |

+

THE SOFTWARE IS PROVIDED "AS IS", WITHOUT WARRANTY OF ANY KIND, EXPRESS OR

|

| 16 |

+

IMPLIED, INCLUDING BUT NOT LIMITED TO THE WARRANTIES OF MERCHANTABILITY,

|

| 17 |

+

FITNESS FOR A PARTICULAR PURPOSE AND NONINFRINGEMENT. IN NO EVENT SHALL THE

|

| 18 |

+

AUTHORS OR COPYRIGHT HOLDERS BE LIABLE FOR ANY CLAIM, DAMAGES OR OTHER

|

| 19 |

+

LIABILITY, WHETHER IN AN ACTION OF CONTRACT, TORT OR OTHERWISE, ARISING FROM,

|

| 20 |

+

OUT OF OR IN CONNECTION WITH THE SOFTWARE OR THE USE OR OTHER DEALINGS IN THE

|

| 21 |

+

SOFTWARE.

|

__pycache__/model.cpython-310.pyc

ADDED

|

Binary file (12.6 kB). View file

|

|

|

assets/gpt2_124M_loss.png

ADDED

|

assets/nanogpt.jpg

ADDED

|

bench.py

ADDED

|

@@ -0,0 +1,117 @@

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

| 1 |

+

"""

|

| 2 |

+

A much shorter version of train.py for benchmarking

|

| 3 |

+

"""

|

| 4 |

+

import os

|

| 5 |

+

from contextlib import nullcontext

|

| 6 |

+

import numpy as np

|

| 7 |

+

import time

|

| 8 |

+

import torch

|

| 9 |

+

from model import GPTConfig, GPT

|

| 10 |

+

|

| 11 |

+

# -----------------------------------------------------------------------------

|

| 12 |

+

batch_size = 12

|

| 13 |

+

block_size = 1024

|

| 14 |

+

bias = False

|

| 15 |

+

real_data = True

|

| 16 |

+

seed = 1337

|

| 17 |

+

device = 'cuda' # examples: 'cpu', 'cuda', 'cuda:0', 'cuda:1', etc.

|

| 18 |

+

dtype = 'bfloat16' if torch.cuda.is_available() and torch.cuda.is_bf16_supported() else 'float16' # 'float32' or 'bfloat16' or 'float16'

|

| 19 |

+

compile = True # use PyTorch 2.0 to compile the model to be faster

|

| 20 |

+

profile = False # use pytorch profiler, or just simple benchmarking?

|

| 21 |

+

exec(open('configurator.py').read()) # overrides from command line or config file

|

| 22 |

+

# -----------------------------------------------------------------------------

|

| 23 |

+

|

| 24 |

+

torch.manual_seed(seed)

|

| 25 |

+

torch.cuda.manual_seed(seed)

|

| 26 |

+

torch.backends.cuda.matmul.allow_tf32 = True # allow tf32 on matmul

|

| 27 |

+

torch.backends.cudnn.allow_tf32 = True # allow tf32 on cudnn

|

| 28 |

+

device_type = 'cuda' if 'cuda' in device else 'cpu' # for later use in torch.autocast

|

| 29 |

+

ptdtype = {'float32': torch.float32, 'bfloat16': torch.bfloat16, 'float16': torch.float16}[dtype]

|

| 30 |

+

ctx = nullcontext() if device_type == 'cpu' else torch.amp.autocast(device_type=device_type, dtype=ptdtype)

|

| 31 |

+

|

| 32 |

+

# data loading init

|

| 33 |

+

if real_data:

|

| 34 |

+

dataset = 'openwebtext'

|

| 35 |

+

data_dir = os.path.join('data', dataset)

|

| 36 |

+

train_data = np.memmap(os.path.join(data_dir, 'train.bin'), dtype=np.uint16, mode='r')

|

| 37 |

+

def get_batch(split):

|

| 38 |

+

data = train_data # note ignore split in benchmarking script

|

| 39 |

+

ix = torch.randint(len(data) - block_size, (batch_size,))

|

| 40 |

+

x = torch.stack([torch.from_numpy((data[i:i+block_size]).astype(np.int64)) for i in ix])

|

| 41 |

+

y = torch.stack([torch.from_numpy((data[i+1:i+1+block_size]).astype(np.int64)) for i in ix])

|

| 42 |

+

x, y = x.pin_memory().to(device, non_blocking=True), y.pin_memory().to(device, non_blocking=True)

|

| 43 |

+

return x, y

|

| 44 |

+

else:

|

| 45 |

+

# alternatively, if fixed data is desired to not care about data loading

|

| 46 |

+

x = torch.randint(50304, (batch_size, block_size), device=device)

|

| 47 |

+

y = torch.randint(50304, (batch_size, block_size), device=device)

|

| 48 |

+

get_batch = lambda split: (x, y)

|

| 49 |

+

|

| 50 |

+

# model init

|

| 51 |

+

gptconf = GPTConfig(

|

| 52 |

+

block_size = block_size, # how far back does the model look? i.e. context size

|

| 53 |

+

n_layer = 12, n_head = 12, n_embd = 768, # size of the model

|

| 54 |

+

dropout = 0, # for determinism

|

| 55 |

+

bias = bias,

|

| 56 |

+

)

|

| 57 |

+

model = GPT(gptconf)

|

| 58 |

+

model.to(device)

|

| 59 |

+

|

| 60 |

+

optimizer = model.configure_optimizers(weight_decay=1e-2, learning_rate=1e-4, betas=(0.9, 0.95), device_type=device_type)

|

| 61 |

+

|

| 62 |

+

if compile:

|

| 63 |

+

print("Compiling model...")

|

| 64 |

+

model = torch.compile(model) # pytorch 2.0

|

| 65 |

+

|

| 66 |

+

if profile:

|

| 67 |

+

# useful docs on pytorch profiler:

|

| 68 |

+

# - tutorial https://pytorch.org/tutorials/intermediate/tensorboard_profiler_tutorial.html

|

| 69 |

+

# - api https://pytorch.org/docs/stable/profiler.html#torch.profiler.profile

|

| 70 |

+

wait, warmup, active = 5, 5, 5

|

| 71 |

+

num_steps = wait + warmup + active

|

| 72 |

+

with torch.profiler.profile(

|

| 73 |

+

activities=[torch.profiler.ProfilerActivity.CPU, torch.profiler.ProfilerActivity.CUDA],

|

| 74 |

+

schedule=torch.profiler.schedule(wait=wait, warmup=warmup, active=active, repeat=1),

|

| 75 |

+

on_trace_ready=torch.profiler.tensorboard_trace_handler('./bench_log'),

|

| 76 |

+

record_shapes=False,

|

| 77 |

+

profile_memory=False,

|

| 78 |

+

with_stack=False, # incurs an additional overhead, disable if not needed

|

| 79 |

+

with_flops=True,

|

| 80 |

+

with_modules=False, # only for torchscript models atm

|

| 81 |

+

) as prof:

|

| 82 |

+

|

| 83 |

+

X, Y = get_batch('train')

|

| 84 |

+

for k in range(num_steps):

|

| 85 |

+

with ctx:

|

| 86 |

+

logits, loss = model(X, Y)

|

| 87 |

+

X, Y = get_batch('train')

|

| 88 |

+

optimizer.zero_grad(set_to_none=True)

|

| 89 |

+

loss.backward()

|

| 90 |

+

optimizer.step()

|

| 91 |

+

lossf = loss.item()

|

| 92 |

+

print(f"{k}/{num_steps} loss: {lossf:.4f}")

|

| 93 |

+

|

| 94 |

+

prof.step() # notify the profiler at end of each step

|

| 95 |

+

|

| 96 |

+

else:

|

| 97 |

+

|

| 98 |

+

# simple benchmarking

|

| 99 |

+

torch.cuda.synchronize()

|

| 100 |

+

for stage, num_steps in enumerate([10, 20]): # burnin, then benchmark

|

| 101 |

+

t0 = time.time()

|

| 102 |

+

X, Y = get_batch('train')

|

| 103 |

+

for k in range(num_steps):

|

| 104 |

+

with ctx:

|

| 105 |

+

logits, loss = model(X, Y)

|

| 106 |

+

X, Y = get_batch('train')

|

| 107 |

+

optimizer.zero_grad(set_to_none=True)

|

| 108 |

+

loss.backward()

|

| 109 |

+

optimizer.step()

|

| 110 |

+

lossf = loss.item()

|

| 111 |

+

print(f"{k}/{num_steps} loss: {lossf:.4f}")

|

| 112 |

+

torch.cuda.synchronize()

|

| 113 |

+

t1 = time.time()

|

| 114 |

+

dt = t1-t0

|

| 115 |

+

mfu = model.estimate_mfu(batch_size * 1 * num_steps, dt)

|

| 116 |

+

if stage == 1:

|

| 117 |

+

print(f"time per iteration: {dt/num_steps*1000:.4f}ms, MFU: {mfu*100:.2f}%")

|

config/eval_gpt2.py

ADDED

|

@@ -0,0 +1,8 @@

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

| 1 |

+

# evaluate the base gpt2

|

| 2 |

+

# n_layer=12, n_head=12, n_embd=768

|

| 3 |

+

# 124M parameters

|

| 4 |

+

batch_size = 8

|

| 5 |

+

eval_iters = 500 # use more iterations to get good estimate

|

| 6 |

+

eval_only = True

|

| 7 |

+

wandb_log = False

|

| 8 |

+

init_from = 'gpt2'

|

config/eval_gpt2_large.py

ADDED

|

@@ -0,0 +1,8 @@

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

| 1 |

+

# evaluate the base gpt2

|

| 2 |

+

# n_layer=36, n_head=20, n_embd=1280

|

| 3 |

+

# 774M parameters

|

| 4 |

+

batch_size = 8

|

| 5 |

+

eval_iters = 500 # use more iterations to get good estimate

|

| 6 |

+

eval_only = True

|

| 7 |

+

wandb_log = False

|

| 8 |

+

init_from = 'gpt2-large'

|

config/eval_gpt2_medium.py

ADDED

|

@@ -0,0 +1,8 @@

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

| 1 |

+

# evaluate the base gpt2

|

| 2 |

+

# n_layer=24, n_head=16, n_embd=1024

|

| 3 |

+

# 350M parameters

|

| 4 |

+

batch_size = 8

|

| 5 |

+

eval_iters = 500 # use more iterations to get good estimate

|

| 6 |

+

eval_only = True

|

| 7 |

+

wandb_log = False

|

| 8 |

+

init_from = 'gpt2-medium'

|

config/eval_gpt2_xl.py

ADDED

|

@@ -0,0 +1,8 @@

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

| 1 |

+

# evaluate the base gpt2

|

| 2 |

+

# n_layer=48, n_head=25, n_embd=1600

|

| 3 |

+

# 1558M parameters

|

| 4 |

+

batch_size = 8

|

| 5 |

+

eval_iters = 500 # use more iterations to get good estimate

|

| 6 |

+

eval_only = True

|

| 7 |

+

wandb_log = False

|

| 8 |

+

init_from = 'gpt2-xl'

|

config/finetune_shakespeare.py

ADDED

|

@@ -0,0 +1,25 @@

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

| 1 |

+

import time

|

| 2 |

+

|

| 3 |

+

out_dir = 'out-shakespeare'

|

| 4 |

+

eval_interval = 5

|

| 5 |

+

eval_iters = 40

|

| 6 |

+

wandb_log = False # feel free to turn on

|

| 7 |

+

wandb_project = 'shakespeare'

|

| 8 |

+

wandb_run_name = 'ft-' + str(time.time())

|

| 9 |

+

|

| 10 |

+

dataset = 'shakespeare'

|

| 11 |

+

init_from = 'gpt2-xl' # this is the largest GPT-2 model

|

| 12 |

+

|

| 13 |

+

# only save checkpoints if the validation loss improves

|

| 14 |

+

always_save_checkpoint = False

|

| 15 |

+

|

| 16 |

+

# the number of examples per iter:

|

| 17 |

+

# 1 batch_size * 32 grad_accum * 1024 tokens = 32,768 tokens/iter

|

| 18 |

+

# shakespeare has 301,966 tokens, so 1 epoch ~= 9.2 iters

|

| 19 |

+

batch_size = 1

|

| 20 |

+

gradient_accumulation_steps = 32

|

| 21 |

+

max_iters = 20

|

| 22 |

+

|

| 23 |

+

# finetune at constant LR

|

| 24 |

+

learning_rate = 3e-5

|

| 25 |

+

decay_lr = False

|

config/train_gpt2.py

ADDED

|

@@ -0,0 +1,25 @@

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

| 1 |

+

# config for training GPT-2 (124M) down to very nice loss of ~2.85 on 1 node of 8X A100 40GB

|

| 2 |

+

# launch as the following (e.g. in a screen session) and wait ~5 days:

|

| 3 |

+

# $ torchrun --standalone --nproc_per_node=8 train.py config/train_gpt2.py

|

| 4 |

+

|

| 5 |

+

wandb_log = True

|

| 6 |

+

wandb_project = 'owt'

|

| 7 |

+

wandb_run_name='gpt2-124M'

|

| 8 |

+

|

| 9 |

+

# these make the total batch size be ~0.5M

|

| 10 |

+

# 12 batch size * 1024 block size * 5 gradaccum * 8 GPUs = 491,520

|

| 11 |

+

batch_size = 12

|

| 12 |

+

block_size = 1024

|

| 13 |

+

gradient_accumulation_steps = 5 * 8

|

| 14 |

+

|

| 15 |

+

# this makes total number of tokens be 300B

|

| 16 |

+

max_iters = 600000

|

| 17 |

+

lr_decay_iters = 600000

|

| 18 |

+

|

| 19 |

+

# eval stuff

|

| 20 |

+

eval_interval = 1000

|

| 21 |

+

eval_iters = 200

|

| 22 |

+

log_interval = 10

|

| 23 |

+

|

| 24 |

+

# weight decay

|

| 25 |

+

weight_decay = 1e-1

|

config/train_shakespeare_char.py

ADDED

|

@@ -0,0 +1,37 @@

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

| 1 |

+

# train a miniature character-level shakespeare model

|

| 2 |

+

# good for debugging and playing on macbooks and such

|

| 3 |

+

|

| 4 |

+

out_dir = 'out-shakespeare-char'

|

| 5 |

+

eval_interval = 250 # keep frequent because we'll overfit

|

| 6 |

+

eval_iters = 200

|

| 7 |

+

log_interval = 10 # don't print too too often

|

| 8 |

+

|

| 9 |

+

# we expect to overfit on this small dataset, so only save when val improves

|

| 10 |

+

always_save_checkpoint = False

|

| 11 |

+

|

| 12 |

+

wandb_log = False # override via command line if you like

|

| 13 |

+

wandb_project = 'shakespeare-char'

|

| 14 |

+

wandb_run_name = 'mini-gpt'

|

| 15 |

+

|

| 16 |

+

dataset = 'shakespeare_char'

|

| 17 |

+

gradient_accumulation_steps = 1

|

| 18 |

+

batch_size = 64

|

| 19 |

+

block_size = 256 # context of up to 256 previous characters

|

| 20 |

+

|

| 21 |

+

# baby GPT model :)

|

| 22 |

+

n_layer = 6

|

| 23 |

+

n_head = 6

|

| 24 |

+

n_embd = 384

|

| 25 |

+

dropout = 0.2

|

| 26 |

+

|

| 27 |

+

learning_rate = 1e-3 # with baby networks can afford to go a bit higher

|

| 28 |

+

max_iters = 5000

|

| 29 |

+

lr_decay_iters = 5000 # make equal to max_iters usually

|

| 30 |

+

min_lr = 1e-4 # learning_rate / 10 usually

|

| 31 |

+

beta2 = 0.99 # make a bit bigger because number of tokens per iter is small

|

| 32 |

+

|

| 33 |

+

warmup_iters = 100 # not super necessary potentially

|

| 34 |

+

|

| 35 |

+

# on macbook also add

|

| 36 |

+

# device = 'cpu' # run on cpu only

|

| 37 |

+

# compile = False # do not torch compile the model

|

configurator.py

ADDED

|

@@ -0,0 +1,47 @@

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

| 1 |

+

"""

|

| 2 |

+

Poor Man's Configurator. Probably a terrible idea. Example usage:

|

| 3 |

+

$ python train.py config/override_file.py --batch_size=32

|

| 4 |

+

this will first run config/override_file.py, then override batch_size to 32

|

| 5 |

+

|

| 6 |

+

The code in this file will be run as follows from e.g. train.py:

|

| 7 |

+

>>> exec(open('configurator.py').read())

|

| 8 |

+

|

| 9 |

+

So it's not a Python module, it's just shuttling this code away from train.py

|

| 10 |

+

The code in this script then overrides the globals()

|

| 11 |

+

|

| 12 |

+

I know people are not going to love this, I just really dislike configuration

|

| 13 |

+

complexity and having to prepend config. to every single variable. If someone

|

| 14 |

+

comes up with a better simple Python solution I am all ears.

|

| 15 |

+

"""

|

| 16 |

+

|

| 17 |

+

import sys

|

| 18 |

+

from ast import literal_eval

|

| 19 |

+

|

| 20 |

+

for arg in sys.argv[1:]:

|

| 21 |

+

if '=' not in arg:

|

| 22 |

+

# assume it's the name of a config file

|

| 23 |

+

assert not arg.startswith('--')

|

| 24 |

+

config_file = arg

|

| 25 |

+

print(f"Overriding config with {config_file}:")

|

| 26 |

+

with open(config_file) as f:

|

| 27 |

+

print(f.read())

|

| 28 |

+

exec(open(config_file).read())

|

| 29 |

+

else:

|

| 30 |

+

# assume it's a --key=value argument

|

| 31 |

+

assert arg.startswith('--')

|

| 32 |

+

key, val = arg.split('=')

|

| 33 |

+

key = key[2:]

|

| 34 |

+

if key in globals():

|

| 35 |

+

try:

|

| 36 |

+

# attempt to eval it it (e.g. if bool, number, or etc)

|

| 37 |

+

attempt = literal_eval(val)

|

| 38 |

+

except (SyntaxError, ValueError):

|

| 39 |

+

# if that goes wrong, just use the string

|

| 40 |

+

attempt = val

|

| 41 |

+

# ensure the types match ok

|

| 42 |

+

assert type(attempt) == type(globals()[key])

|

| 43 |

+

# cross fingers

|

| 44 |

+

print(f"Overriding: {key} = {attempt}")

|

| 45 |

+

globals()[key] = attempt

|

| 46 |

+

else:

|

| 47 |

+

raise ValueError(f"Unknown config key: {key}")

|

data/.DS_Store

ADDED

|

Binary file (6.15 kB). View file

|

|

|

data/openwebtext/prepare.py

ADDED

|

@@ -0,0 +1,80 @@

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

| 1 |

+

# saves the openwebtext dataset to a binary file for training. following was helpful:

|

| 2 |

+

# https://github.com/HazyResearch/flash-attention/blob/main/training/src/datamodules/language_modeling_hf.py

|

| 3 |

+

|

| 4 |

+

import os

|

| 5 |

+

from tqdm import tqdm

|

| 6 |

+

import numpy as np

|

| 7 |

+

import tiktoken

|

| 8 |

+

from datasets import load_dataset # huggingface datasets

|

| 9 |

+

|

| 10 |

+

# number of workers in .map() call

|

| 11 |

+

# good number to use is ~order number of cpu cores // 2

|

| 12 |

+

num_proc = 8

|

| 13 |

+

|

| 14 |

+

# number of workers in load_dataset() call

|

| 15 |

+

# best number might be different from num_proc above as it also depends on NW speed.

|

| 16 |

+

# it is better than 1 usually though

|

| 17 |

+

num_proc_load_dataset = num_proc

|

| 18 |

+

|

| 19 |

+

if __name__ == '__main__':

|

| 20 |

+

# takes 54GB in huggingface .cache dir, about 8M documents (8,013,769)

|

| 21 |

+

dataset = load_dataset("openwebtext", num_proc=num_proc_load_dataset)

|

| 22 |

+

|

| 23 |

+

# owt by default only contains the 'train' split, so create a test split

|

| 24 |

+

split_dataset = dataset["train"].train_test_split(test_size=0.0005, seed=2357, shuffle=True)

|

| 25 |

+

split_dataset['val'] = split_dataset.pop('test') # rename the test split to val

|

| 26 |

+

|

| 27 |

+

# this results in:

|

| 28 |

+

# >>> split_dataset

|

| 29 |

+

# DatasetDict({

|

| 30 |

+

# train: Dataset({

|

| 31 |

+

# features: ['text'],

|

| 32 |

+

# num_rows: 8009762

|

| 33 |

+

# })

|

| 34 |

+

# val: Dataset({

|

| 35 |

+

# features: ['text'],

|

| 36 |

+

# num_rows: 4007

|

| 37 |

+

# })

|

| 38 |

+

# })

|

| 39 |

+

|

| 40 |

+

# we now want to tokenize the dataset. first define the encoding function (gpt2 bpe)

|

| 41 |

+

enc = tiktoken.get_encoding("gpt2")

|

| 42 |

+

def process(example):

|

| 43 |

+

ids = enc.encode_ordinary(example['text']) # encode_ordinary ignores any special tokens

|

| 44 |

+

ids.append(enc.eot_token) # add the end of text token, e.g. 50256 for gpt2 bpe

|

| 45 |

+

# note: I think eot should be prepended not appended... hmm. it's called "eot" though...

|

| 46 |

+

out = {'ids': ids, 'len': len(ids)}

|

| 47 |

+

return out

|

| 48 |

+

|

| 49 |

+

# tokenize the dataset

|

| 50 |

+

tokenized = split_dataset.map(

|

| 51 |

+

process,

|

| 52 |

+

remove_columns=['text'],

|

| 53 |

+

desc="tokenizing the splits",

|

| 54 |

+

num_proc=num_proc,

|

| 55 |

+

)

|

| 56 |

+

|

| 57 |

+

# concatenate all the ids in each dataset into one large file we can use for training

|

| 58 |

+

for split, dset in tokenized.items():

|

| 59 |

+

arr_len = np.sum(dset['len'], dtype=np.uint64)

|

| 60 |

+

filename = os.path.join(os.path.dirname(__file__), f'{split}.bin')

|

| 61 |

+

dtype = np.uint16 # (can do since enc.max_token_value == 50256 is < 2**16)

|

| 62 |

+

arr = np.memmap(filename, dtype=dtype, mode='w+', shape=(arr_len,))

|

| 63 |

+

total_batches = 1024

|

| 64 |

+

|

| 65 |

+

idx = 0

|

| 66 |

+

for batch_idx in tqdm(range(total_batches), desc=f'writing {filename}'):

|

| 67 |

+

# Batch together samples for faster write

|

| 68 |

+

batch = dset.shard(num_shards=total_batches, index=batch_idx, contiguous=True).with_format('numpy')

|

| 69 |

+

arr_batch = np.concatenate(batch['ids'])

|

| 70 |

+

# Write into mmap

|

| 71 |

+

arr[idx : idx + len(arr_batch)] = arr_batch

|

| 72 |

+

idx += len(arr_batch)

|

| 73 |

+

arr.flush()

|

| 74 |

+

|

| 75 |

+

# train.bin is ~17GB, val.bin ~8.5MB

|

| 76 |

+

# train has ~9B tokens (9,035,582,198)

|

| 77 |

+

# val has ~4M tokens (4,434,897)

|

| 78 |

+

|

| 79 |

+

# to read the bin files later, e.g. with numpy:

|

| 80 |

+

# m = np.memmap('train.bin', dtype=np.uint16, mode='r')

|

data/openwebtext/readme.md

ADDED

|

@@ -0,0 +1,15 @@

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

| 1 |

+

|

| 2 |

+

## openwebtext dataset

|

| 3 |

+

|

| 4 |

+

after running `prepare.py` (preprocess) we get:

|

| 5 |

+

|

| 6 |

+

- train.bin is ~17GB, val.bin ~8.5MB

|

| 7 |

+

- train has ~9B tokens (9,035,582,198)

|

| 8 |

+

- val has ~4M tokens (4,434,897)

|

| 9 |

+

|

| 10 |

+

this came from 8,013,769 documents in total.

|

| 11 |

+

|

| 12 |

+

references:

|

| 13 |

+

|

| 14 |

+

- OpenAI's WebText dataset is discussed in [GPT-2 paper](https://d4mucfpksywv.cloudfront.net/better-language-models/language_models_are_unsupervised_multitask_learners.pdf)

|

| 15 |

+

- [OpenWebText](https://skylion007.github.io/OpenWebTextCorpus/) dataset

|

data/shakespeare/prepare.py

ADDED

|

@@ -0,0 +1,33 @@

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

| 1 |

+

import os

|

| 2 |

+

import requests

|

| 3 |

+

import tiktoken

|

| 4 |

+

import numpy as np

|

| 5 |

+

|

| 6 |

+

# download the tiny shakespeare dataset

|

| 7 |

+

input_file_path = os.path.join(os.path.dirname(__file__), 'input.txt')

|

| 8 |

+

if not os.path.exists(input_file_path):

|

| 9 |

+

data_url = 'https://raw.githubusercontent.com/karpathy/char-rnn/master/data/tinyshakespeare/input.txt'

|

| 10 |

+

with open(input_file_path, 'w') as f:

|

| 11 |

+

f.write(requests.get(data_url).text)

|

| 12 |

+

|

| 13 |

+

with open(input_file_path, 'r') as f:

|

| 14 |

+

data = f.read()

|

| 15 |

+

n = len(data)

|

| 16 |

+

train_data = data[:int(n*0.9)]

|

| 17 |

+

val_data = data[int(n*0.9):]

|

| 18 |

+

|

| 19 |

+

# encode with tiktoken gpt2 bpe

|

| 20 |

+

enc = tiktoken.get_encoding("gpt2")

|

| 21 |

+

train_ids = enc.encode_ordinary(train_data)

|

| 22 |

+

val_ids = enc.encode_ordinary(val_data)

|

| 23 |

+

print(f"train has {len(train_ids):,} tokens")

|

| 24 |

+

print(f"val has {len(val_ids):,} tokens")

|

| 25 |

+

|

| 26 |

+

# export to bin files

|

| 27 |

+

train_ids = np.array(train_ids, dtype=np.uint16)

|

| 28 |

+

val_ids = np.array(val_ids, dtype=np.uint16)

|

| 29 |

+

train_ids.tofile(os.path.join(os.path.dirname(__file__), 'train.bin'))

|

| 30 |

+

val_ids.tofile(os.path.join(os.path.dirname(__file__), 'val.bin'))

|

| 31 |

+

|

| 32 |

+

# train.bin has 301,966 tokens

|

| 33 |

+

# val.bin has 36,059 tokens

|

data/shakespeare/readme.md

ADDED

|

@@ -0,0 +1,9 @@

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

| 1 |

+

|

| 2 |

+

# tiny shakespeare

|

| 3 |

+

|

| 4 |

+

Tiny shakespeare, of the good old char-rnn fame :)

|

| 5 |

+

|

| 6 |

+

After running `prepare.py`:

|

| 7 |

+

|

| 8 |

+

- train.bin has 301,966 tokens

|

| 9 |

+

- val.bin has 36,059 tokens

|

data/shakespeare_char/.DS_Store

ADDED

|

Binary file (6.15 kB). View file

|

|

|

data/shakespeare_char/input.txt

ADDED

|

The diff for this file is too large to render.

See raw diff

|

|

|

data/shakespeare_char/meta.pkl

ADDED

|

Binary file (703 Bytes). View file

|

|

|

data/shakespeare_char/prepare.py

ADDED

|

@@ -0,0 +1,68 @@

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

| 1 |

+

"""

|

| 2 |

+

Prepare the Shakespeare dataset for character-level language modeling.

|

| 3 |

+

So instead of encoding with GPT-2 BPE tokens, we just map characters to ints.

|

| 4 |

+

Will save train.bin, val.bin containing the ids, and meta.pkl containing the

|

| 5 |

+

encoder and decoder and some other related info.

|

| 6 |

+

"""

|

| 7 |

+

import os

|

| 8 |

+

import pickle

|

| 9 |

+

import requests

|

| 10 |

+

import numpy as np

|

| 11 |

+

|

| 12 |

+

# download the tiny shakespeare dataset

|

| 13 |

+

input_file_path = os.path.join(os.path.dirname(__file__), 'input.txt')

|

| 14 |

+

if not os.path.exists(input_file_path):

|

| 15 |

+

data_url = 'https://raw.githubusercontent.com/karpathy/char-rnn/master/data/tinyshakespeare/input.txt'

|

| 16 |

+

with open(input_file_path, 'w') as f:

|

| 17 |

+

f.write(requests.get(data_url).text)

|

| 18 |

+

|

| 19 |

+

with open(input_file_path, 'r') as f:

|

| 20 |

+

data = f.read()

|

| 21 |

+

print(f"length of dataset in characters: {len(data):,}")

|

| 22 |

+

|

| 23 |

+

# get all the unique characters that occur in this text

|

| 24 |

+

chars = sorted(list(set(data)))

|

| 25 |

+

vocab_size = len(chars)

|

| 26 |

+

print("all the unique characters:", ''.join(chars))

|

| 27 |

+

print(f"vocab size: {vocab_size:,}")

|

| 28 |

+

|

| 29 |

+

# create a mapping from characters to integers

|

| 30 |

+

stoi = { ch:i for i,ch in enumerate(chars) }

|

| 31 |

+

itos = { i:ch for i,ch in enumerate(chars) }

|

| 32 |

+

def encode(s):

|

| 33 |

+

return [stoi[c] for c in s] # encoder: take a string, output a list of integers

|

| 34 |

+

def decode(l):

|

| 35 |

+

return ''.join([itos[i] for i in l]) # decoder: take a list of integers, output a string

|

| 36 |

+

|

| 37 |

+

# create the train and test splits

|

| 38 |

+

n = len(data)

|

| 39 |

+

train_data = data[:int(n*0.9)]

|

| 40 |

+

val_data = data[int(n*0.9):]

|

| 41 |

+

|

| 42 |

+

# encode both to integers

|

| 43 |

+

train_ids = encode(train_data)

|

| 44 |

+

val_ids = encode(val_data)

|

| 45 |

+

print(f"train has {len(train_ids):,} tokens")

|

| 46 |

+

print(f"val has {len(val_ids):,} tokens")

|

| 47 |

+

|

| 48 |

+

# export to bin files

|

| 49 |

+

train_ids = np.array(train_ids, dtype=np.uint16)

|

| 50 |

+

val_ids = np.array(val_ids, dtype=np.uint16)

|

| 51 |

+

train_ids.tofile(os.path.join(os.path.dirname(__file__), 'train.bin'))

|

| 52 |

+

val_ids.tofile(os.path.join(os.path.dirname(__file__), 'val.bin'))

|

| 53 |

+

|

| 54 |

+

# save the meta information as well, to help us encode/decode later

|

| 55 |

+

meta = {

|

| 56 |

+

'vocab_size': vocab_size,

|

| 57 |

+

'itos': itos,

|

| 58 |

+

'stoi': stoi,

|

| 59 |

+

}

|

| 60 |

+

with open(os.path.join(os.path.dirname(__file__), 'meta.pkl'), 'wb') as f:

|

| 61 |

+

pickle.dump(meta, f)

|

| 62 |

+

|

| 63 |

+

# length of dataset in characters: 1115394

|

| 64 |

+

# all the unique characters:

|

| 65 |

+

# !$&',-.3:;?ABCDEFGHIJKLMNOPQRSTUVWXYZabcdefghijklmnopqrstuvwxyz

|

| 66 |

+

# vocab size: 65

|

| 67 |

+

# train has 1003854 tokens

|

| 68 |

+

# val has 111540 tokens

|

data/shakespeare_char/readme.md

ADDED

|

@@ -0,0 +1,9 @@

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

| 1 |

+

|

| 2 |

+

# tiny shakespeare, character-level

|

| 3 |

+

|

| 4 |

+

Tiny shakespeare, of the good old char-rnn fame :) Treated on character-level.

|

| 5 |

+

|

| 6 |

+

After running `prepare.py`:

|

| 7 |

+

|

| 8 |

+

- train.bin has 1,003,854 tokens

|

| 9 |

+

- val.bin has 111,540 tokens

|

model.py

ADDED

|

@@ -0,0 +1,330 @@

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

| 1 |

+

"""

|

| 2 |

+

Full definition of a GPT Language Model, all of it in this single file.

|

| 3 |

+

References:

|

| 4 |

+

1) the official GPT-2 TensorFlow implementation released by OpenAI:

|

| 5 |

+

https://github.com/openai/gpt-2/blob/master/src/model.py

|

| 6 |

+

2) huggingface/transformers PyTorch implementation:

|

| 7 |

+

https://github.com/huggingface/transformers/blob/main/src/transformers/models/gpt2/modeling_gpt2.py

|

| 8 |

+

"""

|

| 9 |

+

|

| 10 |

+

import math

|

| 11 |

+

import inspect

|

| 12 |

+

from dataclasses import dataclass

|

| 13 |

+

|

| 14 |

+

import torch

|

| 15 |

+

import torch.nn as nn

|

| 16 |

+

from torch.nn import functional as F

|

| 17 |

+

|

| 18 |

+

class LayerNorm(nn.Module):

|

| 19 |

+

""" LayerNorm but with an optional bias. PyTorch doesn't support simply bias=False """

|

| 20 |

+

|

| 21 |

+

def __init__(self, ndim, bias):

|

| 22 |

+

super().__init__()

|

| 23 |

+

self.weight = nn.Parameter(torch.ones(ndim))

|

| 24 |

+

self.bias = nn.Parameter(torch.zeros(ndim)) if bias else None

|

| 25 |

+

|

| 26 |

+

def forward(self, input):

|

| 27 |

+

return F.layer_norm(input, self.weight.shape, self.weight, self.bias, 1e-5)

|

| 28 |

+

|

| 29 |

+

class CausalSelfAttention(nn.Module):

|

| 30 |

+

|

| 31 |

+

def __init__(self, config):

|

| 32 |

+

super().__init__()

|

| 33 |

+

assert config.n_embd % config.n_head == 0

|

| 34 |

+

# key, query, value projections for all heads, but in a batch

|

| 35 |

+

self.c_attn = nn.Linear(config.n_embd, 3 * config.n_embd, bias=config.bias)

|

| 36 |

+

# output projection

|

| 37 |

+

self.c_proj = nn.Linear(config.n_embd, config.n_embd, bias=config.bias)

|

| 38 |

+

# regularization

|

| 39 |

+

self.attn_dropout = nn.Dropout(config.dropout)

|

| 40 |

+

self.resid_dropout = nn.Dropout(config.dropout)

|

| 41 |

+

self.n_head = config.n_head

|

| 42 |

+

self.n_embd = config.n_embd

|

| 43 |

+

self.dropout = config.dropout

|

| 44 |

+

# flash attention make GPU go brrrrr but support is only in PyTorch >= 2.0

|

| 45 |

+

self.flash = hasattr(torch.nn.functional, 'scaled_dot_product_attention')

|

| 46 |

+

if not self.flash:

|

| 47 |

+

print("WARNING: using slow attention. Flash Attention requires PyTorch >= 2.0")

|

| 48 |

+

# causal mask to ensure that attention is only applied to the left in the input sequence

|

| 49 |

+

self.register_buffer("bias", torch.tril(torch.ones(config.block_size, config.block_size))

|

| 50 |

+

.view(1, 1, config.block_size, config.block_size))

|

| 51 |

+

|

| 52 |

+

def forward(self, x):

|

| 53 |

+

B, T, C = x.size() # batch size, sequence length, embedding dimensionality (n_embd)

|

| 54 |

+

|

| 55 |

+

# calculate query, key, values for all heads in batch and move head forward to be the batch dim

|

| 56 |

+

q, k, v = self.c_attn(x).split(self.n_embd, dim=2)

|

| 57 |

+

k = k.view(B, T, self.n_head, C // self.n_head).transpose(1, 2) # (B, nh, T, hs)

|

| 58 |

+

q = q.view(B, T, self.n_head, C // self.n_head).transpose(1, 2) # (B, nh, T, hs)

|

| 59 |

+

v = v.view(B, T, self.n_head, C // self.n_head).transpose(1, 2) # (B, nh, T, hs)

|

| 60 |

+

|

| 61 |

+

# causal self-attention; Self-attend: (B, nh, T, hs) x (B, nh, hs, T) -> (B, nh, T, T)

|

| 62 |

+

if self.flash:

|

| 63 |

+

# efficient attention using Flash Attention CUDA kernels

|

| 64 |

+

y = torch.nn.functional.scaled_dot_product_attention(q, k, v, attn_mask=None, dropout_p=self.dropout if self.training else 0, is_causal=True)

|

| 65 |

+

else:

|

| 66 |

+

# manual implementation of attention

|

| 67 |

+

att = (q @ k.transpose(-2, -1)) * (1.0 / math.sqrt(k.size(-1)))

|

| 68 |

+

att = att.masked_fill(self.bias[:,:,:T,:T] == 0, float('-inf'))

|

| 69 |

+

att = F.softmax(att, dim=-1)

|

| 70 |

+

att = self.attn_dropout(att)

|

| 71 |

+

y = att @ v # (B, nh, T, T) x (B, nh, T, hs) -> (B, nh, T, hs)

|

| 72 |

+

y = y.transpose(1, 2).contiguous().view(B, T, C) # re-assemble all head outputs side by side

|

| 73 |

+

|

| 74 |

+

# output projection

|

| 75 |

+

y = self.resid_dropout(self.c_proj(y))

|

| 76 |

+

return y

|

| 77 |

+

|

| 78 |

+

class MLP(nn.Module):

|

| 79 |

+

|

| 80 |

+

def __init__(self, config):

|

| 81 |

+

super().__init__()

|

| 82 |

+

self.c_fc = nn.Linear(config.n_embd, 4 * config.n_embd, bias=config.bias)

|

| 83 |

+

self.gelu = nn.GELU()

|

| 84 |

+

self.c_proj = nn.Linear(4 * config.n_embd, config.n_embd, bias=config.bias)

|

| 85 |

+

self.dropout = nn.Dropout(config.dropout)

|

| 86 |

+

|

| 87 |

+

def forward(self, x):

|

| 88 |

+

x = self.c_fc(x)

|

| 89 |

+

x = self.gelu(x)

|

| 90 |

+

x = self.c_proj(x)

|

| 91 |

+

x = self.dropout(x)

|

| 92 |

+

return x

|

| 93 |

+

|

| 94 |

+

class Block(nn.Module):

|

| 95 |

+

|

| 96 |

+

def __init__(self, config):

|

| 97 |

+

super().__init__()

|

| 98 |

+

self.ln_1 = LayerNorm(config.n_embd, bias=config.bias)

|

| 99 |

+

self.attn = CausalSelfAttention(config)

|

| 100 |

+

self.ln_2 = LayerNorm(config.n_embd, bias=config.bias)

|

| 101 |

+

self.mlp = MLP(config)

|

| 102 |

+

|

| 103 |

+

def forward(self, x):

|

| 104 |

+

x = x + self.attn(self.ln_1(x))

|

| 105 |

+

x = x + self.mlp(self.ln_2(x))

|

| 106 |

+

return x

|

| 107 |

+

|

| 108 |

+

@dataclass

|

| 109 |

+

class GPTConfig:

|

| 110 |

+

block_size: int = 1024

|

| 111 |

+

vocab_size: int = 50304 # GPT-2 vocab_size of 50257, padded up to nearest multiple of 64 for efficiency

|

| 112 |

+

n_layer: int = 12

|

| 113 |

+

n_head: int = 12

|

| 114 |

+

n_embd: int = 768

|

| 115 |

+

dropout: float = 0.0

|

| 116 |

+

bias: bool = True # True: bias in Linears and LayerNorms, like GPT-2. False: a bit better and faster

|

| 117 |

+

|

| 118 |

+

class GPT(nn.Module):

|

| 119 |

+

|

| 120 |

+

def __init__(self, config):

|

| 121 |

+

super().__init__()

|

| 122 |

+

assert config.vocab_size is not None

|

| 123 |

+

assert config.block_size is not None

|

| 124 |

+

self.config = config

|

| 125 |

+

|

| 126 |

+

self.transformer = nn.ModuleDict(dict(

|

| 127 |

+

wte = nn.Embedding(config.vocab_size, config.n_embd),

|

| 128 |

+

wpe = nn.Embedding(config.block_size, config.n_embd),

|

| 129 |

+

drop = nn.Dropout(config.dropout),

|

| 130 |

+

h = nn.ModuleList([Block(config) for _ in range(config.n_layer)]),

|

| 131 |

+

ln_f = LayerNorm(config.n_embd, bias=config.bias),

|

| 132 |

+

))

|

| 133 |

+

self.lm_head = nn.Linear(config.n_embd, config.vocab_size, bias=False)

|

| 134 |

+

# with weight tying when using torch.compile() some warnings get generated:

|

| 135 |

+

# "UserWarning: functional_call was passed multiple values for tied weights.

|

| 136 |

+

# This behavior is deprecated and will be an error in future versions"

|

| 137 |

+

# not 100% sure what this is, so far seems to be harmless. TODO investigate

|

| 138 |

+

self.transformer.wte.weight = self.lm_head.weight # https://paperswithcode.com/method/weight-tying

|

| 139 |

+

|

| 140 |

+

# init all weights

|

| 141 |

+

self.apply(self._init_weights)

|

| 142 |

+

# apply special scaled init to the residual projections, per GPT-2 paper

|

| 143 |

+

for pn, p in self.named_parameters():

|

| 144 |

+

if pn.endswith('c_proj.weight'):

|

| 145 |

+

torch.nn.init.normal_(p, mean=0.0, std=0.02/math.sqrt(2 * config.n_layer))

|

| 146 |

+

|

| 147 |

+

# report number of parameters

|

| 148 |

+

print("number of parameters: %.2fM" % (self.get_num_params()/1e6,))

|

| 149 |

+

|

| 150 |

+

def get_num_params(self, non_embedding=True):

|

| 151 |

+

"""

|

| 152 |

+

Return the number of parameters in the model.

|

| 153 |

+

For non-embedding count (default), the position embeddings get subtracted.

|

| 154 |

+

The token embeddings would too, except due to the parameter sharing these

|

| 155 |

+

params are actually used as weights in the final layer, so we include them.

|

| 156 |

+

"""

|

| 157 |

+

n_params = sum(p.numel() for p in self.parameters())

|

| 158 |

+

if non_embedding:

|

| 159 |

+

n_params -= self.transformer.wpe.weight.numel()

|

| 160 |

+

return n_params

|

| 161 |

+

|

| 162 |

+

def _init_weights(self, module):

|

| 163 |

+

if isinstance(module, nn.Linear):

|

| 164 |

+

torch.nn.init.normal_(module.weight, mean=0.0, std=0.02)

|

| 165 |

+

if module.bias is not None:

|

| 166 |

+

torch.nn.init.zeros_(module.bias)

|

| 167 |

+

elif isinstance(module, nn.Embedding):

|

| 168 |

+

torch.nn.init.normal_(module.weight, mean=0.0, std=0.02)

|

| 169 |

+

|

| 170 |

+

def forward(self, idx, targets=None):

|

| 171 |

+

device = idx.device

|

| 172 |

+

b, t = idx.size()

|

| 173 |

+

assert t <= self.config.block_size, f"Cannot forward sequence of length {t}, block size is only {self.config.block_size}"

|

| 174 |

+

pos = torch.arange(0, t, dtype=torch.long, device=device) # shape (t)

|

| 175 |

+

|

| 176 |

+

# forward the GPT model itself

|

| 177 |

+

tok_emb = self.transformer.wte(idx) # token embeddings of shape (b, t, n_embd)

|

| 178 |

+

pos_emb = self.transformer.wpe(pos) # position embeddings of shape (t, n_embd)

|

| 179 |

+

x = self.transformer.drop(tok_emb + pos_emb)

|

| 180 |

+

for block in self.transformer.h:

|

| 181 |

+

x = block(x)

|

| 182 |

+

x = self.transformer.ln_f(x)

|

| 183 |

+

|

| 184 |

+

if targets is not None:

|

| 185 |

+

# if we are given some desired targets also calculate the loss

|

| 186 |

+

logits = self.lm_head(x)

|

| 187 |

+

loss = F.cross_entropy(logits.view(-1, logits.size(-1)), targets.view(-1), ignore_index=-1)

|

| 188 |

+

else:

|

| 189 |

+

# inference-time mini-optimization: only forward the lm_head on the very last position

|

| 190 |

+

logits = self.lm_head(x[:, [-1], :]) # note: using list [-1] to preserve the time dim

|

| 191 |

+

loss = None

|

| 192 |

+

|

| 193 |

+

return logits, loss

|

| 194 |

+

|

| 195 |

+

def crop_block_size(self, block_size):

|

| 196 |

+

# model surgery to decrease the block size if necessary

|

| 197 |

+

# e.g. we may load the GPT2 pretrained model checkpoint (block size 1024)

|

| 198 |

+

# but want to use a smaller block size for some smaller, simpler model

|

| 199 |

+

assert block_size <= self.config.block_size

|

| 200 |

+

self.config.block_size = block_size

|

| 201 |

+

self.transformer.wpe.weight = nn.Parameter(self.transformer.wpe.weight[:block_size])

|

| 202 |

+

for block in self.transformer.h:

|

| 203 |

+

if hasattr(block.attn, 'bias'):

|

| 204 |

+

block.attn.bias = block.attn.bias[:,:,:block_size,:block_size]

|

| 205 |

+

|

| 206 |

+

@classmethod

|

| 207 |

+

def from_pretrained(cls, model_type, override_args=None):

|

| 208 |

+

assert model_type in {'gpt2', 'gpt2-medium', 'gpt2-large', 'gpt2-xl'}

|

| 209 |

+

override_args = override_args or {} # default to empty dict

|

| 210 |

+

# only dropout can be overridden see more notes below

|

| 211 |

+

assert all(k == 'dropout' for k in override_args)

|

| 212 |

+

from transformers import GPT2LMHeadModel

|

| 213 |

+

print("loading weights from pretrained gpt: %s" % model_type)

|

| 214 |

+

|

| 215 |

+

# n_layer, n_head and n_embd are determined from model_type

|

| 216 |

+

config_args = {

|

| 217 |

+

'gpt2': dict(n_layer=12, n_head=12, n_embd=768), # 124M params

|

| 218 |

+

'gpt2-medium': dict(n_layer=24, n_head=16, n_embd=1024), # 350M params

|

| 219 |

+

'gpt2-large': dict(n_layer=36, n_head=20, n_embd=1280), # 774M params

|

| 220 |

+

'gpt2-xl': dict(n_layer=48, n_head=25, n_embd=1600), # 1558M params

|

| 221 |

+

}[model_type]

|

| 222 |

+

print("forcing vocab_size=50257, block_size=1024, bias=True")

|

| 223 |

+

config_args['vocab_size'] = 50257 # always 50257 for GPT model checkpoints

|

| 224 |

+

config_args['block_size'] = 1024 # always 1024 for GPT model checkpoints

|

| 225 |

+

config_args['bias'] = True # always True for GPT model checkpoints

|

| 226 |

+

# we can override the dropout rate, if desired

|

| 227 |