
Commit 833ef7e · committed by akhaliq3

Parent(s): 928d198

New message for the combined commit

This view is limited to 50 files because it contains too many changes.

- CLIP/.gitignore +10 -0

- CLIP/CLIP.png +0 -0

- CLIP/LICENSE +22 -0

- CLIP/MANIFEST.in +1 -0

- CLIP/README.md +193 -0

- CLIP/clip/__init__.py +1 -0

- CLIP/clip/bpe_simple_vocab_16e6.txt.gz +0 -0

- CLIP/clip/clip.py +221 -0

- CLIP/clip/model.py +432 -0

- CLIP/clip/simple_tokenizer.py +132 -0

- CLIP/data/yfcc100m.md +14 -0

- CLIP/model-card.md +120 -0

- CLIP/notebooks/Interacting_with_CLIP.ipynb +0 -0

- CLIP/notebooks/Prompt_Engineering_for_ImageNet.ipynb +1188 -0

- CLIP/requirements.txt +5 -0

- CLIP/setup.py +21 -0

- CLIP/tests/test_consistency.py +25 -0

- steps/temp.txt +0 -0

- taming-transformers/License.txt +19 -0

- taming-transformers/README.md +377 -0

- taming-transformers/assets/birddrawnbyachild.png +0 -0

- taming-transformers/assets/drin.jpg +0 -0

- taming-transformers/assets/faceshq.jpg +0 -0

- taming-transformers/assets/first_stage_mushrooms.png +0 -0

- taming-transformers/assets/first_stage_squirrels.png +0 -0

- taming-transformers/assets/imagenet.png +0 -0

- taming-transformers/assets/lake_in_the_mountains.png +0 -0

- taming-transformers/assets/mountain.jpeg +0 -0

- taming-transformers/assets/stormy.jpeg +0 -0

- taming-transformers/assets/sunset_and_ocean.jpg +0 -0

- taming-transformers/assets/teaser.png +0 -0

- taming-transformers/configs/coco_cond_stage.yaml +49 -0

- taming-transformers/configs/custom_vqgan.yaml +43 -0

- taming-transformers/configs/drin_transformer.yaml +77 -0

- taming-transformers/configs/faceshq_transformer.yaml +61 -0

- taming-transformers/configs/faceshq_vqgan.yaml +42 -0

- taming-transformers/configs/imagenet_vqgan.yaml +42 -0

- taming-transformers/configs/imagenetdepth_vqgan.yaml +41 -0

- taming-transformers/configs/sflckr_cond_stage.yaml +43 -0

- taming-transformers/data/ade20k_examples.txt +30 -0

- taming-transformers/data/ade20k_images/ADE_val_00000123.jpg +0 -0

- taming-transformers/data/ade20k_images/ADE_val_00000125.jpg +0 -0

- taming-transformers/data/ade20k_images/ADE_val_00000126.jpg +0 -0

- taming-transformers/data/ade20k_images/ADE_val_00000203.jpg +0 -0

- taming-transformers/data/ade20k_images/ADE_val_00000262.jpg +0 -0

- taming-transformers/data/ade20k_images/ADE_val_00000287.jpg +0 -0

- taming-transformers/data/ade20k_images/ADE_val_00000289.jpg +0 -0

- taming-transformers/data/ade20k_images/ADE_val_00000303.jpg +0 -0

- taming-transformers/data/ade20k_images/ADE_val_00000509.jpg +0 -0

- taming-transformers/data/ade20k_images/ADE_val_00000532.jpg +0 -0

CLIP/.gitignore

ADDED

@@ -0,0 +1,10 @@
+__pycache__/
+*.py[cod]
+*$py.class
+*.egg-info
+.pytest_cache
+.ipynb_checkpoints
+
+thumbs.db
+.DS_Store
+.idea

CLIP/CLIP.png

ADDED


CLIP/LICENSE

ADDED

@@ -0,0 +1,22 @@
+MIT License
+
+Copyright (c) 2021 OpenAI
+
+Permission is hereby granted, free of charge, to any person obtaining a copy
+of this software and associated documentation files (the "Software"), to deal
+in the Software without restriction, including without limitation the rights
+to use, copy, modify, merge, publish, distribute, sublicense, and/or sell
+copies of the Software, and to permit persons to whom the Software is
+furnished to do so, subject to the following conditions:
+
+The above copyright notice and this permission notice shall be included in all
+copies or substantial portions of the Software.
+
+THE SOFTWARE IS PROVIDED "AS IS", WITHOUT WARRANTY OF ANY KIND, EXPRESS OR
+IMPLIED, INCLUDING BUT NOT LIMITED TO THE WARRANTIES OF MERCHANTABILITY,
+FITNESS FOR A PARTICULAR PURPOSE AND NONINFRINGEMENT. IN NO EVENT SHALL THE
+AUTHORS OR COPYRIGHT HOLDERS BE LIABLE FOR ANY CLAIM, DAMAGES OR OTHER
+LIABILITY, WHETHER IN AN ACTION OF CONTRACT, TORT OR OTHERWISE, ARISING FROM,
+OUT OF OR IN CONNECTION WITH THE SOFTWARE OR THE USE OR OTHER DEALINGS IN THE
+SOFTWARE.
+

CLIP/MANIFEST.in

ADDED

@@ -0,0 +1 @@
+include clip/bpe_simple_vocab_16e6.txt.gz

CLIP/README.md

ADDED

|

@@ -0,0 +1,193 @@

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

| 1 |

+

# CLIP

|

| 2 |

+

|

| 3 |

+

[[Blog]](https://openai.com/blog/clip/) [[Paper]](https://arxiv.org/abs/2103.00020) [[Model Card]](model-card.md) [[Colab]](https://colab.research.google.com/github/openai/clip/blob/master/notebooks/Interacting_with_CLIP.ipynb)

|

| 4 |

+

|

| 5 |

+

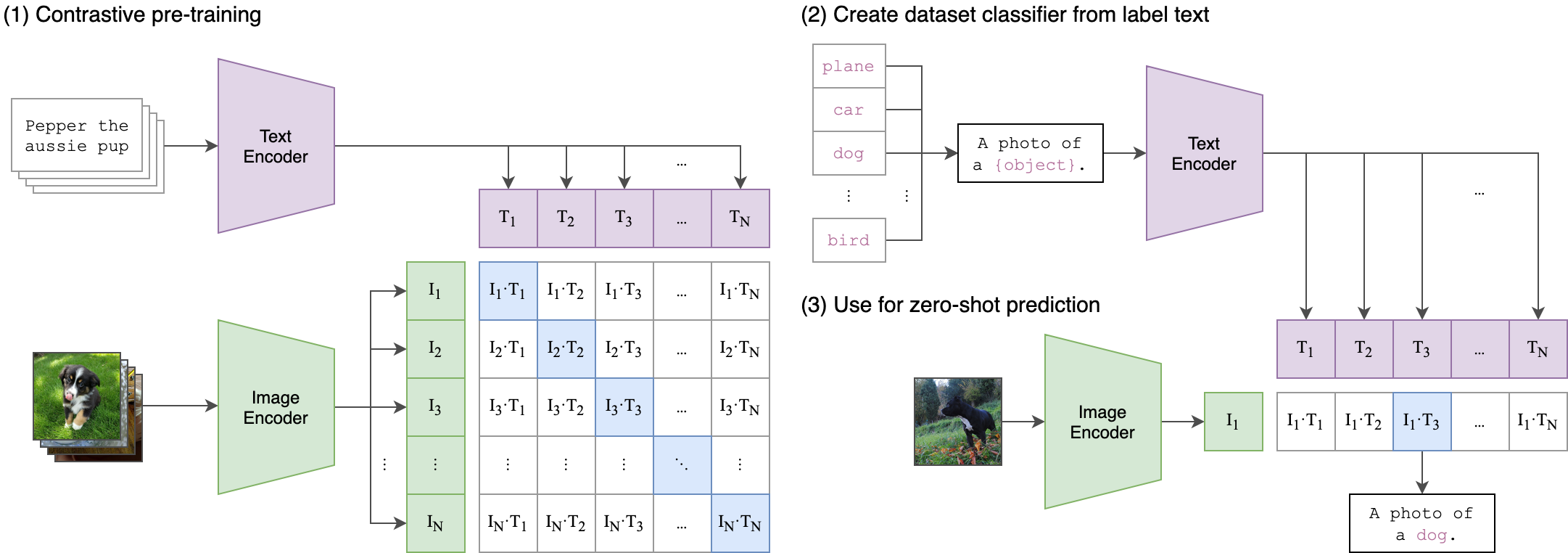

CLIP (Contrastive Language-Image Pre-Training) is a neural network trained on a variety of (image, text) pairs. It can be instructed in natural language to predict the most relevant text snippet, given an image, without directly optimizing for the task, similarly to the zero-shot capabilities of GPT-2 and 3. We found CLIP matches the performance of the original ResNet50 on ImageNet “zero-shot” without using any of the original 1.28M labeled examples, overcoming several major challenges in computer vision.

|

| 6 |

+

|

| 7 |

+

|

| 8 |

+

|

| 9 |

+

## Approach

|

| 10 |

+

|

| 11 |

+

|

| 12 |

+

|

| 13 |

+

|

| 14 |

+

|

| 15 |

+

## Usage

|

| 16 |

+

|

| 17 |

+

First, [install PyTorch 1.7.1](https://pytorch.org/get-started/locally/) and torchvision, as well as small additional dependencies, and then install this repo as a Python package. On a CUDA GPU machine, the following will do the trick:

|

| 18 |

+

|

| 19 |

+

```bash

|

| 20 |

+

$ conda install --yes -c pytorch pytorch=1.7.1 torchvision cudatoolkit=11.0

|

| 21 |

+

$ pip install ftfy regex tqdm

|

| 22 |

+

$ pip install git+https://github.com/openai/CLIP.git

|

| 23 |

+

```

|

| 24 |

+

|

| 25 |

+

Replace `cudatoolkit=11.0` above with the appropriate CUDA version on your machine or `cpuonly` when installing on a machine without a GPU.

|

| 26 |

+

|

| 27 |

+

```python

|

| 28 |

+

import torch

|

| 29 |

+

import clip

|

| 30 |

+

from PIL import Image

|

| 31 |

+

|

| 32 |

+

device = "cuda" if torch.cuda.is_available() else "cpu"

|

| 33 |

+

model, preprocess = clip.load("ViT-B/32", device=device)

|

| 34 |

+

|

| 35 |

+

image = preprocess(Image.open("CLIP.png")).unsqueeze(0).to(device)

|

| 36 |

+

text = clip.tokenize(["a diagram", "a dog", "a cat"]).to(device)

|

| 37 |

+

|

| 38 |

+

with torch.no_grad():

|

| 39 |

+

image_features = model.encode_image(image)

|

| 40 |

+

text_features = model.encode_text(text)

|

| 41 |

+

|

| 42 |

+

logits_per_image, logits_per_text = model(image, text)

|

| 43 |

+

probs = logits_per_image.softmax(dim=-1).cpu().numpy()

|

| 44 |

+

|

| 45 |

+

print("Label probs:", probs) # prints: [[0.9927937 0.00421068 0.00299572]]

|

| 46 |

+

```

|

| 47 |

+

|

| 48 |

+

|

| 49 |

+

## API

|

| 50 |

+

|

| 51 |

+

The CLIP module `clip` provides the following methods:

|

| 52 |

+

|

| 53 |

+

#### `clip.available_models()`

|

| 54 |

+

|

| 55 |

+

Returns the names of the available CLIP models.

|

| 56 |

+

|

| 57 |

+

#### `clip.load(name, device=..., jit=False)`

|

| 58 |

+

|

| 59 |

+

Returns the model and the TorchVision transform needed by the model, specified by the model name returned by `clip.available_models()`. It will download the model as necessary. The `name` argument can also be a path to a local checkpoint.

|

| 60 |

+

|

| 61 |

+

The device to run the model can be optionally specified, and the default is to use the first CUDA device if there is any, otherwise the CPU. When `jit` is `False`, a non-JIT version of the model will be loaded.

|

| 62 |

+

|

| 63 |

+

#### `clip.tokenize(text: Union[str, List[str]], context_length=77)`

|

| 64 |

+

|

| 65 |

+

Returns a LongTensor containing tokenized sequences of given text input(s). This can be used as the input to the model

|

| 66 |

+

|

| 67 |

+

---

|

| 68 |

+

|

| 69 |

+

The model returned by `clip.load()` supports the following methods:

|

| 70 |

+

|

| 71 |

+

#### `model.encode_image(image: Tensor)`

|

| 72 |

+

|

| 73 |

+

Given a batch of images, returns the image features encoded by the vision portion of the CLIP model.

|

| 74 |

+

|

| 75 |

+

#### `model.encode_text(text: Tensor)`

|

| 76 |

+

|

| 77 |

+

Given a batch of text tokens, returns the text features encoded by the language portion of the CLIP model.

|

| 78 |

+

|

| 79 |

+

#### `model(image: Tensor, text: Tensor)`

|

| 80 |

+

|

| 81 |

+

Given a batch of images and a batch of text tokens, returns two Tensors, containing the logit scores corresponding to each image and text input. The values are cosine similarities between the corresponding image and text features, times 100.

|

| 82 |

+

|

| 83 |

+

|

| 84 |

+

|
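Review note on the README's `model(image, text)` description: the scoring it describes (cosine similarity between image and text features, times 100, followed by a softmax as in the usage snippet) can be sketched in plain Python. This is only an illustration under stated assumptions: the three-dimensional feature vectors and the helper names `cosine_similarity`, `logits_per_image`, and `softmax` are hypothetical and are not part of the `clip` package.

```python
import math


def cosine_similarity(a, b):
    """Cosine of the angle between two equal-length vectors."""
    dot = sum(x * y for x, y in zip(a, b))
    norm_a = math.sqrt(sum(x * x for x in a))
    norm_b = math.sqrt(sum(y * y for y in b))
    return dot / (norm_a * norm_b)


def logits_per_image(image_feature, text_features, scale=100.0):
    """One logit per text: scaled cosine similarity with the image feature."""
    return [scale * cosine_similarity(image_feature, t) for t in text_features]


def softmax(logits):
    """Numerically stable softmax over a list of floats."""
    peak = max(logits)
    exps = [math.exp(v - peak) for v in logits]
    total = sum(exps)
    return [e / total for e in exps]


# Hypothetical toy features; real CLIP features are much higher-dimensional.
image_feature = [0.9, 0.1, 0.0]
text_features = [[1.0, 0.0, 0.0], [0.0, 1.0, 0.0], [0.0, 0.0, 1.0]]

logits = logits_per_image(image_feature, text_features)
probs = softmax(logits)
```

Because the similarities are multiplied by 100 before the softmax, even modest differences in cosine similarity yield a sharply peaked distribution, which is consistent with the near-one probability in the README's sample output.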

+## More Examples
+
+### Zero-Shot Prediction
+
+The code below performs zero-shot prediction using CLIP, as shown in Appendix B in the paper. This example takes an image from the [CIFAR-100 dataset](https://www.cs.toronto.edu/~kriz/cifar.html), and predicts the most likely labels among the 100 textual labels from the dataset.
+
+```python
+import os
+import clip
+import torch
+from torchvision.datasets import CIFAR100
+
+# Load the model
+device = "cuda" if torch.cuda.is_available() else "cpu"
+model, preprocess = clip.load('ViT-B/32', device)
+
+# Download the dataset
+cifar100 = CIFAR100(root=os.path.expanduser("~/.cache"), download=True, train=False)
+
+# Prepare the inputs
+image, class_id = cifar100[3637]
+image_input = preprocess(image).unsqueeze(0).to(device)
+text_inputs = torch.cat([clip.tokenize(f"a photo of a {c}") for c in cifar100.classes]).to(device)
+
+# Calculate features
+with torch.no_grad():
+    image_features = model.encode_image(image_input)
+    text_features = model.encode_text(text_inputs)
+
+# Pick the top 5 most similar labels for the image
+image_features /= image_features.norm(dim=-1, keepdim=True)
+text_features /= text_features.norm(dim=-1, keepdim=True)
+similarity = (100.0 * image_features @ text_features.T).softmax(dim=-1)
+values, indices = similarity[0].topk(5)
+
+# Print the result
+print("\nTop predictions:\n")
+for value, index in zip(values, indices):
+    print(f"{cifar100.classes[index]:>16s}: {100 * value.item():.2f}%")
+```
+
+The output will look like the following (the exact numbers may be slightly different depending on the compute device):
+
+```
+Top predictions:
+
+           snake: 65.31%
+          turtle: 12.29%
+    sweet_pepper: 3.83%
+          lizard: 1.88%
+       crocodile: 1.75%
+```
+
+Note that this example uses the `encode_image()` and `encode_text()` methods that return the encoded features of given inputs.
+
+
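Review note on the zero-shot example: two of its steps, building one prompt per class name and printing the top-k labels, do not depend on the model and can be tried in isolation. The sketch below assumes a hypothetical probability list that reuses the five percentages from the README's sample output plus one filler class; `build_prompts` and `top_k` are illustrative helper names, not `clip` functions.

```python
def build_prompts(class_names, template="a photo of a {}"):
    """Turn raw class names into the text prompts fed to the tokenizer."""
    return [template.format(name) for name in class_names]


def top_k(probabilities, labels, k=5):
    """Return the k (probability, label) pairs with the highest probability."""
    ranked = sorted(zip(probabilities, labels), key=lambda pair: pair[0], reverse=True)
    return ranked[:k]


# Hypothetical inputs: label order is arbitrary, values echo the sample output.
labels = ["turtle", "lizard", "snake", "crocodile", "sweet_pepper", "bicycle"]
probabilities = [0.1229, 0.0188, 0.6531, 0.0175, 0.0383, 0.0001]

prompts = build_prompts(labels)
best = top_k(probabilities, labels, k=5)
report = [f"{label:>16s}: {100 * p:.2f}%" for p, label in best]
```

The `>16s` format specifier right-aligns each label in a 16-character column, which is what produces the ragged left edge in the README's printed predictions.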

+### Linear-probe evaluation
+
+The example below uses [scikit-learn](https://scikit-learn.org/) to perform logistic regression on image features.
+
+```python
+import os
+import clip
+import torch
+
+import numpy as np
+from sklearn.linear_model import LogisticRegression
+from torch.utils.data import DataLoader
+from torchvision.datasets import CIFAR100
+from tqdm import tqdm
+
+# Load the model
+device = "cuda" if torch.cuda.is_available() else "cpu"
+model, preprocess = clip.load('ViT-B/32', device)
+
+# Load the dataset
+root = os.path.expanduser("~/.cache")
+train = CIFAR100(root, download=True, train=True, transform=preprocess)
+test = CIFAR100(root, download=True, train=False, transform=preprocess)
+
+
+def get_features(dataset):
+    all_features = []
+    all_labels = []
+
+    with torch.no_grad():
+        for images, labels in tqdm(DataLoader(dataset, batch_size=100)):
+            features = model.encode_image(images.to(device))
+
+            all_features.append(features)
+            all_labels.append(labels)
+
+    return torch.cat(all_features).cpu().numpy(), torch.cat(all_labels).cpu().numpy()
+
+# Calculate the image features
+train_features, train_labels = get_features(train)
+test_features, test_labels = get_features(test)
+
+# Perform logistic regression
+classifier = LogisticRegression(random_state=0, C=0.316, max_iter=1000, verbose=1)
+classifier.fit(train_features, train_labels)
+
+# Evaluate using the logistic regression classifier
+predictions = classifier.predict(test_features)
+accuracy = np.mean((test_labels == predictions).astype(np.float)) * 100.
+print(f"Accuracy = {accuracy:.3f}")
+```
+
+Note that the `C` value should be determined via a hyperparameter sweep using a validation split.
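Review note on the README's closing remark about `C`: a sweep of that kind usually evaluates log-spaced candidates and keeps the one with the best validation score. The sketch below shows only that selection loop. `log_spaced`, `sweep`, and `toy_validation_score` are hypothetical names; in real use the scoring callback would fit `LogisticRegression(C=c)` on a training split and return accuracy on a held-out validation split, whereas the toy function here is a stand-in chosen so that it peaks near the README's `C=0.316`.

```python
import math


def log_spaced(low_exp, high_exp, num):
    """Return `num` values evenly spaced in log10 from 10**low_exp to 10**high_exp."""
    if num < 2:
        raise ValueError("num must be at least 2")
    step = (high_exp - low_exp) / (num - 1)
    return [10 ** (low_exp + i * step) for i in range(num)]


def sweep(candidates, validation_score):
    """Evaluate each candidate and return (best_candidate, best_score)."""
    best_c, best_score = None, float("-inf")
    for c in candidates:
        score = validation_score(c)
        if score > best_score:
            best_c, best_score = c, score
    return best_c, best_score


def toy_validation_score(c):
    """Stand-in for validation accuracy; peaks at c = 10**-0.5 (about 0.316)."""
    return -(math.log10(c) + 0.5) ** 2


candidates = log_spaced(-3, 2, 11)  # 0.001, ~0.00316, 0.01, ..., 100
best_c, best_score = sweep(candidates, toy_validation_score)
```

The test split should stay untouched during the sweep; it is only used once, with the selected `C`, to report the final accuracy.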

CLIP/clip/__init__.py

ADDED

@@ -0,0 +1 @@
+from .clip import *

CLIP/clip/bpe_simple_vocab_16e6.txt.gz

ADDED

Binary file (1.36 MB).

CLIP/clip/clip.py

ADDED

@@ -0,0 +1,221 @@
+import hashlib
+import os
+import urllib
+import warnings
+from typing import Union, List
+
+import torch
+from PIL import Image
+from torchvision.transforms import Compose, Resize, CenterCrop, ToTensor, Normalize
+from tqdm import tqdm
+
+from .model import build_model
+from .simple_tokenizer import SimpleTokenizer as _Tokenizer
+
+try:
+    from torchvision.transforms import InterpolationMode
+    BICUBIC = InterpolationMode.BICUBIC
+except ImportError:
+    BICUBIC = Image.BICUBIC
+
+
+if torch.__version__.split(".") < ["1", "7", "1"]:
+    warnings.warn("PyTorch version 1.7.1 or higher is recommended")
+
+
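Review note on the version check above: it compares lists of strings, so the comparison is lexicographic per component and a version such as `1.10.0` sorts before `1.7.1` (because `"10" < "7"` as strings). A minimal sketch of a numeric comparison follows. `parse_version` and `is_older_than` are hypothetical helpers, not part of this commit, and the regular expression assumes the version string starts with `major.minor` and an optional patch number.

```python
import re


def parse_version(version):
    """Extract leading numeric components, e.g. '1.10.0+cu113' -> (1, 10, 0)."""
    match = re.match(r"(\d+)\.(\d+)(?:\.(\d+))?", version)
    if match is None:
        raise ValueError(f"unrecognized version string: {version!r}")
    return tuple(int(part) if part is not None else 0 for part in match.groups())


def is_older_than(version, minimum=(1, 7, 1)):
    """True when `version` is numerically below `minimum`."""
    return parse_version(version) < minimum


# The string-list comparison misorders two-digit components:
string_compare_says_old = "1.10.0".split(".") < ["1", "7", "1"]
numeric_compare_says_old = is_older_than("1.10.0")
```

With the string-based check, a warning would be emitted for PyTorch 1.10 and later 1.x releases even though they satisfy the recommendation; the tuple-of-integers comparison avoids that.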

+__all__ = ["available_models", "load", "tokenize"]
+_tokenizer = _Tokenizer()
+
+_MODELS = {
+    "RN50": "https://openaipublic.azureedge.net/clip/models/afeb0e10f9e5a86da6080e35cf09123aca3b358a0c3e3b6c78a7b63bc04b6762/RN50.pt",
+    "RN101": "https://openaipublic.azureedge.net/clip/models/8fa8567bab74a42d41c5915025a8e4538c3bdbe8804a470a72f30b0d94fab599/RN101.pt",
+    "RN50x4": "https://openaipublic.azureedge.net/clip/models/7e526bd135e493cef0776de27d5f42653e6b4c8bf9e0f653bb11773263205fdd/RN50x4.pt",
+    "RN50x16": "https://openaipublic.azureedge.net/clip/models/52378b407f34354e150460fe41077663dd5b39c54cd0bfd2b27167a4a06ec9aa/RN50x16.pt",
+    "ViT-B/32": "https://openaipublic.azureedge.net/clip/models/40d365715913c9da98579312b702a82c18be219cc2a73407c4526f58eba950af/ViT-B-32.pt",
+    "ViT-B/16": "https://openaipublic.azureedge.net/clip/models/5806e77cd80f8b59890b7e101eabd078d9fb84e6937f9e85e4ecb61988df416f/ViT-B-16.pt",
+}
+
+
+def _download(url: str, root: str = os.path.expanduser("~/.cache/clip")):
+    os.makedirs(root, exist_ok=True)
+    filename = os.path.basename(url)
+
+    expected_sha256 = url.split("/")[-2]
+    download_target = os.path.join(root, filename)
+
+    if os.path.exists(download_target) and not os.path.isfile(download_target):
+        raise RuntimeError(f"{download_target} exists and is not a regular file")
+
+    if os.path.isfile(download_target):
+        if hashlib.sha256(open(download_target, "rb").read()).hexdigest() == expected_sha256:
+            return download_target
+        else:
+            warnings.warn(f"{download_target} exists, but the SHA256 checksum does not match; re-downloading the file")
+
+    with urllib.request.urlopen(url) as source, open(download_target, "wb") as output:
+        with tqdm(total=int(source.info().get("Content-Length")), ncols=80, unit='iB', unit_scale=True) as loop:
+            while True:
+                buffer = source.read(8192)
+                if not buffer:
+                    break
+
+                output.write(buffer)
+                loop.update(len(buffer))
+
+    if hashlib.sha256(open(download_target, "rb").read()).hexdigest() != expected_sha256:
+        raise RuntimeError(f"Model has been downloaded but the SHA256 checksum does not not match")
+
+    return download_target
+
+
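Review note on `_download`: the checksum is taken from the second-to-last path segment of the model URL, and the file is hashed by reading it fully into memory. For large checkpoints, a chunked hash gives the same digest with bounded memory. The sketch below is an alternative under stated assumptions, not what the commit does: `expected_sha256_from_url` and `sha256_of_file` are hypothetical helper names, and the demo hashes a small throwaway file rather than a real checkpoint.

```python
import hashlib
import os
import tempfile


def expected_sha256_from_url(url):
    """Mirror the diff's convention: the checksum is the second-to-last URL segment."""
    return url.split("/")[-2]


def sha256_of_file(path, chunk_size=8192):
    """Hash a file in fixed-size chunks so it never sits fully in memory."""
    digest = hashlib.sha256()
    with open(path, "rb") as handle:
        for chunk in iter(lambda: handle.read(chunk_size), b""):
            digest.update(chunk)
    return digest.hexdigest()


# Demo on a throwaway file (hypothetical payload, not a real checkpoint).
payload = b"clip" * 10000
with tempfile.TemporaryDirectory() as tmp_dir:
    demo_path = os.path.join(tmp_dir, "demo.bin")
    with open(demo_path, "wb") as handle:
        handle.write(payload)
    streamed_digest = sha256_of_file(demo_path)

whole_file_digest = hashlib.sha256(payload).hexdigest()
rn50_url = "https://openaipublic.azureedge.net/clip/models/afeb0e10f9e5a86da6080e35cf09123aca3b358a0c3e3b6c78a7b63bc04b6762/RN50.pt"
rn50_expected = expected_sha256_from_url(rn50_url)
```

Opening the file in a `with` block also closes the handle deterministically, which the one-line `open(...).read()` form in the diff leaves to the garbage collector.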

+def _transform(n_px):
+    return Compose([
+        Resize(n_px, interpolation=BICUBIC),
+        CenterCrop(n_px),
+        lambda image: image.convert("RGB"),
+        ToTensor(),
+        Normalize((0.48145466, 0.4578275, 0.40821073), (0.26862954, 0.26130258, 0.27577711)),
+    ])
+
+
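Review note on `_transform`: the final `Normalize` step subtracts a per-channel mean and divides by a per-channel standard deviation, applied to RGB values that `ToTensor()` has already scaled to the range 0 to 1. A plain-Python sketch of that arithmetic for a single pixel follows; the constants are copied from the diff, while `normalize_pixel` is a hypothetical helper written only for illustration.

```python
# Per-channel statistics copied from the Normalize(...) call in the diff.
CLIP_MEAN = (0.48145466, 0.4578275, 0.40821073)
CLIP_STD = (0.26862954, 0.26130258, 0.27577711)


def normalize_pixel(rgb, mean=CLIP_MEAN, std=CLIP_STD):
    """Apply (value - mean) / std to each channel of one RGB pixel in [0, 1]."""
    return tuple((value - m) / s for value, m, s in zip(rgb, mean, std))


mean_pixel = normalize_pixel(CLIP_MEAN)         # a pixel at the mean maps to zero
white_pixel = normalize_pixel((1.0, 1.0, 1.0))  # brighter than the mean, so positive
black_pixel = normalize_pixel((0.0, 0.0, 0.0))  # darker than the mean, so negative
```

The effect is that inputs to the model are roughly zero-centered with unit scale per channel, given the image statistics these constants were derived from.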

+def available_models() -> List[str]:
+    """Returns the names of available CLIP models"""
+    return list(_MODELS.keys())
+
+
+def load(name: str, device: Union[str, torch.device] = "cuda" if torch.cuda.is_available() else "cpu", jit=False):
+    """Load a CLIP model
+
+    Parameters
+    ----------
+    name : str
+        A model name listed by `clip.available_models()`, or the path to a model checkpoint containing the state_dict
+
+    device : Union[str, torch.device]
+        The device to put the loaded model
+
+    jit : bool
+        Whether to load the optimized JIT model or more hackable non-JIT model (default).
+
+    Returns
+    -------
+    model : torch.nn.Module
+        The CLIP model
+
+    preprocess : Callable[[PIL.Image], torch.Tensor]
+        A torchvision transform that converts a PIL image into a tensor that the returned model can take as its input
+    """
+    if name in _MODELS:
+        model_path = _download(_MODELS[name])
+    elif os.path.isfile(name):
+        model_path = name
+    else:
+        raise RuntimeError(f"Model {name} not found; available models = {available_models()}")
+
+    try:
+        # loading JIT archive
+        model = torch.jit.load(model_path, map_location=device if jit else "cpu").eval()
+        state_dict = None
+    except RuntimeError:
+        # loading saved state dict
+        if jit:
+            warnings.warn(f"File {model_path} is not a JIT archive. Loading as a state dict instead")
+            jit = False
+        state_dict = torch.load(model_path, map_location="cpu")
+
+    if not jit:
+        model = build_model(state_dict or model.state_dict()).to(device)
+        if str(device) == "cpu":
+            model.float()
+        return model, _transform(model.visual.input_resolution)
+
+    # patch the device names
+    device_holder = torch.jit.trace(lambda: torch.ones([]).to(torch.device(device)), example_inputs=[])
+    device_node = [n for n in device_holder.graph.findAllNodes("prim::Constant") if "Device" in repr(n)][-1]
+
+    def patch_device(module):
+        try:
+            graphs = [module.graph] if hasattr(module, "graph") else []
+        except RuntimeError:
+            graphs = []
+
+        if hasattr(module, "forward1"):
+            graphs.append(module.forward1.graph)
+
+        for graph in graphs:
+            for node in graph.findAllNodes("prim::Constant"):
+                if "value" in node.attributeNames() and str(node["value"]).startswith("cuda"):
+                    node.copyAttributes(device_node)
+
+    model.apply(patch_device)
+    patch_device(model.encode_image)
+    patch_device(model.encode_text)
+
+    # patch dtype to float32 on CPU
+    if str(device) == "cpu":
+        float_holder = torch.jit.trace(lambda: torch.ones([]).float(), example_inputs=[])
+        float_input = list(float_holder.graph.findNode("aten::to").inputs())[1]
+        float_node = float_input.node()
+
+        def patch_float(module):
+            try:
+                graphs = [module.graph] if hasattr(module, "graph") else []
+            except RuntimeError:
+                graphs = []
+
+            if hasattr(module, "forward1"):
+                graphs.append(module.forward1.graph)
+
+            for graph in graphs:
+                for node in graph.findAllNodes("aten::to"):
+                    inputs = list(node.inputs())
+                    for i in [1, 2]: # dtype can be the second or third argument to aten::to()
+                        if inputs[i].node()["value"] == 5:
+                            inputs[i].node().copyAttributes(float_node)
+
+        model.apply(patch_float)
+        patch_float(model.encode_image)
+        patch_float(model.encode_text)
+
+        model.float()
+
+    return model, _transform(model.input_resolution.item())
+
+
+def tokenize(texts: Union[str, List[str]], context_length: int = 77, truncate: bool = False) -> torch.LongTensor:
+    """
+    Returns the tokenized representation of given input string(s)
+
+    Parameters
+    ----------
+    texts : Union[str, List[str]]
+        An input string or a list of input strings to tokenize
+
+    context_length : int
+        The context length to use; all CLIP models use 77 as the context length
+
+    truncate: bool
+        Whether to truncate the text in case its encoding is longer than the context length
+
+    Returns
+    -------
+    A two-dimensional tensor containing the resulting tokens, shape = [number of input strings, context_length]
+    """
+    if isinstance(texts, str):
+        texts = [texts]
+
+    sot_token = _tokenizer.encoder["<|startoftext|>"]
+    eot_token = _tokenizer.encoder["<|endoftext|>"]
+    all_tokens = [[sot_token] + _tokenizer.encode(text) + [eot_token] for text in texts]
+    result = torch.zeros(len(all_tokens), context_length, dtype=torch.long)
+
+    for i, tokens in enumerate(all_tokens):
+        if len(tokens) > context_length:
+            if truncate:
+                tokens = tokens[:context_length]
+                tokens[-1] = eot_token
+            else:
+                raise RuntimeError(f"Input {texts[i]} is too long for context length {context_length}")
+        result[i, :len(tokens)] = torch.tensor(tokens)
+
+    return result
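Review note on `tokenize`: each row is built by wrapping the encoded text in start and end tokens, then either zero-padding it to `context_length` or, when `truncate=True`, cutting it and forcing the last position to the end token. The sketch below reproduces that row logic with plain lists. The ids `SOT = 1` and `EOT = 2` are placeholders chosen for readability, not the real vocabulary ids, and `pad_or_truncate` is a hypothetical helper.

```python
SOT, EOT = 1, 2  # placeholder ids for illustration, not the real vocabulary ids


def pad_or_truncate(token_ids, context_length=77, truncate=False, sot=SOT, eot=EOT):
    """Build one fixed-length row: [sot, *tokens, eot, 0, 0, ...]."""
    tokens = [sot] + list(token_ids) + [eot]
    if len(tokens) > context_length:
        if not truncate:
            raise RuntimeError(f"Input is too long for context length {context_length}")
        tokens = tokens[:context_length]
        tokens[-1] = eot  # keep an end token even after cutting
    return tokens + [0] * (context_length - len(tokens))


short_row = pad_or_truncate([5, 6, 7], context_length=8)
long_row = pad_or_truncate(range(10, 30), context_length=8, truncate=True)

try:
    pad_or_truncate(range(10, 30), context_length=8)
    raised = False
except RuntimeError:
    raised = True
```

Re-inserting the end token after truncation keeps every row terminated by it, so truncated and untruncated rows share the same layout.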

CLIP/clip/model.py

ADDED

|

@@ -0,0 +1,432 @@

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

from collections import OrderedDict
from typing import Tuple, Union

import numpy as np
import torch
import torch.nn.functional as F
from torch import nn


class Bottleneck(nn.Module):
    expansion = 4

    def __init__(self, inplanes, planes, stride=1):
        super().__init__()

        # all conv layers have stride 1. an avgpool is performed after the second convolution when stride > 1
        self.conv1 = nn.Conv2d(inplanes, planes, 1, bias=False)
        self.bn1 = nn.BatchNorm2d(planes)

        self.conv2 = nn.Conv2d(planes, planes, 3, padding=1, bias=False)
        self.bn2 = nn.BatchNorm2d(planes)

        self.avgpool = nn.AvgPool2d(stride) if stride > 1 else nn.Identity()

        self.conv3 = nn.Conv2d(planes, planes * self.expansion, 1, bias=False)
        self.bn3 = nn.BatchNorm2d(planes * self.expansion)

        self.relu = nn.ReLU(inplace=True)
        self.downsample = None
        self.stride = stride

        if stride > 1 or inplanes != planes * Bottleneck.expansion:
            # downsampling layer is prepended with an avgpool, and the subsequent convolution has stride 1
            self.downsample = nn.Sequential(OrderedDict([
                ("-1", nn.AvgPool2d(stride)),
                ("0", nn.Conv2d(inplanes, planes * self.expansion, 1, stride=1, bias=False)),
                ("1", nn.BatchNorm2d(planes * self.expansion))
            ]))

    def forward(self, x: torch.Tensor):
        identity = x

        out = self.relu(self.bn1(self.conv1(x)))
        out = self.relu(self.bn2(self.conv2(out)))
        out = self.avgpool(out)
        out = self.bn3(self.conv3(out))

        if self.downsample is not None:
            identity = self.downsample(x)

        out += identity
        out = self.relu(out)
        return out


class AttentionPool2d(nn.Module):
    def __init__(self, spacial_dim: int, embed_dim: int, num_heads: int, output_dim: int = None):
        super().__init__()
        self.positional_embedding = nn.Parameter(torch.randn(spacial_dim ** 2 + 1, embed_dim) / embed_dim ** 0.5)
        self.k_proj = nn.Linear(embed_dim, embed_dim)
        self.q_proj = nn.Linear(embed_dim, embed_dim)
        self.v_proj = nn.Linear(embed_dim, embed_dim)
        self.c_proj = nn.Linear(embed_dim, output_dim or embed_dim)
        self.num_heads = num_heads

    def forward(self, x):
        x = x.reshape(x.shape[0], x.shape[1], x.shape[2] * x.shape[3]).permute(2, 0, 1)  # NCHW -> (HW)NC
        x = torch.cat([x.mean(dim=0, keepdim=True), x], dim=0)  # (HW+1)NC
        x = x + self.positional_embedding[:, None, :].to(x.dtype)  # (HW+1)NC
        x, _ = F.multi_head_attention_forward(
            query=x, key=x, value=x,
            embed_dim_to_check=x.shape[-1],
            num_heads=self.num_heads,
            q_proj_weight=self.q_proj.weight,
            k_proj_weight=self.k_proj.weight,
            v_proj_weight=self.v_proj.weight,
            in_proj_weight=None,
            in_proj_bias=torch.cat([self.q_proj.bias, self.k_proj.bias, self.v_proj.bias]),
            bias_k=None,
            bias_v=None,
            add_zero_attn=False,
            dropout_p=0,
            out_proj_weight=self.c_proj.weight,
            out_proj_bias=self.c_proj.bias,
            use_separate_proj_weight=True,
            training=self.training,
            need_weights=False
        )

        return x[0]
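
The pooling step above prepends the mean of all spatial tokens, runs attention over the sequence, and keeps only position 0. A minimal pure-Python sketch of that idea follows. It is a simplification, not the code from this commit: it assumes a single head, identity q/k/v/output projections, and no positional embedding, and the name `attention_pool` is hypothetical.

```python
import math


def attention_pool(tokens):
    """Pool equal-length feature vectors into one vector.

    Assumptions for illustration: one head, identity projections,
    no positional embedding. Only the output for the prepended mean
    token is computed, which is the only output the real layer returns.
    """
    dim = len(tokens[0])
    # Mean token, prepended to the sequence (position 0).
    mean = [sum(t[i] for t in tokens) / len(tokens) for i in range(dim)]
    seq = [mean] + tokens
    query = seq[0]
    # Scaled dot-product scores of the mean token against every token.
    scores = [sum(q * k for q, k in zip(query, key)) / math.sqrt(dim) for key in seq]
    # Numerically stable softmax over the scores.
    top = max(scores)
    weights = [math.exp(s - top) for s in scores]
    total = sum(weights)
    weights = [w / total for w in weights]
    # Weighted sum of the value vectors.
    return [sum(w * v[i] for w, v in zip(weights, seq)) for i in range(dim)]


pooled = attention_pool([[1.0, 0.0], [0.0, 1.0], [1.0, 1.0]])
```

With identical input tokens the pooled vector equals that token, since every value in the weighted sum is the same.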


class ModifiedResNet(nn.Module):
    """
    A ResNet class that is similar to torchvision's but contains the following changes:
    - There are now 3 "stem" convolutions as opposed to 1, with an average pool instead of a max pool.
    - Performs anti-aliasing strided convolutions, where an avgpool is prepended to convolutions with stride > 1
    - The final pooling layer is a QKV attention instead of an average pool
    """

    def __init__(self, layers, output_dim, heads, input_resolution=224, width=64):
        super().__init__()
        self.output_dim = output_dim
        self.input_resolution = input_resolution

        # the 3-layer stem
        self.conv1 = nn.Conv2d(3, width // 2, kernel_size=3, stride=2, padding=1, bias=False)
        self.bn1 = nn.BatchNorm2d(width // 2)
        self.conv2 = nn.Conv2d(width // 2, width // 2, kernel_size=3, padding=1, bias=False)
        self.bn2 = nn.BatchNorm2d(width // 2)
        self.conv3 = nn.Conv2d(width // 2, width, kernel_size=3, padding=1, bias=False)
        self.bn3 = nn.BatchNorm2d(width)
        self.avgpool = nn.AvgPool2d(2)
        self.relu = nn.ReLU(inplace=True)

        # residual layers
        self._inplanes = width  # this is a *mutable* variable used during construction
        self.layer1 = self._make_layer(width, layers[0])
        self.layer2 = self._make_layer(width * 2, layers[1], stride=2)
        self.layer3 = self._make_layer(width * 4, layers[2], stride=2)
        self.layer4 = self._make_layer(width * 8, layers[3], stride=2)

        embed_dim = width * 32  # the ResNet feature dimension
        self.attnpool = AttentionPool2d(input_resolution // 32, embed_dim, heads, output_dim)

    def _make_layer(self, planes, blocks, stride=1):
        layers = [Bottleneck(self._inplanes, planes, stride)]

        self._inplanes = planes * Bottleneck.expansion
        for _ in range(1, blocks):
            layers.append(Bottleneck(self._inplanes, planes))

        return nn.Sequential(*layers)

    def forward(self, x):
        def stem(x):
            for conv, bn in [(self.conv1, self.bn1), (self.conv2, self.bn2), (self.conv3, self.bn3)]:
                x = self.relu(bn(conv(x)))
            x = self.avgpool(x)
            return x

        x = x.type(self.conv1.weight.dtype)
        x = stem(x)
        x = self.layer1(x)
        x = self.layer2(x)
        x = self.layer3(x)
        x = self.layer4(x)
        x = self.attnpool(x)

        return x
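
The constants `input_resolution // 32` and `width * 32` in the constructor follow from the strides and channel expansions of the stages. The sketch below traces spatial size and channel count through the stem and the four residual stages using plain arithmetic. The helper names `conv_out` and `modified_resnet_shapes` are hypothetical, and the defaults (224, 64) are taken from the constructor signature above.

```python
def conv_out(size, kernel, stride, padding):
    """Output size of a convolution along one spatial axis."""
    return (size + 2 * padding - kernel) // stride + 1


def modified_resnet_shapes(input_resolution=224, width=64):
    """Return (name, spatial_size, channels) after the stem and each stage."""
    size = conv_out(input_resolution, kernel=3, stride=2, padding=1)  # conv1
    size = conv_out(size, kernel=3, stride=1, padding=1)              # conv2
    size = conv_out(size, kernel=3, stride=1, padding=1)              # conv3
    size //= 2                                                        # AvgPool2d(2)
    shapes = [("stem", size, width)]
    for i, stride in enumerate([1, 2, 2, 2]):
        # The first Bottleneck of a stage applies AvgPool2d(stride);
        # stride 1 leaves the size unchanged.
        size //= stride
        channels = width * (2 ** i) * 4  # planes * Bottleneck.expansion
        shapes.append((f"layer{i + 1}", size, channels))
    return shapes


for name, size, channels in modified_resnet_shapes():
    print(name, size, channels)
```

For the default arguments the last stage ends at a 7 x 7 grid with 2048 channels, matching `224 // 32` and `64 * 32`.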


class LayerNorm(nn.LayerNorm):
    """Subclass torch's LayerNorm to handle fp16."""

    def forward(self, x: torch.Tensor):
        orig_type = x.dtype
        ret = super().forward(x.type(torch.float32))
        return ret.type(orig_type)


class QuickGELU(nn.Module):
    def forward(self, x: torch.Tensor):
        return x * torch.sigmoid(1.702 * x)
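
`QuickGELU` uses the sigmoid form `x * sigmoid(1.702 * x)`, a common approximation of the GELU activation. As a rough check of how close the two are, the sketch below compares that form with the erf-based GELU definition using only the standard library. The function names are hypothetical and the scan range of [-6, 6] is an arbitrary choice for illustration.

```python
import math


def quick_gelu(x):
    """Sigmoid-based form used by QuickGELU above."""
    return x * (1.0 / (1.0 + math.exp(-1.702 * x)))


def gelu_exact(x):
    """GELU defined through the Gaussian CDF: x * Phi(x)."""
    return 0.5 * x * (1.0 + math.erf(x / math.sqrt(2.0)))


# Largest absolute gap on a grid of step 0.01 over [-6, 6].
max_gap = max(abs(quick_gelu(i / 100) - gelu_exact(i / 100)) for i in range(-600, 601))
print(f"max |quick_gelu - gelu| on [-6, 6]: {max_gap:.4f}")
```

The gap is small but not zero, so the two activations should not be treated as interchangeable when exact numerical agreement with a set of trained weights matters.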


class ResidualAttentionBlock(nn.Module):
    def __init__(self, d_model: int, n_head: int, attn_mask: torch.Tensor = None):
        super().__init__()

        self.attn = nn.MultiheadAttention(d_model, n_head)
        self.ln_1 = LayerNorm(d_model)
        self.mlp = nn.Sequential(OrderedDict([
            ("c_fc", nn.Linear(d_model, d_model * 4)),
            ("gelu", QuickGELU()),
            ("c_proj", nn.Linear(d_model * 4, d_model))
        ]))
        self.ln_2 = LayerNorm(d_model)
        self.attn_mask = attn_mask

    def attention(self, x: torch.Tensor):
        self.attn_mask = self.attn_mask.to(dtype=x.dtype, device=x.device) if self.attn_mask is not None else None
        return self.attn(x, x, x, need_weights=False, attn_mask=self.attn_mask)[0]

    def forward(self, x: torch.Tensor):
        x = x + self.attention(self.ln_1(x))
        x = x + self.mlp(self.ln_2(x))
        return x


class Transformer(nn.Module):
    def __init__(self, width: int, layers: int, heads: int, attn_mask: torch.Tensor = None):
        super().__init__()
        self.width = width
        self.layers = layers
        self.resblocks = nn.Sequential(*[ResidualAttentionBlock(width, heads, attn_mask) for _ in range(layers)])

    def forward(self, x: torch.Tensor):
        return self.resblocks(x)


class VisionTransformer(nn.Module):
    def __init__(self, input_resolution: int, patch_size: int, width: int, layers: int, heads: int, output_dim: int):
        super().__init__()
        self.input_resolution = input_resolution
        self.output_dim = output_dim
        self.conv1 = nn.Conv2d(in_channels=3, out_channels=width, kernel_size=patch_size, stride=patch_size, bias=False)

        scale = width ** -0.5
        self.class_embedding = nn.Parameter(scale * torch.randn(width))
        self.positional_embedding = nn.Parameter(scale * torch.randn((input_resolution // patch_size) ** 2 + 1, width))
        self.ln_pre = LayerNorm(width)

        self.transformer = Transformer(width, layers, heads)

        self.ln_post = LayerNorm(width)
        self.proj = nn.Parameter(scale * torch.randn(width, output_dim))

    def forward(self, x: torch.Tensor):
        x = self.conv1(x)  # shape = [*, width, grid, grid]
        x = x.reshape(x.shape[0], x.shape[1], -1)  # shape = [*, width, grid ** 2]
        x = x.permute(0, 2, 1)  # shape = [*, grid ** 2, width]
        x = torch.cat([self.class_embedding.to(x.dtype) + torch.zeros(x.shape[0], 1, x.shape[-1], dtype=x.dtype, device=x.device), x], dim=1)  # shape = [*, grid ** 2 + 1, width]
        x = x + self.positional_embedding.to(x.dtype)
        x = self.ln_pre(x)

        x = x.permute(1, 0, 2)  # NLD -> LND
        x = self.transformer(x)
        x = x.permute(1, 0, 2)  # LND -> NLD

        x = self.ln_post(x[:, 0, :])

        if self.proj is not None:
            x = x @ self.proj

        return x
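
The positional embedding above has `(input_resolution // patch_size) ** 2 + 1` rows: one per image patch plus one for the class token. A small sketch of that arithmetic follows. The helper name `vit_sequence_length` is hypothetical, and the resolution and patch sizes in the usage lines are illustrative values rather than settings stated in this commit.

```python
def vit_sequence_length(input_resolution, patch_size):
    """Number of tokens fed to the transformer: patches plus the class token."""
    grid = input_resolution // patch_size  # patches per side after the strided conv
    return grid ** 2 + 1


# Illustrative values only: a 224-pixel input with 32- and 16-pixel patches.
print(vit_sequence_length(224, 32))
print(vit_sequence_length(224, 16))
```

Halving the patch size roughly quadruples the sequence length, which is the main driver of attention cost in this encoder.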


class CLIP(nn.Module):
    def __init__(self,
                 embed_dim: int,
                 # vision
                 image_resolution: int,
                 vision_layers: Union[Tuple[int, int, int, int], int],
                 vision_width: int,
                 vision_patch_size: int,
                 # text
                 context_length: int,
                 vocab_size: int,
                 transformer_width: int,
                 transformer_heads: int,
                 transformer_layers: int
                 ):
        super().__init__()

        self.context_length = context_length

        if isinstance(vision_layers, (tuple, list)):
            vision_heads = vision_width * 32 // 64
            self.visual = ModifiedResNet(
                layers=vision_layers,
                output_dim=embed_dim,
                heads=vision_heads,
                input_resolution=image_resolution,
                width=vision_width
            )
        else:
            vision_heads = vision_width // 64
            self.visual = VisionTransformer(
                input_resolution=image_resolution,
                patch_size=vision_patch_size,
                width=vision_width,
                layers=vision_layers,
                heads=vision_heads,
                output_dim=embed_dim
            )

        self.transformer = Transformer(
            width=transformer_width,
            layers=transformer_layers,
            heads=transformer_heads,
            attn_mask=self.build_attention_mask()
        )

        self.vocab_size = vocab_size
        self.token_embedding = nn.Embedding(vocab_size, transformer_width)
        self.positional_embedding = nn.Parameter(torch.empty(self.context_length, transformer_width))
        self.ln_final = LayerNorm(transformer_width)

        self.text_projection = nn.Parameter(torch.empty(transformer_width, embed_dim))
        self.logit_scale = nn.Parameter(torch.ones([]) * np.log(1 / 0.07))

        self.initialize_parameters()

    def initialize_parameters(self):
        nn.init.normal_(self.token_embedding.weight, std=0.02)
        nn.init.normal_(self.positional_embedding, std=0.01)

        if isinstance(self.visual, ModifiedResNet):
            if self.visual.attnpool is not None:
                std = self.visual.attnpool.c_proj.in_features ** -0.5
                nn.init.normal_(self.visual.attnpool.q_proj.weight, std=std)
                nn.init.normal_(self.visual.attnpool.k_proj.weight, std=std)
                nn.init.normal_(self.visual.attnpool.v_proj.weight, std=std)
                nn.init.normal_(self.visual.attnpool.c_proj.weight, std=std)

            for resnet_block in [self.visual.layer1, self.visual.layer2, self.visual.layer3, self.visual.layer4]:
                for name, param in resnet_block.named_parameters():

|

| 309 |

+