lodeil committed
Commit · e3e7ac5
1 Parent(s): 9ad5abe

Retrieve document structure for space

app.py ADDED
@@ -0,0 +1,104 @@
+import os
+
+os.system('pip install pyyaml==5.1')
+# workaround: install old version of pytorch since detectron2 hasn't released packages for pytorch 1.9 (issue: https://github.com/facebookresearch/detectron2/issues/3158)
+os.system('pip install torch==1.8.0+cu101 torchvision==0.9.0+cu101 -f https://download.pytorch.org/whl/torch_stable.html')
+
+# install detectron2 that matches pytorch 1.8
+# See https://detectron2.readthedocs.io/tutorials/install.html for instructions
+os.system('pip install -q detectron2 -f https://dl.fbaipublicfiles.com/detectron2/wheels/cu101/torch1.8/index.html')
+
+## install PyTesseract
+os.system('pip install -q pytesseract')
+
+
+import gradio as gr
+import numpy as np
+from transformers import LayoutLMv2Processor, LayoutLMv2ForTokenClassification
+from datasets import load_dataset
+from PIL import Image, ImageDraw, ImageFont
+import pytesseract
+
+# If you don't have tesseract executable in your PATH, include the following:
+# pytesseract.pytesseract.tesseract_cmd = r'D:\\softwares\\Tesseract-OCR\\tesseract.exe'
+
+
+processor = LayoutLMv2Processor.from_pretrained("microsoft/layoutlmv2-base-uncased")
+model = LayoutLMv2ForTokenClassification.from_pretrained("nielsr/layoutlmv2-finetuned-funsd")
+
+# load image example
+dataset = load_dataset("nielsr/funsd", split="test")
+image = Image.open(dataset[0]["image_path"]).convert("RGB")
+image = Image.open("./doc.png")
+image.save("document.png")
+# define id2label, label2color
+labels = dataset.features['ner_tags'].feature.names
+id2label = {v: k for v, k in enumerate(labels)}
+label2color = {'question':'blue', 'answer':'green', 'header':'orange', 'other':'violet'}
+
+def unnormalize_box(bbox, width, height):
+    return [
+        width * (bbox[0] / 1000),
+        height * (bbox[1] / 1000),
+        width * (bbox[2] / 1000),
+        height * (bbox[3] / 1000),
+    ]
+
+def iob_to_label(label):
+    label = label[2:]
+    if not label:
+        return 'other'
+    return label
+
+def process_image(image):
+    width, height = image.size
+
+    # encode
+    encoding = processor(image, truncation=True, return_offsets_mapping=True, return_tensors="pt")
+    offset_mapping = encoding.pop('offset_mapping')
+
+    # forward pass
+    outputs = model(**encoding)
+
+    # get predictions
+    predictions = outputs.logits.argmax(-1).squeeze().tolist()
+    token_boxes = encoding.bbox.squeeze().tolist()
+
+    # only keep non-subword predictions
+    is_subword = np.array(offset_mapping.squeeze().tolist())[:,0] != 0
+    true_predictions = [id2label[pred] for idx, pred in enumerate(predictions) if not is_subword[idx]]
+    true_boxes = [unnormalize_box(box, width, height) for idx, box in enumerate(token_boxes) if not is_subword[idx]]
+
+    # draw predictions over the image
+    draw = ImageDraw.Draw(image)
+    font = ImageFont.load_default()
+    for prediction, box in zip(true_predictions, true_boxes):
+        predicted_label = iob_to_label(prediction).lower()
+        draw.rectangle(box, outline=label2color[predicted_label])
+        draw.text((box[0]+10, box[1]-10), text=predicted_label, fill=label2color[predicted_label], font=font)
+
+    return image
+
+
+title = "🧱 Document structure inference"
+description = f""" ℹ️ \n Retrieve document structure from image. \n Tag detected boxes as QUESTION - ANSWER - HEADER - OTHER. \n Upload your own document or use the example below.
+"""
+article = "layoutlmv2-base-uncased model"
+examples =[['document.png']]
+
+css = ".output-image, .input-image {height: 40rem !important; width: 100% !important;}"
+#css = "@media screen and (max-width: 600px) { .output_image, .input_image {height:20rem !important; width: 100% !important;} }"
+# css = ".output_image, .input_image {height: 600px !important}"
+
+css = ".image-preview {height: auto !important;}"
+
+iface = gr.Interface(fn=process_image,
+                     inputs=gr.inputs.Image(type="pil"),
+                     outputs=gr.outputs.Image(type="pil", label="annotated image"),
+                     title=title,
+                     description=description,
+                     article=article,
+                     examples=examples,
+                     css=css,
+                     enable_queue=True)
+iface.launch(debug=True)
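
The post-processing in `process_image` rests on three small steps: dropping tokens whose character offset does not start at 0, stripping the IOB prefix from each predicted tag, and scaling boxes from the 0-1000 grid back to pixel coordinates. A minimal sketch of those steps follows, runnable without the model. The `offsets`, `tags`, and `boxes` values are made-up stand-ins for real processor and model output, and the plain list comprehension replaces the NumPy expression used in `app.py`.

```python
def unnormalize_box(bbox, width, height):
    """Scale a box from the 0-1000 grid back to pixel coordinates."""
    return [
        width * (bbox[0] / 1000),
        height * (bbox[1] / 1000),
        width * (bbox[2] / 1000),
        height * (bbox[3] / 1000),
    ]


def iob_to_label(label):
    """Strip the two-character 'B-' / 'I-' prefix; a bare 'O' becomes 'other'."""
    label = label[2:]
    return label if label else "other"


# Hypothetical stand-ins for processor/model output (not real predictions).
offsets = [(0, 0), (0, 4), (4, 7), (0, 5), (0, 0)]  # (start, end) per token
tags = ["O", "B-QUESTION", "I-QUESTION", "B-ANSWER", "O"]
boxes = [
    [0, 0, 0, 0],
    [100, 50, 400, 100],
    [100, 50, 400, 100],
    [500, 50, 900, 100],
    [1000, 1000, 1000, 1000],
]
width, height = 800, 600  # assumed image size in pixels

# A token that starts mid-word has a non-zero start offset.
is_subword = [start != 0 for start, _ in offsets]

kept = [
    (iob_to_label(tag).lower(), unnormalize_box(box, width, height))
    for tag, box, sub in zip(tags, boxes, is_subword)
    if not sub
]

for label, box in kept:
    print(label, box)
```

Tokens with offset `(0, 0)` also pass this filter, since the test only looks at whether the start offset is non-zero.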

|

books.png

ADDED

|

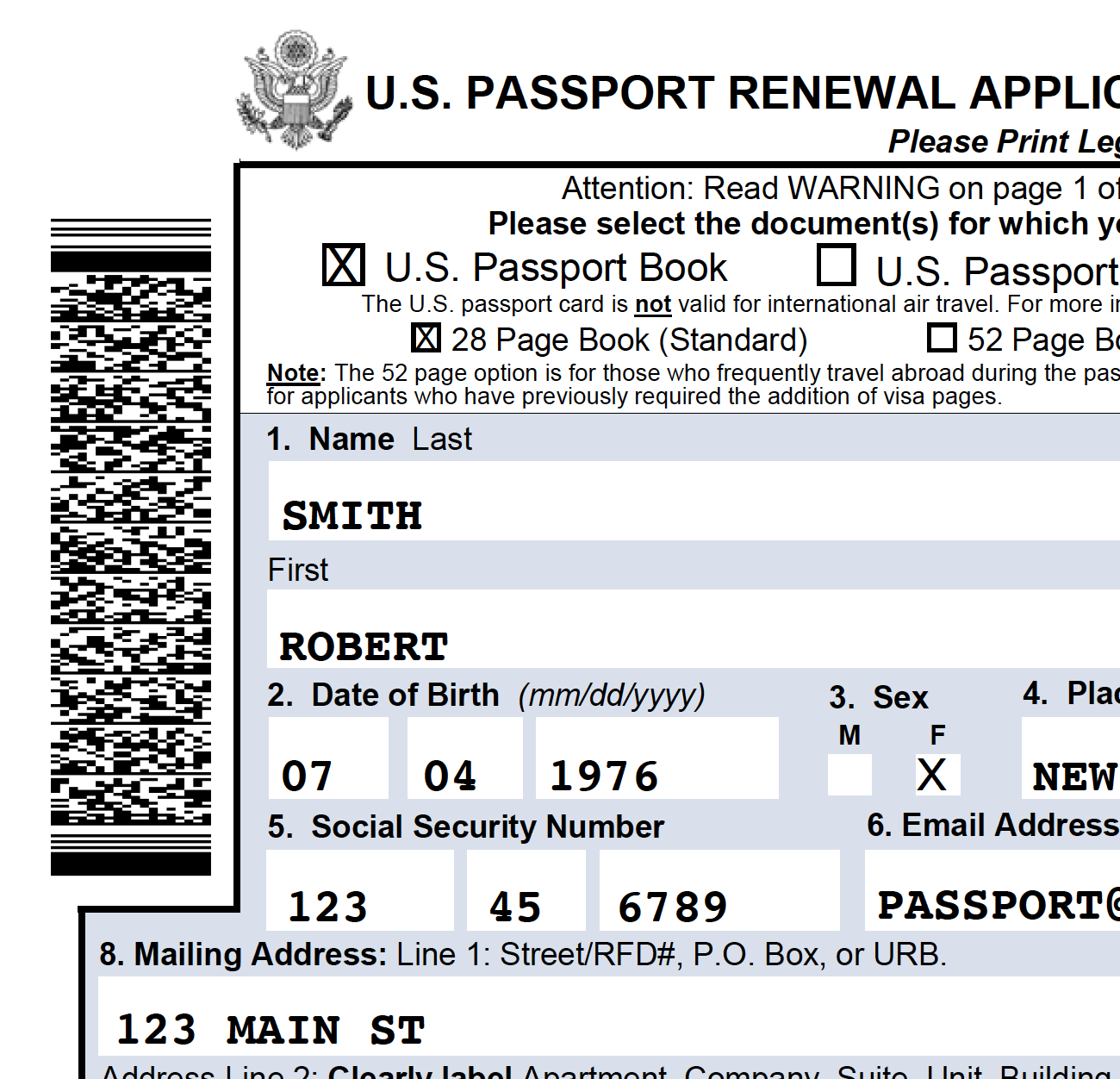

doc.png

ADDED

|

requirements.txt ADDED
@@ -0,0 +1,6 @@
+gradio
+Pillow
+numpy
+datasets
+torch
+transformers
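
`requirements.txt` covers only part of what `app.py` needs; `pyyaml`, the pinned `torch`/`torchvision` builds, `detectron2`, and `pytesseract` are installed at startup through `os.system`. That call returns the exit status instead of raising, so a failed install would only surface later as an import error. One possible alternative, sketched here under the assumption that installing at startup is kept, is to run pip through `subprocess.run` with `check=True`. The helper names `pip_install_command` and `pip_install` are hypothetical and not part of this commit.

```python
import subprocess
import sys


def pip_install_command(*packages, find_links=None, quiet=False):
    """Build a pip command that targets the running interpreter."""
    cmd = [sys.executable, "-m", "pip", "install"]
    if quiet:
        cmd.append("-q")
    cmd.extend(packages)
    if find_links:
        cmd.extend(["-f", find_links])
    return cmd


def pip_install(*packages, **options):
    """Run pip and raise subprocess.CalledProcessError on a non-zero exit."""
    subprocess.run(pip_install_command(*packages, **options), check=True)


# Build (but do not run) the command matching the detectron2 line in app.py.
cmd = pip_install_command(
    "detectron2",
    find_links="https://dl.fbaipublicfiles.com/detectron2/wheels/cu101/torch1.8/index.html",
    quiet=True,
)
print(" ".join(cmd))
```

Calling `pip_install("pytesseract", quiet=True)` would then stop the script at the failing install rather than at a later `import`.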
