Spaces:

Running

Running

init the space

Browse filesThis view is limited to 50 files because it contains too many changes.

See raw diff

- README.md +5 -4

- SegVol_v1.pth +3 -0

- __pycache__/utils.cpython-39.pyc +0 -0

- app.py +308 -0

- model/LICENSE +21 -0

- model/README.md +74 -0

- model/__pycache__/inference_cpu.cpython-39.pyc +0 -0

- model/asset/FLARE22_Tr_0002_0000.nii.gz +3 -0

- model/asset/FLARE22_Tr_0005_0000.nii.gz +3 -0

- model/asset/FLARE22_Tr_0034_0000.nii.gz +3 -0

- model/asset/FLARE22_Tr_0045_0000.nii.gz +3 -0

- model/asset/model.png +0 -0

- model/asset/overview back.png +0 -0

- model/asset/overview.png +0 -0

- model/config/clip/config.json +157 -0

- model/config/clip/special_tokens_map.json +1 -0

- model/config/clip/tokenizer.json +0 -0

- model/config/clip/tokenizer_config.json +1 -0

- model/config/clip/vocab.json +0 -0

- model/config/config_demo.json +8 -0

- model/data_process/__pycache__/demo_data_process.cpython-39.pyc +0 -0

- model/data_process/demo_data_process.py +91 -0

- model/inference_cpu.py +173 -0

- model/inference_demo.py +219 -0

- model/network/__pycache__/model.cpython-39.pyc +0 -0

- model/network/model.py +91 -0

- model/script/inference_demo.sh +8 -0

- model/segment_anything_volumetric/.ipynb_checkpoints/build_sam-checkpoint.py +172 -0

- model/segment_anything_volumetric/__init__.py +12 -0

- model/segment_anything_volumetric/__pycache__/__init__.cpython-310.pyc +0 -0

- model/segment_anything_volumetric/__pycache__/__init__.cpython-39.pyc +0 -0

- model/segment_anything_volumetric/__pycache__/automatic_mask_generator.cpython-310.pyc +0 -0

- model/segment_anything_volumetric/__pycache__/automatic_mask_generator.cpython-39.pyc +0 -0

- model/segment_anything_volumetric/__pycache__/build_sam.cpython-310.pyc +0 -0

- model/segment_anything_volumetric/__pycache__/build_sam.cpython-39.pyc +0 -0

- model/segment_anything_volumetric/__pycache__/predictor.cpython-310.pyc +0 -0

- model/segment_anything_volumetric/__pycache__/predictor.cpython-39.pyc +0 -0

- model/segment_anything_volumetric/automatic_mask_generator.py +372 -0

- model/segment_anything_volumetric/build_sam.py +111 -0

- model/segment_anything_volumetric/modeling/.ipynb_checkpoints/image_encoder_swin-checkpoint.py +709 -0

- model/segment_anything_volumetric/modeling/.ipynb_checkpoints/prompt_encoder-checkpoint.py +232 -0

- model/segment_anything_volumetric/modeling/__init__.py +11 -0

- model/segment_anything_volumetric/modeling/__pycache__/__init__.cpython-310.pyc +0 -0

- model/segment_anything_volumetric/modeling/__pycache__/__init__.cpython-39.pyc +0 -0

- model/segment_anything_volumetric/modeling/__pycache__/common.cpython-310.pyc +0 -0

- model/segment_anything_volumetric/modeling/__pycache__/common.cpython-39.pyc +0 -0

- model/segment_anything_volumetric/modeling/__pycache__/image_encoder.cpython-310.pyc +0 -0

- model/segment_anything_volumetric/modeling/__pycache__/image_encoder.cpython-39.pyc +0 -0

- model/segment_anything_volumetric/modeling/__pycache__/image_encoder_swin.cpython-39.pyc +0 -0

- model/segment_anything_volumetric/modeling/__pycache__/mask_decoder.cpython-310.pyc +0 -0

README.md

CHANGED

|

@@ -1,12 +1,13 @@

|

|

| 1 |

---

|

| 2 |

title: SegVol

|

| 3 |

-

emoji:

|

| 4 |

-

colorFrom:

|

| 5 |

-

colorTo:

|

| 6 |

sdk: streamlit

|

| 7 |

-

sdk_version: 1.

|

| 8 |

app_file: app.py

|

| 9 |

pinned: false

|

|

|

|

| 10 |

---

|

| 11 |

|

| 12 |

Check out the configuration reference at https://huggingface.co/docs/hub/spaces-config-reference

|

|

|

|

| 1 |

---

|

| 2 |

title: SegVol

|

| 3 |

+

emoji: 🏢

|

| 4 |

+

colorFrom: indigo

|

| 5 |

+

colorTo: blue

|

| 6 |

sdk: streamlit

|

| 7 |

+

sdk_version: 1.28.2

|

| 8 |

app_file: app.py

|

| 9 |

pinned: false

|

| 10 |

+

license: mit

|

| 11 |

---

|

| 12 |

|

| 13 |

Check out the configuration reference at https://huggingface.co/docs/hub/spaces-config-reference

|

SegVol_v1.pth

ADDED

|

@@ -0,0 +1,3 @@

|

|

|

|

|

|

|

|

|

|

|

|

|

| 1 |

+

version https://git-lfs.github.com/spec/v1

|

| 2 |

+

oid sha256:b751dc95f1a0c0c6086c1e6fa7f8a17bbb87635e5226e15f5d156fbd364dbb85

|

| 3 |

+

size 1660308695

|

__pycache__/utils.cpython-39.pyc

ADDED

|

Binary file (3.85 kB). View file

|

|

|

app.py

ADDED

|

@@ -0,0 +1,308 @@

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

| 1 |

+

import streamlit as st

|

| 2 |

+

from streamlit_drawable_canvas import st_canvas

|

| 3 |

+

from streamlit_image_coordinates import streamlit_image_coordinates

|

| 4 |

+

|

| 5 |

+

|

| 6 |

+

from model.data_process.demo_data_process import process_ct_gt

|

| 7 |

+

import numpy as np

|

| 8 |

+

import matplotlib.pyplot as plt

|

| 9 |

+

from PIL import Image, ImageDraw

|

| 10 |

+

import monai.transforms as transforms

|

| 11 |

+

from utils import show_points, make_fig, reflect_points_into_model, initial_rectangle, reflect_json_data_to_3D_box, reflect_box_into_model, run

|

| 12 |

+

|

| 13 |

+

print('script run')

|

| 14 |

+

|

| 15 |

+

#############################################

|

| 16 |

+

# init session_state

|

| 17 |

+

if 'option' not in st.session_state:

|

| 18 |

+

st.session_state.option = None

|

| 19 |

+

if 'text_prompt' not in st.session_state:

|

| 20 |

+

st.session_state.text_prompt = None

|

| 21 |

+

|

| 22 |

+

if 'reset_demo_case' not in st.session_state:

|

| 23 |

+

st.session_state.reset_demo_case = False

|

| 24 |

+

|

| 25 |

+

if 'preds_3D' not in st.session_state:

|

| 26 |

+

st.session_state.preds_3D = None

|

| 27 |

+

|

| 28 |

+

if 'data_item' not in st.session_state:

|

| 29 |

+

st.session_state.data_item = None

|

| 30 |

+

|

| 31 |

+

if 'points' not in st.session_state:

|

| 32 |

+

st.session_state.points = []

|

| 33 |

+

|

| 34 |

+

if 'use_text_prompt' not in st.session_state:

|

| 35 |

+

st.session_state.use_text_prompt = False

|

| 36 |

+

|

| 37 |

+

if 'use_point_prompt' not in st.session_state:

|

| 38 |

+

st.session_state.use_point_prompt = False

|

| 39 |

+

|

| 40 |

+

if 'use_box_prompt' not in st.session_state:

|

| 41 |

+

st.session_state.use_box_prompt = False

|

| 42 |

+

|

| 43 |

+

if 'rectangle_3Dbox' not in st.session_state:

|

| 44 |

+

st.session_state.rectangle_3Dbox = [0,0,0,0,0,0]

|

| 45 |

+

|

| 46 |

+

if 'irregular_box' not in st.session_state:

|

| 47 |

+

st.session_state.irregular_box = False

|

| 48 |

+

|

| 49 |

+

if 'running' not in st.session_state:

|

| 50 |

+

st.session_state.running = False

|

| 51 |

+

|

| 52 |

+

if 'transparency' not in st.session_state:

|

| 53 |

+

st.session_state.transparency = 0.25

|

| 54 |

+

|

| 55 |

+

case_list = [

|

| 56 |

+

'model/asset/FLARE22_Tr_0002_0000.nii.gz',

|

| 57 |

+

'model/asset/FLARE22_Tr_0005_0000.nii.gz',

|

| 58 |

+

'model/asset/FLARE22_Tr_0034_0000.nii.gz',

|

| 59 |

+

'model/asset/FLARE22_Tr_0045_0000.nii.gz'

|

| 60 |

+

]

|

| 61 |

+

|

| 62 |

+

#############################################

|

| 63 |

+

|

| 64 |

+

#############################################

|

| 65 |

+

# reset functions

|

| 66 |

+

def clear_prompts():

|

| 67 |

+

st.session_state.points = []

|

| 68 |

+

st.session_state.rectangle_3Dbox = [0,0,0,0,0,0]

|

| 69 |

+

|

| 70 |

+

def reset_demo_case():

|

| 71 |

+

st.session_state.data_item = None

|

| 72 |

+

st.session_state.reset_demo_case = True

|

| 73 |

+

clear_prompts()

|

| 74 |

+

|

| 75 |

+

def clear_file():

|

| 76 |

+

st.session_state.option = None

|

| 77 |

+

process_ct_gt.clear()

|

| 78 |

+

reset_demo_case()

|

| 79 |

+

clear_prompts()

|

| 80 |

+

|

| 81 |

+

#############################################

|

| 82 |

+

|

| 83 |

+

st.image(Image.open('model/asset/overview back.png'), use_column_width=True)

|

| 84 |

+

|

| 85 |

+

github_col, arxive_col = st.columns(2)

|

| 86 |

+

|

| 87 |

+

with github_col:

|

| 88 |

+

st.write('GitHub repo:https://github.com/BAAI-DCAI/SegVol')

|

| 89 |

+

|

| 90 |

+

with arxive_col:

|

| 91 |

+

st.write('Paper:https://arxiv.org/abs/2311.13385')

|

| 92 |

+

|

| 93 |

+

|

| 94 |

+

# modify demo case here

|

| 95 |

+

demo_type = st.radio(

|

| 96 |

+

"Demo case source",

|

| 97 |

+

["Select", "Upload"],

|

| 98 |

+

on_change=clear_file

|

| 99 |

+

)

|

| 100 |

+

|

| 101 |

+

if demo_type=="Select":

|

| 102 |

+

uploaded_file = st.selectbox(

|

| 103 |

+

"Select a demo case",

|

| 104 |

+

case_list,

|

| 105 |

+

index=None,

|

| 106 |

+

placeholder="Select a demo case...",

|

| 107 |

+

on_change=reset_demo_case

|

| 108 |

+

)

|

| 109 |

+

else:

|

| 110 |

+

uploaded_file = st.file_uploader("Upload demo case(nii.gz)", type='nii.gz', on_change=reset_demo_case)

|

| 111 |

+

|

| 112 |

+

st.session_state.option = uploaded_file

|

| 113 |

+

|

| 114 |

+

if st.session_state.option is not None and \

|

| 115 |

+

st.session_state.reset_demo_case or (st.session_state.data_item is None and st.session_state.option is not None):

|

| 116 |

+

|

| 117 |

+

st.session_state.data_item = process_ct_gt(st.session_state.option)

|

| 118 |

+

st.session_state.reset_demo_case = False

|

| 119 |

+

st.session_state.preds_3D = None

|

| 120 |

+

|

| 121 |

+

prompt_col1, prompt_col2 = st.columns(2)

|

| 122 |

+

|

| 123 |

+

with prompt_col1:

|

| 124 |

+

st.session_state.use_text_prompt = st.toggle('Sematic prompt')

|

| 125 |

+

text_prompt_type = st.radio(

|

| 126 |

+

"Sematic prompt type",

|

| 127 |

+

["Predefined", "Custom"],

|

| 128 |

+

disabled=(not st.session_state.use_text_prompt)

|

| 129 |

+

)

|

| 130 |

+

if text_prompt_type == "Predefined":

|

| 131 |

+

pre_text = st.selectbox(

|

| 132 |

+

"Predefined anatomical category:",

|

| 133 |

+

['liver', 'right kidney', 'spleen', 'pancreas', 'aorta', 'inferior vena cava', 'right adrenal gland', 'left adrenal gland', 'gallbladder', 'esophagus', 'stomach', 'duodenum', 'left kidney'],

|

| 134 |

+

index=None,

|

| 135 |

+

disabled=(not st.session_state.use_text_prompt)

|

| 136 |

+

)

|

| 137 |

+

else:

|

| 138 |

+

pre_text = st.text_input('Enter an Anatomical word or phrase:', None, max_chars=20,

|

| 139 |

+

disabled=(not st.session_state.use_text_prompt))

|

| 140 |

+

if pre_text is None or len(pre_text) > 0:

|

| 141 |

+

st.session_state.text_prompt = pre_text

|

| 142 |

+

else:

|

| 143 |

+

st.session_state.text_prompt = None

|

| 144 |

+

|

| 145 |

+

|

| 146 |

+

with prompt_col2:

|

| 147 |

+

spatial_prompt_on = st.toggle('Spatial prompt', on_change=clear_prompts)

|

| 148 |

+

spatial_prompt = st.radio(

|

| 149 |

+

"Spatial prompt type",

|

| 150 |

+

["Point prompt", "Box prompt"],

|

| 151 |

+

on_change=clear_prompts,

|

| 152 |

+

disabled=(not spatial_prompt_on))

|

| 153 |

+

|

| 154 |

+

if spatial_prompt == "Point prompt":

|

| 155 |

+

st.session_state.use_point_prompt = True

|

| 156 |

+

st.session_state.use_box_prompt = False

|

| 157 |

+

elif spatial_prompt == "Box prompt":

|

| 158 |

+

st.session_state.use_box_prompt = True

|

| 159 |

+

st.session_state.use_point_prompt = False

|

| 160 |

+

else:

|

| 161 |

+

st.session_state.use_point_prompt = False

|

| 162 |

+

st.session_state.use_box_prompt = False

|

| 163 |

+

|

| 164 |

+

if not spatial_prompt_on:

|

| 165 |

+

st.session_state.use_point_prompt = False

|

| 166 |

+

st.session_state.use_box_prompt = False

|

| 167 |

+

|

| 168 |

+

if not st.session_state.use_text_prompt:

|

| 169 |

+

st.session_state.text_prompt = None

|

| 170 |

+

|

| 171 |

+

if st.session_state.option is None:

|

| 172 |

+

st.write('please select demo case first')

|

| 173 |

+

else:

|

| 174 |

+

image_3D = st.session_state.data_item['z_image'][0].numpy()

|

| 175 |

+

col_control1, col_control2 = st.columns(2)

|

| 176 |

+

|

| 177 |

+

with col_control1:

|

| 178 |

+

selected_index_z = st.slider('X-Y view', 0, image_3D.shape[0] - 1, 162, key='xy', disabled=st.session_state.running)

|

| 179 |

+

|

| 180 |

+

with col_control2:

|

| 181 |

+

selected_index_y = st.slider('X-Z view', 0, image_3D.shape[1] - 1, 162, key='xz', disabled=st.session_state.running)

|

| 182 |

+

if st.session_state.use_box_prompt:

|

| 183 |

+

top, bottom = st.select_slider(

|

| 184 |

+

'Top and bottom of box',

|

| 185 |

+

options=range(0, 325),

|

| 186 |

+

value=(0, 324),

|

| 187 |

+

disabled=st.session_state.running

|

| 188 |

+

)

|

| 189 |

+

st.session_state.rectangle_3Dbox[0] = top

|

| 190 |

+

st.session_state.rectangle_3Dbox[3] = bottom

|

| 191 |

+

col_image1, col_image2 = st.columns(2)

|

| 192 |

+

|

| 193 |

+

if st.session_state.preds_3D is not None:

|

| 194 |

+

st.session_state.transparency = st.slider('Mask opacity', 0.0, 1.0, 0.25, disabled=st.session_state.running)

|

| 195 |

+

|

| 196 |

+

with col_image1:

|

| 197 |

+

|

| 198 |

+

image_z_array = image_3D[selected_index_z]

|

| 199 |

+

|

| 200 |

+

preds_z_array = None

|

| 201 |

+

if st.session_state.preds_3D is not None:

|

| 202 |

+

preds_z_array = st.session_state.preds_3D[selected_index_z]

|

| 203 |

+

|

| 204 |

+

image_z = make_fig(image_z_array, preds_z_array, st.session_state.points, selected_index_z, 'xy')

|

| 205 |

+

|

| 206 |

+

|

| 207 |

+

if st.session_state.use_point_prompt:

|

| 208 |

+

value_xy = streamlit_image_coordinates(image_z, width=325)

|

| 209 |

+

|

| 210 |

+

if value_xy is not None:

|

| 211 |

+

point_ax_xy = (selected_index_z, value_xy['y'], value_xy['x'])

|

| 212 |

+

if len(st.session_state.points) >= 3:

|

| 213 |

+

st.warning('Max point num is 3', icon="⚠️")

|

| 214 |

+

elif point_ax_xy not in st.session_state.points:

|

| 215 |

+

st.session_state.points.append(point_ax_xy)

|

| 216 |

+

print('point_ax_xy add rerun')

|

| 217 |

+

st.rerun()

|

| 218 |

+

elif st.session_state.use_box_prompt:

|

| 219 |

+

canvas_result_xy = st_canvas(

|

| 220 |

+

fill_color="rgba(255, 165, 0, 0.3)", # Fixed fill color with some opacity

|

| 221 |

+

stroke_width=3,

|

| 222 |

+

stroke_color='#2909F1',

|

| 223 |

+

background_image=image_z,

|

| 224 |

+

update_streamlit=True,

|

| 225 |

+

height=325,

|

| 226 |

+

width=325,

|

| 227 |

+

drawing_mode='transform',

|

| 228 |

+

point_display_radius=0,

|

| 229 |

+

key="canvas_xy",

|

| 230 |

+

initial_drawing=initial_rectangle,

|

| 231 |

+

display_toolbar=True

|

| 232 |

+

)

|

| 233 |

+

try:

|

| 234 |

+

print(canvas_result_xy.json_data['objects'][0]['angle'])

|

| 235 |

+

if canvas_result_xy.json_data['objects'][0]['angle'] != 0:

|

| 236 |

+

st.warning('Rotating is undefined behavior', icon="⚠️")

|

| 237 |

+

st.session_state.irregular_box = True

|

| 238 |

+

else:

|

| 239 |

+

st.session_state.irregular_box = False

|

| 240 |

+

reflect_json_data_to_3D_box(canvas_result_xy.json_data, view='xy')

|

| 241 |

+

except:

|

| 242 |

+

print('exception')

|

| 243 |

+

pass

|

| 244 |

+

else:

|

| 245 |

+

st.image(image_z, use_column_width=False)

|

| 246 |

+

|

| 247 |

+

with col_image2:

|

| 248 |

+

image_y_array = image_3D[:, selected_index_y, :]

|

| 249 |

+

|

| 250 |

+

preds_y_array = None

|

| 251 |

+

if st.session_state.preds_3D is not None:

|

| 252 |

+

preds_y_array = st.session_state.preds_3D[:, selected_index_y, :]

|

| 253 |

+

|

| 254 |

+

image_y = make_fig(image_y_array, preds_y_array, st.session_state.points, selected_index_y, 'xz')

|

| 255 |

+

|

| 256 |

+

if st.session_state.use_point_prompt:

|

| 257 |

+

value_yz = streamlit_image_coordinates(image_y, width=325)

|

| 258 |

+

|

| 259 |

+

if value_yz is not None:

|

| 260 |

+

point_ax_xz = (value_yz['y'], selected_index_y, value_yz['x'])

|

| 261 |

+

if len(st.session_state.points) >= 3:

|

| 262 |

+

st.warning('Max point num is 3', icon="⚠️")

|

| 263 |

+

elif point_ax_xz not in st.session_state.points:

|

| 264 |

+

st.session_state.points.append(point_ax_xz)

|

| 265 |

+

print('point_ax_xz add rerun')

|

| 266 |

+

st.rerun()

|

| 267 |

+

elif st.session_state.use_box_prompt:

|

| 268 |

+

if st.session_state.rectangle_3Dbox[1] <= selected_index_y and selected_index_y <= st.session_state.rectangle_3Dbox[4]:

|

| 269 |

+

draw = ImageDraw.Draw(image_y)

|

| 270 |

+

#rectangle xz view (upper-left and lower-right)

|

| 271 |

+

rectangle_coords = [(st.session_state.rectangle_3Dbox[2], st.session_state.rectangle_3Dbox[0]),

|

| 272 |

+

(st.session_state.rectangle_3Dbox[5], st.session_state.rectangle_3Dbox[3])]

|

| 273 |

+

# Draw the rectangle on the image

|

| 274 |

+

draw.rectangle(rectangle_coords, outline='#2909F1', width=3)

|

| 275 |

+

st.image(image_y, use_column_width=False)

|

| 276 |

+

else:

|

| 277 |

+

st.image(image_y, use_column_width=False)

|

| 278 |

+

|

| 279 |

+

|

| 280 |

+

col1, col2, col3 = st.columns(3)

|

| 281 |

+

|

| 282 |

+

with col1:

|

| 283 |

+

if st.button("Clear", use_container_width=True,

|

| 284 |

+

disabled=(st.session_state.option is None or (len(st.session_state.points)==0 and not st.session_state.use_box_prompt and st.session_state.preds_3D is None))):

|

| 285 |

+

clear_prompts()

|

| 286 |

+

st.session_state.preds_3D = None

|

| 287 |

+

st.rerun()

|

| 288 |

+

|

| 289 |

+

with col3:

|

| 290 |

+

run_button_name = 'Run'if not st.session_state.running else 'Running'

|

| 291 |

+

if st.button(run_button_name, type="primary", use_container_width=True,

|

| 292 |

+

disabled=(

|

| 293 |

+

st.session_state.data_item is None or

|

| 294 |

+

(st.session_state.text_prompt is None and len(st.session_state.points) == 0 and st.session_state.use_box_prompt is False) or

|

| 295 |

+

st.session_state.irregular_box or

|

| 296 |

+

st.session_state.running

|

| 297 |

+

)):

|

| 298 |

+

st.session_state.running = True

|

| 299 |

+

st.rerun()

|

| 300 |

+

|

| 301 |

+

# if len(st.session_state.points) > 0:

|

| 302 |

+

# st.write(st.session_state.points)

|

| 303 |

+

|

| 304 |

+

if st.session_state.running:

|

| 305 |

+

st.session_state.running = False

|

| 306 |

+

with st.status("Running...", expanded=False) as status:

|

| 307 |

+

run()

|

| 308 |

+

st.rerun()

|

model/LICENSE

ADDED

|

@@ -0,0 +1,21 @@

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

| 1 |

+

MIT License

|

| 2 |

+

|

| 3 |

+

Copyright (c) 2023 BAAI-DCAI

|

| 4 |

+

|

| 5 |

+

Permission is hereby granted, free of charge, to any person obtaining a copy

|

| 6 |

+

of this software and associated documentation files (the "Software"), to deal

|

| 7 |

+

in the Software without restriction, including without limitation the rights

|

| 8 |

+

to use, copy, modify, merge, publish, distribute, sublicense, and/or sell

|

| 9 |

+

copies of the Software, and to permit persons to whom the Software is

|

| 10 |

+

furnished to do so, subject to the following conditions:

|

| 11 |

+

|

| 12 |

+

The above copyright notice and this permission notice shall be included in all

|

| 13 |

+

copies or substantial portions of the Software.

|

| 14 |

+

|

| 15 |

+

THE SOFTWARE IS PROVIDED "AS IS", WITHOUT WARRANTY OF ANY KIND, EXPRESS OR

|

| 16 |

+

IMPLIED, INCLUDING BUT NOT LIMITED TO THE WARRANTIES OF MERCHANTABILITY,

|

| 17 |

+

FITNESS FOR A PARTICULAR PURPOSE AND NONINFRINGEMENT. IN NO EVENT SHALL THE

|

| 18 |

+

AUTHORS OR COPYRIGHT HOLDERS BE LIABLE FOR ANY CLAIM, DAMAGES OR OTHER

|

| 19 |

+

LIABILITY, WHETHER IN AN ACTION OF CONTRACT, TORT OR OTHERWISE, ARISING FROM,

|

| 20 |

+

OUT OF OR IN CONNECTION WITH THE SOFTWARE OR THE USE OR OTHER DEALINGS IN THE

|

| 21 |

+

SOFTWARE.

|

model/README.md

ADDED

|

@@ -0,0 +1,74 @@

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

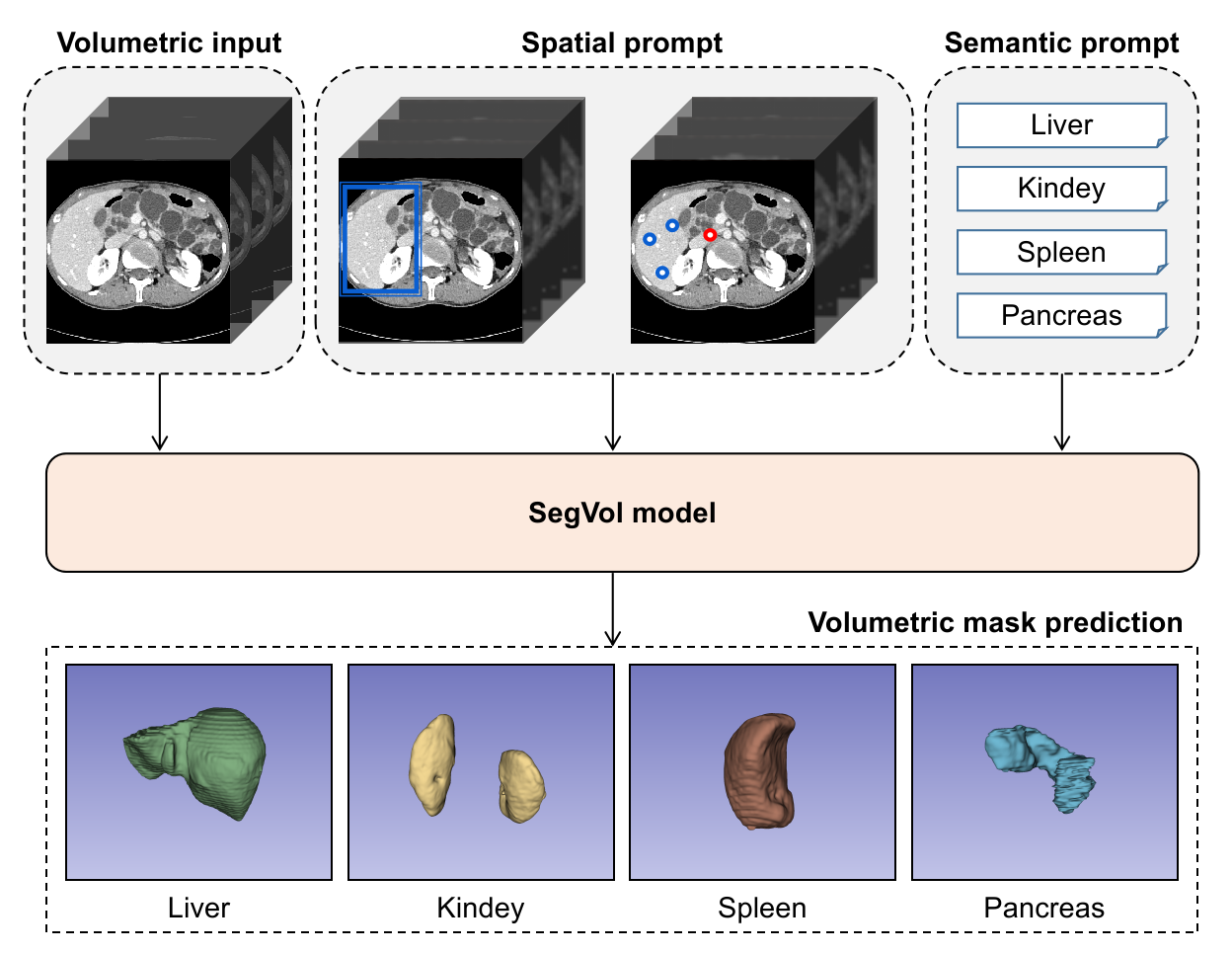

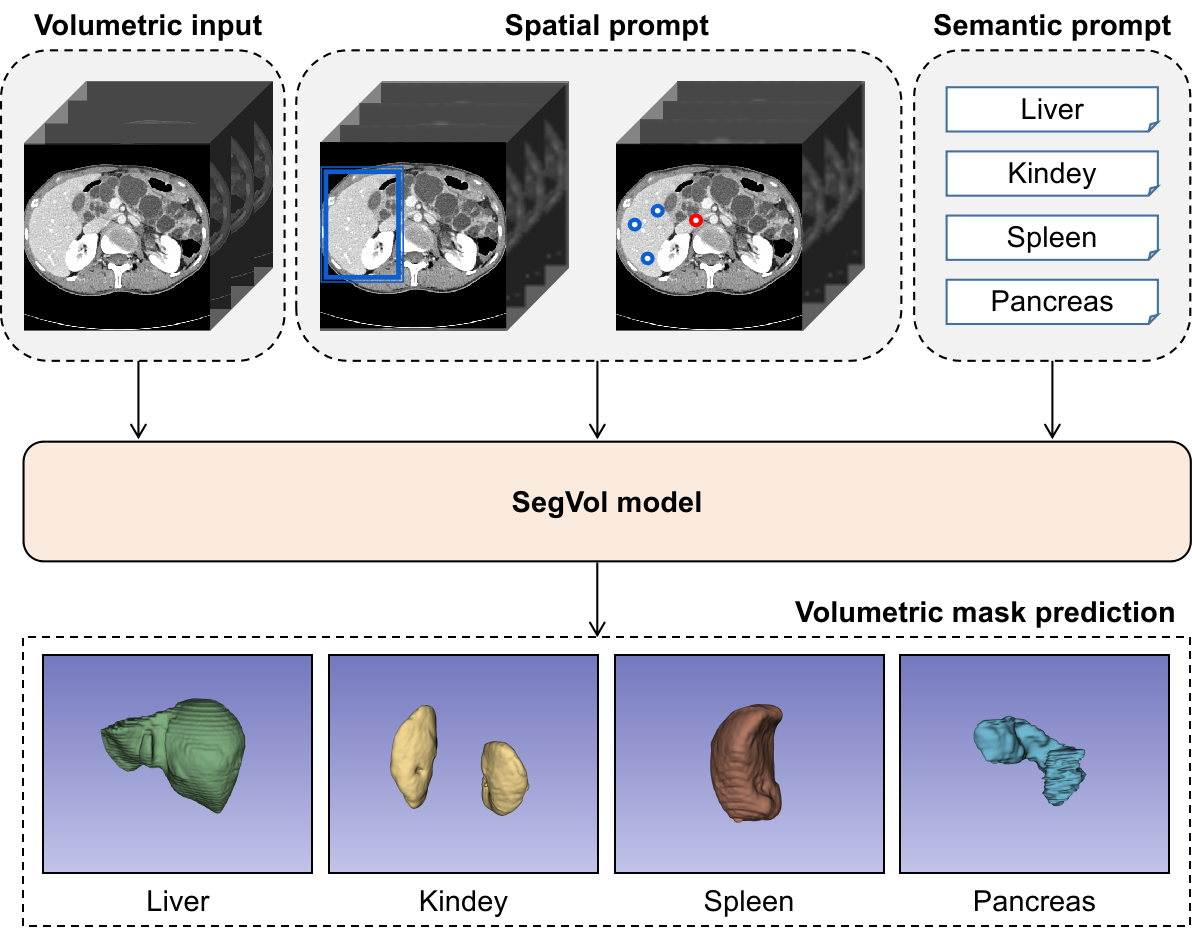

| 1 |

+

# SegVol: Universal and Interactive Volumetric Medical Image Segmentation

|

| 2 |

+

This repo is the official implementation of [SegVol: Universal and Interactive Volumetric Medical Image Segmentation](https://arxiv.org/abs/2311.13385).

|

| 3 |

+

|

| 4 |

+

## News🚀

|

| 5 |

+

(2023.11.24) *You can download weight files of SegVol and ViT(CTs pre-train) [here](https://drive.google.com/drive/folders/1TEJtgctH534Ko5r4i79usJvqmXVuLf54?usp=drive_link).* 🔥

|

| 6 |

+

|

| 7 |

+

(2023.11.23) *The brief introduction and instruction have been uploaded.*

|

| 8 |

+

|

| 9 |

+

(2023.11.23) *The inference demo code has been uploaded.*

|

| 10 |

+

|

| 11 |

+

(2023.11.22) *The first edition of our paper has been uploaded to arXiv.* 📃

|

| 12 |

+

|

| 13 |

+

## Introduction

|

| 14 |

+

<img src="https://github.com/BAAI-DCAI/SegVol/blob/main/asset/overview.png" width="60%" height="60%">

|

| 15 |

+

|

| 16 |

+

The SegVol is a universal and interactive model for volumetric medical image segmentation. SegVol accepts **point**, **box** and **text** prompt while output volumetric segmentation. By training on 90k unlabeled Computed Tomography (CT) volumes and 6k labeled CTs, this foundation model supports the segmentation of over 200 anatomical categories.

|

| 17 |

+

|

| 18 |

+

We will release SegVol's **inference code**, **training code**, **model params** and **ViT pre-training params** (pre-training is performed over 2,000 epochs on 96k CTs).

|

| 19 |

+

|

| 20 |

+

## Usage

|

| 21 |

+

### Requirements

|

| 22 |

+

The [pytorch v1.11.0](https://pytorch.org/get-started/previous-versions/) (or higher virsion) is needed first. Following install key requirements using commands:

|

| 23 |

+

|

| 24 |

+

```

|

| 25 |

+

pip install 'monai[all]==0.9.0'

|

| 26 |

+

pip install einops==0.6.1

|

| 27 |

+

pip install transformers==4.18.0

|

| 28 |

+

pip install matplotlib

|

| 29 |

+

```

|

| 30 |

+

### Config and run demo script

|

| 31 |

+

1. You can download the demo case [here](https://drive.google.com/drive/folders/1TEJtgctH534Ko5r4i79usJvqmXVuLf54?usp=drive_link), or download the whole demo dataset [AbdomenCT-1K](https://github.com/JunMa11/AbdomenCT-1K) and choose any demo case you want.

|

| 32 |

+

2. Please set CT path and Ground Truth path of the case in the [config_demo.json](https://github.com/BAAI-DCAI/SegVol/blob/main/config/config_demo.json).

|

| 33 |

+

3. After that, config the [inference_demo.sh](https://github.com/BAAI-DCAI/SegVol/blob/main/script/inference_demo.sh) file for execution:

|

| 34 |

+

|

| 35 |

+

- `$segvol_ckpt`: the path of SegVol's checkpoint (Download from [here](https://drive.google.com/drive/folders/1TEJtgctH534Ko5r4i79usJvqmXVuLf54?usp=drive_link)).

|

| 36 |

+

|

| 37 |

+

- `$work_dir`: any path of folder you want to save the log files and visualizaion results.

|

| 38 |

+

|

| 39 |

+

4. Finally, you can control the **prompt type**, **zoom-in-zoom-out mechanism** and **visualizaion switch** [here](https://github.com/BAAI-DCAI/SegVol/blob/35f3ff9c943a74f630e6948051a1fe21aaba91bc/inference_demo.py#L208C11-L208C11).

|

| 40 |

+

5. Now, just run `bash script/inference_demo.sh` to infer your demo case.

|

| 41 |

+

|

| 42 |

+

## Citation

|

| 43 |

+

If you find this repository helpful, please consider citing:

|

| 44 |

+

```

|

| 45 |

+

@misc{du2023segvol,

|

| 46 |

+

title={SegVol: Universal and Interactive Volumetric Medical Image Segmentation},

|

| 47 |

+

author={Yuxin Du and Fan Bai and Tiejun Huang and Bo Zhao},

|

| 48 |

+

year={2023},

|

| 49 |

+

eprint={2311.13385},

|

| 50 |

+

archivePrefix={arXiv},

|

| 51 |

+

primaryClass={cs.CV}

|

| 52 |

+

}

|

| 53 |

+

```

|

| 54 |

+

|

| 55 |

+

## Acknowledgement

|

| 56 |

+

Thanks for the following amazing works:

|

| 57 |

+

|

| 58 |

+

[HuggingFace](https://huggingface.co/).

|

| 59 |

+

|

| 60 |

+

[CLIP](https://github.com/openai/CLIP).

|

| 61 |

+

|

| 62 |

+

[MONAI](https://github.com/Project-MONAI/MONAI).

|

| 63 |

+

|

| 64 |

+

[Image by brgfx](https://www.freepik.com/free-vector/anatomical-structure-human-bodies_26353260.htm) on Freepik.

|

| 65 |

+

|

| 66 |

+

[Image by muammark](https://www.freepik.com/free-vector/people-icon-collection_1157380.htm#query=user&position=2&from_view=search&track=sph) on Freepik.

|

| 67 |

+

|

| 68 |

+

[Image by pch.vector](https://www.freepik.com/free-vector/different-phone-hand-gestures-set_9649376.htm#query=Vector%20touch%20screen%20hand%20gestures&position=4&from_view=search&track=ais) on Freepik.

|

| 69 |

+

|

| 70 |

+

[Image by starline](https://www.freepik.com/free-vector/set-three-light-bulb-represent-effective-business-idea-concept_37588597.htm#query=idea&position=0&from_view=search&track=sph) on Freepik.

|

| 71 |

+

|

| 72 |

+

|

| 73 |

+

|

| 74 |

+

|

model/__pycache__/inference_cpu.cpython-39.pyc

ADDED

|

Binary file (4.67 kB). View file

|

|

|

model/asset/FLARE22_Tr_0002_0000.nii.gz

ADDED

|

@@ -0,0 +1,3 @@

|

|

|

|

|

|

|

|

|

|

|

|

|

| 1 |

+

version https://git-lfs.github.com/spec/v1

|

| 2 |

+

oid sha256:eb16eced003524fa005e28b2822c0b53503f1223d758cdf72528fad359aa10ba

|

| 3 |

+

size 30611274

|

model/asset/FLARE22_Tr_0005_0000.nii.gz

ADDED

|

@@ -0,0 +1,3 @@

|

|

|

|

|

|

|

|

|

|

|

|

|

| 1 |

+

version https://git-lfs.github.com/spec/v1

|

| 2 |

+

oid sha256:2be5019bfc7e805d5e24785bcd44ffe7720e13e38b2a3124ad25b454811b221c

|

| 3 |

+

size 26615527

|

model/asset/FLARE22_Tr_0034_0000.nii.gz

ADDED

|

@@ -0,0 +1,3 @@

|

|

|

|

|

|

|

|

|

|

|

|

|

| 1 |

+

version https://git-lfs.github.com/spec/v1

|

| 2 |

+

oid sha256:023c5d06ea2a6c8866c1e214ecee06a4447a8d0c50225142cdfdbbccc2bf8c66

|

| 3 |

+

size 28821917

|

model/asset/FLARE22_Tr_0045_0000.nii.gz

ADDED

|

@@ -0,0 +1,3 @@

|

|

|

|

|

|

|

|

|

|

|

|

|

| 1 |

+

version https://git-lfs.github.com/spec/v1

|

| 2 |

+

oid sha256:336b3719af673fd6fafe89d7d5d95d5f18239a9faccde9753703fc1465f43736

|

| 3 |

+

size 32885093

|

model/asset/model.png

ADDED

|

model/asset/overview back.png

ADDED

|

model/asset/overview.png

ADDED

|

model/config/clip/config.json

ADDED

|

@@ -0,0 +1,157 @@

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

| 1 |

+

{

|

| 2 |

+

"_name_or_path": "openai/clip-vit-base-patch32",

|

| 3 |

+

"architectures": [

|

| 4 |

+

"CLIPModel"

|

| 5 |

+

],

|

| 6 |

+

"initializer_factor": 1.0,

|

| 7 |

+

"logit_scale_init_value": 2.6592,

|

| 8 |

+

"model_type": "clip",

|

| 9 |

+

"projection_dim": 512,

|

| 10 |

+

"text_config": {

|

| 11 |

+

"_name_or_path": "",

|

| 12 |

+

"add_cross_attention": false,

|

| 13 |

+

"architectures": null,

|

| 14 |

+

"attention_dropout": 0.0,

|

| 15 |

+

"bad_words_ids": null,

|

| 16 |

+

"bos_token_id": 0,

|

| 17 |

+

"chunk_size_feed_forward": 0,

|

| 18 |

+

"cross_attention_hidden_size": null,

|

| 19 |

+

"decoder_start_token_id": null,

|

| 20 |

+

"diversity_penalty": 0.0,

|

| 21 |

+

"do_sample": false,

|

| 22 |

+

"dropout": 0.0,

|

| 23 |

+

"early_stopping": false,

|

| 24 |

+

"encoder_no_repeat_ngram_size": 0,

|

| 25 |

+

"eos_token_id": 2,

|

| 26 |

+

"finetuning_task": null,

|

| 27 |

+

"forced_bos_token_id": null,

|

| 28 |

+

"forced_eos_token_id": null,

|

| 29 |

+

"hidden_act": "quick_gelu",

|

| 30 |

+

"hidden_size": 512,

|

| 31 |

+

"id2label": {

|

| 32 |

+

"0": "LABEL_0",

|

| 33 |

+

"1": "LABEL_1"

|

| 34 |

+

},

|

| 35 |

+

"initializer_factor": 1.0,

|

| 36 |

+

"initializer_range": 0.02,

|

| 37 |

+

"intermediate_size": 2048,

|

| 38 |

+

"is_decoder": false,

|

| 39 |

+

"is_encoder_decoder": false,

|

| 40 |

+

"label2id": {

|

| 41 |

+

"LABEL_0": 0,

|

| 42 |

+

"LABEL_1": 1

|

| 43 |

+

},

|

| 44 |

+

"layer_norm_eps": 1e-05,

|

| 45 |

+

"length_penalty": 1.0,

|

| 46 |

+

"max_length": 20,

|

| 47 |

+

"max_position_embeddings": 77,

|

| 48 |

+

"min_length": 0,

|

| 49 |

+

"model_type": "clip_text_model",

|

| 50 |

+

"no_repeat_ngram_size": 0,

|

| 51 |

+

"num_attention_heads": 8,

|

| 52 |

+

"num_beam_groups": 1,

|

| 53 |

+

"num_beams": 1,

|

| 54 |

+

"num_hidden_layers": 12,

|

| 55 |

+

"num_return_sequences": 1,

|

| 56 |

+

"output_attentions": false,

|

| 57 |

+

"output_hidden_states": false,

|

| 58 |

+

"output_scores": false,

|

| 59 |

+

"pad_token_id": 1,

|

| 60 |

+

"prefix": null,

|

| 61 |

+

"projection_dim": 512,

|

| 62 |

+

"problem_type": null,

|

| 63 |

+

"pruned_heads": {},

|

| 64 |

+

"remove_invalid_values": false,

|

| 65 |

+

"repetition_penalty": 1.0,

|

| 66 |

+

"return_dict": true,

|

| 67 |

+

"return_dict_in_generate": false,

|

| 68 |

+

"sep_token_id": null,

|

| 69 |

+

"task_specific_params": null,

|

| 70 |

+

"temperature": 1.0,

|

| 71 |

+

"tie_encoder_decoder": false,

|

| 72 |

+

"tie_word_embeddings": true,

|

| 73 |

+

"tokenizer_class": null,

|

| 74 |

+

"top_k": 50,

|

| 75 |

+

"top_p": 1.0,

|

| 76 |

+

"torch_dtype": null,

|

| 77 |

+

"torchscript": false,

|

| 78 |

+

"transformers_version": "4.16.0.dev0",

|

| 79 |

+

"use_bfloat16": false,

|

| 80 |

+

"vocab_size": 49408

|

| 81 |

+

},

|

| 82 |

+

"text_config_dict": null,

|

| 83 |

+

"transformers_version": null,

|

| 84 |

+

"vision_config": {

|

| 85 |

+

"_name_or_path": "",

|

| 86 |

+

"add_cross_attention": false,

|

| 87 |

+

"architectures": null,

|

| 88 |

+

"attention_dropout": 0.0,

|

| 89 |

+

"bad_words_ids": null,

|

| 90 |

+

"bos_token_id": null,

|

| 91 |

+

"chunk_size_feed_forward": 0,

|

| 92 |

+

"cross_attention_hidden_size": null,

|

| 93 |

+

"decoder_start_token_id": null,

|

| 94 |

+

"diversity_penalty": 0.0,

|

| 95 |

+

"do_sample": false,

|

| 96 |

+

"dropout": 0.0,

|

| 97 |

+

"early_stopping": false,

|

| 98 |

+

"encoder_no_repeat_ngram_size": 0,

|

| 99 |

+

"eos_token_id": null,

|

| 100 |

+

"finetuning_task": null,

|

| 101 |

+

"forced_bos_token_id": null,

|

| 102 |

+

"forced_eos_token_id": null,

|

| 103 |

+

"hidden_act": "quick_gelu",

|

| 104 |

+

"hidden_size": 768,

|

| 105 |

+

"id2label": {

|

| 106 |

+

"0": "LABEL_0",

|

| 107 |

+

"1": "LABEL_1"

|

| 108 |

+

},

|

| 109 |

+

"image_size": 224,

|

| 110 |

+

"initializer_factor": 1.0,

|

| 111 |

+

"initializer_range": 0.02,

|

| 112 |

+

"intermediate_size": 3072,

|

| 113 |

+

"is_decoder": false,

|

| 114 |

+

"is_encoder_decoder": false,

|

| 115 |

+

"label2id": {

|

| 116 |

+

"LABEL_0": 0,

|

| 117 |

+

"LABEL_1": 1

|

| 118 |

+

},

|

| 119 |

+

"layer_norm_eps": 1e-05,

|

| 120 |

+

"length_penalty": 1.0,

|

| 121 |

+

"max_length": 20,

|

| 122 |

+

"min_length": 0,

|

| 123 |

+

"model_type": "clip_vision_model",

|

| 124 |

+

"no_repeat_ngram_size": 0,

|

| 125 |

+

"num_attention_heads": 12,

|

| 126 |

+

"num_beam_groups": 1,

|

| 127 |

+

"num_beams": 1,

|

| 128 |

+

"num_hidden_layers": 12,

|

| 129 |

+

"num_return_sequences": 1,

|

| 130 |

+

"output_attentions": false,

|

| 131 |

+

"output_hidden_states": false,

|

| 132 |

+

"output_scores": false,

|

| 133 |

+

"pad_token_id": null,

|

| 134 |

+

"patch_size": 32,

|

| 135 |

+

"prefix": null,

|

| 136 |

+

"projection_dim" : 512,

|

| 137 |

+

"problem_type": null,

|

| 138 |

+

"pruned_heads": {},

|

| 139 |

+

"remove_invalid_values": false,

|

| 140 |

+

"repetition_penalty": 1.0,

|

| 141 |

+

"return_dict": true,

|

| 142 |

+

"return_dict_in_generate": false,

|

| 143 |

+

"sep_token_id": null,

|

| 144 |

+

"task_specific_params": null,

|

| 145 |

+

"temperature": 1.0,

|

| 146 |

+

"tie_encoder_decoder": false,

|

| 147 |

+

"tie_word_embeddings": true,

|

| 148 |

+

"tokenizer_class": null,

|

| 149 |

+

"top_k": 50,

|

| 150 |

+

"top_p": 1.0,

|

| 151 |

+

"torch_dtype": null,

|

| 152 |

+

"torchscript": false,

|

| 153 |

+

"transformers_version": "4.16.0.dev0",

|

| 154 |

+

"use_bfloat16": false

|

| 155 |

+

},

|

| 156 |

+

"vision_config_dict": null

|

| 157 |

+

}

|

model/config/clip/special_tokens_map.json

ADDED

|

@@ -0,0 +1 @@

|

|

|

|

|

|

|

| 1 |

+

{"bos_token": {"content": "<|startoftext|>", "single_word": false, "lstrip": false, "rstrip": false, "normalized": true}, "eos_token": {"content": "<|endoftext|>", "single_word": false, "lstrip": false, "rstrip": false, "normalized": true}, "unk_token": {"content": "<|endoftext|>", "single_word": false, "lstrip": false, "rstrip": false, "normalized": true}, "pad_token": "<|endoftext|>"}

|

model/config/clip/tokenizer.json

ADDED

|

The diff for this file is too large to render.

See raw diff

|

|

|

model/config/clip/tokenizer_config.json

ADDED

|

@@ -0,0 +1 @@

|

|

|

|

|

|

|

| 1 |

+

{"unk_token": {"content": "<|endoftext|>", "single_word": false, "lstrip": false, "rstrip": false, "normalized": true, "__type": "AddedToken"}, "bos_token": {"content": "<|startoftext|>", "single_word": false, "lstrip": false, "rstrip": false, "normalized": true, "__type": "AddedToken"}, "eos_token": {"content": "<|endoftext|>", "single_word": false, "lstrip": false, "rstrip": false, "normalized": true, "__type": "AddedToken"}, "pad_token": "<|endoftext|>", "add_prefix_space": false, "errors": "replace", "do_lower_case": true, "name_or_path": "./clip_ViT_B_32/"}

|

model/config/clip/vocab.json

ADDED

|

The diff for this file is too large to render.

See raw diff

|

|

|

model/config/config_demo.json

ADDED

|

@@ -0,0 +1,8 @@

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

| 1 |

+

{

|

| 2 |

+

"dataset_name": "AbdomenCT-1k",

|

| 3 |

+

"categories": ["liver", "kidney", "spleen", "pancreas"],

|

| 4 |

+

"demo_case": {

|

| 5 |

+

"ct_path": "path/to/Case_image",

|

| 6 |

+

"gt_path": "path/to/Case_label"

|

| 7 |

+

}

|

| 8 |

+

}

|

model/data_process/__pycache__/demo_data_process.cpython-39.pyc

ADDED

|

Binary file (3.26 kB). View file

|

|

|

model/data_process/demo_data_process.py

ADDED

|

@@ -0,0 +1,91 @@

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

| 1 |

+

import numpy as np

|

| 2 |

+

import monai.transforms as transforms

|

| 3 |

+

import streamlit as st

|

| 4 |

+

import tempfile

|

| 5 |

+

|

| 6 |

+

class MinMaxNormalization(transforms.Transform):

|

| 7 |

+

def __call__(self, data):

|

| 8 |

+

d = dict(data)

|

| 9 |

+

k = "image"

|

| 10 |

+

d[k] = d[k] - d[k].min()

|

| 11 |

+

d[k] = d[k] / np.clip(d[k].max(), a_min=1e-8, a_max=None)

|

| 12 |

+

return d

|

| 13 |

+

|

| 14 |

+

class DimTranspose(transforms.Transform):

|

| 15 |

+

def __init__(self, keys):

|

| 16 |

+

self.keys = keys

|

| 17 |

+

|

| 18 |

+

def __call__(self, data):

|

| 19 |

+

d = dict(data)

|

| 20 |

+

for key in self.keys:

|

| 21 |

+

d[key] = np.swapaxes(d[key], -1, -3)

|

| 22 |

+

return d

|

| 23 |

+

|

| 24 |

+

class ForegroundNormalization(transforms.Transform):

|

| 25 |

+

def __init__(self, keys):

|

| 26 |

+

self.keys = keys

|

| 27 |

+

|

| 28 |

+

def __call__(self, data):

|

| 29 |

+

d = dict(data)

|

| 30 |

+

|

| 31 |

+

for key in self.keys:

|

| 32 |

+

d[key] = self.normalize(d[key])

|

| 33 |

+

return d

|

| 34 |

+

|

| 35 |

+

def normalize(self, ct_narray):

|

| 36 |

+

ct_voxel_ndarray = ct_narray.copy()

|

| 37 |

+

ct_voxel_ndarray = ct_voxel_ndarray.flatten()

|

| 38 |

+

thred = np.mean(ct_voxel_ndarray)

|

| 39 |

+

voxel_filtered = ct_voxel_ndarray[(ct_voxel_ndarray > thred)]

|

| 40 |

+

upper_bound = np.percentile(voxel_filtered, 99.95)

|

| 41 |

+

lower_bound = np.percentile(voxel_filtered, 00.05)

|

| 42 |

+

mean = np.mean(voxel_filtered)

|

| 43 |

+

std = np.std(voxel_filtered)

|

| 44 |

+

### transform ###

|

| 45 |

+

ct_narray = np.clip(ct_narray, lower_bound, upper_bound)

|

| 46 |

+

ct_narray = (ct_narray - mean) / max(std, 1e-8)

|

| 47 |

+

return ct_narray

|

| 48 |

+

|

| 49 |

+

@st.cache_data

|

| 50 |

+

def process_ct_gt(case_path, spatial_size=(32,256,256)):

|

| 51 |

+

if case_path is None:

|

| 52 |

+

return None

|

| 53 |

+

print('Data preprocessing...')

|

| 54 |

+

# transform

|

| 55 |

+

img_loader = transforms.LoadImage(dtype=np.float32)

|

| 56 |

+

transform = transforms.Compose(

|

| 57 |

+

[

|

| 58 |

+

transforms.Orientationd(keys=["image"], axcodes="RAS"),

|

| 59 |

+

ForegroundNormalization(keys=["image"]),

|

| 60 |

+

DimTranspose(keys=["image"]),

|

| 61 |

+

MinMaxNormalization(),

|

| 62 |

+

transforms.SpatialPadd(keys=["image"], spatial_size=spatial_size, mode='constant'),

|

| 63 |

+

transforms.CropForegroundd(keys=["image"], source_key="image"),

|

| 64 |

+

transforms.ToTensord(keys=["image"]),

|

| 65 |

+

]

|

| 66 |

+

)

|

| 67 |

+

zoom_out_transform = transforms.Resized(keys=["image"], spatial_size=spatial_size, mode='nearest-exact')

|

| 68 |

+

z_transform = transforms.Resized(keys=["image"], spatial_size=(325,325,325), mode='nearest-exact')

|

| 69 |

+

###

|

| 70 |

+

item = {}

|

| 71 |

+

# generate ct_voxel_ndarray

|

| 72 |

+

if type(case_path) is str:

|

| 73 |

+

ct_voxel_ndarray, _ = img_loader(case_path)

|

| 74 |

+

else:

|

| 75 |

+

bytes_data = case_path.read()

|

| 76 |

+

with tempfile.NamedTemporaryFile(suffix='.nii.gz') as tmp:

|

| 77 |

+

tmp.write(bytes_data)

|

| 78 |

+

tmp.seek(0)

|

| 79 |

+

ct_voxel_ndarray, _ = img_loader(tmp.name)

|

| 80 |

+

ct_voxel_ndarray = np.array(ct_voxel_ndarray).squeeze()

|

| 81 |

+

ct_voxel_ndarray = np.expand_dims(ct_voxel_ndarray, axis=0)

|

| 82 |

+

item['image'] = ct_voxel_ndarray

|

| 83 |

+

|

| 84 |

+

# transform

|

| 85 |

+

item = transform(item)

|

| 86 |

+

item_zoom_out = zoom_out_transform(item)

|

| 87 |

+

item['zoom_out_image'] = item_zoom_out['image']

|

| 88 |

+

|

| 89 |

+

item_z = z_transform(item)

|

| 90 |

+

item['z_image'] = item_z['image']

|

| 91 |

+

return item

|

model/inference_cpu.py

ADDED

|

@@ -0,0 +1,173 @@

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

| 1 |

+

import argparse

|

| 2 |

+

import os

|

| 3 |

+

import torch

|

| 4 |

+

import torch.nn.functional as F

|

| 5 |

+

import json

|

| 6 |

+

import monai.transforms as transforms

|

| 7 |

+

|

| 8 |

+

from model.segment_anything_volumetric import sam_model_registry

|

| 9 |

+

from model.network.model import SegVol

|

| 10 |

+

from model.data_process.demo_data_process import process_ct_gt

|

| 11 |

+

from model.utils.monai_inferers_utils import sliding_window_inference, generate_box, select_points, build_binary_cube, build_binary_points, logits2roi_coor

|

| 12 |

+

from model.utils.visualize import draw_result

|

| 13 |

+

import streamlit as st

|

| 14 |

+

|

| 15 |

+

def set_parse():

|

| 16 |

+

# %% set up parser

|

| 17 |

+

parser = argparse.ArgumentParser()

|

| 18 |

+

parser.add_argument("--test_mode", default=True, type=bool)

|

| 19 |

+

parser.add_argument("--resume", type = str, default = 'SegVol_v1.pth')

|

| 20 |

+

parser.add_argument("-infer_overlap", default=0.0, type=float, help="sliding window inference overlap")

|

| 21 |

+

parser.add_argument("-spatial_size", default=(32, 256, 256), type=tuple)

|

| 22 |

+

parser.add_argument("-patch_size", default=(4, 16, 16), type=tuple)

|

| 23 |

+

parser.add_argument('-work_dir', type=str, default='./work_dir')

|

| 24 |

+

### demo

|

| 25 |

+

parser.add_argument("--clip_ckpt", type = str, default = 'model/config/clip')

|

| 26 |

+

args = parser.parse_args()

|

| 27 |

+

return args

|

| 28 |

+

|

| 29 |

+

def zoom_in_zoom_out(args, segvol_model, image, image_resize, text_prompt, point_prompt, box_prompt):

|

| 30 |

+

image_single_resize = image_resize

|

| 31 |

+