Spaces:

Runtime error

Runtime error

first upload

Browse filesThis view is limited to 50 files because it contains too many changes.

See raw diff

- LICENSE +201 -0

- README.md +214 -12

- app.py +59 -0

- configs/stable-diffusion/app.yaml +87 -0

- configs/stable-diffusion/test_keypose.yaml +87 -0

- configs/stable-diffusion/test_mask.yaml +87 -0

- configs/stable-diffusion/test_mask_sketch.yaml +87 -0

- configs/stable-diffusion/test_sketch.yaml +87 -0

- configs/stable-diffusion/test_sketch_edit.yaml +87 -0

- configs/stable-diffusion/train_keypose.yaml +87 -0

- configs/stable-diffusion/train_mask.yaml +87 -0

- configs/stable-diffusion/train_sketch.yaml +87 -0

- dataset_coco.py +138 -0

- demo/demos.py +82 -0

- demo/model.py +390 -0

- dist_util.py +91 -0

- environment.yaml +31 -0

- examples/edit_cat/edge.png +0 -0

- examples/edit_cat/edge_2.png +0 -0

- examples/edit_cat/im.png +0 -0

- examples/edit_cat/mask.png +0 -0

- examples/keypose/iron.png +0 -0

- examples/seg/dinner.png +0 -0

- examples/seg/motor.png +0 -0

- examples/seg_sketch/edge.png +0 -0

- examples/seg_sketch/mask.png +0 -0

- examples/sketch/car.png +0 -0

- examples/sketch/girl.jpeg +0 -0

- examples/sketch/human.png +0 -0

- examples/sketch/scenery.jpg +0 -0

- examples/sketch/scenery2.jpg +0 -0

- experiments/README.md +0 -0

- gradio_keypose.py +254 -0

- gradio_sketch.py +147 -0

- ldm/data/__init__.py +0 -0

- ldm/data/base.py +23 -0

- ldm/data/imagenet.py +394 -0

- ldm/data/lsun.py +92 -0

- ldm/lr_scheduler.py +98 -0

- ldm/models/autoencoder.py +443 -0

- ldm/models/diffusion/__init__.py +0 -0

- ldm/models/diffusion/classifier.py +267 -0

- ldm/models/diffusion/ddim.py +241 -0

- ldm/models/diffusion/ddpm.py +1446 -0

- ldm/models/diffusion/dpm_solver/__init__.py +1 -0

- ldm/models/diffusion/dpm_solver/dpm_solver.py +1184 -0

- ldm/models/diffusion/dpm_solver/sampler.py +82 -0

- ldm/models/diffusion/plms.py +254 -0

- ldm/modules/attention.py +261 -0

- ldm/modules/diffusionmodules/__init__.py +0 -0

LICENSE

ADDED

|

@@ -0,0 +1,201 @@

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

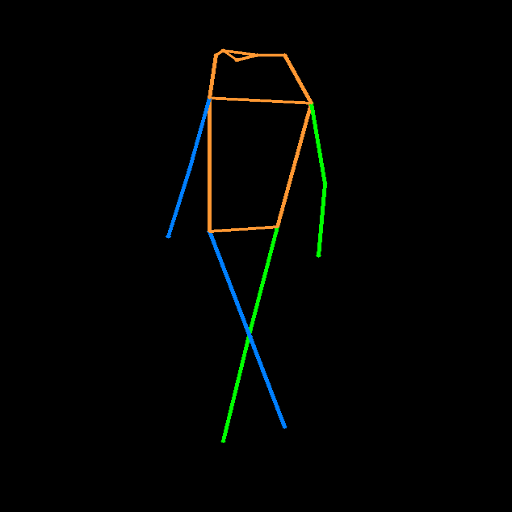

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

| 1 |

+

Apache License

|

| 2 |

+

Version 2.0, January 2004

|

| 3 |

+

http://www.apache.org/licenses/

|

| 4 |

+

|

| 5 |

+

TERMS AND CONDITIONS FOR USE, REPRODUCTION, AND DISTRIBUTION

|

| 6 |

+

|

| 7 |

+

1. Definitions.

|

| 8 |

+

|

| 9 |

+

"License" shall mean the terms and conditions for use, reproduction,

|

| 10 |

+

and distribution as defined by Sections 1 through 9 of this document.

|

| 11 |

+

|

| 12 |

+

"Licensor" shall mean the copyright owner or entity authorized by

|

| 13 |

+

the copyright owner that is granting the License.

|

| 14 |

+

|

| 15 |

+

"Legal Entity" shall mean the union of the acting entity and all

|

| 16 |

+

other entities that control, are controlled by, or are under common

|

| 17 |

+

control with that entity. For the purposes of this definition,

|

| 18 |

+

"control" means (i) the power, direct or indirect, to cause the

|

| 19 |

+

direction or management of such entity, whether by contract or

|

| 20 |

+

otherwise, or (ii) ownership of fifty percent (50%) or more of the

|

| 21 |

+

outstanding shares, or (iii) beneficial ownership of such entity.

|

| 22 |

+

|

| 23 |

+

"You" (or "Your") shall mean an individual or Legal Entity

|

| 24 |

+

exercising permissions granted by this License.

|

| 25 |

+

|

| 26 |

+

"Source" form shall mean the preferred form for making modifications,

|

| 27 |

+

including but not limited to software source code, documentation

|

| 28 |

+

source, and configuration files.

|

| 29 |

+

|

| 30 |

+

"Object" form shall mean any form resulting from mechanical

|

| 31 |

+

transformation or translation of a Source form, including but

|

| 32 |

+

not limited to compiled object code, generated documentation,

|

| 33 |

+

and conversions to other media types.

|

| 34 |

+

|

| 35 |

+

"Work" shall mean the work of authorship, whether in Source or

|

| 36 |

+

Object form, made available under the License, as indicated by a

|

| 37 |

+

copyright notice that is included in or attached to the work

|

| 38 |

+

(an example is provided in the Appendix below).

|

| 39 |

+

|

| 40 |

+

"Derivative Works" shall mean any work, whether in Source or Object

|

| 41 |

+

form, that is based on (or derived from) the Work and for which the

|

| 42 |

+

editorial revisions, annotations, elaborations, or other modifications

|

| 43 |

+

represent, as a whole, an original work of authorship. For the purposes

|

| 44 |

+

of this License, Derivative Works shall not include works that remain

|

| 45 |

+

separable from, or merely link (or bind by name) to the interfaces of,

|

| 46 |

+

the Work and Derivative Works thereof.

|

| 47 |

+

|

| 48 |

+

"Contribution" shall mean any work of authorship, including

|

| 49 |

+

the original version of the Work and any modifications or additions

|

| 50 |

+

to that Work or Derivative Works thereof, that is intentionally

|

| 51 |

+

submitted to Licensor for inclusion in the Work by the copyright owner

|

| 52 |

+

or by an individual or Legal Entity authorized to submit on behalf of

|

| 53 |

+

the copyright owner. For the purposes of this definition, "submitted"

|

| 54 |

+

means any form of electronic, verbal, or written communication sent

|

| 55 |

+

to the Licensor or its representatives, including but not limited to

|

| 56 |

+

communication on electronic mailing lists, source code control systems,

|

| 57 |

+

and issue tracking systems that are managed by, or on behalf of, the

|

| 58 |

+

Licensor for the purpose of discussing and improving the Work, but

|

| 59 |

+

excluding communication that is conspicuously marked or otherwise

|

| 60 |

+

designated in writing by the copyright owner as "Not a Contribution."

|

| 61 |

+

|

| 62 |

+

"Contributor" shall mean Licensor and any individual or Legal Entity

|

| 63 |

+

on behalf of whom a Contribution has been received by Licensor and

|

| 64 |

+

subsequently incorporated within the Work.

|

| 65 |

+

|

| 66 |

+

2. Grant of Copyright License. Subject to the terms and conditions of

|

| 67 |

+

this License, each Contributor hereby grants to You a perpetual,

|

| 68 |

+

worldwide, non-exclusive, no-charge, royalty-free, irrevocable

|

| 69 |

+

copyright license to reproduce, prepare Derivative Works of,

|

| 70 |

+

publicly display, publicly perform, sublicense, and distribute the

|

| 71 |

+

Work and such Derivative Works in Source or Object form.

|

| 72 |

+

|

| 73 |

+

3. Grant of Patent License. Subject to the terms and conditions of

|

| 74 |

+

this License, each Contributor hereby grants to You a perpetual,

|

| 75 |

+

worldwide, non-exclusive, no-charge, royalty-free, irrevocable

|

| 76 |

+

(except as stated in this section) patent license to make, have made,

|

| 77 |

+

use, offer to sell, sell, import, and otherwise transfer the Work,

|

| 78 |

+

where such license applies only to those patent claims licensable

|

| 79 |

+

by such Contributor that are necessarily infringed by their

|

| 80 |

+

Contribution(s) alone or by combination of their Contribution(s)

|

| 81 |

+

with the Work to which such Contribution(s) was submitted. If You

|

| 82 |

+

institute patent litigation against any entity (including a

|

| 83 |

+

cross-claim or counterclaim in a lawsuit) alleging that the Work

|

| 84 |

+

or a Contribution incorporated within the Work constitutes direct

|

| 85 |

+

or contributory patent infringement, then any patent licenses

|

| 86 |

+

granted to You under this License for that Work shall terminate

|

| 87 |

+

as of the date such litigation is filed.

|

| 88 |

+

|

| 89 |

+

4. Redistribution. You may reproduce and distribute copies of the

|

| 90 |

+

Work or Derivative Works thereof in any medium, with or without

|

| 91 |

+

modifications, and in Source or Object form, provided that You

|

| 92 |

+

meet the following conditions:

|

| 93 |

+

|

| 94 |

+

(a) You must give any other recipients of the Work or

|

| 95 |

+

Derivative Works a copy of this License; and

|

| 96 |

+

|

| 97 |

+

(b) You must cause any modified files to carry prominent notices

|

| 98 |

+

stating that You changed the files; and

|

| 99 |

+

|

| 100 |

+

(c) You must retain, in the Source form of any Derivative Works

|

| 101 |

+

that You distribute, all copyright, patent, trademark, and

|

| 102 |

+

attribution notices from the Source form of the Work,

|

| 103 |

+

excluding those notices that do not pertain to any part of

|

| 104 |

+

the Derivative Works; and

|

| 105 |

+

|

| 106 |

+

(d) If the Work includes a "NOTICE" text file as part of its

|

| 107 |

+

distribution, then any Derivative Works that You distribute must

|

| 108 |

+

include a readable copy of the attribution notices contained

|

| 109 |

+

within such NOTICE file, excluding those notices that do not

|

| 110 |

+

pertain to any part of the Derivative Works, in at least one

|

| 111 |

+

of the following places: within a NOTICE text file distributed

|

| 112 |

+

as part of the Derivative Works; within the Source form or

|

| 113 |

+

documentation, if provided along with the Derivative Works; or,

|

| 114 |

+

within a display generated by the Derivative Works, if and

|

| 115 |

+

wherever such third-party notices normally appear. The contents

|

| 116 |

+

of the NOTICE file are for informational purposes only and

|

| 117 |

+

do not modify the License. You may add Your own attribution

|

| 118 |

+

notices within Derivative Works that You distribute, alongside

|

| 119 |

+

or as an addendum to the NOTICE text from the Work, provided

|

| 120 |

+

that such additional attribution notices cannot be construed

|

| 121 |

+

as modifying the License.

|

| 122 |

+

|

| 123 |

+

You may add Your own copyright statement to Your modifications and

|

| 124 |

+

may provide additional or different license terms and conditions

|

| 125 |

+

for use, reproduction, or distribution of Your modifications, or

|

| 126 |

+

for any such Derivative Works as a whole, provided Your use,

|

| 127 |

+

reproduction, and distribution of the Work otherwise complies with

|

| 128 |

+

the conditions stated in this License.

|

| 129 |

+

|

| 130 |

+

5. Submission of Contributions. Unless You explicitly state otherwise,

|

| 131 |

+

any Contribution intentionally submitted for inclusion in the Work

|

| 132 |

+

by You to the Licensor shall be under the terms and conditions of

|

| 133 |

+

this License, without any additional terms or conditions.

|

| 134 |

+

Notwithstanding the above, nothing herein shall supersede or modify

|

| 135 |

+

the terms of any separate license agreement you may have executed

|

| 136 |

+

with Licensor regarding such Contributions.

|

| 137 |

+

|

| 138 |

+

6. Trademarks. This License does not grant permission to use the trade

|

| 139 |

+

names, trademarks, service marks, or product names of the Licensor,

|

| 140 |

+

except as required for reasonable and customary use in describing the

|

| 141 |

+

origin of the Work and reproducing the content of the NOTICE file.

|

| 142 |

+

|

| 143 |

+

7. Disclaimer of Warranty. Unless required by applicable law or

|

| 144 |

+

agreed to in writing, Licensor provides the Work (and each

|

| 145 |

+

Contributor provides its Contributions) on an "AS IS" BASIS,

|

| 146 |

+

WITHOUT WARRANTIES OR CONDITIONS OF ANY KIND, either express or

|

| 147 |

+

implied, including, without limitation, any warranties or conditions

|

| 148 |

+

of TITLE, NON-INFRINGEMENT, MERCHANTABILITY, or FITNESS FOR A

|

| 149 |

+

PARTICULAR PURPOSE. You are solely responsible for determining the

|

| 150 |

+

appropriateness of using or redistributing the Work and assume any

|

| 151 |

+

risks associated with Your exercise of permissions under this License.

|

| 152 |

+

|

| 153 |

+

8. Limitation of Liability. In no event and under no legal theory,

|

| 154 |

+

whether in tort (including negligence), contract, or otherwise,

|

| 155 |

+

unless required by applicable law (such as deliberate and grossly

|

| 156 |

+

negligent acts) or agreed to in writing, shall any Contributor be

|

| 157 |

+

liable to You for damages, including any direct, indirect, special,

|

| 158 |

+

incidental, or consequential damages of any character arising as a

|

| 159 |

+

result of this License or out of the use or inability to use the

|

| 160 |

+

Work (including but not limited to damages for loss of goodwill,

|

| 161 |

+

work stoppage, computer failure or malfunction, or any and all

|

| 162 |

+

other commercial damages or losses), even if such Contributor

|

| 163 |

+

has been advised of the possibility of such damages.

|

| 164 |

+

|

| 165 |

+

9. Accepting Warranty or Additional Liability. While redistributing

|

| 166 |

+

the Work or Derivative Works thereof, You may choose to offer,

|

| 167 |

+

and charge a fee for, acceptance of support, warranty, indemnity,

|

| 168 |

+

or other liability obligations and/or rights consistent with this

|

| 169 |

+

License. However, in accepting such obligations, You may act only

|

| 170 |

+

on Your own behalf and on Your sole responsibility, not on behalf

|

| 171 |

+

of any other Contributor, and only if You agree to indemnify,

|

| 172 |

+

defend, and hold each Contributor harmless for any liability

|

| 173 |

+

incurred by, or claims asserted against, such Contributor by reason

|

| 174 |

+

of your accepting any such warranty or additional liability.

|

| 175 |

+

|

| 176 |

+

END OF TERMS AND CONDITIONS

|

| 177 |

+

|

| 178 |

+

APPENDIX: How to apply the Apache License to your work.

|

| 179 |

+

|

| 180 |

+

To apply the Apache License to your work, attach the following

|

| 181 |

+

boilerplate notice, with the fields enclosed by brackets "[]"

|

| 182 |

+

replaced with your own identifying information. (Don't include

|

| 183 |

+

the brackets!) The text should be enclosed in the appropriate

|

| 184 |

+

comment syntax for the file format. We also recommend that a

|

| 185 |

+

file or class name and description of purpose be included on the

|

| 186 |

+

same "printed page" as the copyright notice for easier

|

| 187 |

+

identification within third-party archives.

|

| 188 |

+

|

| 189 |

+

Copyright [yyyy] [name of copyright owner]

|

| 190 |

+

|

| 191 |

+

Licensed under the Apache License, Version 2.0 (the "License");

|

| 192 |

+

you may not use this file except in compliance with the License.

|

| 193 |

+

You may obtain a copy of the License at

|

| 194 |

+

|

| 195 |

+

http://www.apache.org/licenses/LICENSE-2.0

|

| 196 |

+

|

| 197 |

+

Unless required by applicable law or agreed to in writing, software

|

| 198 |

+

distributed under the License is distributed on an "AS IS" BASIS,

|

| 199 |

+

WITHOUT WARRANTIES OR CONDITIONS OF ANY KIND, either express or implied.

|

| 200 |

+

See the License for the specific language governing permissions and

|

| 201 |

+

limitations under the License.

|

README.md

CHANGED

|

@@ -1,12 +1,214 @@

|

|

| 1 |

-

|

| 2 |

-

|

| 3 |

-

|

| 4 |

-

|

| 5 |

-

|

| 6 |

-

|

| 7 |

-

|

| 8 |

-

|

| 9 |

-

|

| 10 |

-

|

| 11 |

-

|

| 12 |

-

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

| 1 |

+

<p align="center">

|

| 2 |

+

<img src="assets/logo2.png" height=65>

|

| 3 |

+

</p>

|

| 4 |

+

|

| 5 |

+

<div align="center">

|

| 6 |

+

|

| 7 |

+

⏬[**Download Models**](#-download-models) **|** 💻[**How to Test**](#-how-to-test)

|

| 8 |

+

|

| 9 |

+

</div>

|

| 10 |

+

|

| 11 |

+

Official implementation of T2I-Adapter: Learning Adapters to Dig out More Controllable Ability for Text-to-Image Diffusion Models.

|

| 12 |

+

|

| 13 |

+

#### [Paper](https://arxiv.org/abs/2302.08453)

|

| 14 |

+

|

| 15 |

+

<p align="center">

|

| 16 |

+

<img src="assets/overview1.png" height=250>

|

| 17 |

+

</p>

|

| 18 |

+

|

| 19 |

+

We propose T2I-Adapter, a **simple and small (~70M parameters, ~300M storage space)** network that can provide extra guidance to pre-trained text-to-image models while **freezing** the original large text-to-image models.

|

| 20 |

+

|

| 21 |

+

T2I-Adapter aligns internal knowledge in T2I models with external control signals.

|

| 22 |

+

We can train various adapters according to different conditions, and achieve rich control and editing effects.

|

| 23 |

+

|

| 24 |

+

<p align="center">

|

| 25 |

+

<img src="assets/teaser.png" height=500>

|

| 26 |

+

</p>

|

| 27 |

+

|

| 28 |

+

### ⏬ Download Models

|

| 29 |

+

|

| 30 |

+

Put the downloaded models in the `T2I-Adapter/models` folder.

|

| 31 |

+

|

| 32 |

+

1. The **T2I-Adapters** can be download from <https://huggingface.co/TencentARC/T2I-Adapter>.

|

| 33 |

+

2. The pretrained **Stable Diffusion v1.4** models can be download from <https://huggingface.co/CompVis/stable-diffusion-v-1-4-original/tree/main>. You need to download the `sd-v1-4.ckpt

|

| 34 |

+

` file.

|

| 35 |

+

3. [Optional] If you want to use **Anything v4.0** models, you can download the pretrained models from <https://huggingface.co/andite/anything-v4.0/tree/main>. You need to download the `anything-v4.0-pruned.ckpt` file.

|

| 36 |

+

4. The pretrained **clip-vit-large-patch14** folder can be download from <https://huggingface.co/openai/clip-vit-large-patch14/tree/main>. Remember to download the whole folder!

|

| 37 |

+

5. The pretrained keypose detection models include FasterRCNN (human detection) from <https://download.openmmlab.com/mmdetection/v2.0/faster_rcnn/faster_rcnn_r50_fpn_1x_coco/faster_rcnn_r50_fpn_1x_coco_20200130-047c8118.pth> and HRNet (pose detection) from <https://download.openmmlab.com/mmpose/top_down/hrnet/hrnet_w48_coco_256x192-b9e0b3ab_20200708.pth>.

|

| 38 |

+

|

| 39 |

+

After downloading, the folder structure should be like this:

|

| 40 |

+

|

| 41 |

+

<p align="center">

|

| 42 |

+

<img src="assets/downloaded_models.png" height=100>

|

| 43 |

+

</p>

|

| 44 |

+

|

| 45 |

+

### 🔧 Dependencies and Installation

|

| 46 |

+

|

| 47 |

+

- Python >= 3.6 (Recommend to use [Anaconda](https://www.anaconda.com/download/#linux) or [Miniconda](https://docs.conda.io/en/latest/miniconda.html))

|

| 48 |

+

- [PyTorch >= 1.4](https://pytorch.org/)

|

| 49 |

+

```bash

|

| 50 |

+

pip install -r requirements.txt

|

| 51 |

+

```

|

| 52 |

+

- If you want to use the full function of keypose-guided generation, you need to install MMPose. For details please refer to <https://github.com/open-mmlab/mmpose>.

|

| 53 |

+

|

| 54 |

+

### 💻 How to Test

|

| 55 |

+

|

| 56 |

+

- The results are in the `experiments` folder.

|

| 57 |

+

- If you want to use the `Anything v4.0`, please add `--ckpt models/anything-v4.0-pruned.ckpt` in the following commands.

|

| 58 |

+

|

| 59 |

+

#### **For Simple Experience**

|

| 60 |

+

|

| 61 |

+

> python app.py

|

| 62 |

+

|

| 63 |

+

#### **Sketch Adapter**

|

| 64 |

+

|

| 65 |

+

- Sketch to Image Generation

|

| 66 |

+

|

| 67 |

+

> python test_sketch.py --plms --auto_resume --prompt "A car with flying wings" --path_cond examples/sketch/car.png --ckpt models/sd-v1-4.ckpt --type_in sketch

|

| 68 |

+

|

| 69 |

+

- Image to Image Generation

|

| 70 |

+

|

| 71 |

+

> python test_sketch.py --plms --auto_resume --prompt "A beautiful girl" --path_cond examples/anything_sketch/human.png --ckpt models/sd-v1-4.ckpt --type_in image

|

| 72 |

+

|

| 73 |

+

- Generation with **Anything** setting

|

| 74 |

+

|

| 75 |

+

> python test_sketch.py --plms --auto_resume --prompt "A beautiful girl" --path_cond examples/anything_sketch/human.png --ckpt models/anything-v4.0-pruned.ckpt --type_in image

|

| 76 |

+

|

| 77 |

+

##### Gradio Demo

|

| 78 |

+

<p align="center">

|

| 79 |

+

<img src="assets/gradio_sketch.png">

|

| 80 |

+

</p>

|

| 81 |

+

You can use gradio to experience all these three functions at once. CPU is also supported by setting device to 'cpu'.

|

| 82 |

+

|

| 83 |

+

```bash

|

| 84 |

+

python gradio_sketch.py

|

| 85 |

+

```

|

| 86 |

+

|

| 87 |

+

#### **Keypose Adapter**

|

| 88 |

+

|

| 89 |

+

- Keypose to Image Generation

|

| 90 |

+

|

| 91 |

+

> python test_keypose.py --plms --auto_resume --prompt "A beautiful girl" --path_cond examples/keypose/iron.png --type_in pose

|

| 92 |

+

|

| 93 |

+

- Image to Image Generation

|

| 94 |

+

|

| 95 |

+

> python test_keypose.py --plms --auto_resume --prompt "A beautiful girl" --path_cond examples/sketch/human.png --type_in image

|

| 96 |

+

|

| 97 |

+

- Generation with **Anything** setting

|

| 98 |

+

|

| 99 |

+

> python test_keypose.py --plms --auto_resume --prompt "A beautiful girl" --path_cond examples/sketch/human.png --ckpt models/anything-v4.0-pruned.ckpt --type_in image

|

| 100 |

+

|

| 101 |

+

##### Gradio Demo

|

| 102 |

+

<p align="center">

|

| 103 |

+

<img src="assets/gradio_keypose.png">

|

| 104 |

+

</p>

|

| 105 |

+

You can use gradio to experience all these three functions at once. CPU is also supported by setting device to 'cpu'.

|

| 106 |

+

|

| 107 |

+

```bash

|

| 108 |

+

python gradio_keypose.py

|

| 109 |

+

```

|

| 110 |

+

|

| 111 |

+

#### **Segmentation Adapter**

|

| 112 |

+

|

| 113 |

+

> python test_seg.py --plms --auto_resume --prompt "A black Honda motorcycle parked in front of a garage" --path_cond examples/seg/motor.png

|

| 114 |

+

|

| 115 |

+

#### **Two adapters: Segmentation and Sketch Adapters**

|

| 116 |

+

|

| 117 |

+

> python test_seg_sketch.py --plms --auto_resume --prompt "An all white kitchen with an electric stovetop" --path_cond examples/seg_sketch/mask.png --path_cond2 examples/seg_sketch/edge.png

|

| 118 |

+

|

| 119 |

+

#### **Local editing with adapters**

|

| 120 |

+

|

| 121 |

+

> python test_sketch_edit.py --plms --auto_resume --prompt "A white cat" --path_cond examples/edit_cat/edge_2.png --path_x0 examples/edit_cat/im.png --path_mask examples/edit_cat/mask.png

|

| 122 |

+

|

| 123 |

+

## Stable Diffusion + T2I-Adapters (only ~70M parameters, ~300M storage space)

|

| 124 |

+

|

| 125 |

+

The following is the detailed structure of a **Stable Diffusion** model with the **T2I-Adapter**.

|

| 126 |

+

<p align="center">

|

| 127 |

+

<img src="assets/overview2.png" height=300>

|

| 128 |

+

</p>

|

| 129 |

+

|

| 130 |

+

<!-- ## Web Demo

|

| 131 |

+

|

| 132 |

+

* All the usage of three T2I-Adapters (i.e, sketch, keypose and segmentation) are integrated into [Huggingface Spaces]() 🤗 using [Gradio](). Have fun with the Web Demo. -->

|

| 133 |

+

|

| 134 |

+

## 🚀 Interesting Applications

|

| 135 |

+

|

| 136 |

+

### Stable Diffusion results guided with the sketch T2I-Adapter

|

| 137 |

+

|

| 138 |

+

The corresponding edge maps are predicted by PiDiNet. The sketch T2I-Adapter can well generalize to other similar sketch types, for example, sketches from the Internet and user scribbles.

|

| 139 |

+

|

| 140 |

+

<p align="center">

|

| 141 |

+

<img src="assets/sketch_base.png" height=800>

|

| 142 |

+

</p>

|

| 143 |

+

|

| 144 |

+

### Stable Diffusion results guided with the keypose T2I-Adapter

|

| 145 |

+

|

| 146 |

+

The keypose results predicted by the [MMPose](https://github.com/open-mmlab/mmpose).

|

| 147 |

+

With the keypose guidance, the keypose T2I-Adapter can also help to generate animals with the same keypose, for example, pandas and tigers.

|

| 148 |

+

|

| 149 |

+

<p align="center">

|

| 150 |

+

<img src="assets/keypose_base.png" height=600>

|

| 151 |

+

</p>

|

| 152 |

+

|

| 153 |

+

### T2I-Adapter with Anything-v4.0

|

| 154 |

+

|

| 155 |

+

Once the T2I-Adapter is trained, it can act as a **plug-and-play module** and can be seamlessly integrated into the finetuned diffusion models **without re-training**, for example, Anything-4.0.

|

| 156 |

+

|

| 157 |

+

#### ✨ Anything results with the plug-and-play sketch T2I-Adapter (no extra training)

|

| 158 |

+

|

| 159 |

+

<p align="center">

|

| 160 |

+

<img src="assets/sketch_anything.png" height=600>

|

| 161 |

+

</p>

|

| 162 |

+

|

| 163 |

+

#### Anything results with the plug-and-play keypose T2I-Adapter (no extra training)

|

| 164 |

+

|

| 165 |

+

<p align="center">

|

| 166 |

+

<img src="assets/keypose_anything.png" height=600>

|

| 167 |

+

</p>

|

| 168 |

+

|

| 169 |

+

### Local editing with the sketch adapter

|

| 170 |

+

|

| 171 |

+

When combined with the inpaiting mode of Stable Diffusion, we can realize local editing with user specific guidance.

|

| 172 |

+

|

| 173 |

+

#### ✨ Change the head direction of the cat

|

| 174 |

+

|

| 175 |

+

<p align="center">

|

| 176 |

+

<img src="assets/local_editing_cat.png" height=300>

|

| 177 |

+

</p>

|

| 178 |

+

|

| 179 |

+

#### ✨ Add rabbit ears on the head of the Iron Man.

|

| 180 |

+

|

| 181 |

+

<p align="center">

|

| 182 |

+

<img src="assets/local_editing_ironman.png" height=400>

|

| 183 |

+

</p>

|

| 184 |

+

|

| 185 |

+

### Combine different concepts with adapter

|

| 186 |

+

|

| 187 |

+

Adapter can be used to enhance the SD ability to combine different concepts.

|

| 188 |

+

|

| 189 |

+

#### ✨ A car with flying wings. / A doll in the shape of letter ‘A’.

|

| 190 |

+

|

| 191 |

+

<p align="center">

|

| 192 |

+

<img src="assets/enhance_SD2.png" height=600>

|

| 193 |

+

</p>

|

| 194 |

+

|

| 195 |

+

### Sequential editing with the sketch adapter

|

| 196 |

+

|

| 197 |

+

We can realize the sequential editing with the adapter guidance.

|

| 198 |

+

|

| 199 |

+

<p align="center">

|

| 200 |

+

<img src="assets/sequential_edit.png">

|

| 201 |

+

</p>

|

| 202 |

+

|

| 203 |

+

### Composable Guidance with multiple adapters

|

| 204 |

+

|

| 205 |

+

Stable Diffusion results guided with the segmentation and sketch adapters together.

|

| 206 |

+

|

| 207 |

+

<p align="center">

|

| 208 |

+

<img src="assets/multiple_adapters.png">

|

| 209 |

+

</p>

|

| 210 |

+

|

| 211 |

+

|

| 212 |

+

|

| 213 |

+

|

| 214 |

+

Logo materials: [adapter](https://www.flaticon.com/free-icon/adapter_4777242), [lightbulb](https://www.flaticon.com/free-icon/lightbulb_3176369)

|

app.py

ADDED

|

@@ -0,0 +1,59 @@

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

| 1 |

+

from demo.model import Model_all

|

| 2 |

+

import gradio as gr

|

| 3 |

+

from demo.demos import create_demo_keypose, create_demo_sketch, create_demo_draw

|

| 4 |

+

import torch

|

| 5 |

+

import subprocess

|

| 6 |

+

import os

|

| 7 |

+

import shlex

|

| 8 |

+

from huggingface_hub import hf_hub_url

|

| 9 |

+

|

| 10 |

+

urls = {

|

| 11 |

+

'TencentARC/T2I-Adapter':['models/t2iadapter_keypose_sd14v1.pth', 'models/t2iadapter_seg_sd14v1.pth', 'models/t2iadapter_sketch_sd14v1.pth'],

|

| 12 |

+

'CompVis/stable-diffusion-v-1-4-original':['sd-v1-4.ckpt'],

|

| 13 |

+

'andite/anything-v4.0':['anything-v4.0-pruned.ckpt'],

|

| 14 |

+

}

|

| 15 |

+

urls_mmpose = [

|

| 16 |

+

'https://download.openmmlab.com/mmdetection/v2.0/faster_rcnn/faster_rcnn_r50_fpn_1x_coco/faster_rcnn_r50_fpn_1x_coco_20200130-047c8118.pth',

|

| 17 |

+

'https://download.openmmlab.com/mmpose/top_down/hrnet/hrnet_w48_coco_256x192-b9e0b3ab_20200708.pth',

|

| 18 |

+

]

|

| 19 |

+

if os.path.exists('models') == False:

|

| 20 |

+

os.mkdir('models')

|

| 21 |

+

for repo in urls:

|

| 22 |

+

files = urls[repo]

|

| 23 |

+

for file in files:

|

| 24 |

+

url = hf_hub_url(repo, file)

|

| 25 |

+

name_ckp = url.split('/')[-1]

|

| 26 |

+

save_path = os.path.join('models',name_ckp)

|

| 27 |

+

if os.path.exists(save_path) == False:

|

| 28 |

+

subprocess.run(shlex.split(f'wget {url} -O {save_path}'))

|

| 29 |

+

|

| 30 |

+

for url in urls_mmpose:

|

| 31 |

+

name_ckp = url.split('/')[-1]

|

| 32 |

+

save_path = os.path.join('models',name_ckp)

|

| 33 |

+

if os.path.exists(save_path) == False:

|

| 34 |

+

subprocess.run(shlex.split(f'wget {url} -O {save_path}'))

|

| 35 |

+

|

| 36 |

+

device = 'cuda' if torch.cuda.is_available() else 'cpu'

|

| 37 |

+

model = Model_all(device)

|

| 38 |

+

|

| 39 |

+

DESCRIPTION = '''# T2I-Adapter (Sketch & Keypose)

|

| 40 |

+

[Paper](https://arxiv.org/abs/2302.08453) [GitHub](https://github.com/TencentARC/T2I-Adapter)

|

| 41 |

+

|

| 42 |

+

This gradio demo is for a simple experience of T2I-Adapter:

|

| 43 |

+

- Keypose/Sketch to Image Generation

|

| 44 |

+

- Image to Image Generation

|

| 45 |

+

- Support the base model of Stable Diffusion v1.4 and Anything 4.0

|

| 46 |

+

'''

|

| 47 |

+

|

| 48 |

+

with gr.Blocks(css='style.css') as demo:

|

| 49 |

+

gr.Markdown(DESCRIPTION)

|

| 50 |

+

|

| 51 |

+

with gr.Tabs():

|

| 52 |

+

with gr.TabItem('Keypose'):

|

| 53 |

+

create_demo_keypose(model.process_keypose)

|

| 54 |

+

with gr.TabItem('Sketch'):

|

| 55 |

+

create_demo_sketch(model.process_sketch)

|

| 56 |

+

with gr.TabItem('Draw'):

|

| 57 |

+

create_demo_draw(model.process_draw)

|

| 58 |

+

|

| 59 |

+

demo.queue(api_open=False).launch(server_name='0.0.0.0')

|

configs/stable-diffusion/app.yaml

ADDED

|

@@ -0,0 +1,87 @@

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

| 1 |

+

name: app

|

| 2 |

+

model:

|

| 3 |

+

base_learning_rate: 1.0e-04

|

| 4 |

+

target: ldm.models.diffusion.ddpm.LatentDiffusion

|

| 5 |

+

params:

|

| 6 |

+

linear_start: 0.00085

|

| 7 |

+

linear_end: 0.0120

|

| 8 |

+

num_timesteps_cond: 1

|

| 9 |

+

log_every_t: 200

|

| 10 |

+

timesteps: 1000

|

| 11 |

+

first_stage_key: "jpg"

|

| 12 |

+

cond_stage_key: "txt"

|

| 13 |

+

image_size: 64

|

| 14 |

+

channels: 4

|

| 15 |

+

cond_stage_trainable: false # Note: different from the one we trained before

|

| 16 |

+

conditioning_key: crossattn

|

| 17 |

+

monitor: val/loss_simple_ema

|

| 18 |

+

scale_factor: 0.18215

|

| 19 |

+

use_ema: False

|

| 20 |

+

|

| 21 |

+

scheduler_config: # 10000 warmup steps

|

| 22 |

+

target: ldm.lr_scheduler.LambdaLinearScheduler

|

| 23 |

+

params:

|

| 24 |

+

warm_up_steps: [ 10000 ]

|

| 25 |

+

cycle_lengths: [ 10000000000000 ] # incredibly large number to prevent corner cases

|

| 26 |

+

f_start: [ 1.e-6 ]

|

| 27 |

+

f_max: [ 1. ]

|

| 28 |

+

f_min: [ 1. ]

|

| 29 |

+

|

| 30 |

+

unet_config:

|

| 31 |

+

target: ldm.modules.diffusionmodules.openaimodel.UNetModel

|

| 32 |

+

params:

|

| 33 |

+

image_size: 32 # unused

|

| 34 |

+

in_channels: 4

|

| 35 |

+

out_channels: 4

|

| 36 |

+

model_channels: 320

|

| 37 |

+

attention_resolutions: [ 4, 2, 1 ]

|

| 38 |

+

num_res_blocks: 2

|

| 39 |

+

channel_mult: [ 1, 2, 4, 4 ]

|

| 40 |

+

num_heads: 8

|

| 41 |

+

use_spatial_transformer: True

|

| 42 |

+

transformer_depth: 1

|

| 43 |

+

context_dim: 768

|

| 44 |

+

use_checkpoint: True

|

| 45 |

+

legacy: False

|

| 46 |

+

|

| 47 |

+

first_stage_config:

|

| 48 |

+

target: ldm.models.autoencoder.AutoencoderKL

|

| 49 |

+

params:

|

| 50 |

+

embed_dim: 4

|

| 51 |

+

monitor: val/rec_loss

|

| 52 |

+

ddconfig:

|

| 53 |

+

double_z: true

|

| 54 |

+

z_channels: 4

|

| 55 |

+

resolution: 256

|

| 56 |

+

in_channels: 3

|

| 57 |

+

out_ch: 3

|

| 58 |

+

ch: 128

|

| 59 |

+

ch_mult:

|

| 60 |

+

- 1

|

| 61 |

+

- 2

|

| 62 |

+

- 4

|

| 63 |

+

- 4

|

| 64 |

+

num_res_blocks: 2

|

| 65 |

+

attn_resolutions: []

|

| 66 |

+

dropout: 0.0

|

| 67 |

+

lossconfig:

|

| 68 |

+

target: torch.nn.Identity

|

| 69 |

+

|

| 70 |

+

cond_stage_config:

|

| 71 |

+

target: ldm.modules.encoders.modules.FrozenCLIPEmbedder

|

| 72 |

+

params:

|

| 73 |

+

device: 'cuda'

|

| 74 |

+

|

| 75 |

+

logger:

|

| 76 |

+

print_freq: 100

|

| 77 |

+

save_checkpoint_freq: !!float 1e4

|

| 78 |

+

use_tb_logger: true

|

| 79 |

+

wandb:

|

| 80 |

+

project: ~

|

| 81 |

+

resume_id: ~

|

| 82 |

+

dist_params:

|

| 83 |

+

backend: nccl

|

| 84 |

+

port: 29500

|

| 85 |

+

training:

|

| 86 |

+

lr: !!float 1e-5

|

| 87 |

+

save_freq: 1e4

|

configs/stable-diffusion/test_keypose.yaml

ADDED

|

@@ -0,0 +1,87 @@

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

| 1 |

+

name: test_keypose

|

| 2 |

+

model:

|

| 3 |

+

base_learning_rate: 1.0e-04

|

| 4 |

+

target: ldm.models.diffusion.ddpm.LatentDiffusion

|

| 5 |

+

params:

|

| 6 |

+

linear_start: 0.00085

|

| 7 |

+

linear_end: 0.0120

|

| 8 |

+

num_timesteps_cond: 1

|

| 9 |

+

log_every_t: 200

|

| 10 |

+

timesteps: 1000

|

| 11 |

+

first_stage_key: "jpg"

|

| 12 |

+

cond_stage_key: "txt"

|

| 13 |

+

image_size: 64

|

| 14 |

+

channels: 4

|

| 15 |

+

cond_stage_trainable: false # Note: different from the one we trained before

|

| 16 |

+

conditioning_key: crossattn

|

| 17 |

+

monitor: val/loss_simple_ema

|

| 18 |

+

scale_factor: 0.18215

|

| 19 |

+

use_ema: False

|

| 20 |

+

|

| 21 |

+

scheduler_config: # 10000 warmup steps

|

| 22 |

+

target: ldm.lr_scheduler.LambdaLinearScheduler

|

| 23 |

+

params:

|

| 24 |

+

warm_up_steps: [ 10000 ]

|

| 25 |

+

cycle_lengths: [ 10000000000000 ] # incredibly large number to prevent corner cases

|

| 26 |

+

f_start: [ 1.e-6 ]

|

| 27 |

+

f_max: [ 1. ]

|

| 28 |

+

f_min: [ 1. ]

|

| 29 |

+

|

| 30 |

+

unet_config:

|

| 31 |

+

target: ldm.modules.diffusionmodules.openaimodel.UNetModel

|

| 32 |

+

params:

|

| 33 |

+

image_size: 32 # unused

|

| 34 |

+

in_channels: 4

|

| 35 |

+

out_channels: 4

|

| 36 |

+

model_channels: 320

|

| 37 |

+

attention_resolutions: [ 4, 2, 1 ]

|

| 38 |

+

num_res_blocks: 2

|

| 39 |

+

channel_mult: [ 1, 2, 4, 4 ]

|

| 40 |

+

num_heads: 8

|

| 41 |

+

use_spatial_transformer: True

|

| 42 |

+

transformer_depth: 1

|

| 43 |

+

context_dim: 768

|

| 44 |

+

use_checkpoint: True

|

| 45 |

+

legacy: False

|

| 46 |

+

|

| 47 |

+

first_stage_config:

|

| 48 |

+

target: ldm.models.autoencoder.AutoencoderKL

|

| 49 |

+

params:

|

| 50 |

+

embed_dim: 4

|

| 51 |

+

monitor: val/rec_loss

|

| 52 |

+

ddconfig:

|

| 53 |

+

double_z: true

|

| 54 |

+

z_channels: 4

|

| 55 |

+

resolution: 256

|

| 56 |

+

in_channels: 3

|

| 57 |

+

out_ch: 3

|

| 58 |

+

ch: 128

|

| 59 |

+

ch_mult:

|

| 60 |

+

- 1

|

| 61 |

+

- 2

|

| 62 |

+

- 4

|

| 63 |

+

- 4

|

| 64 |

+

num_res_blocks: 2

|

| 65 |

+

attn_resolutions: []

|

| 66 |

+

dropout: 0.0

|

| 67 |

+

lossconfig:

|

| 68 |

+

target: torch.nn.Identity

|

| 69 |

+

|

| 70 |

+

cond_stage_config: #__is_unconditional__

|

| 71 |

+

target: ldm.modules.encoders.modules.FrozenCLIPEmbedder

|

| 72 |

+

params:

|

| 73 |

+

version: models/clip-vit-large-patch14

|

| 74 |

+

|

| 75 |

+

logger:

|

| 76 |

+

print_freq: 100

|

| 77 |

+

save_checkpoint_freq: !!float 1e4

|

| 78 |

+

use_tb_logger: true

|

| 79 |

+

wandb:

|

| 80 |

+

project: ~

|

| 81 |

+

resume_id: ~

|

| 82 |

+

dist_params:

|

| 83 |

+

backend: nccl

|

| 84 |

+

port: 29500

|

| 85 |

+

training:

|

| 86 |

+

lr: !!float 1e-5

|

| 87 |

+

save_freq: 1e4

|

configs/stable-diffusion/test_mask.yaml

ADDED

|

@@ -0,0 +1,87 @@

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

| 1 |

+

name: test_mask

|

| 2 |

+

model:

|

| 3 |

+

base_learning_rate: 1.0e-04

|

| 4 |

+

target: ldm.models.diffusion.ddpm.LatentDiffusion

|

| 5 |

+

params:

|

| 6 |

+

linear_start: 0.00085

|

| 7 |

+

linear_end: 0.0120

|

| 8 |

+

num_timesteps_cond: 1

|

| 9 |

+

log_every_t: 200

|

| 10 |

+

timesteps: 1000

|

| 11 |

+

first_stage_key: "jpg"

|

| 12 |

+

cond_stage_key: "txt"

|

| 13 |

+

image_size: 64

|

| 14 |

+

channels: 4

|

| 15 |

+

cond_stage_trainable: false # Note: different from the one we trained before

|

| 16 |

+

conditioning_key: crossattn

|

| 17 |

+

monitor: val/loss_simple_ema

|

| 18 |

+

scale_factor: 0.18215

|

| 19 |

+

use_ema: False

|

| 20 |

+

|

| 21 |

+

scheduler_config: # 10000 warmup steps

|

| 22 |

+

target: ldm.lr_scheduler.LambdaLinearScheduler

|

| 23 |

+

params:

|

| 24 |

+

warm_up_steps: [ 10000 ]

|

| 25 |

+

cycle_lengths: [ 10000000000000 ] # incredibly large number to prevent corner cases

|

| 26 |

+

f_start: [ 1.e-6 ]

|

| 27 |

+

f_max: [ 1. ]

|

| 28 |

+

f_min: [ 1. ]

|

| 29 |

+

|

| 30 |

+

unet_config:

|

| 31 |

+

target: ldm.modules.diffusionmodules.openaimodel.UNetModel

|

| 32 |

+

params:

|

| 33 |

+

image_size: 32 # unused

|

| 34 |

+

in_channels: 4

|

| 35 |

+

out_channels: 4

|

| 36 |

+

model_channels: 320

|

| 37 |

+

attention_resolutions: [ 4, 2, 1 ]

|

| 38 |

+

num_res_blocks: 2

|

| 39 |

+

channel_mult: [ 1, 2, 4, 4 ]

|

| 40 |

+

num_heads: 8

|

| 41 |

+

use_spatial_transformer: True

|

| 42 |

+

transformer_depth: 1

|

| 43 |

+

context_dim: 768

|

| 44 |

+

use_checkpoint: True

|

| 45 |

+

legacy: False

|

| 46 |

+

|

| 47 |

+

first_stage_config:

|

| 48 |

+

target: ldm.models.autoencoder.AutoencoderKL

|

| 49 |

+

params:

|

| 50 |

+

embed_dim: 4

|

| 51 |

+

monitor: val/rec_loss

|

| 52 |

+

ddconfig:

|

| 53 |

+

double_z: true

|

| 54 |

+

z_channels: 4

|

| 55 |

+

resolution: 256

|

| 56 |

+

in_channels: 3

|

| 57 |

+

out_ch: 3

|

| 58 |

+

ch: 128

|

| 59 |

+

ch_mult:

|

| 60 |

+

- 1

|

| 61 |

+

- 2

|

| 62 |

+

- 4

|

| 63 |

+

- 4

|

| 64 |

+

num_res_blocks: 2

|

| 65 |

+

attn_resolutions: []

|

| 66 |

+

dropout: 0.0

|

| 67 |

+

lossconfig:

|

| 68 |

+

target: torch.nn.Identity

|

| 69 |

+

|

| 70 |

+

cond_stage_config: #__is_unconditional__

|

| 71 |

+

target: ldm.modules.encoders.modules.FrozenCLIPEmbedder

|

| 72 |

+

params:

|

| 73 |

+

version: models/clip-vit-large-patch14

|

| 74 |

+

|

| 75 |

+

logger:

|

| 76 |

+

print_freq: 100

|

| 77 |

+

save_checkpoint_freq: !!float 1e4

|

| 78 |

+

use_tb_logger: true

|

| 79 |

+

wandb:

|

| 80 |

+

project: ~

|

| 81 |

+

resume_id: ~

|

| 82 |

+

dist_params:

|

| 83 |

+

backend: nccl

|

| 84 |

+

port: 29500

|

| 85 |

+

training:

|

| 86 |

+

lr: !!float 1e-5

|

| 87 |

+

save_freq: 1e4

|

configs/stable-diffusion/test_mask_sketch.yaml

ADDED

|

@@ -0,0 +1,87 @@

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

| 1 |

+

name: test_mask_sketch

|

| 2 |

+

model:

|

| 3 |

+

base_learning_rate: 1.0e-04

|

| 4 |

+

target: ldm.models.diffusion.ddpm.LatentDiffusion

|

| 5 |

+

params:

|

| 6 |

+

linear_start: 0.00085

|

| 7 |

+

linear_end: 0.0120

|

| 8 |

+

num_timesteps_cond: 1

|

| 9 |

+

log_every_t: 200

|

| 10 |

+

timesteps: 1000

|

| 11 |

+

first_stage_key: "jpg"

|

| 12 |

+

cond_stage_key: "txt"

|

| 13 |

+

image_size: 64

|

| 14 |

+

channels: 4

|

| 15 |

+

cond_stage_trainable: false # Note: different from the one we trained before

|

| 16 |

+

conditioning_key: crossattn

|

| 17 |

+

monitor: val/loss_simple_ema

|

| 18 |

+

scale_factor: 0.18215

|

| 19 |

+

use_ema: False

|

| 20 |

+

|

| 21 |

+

scheduler_config: # 10000 warmup steps

|

| 22 |

+

target: ldm.lr_scheduler.LambdaLinearScheduler

|

| 23 |

+

params:

|

| 24 |

+

warm_up_steps: [ 10000 ]

|

| 25 |

+

cycle_lengths: [ 10000000000000 ] # incredibly large number to prevent corner cases

|

| 26 |

+

f_start: [ 1.e-6 ]

|

| 27 |

+

f_max: [ 1. ]

|

| 28 |

+

f_min: [ 1. ]

|

| 29 |

+

|

| 30 |

+

unet_config:

|

| 31 |

+

target: ldm.modules.diffusionmodules.openaimodel.UNetModel

|

| 32 |

+

params:

|

| 33 |

+

image_size: 32 # unused

|

| 34 |

+

in_channels: 4

|

| 35 |

+

out_channels: 4

|

| 36 |

+

model_channels: 320

|

| 37 |

+

attention_resolutions: [ 4, 2, 1 ]

|

| 38 |

+

num_res_blocks: 2

|

| 39 |

+

channel_mult: [ 1, 2, 4, 4 ]

|

| 40 |

+

num_heads: 8

|

| 41 |

+