Update README.md

README.md

CHANGED

@@ -1,3 +1,73 @@

---
license: cc-by-nc-4.0
tags:
- text-to-video
---

# show-1-sr2

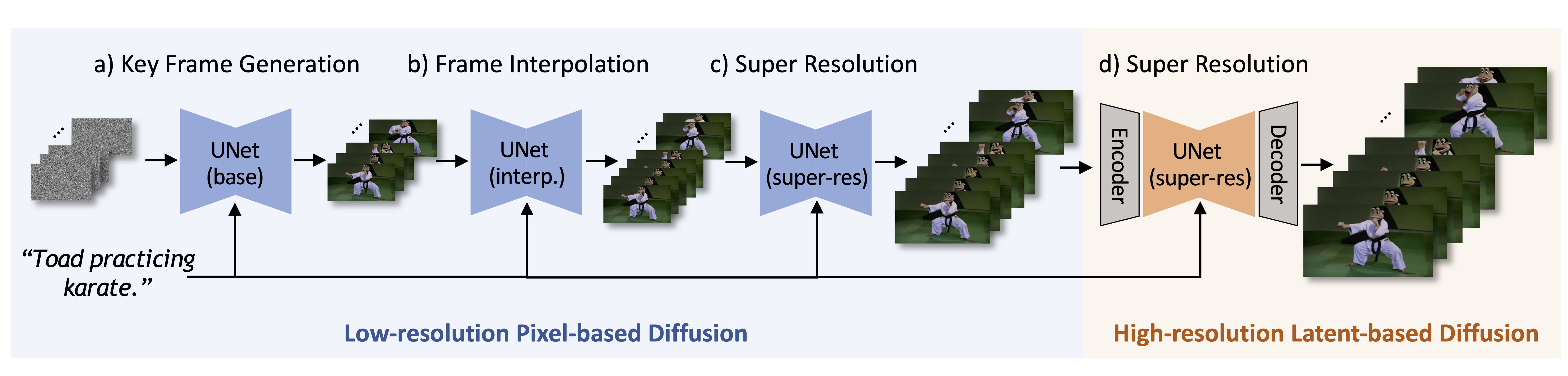

Pixel-based video diffusion models (VDMs) can generate motion that is accurately aligned with the text prompt, but they are typically expensive in both time and GPU memory, especially when generating high-resolution videos. Latent-based VDMs are more resource-efficient because they operate in a lower-dimensional latent space. However, it is difficult for such a small latent space (e.g., 64×40 for 256×160 videos) to capture the rich visual and semantic details described by the text prompt.
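
As a rough illustration of the size gap described above, the following sketch compares the number of spatial positions per frame at the two sizes quoted in the text. It counts spatial positions only; channel counts and frame counts are not stated here, so they are left out.

```python
# Spatial sizes quoted in the text above, as (width, height).
pixel_size = (256, 160)
latent_size = (64, 40)


def positions(size):
    """Return the number of spatial positions in one frame of (width, height)."""
    width, height = size
    return width * height


# Per-axis downsampling factor from pixel space to latent space.
downsample = (pixel_size[0] // latent_size[0], pixel_size[1] // latent_size[1])

# How many times fewer spatial positions the latent frame has.
ratio = positions(pixel_size) // positions(latent_size)

print(downsample)  # (4, 4)
print(ratio)       # 16
```

Each latent position therefore has to summarize a 4×4 patch of pixels, which is one way to read the trade-off between efficiency and detail.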

To combine the strengths and alleviate the weaknesses of pixel-based and latent-based VDMs, we introduce **Show-1**, an efficient text-to-video model that generates videos with not only decent video-text alignment but also high visual quality.

## Model Details

This is the super-resolution model of Show-1 that upscales videos from a 256x160 resolution to 576x320. The model is finetuned from [cerspense/zeroscope_v2_576w](https://huggingface.co/cerspense/zeroscope_v2_576w) on the [WebVid-10M](https://maxbain.com/webvid-dataset/) dataset.

- **Developed by:** [Show Lab, National University of Singapore](https://sites.google.com/view/showlab/home?authuser=0)
- **Model type:** pixel- and latent-based cascaded text-to-video diffusion model
- **Cascade stage:** super-resolution (256x160->576x320)
- **Finetuned from model:** [cerspense/zeroscope_v2_576w](https://huggingface.co/cerspense/zeroscope_v2_576w)
- **License:** Creative Commons Attribution Non Commercial 4.0
- **Resources for more information:** [GitHub](https://github.com/showlab/Show-1), [Website](https://showlab.github.io/Show-1/), [arXiv](https://arxiv.org/abs/2309.15818)
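
For readers wiring this stage into their own pipeline, the sketch below works out the per-axis scale factors implied by the resolutions listed above. It is plain arithmetic on the stated numbers; how the model itself handles the change in aspect ratio is not described in this card.

```python
from fractions import Fraction

# Input and output resolutions of this stage, as (width, height).
src = (256, 160)
dst = (576, 320)

# Exact per-axis scale factors.
scale_w = Fraction(dst[0], src[0])
scale_h = Fraction(dst[1], src[1])

# Aspect ratios (width / height) before and after upscaling.
aspect_src = Fraction(src[0], src[1])
aspect_dst = Fraction(dst[0], dst[1])

print(float(scale_w), float(scale_h))        # 2.25 2.0
print(float(aspect_src), float(aspect_dst))  # 1.6 1.8
```

The width grows by 2.25x and the height by 2x, so the output is slightly wider in proportion than the input.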

## Usage

Clone the GitHub repository and install the requirements:

```bash
git clone https://github.com/showlab/Show-1.git
# requirements.txt lives inside the cloned repository
cd Show-1
pip install -r requirements.txt
```

Run the following command to generate a video from a text prompt. By default, this automatically downloads all the model weights from Hugging Face.

```bash
python run_inference.py
```

You can also download the weights manually and change the `pretrained_model_path` in `run_inference.py` to run the inference.

```bash
git lfs install

# base
git clone https://huggingface.co/showlab/show-1-base
# interp
git clone https://huggingface.co/showlab/show-1-interpolation
# sr1
git clone https://huggingface.co/showlab/show-1-sr1
# sr2
git clone https://huggingface.co/showlab/show-1-sr2
```
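
As an alternative to `git lfs`, the same four repositories could be fetched from Python. The sketch below is a minimal example, assuming the `huggingface_hub` package is installed; the helper name `download_show1_weights`, the `download_fn` parameter, and the `checkpoints` directory are illustrative choices and not part of the Show-1 codebase.

```python
from pathlib import Path

# Repository IDs taken from the git clone commands above.
SHOW1_REPOS = {
    "base": "showlab/show-1-base",
    "interp": "showlab/show-1-interpolation",
    "sr1": "showlab/show-1-sr1",
    "sr2": "showlab/show-1-sr2",
}


def download_show1_weights(target_dir="checkpoints", download_fn=None):
    """Download every Show-1 stage into target_dir and return {stage: local path}.

    download_fn is a hypothetical hook (not part of Show-1) that makes it
    possible to swap in another downloader, for example in tests.
    """
    if download_fn is None:
        # Imported lazily so this module loads even without huggingface_hub.
        from huggingface_hub import snapshot_download
        download_fn = snapshot_download

    target = Path(target_dir)
    paths = {}
    for stage, repo_id in SHOW1_REPOS.items():
        # Store each repository in a folder named after the repository.
        local_dir = target / repo_id.split("/")[-1]
        download_fn(repo_id=repo_id, local_dir=str(local_dir))
        paths[stage] = local_dir
    return paths
```

Calling `download_show1_weights()` returns a mapping from stage name to local directory; those directories are candidates for the `pretrained_model_path` values mentioned above, though how `run_inference.py` reads them should be checked in the script itself.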

## Citation

If you make use of our work, please cite our paper.

```bibtex
@misc{zhang2023show1,
      title={Show-1: Marrying Pixel and Latent Diffusion Models for Text-to-Video Generation},
      author={David Junhao Zhang and Jay Zhangjie Wu and Jia-Wei Liu and Rui Zhao and Lingmin Ran and Yuchao Gu and Difei Gao and Mike Zheng Shou},
      year={2023},
      eprint={2309.15818},
      archivePrefix={arXiv},
      primaryClass={cs.CV}
}
```

## Model Card Contact

This model card is maintained by [David Junhao Zhang](https://junhaozhang98.github.io/) and [Jay Zhangjie Wu](https://jayzjwu.github.io/). For any questions, please feel free to contact us or open an issue in the repository.
